Alleviating partisan gerrymandering: can math and computers help to eliminate wasted votes?

Partisan gerrymandering is a major cause of voter disenfranchisement in the United States. However, convincing US courts to adopt specific measures to quantify gerrymandering has met with limited success to date. Recently, Stephanopoulos and McGhee introduced a new and precise measure of partisan gerrymandering via the so-called “efficiency gap” that computes the absolute difference of wasted votes between two political parties in a two-party system. Quite importantly from a legal point of view, this measure was found legally convincing enough in a US appeals court in a case that claims that the legislative map of the state of Wisconsin was gerrymandered; the case is now pending in the US Supreme Court. In this article, we show the following: (a) We provide interesting mathematical and computational complexity properties of the problem of minimizing the efficiency gap measure. To the best of our knowledge, these are the first non-trivial theoretical and algorithmic analyses of this measure of gerrymandering. (b) We provide a simple and fast algorithm that can “un-gerrymander” the district maps for the states of Texas, Virginia, Wisconsin and Pennsylvania by bringing their efficiency gaps to acceptable levels from the current unacceptable levels. Our work thus shows that, notwithstanding the general worst-case approximation hardness of the efficiency gap measure as shown by us, finding district maps with acceptable levels of efficiency gaps is a computationally tractable problem from a practical point of view. Based on these empirical results, we also provide some interesting insights into three practical issues related to the efficiency gap measure. We believe that, should the US Supreme Court uphold the decision of lower courts, our research work and software will provide a crucial supporting hand to remove partisan gerrymandering.

💡 Research Summary

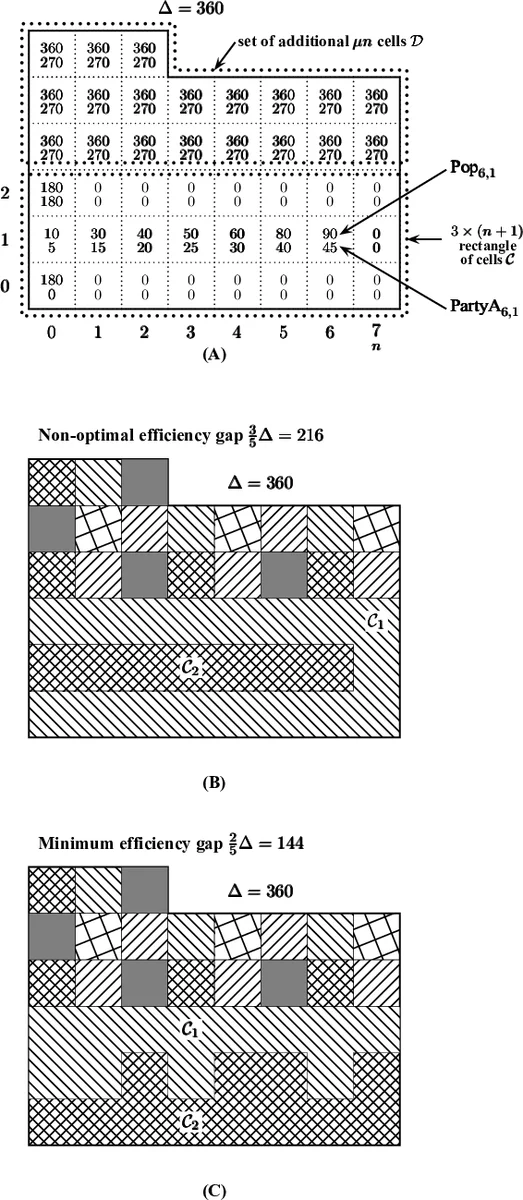

The paper tackles the problem of partisan gerrymandering by focusing on the “efficiency gap” (EG), a metric introduced by Stephanopoulos and McGhee that quantifies the difference in wasted votes between two parties. The authors first formalize the EG‑minimization problem. The input is a rectangular grid of cells, each with a total population and party‑specific vote counts. The task is to partition the region into κ districts of equal population (an equipartition) so that the EG of the resulting plan is minimized. Here the EG is the absolute difference between the two parties' total wasted votes, divided by the total number of votes cast. They prove structural properties (Lemma 1, Corollary 2) showing that EG can only take a finite set of rational values, which dramatically reduces the search space.
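The objective being minimized can be made concrete with a short sketch. It is not the authors' code, and the `District` class and the function names are hypothetical. It uses the simplified convention that the winner wastes every vote beyond half of the district's two-party total and the loser wastes all of its votes. Some formulations use "half plus one vote" instead. Tied districts are rejected because their winner is undefined.

```python
from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class District:
    votes_a: int  # two-party votes cast for party A
    votes_b: int  # two-party votes cast for party B


def wasted_votes(d: District) -> tuple[float, float]:
    """Return (wasted_a, wasted_b) for one district.

    The loser wastes every vote; the winner wastes the votes beyond
    half of the district's two-party total (simplified threshold).
    """
    if d.votes_a == d.votes_b:
        raise ValueError("tied district: winner undefined")
    half = (d.votes_a + d.votes_b) / 2
    if d.votes_a > d.votes_b:
        return d.votes_a - half, d.votes_b
    return d.votes_a, d.votes_b - half


def efficiency_gap(plan: Iterable[District]) -> float:
    """|total wasted(A) - total wasted(B)| / total votes, over all districts."""
    wasted_a = wasted_b = 0.0
    total = 0
    for d in plan:
        wa, wb = wasted_votes(d)
        wasted_a += wa
        wasted_b += wb
        total += d.votes_a + d.votes_b
    return abs(wasted_a - wasted_b) / total


plan = [District(55, 45), District(55, 45), District(10, 90)]
print(f"{efficiency_gap(plan):.4f}")  # 0.3667
```

In this toy plan, party A wastes 5 + 5 + 10 = 20 votes and party B wastes 45 + 45 + 40 = 130 votes. The gap is therefore 110 / 300, about 36.7%.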

On the theoretical side, they establish that the general EG‑minimization problem is NP‑hard (Theorem 4) and that even randomized local‑search algorithms cannot guarantee polynomial‑time optimality unless RP = NP. However, they also identify realistic conditions under which the problem becomes tractable. Theorem 10 shows that if districts are y‑convex (convex along the y‑axis), a polynomial‑time algorithm can find an optimal solution. Theorem 11 extends this to districts that satisfy a modest compactness condition, allowing efficient approximation.
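The y‑convexity condition is easy to test on a grid. This sketch assumes the common reading: a district is y‑convex when every column of cells (a vertical line) meets it in one contiguous run of rows. The paper's exact definition may differ. The check also says nothing about connectivity across columns. The function name `is_y_convex` and the (row, col) cell encoding are hypothetical.

```python
from collections import defaultdict


def is_y_convex(cells: set[tuple[int, int]]) -> bool:
    """True if every column meets the district in one contiguous run of rows.

    `cells` holds (row, col) pairs; because it is a set there are no
    duplicates, so a column's rows are contiguous exactly when
    max_row - min_row + 1 equals the number of cells in that column.
    """
    rows_by_col = defaultdict(list)
    for r, c in cells:
        rows_by_col[c].append(r)
    for rows in rows_by_col.values():
        if max(rows) - min(rows) + 1 != len(rows):
            return False
    return True


l_shape = {(0, 0), (1, 0), (1, 1)}                    # each column is contiguous
u_shape = {(0, 0), (2, 0), (0, 1), (1, 1), (2, 1)}    # column 0 has a gap at row 1
print(is_y_convex(l_shape), is_y_convex(u_shape))
```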

Algorithmically, the authors design a simple randomized local‑search heuristic. Starting from a random feasible partition, they repeatedly perform “swap” and “shift” operations that move individual cells between neighboring districts while preserving population balance. Each move is accepted only if it reduces the total EG. No temperature schedule or Markov‑chain transition probabilities are used, making the implementation straightforward. Empirically, the algorithm converges after only a few thousand iterations, typically within minutes.
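A minimal sketch of this kind of greedy local search follows. It is an illustration, not the authors' implementation, and it rests on several assumptions:

- Only the single‑cell "shift" move is implemented; the pairwise "swap" is omitted for brevity.
- Population balance is a ±`tol` band around the ideal district population rather than exact equality.
- Districts must stay 4‑connected.
- Tied districts count as wins for party B.

All function names and parameters (`tol`, `iters`, `seed`) are hypothetical.

```python
import random
from collections import deque


def plan_efficiency_gap(assign, votes, k):
    """|W_A - W_B| / total votes for a plan mapping cell -> district id."""
    a, b = [0] * k, [0] * k
    for cell, d in assign.items():
        a[d] += votes[cell][0]
        b[d] += votes[cell][1]
    wasted_a = wasted_b = 0.0
    for i in range(k):
        half = (a[i] + b[i]) / 2
        if a[i] > b[i]:          # A wins: its surplus beyond half is wasted
            wasted_a += a[i] - half
            wasted_b += b[i]
        else:                    # B wins (ties counted for B, an arbitrary choice)
            wasted_a += a[i]
            wasted_b += b[i] - half
    return abs(wasted_a - wasted_b) / (sum(a) + sum(b))


def neighbors(cell, rows, cols):
    r, c = cell
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        if 0 <= r + dr < rows and 0 <= c + dc < cols:
            yield (r + dr, c + dc)


def connected(cells, rows, cols):
    """True if the cell set is non-empty and 4-connected (checked by BFS)."""
    if not cells:
        return False
    start = next(iter(cells))
    seen, queue = {start}, deque([start])
    while queue:
        for nb in neighbors(queue.popleft(), rows, cols):
            if nb in cells and nb not in seen:
                seen.add(nb)
                queue.append(nb)
    return len(seen) == len(cells)


def local_search(assign, votes, k, rows, cols, tol=0.1, iters=5000, seed=0):
    """Greedy 'shift' moves: a move is kept only if it strictly lowers the EG."""
    rng = random.Random(seed)
    assign = dict(assign)
    pop = [0] * k
    for cell, d in assign.items():
        pop[d] += sum(votes[cell])
    target = sum(pop) / k
    best = plan_efficiency_gap(assign, votes, k)
    cells = list(assign)
    for _ in range(iters):
        cell = rng.choice(cells)
        src = assign[cell]
        dsts = sorted({assign[nb] for nb in neighbors(cell, rows, cols)} - {src})
        if not dsts:
            continue                      # interior cell: no neighboring district
        dst = rng.choice(dsts)
        p = sum(votes[cell])
        if (abs(pop[src] - p - target) > tol * target
                or abs(pop[dst] + p - target) > tol * target):
            continue                      # would break the population tolerance
        rest = {c for c, d in assign.items() if d == src and c != cell}
        if not connected(rest, rows, cols):
            continue                      # would split the source district
        assign[cell] = dst                # dst stays connected: cell borders it
        eg = plan_efficiency_gap(assign, votes, k)
        if eg < best:
            best = eg
            pop[src] -= p
            pop[dst] += p
        else:
            assign[cell] = src            # revert a non-improving move
    return assign, best


if __name__ == "__main__":
    rows, cols, k = 6, 6, 2
    vr = random.Random(1)
    votes = {}
    for r in range(rows):
        for c in range(cols):
            share_a = vr.randint(20, 80)
            votes[(r, c)] = (share_a, 100 - share_a)   # 100 voters per cell
    start = {(r, c): 0 if c < cols // 2 else 1 for r in range(rows) for c in range(cols)}
    plan, eg = local_search(start, votes, k, rows, cols)
    print(f"EG before: {plan_efficiency_gap(start, votes, k):.4f}, after: {eg:.4f}")
```

Because a move is accepted only on strict improvement, the EG never increases. The search can, however, stall in a local minimum. Restarting from several random seeds is one simple remedy.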

The experimental evaluation uses 2012 U.S. House election data for four states: Wisconsin, Texas, Virginia, and Pennsylvania. Original EG values were 14.76 %, 4.09 %, 22.25 %, and 23.80 % respectively. After the heuristic was applied, the gaps dropped to 3.80 %, 3.33 %, 3.61 %, and 8.64 %, while district compactness was preserved. The reductions are large for Wisconsin, Virginia, and Pennsylvania. For Texas, whose original gap was already comparatively low, the reduction is modest. The runtime for each state was under five minutes on a standard workstation.
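The before and after figures above can be turned into percentage‑point and relative reductions with a few lines of arithmetic. The numbers are copied from the paragraph above; the helper name `reduction` is hypothetical.

```python
# Efficiency gaps in percent, as reported above: (before, after).
reported = {
    "Wisconsin": (14.76, 3.80),
    "Texas": (4.09, 3.33),
    "Virginia": (22.25, 3.61),
    "Pennsylvania": (23.80, 8.64),
}


def reduction(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point drop, drop as a fraction of the original gap)."""
    drop = before - after
    return drop, drop / before


for state, (before, after) in reported.items():
    pp, rel = reduction(before, after)
    print(f"{state:<13} {before:6.2f}% -> {after:5.2f}%  (-{pp:.2f} pp, {rel:.1%} smaller)")
```

The script gives relative reductions of roughly 74%, 19%, 84%, and 64% for Wisconsin, Texas, Virginia, and Pennsylvania respectively.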

Beyond raw numbers, the paper discusses three practical considerations: (1) the relationship between EG and seat gain, warning that overly aggressive EG reduction can unintentionally alter the expected seat distribution; (2) the trade‑off between compactness and EG, showing that modest compactness constraints can be incorporated without sacrificing much EG improvement; and (3) the “naturalness” of existing district shapes, noting that states with historically organic boundaries tend to require fewer adjustments.
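A standard identity from Stephanopoulos and McGhee's analysis makes the link in point (1) between EG and seats concrete. Suppose every district has the same turnout. Then the signed efficiency gap in favor of party A equals (S - 0.5) - 2(V - 0.5), where S is A's seat share and V is A's statewide vote share. A zero gap therefore requires each point of vote share above 50% to yield about two points of seat share.

The sketch below checks the identity with exact fractions. The helper names are hypothetical, tied districts are excluded, and the simplified half‑of‑turnout winning threshold is assumed.

```python
from fractions import Fraction


def signed_gap(votes_a: list[int], turnout: int) -> Fraction:
    """(W_B - W_A) / N with equal turnout per district; positive favors party A."""
    half = Fraction(turnout, 2)
    w_a = w_b = Fraction(0)
    for a in votes_a:
        b = turnout - a
        if a == b:
            raise ValueError("tied district: winner undefined")
        if a > b:                 # A wins and wastes its surplus over half
            w_a += a - half
            w_b += b
        else:                     # B wins and wastes its surplus over half
            w_a += a
            w_b += b - half
    return (w_b - w_a) / (turnout * len(votes_a))


def seat_vote_formula(votes_a: list[int], turnout: int) -> Fraction:
    """(S - 1/2) - 2 (V - 1/2): seat share S and vote share V of party A."""
    n = len(votes_a)
    seats = Fraction(sum(a > turnout - a for a in votes_a), n)
    vote_share = Fraction(sum(votes_a), turnout * n)
    return (seats - Fraction(1, 2)) - 2 * (vote_share - Fraction(1, 2))


plan = [55, 55, 10]  # party A's votes in three districts of 100 voters each
print(signed_gap(plan, 100), seat_vote_formula(plan, 100))  # 11/30 11/30
```

In the example, party A wins two of three seats (S = 2/3) with 40% of the vote (V = 2/5). The formula gives 1/6 + 1/5 = 11/30, matching the direct wasted‑vote count.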

The authors argue that, should the U.S. Supreme Court uphold the lower‑court rulings that accept EG as a legal standard, their software could serve as a concrete tool for courts to order the redrawing of maps. They also critique judicial skepticism toward mathematical evidence, emphasizing that sophisticated models can be translated into fast, usable algorithms.

Finally, the paper outlines future work: extending the framework to finer‑granularity data such as Voting Tabulation Districts, handling multi‑party systems, and integrating additional criteria (community preservation, demographic fairness) into a multi‑objective optimization model. In sum, the study provides the first non‑trivial theoretical analysis of EG minimization, demonstrates its practical tractability on real‑world data, and offers a ready‑to‑use computational approach that could influence both academic research and legal practice in combating partisan gerrymandering.
