Supervised and Unsupervised Transfer Learning for Question Answering

Although transfer learning has been shown to be successful for tasks like object and speech recognition, its applicability to question answering (QA) has yet to be well-studied. In this paper, we conduct extensive experiments to investigate the transferability of knowledge learned from a source QA dataset to a target dataset using two QA models. The performance of both models on a TOEFL listening comprehension test (Tseng et al., 2016) and MCTest (Richardson et al., 2013) is significantly improved via a simple transfer learning technique from MovieQA (Tapaswi et al., 2016). In particular, one of the models achieves the state-of-the-art on all target datasets; for the TOEFL listening comprehension test, it outperforms the previous best model by 7%. Finally, we show that transfer learning is helpful even in unsupervised scenarios when correct answers for target QA dataset examples are not available.

Research Summary

This paper investigates the applicability of transfer learning to the task of multiple‑choice question answering (MCQA). While transfer learning has become a standard technique in computer vision, speech recognition, and many natural language processing tasks, its impact on QA has not been thoroughly examined. The authors address this gap by conducting a series of controlled experiments that transfer knowledge from a large, publicly available source dataset (MovieQA) to two much smaller target datasets: the TOEFL listening comprehension test (both manual and ASR transcriptions) and the MCTest (two variants, MC160 and MC500).

The study focuses on context‑aware MCQA, where each instance consists of a story (or transcript), a question, and four or five answer choices expressed as natural language sentences. The goal is to select the single correct choice. Two well‑known neural QA models are employed: (1) End‑to‑End Memory Networks (MemN2N) and (2) a Query‑Based Attention Convolutional Neural Network (QA CNN). Both models are open‑source and have previously been applied to MCQA tasks. The authors adapt MemN2N to handle full‑sentence answer choices by adding an embedding layer for the choices and computing similarity with the aggregated question‑story representation. QA CNN builds separate similarity maps between story‑question and story‑choice pairs, processes them through a two‑stage CNN with query‑based attention, and finally classifies the choice with the highest score.

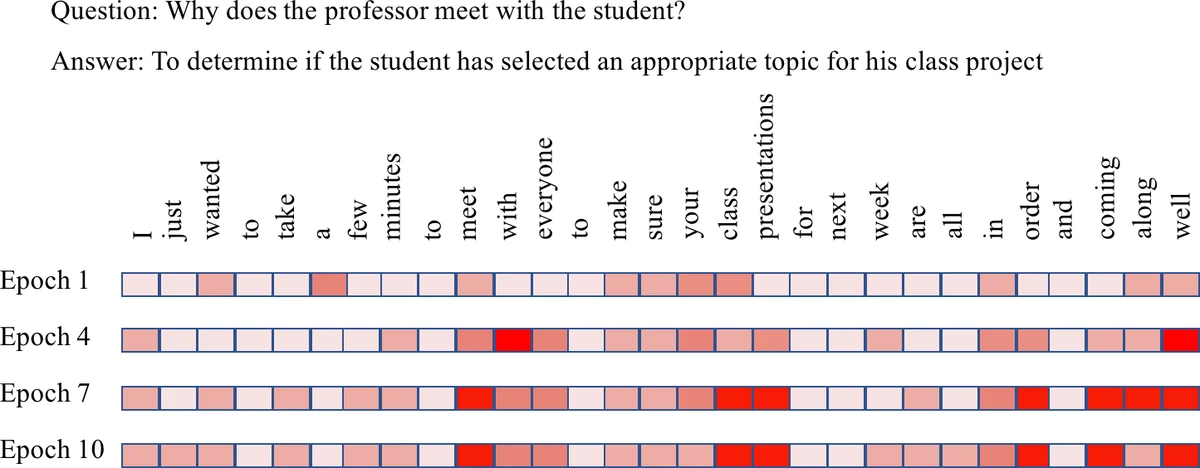
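The choice-scoring step described for the adapted MemN2N can be illustrated with a minimal sketch. It assumes a bag-of-words sentence embedding (sum of word vectors) and dot-product similarity; the embedding table, the helper names (`embed_sentence`, `pick_choice`), and the toy vectors are all hypothetical and are not taken from the paper. The `context` vector stands in for the aggregated question-story representation that the memory hops would produce.

```python
from typing import Dict, List

Vector = List[float]


def embed_sentence(tokens: List[str], table: Dict[str, Vector], dim: int) -> Vector:
    """Bag-of-words embedding: sum the vectors of all known tokens."""
    out = [0.0] * dim
    for tok in tokens:
        vec = table.get(tok)
        if vec is None:
            continue  # unknown words contribute nothing in this toy setup
        for i in range(dim):
            out[i] += vec[i]
    return out


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def pick_choice(context: Vector, choices: List[List[str]],
                table: Dict[str, Vector], dim: int) -> int:
    """Return the index of the choice most similar to the context vector."""
    scores = [dot(context, embed_sentence(c, table, dim)) for c in choices]
    return max(range(len(scores)), key=scores.__getitem__)


# Toy embedding table (hypothetical values, 2 dimensions).
TABLE = {"cat": [1.0, 0.0], "dog": [0.0, 1.0], "sat": [0.5, 0.5]}

# Stand-in for the aggregated question-story representation.
context = embed_sentence(["cat", "sat"], TABLE, 2)

choices = [["dog"], ["cat"], ["bird"]]
best = pick_choice(context, choices, TABLE, 2)
print(best)
```

In a trained model the embeddings and the context vector would be learned jointly; the sketch only shows how full-sentence choices can be compared against a single context representation.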

Transfer learning is implemented in a straightforward two‑step pipeline. First, the model is pre‑trained on the source dataset (MovieQA) using its abundant labeled examples. Second, the same model is fine‑tuned on the target dataset. Two transfer scenarios are explored: supervised transfer learning, where the target dataset provides correct answers during fine‑tuning, and unsupervised transfer learning, where the target dataset lacks any labels. In the unsupervised case, the authors adopt a self‑labeling strategy: after each epoch, the model predicts answers for all target questions, treats those predictions as pseudo‑labels, and continues fine‑tuning on this pseudo‑labeled data. This process repeats for a predefined number of epochs (Algorithm 1).
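The self-labeling loop described above can be sketched as follows. This is a toy illustration under stated assumptions, not the authors' Algorithm 1: the model is a single weight vector that scores hand-made choice features, training uses plain softmax cross-entropy with SGD, and the function names (`sgd_epoch`, `self_label_finetune`) and all data values are hypothetical.

```python
import math
from typing import List

Example = List[List[float]]  # one feature vector per answer choice


def scores(w: List[float], ex: Example) -> List[float]:
    return [sum(wi * xi for wi, xi in zip(w, choice)) for choice in ex]


def predict(w: List[float], ex: Example) -> int:
    s = scores(w, ex)
    return max(range(len(s)), key=s.__getitem__)


def sgd_epoch(w: List[float], data: List[Example], labels: List[int],
              lr: float = 0.1) -> List[float]:
    """One pass of softmax cross-entropy SGD; updates w in place."""
    for ex, y in zip(data, labels):
        s = scores(w, ex)
        m = max(s)
        exps = [math.exp(v - m) for v in s]
        z = sum(exps)
        probs = [e / z for e in exps]
        for k, choice in enumerate(ex):
            g = probs[k] - (1.0 if k == y else 0.0)
            for i, xi in enumerate(choice):
                w[i] -= lr * g * xi
    return w


def self_label_finetune(w: List[float], target: List[Example],
                        epochs: int = 3, lr: float = 0.1) -> List[float]:
    """Fine-tune on the target set using the model's own predictions as labels."""
    for _ in range(epochs):
        pseudo = [predict(w, ex) for ex in target]  # relabel before every epoch
        sgd_epoch(w, target, pseudo, lr)
    return w


# Step 1: pre-train on labeled source data (toy values).
source = [[[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]]]
source_labels = [0, 1]
w = [0.0, 0.0]
for _ in range(5):
    sgd_epoch(w, source, source_labels)

# Step 2: adapt to unlabeled target data via self-labeling.
target = [[[0.2, 0.9], [0.8, 0.1]], [[0.7, 0.3], [0.1, 0.6]]]
self_label_finetune(w, target)
print([predict(w, ex) for ex in target])
```

Because the pseudo-labels come from the model itself, any errors in the pre-trained model's predictions are reinforced rather than corrected, which is why the quality of the source-trained model matters in this setting.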

The experimental protocol mirrors the original training procedures for each model. For pre‑training, the authors follow the exact hyper‑parameter settings described in the MovieQA and QA CNN papers. During fine‑tuning, they train on the target training split, tune on the target development split, and report accuracy on the held‑out test split. Accuracy is the sole evaluation metric.
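As a small illustration of this protocol, the sketch below computes accuracy and picks a model checkpoint by development-set accuracy before anything is evaluated on the test split. The checkpoint names and prediction lists are hypothetical placeholders, not values from the paper.

```python
from typing import Dict, List


def accuracy(predictions: List[int], gold: List[int]) -> float:
    """Fraction of questions whose predicted choice matches the gold choice."""
    if len(predictions) != len(gold):
        raise ValueError("predictions and gold must have the same length")
    if not gold:
        raise ValueError("cannot compute accuracy on an empty set")
    return sum(p == g for p, g in zip(predictions, gold)) / len(gold)


def select_by_dev(candidates: Dict[str, List[int]], dev_gold: List[int]) -> str:
    """Return the name of the candidate with the highest dev accuracy."""
    return max(candidates, key=lambda name: accuracy(candidates[name], dev_gold))


# Hypothetical dev-set predictions from two checkpoints.
dev_gold = [0, 1, 2, 0]
candidates = {
    "epoch_1": [0, 1, 2, 3],  # 3 of 4 correct
    "epoch_2": [0, 1, 2, 0],  # 4 of 4 correct
}
best = select_by_dev(candidates, dev_gold)
print(best)
```

Only the selected checkpoint would then be scored on the held-out test split, so that the test set plays no part in model selection.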

Results (Table 2) show consistent gains from transfer learning across all target datasets and both models. For the QA CNN, source‑only training already outperforms target‑only training (e.g., 51.2% vs. 48.9% on TOEFL‑manual). Adding the target data (source + target) yields further improvement, and fine‑tuning all parameters leads to the best scores: 56.1% on TOEFL‑manual, 55.3% on TOEFL‑ASR, 73.8% on MC160, and 72.3% on MC500. The authors report that one of the models achieves state‑of‑the‑art results on all target datasets; on the TOEFL listening comprehension test, it outperforms the previous best model by 7%. MemN2N also benefits from transfer, though the gains are smaller and the best performance is achieved by fine‑tuning only the embedding layers rather than the full network.
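The difference between fine-tuning all parameters and fine-tuning only the embedding layers comes down to which parameter groups receive gradient updates. The following minimal sketch shows that idea with plain Python lists; the group names (`embedding`, `attention`, `output`), the gradient values, and the `apply_update` helper are hypothetical and do not reflect the actual layer structure of either model.

```python
from typing import Dict, Iterable, List

Params = Dict[str, List[float]]


def apply_update(params: Params, grads: Params, trainable: Iterable[str],
                 lr: float = 0.1) -> Params:
    """Return new parameters; only groups named in `trainable` are updated."""
    allowed = set(trainable)
    updated: Params = {}
    for name, values in params.items():
        if name in allowed:
            updated[name] = [v - lr * g for v, g in zip(values, grads[name])]
        else:
            updated[name] = list(values)  # frozen: copied unchanged
    return updated


# Hypothetical pre-trained parameters and target-domain gradients.
pretrained = {"embedding": [1.0, 2.0], "attention": [0.5], "output": [3.0]}
grads = {"embedding": [1.0, -1.0], "attention": [2.0], "output": [-4.0]}

# Fine-tune every group.
full = apply_update(pretrained, grads, trainable=pretrained.keys())

# Fine-tune only the embedding group; everything else stays at its
# pre-trained value.
emb_only = apply_update(pretrained, grads, trainable=["embedding"])

print(full)
print(emb_only)
```

Freezing most groups limits how far the model can drift from its pre-trained state, which can help when the target dataset is small.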

The unsupervised transfer experiments demonstrate that even without true labels, the self‑labeling approach can recover a substantial portion of the supervised gains, suggesting that the knowledge encoded in the source‑trained model is robust enough to generate useful pseudo‑labels. This is particularly valuable for domains where annotating QA pairs is expensive or infeasible.

Key insights from the paper include:

- Generalizable Representations: Large‑scale textual QA data (MovieQA) can teach models to capture story‑level reasoning patterns that transfer to unrelated domains such as academic listening comprehension and elementary reading comprehension.

- Supervised vs. Unsupervised Transfer: While supervised fine‑tuning yields the highest gains, unsupervised self‑labeling still provides meaningful improvements, suggesting a practical pathway for low‑resource QA scenarios.

- Model‑Specific Transfer Dynamics: The more complex QA CNN benefits from fine‑tuning all layers, indicating that its hierarchical attention mechanisms adapt well to new domains. In contrast, MemN2N shows diminishing returns when all parameters are updated, implying that its simpler memory‑addressing scheme may already capture domain‑agnostic features after pre‑training.

- Data Efficiency: Transfer learning dramatically reduces the amount of target‑domain data needed to achieve competitive performance, which is crucial for QA datasets that are costly to collect.

The authors acknowledge several limitations. The experiments are confined to two domains (movie plots and educational listening/reading) and two model families; extending the analysis to other domains (e.g., biomedical QA) and newer transformer‑based architectures would strengthen the conclusions. Moreover, the unsupervised self‑labeling procedure may introduce noisy pseudo‑labels; future work could explore confidence‑based filtering or semi‑supervised techniques to mitigate this noise.

In summary, the paper provides compelling empirical evidence that both supervised and unsupervised transfer learning are effective strategies for improving MCQA performance on small target datasets. By demonstrating state‑of‑the‑art results on TOEFL listening comprehension and MCTest, the work establishes a solid baseline for future research on domain adaptation in question answering.
