Consistent CCG Parsing over Multiple Sentences for Improved Logical Reasoning

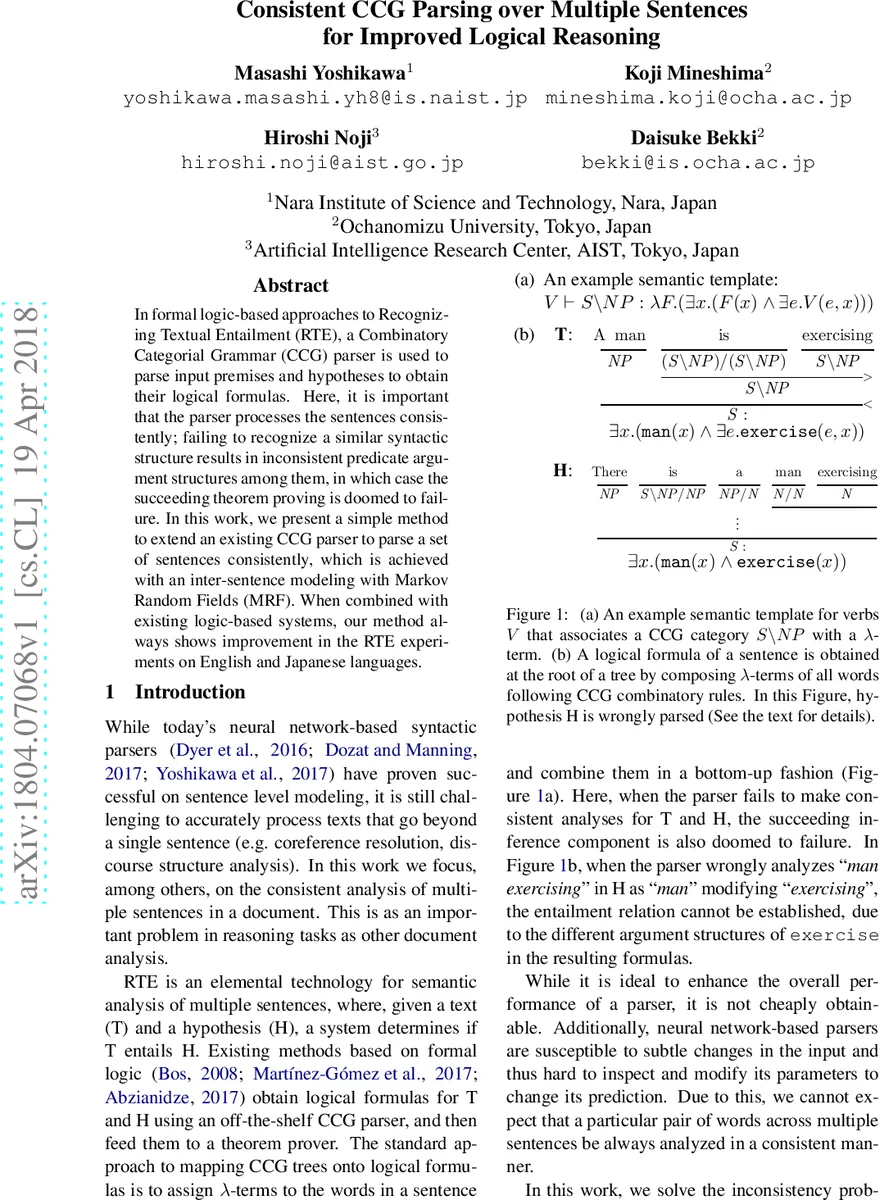

In formal logic-based approaches to Recognizing Textual Entailment (RTE), a Combinatory Categorial Grammar (CCG) parser is used to parse input premises and hypotheses to obtain their logical formulas. Here, it is important that the parser processes the sentences consistently; failing to recognize a similar syntactic structure results in inconsistent predicate argument structures among them, in which case the succeeding theorem proving is doomed to failure. In this work, we present a simple method to extend an existing CCG parser to parse a set of sentences consistently, which is achieved with an inter-sentence modeling with Markov Random Fields (MRF). When combined with existing logic-based systems, our method always shows improvement in the RTE experiments on English and Japanese languages.

💡 Research Summary

The paper addresses a critical weakness in logic‑based Recognizing Textual Entailment (RTE) pipelines that rely on Combinatory Categorial Grammar (CCG) parsing: the parser typically processes each sentence in isolation, which can lead to inconsistent syntactic analyses across premises and hypotheses. When the same lexical item receives different CCG categories in different sentences, the resulting logical forms become mismatched and downstream theorem proving fails.

To remedy this, the authors extend an existing CCG parser with a global inter‑sentence consistency model based on a Markov Random Field (MRF). In the MRF, each word token is a node, and each “context” (initially defined as a unigram surface form, with a bigram POS variant for Japanese) is another node. Edges connect words to the contexts they share. The model assigns a CCG category label to every node and maximizes a global consistency score g(z) that combines (i) local word‑level scores f_w(z_w) derived from the parser’s supertag probabilities and (ii) pairwise consistency scores f_{w,c}(z_w, z_c) that reward identical or simplified category matches (weighted by δ₁, δ₂, δ₃). The NULL label for context nodes allows the model to switch off constraints when needed.

Parsing itself uses the state‑of‑the‑art A* decoder from depCCG (Yoshikawa et al., 2017). The A* algorithm treats a CCG tree as a combination of supertag probabilities (p_tag) and dependency head probabilities (p_dep), yielding a locally factored probability for each tree.

The core technical contribution is the joint optimization of the local A* scores and the global MRF score via dual decomposition. The authors formulate a constrained maximization problem where the category assigned to each word by the parser (c_w) must equal the category assigned to the same word node in the MRF (z_w). They introduce Lagrange multipliers u and iteratively solve two sub‑problems: (1) an MRF inference step (dynamic programming on a naïve Bayes structure) that maximizes g(z) plus a linear term from u, and (2) an A* parsing step that maximizes the log‑probability of the tree minus the same linear term. After each iteration, u is updated with a step size α, and the process repeats for K iterations (α=0.0002, K=500, with decay). This scheme preserves the exact A* decoder while only adding a modest overhead for the MRF updates.

Experiments were conducted on two logic‑based RTE systems: ccg2lambda (Martínez‑Gómez et al., 2017) and LangPro (Abzianidze, 2015/2017). The authors evaluated on the English SICK dataset and the Japanese JSeM dataset. For English, adding the MRF to depCCG consistently improved accuracy and recall across both systems. For example, LangPro15’s accuracy rose from 79.05% to 80.88%, and LangPro17’s from 81.04% to 81.61%. ccg2lambda’s accuracy increased from 81.95% to 82.86% with the MRF. Similar gains were observed for Japanese, where accuracy rose from 67.87% to 71.31% with the MRF.

Error analysis shows that the MRF successfully resolves ambiguous participial constructions (e.g., “man exercising”) by leveraging the less ambiguous counterpart (“A man is exercising”) to assign the correct S\NP category. It also corrects certain PP‑attachment and coordinate‑structure errors. However, when the same surface form legitimately takes different syntactic roles in premise and hypothesis (e.g., “eat” as transitive vs. intransitive), the MRF’s strict consistency can mislabel, indicating the need for more nuanced context definitions (e.g., N‑grams, POS tags) or adaptive weighting of δ parameters.

The authors conclude that global inter‑sentence consistency modeling via MRF and dual decomposition can substantially improve the end‑to‑end performance of logic‑based RTE without requiring a new parser architecture. Future work includes extending the approach to richer discourse phenomena (coreference, discourse relations), learning context selection automatically, and scaling to more complex datasets where surface‑form based contexts may be insufficient.

Comments & Academic Discussion

Loading comments...

Leave a Comment