Models for Capturing Temporal Smoothness in Evolving Networks for Learning Latent Representation of Nodes

In a dynamic network, the neighborhood of each vertex evolves across different temporal snapshots of the network. Accurate modeling of this temporal evolution can help solve complex tasks involving real-life social and interaction networks. However, existing models for learning latent representation are inadequate for obtaining the representation vectors of the vertices for different time-stamps of a dynamic network in a meaningful way. In this paper, we propose latent representation learning models for dynamic networks which overcome the above limitation by considering two different kinds of temporal smoothness: (i) retrofitted, and (ii) linear transformation. The retrofitted model tracks the representation vector of a vertex over time, facilitating vertex-based temporal analysis of a network. On the other hand, the linear transformation based model provides a smooth transition operator which maps the representation vectors of all vertices from one temporal snapshot to the next (unobserved) snapshot; this facilitates prediction of the state of a network in a future time-stamp. We validate the performance of our proposed models by employing them for solving the temporal link prediction task. Experiments on 9 real-life networks from various domains validate that the proposed models are significantly better than the existing models for predicting the dynamics of an evolving network.

💡 Research Summary

The paper addresses a fundamental limitation of existing network embedding methods: they treat each snapshot of a dynamic graph as an independent static network, thereby ignoring temporal continuity. To overcome this, the authors propose two novel embedding frameworks that explicitly enforce temporal smoothness across snapshots.

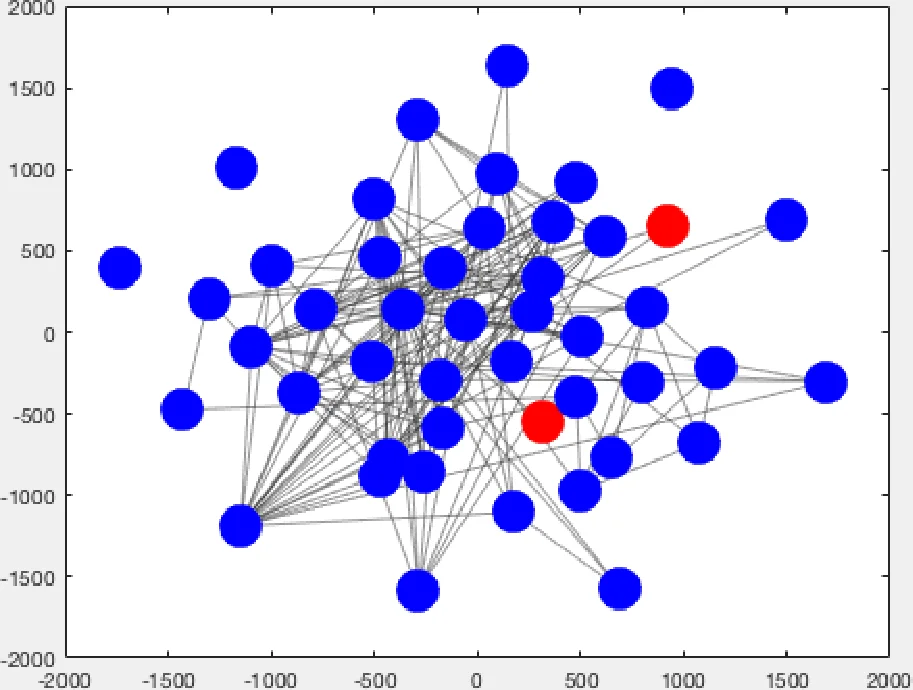

The first framework, called the retrofitted model, embodies a local temporal smoothness assumption. It starts by learning a conventional static embedding (e.g., DeepWalk, Node2Vec, LINE) for the first snapshot G₁, yielding a mapping ϕ₁: V → ℝᵈ. For each subsequent snapshot G_t (t > 1), only the vertices that experience a change in their neighbourhood are updated. The update minimizes a loss that balances fidelity to the previous embedding (‖ϕ_t(v) – ϕ_{t‑1}(v)‖²) with a regularizer that forces the new embedding to be close to the embeddings of the current neighbours (∑_{u∈N_t(v)}‖ϕ_t(v) – ϕ_t(u)‖²). This iterative retrofitting yields a smooth trajectory for each vertex, enabling fine‑grained analysis of community evolution, role changes, or anomaly detection.
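The retrofitting update described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function name `retrofit_snapshot`, the weights `alpha` and `beta`, and the fixed iteration count are assumptions introduced here. For a single vertex v, the weighted loss α‖ϕ_t(v) – ϕ_{t‑1}(v)‖² + β∑_{u∈N_t(v)}‖ϕ_t(v) – ϕ_t(u)‖² is quadratic in ϕ_t(v), so with the neighbours held fixed its minimizer is a weighted average of the previous vector and the current neighbour vectors.

```python
import numpy as np

def retrofit_snapshot(prev_emb, neighbors, changed, alpha=1.0, beta=1.0, n_iters=10):
    """Sketch of one retrofitting pass from snapshot t-1 to snapshot t.

    prev_emb  : (n, d) array, embeddings at time t-1
    neighbors : dict mapping vertex id -> list of neighbour ids in G_t
    changed   : iterable of vertex ids whose neighbourhood changed
    alpha     : weight on staying close to the previous embedding (assumed)
    beta      : weight on staying close to current neighbours (assumed)
    """
    prev_emb = np.asarray(prev_emb, dtype=float)
    emb = prev_emb.copy()
    for _ in range(n_iters):
        for v in changed:
            nbrs = neighbors.get(v, [])
            if not nbrs:
                continue  # isolated vertex: keep its previous vector
            nbr_sum = emb[nbrs].sum(axis=0)
            # Closed-form minimizer of the per-vertex quadratic loss,
            # holding the neighbour vectors fixed.
            emb[v] = (alpha * prev_emb[v] + beta * nbr_sum) / (alpha + beta * len(nbrs))
    return emb
```

Vertices outside `changed` keep their previous vectors, which matches the description that only vertices with a changed neighbourhood are updated. Larger `alpha` keeps trajectories smoother; larger `beta` pulls a vertex more strongly toward its current neighbours.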

The second framework, the linear transformation model, captures global temporal smoothness. After independently embedding every snapshot with any static method, the authors learn a linear operator W ∈ ℝ^{d×d} that maps the embedding of one time step to the next: ϕ_t ≈ ϕ_{t‑1} W. Two variants are explored: (i) homogeneous mapping, where a single shared matrix W is used for all consecutive pairs, and (ii) heterogeneous mapping, where each pair (t‑1, t) has its own matrix W_t, later combined (e.g., by averaging) to obtain a smoother overall transformation. The objective is a Frobenius‑norm reconstruction error with optional regularization to prevent over‑fitting. Once learned, W can be applied to the latest known embedding to predict the latent representation of an unseen future snapshot, directly supporting tasks such as future link prediction or network growth simulation.
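As a rough sketch of the two variants above, the operator W can be fitted by ridge-regularized least squares on the Frobenius-norm reconstruction error. The helper names (`fit_transition`, `homogeneous_W`, `heterogeneous_W`, `predict_next`), the ridge weight `lam`, and the closed-form solver are assumptions made for illustration; the summary does not say which optimizer the authors use.

```python
import numpy as np

def fit_transition(X, Y, lam=1e-3):
    """Solve min_W ||X W - Y||_F^2 + lam ||W||_F^2 in closed form."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ Y)

def homogeneous_W(embs, lam=1e-3):
    """One shared W fitted jointly on all consecutive snapshot pairs."""
    X = np.vstack(embs[:-1])   # embeddings at times 1..T-1, stacked
    Y = np.vstack(embs[1:])    # embeddings at times 2..T, stacked
    return fit_transition(X, Y, lam)

def heterogeneous_W(embs, lam=1e-3):
    """One W_t per consecutive pair, combined here by simple averaging."""
    Ws = [fit_transition(embs[t - 1], embs[t], lam) for t in range(1, len(embs))]
    return np.mean(Ws, axis=0)

def predict_next(embs, W):
    """Apply W to the latest known embedding to estimate the unseen one."""
    return embs[-1] @ W
```

Here `embs` is a list of (n, d) arrays, one per snapshot, with rows aligned to the same vertex ordering. Averaging is only one way to combine the per-pair matrices; the text mentions it as an example rather than the definitive choice.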

The authors formalize the problem: given a sequence of undirected graphs G = {G₁,…,G_T} with a common vertex set V, learn a sequence of mappings ϕ_t that respect both structural proximity (first‑order and second‑order) and temporal dynamics. They argue that existing dynamic embedding approaches (e.g., Zhu et al., 2016) only incorporate first‑order proximity and a simple temporal regularizer, lacking the ability to capture higher‑order relationships and the two distinct notions of smoothness introduced here.

Experimental evaluation is conducted on nine real‑world datasets spanning citation networks, social platforms, and instant‑messaging logs. The task is temporal link prediction: given snapshots up to time T, predict edges that will appear in G_{T+1}. Performance is measured using AUC, PR‑AUC, and NDCG. Both proposed models consistently outperform the state‑of‑the‑art temporal smoothness baseline, achieving improvements ranging from 0.20 to 0.60 points across metrics. The retrofitted model excels when short‑term, vertex‑level changes dominate, while the linear transformation model shows superior long‑term predictive power due to its ability to extrapolate the global evolution of the embedding space.
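To make the evaluation setup concrete, the sketch below scores candidate vertex pairs from a set of embeddings and computes AUC against the edges observed in G_{T+1}. Scoring a pair by the dot product of its two embedding vectors is an assumption made here for illustration; the summary does not state how the authors turn embeddings into edge scores. The function names `edge_scores` and `auc` are likewise hypothetical.

```python
import numpy as np

def edge_scores(emb, pairs):
    """Score each candidate pair (u, v) by the dot product of its embeddings.

    The dot-product scorer is an illustrative assumption, not the paper's method.
    """
    pairs = np.asarray(pairs)
    return np.einsum("ij,ij->i", emb[pairs[:, 0]], emb[pairs[:, 1]])

def auc(scores, labels):
    """AUC as the probability that a true edge outscores a non-edge (ties count 0.5)."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    diff = pos[:, None] - neg[None, :]   # all positive-vs-negative comparisons
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / (len(pos) * len(neg))
```

The pairwise comparison is quadratic in the number of candidates, which is fine for a small illustration; a rank-based computation would be preferable on large candidate sets.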

Key contributions are: (1) introduction of two complementary temporal smoothness concepts (local vs. global) and corresponding embedding algorithms; (2) extensive empirical validation demonstrating substantial gains over prior work; (3) public release of code, datasets, and experimental pipelines to foster reproducibility.

In conclusion, the paper provides a solid methodological foundation for temporally aware network embeddings. By decoupling vertex‑centric and network‑centric smoothness, it offers flexibility for a wide range of downstream tasks. Future research directions suggested include non‑linear transformation operators, incorporation of node/edge attributes, online streaming updates, and exploration of attention‑based mechanisms to weight temporal contributions adaptively.
