Coded Caching in a Multi-Server System with Random Topology

Cache-aided content delivery is studied in a multi-server system with $P$ servers and $K$ users, each equipped with a local cache memory. In the delivery phase, each user connects randomly to any $\rho$ out of $P$ servers. Thanks to the availability …

Authors: Nitish Mital, Deniz Gunduz, Cong Ling

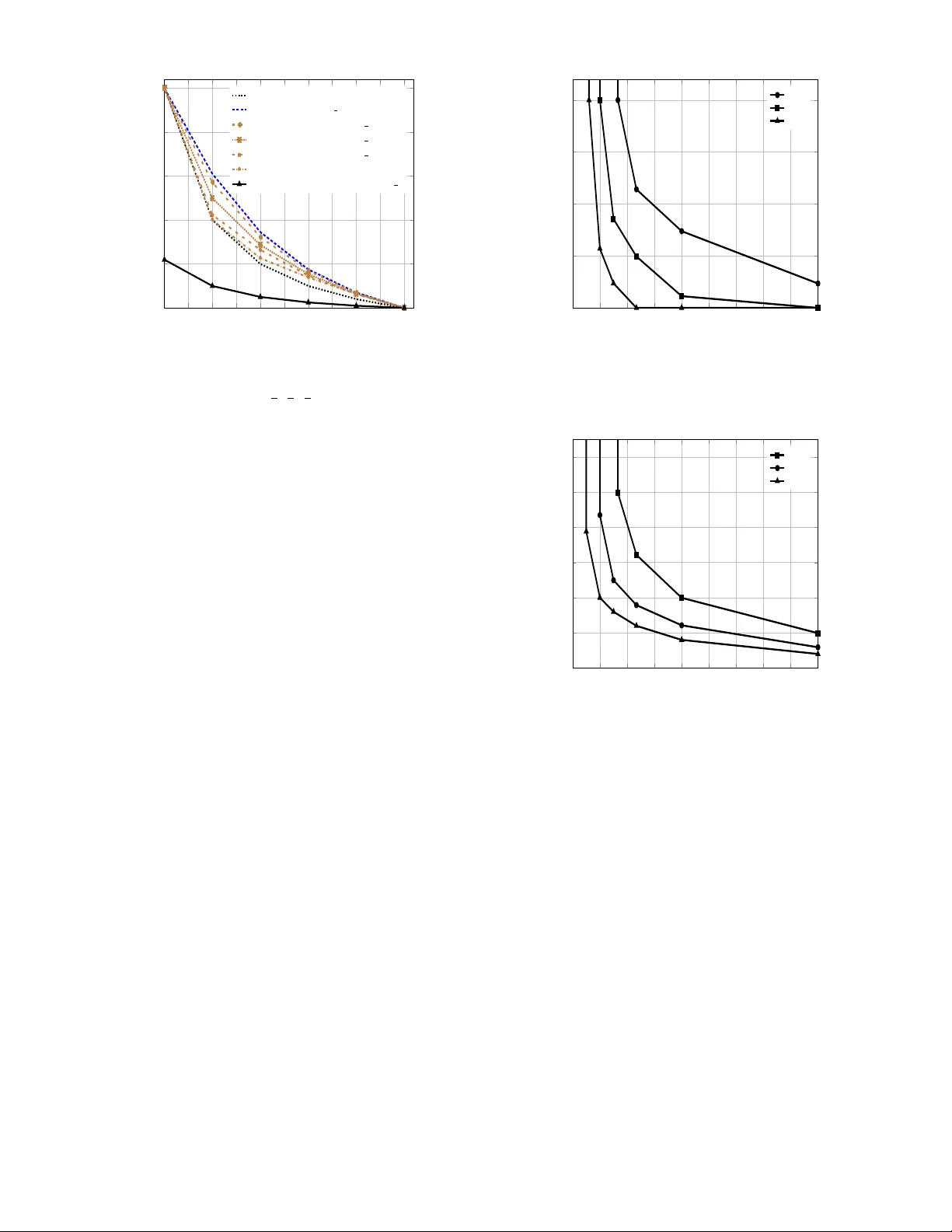

Nitish Mital, Deniz Gündüz and Cong Ling
Department of Electrical and Electronic Engineering, Imperial College London
Email: {n.mital, d.gunduz, c.ling}@imperial.ac.uk

Abstract: Cache-aided content delivery is studied in a multi-server system with $P$ servers and $K$ users, each equipped with a local cache memory. In the delivery phase, each user connects randomly to any $\rho$ out of $P$ servers. Thanks to the availability of multiple servers, which model small base stations (SBSs) with limited storage capacity, user demands can be satisfied with reduced storage capacity at each server and reduced delivery rate per server; however, this also leads to reduced multicasting opportunities compared to a single server serving all the users simultaneously. A joint storage and proactive caching scheme is proposed, which exploits coded storage across the servers, uncoded cache placement at the users, and coded delivery. The delivery latency is studied for both successive and simultaneous transmission from the servers. It is shown that, with successive transmission, the achievable average delivery latency is comparable to that achieved by a single server, while the gap between the two depends on $\rho$, the available redundancy across servers, and can be reduced by increasing the storage capacity at the SBSs.

I. INTRODUCTION

The unprecedented growth in transmitted data volumes across networks necessitates the design of more efficient delivery methods that can exploit the available memory space and processing power of individual network nodes to increase the throughput and efficiency of data availability. Coded caching and distributed storage have received significant attention in recent years as promising techniques to achieve these goals.
With proactive caching, part of the data can be pushed into nodes' local cache memories during off-peak hours, called the placement phase, to reduce the burden on the network, particularly the wireless downlink, during peak-traffic periods when all the users place their requests, called the delivery phase. Intelligent design of the cache contents creates multicasting opportunities across users, and multiple demands can be satisfied simultaneously through coded delivery. Coded caching is able to utilize the cumulative cache capacity in the network to satisfy all the users at much lower rates, or equivalently with lower delivery latency [1]-[10].

A different type of coded caching is shown to improve the delivery performance in the so-called “femtocaching” scenario [3]. In femtocaching, files are replicated or coded at multiple cache-equipped small base stations (SBSs) so that a user may reconstruct its request from only a subset of the available SBSs. SBSs can act as edge caches and provide contents to users directly, reducing latency, backhaul load or the energy consumption [3], [6].

Fig. 1: Examples of different network topologies for $P = 3$ and $K = 4$ with $\rho = 2$. (a) $\rho = 2$, $q_1 = 2$, $q_2 = 4$, $q_3 = 2$. (b) $\rho = 2$, $q_1 = 4$, $q_2 = 4$, $q_3 = 0$ (best topology for successive transmissions, worst topology for simultaneous transmissions). (c) $\rho = 2$, $q_1 = 3$, $q_2 = 3$, $q_3 = 2$ (worst topology for successive transmissions, best topology for simultaneous transmissions).

This work was supported in part by the European Union's H2020 research and innovation programme under the Marie Skłodowska-Curie Action SCAVENGE (grant agreement no. 675891), and by the European Research Council (ERC) Starting Grant BEACON (grant agreement no. 725731).
Coding for distributed storage systems has been extensively studied in the literature (see, for example, [11], [12]), and in the femtocaching scenario, ideal rateless maximum distance separable (MDS) codes allow users to recover contents by collecting parity bits from only a subset of SBSs they connect to [3].

In this work, we combine distributed storage at SBSs, similar to the “femtocaching” framework [3], with cache storage at the users, and consider coded delivery over error-free shared broadcast links [1]. We consider a library of $N$ files stored across $P$ SBSs, each equipped with a limited-capacity storage space (see Fig. 1). Differently from the existing literature, we consider a random connectivity model: during the delivery phase, each user connects only to a random subset of $\rho$ SBSs, where $\rho \le P$. This may be due to physical variations in the channel, or resource constraints. Most importantly, these connections that form the network topology are not known in advance during the placement phase; therefore, the cache placement cannot be designed for a particular network topology. Storing the files across multiple SBSs, and allowing users to connect randomly to a subset of them, results in a loss in multicasting opportunities for the servers, indicating a trade-off between the coded caching gain and the flexibility provided by distributed storage across the servers, which, to the best of our knowledge, has not been studied before.

On the other hand, the presence of multiple servers may improve the latency if user requests can be satisfied in parallel. Accordingly, two scenarios are discussed depending on the delivery protocol. If the servers transmit successively, i.e., time-division transmission, the total latency is the sum of the latencies on each link in delivering all the requests. If the servers operate in parallel, i.e., simultaneous transmission, then the latency is given by the link with the maximum latency.
We propose a practical coded cache placement and delivery scheme that exploits MDS coding across servers simultaneously with coded caching and delivery to users. In the successive transmission scenario, we show that the cost for the flexibility of distributed storage is a scaling of the latency by a constant. We also characterize the average worst-case latency (over all user-server associations) of the proposed scheme by assuming that the users connect to a uniformly random subset of the servers, and show that it is relatively close to the best-case performance, which is the single-server centralized delivery time derived in [1], achieved when all users connect to the same set of servers. We observe that, as the server storage capacities increase, the trade-off between the average delivery time and the user cache memory improves, approaching the single-server delivery time. We also identify the delivery latency of the proposed scheme when the servers can transmit simultaneously, and characterize the achievable average worst-case delivery time as a function of the server storage capacity for different $\rho$ values.

In a related work [8], the authors study coded caching schemes presented in [1] and [7] when parity servers are available. The authors consider special scenarios with one and two parity servers. They propose a scheme that stripes the files into blocks, and codes them across the servers with a systematic MDS code, and they also propose a scheme for the scenario in which files are stored as whole units in the servers, without striping. In our work, we do not specify servers as parity servers, and instead propose a scheme that generalizes to the use of any type of MDS code and any number of storage servers. We study the impact of the topology on the sum and maximum delivery rates, and the trade-off between the server storage space and the average of these rates.
In [9], the authors consider multiple servers serving the users through an intermediate network of relay nodes, each server having access to all the files in the library. The authors study the delivery delay considering simultaneous transmission from the servers. Note that our model considers both limited-storage servers and a random topology over the delivery network, which is unknown at the placement phase. Another line of related work studies caching in combination networks [10], [13], which consider a single server serving cache-equipped users through multiple relay nodes. The server is connected to these relays through unicast links, which in turn serve a distinct subset of a fixed number of users through unicast links. A combination network with cache-enabled relay nodes is considered in [13]. However, the symmetry of a standard combination network, which would be unrealistic in many practical scenarios, and the assumption of a fixed and known network topology during the placement phase make the caching scheme and the analysis fundamentally different from our paper.

Fig. 2: Segmentation, MDS coding and placement of files.

Notations. For two integers $i < j$, we denote the set $\{i, i+1, \ldots, j\}$ by $[i : j]$, while the set $[1 : j]$ is denoted by $[j]$. Sets are denoted with the calligraphic font, and $|\mathcal{A}|$ denotes the cardinality of set $\mathcal{A}$. For $\mathcal{A} = \{a_1, a_2, \ldots, a_p\}$, we define $X_{\mathcal{A}} \triangleq (X_{a_1}, \ldots, X_{a_p})$.

II. SYSTEM MODEL

We consider the system model illustrated in Fig. 1 with $P$ servers, denoted by $S_1, S_2, \ldots, S_P$, serving $K$ users, denoted by $U_1, U_2, \ldots, U_K$. There is a library of $N$ files $W_1, W_2, \ldots, W_N$, each of length $F$ bits uniformly distributed over $[2^F]$. Each user has access to a local cache memory of capacity $M_U F$ bits, $0 \le M_U \le N$, while each server has a storage memory of capacity $M_S F$ bits. The caching scheme consists of two phases: placement phase and delivery phase.
We consider a centralized placement scenario as in [1], which is carried out centrally with the knowledge of the servers and the users participating in the delivery phase. However, neither the user demands nor the network topology is known in advance during the placement phase. In the delivery phase, we assume that each user randomly connects to $\rho$ servers out of $P$, where $\rho \le P$, and requests a single file from the library. For $j \in [K]$, let $\mathcal{Z}_j$ denote the set of servers $U_j$ connects to, where $|\mathcal{Z}_j| = \rho$, and $d_j \in [N]$ denotes the index of the file it requests. For example, in Fig. 1a, $\mathcal{Z}_1 = \{S_1, S_2\}$, $\mathcal{Z}_2 = \{S_1, S_2\}$, $\mathcal{Z}_3 = \{S_2, S_3\}$ and $\mathcal{Z}_4 = \{S_2, S_3\}$. Let the demand vector be denoted by $\mathbf{d} \triangleq (d_1, d_2, \ldots, d_K)$. The topology of the network, i.e., which users are connected to which servers, and the demands of the users are revealed to the servers at the beginning of the delivery phase.

The complete library must be stored at the servers in a coded manner to provide redundancy, since each user connects only to a random subset of the servers. Since any user should be able to reconstruct any requested file from its own cache memory and the servers it is connected to, the total cache capacity of a user and any $\rho$ servers must be sufficient to store the whole library; that is, we must have $M_U + \rho M_S \ge N$.

Let $\mathcal{K}_p$ denote the set of users served by $S_p$, for $p \in [P]$, and define the random variable $Q_p \triangleq |\mathcal{K}_p|$, which denotes the number of users served by $S_p$. We shall denote a particular realization of $Q_p$ for a given topology as $q_p$, where we have $\sum_{p=1}^{P} q_p = K\rho$. For example, in Fig. 1a, $\mathcal{K}_1 = \{U_1, U_2\}$, $\mathcal{K}_2 = \{U_1, U_2, U_3, U_4\}$, $\mathcal{K}_3 = \{U_3, U_4\}$. Server $S_p$ transmits message $X_p$ of size $R_p F$ bits to the users connected to it, i.e., users in set $\mathcal{K}_p$, over the corresponding shared link.
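The bookkeeping above can be illustrated with a minimal sketch. It takes the Fig. 1a topology as stated in the text, derives the served-user sets $\mathcal{K}_p$ and the loads $q_p$, and checks the identity $\sum_p q_p = K\rho$. The function names `served_users` and `feasible`, and the numeric values passed to `feasible`, are illustrative assumptions and do not come from the paper.

```python
def served_users(Z, P):
    """Map each server p to the set K_p of users connected to it."""
    return {p: {j for j, servers in Z.items() if p in servers}
            for p in range(1, P + 1)}


def feasible(M_U, M_S, rho, N):
    """Storage condition from the text: M_U + rho * M_S >= N."""
    return M_U + rho * M_S >= N


# Topology of Fig. 1a: user index -> set of server indices (Z_j).
Z = {1: {1, 2}, 2: {1, 2}, 3: {2, 3}, 4: {2, 3}}
P, K, rho = 3, len(Z), 2

K_sets = served_users(Z, P)
q = [len(K_sets[p]) for p in range(1, P + 1)]

print(K_sets)          # K_1 = {1, 2}, K_2 = {1, 2, 3, 4}, K_3 = {3, 4}
print(q, sum(q))       # [2, 4, 2] 8, and 8 == K * rho
```

Every user contributes exactly $\rho$ connections, which is why the loads always sum to $K\rho$ regardless of the topology.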
The message $X_p$ is a function of the demand vector $\mathbf{d}$, the network topology, the storage memory contents of server $S_p$, and the cache contents of the users in $\mathcal{K}_p$. User $U_j$ receives the messages $X_{\mathcal{Z}_j}$, and reconstructs its requested file $W_{d_j}$ using these messages and its local cache contents.

Our goal is to minimize the delivery time, which is the time by which all the user requests can be satisfied. The delivery time depends on the operation of the SBSs. If each SBS transmits over an orthogonal frequency band, the requests can be delivered in parallel, and the normalized delivery time is given by $T = \max_p R_p$, where $F R_p$ is the number of bits transmitted from server $S_p$ during the delivery phase. If, instead, the servers transmit successively in a time-division manner, which is suitable for user devices that are simple and not capable of multihoming on multiple frequencies, the normalized delivery time is given by $T = \sum_{p=1}^{P} R_p$. Our goal will be to find the average worst-case delivery time, where the worst case refers to the fact that all the users can correctly decode their requested files, independent of the combination of files requested by them, and the averaging is over all possible network topologies. Assuming that $N \ge K$ (i.e., the number of files is at least the number of users), it is not difficult to see that all the users requesting a different file corresponds to the worst-case scenario.

III. CODED DISTRIBUTED STORAGE AND CACHING SCHEME

We first note that our system model brings together aspects of distributed storage and proactive caching/coded delivery. To see this, consider the system without any user caches, i.e., $M_U = 0$, which is equivalent to a distributed storage system with unreliable servers. It is known that MDS codes provide much higher reliability and efficiency compared to replication in this scenario [11].
On the other hand, when the servers are reliable, i.e., $\rho = P$, our system is equivalent to the one in [1], and coded delivery provides significant reductions in the delivery rate. Accordingly, our proposed scheme brings together benefits from coded storage and coded delivery. To illustrate the main ingredients of the proposed scheme we assume $M_S = \frac{N}{\rho}$ in this section. Extension to other server capacities will be presented in Section IV-A.

A. Server Storage Placement

We first describe how the files are stored across the servers in order to guarantee that each user request can be satisfied from any $\rho$ servers the user may connect to (see Fig. 2). We define $t \triangleq \frac{K M_U}{N}$, and assume initially that it is an integer, i.e., $t \in [0 : M_U]$. The solution for non-integer $t$ values can be obtained through memory-sharing [1]. Each file is divided into $\binom{K}{t}$ equal-size non-overlapping segments. We enumerate them according to distinct $t$-element subsets of $[K]$, where $W_{j,\mathcal{A}}$ denotes the segment of $W_j$ that corresponds to subset $\mathcal{A}$. We have $W_j = \bigcup_{\mathcal{A} \subset [K] : |\mathcal{A}| = t} W_{j,\mathcal{A}}$.

Each segment is further divided into $\rho$ equal-size non-overlapping sub-segments denoted by $W^{l}_{j,\mathcal{A}}$, $l \in [\rho]$. The $\rho$ sub-segments of each segment are coded together using a $(P, \rho)$ linear MDS code with generator matrix $G$, giving as output $P$ coded versions of the segment $W_{j,\mathcal{A}}$, denoted by $C^{l}_{j,\mathcal{A}}$, $l \in [P]$. $C^{l}_{j,\mathcal{A}}$ is a linear combination of the sub-segments of the segment corresponding to subset $\mathcal{A}$ of the $j$th file, and is stored in server $S_l$. Since each sub-segment is of length $\frac{F}{\rho \binom{K}{t}}$, every linear combination $C^{l}_{j,\mathcal{A}}$ is of the same length; hence, the server storage capacity constraint of $\frac{N F}{\rho}$ is met with equality.

Remark 1. We assume that each user knows the generator matrix of the MDS code to be able to reconstruct any coded symbol $C^{l}_{j,\mathcal{A}}$ from the uncoded segment $W_{j,\mathcal{A}}$ stored in its cache memory.

B. User Cache Placement

For the user caches we use the placement scheme proposed in [1]. Each segment of a file, $W_{j,\mathcal{A}}$, is placed into the caches of all the users $U_k$ for which $k \in \mathcal{A}$.

C. Delivery Phase

We first make the following observation about the above placement scheme: in the worst-case demand scenario, consider any $t + 1$ users. Any $t$ out of these $t + 1$ users share in their caches one segment of the file requested by the remaining user. Enumerate these subsets of $t + 1$ users as $\mathcal{H}_i$, $i \in \left[\binom{K}{t+1}\right]$. Consider any server $S_p$, and one of the $q_p$ users connected to it, say $U_k$. Then, for any subset $\mathcal{H}_i$ that includes $k$, i.e., $k \in \mathcal{H}_i$, the segment $W_{d_k, \mathcal{H}_i \setminus \{k\}}$ is needed by user $U_k$, but is not available in its cache because $k \notin \mathcal{H}_i \setminus \{k\}$; while it is available in the caches of the remaining users in $\mathcal{K}_p \cap \mathcal{H}_i$. The MDS coded version of $W_{d_k, \mathcal{H}_i \setminus \{k\}}$ stored by $S_p$ is $C^{p}_{d_k, \mathcal{H}_i \setminus \{k\}}$, and since the users know the generator matrix $G$, each user which has $W_{d_k, \mathcal{H}_i \setminus \{k\}}$ in its cache can reconstruct $C^{p}_{d_k, \mathcal{H}_i \setminus \{k\}}$ as well. Then, for each $\mathcal{H}_i$ that includes at least one user from $\mathcal{K}_p$, $S_p$ transmits

$$X_p(\mathcal{H}_i) = \bigoplus_{k \in \mathcal{K}_p \cap \mathcal{H}_i} C^{p}_{d_k, \mathcal{H}_i \setminus \{k\}}, \qquad (1)$$

where $\bigoplus$ denotes the bitwise XOR operation. Then, $\left|\left\{ i \in \left[\binom{K}{t+1}\right] : k \in \mathcal{H}_i \right\}\right| = \binom{K-1}{t}$ is the number of messages transmitted by server $S_p$ that contain the coded version of a segment requested by $U_k$, and is also equal to the number of segments of $W_{d_k}$ not present in the cache of user $U_k$. Overall, the message transmitted by $S_p$ is given by

$$X_p = \bigcup_{i \in \left[\binom{K}{t+1}\right] : \mathcal{K}_p \cap \mathcal{H}_i \neq \emptyset} X_p(\mathcal{H}_i). \qquad (2)$$

From the transmitted message $X_p(\mathcal{H}_i)$ in (1) for each set $\mathcal{H}_i$, user $U_k$ can decode the MDS coded version $C^{p}_{d_k, \mathcal{H}_i \setminus \{k\}}$ of its requested segment $W_{d_k, \mathcal{H}_i \setminus \{k\}}$. With the transmissions from all the servers, $U_k$ receives $\rho$ coded versions of each missing segment from the $\rho$ servers it is connected to.
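The placement and the XOR delivery in (1) can be traced end to end in a toy sketch. It is not the paper's implementation: it assumes a $(3, 2)$ single-parity code over GF(2) as the MDS code (servers 1 and 2 store the two sub-segments, server 3 their XOR), a hypothetical topology `Z`, and 4-byte random segments. All function and variable names are made up for illustration. Each user cancels the coded symbols it can rebuild from its cache and then recovers every missing segment from the two coded symbols it receives.

```python
import os
from itertools import combinations


def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode(seg: bytes, server: int) -> bytes:
    """Assumed (3, 2) MDS code: coded symbol of a segment stored at `server`."""
    half = len(seg) // 2
    w1, w2 = seg[:half], seg[half:]
    return {1: w1, 2: w2, 3: xor(w1, w2)}[server]


def decode(coded: dict) -> bytes:
    """Recover a segment from any two of its three coded symbols."""
    if 1 in coded and 2 in coded:
        w1, w2 = coded[1], coded[2]
    elif 1 in coded:
        w1, w2 = coded[1], xor(coded[1], coded[3])
    else:
        w2, w1 = coded[2], xor(coded[2], coded[3])
    return w1 + w2


K, t, P, N = 3, 1, 3, 3
users = range(1, K + 1)
subsets_t = [frozenset(c) for c in combinations(users, t)]
# library[j][A] is segment W_{j,A}; user k caches it whenever k is in A.
library = {j: {A: os.urandom(4) for A in subsets_t} for j in range(1, N + 1)}
cache = {k: {(j, A): library[j][A] for j in library for A in subsets_t if k in A}
         for k in users}

Z = {1: {1, 2}, 2: {1, 2}, 3: {2, 3}}   # hypothetical topology, rho = 2
d = {1: 1, 2: 2, 3: 3}                  # worst case: distinct demands
served = {p: {k for k in users if p in Z[k]} for p in range(1, P + 1)}


def server_message(p, H):
    """X_p(H) from (1): XOR of coded segments for the users in K_p and H."""
    msg = None
    for k in sorted(served[p] & H):
        c = encode(library[d[k]][H - {k}], p)
        msg = c if msg is None else xor(msg, c)
    return msg


def user_decode(k):
    got = {}  # missing segment index A -> {server: coded symbol}
    for H in map(frozenset, combinations(users, t + 1)):
        if k not in H:
            continue
        for p in Z[k]:
            msg = server_message(p, H)
            # Cancel the coded symbols of other users, rebuilt from k's cache.
            for other in (served[p] & H) - {k}:
                msg = xor(msg, encode(cache[k][(d[other], H - {other})], p))
            got.setdefault(H - {k}, {})[p] = msg
    segments = {A: decode(c) for A, c in got.items()}
    segments.update({A: s for (j, A), s in cache[k].items() if j == d[k]})
    return segments


print(all(user_decode(k) == library[d[k]] for k in users))
```

A single-parity code is used only because it keeps all arithmetic in plain XOR; the scheme in the text works with any $(P, \rho)$ linear MDS code.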
Since each segment is coded with a $(P, \rho)$ MDS code, the user is able to decode each missing segment.

Note that each transmitted message $X_p(\mathcal{H}_i)$ by a server is of length $\frac{F}{\rho \binom{K}{t}}$ bits. The number of transmissions by $S_p$ is

$$\left|\left\{ i \in \left[\tbinom{K}{t+1}\right] : \mathcal{K}_p \cap \mathcal{H}_i \neq \emptyset \right\}\right| = \binom{K}{t+1} - \left|\left\{ i \in \left[\tbinom{K}{t+1}\right] : \mathcal{K}_p \cap \mathcal{H}_i = \emptyset \right\}\right| = \binom{K}{t+1} - \binom{K - q_p}{t+1}.$$

That is, server $S_p$ transmits $\binom{K}{t+1} - \binom{K - q_p}{t+1}$ messages, each of length $\frac{F}{\rho \binom{K}{t}}$ bits.

The delivery latency performance of this proposed coded storage and delivery scheme with both successive and simultaneous SBS transmissions is studied in the following sections.

IV. SUCCESSIVE SBS TRANSMISSIONS

When the SBSs transmit successively in time, the normalized delivery time is given by

$$T \triangleq \sum_{p=1}^{P} R_p = \frac{1}{\rho \binom{K}{t}} \sum_{p=1}^{P} \left[ \binom{K}{t+1} - \binom{K - q_p}{t+1} \right] = \frac{P}{\rho} \frac{(K - t)}{(t + 1)} - \frac{1}{\rho \binom{K}{t}} \sum_{p=1}^{P} \binom{K - q_p}{t+1}. \qquad (3)$$

To characterize the “best” and “worst” network topologies that lead to the minimum and maximum delivery times, respectively, we present the following lemma without proof.

Lemma 2. For $n_1, n_2, r \in \mathbb{Z}^{+}$ satisfying $r \le n_1$ and $n_1 + 2 \le n_2$, we have

$$\binom{n_1}{r} + \binom{n_2}{r} \ge \binom{n_1 + 1}{r} + \binom{n_2 - 1}{r}. \qquad (4)$$

The lemma above indicates the “convex” nature of the binomial coefficients in (3); that is, the points $\left(r, \binom{r}{r}\right), \left(r + 1, \binom{r+1}{r}\right), \ldots, \left(n_1 + n_2 - r, \binom{n_1 + n_2 - r}{r}\right)$ form a convex region. From Lemma 2, it can be deduced that the second summation term in (3) takes its minimum when $\max_p (q_p) \le \min_p (q_p) + 1$, $p \in [P]$, i.e., the values of $q_p$ are as close to each other as possible. This corresponds to the class of topologies with the highest delivery times (see Fig. 1c for an example). The topology that requires the minimum delivery time of $T = \frac{K - t}{t + 1}$ is when $q_p$ is either $0$ or $K$ for each server, or equivalently, when all the users are connected to the same $\rho$ servers (see Fig. 1b for an example).
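As a quick numerical check of (3), the sketch below evaluates the successive delivery time for the three load vectors of Fig. 1 ($P = 3$, $K = 4$, $\rho = 2$). The value $t = 1$ is an assumption made here for illustration, since the figure does not fix the user cache size, and the function names are hypothetical.

```python
from fractions import Fraction
from math import comb


def transmissions(K, t, q_p):
    """Number of coded messages sent by a server that serves q_p users."""
    return comb(K, t + 1) - comb(K - q_p, t + 1)


def successive_time(q, K, t, rho):
    """Normalized delivery time T in (3) for the load vector q = (q_1, ..., q_P)."""
    return Fraction(sum(transmissions(K, t, q_p) for q_p in q), rho * comb(K, t))


K, t, rho = 4, 1, 2
for label, q in [("Fig. 1a", (2, 4, 2)), ("Fig. 1b", (4, 4, 0)), ("Fig. 1c", (3, 3, 2))]:
    print(label, q, successive_time(q, K, t, rho))
# Fig. 1a (2, 4, 2) 2
# Fig. 1b (4, 4, 0) 3/2
# Fig. 1c (3, 3, 2) 17/8
```

Under these assumptions the concentrated topology of Fig. 1b attains $\frac{K - t}{t + 1} = \frac{3}{2}$, while the balanced topology of Fig. 1c gives the largest value, in line with the discussion following Lemma 2.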
Next we study the average worst-case normalized delivery time, where the average is taken over all possible network topologies, assuming a uniformly random user-server association; that is, each user connects to any $\rho$ out of $P$ servers with uniform distribution. As we have seen above, the delivery time depends on the topology, and for a given topology, the “worst-case” delivery time refers to the worst-case demand combination when each user requests a different file. Let $\mathcal{T}$ denote the set of all possible topologies. We have $|\mathcal{T}| = \binom{P}{\rho}^{K}$. Define $N(q_p)$ as the number of different topologies in which a particular server $S_p$ serves $q_p \in [0 : K]$ users. Then,

$$N(q_p) = \binom{K}{q_p} \binom{P - 1}{\rho - 1}^{q_p} \binom{P - 1}{\rho}^{K - q_p}.$$

The following theorem presents the average normalized worst-case delivery time of the proposed scheme.

Theorem 3. The worst-case average normalized delivery time of the proposed scheme over all topologies under uniformly random user-server association is given by

$$\mathbb{E}[T] = \frac{P}{\rho} \frac{(K - t)}{(t + 1)} - \frac{P}{\rho \binom{K}{t}} \sum_{q_p = 0}^{K} \Pr(Q_p = q_p) \binom{K - q_p}{t + 1}, \qquad (5)$$

where $\Pr(Q_p = q_p) = \frac{N(q_p)}{\binom{P}{\rho}^{K}}$ is the probability of exactly $q_p$ users being served by a particular server.

Proof. Each topology $\tau \in \mathcal{T}$ is represented by a particular tuple $\mathbf{q}_\tau = (q_1, \ldots, q_P)$. Each topology is distinct, but not all tuples are necessarily distinct. This is demonstrated in Fig. 1c, where two distinct topologies have the same tuple $\mathbf{q}_\tau = (3, 3, 2)$ associated with them. The expectation of the worst-case delivery rate over all possible topologies $\tau \in \mathcal{T}$ can be written as

$$\begin{aligned}
\mathbb{E}[T] &= \frac{\sum_{\mathbf{q}_\tau, \tau \in \mathcal{T}} \left[ \frac{P}{\rho} \frac{(K - t)}{(t + 1)} - \frac{1}{\rho \binom{K}{t}} \sum_{p=1}^{P} \binom{K - q_p}{t + 1} \right]}{\binom{P}{\rho}^{K}} \\
&= \frac{P}{\rho} \frac{(K - t)}{(t + 1)} - \frac{1}{\rho \binom{P}{\rho}^{K} \binom{K}{t}} \sum_{\mathbf{q}_\tau, \tau \in \mathcal{T}} \sum_{p=1}^{P} \binom{K - q_p}{t + 1} \\
&= \frac{P}{\rho} \frac{(K - t)}{(t + 1)} - \sum_{p=1}^{P} \sum_{q_p = 0}^{K} \frac{N(q_p)}{\rho \binom{P}{\rho}^{K} \binom{K}{t}} \binom{K - q_p}{t + 1} \\
&= \frac{P}{\rho} \frac{(K - t)}{(t + 1)} - \frac{P}{\rho \binom{K}{t}} \sum_{q_p = 0}^{K} \frac{N(q_p)}{\binom{P}{\rho}^{K}} \binom{K - q_p}{t + 1}.
\end{aligned}$$

A. Redundancy in Server Storage Capacity

Above, the server storage capacity is fixed as $M_S = \frac{N}{\rho}$. The minimum server storage capacity that would allow the reconstruction of any demand combination is given by $M_S = \frac{N - M_U}{\rho}$. In this case, we cache an $\frac{M_U}{N}$ fraction of the library in the user caches during the placement phase, and transmit the remaining fraction of the library from the servers. The worst-case delivery time in this case is given by $T = K \left(1 - \frac{M_U}{N}\right)$.

Next, we consider the case when there is redundancy in server memories; that is, we have $\frac{N}{\rho} < M_S \le N$. Assume that $M_S = \frac{N}{\rho - z}$ for some integer $z \in [\rho - 1]$. For non-integer values of $z$, the solution can be obtained by memory-sharing. In this case, a $(P, \rho - z)$ MDS code is used for server storage placement, which allows each user to reconstruct any requested file by connecting to $\rho - z$ servers. The user cache placement is done as in the previous section. In the delivery phase, each user randomly connects to $\rho$ servers. We now have a degree of freedom thanks to the additional storage space available at each server. Each user can get a particular segment from only a $(\rho - z)$-element subset of the $\rho$ servers it is connected to, by receiving one copy from each of those servers. The choice of the servers that will deliver the coded sub-segments to each user is done such that the multicasting opportunities across the network are maximized.

Fig. 3: An example $7 \times 5$ incidence matrix ($P = 7$, $K = 5$) with $\rho = 4$.

Construct an incidence matrix $A$ of dimensions $P \times K$ such that $a_{ij} = 1$ if server $i$ is connected to user $j$, and $a_{ij} = 0$ otherwise. Consider the $(t + 1)$-element subset $\mathcal{H}_i$, and the file segments $W_{d_k, \mathcal{H}_i \setminus \{k\}}$, $\forall k \in \mathcal{H}_i$. Consider the columns of $A$ corresponding to the users in $\mathcal{H}_i$ and the matrix $Q$ formed by them. Define the minimum cover of $\mathcal{H}_i$ as the smallest $l$ for which an $l \times (t + 1)$ submatrix of $Q$ has at least $\rho - z$ non-zero values in each column.
The servers corresponding to the $l$ rows of this submatrix have to transmit one coded message each to completely satisfy the requests for the missing segments corresponding to $\mathcal{H}_i$. Therefore, the total number of transmissions required to deliver the segments $W_{d_k, \mathcal{H}_i \setminus \{k\}}$, $k \in \mathcal{H}_i$, is equal to the minimum cover of $\mathcal{H}_i$.

As an example, consider the incidence matrix shown in Fig. 3, which corresponds to a system with $P = 7$ servers and $K = 5$ users, where each user connects to $\rho = 4$ servers. Assume that the server storage capacity is $M_S = \frac{N}{\rho - 2}$ and $t = 1$. In this setting, coded sub-segments of requested files can be delivered to $t + 1 = 2$ users through multicasting, and it is sufficient for each user to receive coded segments from $\rho - 2 = 2$ servers. Then, for the user set $\mathcal{H}_i = \{1, 2\}$, we consider the submatrix corresponding to columns 1 and 2 and rows 1 and 2 (marked by the blue dashed lines in Fig. 3), which is the smallest submatrix satisfying the condition that each column has at least $\rho - z = 2$ ones. Hence, the minimum cover for $\mathcal{H}_i$ is equal to the number of rows of this submatrix, that is, $2$. Similarly, for $\mathcal{H}_i = \{3, 4\}$ (marked by the red dashed lines in Fig. 3), the minimum cover is $3$. Thus, from (1), for segments $W_{d_k, \{3,4\} \setminus \{k\}}$, $k \in \{3, 4\}$, $S_3$ transmits the message $X_3(\{3, 4\}) = \bigoplus_{k \in \{3, 4\}} C^{3}_{d_k, \{3,4\} \setminus \{k\}}$, $S_4$ transmits $X_4(\{3, 4\}) = C^{4}_{d_3, \{4\}}$, and $S_5$ transmits $X_5(\{3, 4\}) = C^{5}_{d_4, \{3\}}$. The total number of transmissions is $3$. This algorithm can be applied to transmit all the missing segments of the requested files.

V. SIMULTANEOUS SBS TRANSMISSIONS

The normalized delivery time when the SBSs can transmit in parallel is given by

$$T \triangleq \max_{q_p} \frac{1}{\rho \binom{K}{t}} \left[ \binom{K}{t + 1} - \binom{K - q_p}{t + 1} \right]. \qquad (6)$$

The “best” and “worst” network topologies are different from the successive transmission scenario.
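To compare (3), (5) and (6) numerically, the following sketch enumerates every topology of a small system by brute force and averages both the sum and the maximum of the per-server rates. The parameters $P = 4$, $K = 4$, $\rho = 2$, $t = 1$ and all function names are assumptions chosen only to keep the enumeration small ($\binom{4}{2}^4 = 1296$ topologies); they do not come from the paper's figures. It covers the $M_S = N/\rho$ scheme only.

```python
from fractions import Fraction
from itertools import combinations, product
from math import comb


def rates(q, K, t, rho):
    """Per-server normalized rates R_p for the load vector q."""
    return [Fraction(comb(K, t + 1) - comb(K - q_p, t + 1), rho * comb(K, t))
            for q_p in q]


def all_load_vectors(P, K, rho):
    """Yield (q_1, ..., q_P) for every assignment of rho servers to each user."""
    choices = list(combinations(range(P), rho))
    for topology in product(choices, repeat=K):
        yield [sum(p in Z for Z in topology) for p in range(P)]


def closed_form(P, K, t, rho):
    """Average successive delivery time as given by (5)."""
    total = Fraction(0)
    for q in range(K + 1):
        n_q = comb(K, q) * comb(P - 1, rho - 1) ** q * comb(P - 1, rho) ** (K - q)
        total += Fraction(n_q, comb(P, rho) ** K) * comb(K - q, t + 1)
    return Fraction(P * (K - t), rho * (t + 1)) - Fraction(P, rho * comb(K, t)) * total


P, K, t, rho = 4, 4, 1, 2
qs = list(all_load_vectors(P, K, rho))
sum_times = [sum(rates(q, K, t, rho)) for q in qs]   # successive, as in (3)
max_times = [max(rates(q, K, t, rho)) for q in qs]   # simultaneous, as in (6)

print(len(qs))                                        # 1296
print(sum(sum_times) / len(qs), closed_form(P, K, t, rho))
print(min(max_times), max(max_times), sum(max_times) / len(qs))
```

For these assumed parameters the brute-force average of the successive delivery time agrees with the closed form in (5), which gives a simple sanity check on the counting argument behind $N(q_p)$.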
The topology with the minimum value of the maximum $q_p$, i.e., where the values of $q_p$ are as close to each other as possible, has the “best” (lowest) delivery time, contrary to the successive transmission scenario, in which this would be the “worst” topology. The corresponding delivery time can be obtained by substituting $q_p = \left\lceil \frac{K\rho}{P} \right\rceil$ in (6). The topology with the maximum possible $q_p$, i.e., any topology with at least one server connected to all $K$ users, is the “worst” topology since it has the highest delivery time.

To minimize the delivery time in the scenario with parallel simultaneous delivery from the servers, a greedy server allocation algorithm is used, which applies the algorithm used in Section IV in a greedy manner to balance the number of transmissions from the servers at each iteration.

VI. RESULTS AND DISCUSSIONS

In Fig. 4 we plot the achievable trade-off between the user cache capacity and the normalized delivery time for the best and worst topologies, and the average normalized delivery time over all topologies, for successive transmission. The average normalized delivery time for the parallel transmission scenario is also plotted. The trade-off curves are plotted for different server storage capacities. We observe that the gap between the worst and the best topologies can be significant. From (3) and (5) we can deduce that, for successive transmission, the worst-topology delivery time, and hence the average delivery time of the proposed scheme, are both within a multiplicative factor of $\frac{P}{\rho}$ of the best-topology delivery time.

In Fig. 5 the trade-off between the average delivery time and the server storage capacity is plotted for server storage capacities of $M_S \in \left[\frac{N - M_U}{\rho}, N\right]$; the plot is obtained by performing multiple simulations with random realizations of the topology and averaging the achievable delivery time over them. We observe from Fig. 4 that the delivery time decreases significantly, particularly for low $M_U$ values, as the redundancy in server storage increases. We observe from Fig. 5 that the average delivery time decreases rapidly for an initial increase in the server storage capacity, and the decrease can become significantly fast for high $\rho$ values. This is because, thanks to the MDS-coded caching at the servers, the number of available multicasting opportunities increases with the redundancy across servers.

Fig. 4: Average normalized delivery time vs. user cache capacity $M_U$, for $P = 7$, $N = K = 5$, $\rho = 4$, and for server storage capacities of $M_S = \frac{5}{4}, \frac{5}{3}, \frac{5}{2}, 5$. The best and worst topologies are as illustrated in Fig. 1. The average delivery time for parallel transmissions is also plotted.

The average delivery time for parallel transmissions is plotted with respect to server storage capacity, $M_S$, in Fig. 6. As opposed to the delivery time for successive transmissions, we can see that the delivery time does not saturate, and keeps decreasing until all the files are stored at each of the servers. We also observe that the reduction in the delivery time with $\rho$ saturates as $\rho$ increases.

VII. CONCLUSIONS

We have presented a multi-server coded caching and delivery network, in which cache-equipped users connect randomly to a subset of the available servers, each with its own limited storage capacity. While this allows each server to have only a limited amount of storage capacity, it requires coded storage across servers to account for the random topology.
We proposed a novel scheme that jointly applies MDS-coded caching at the servers, and uncoded caching and coded delivery to users. The achievable delivery times of this scheme for both successive and simultaneous transmissions from the SBSs are presented, and their averages over the topologies are studied. This analysis shows that, thanks to coding, the price for robustness and reliability using distributed storage is not much even when the servers operate in a time-division manner.

Fig. 5: Average normalized delivery time vs. server storage capacity $M_S$, for $P = 7$, $N = K = 5$, $M_U = 1$ for successive SBS transmissions (curves for $\rho = 3$, $\rho = 4$, $\rho = 5$).

Fig. 6: Average normalized delivery time vs. server storage capacity $M_S$, for $P = 7$, $N = K = 5$, $M_U = 1$ for simultaneous transmissions (curves for $\rho = 3$, $\rho = 4$, $\rho = 5$).

REFERENCES

[1] M. A. Maddah-Ali and U. Niesen, “Fundamental limits of caching,” IEEE Trans. Inf. Theory, vol. 60, no. 5, May 2014.
[2] M. Mohammadi Amiri, Q. Yang and D. Gunduz, “Decentralized coded caching with distinct cache capacities,” IEEE Trans. on Comms., vol. 65, no. 11, Nov. 2017.
[3] N. Golrezaei, A. Molisch, A. Dimakis, and G. Caire, “Femtocaching and device-to-device collaboration: A new architecture for wireless video distribution,” IEEE Commun. Mag., vol. 51, no. 4, Apr. 2013.
[4] M. Mohammadi Amiri and D. Gunduz, “Fundamental limits of caching: Improved delivery rate-cache capacity trade-off,” IEEE Trans. on Communications, vol. 65, no. 2, Feb. 2017.
[5] M. Gregori, J. Gomez-Vilardebo, J. Matamoros, and D. Gunduz, “Wireless content caching for small cell and D2D networks,” IEEE Journal on Selected Areas in Communications, vol. 34, no. 5, May 2016.
[6] J. G. Vilardebo, “Fundamental limits of caching: Improved bounds with coded prefetching,” arXiv:1612.09071, 2016.
[7] C. Tian and J. Chen, “Caching and delivery via interference elimination,” IEEE Int'l Symp. Inform. Theory, Barcelona, Spain, Jul. 2016.
[8] T. Luo, V. Aggarwal, and B. Peleato, “Coded caching with distributed storage,” arXiv:1611.06591v1 [cs.IT], Nov. 2016.
[9] S. P. Shariatpanahi, S. A. Motahari, and B. H. Khalaj, “Multi-server coded caching,” IEEE Trans. on Information Theory, vol. 62, no. 12, Dec. 2016.
[10] M. Ji, A. M. Tulino, J. Llorca, and G. Caire, “Caching in combination networks,” Asilomar Conf. on Signals, Systems and Computers, Monterey, CA, Nov. 2015.
[11] A. G. Dimakis, P. Godfrey, Y. Wu, M. J. Wainwright, and K. Ramchandran, “Network coding for distributed storage systems,” IEEE Trans. Inf. Theory, vol. 56, no. 9, Sept. 2010.
[12] D. S. Papailiopoulos, J. Luo, A. G. Dimakis, C. Huang, and J. Li, “Simple regenerating codes: Network coding for cloud storage,” Proc. IEEE INFOCOM, March 2012.
[13] A. A. Zewail and A. Yener, “Coded caching for combination networks with cache-aided relays,” International Symposium on Information Theory 2017, pp. 2438-2442, June 2017.
