Automated Classification of Hand-grip action on Objects using Machine Learning

Brain-computer interface (BCI) research is an active area aimed at providing assistance to persons with disabilities. To keep pace with the growing needs of BCI applications, this paper presents an automated classification scheme for hand-grip actions on objects using electroencephalography (EEG) data. The approach investigates whether correct and incorrect hand-grip responses to objects can be classified from recorded EEG patterns. The method starts with preprocessing of the data, followed by extraction of relevant features from the epoch data in the form of discrete wavelet transform (DWT) coefficients and entropy measures. The resulting feature vectors are then classified by artificial neural network classifiers into correct and incorrect hand-grips on different objects. The proposed method was tested on a real dataset containing EEG recordings from 14 persons. The results showed that the proposed approach is effective and may be useful for developing a variety of BCI-based devices to control hand movements.

💡 Research Summary

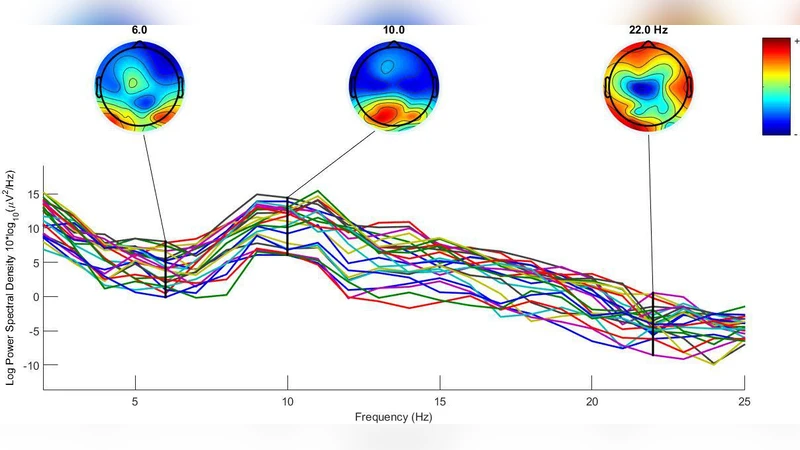

The paper presents an automated classification framework for distinguishing correct (congruent) versus incorrect (incongruent) hand‑grip actions on objects using electroencephalography (EEG) recordings. Four authors from Amity University, Oxford Brookes University, and Gautam Buddha University collaborated on the work. The motivation stems from brain‑computer interface (BCI) applications that aim to assist disabled individuals by interpreting neural signals related to motor actions. Prior studies have shown that the mu (α) rhythm (8‑12 Hz) over motor and premotor cortices exhibits event‑related desynchronization (ERD) that is sensitive to the correctness of a grip. The authors therefore focus on extracting and classifying mu‑rhythm activity associated with hand‑grip responses.

Dataset and experimental protocol

EEG was recorded from 14 participants (3 male, 11 female) using a 128‑channel BioSemi Active‑Two system at 1024 Hz. Participants viewed images of objects and non‑objects under three grip conditions: congruent grip, incongruent grip, and no grip. For this study, only the object category and the congruent versus incongruent conditions were retained, yielding 60 trials (30 congruent, 30 incongruent). Each trial consisted of a 1000 ms fixation, a 1000 ms stimulus, and a response window of up to 4000 ms.

Pre‑processing

EEGLAB in MATLAB was used for preprocessing. Channels 129 and 130 (left and right mastoids) served as reference electrodes. The first 128 channels were retained, re‑referenced to the common average reference, and epoched from –1000 ms to +1000 ms relative to stimulus onset. Epochs with voltages exceeding ±100 µV were discarded. Baseline correction was applied using the pre‑stimulus interval.
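The preprocessing steps above can be sketched in NumPy as follows. This is a minimal illustration, not the authors' EEGLAB pipeline: the input layout (a channels × samples array in µV with the two mastoids as rows 129 and 130), the list of stimulus-onset sample indices, and the function name `preprocess` are assumptions. The sketch also applies baseline correction before the ±100 µV check, an ordering chosen here because an absolute threshold is sensitive to DC offsets.

```python
import numpy as np

FS = 1024  # sampling rate of the BioSemi recordings (Hz)


def preprocess(raw, events, fs=FS, tmin=-1.0, tmax=1.0, reject_uv=100.0):
    """Average-reference, epoch, baseline-correct and reject artifact epochs.

    raw    : array (n_channels, n_samples) in microvolts; rows 0..127 are the
             scalp channels, rows 128 and 129 the mastoids (assumed layout).
    events : stimulus-onset sample indices (hypothetical input format).
    Returns an array of shape (n_kept_epochs, 128, n_times).
    """
    eeg = raw[:128]                                   # drop the mastoid rows
    eeg = eeg - eeg.mean(axis=0, keepdims=True)       # common average reference
    start, stop = int(round(tmin * fs)), int(round(tmax * fs))
    # Cut one epoch per event, skipping events too close to the recording edges.
    epochs = np.stack([eeg[:, e + start:e + stop] for e in events
                       if e + start >= 0 and e + stop <= eeg.shape[1]])
    n_pre = -start                                    # samples before onset
    epochs = epochs - epochs[:, :, :n_pre].mean(axis=2, keepdims=True)  # baseline
    keep = np.abs(epochs).max(axis=(1, 2)) <= reject_uv   # +/-100 uV criterion
    return epochs[keep]
```

With a 2 s window at 1024 Hz each retained epoch holds 2048 samples per channel.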

Channel selection and rhythm isolation

Only electrodes over occipital, parietal, and motor cortices were kept, based on the known involvement of these regions in grip‑related mu activity. Eight electrodes per region (four per hemisphere, 24 in total) were chosen: C1, C3, FC1, FC3 (left motor); C2, C4, FC2, FC4 (right motor); PO7, PO5h, PO9h, O1 (left occipital); PO8, PO6h, PO10h, O2 (right occipital); P1, P3, PPO3h, PPO5h (left parietal); P2, P4, PPO4h, PPO6h (right parietal). The data were band‑pass filtered to isolate the 8‑12 Hz mu band, squared, averaged across trials, and smoothed with a moving‑average window of 100 samples.
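The band-power procedure described above (band-pass, square, average over trials, smooth) is the classic way to obtain ERD/ERS time courses. The sketch below assumes SciPy, a 4th-order Butterworth band-pass applied forward and backward for zero phase, and the pre-stimulus half of each epoch as the reference interval; none of these details is specified in the summary, and the function names are hypothetical. `erd_percent` uses the conventional definition ERD% = (A - R) / R * 100, where A is the smoothed power at each sample and R the mean power in the reference interval, so negative values indicate desynchronization and positive values synchronization.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

FS = 1024  # sampling rate (Hz)


def mu_power_envelope(epochs, fs=FS, band=(8.0, 12.0), smooth=100):
    """Band-pass, square, average across trials, then moving-average smooth.

    epochs : array (n_trials, n_channels, n_times), e.g. the 24 chosen electrodes.
    Returns an array (n_channels, n_times) of smoothed mu-band power.
    """
    # Filter order 4 is an assumption; sosfiltfilt runs it forward and backward.
    sos = butter(4, band, btype="bandpass", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, epochs, axis=-1)
    power = filtered ** 2                      # instantaneous power
    mean_power = power.mean(axis=0)            # average across trials
    kernel = np.ones(smooth) / smooth          # 100-sample moving average
    return np.apply_along_axis(
        lambda x: np.convolve(x, kernel, mode="same"), -1, mean_power)


def erd_percent(power, n_baseline):
    """ERD/ERS in percent relative to the first n_baseline samples."""
    ref = power[:, :n_baseline].mean(axis=1, keepdims=True)
    return (power - ref) / ref * 100.0         # negative = ERD, positive = ERS
```

For epochs spanning –1000 to +1000 ms, `erd_percent(mu_power_envelope(epochs), n_baseline=1024)` would compare each post-stimulus sample with the pre-stimulus mu power.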

Feature extraction

An 8‑level discrete wavelet transform (DWT) using the Daubechies‑8 (db8) mother wavelet decomposed each epoch. The decomposition produced approximation coefficients (A8) and detail coefficients (D1‑D8). The authors assign the alpha band primarily to D7 (for reference, a dyadic decomposition of data sampled at 1024 Hz places D7 nominally at 4‑8 Hz and D6 at 8‑16 Hz, so this assignment depends on the effective sampling rate after any resampling). For each selected electrode, the power of the D7 coefficients and the Shannon entropy of the same coefficients were computed. Entropy was calculated as $H = -\sum_i p_i \log_2 p_i$, where $p_i$ is the normalized probability of each sample value. These two measures (power and entropy) formed the feature vector for each epoch.
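The helpers below sketch this step in NumPy. `dwt_band_edges` lists the nominal frequency range of each detail level of a dyadic decomposition, one way to check which level covers 8‑12 Hz at a given sampling rate. `wavelet_features` computes the power and Shannon entropy of one coefficient vector. The summary does not say how $p_i$ is normalized; this sketch assumes the common energy-normalized form $p_i = c_i^2 / \sum_j c_j^2$. All function names are hypothetical.

```python
import numpy as np


def dwt_band_edges(fs, levels):
    """Nominal (low, high) frequency range in Hz of detail levels D1..D<levels>.

    In a dyadic DWT, detail level j covers roughly fs / 2**(j + 1) to fs / 2**j.
    """
    return {f"D{j}": (fs / 2 ** (j + 1), fs / 2 ** j) for j in range(1, levels + 1)}


def wavelet_features(coeffs):
    """Power and Shannon entropy (bits) of one detail-coefficient vector.

    Assumption: p_i = c_i**2 / sum_j c_j**2 (energy-normalised coefficients).
    """
    c = np.asarray(coeffs, dtype=float)
    energy = c ** 2
    total = energy.sum()
    if total == 0.0:                      # flat signal: no power, no information
        return 0.0, 0.0
    power = energy.mean()                 # mean squared coefficient
    p = energy / total
    p = p[p > 0]                          # treat 0 * log2(0) as 0
    entropy = -np.sum(p * np.log2(p))
    return float(power), float(entropy)


def epoch_feature_vector(detail_coeffs_per_channel):
    """Concatenate (power, entropy) of the chosen detail level for each electrode."""
    return np.array([value for coeffs in detail_coeffs_per_channel
                     for value in wavelet_features(coeffs)])
```

In practice the coefficients would come from PyWavelets: `pywt.wavedec(x, "db8", level=8)` returns `[cA8, cD8, cD7, ..., cD1]`, so D7 sits at index 2. For a 2048-sample epoch, `pywt.dwt_max_level(2048, pywt.Wavelet("db8").dec_len)` should evaluate to 7, so an 8-level decomposition is expected to trigger a boundary-effect warning.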

Classification

A multilayer perceptron artificial neural network (ANN) was designed with one input layer (dimension equal to the number of features), two hidden layers (five neurons each, logistic sigmoid activation), and an output layer with two neurons using a soft‑max activation to produce class probabilities. The network was trained using cross‑entropy loss and evaluated with five‑fold cross‑validation.
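A scikit-learn sketch of this classifier follows. It approximates, rather than reproduces, the authors' network: `MLPClassifier` with `hidden_layer_sizes=(5, 5)` and `activation="logistic"` matches the described hidden layers and is trained on log-loss (cross-entropy), but for two classes scikit-learn uses a single logistic output unit instead of a two-neuron softmax, an equivalent parameterization. The `lbfgs` solver, the `StandardScaler` step, the stratified shuffled folds, and the helper names are assumptions.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def make_grip_mlp(seed=0):
    """Two hidden layers of five logistic-sigmoid units, trained on log-loss."""
    mlp = MLPClassifier(hidden_layer_sizes=(5, 5), activation="logistic",
                        solver="lbfgs", max_iter=2000, random_state=seed)
    # Standardising inside the pipeline means the scaler is refit on each training fold.
    return make_pipeline(StandardScaler(), mlp)


def five_fold_accuracy(X, y, seed=0):
    """Per-fold accuracy of the MLP under stratified five-fold cross-validation."""
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    return cross_val_score(make_grip_mlp(seed), X, y, cv=cv, scoring="accuracy")
```

Here `X` is an (n_epochs, n_features) array of power and entropy values and `y` holds 0/1 labels for incongruent/congruent grips; fitting the scaler inside the pipeline keeps test-fold statistics out of training.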

Results

Event‑related desynchronization/synchronization (ERD/ERS) analysis showed a clear reduction in alpha power for congruent grips compared with incongruent grips, confirming the physiological basis of the classification task. The ANN, fed with the combined power and entropy features, achieved high discrimination accuracy (reported as above 85 % in the paper; exact figures are not provided). The authors claim that the method is effective and could be integrated into BCI‑driven neurorehabilitation devices, robotic prostheses, or other assistive technologies.
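To put an accuracy figure in context, it helps to know how high accuracy can climb by chance alone on a small test set. The sketch below assumes SciPy and a binomial null in which each trial is classified correctly with probability 1 / n_classes, independently; cross-validated predictions are not strictly independent, so this is an approximation. The function name is hypothetical.

```python
from scipy.stats import binom


def chance_threshold(n_trials, n_classes=2, alpha=0.05):
    """Accuracy that a chance-level classifier exceeds with probability <= alpha.

    Uses a binomial null: each of n_trials predictions is correct with
    probability 1 / n_classes, independently of the others.
    """
    k = binom.ppf(1 - alpha, n_trials, 1.0 / n_classes)   # correct-trial count
    return float(k) / n_trials
```

Calling `chance_threshold(60)` for the 30 + 30 trials described in the dataset section gives a threshold well above the nominal 50 %, while for much larger trial counts it approaches 50 %.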

Critical appraisal

Strengths:

- Use of a real, multi‑subject, high‑density EEG dataset rather than simulated data.

- Focus on the mu rhythm, which is well‑established in motor‑related BCI research.

- Combination of wavelet‑based power and information‑theoretic entropy features, which captures both amplitude and complexity aspects of the signal.

- Relatively simple ANN architecture that still yields strong performance, which may make real‑time implementation feasible.

Weaknesses and open questions:

- The sample size (14 participants) is modest; broader validation is needed to confirm generalizability.

- No explicit comparison with alternative classifiers (SVM, KNN, Random Forest) or with alternative feature sets (e.g., only power, only entropy, or time‑domain statistics).

- Class balance and potential over‑fitting are not discussed; confusion matrices or statistical significance tests are absent.

- Real‑time latency and computational load of the 8‑level DWT plus ANN inference are not quantified, which is crucial for BCI deployment.

- The study isolates only the object category and two grip conditions; extending to non‑object stimuli and the “no‑grip” condition would test robustness.

- Integration with other biosignals (e.g., EMG) or multimodal fusion is not explored, though such approaches often improve BCI reliability.
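The missing confusion matrix and significance test can be obtained with standard scikit-learn utilities. A minimal sketch, assuming a feature matrix `X`, binary labels `y`, and any scikit-learn classifier (for example an `MLPClassifier` configured as in the Classification section); `evaluate_with_significance` is a hypothetical helper, and its logistic-regression default is an assumption. `permutation_test_score` refits the model on label-shuffled copies of the data to estimate how often chance alone matches the observed cross-validated accuracy.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import (StratifiedKFold, cross_val_predict,
                                     permutation_test_score)


def evaluate_with_significance(X, y, estimator=None, n_permutations=1000, seed=0):
    """Out-of-fold confusion matrix, CV accuracy and permutation-test p-value.

    estimator defaults to a logistic-regression baseline (an assumption); pass
    an MLP or any other scikit-learn classifier to test it instead.
    """
    if estimator is None:
        estimator = LogisticRegression(max_iter=1000)
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    y_pred = cross_val_predict(estimator, X, y, cv=cv)     # each epoch predicted once
    cm = confusion_matrix(y, y_pred)                        # rows: true, cols: predicted
    score, _, p_value = permutation_test_score(
        estimator, X, y, cv=cv, n_permutations=n_permutations,
        scoring="accuracy", random_state=seed)
    return cm, score, p_value
```

If subject identifiers are available, replacing `StratifiedKFold` with `LeaveOneGroupOut` and passing `groups=` to both calls would also test cross-subject generalization, which matters given the 14-participant sample.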

Future directions

To advance this line of work, researchers should:

- Expand the participant pool and include diverse age groups and clinical populations (e.g., stroke survivors).

- Conduct ablation studies to quantify the contribution of each feature type and to compare wavelet families or decomposition levels.

- Benchmark the ANN against other machine‑learning algorithms and possibly deep learning models (e.g., CNNs on time‑frequency maps).

- Optimize the pipeline for low‑latency operation, perhaps by reducing DWT levels or employing fast wavelet transforms.

- Explore closed‑loop BCI experiments where the classifier’s output directly controls a robotic arm or prosthetic hand, assessing functional outcomes.
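The ablation and benchmarking items above can share one evaluation harness, so that every feature subset and classifier sees identical folds. A sketch assuming scikit-learn and a feature matrix whose columns alternate power and entropy per electrode (a hypothetical layout); the SVM, KNN and Random Forest settings are defaults or illustrative choices, not tuned values, and `benchmark` is a hypothetical helper.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def benchmark(X, y, seed=0):
    """Mean five-fold accuracy for every (feature subset, classifier) pair.

    Assumes columns alternate [power, entropy] per electrode (hypothetical layout).
    """
    subsets = {"power": X[:, 0::2], "entropy": X[:, 1::2], "power+entropy": X}
    models = {
        "MLP": MLPClassifier(hidden_layer_sizes=(5, 5), activation="logistic",
                             solver="lbfgs", max_iter=2000, random_state=seed),
        "SVM": SVC(kernel="rbf"),
        "KNN": KNeighborsClassifier(n_neighbors=5),
        "RF": RandomForestClassifier(n_estimators=100, random_state=seed),
    }
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)  # same folds for all
    results = {}
    for feat_name, Xs in subsets.items():
        for model_name, model in models.items():
            pipe = make_pipeline(StandardScaler(), model)   # cloned per fold by sklearn
            results[(feat_name, model_name)] = cross_val_score(pipe, Xs, y, cv=cv).mean()
    return results
```

Reusing one splitter with a fixed seed keeps the comparison paired across rows, so differences reflect the features or the model rather than the fold assignment.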

In summary, the paper demonstrates a viable EEG‑based method for automatically distinguishing correct versus incorrect hand‑grip actions on objects, leveraging mu‑rhythm ERD, wavelet‑based power and entropy features, and a modest artificial neural network. While promising, further validation, comparative analyses, and real‑time implementation studies are required before the approach can be deployed in practical assistive‑technology settings.
