Differentially Private Confidence Intervals for Empirical Risk Minimization

The process of data mining with differential privacy produces results that are affected by two types of noise: sampling noise due to data collection and privacy noise that is designed to prevent the reconstruction of sensitive information. In this paper, we consider the problem of designing confidence intervals for the parameters of a variety of differentially private machine learning models. The algorithms can provide confidence intervals that satisfy differential privacy (as well as the more recently proposed concentrated differential privacy) and can be used with existing differentially private mechanisms that train models using objective perturbation and output perturbation.

💡 Research Summary

The paper addresses a gap in the literature on differentially private (DP) machine learning: while many algorithms exist for training models under DP (notably the objective‑perturbation and output‑perturbation methods of Chaudhuri et al.), none of them provide statistically sound uncertainty quantification for the learned parameters. The authors propose a general framework that produces confidence intervals (CIs) for the coefficients of any empirical risk minimization (ERM) model that can be trained with these two perturbation techniques, while preserving the privacy guarantees of pure ε‑DP, approximate (ε, δ)‑DP, and the more recent zero‑concentrated DP (zCDP).

The framework consists of two privacy‑budget phases. In the first phase a fraction ε₁ of the total privacy budget is spent to obtain a private estimate $\hat\theta$ of the model parameters. This is done either by adding a spherical Laplace‑distributed linear term to the objective (objective perturbation) or by adding Gaussian noise to the final solution (output perturbation). The second phase uses the remaining budget ε₂ to privately estimate the first‑ and second‑order statistics of the loss function (gradient mean and Hessian/Fisher information) that are required to characterize the sampling distribution of $\hat\theta$.
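As a rough illustration of the first phase, the sketch below splits a zCDP budget ρ and applies Gaussian output perturbation to an already computed non‑private minimizer. The function names, the default even split, and the assumption that the L2 sensitivity of the minimizer is known are all hypothetical choices for this example, not details taken from the paper. The calibration σ = Δ/√(2ρ) is the standard Gaussian‑mechanism guarantee for ρ‑zCDP.

```python
import numpy as np

def split_budget(rho, frac=0.5):
    """Split a zCDP budget rho into (rho_1, rho_2); frac is a hypothetical knob."""
    return frac * rho, (1.0 - frac) * rho

def gaussian_output_perturbation(theta, l2_sensitivity, rho, rng):
    """Add Gaussian noise to a non-private minimizer theta.

    The Gaussian mechanism with L2 sensitivity D and standard deviation sigma
    satisfies (D^2 / (2 sigma^2))-zCDP, so sigma = D / sqrt(2 rho) spends rho.
    Returns the noisy parameters and the noise scale used.
    """
    sigma = l2_sensitivity / np.sqrt(2.0 * rho)
    noisy_theta = theta + rng.normal(0.0, sigma, size=theta.shape)
    return noisy_theta, sigma
```

The returned `sigma` is worth keeping: the noise scale is public, and its square is the privacy component of the covariance used later when forming intervals.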

To construct the CIs the authors invoke a Taylor expansion of the perturbed objective around the true optimum $\theta^*$ and apply the Central Limit Theorem (CLT). Under standard strong‑convexity assumptions the distribution of $\hat\theta$ can be approximated by a multivariate normal with mean $\theta^*$ and covariance that is the sum of two components: one arising from sampling variability (which depends on the true Hessian) and one arising from the privacy noise (which depends on the noise added in phase 1). Because the Hessian and related quantities are themselves data‑dependent, they must be estimated in a privacy‑preserving way; the authors use Laplace or Gaussian mechanisms (or Wishart noise for matrix-valued quantities) to obtain private estimates of these matrices, consuming ε₂ of the budget.
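The Taylor‑CLT argument can be sketched as follows for objective perturbation. The notation is an assumption made for this illustration and may differ from the paper's: $L_n(\theta) = \frac{1}{n}\sum_{i=1}^{n} f(\theta; z_i)$ is the (regularized) empirical risk, $b$ is the random noise vector, $H = \nabla^2\,\mathbb{E}[f(\theta^*; z)]$, and $V = \mathrm{Cov}(\nabla f(\theta^*; z))$.

$$
\begin{aligned}
\hat\theta &= \arg\min_\theta \; L_n(\theta) + \tfrac{1}{n}\, b^\top \theta, \\
0 &= \nabla L_n(\hat\theta) + \tfrac{1}{n} b \;\approx\; \nabla L_n(\theta^*) + H(\hat\theta - \theta^*) + \tfrac{1}{n} b, \\
\hat\theta - \theta^* &\approx -H^{-1}\left(\nabla L_n(\theta^*) + \tfrac{1}{n} b\right), \\
\mathrm{Cov}(\hat\theta) &\approx \underbrace{\tfrac{1}{n}\, H^{-1} V H^{-1}}_{\text{sampling}} \;+\; \underbrace{\tfrac{1}{n^2}\, H^{-1}\, \mathrm{Cov}(b)\, H^{-1}}_{\text{privacy}}.
\end{aligned}
$$

The last line uses the CLT for $\nabla L_n(\theta^*)$ and the independence of $b$ from the data. For output perturbation with noise $\eta \sim \mathcal{N}(0, \sigma^2 I)$ added to the non‑private minimizer, the same reasoning gives the privacy component $\sigma^2 I$ in place of the second term.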

The resulting algorithm is:

- Split the total privacy budget (ε,δ) or ρ (for zCDP) into (ε₁,ε₂) or (ρ₁,ρ₂).

- Run objective‑perturbation (Laplace) or output‑perturbation (Gaussian) with ε₁ (or ρ₁) to obtain $\hat\theta$.

- Privately compute the empirical gradient and Hessian (or Fisher information) using ε₂ (or ρ₂).

- Form the estimated covariance matrix $\hat\Sigma$ by combining the analytic expression from the Taylor‑CLT approximation with the privately estimated Hessian.

- Return component‑wise or joint (1‑α) confidence intervals, e.g., $\hat\theta_i \pm z_{1-\alpha/2}\sqrt{\hat\Sigma_{ii}}$ for each coefficient, or ellipsoidal regions for the whole vector.
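The last two steps can be sketched in a few lines for the output‑perturbation case. This is an illustration under stated assumptions rather than the authors' implementation: the function name and arguments are hypothetical, `hessian` and `grad_cov` stand for estimates that have already been privatized in the second phase, and the covariance is taken to be the sandwich term $H^{-1} V H^{-1}/n$ plus the Gaussian noise variance.

```python
import numpy as np
from statistics import NormalDist

def dp_confidence_intervals(theta_hat, hessian, grad_cov, n, noise_var, alpha=0.05):
    """Component-wise (1 - alpha) intervals from a normal approximation.

    theta_hat : private parameter estimate, shape (d,)
    hessian   : privately estimated Hessian H, shape (d, d)
    grad_cov  : privately estimated gradient covariance V, shape (d, d)
    n         : number of records
    noise_var : per-coordinate variance of the Gaussian output noise
    """
    h_inv = np.linalg.inv(hessian)
    sampling_cov = h_inv @ grad_cov @ h_inv / n                 # sampling component
    sigma_hat = sampling_cov + noise_var * np.eye(len(theta_hat))  # add privacy component
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)                 # normal quantile z_{1-alpha/2}
    half_width = z * np.sqrt(np.diag(sigma_hat))
    return theta_hat - half_width, theta_hat + half_width
```

One practical caveat: a noisy Hessian estimate need not be positive definite, so a real implementation would likely project it onto the positive‑definite cone (or otherwise regularize it) before inverting.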

The authors also refine the constants in the original objective‑perturbation algorithm, reducing the required noise magnitude by tightening the L₂‑sensitivity analysis under the strong‑convexity condition. This yields tighter confidence intervals for the same privacy budget.

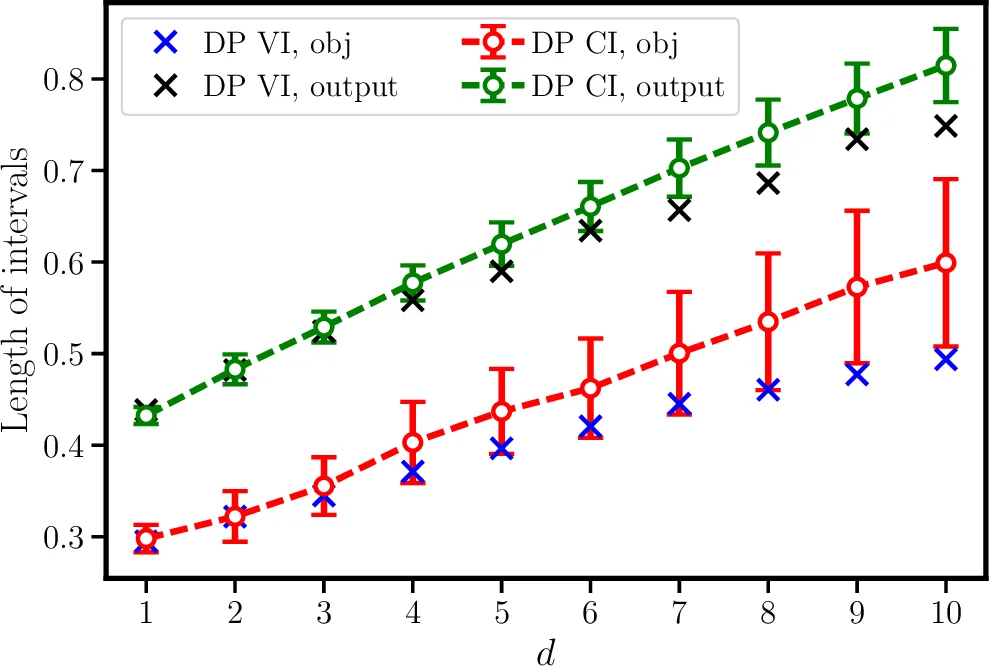

Experimental validation is performed on several public datasets (UCI, libsvm) for logistic regression and support‑vector machines (SVM). The paper compares three settings: (a) pure ε‑DP with objective perturbation, (b) pure ε‑DP with output perturbation, and (c) zCDP with output perturbation. Results show that under pure DP the objective‑perturbation method yields shorter CIs than output perturbation, whereas under zCDP the output‑perturbation method produces substantially tighter intervals. In all cases the empirical coverage of the intervals matches the nominal 95 % level, confirming the validity of the normal approximation and the privacy‑preserving estimation of second‑order statistics. Moreover, the proposed approach works for any ERM model satisfying the required convexity and smoothness conditions, extending beyond the previously studied linear‑regression‑only DP CI methods.
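To make the notion of empirical coverage concrete, the toy simulation below repeats a private estimation many times and counts how often the interval contains the true parameter. It is not a reproduction of the paper's experiments: the setting (mean of Uniform(0, 1) data, which is ERM with squared loss), the function name, and all default values are assumptions for illustration, and the sample variance is used non‑privately to keep the example short.

```python
import numpy as np
from statistics import NormalDist

def empirical_coverage(n=500, rho=0.1, trials=2000, alpha=0.05, seed=0):
    """Fraction of trials in which the private interval covers the true mean."""
    rng = np.random.default_rng(seed)
    true_mean = 0.5                           # mean of Uniform(0, 1)
    sigma = (1.0 / n) / np.sqrt(2.0 * rho)    # Gaussian mechanism, sensitivity 1/n
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    hits = 0
    for _ in range(trials):
        x = rng.uniform(0.0, 1.0, size=n)
        private_mean = x.mean() + rng.normal(0.0, sigma)
        # Simplification: the sample variance is NOT privatized here.
        total_var = x.var(ddof=1) / n + sigma ** 2   # sampling + privacy variance
        half_width = z * np.sqrt(total_var)
        hits += int(abs(private_mean - true_mean) <= half_width)
    return hits / trials
```

Dropping the `sigma ** 2` term from `total_var` shows why both noise sources have to enter the interval width: the intervals then ignore the privacy noise and cover less often than the nominal level.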

In summary, this work introduces the first general technique for constructing differentially private confidence intervals for a broad class of ERM models, integrating privacy‑preserving parameter estimation with rigorous statistical inference. It opens a new research direction that combines model accuracy, privacy guarantees, and uncertainty quantification, and suggests future extensions to high‑dimensional settings, non‑convex deep learning models, and optimal privacy‑budget allocation strategies.
