Riemannian game dynamics

We study a class of evolutionary game dynamics defined by balancing a gain determined by the game’s payoffs against a cost of motion that captures the difficulty with which the population moves between states. Costs of motion are represented by a Riemannian metric, i.e., a state-dependent inner product on the set of population states. The replicator dynamics and the (Euclidean) projection dynamics are the archetypal examples of the class we study. Like these representative dynamics, all Riemannian game dynamics satisfy certain basic desiderata, including positive correlation and global convergence in potential games. Moreover, when the underlying Riemannian metric satisfies a Hessian integrability condition, the resulting dynamics preserve many further properties of the replicator and projection dynamics. We examine the close connections between Hessian game dynamics and reinforcement learning in normal form games, extending and elucidating a well-known link between the replicator dynamics and exponential reinforcement learning.

💡 Research Summary

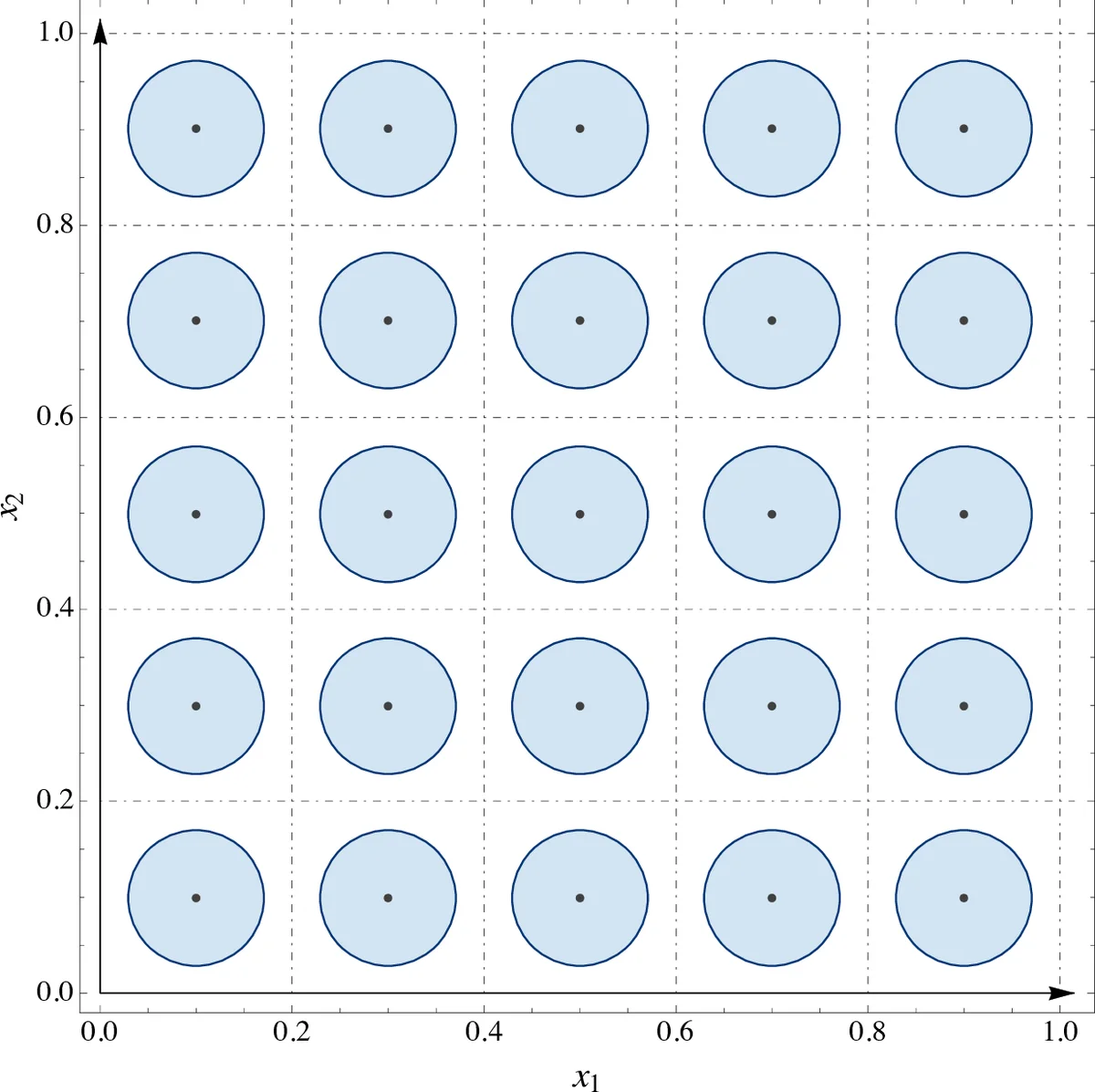

The paper introduces a broad class of evolutionary game dynamics that explicitly incorporates the “cost of motion” experienced by a population when it shifts between population states. This cost is modeled by a state‑dependent inner product (a Riemannian metric) on the simplex of population states. The dynamics are derived by maximizing the net benefit obtained from the game’s payoffs minus the motion cost. This leads to a variational problem whose first‑order optimality conditions define the vector field governing the evolution of the population state.
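The variational problem can be solved in closed form at interior states. The sketch below assumes the cost of motion is half the squared Riemannian norm, ½ zᵀg(x)z, and ignores boundary constraints. Under those assumptions, the Lagrange condition gives z = g(x)⁻¹(v − λ1), with λ fixed by Σz = 0. The names `riemannian_field`, `shahshahani` and `euclidean` are illustrative and are not taken from the paper.

```python
import numpy as np


def riemannian_field(v, g):
    """Velocity maximizing v.z - 0.5 * z^T g z subject to sum(z) = 0.

    v: payoff vector v(x) at an interior state x, shape (n,)
    g: metric matrix g(x) at x, symmetric positive definite, shape (n, n)
    """
    ones = np.ones_like(v)
    g_inv_v = np.linalg.solve(g, v)     # g(x)^{-1} v
    g_inv_1 = np.linalg.solve(g, ones)  # g(x)^{-1} 1
    # The Lagrange multiplier of sum(z) = 0 keeps the velocity tangent to the simplex.
    lam = g_inv_v.sum() / g_inv_1.sum()
    return g_inv_v - lam * g_inv_1


def shahshahani(x):
    """Shahshahani metric g_ab(x) = delta_ab / x_a."""
    return np.diag(1.0 / x)


def euclidean(x):
    """Euclidean metric g_ab = delta_ab."""
    return np.eye(len(x))


x = np.array([0.5, 0.3, 0.2])  # interior population state (illustrative)
v = np.array([1.0, 0.0, 2.0])  # payoff vector at x (illustrative)

z_rep = riemannian_field(v, shahshahani(x))  # equals x * (v - x @ v): the replicator field
z_proj = riemannian_field(v, euclidean(x))   # equals v - v.mean(): the projection field (interior)
```

Positive correlation follows from this construction. Since Σz = 0, v·z = zᵀ(v − λ1) = zᵀg(x)z ≥ 0, with equality only when z = 0.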

Two classical dynamics appear as special cases.

- When the metric is the Shahshahani metric (g_αβ(x) = δ_αβ/x_α), the resulting dynamics coincide with the replicator equation. Under this metric, the cost of changing a strategy’s share grows without bound as that share approaches zero. The support of the population state is therefore invariant, and the dynamics are continuous.
- When the metric is the standard Euclidean metric (g_αβ = δ_αβ), the dynamics become the Euclidean projection dynamics, obtained by orthogonally projecting the payoff vector onto the tangent cone of the simplex. Here the cost of motion stays finite at the boundary. Strategies can therefore be eliminated and later re‑enter, and the dynamics are discontinuous at the boundary of the simplex.
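The boundary behaviour of the projection dynamics can be made concrete with a sketch of the Euclidean projection onto the tangent cone of the simplex. The tangent cone is {z : Σz = 0, z_α ≥ 0 whenever x_α = 0}. The sketch uses an active‑set characterization of that projection, derived here from its optimality conditions rather than taken from the paper. The function name `projection_field` and the example payoffs are hypothetical.

```python
import numpy as np


def projection_field(v, x, tol=1e-12):
    """Euclidean projection of the payoff vector v onto the tangent cone of the simplex at x.

    The cone is {z : sum(z) = 0, z_a >= 0 whenever x_a = 0}. Its projection sets
    z_a = v_a - mu on an active set S and z_a = 0 off S. Here S is the support of x
    plus every unused strategy whose payoff exceeds mu, the mean payoff over S.
    """
    v = np.asarray(v, dtype=float)
    support = x > tol
    active = support.copy()
    mu = v[active].mean()
    unused = np.flatnonzero(~support)
    # Admit unused strategies from the highest payoff down, while they beat the current mean.
    for a in unused[np.argsort(-v[unused])]:
        if v[a] > mu:
            active[a] = True
            mu = v[active].mean()
        else:
            break  # every remaining unused strategy has a payoff no higher than this one
    z = np.zeros_like(v)
    z[active] = v[active] - mu
    return z


x = np.array([0.6, 0.4, 0.0])     # boundary state: strategy 3 is unused (illustrative)
v_hi = np.array([1.0, 0.0, 3.0])  # unused strategy 3 earns the most
v_lo = np.array([1.0, 0.0, 0.2])  # unused strategy 3 earns less than the mean over the support

z_enter = projection_field(v_hi, x)  # strategy 3 enters: its component is positive
z_stay = projection_field(v_lo, x)   # strategy 3 stays out: its component is zero
z_rep = x * (v_hi - x @ v_hi)        # replicator at the same state: the unused strategy never grows
```

With the high payoff, the projection dynamics move the population off the face x₃ = 0. The replicator field at the same state leaves that face invariant.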

These two prototypes motivate a taxonomy: continuous Riemannian dynamics (replicator‑type) and discontinuous Riemannian dynamics (projection‑type). The authors then study a richer family of metrics that satisfy an integrability (Hessian) condition: the metric can be written as the Hessian of a strictly convex potential function f, i.e., g(x) = ∇²f(x). Dynamics generated by such metrics are called Hessian game dynamics. Both prototypes belong to this family: f(x) = Σ_α x_α log x_α yields the Shahshahani metric, and f(x) = ½ Σ_α x_α² yields the Euclidean metric. The potential f serves as a “metric potential,” and the associated Bregman divergence D_f(y‖x) = f(y) − f(x) − ∇f(x)·(y − x) is a natural Lyapunov function.
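The sketch below computes the Bregman divergence for the two prototype potentials. The entropic potential f(x) = Σ x log x has Hessian diag(1/x), and the quadratic potential f(x) = ½Σx² has the identity as Hessian. For states on the simplex, the entropic divergence reduces to the Kullback–Leibler divergence Σ y log(y/x), the classic Lyapunov function of the replicator dynamics. The quadratic divergence reduces to half the squared Euclidean distance. The function names are illustrative, and the entropy assumes strictly positive entries.

```python
import numpy as np


def bregman(f, grad_f, y, x):
    """Bregman divergence D_f(y || x) = f(y) - f(x) - grad_f(x) . (y - x)."""
    return f(y) - f(x) - grad_f(x) @ (y - x)


def entropy(x):
    # Hessian is diag(1/x): the Shahshahani metric (requires x > 0)
    return np.sum(x * np.log(x))


def entropy_grad(x):
    return np.log(x) + 1.0


def quadratic(x):
    # Hessian is the identity: the Euclidean metric
    return 0.5 * np.sum(x ** 2)


def quadratic_grad(x):
    return x


x = np.array([0.5, 0.3, 0.2])  # interior states (illustrative)
y = np.array([0.2, 0.2, 0.6])

d_entropy = bregman(entropy, entropy_grad, y, x)   # equals sum(y * log(y / x)) on the simplex
d_quad = bregman(quadratic, quadratic_grad, y, x)  # equals 0.5 * ||y - x||^2
```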

Key theoretical contributions are as follows:

- Positive correlation (PC) – All Riemannian dynamics satisfy v(x)·V(x) > 0 whenever V(x) ≠ 0. Here V(x) is the vector field of the dynamics and v(x) is the payoff vector at state x. In words, the direction of motion is positively correlated with payoffs. In particular, every Nash equilibrium of the underlying game is a rest point.

- Global convergence in potential games – When the game itself admits a potential function, the dynamics follow the steepest ascent of that potential measured in the chosen metric. The potential therefore increases along every non‑stationary trajectory. This generalizes Kimura’s maximum principle, which corresponds to the special case of the Shahshahani metric.

- Convergence and stability under Hessian metrics – Using the Bregman divergence as a Lyapunov function, the authors prove:
  - Global convergence to Nash equilibria in strictly contractive games.
  - Local asymptotic stability of evolutionarily stable states (ESS).
  - Elimination of strictly dominated strategies for continuous Hessian dynamics (a property that fails in the discontinuous regime).

- Time‑average convergence – For interior trajectories that remain bounded away from the boundary of the simplex, the time average of the population state converges to the set of Nash equilibria. This extends a classic result for the replicator dynamics.

- Reinforcement‑learning interpretation – Suppose agents keep cumulative payoff scores y for their strategies. At each instant they map these scores to a mixed strategy through a regularized choice rule, x = argmax over x′ in the simplex of {y·x′ − f(x′)}, where f is a steep potential (its gradient blows up at the boundary of the simplex). The induced evolution of mixed strategies then coincides with the Hessian dynamics generated by the metric g = ∇²f. The logit (soft‑max) rule is the case f(x) = Σ_α x_α log x_α, whose Hessian is the Shahshahani metric. This case recovers the well‑known link between exponential reinforcement learning and the replicator equation, and other steep potentials extend that link to further Hessian dynamics.
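The learning interpretation can be checked numerically. The check assumes scores evolve as dy/dt = v(x) and that mixed strategies are given by the regularized choice rule x = argmax over the simplex of {y·x − f(x)}. The entropic potential gives the logit map, whose induced motion should match the replicator field. As a second steep example, chosen here only for illustration, the log‑barrier f(x) = −Σ log x gives x_α = 1/(μ − y_α) and should induce the Hessian dynamics of the metric diag(1/x²). The game (rock‑paper‑scissors), the scores, and all function names are hypothetical. Velocities are compared by central finite differences.

```python
import numpy as np


def logit_choice(y):
    """Choice map for f(x) = sum x log x: x_a proportional to exp(y_a)."""
    w = np.exp(y - y.max())  # shift scores for numerical stability
    return w / w.sum()


def burg_choice(y, iters=200):
    """Choice map for the log-barrier f(x) = -sum log x (also steep).

    Maximizing y.x - f(x) over the simplex gives x_a = 1 / (mu - y_a), with mu > max(y)
    chosen so that the shares sum to one; mu is found by bisection.
    """
    lo, hi = y.max(), y.max() + len(y)  # share sum is > 1 just above lo and <= 1 at hi
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if np.sum(1.0 / (mid - y)) > 1.0:
            lo = mid
        else:
            hi = mid
    return 1.0 / (hi - y)


def replicator(x, v):
    # Hessian dynamics for g(x) = diag(1/x), the Shahshahani metric
    return x * (v - x @ v)


def log_barrier_dynamics(x, v):
    # Hessian dynamics for g(x) = diag(1/x**2), the Hessian of -sum log x
    w = x ** 2
    return w * (v - (w @ v) / w.sum())


A = np.array([[0.0, -1.0, 1.0],
              [1.0, 0.0, -1.0],
              [-1.0, 1.0, 0.0]])  # rock-paper-scissors payoffs (illustrative)
y = np.array([0.3, -0.2, 0.1])    # cumulative payoff scores (illustrative)
h = 1e-6                          # step for the central finite difference


def induced_velocity(choice, y):
    """Approximate d/dt choice(y(t)) when the scores follow dy/dt = v(x) = A x."""
    v = A @ choice(y)
    return (choice(y + h * v) - choice(y - h * v)) / (2 * h)


v_logit = induced_velocity(logit_choice, y)  # should match replicator(x, A @ x), x = logit_choice(y)
v_burg = induced_velocity(burg_choice, y)    # should match log_barrier_dynamics(x, A @ x), x = burg_choice(y)
```

The same score process drives both choice maps. Only the potential f changes, and with it the metric of the induced dynamics.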

The paper situates its contributions within a broad literature: it connects to Hofbauer‑Sigmund’s continuous‑trait selection models, to earlier work on natural selection and Kimura’s principle, to convex‑analysis frameworks involving Hessian Riemannian metrics, and to recent studies of learning in games (Hart–Mas‑Colell, Mertikopoulos–Moustakas).

Finally, the authors outline future directions: extending the framework to multi‑population settings, allowing heterogeneous or stochastic cost structures, analyzing robustness under noise, and designing practical reinforcement‑learning algorithms that exploit the geometric insight. In sum, by embedding evolutionary game dynamics in a Riemannian geometric setting, the paper unifies several classical dynamics, provides powerful analytical tools (Bregman divergence, Hessian potentials), and bridges evolutionary game theory with modern reinforcement‑learning paradigms.
