Photonic machine learning implementation for signal recovery in optical communications

Machine learning techniques have proven very efficient in assorted classification tasks. Nevertheless, processing time-dependent high-speed signals can turn into an extremely challenging task, especially when these signals have been nonlinearly distorted. Recently, analogue hardware concepts using nonlinear transient responses have been gaining significant interest for fast information processing. Here, we introduce a simplified photonic reservoir computing scheme for data classification of severely distorted optical communication signals after extended fibre transmission. To this end, we convert the direct bit detection process into a pattern recognition problem. Using an experimental implementation of our photonic reservoir computer, we demonstrate an improvement in bit-error-rate by two orders of magnitude, compared to directly classifying the transmitted signal. This improvement corresponds to an extension of the communication range by over 75%. While we do not yet reach full real-time post-processing at telecom rates, we discuss how future designs might close the gap.

💡 Research Summary

The paper tackles a fundamental challenge in long‑haul optical fiber communications: the severe nonlinear distortion and noise that accumulate after transmission over tens of kilometres, which render conventional digital signal processing (DSP) insufficient for reliable bit recovery. The authors propose a simplified photonic reservoir computing (PRC) architecture as an analog hardware‑based machine‑learning front‑end that reframes direct bit detection into a pattern‑recognition problem.

In the experimental setup, a continuous‑wave laser at 1.55 µm is intensity‑modulated with a 10 Gb/s non‑return‑to‑zero (NRZ) data stream. The signal propagates through 80 km of standard SMF‑28 fiber, where it experiences chromatic dispersion, self‑phase modulation, and Raman scattering. After photodetection, the raw electrical waveform exhibits a bit‑error‑rate (BER) of roughly 10⁻³ when processed by a conventional threshold detector. To feed this waveform into the PRC, the authors first convert it back to the optical domain using an electro‑optic modulator (EOM), then inject it into a passive optical feedback loop that contains a nonlinear element (e.g., a semiconductor optical amplifier or a nonlinear waveguide). The loop implements a high‑dimensional dynamical system with fading memory: the influence of past inputs on the loop state decays over time. By time‑multiplexing the loop response, the authors obtain about 200 virtual nodes per bit, each sampled at sub‑nanosecond resolution.

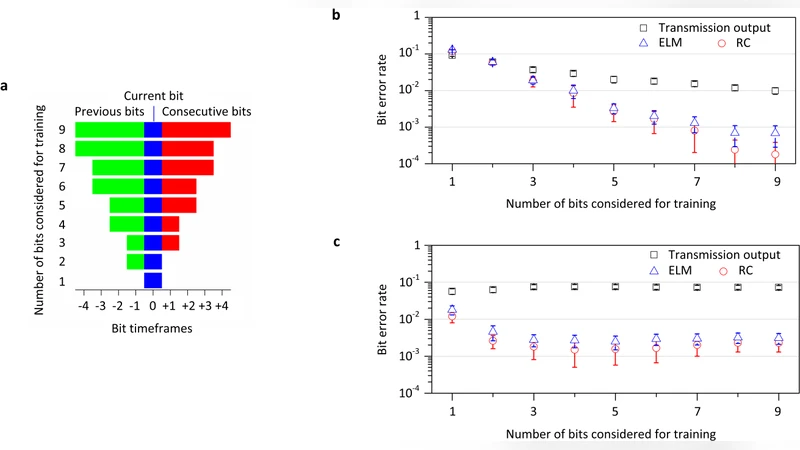
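The time‑multiplexed loop described above can be illustrated with a toy numerical model. The following is a minimal sketch, not the authors' implementation: the `tanh` nonlinearity, the random binary input mask, the neighbour coupling via `np.roll`, and the parameter names `feedback` and `input_gain` are all assumptions chosen for illustration. Only the figure of 200 virtual nodes per bit comes from the summary.

```python
import numpy as np

def reservoir_states(u, n_nodes=200, feedback=0.8, input_gain=0.5, seed=0):
    """Toy delay-loop reservoir (illustrative assumptions, not the real setup).

    u        : 1-D array with one input sample per bit period.
    n_nodes  : number of virtual nodes per bit (200 in the summary).
    Returns an array of shape (len(u), n_nodes) holding the node states.
    """
    rng = np.random.default_rng(seed)
    # Fixed random mask: each virtual node sees a differently scaled input.
    mask = rng.choice([-1.0, 1.0], size=n_nodes)
    states = np.zeros((len(u), n_nodes))
    prev = np.zeros(n_nodes)
    for k, sample in enumerate(u):
        # Delayed feedback from the neighbouring node plus the masked input,
        # passed through a saturating nonlinearity (assumed tanh).
        prev = np.tanh(feedback * np.roll(prev, 1) + input_gain * mask * sample)
        states[k] = prev
    return states
```

Because `feedback` is below 1 and `tanh` has slope at most 1, the effect of any past input shrinks by at least that factor per bit period, which is a simple way to see the fading‑memory property in this sketch.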

Training is performed offline: the reservoir states are recorded for a known training sequence, and a linear read‑out layer is trained using ridge regression (regularized least squares). Once the read‑out weights are fixed, the system is tested on unseen data. The PRC‑enhanced receiver reduces the BER to ~10⁻⁵, a two‑order‑of‑magnitude improvement over the baseline. In terms of system performance, this translates into an effective increase of the transmission reach by more than 75 % for the same error‑rate target or, equivalently, a reduction of about 6 dB in the signal‑to‑noise ratio (SNR) required to reach the BER that the receiver achieves without the reservoir.
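The offline training step can be sketched in a few lines of NumPy. This is an illustrative example under stated assumptions rather than the authors' code: the function names, the bias column, the regularization strength `ridge`, and the 0.5 decision threshold are hypothetical choices, and bits are assumed to be encoded as 0 and 1.

```python
import numpy as np

def _with_bias(states):
    """Append a constant column so the read-out can learn an offset."""
    return np.hstack([states, np.ones((states.shape[0], 1))])

def train_readout(states, targets, ridge=1e-3):
    """Ridge regression: solve (X^T X + ridge * I) w = X^T y for w."""
    X = _with_bias(states)
    A = X.T @ X + ridge * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ targets)

def classify(states, weights, threshold=0.5):
    """Apply the fixed linear read-out and threshold to recover bits."""
    return (_with_bias(states) @ weights > threshold).astype(int)

def bit_error_rate(predicted, truth):
    """Fraction of bits that were classified incorrectly."""
    return float(np.mean(predicted != truth))
```

In use, `train_readout` would be called once on the recorded states of the known training sequence, and `classify` followed by `bit_error_rate` would then be applied to states recorded for unseen data.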

The authors acknowledge several limitations. The current implementation relies on high‑speed oscilloscopes and external computers for data acquisition and processing, so true real‑time operation at telecom line rates (≥10 Gb/s) is not yet demonstrated. The reservoir parameters (feedback strength, loop length, nonlinear gain) are not fully optimized, which may limit scalability to higher bit rates (≥40 Gb/s) where the fading‑memory window must be shorter. Moreover, the linear read‑out is trained offline; an online learning scheme would be needed for adaptive compensation of time‑varying channel impairments.

Future work is outlined in three main directions. First, integration: moving the reservoir onto a photonic integrated circuit (PIC) using microring resonators, silicon‑nitride waveguides, or plasmonic structures would drastically reduce footprint, latency, and power consumption while increasing bandwidth. Second, on‑chip learning: implementing weight updates directly on FPGA or ASIC hardware, possibly with analog weight storage (e.g., memristive elements), would enable real‑time adaptation to changing channel conditions. Third, parallelisation: employing wavelength‑division multiplexing (WDM) or spatial‑mode multiplexing to run multiple reservoirs in parallel could boost throughput to meet or exceed current telecom standards.

In summary, this study provides the first experimental validation that a photonic reservoir computer can serve as an effective post‑processing block for severely distorted optical communication signals. By exploiting the intrinsic nonlinear transient dynamics of an optical feedback system, the PRC extracts high‑dimensional temporal features that a simple threshold detector cannot capture, leading to a substantial BER reduction and extended transmission reach. The work positions photonic neural‑network hardware as a promising candidate for next‑generation optical receivers, bridging the gap between ultra‑fast analog processing and modern machine‑learning‑driven signal recovery.
