ScaleSimulator: A Fast and Cycle-Accurate Parallel Simulator for Architectural Exploration

Design of next generation computer systems should be supported by simulation infrastructure that must achieve a few contradictory goals such as fast execution time, high accuracy, and enough flexibility to allow comparison between large numbers of possible design points. Most existing architecture level simulators are designed to be flexible and to execute the code in parallel for greater efficiency, but at the cost of scarified accuracy. This paper presents the ScaleSimulator simulation environment, which is based on a new design methodology whose goal is to achieve near cycle accuracy while still being flexible enough to simulate many different future system architectures and efficient enough to run meaningful workloads. We achieve these goals by making the parallelism a first-class citizen in our methodology. Thus, this paper focuses mainly on the ScaleSimulator design points that enable better parallel execution while maintaining the scalability and cycle accuracy of a simulated architecture. The paper indicates that the new proposed ScaleSimulator tool can (1) efficiently parallelize the execution of a cycle-accurate architecture simulator, (2) efficiently simulate complex architectures (e.g., out-of-order CPU pipeline, cache coherency protocol, and network) and massive parallel systems, and (3) use meaningful workloads, such as full simulation of OLTP benchmarks, to examine future architectural choices.

💡 Research Summary

The paper introduces ScaleSimulator, a system‑level simulation environment that aims to reconcile three traditionally conflicting goals: fast execution, cycle‑accurate timing, and sufficient flexibility to explore a large design space. Existing architectural simulators either prioritize accuracy (cycle‑accurate simulators) and suffer from poor parallel scalability, or they adopt parallel discrete‑event techniques that improve speed but introduce synchronization bottlenecks and accuracy loss. ScaleSimulator tackles this dilemma by making parallelism a first‑class design principle and by defining a novel “2.5‑phase” execution model that enables lock‑free, barrier‑driven parallelism while preserving cycle‑accurate semantics.

Architecture Overview

The simulator is split into a Functional Model (FM) and a Performance Model (PM). The FM provides a correct functional execution of the target system, typically by leveraging a fast full‑system emulator such as QEMU. The PM, reminiscent of SystemC TLM‑2.0, models timing using a set of units (stateful components), ports (point‑to‑point connections), and messages (events). Units encapsulate micro‑architectural resources (e.g., pipeline stages, cache controllers) and communicate exclusively via ports, which carry metadata such as capacity and latency.

2.5‑Phase Execution

For each simulated clock cycle the simulator performs three logical steps:

-

Work Phase – All units that are ready execute their assigned operation in parallel. The design rules guarantee that operations within the same work phase are independent: each operation reads input messages, accesses local state, checks output‑port availability, computes results, stores them locally, and finally posts result messages to its output ports. Because ports are point‑to‑point, there is no contention, and no locks are required.

-

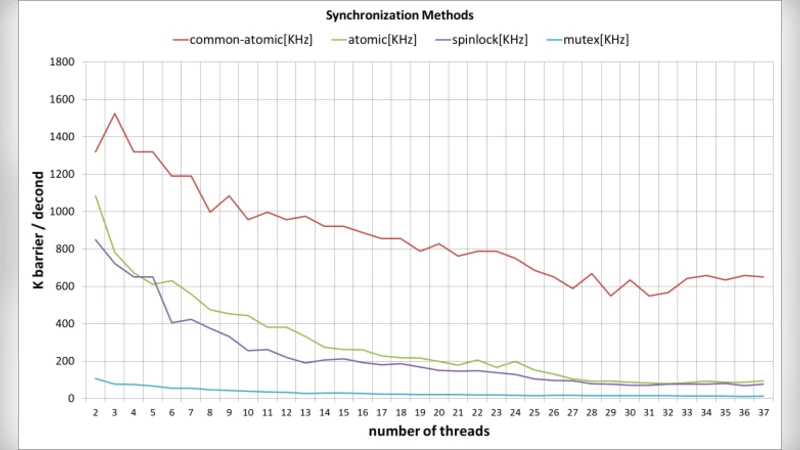

Barrier Phase – A lightweight software barrier synchronizes all worker threads. This is the only global synchronization point and its cost dominates the scalability of the whole system. The authors argue that, given the race‑free nature of the work phase, a simple barrier suffices to enforce lock‑step cycle progression.

-

Transfer Phase – The messages produced in the work phase are transferred to the destination units. Importantly, the transfer moves pointers rather than the full payload, dramatically reducing memory traffic. After the pointer copy, the receiving unit’s input port is marked ready for the next cycle.

Back‑Pressure Handling

Back‑pressure—situations where a downstream unit cannot accept more messages—has traditionally forced serial traversal of pipelines. ScaleSimulator introduces explicit back‑pressure ports that can be triggered one cycle early (cycle N‑1) to indicate a stall that will occur in cycle N. This pre‑emptive signaling eliminates the need for intra‑cycle dependency checks. Two mechanisms are supported: (a) explicit ports that carry stall messages, and (b) implicit stalls where a transfer fails because the target input port is occupied, causing the sender’s output port to remain busy for the next cycle. Both approaches guarantee that the order of execution does not affect timing results.

Synchronization Philosophy

Unlike many modern simulators that rely on relaxed synchronization (e.g., optimistic Time‑Warp, conservative PDES with frequent roll‑backs), ScaleSimulator deliberately avoids any fine‑grained locking or semaphore usage. The only synchronization primitive is the barrier between phases, based on the assumption that all operations within a phase are race‑free. This design yields substantially higher parallel efficiency, especially when scaling to hundreds of cores.

Evaluation

The authors evaluate the framework using realistic workloads: OLTP transaction processing, Hadoop map‑reduce jobs, and SPEC‑2006 benchmarks. Experiments on a many‑core host (tens to hundreds of physical cores) demonstrate:

- Scalability – Near‑linear speedup up to ~200 cores, achieving >80 % parallel efficiency.

- Accuracy – Measured timing error stays below 1 % compared to a reference serial cycle‑accurate simulator.

- Throughput – Ability to simulate full system workloads (including OS and application code) within practical time frames, something previously infeasible for cycle‑accurate tools.

Strengths

- The 2.5‑phase model cleanly separates computation from communication, enabling lock‑free parallelism.

- Pointer‑based message transfer reduces memory bandwidth pressure.

- Explicit early back‑pressure eliminates costly pipeline traversals.

- The barrier‑only synchronization simplifies implementation and scales well on modern many‑core CPUs.

- Modular FM/PM separation allows swapping the functional emulator (e.g., QEMU → gem5) without redesigning the timing model.

Limitations and Open Issues

- The requirement that every operation be modeled as a single‑cycle action (with multi‑cycle latency expressed as delayed “no‑op” cycles) may increase model complexity for sophisticated micro‑architectures (e.g., out‑of‑order cores with variable‑latency execution units).

- Barrier synchronization, while lightweight, can still become a bottleneck at extreme core counts or when the work phase workload is highly imbalanced. Adaptive barrier algorithms or hardware‑assisted synchronization were not explored.

- Memory locality optimizations (e.g., NUMA‑aware placement of units) are mentioned only briefly; deeper analysis could further improve scalability on large servers.

- The functional model relies heavily on QEMU; portability to other full‑system emulators or to trace‑driven functional models is not demonstrated.

- No quantitative comparison with state‑of‑the‑art relaxed‑sync simulators (e.g., Zsim, Sniper) is provided, making it difficult to assess the trade‑off between absolute speed and accuracy.

Future Directions

The authors suggest several avenues for extending the work: integrating NUMA‑aware data structures, exploring hardware‑supported barrier mechanisms, supporting dynamic workload balancing, and formalizing an API for plugging in alternative functional models. Additionally, extending the back‑pressure mechanism to support more complex flow‑control protocols (e.g., credit‑based networks) would broaden applicability to emerging many‑core interconnect designs.

Conclusion

ScaleSimulator presents a compelling approach to achieving cycle‑accurate, large‑scale system simulation with practical execution times. By embedding parallelism into the very fabric of the simulation model—through lock‑free unit design, pointer‑based message transfer, early back‑pressure signaling, and barrier‑only synchronization—the framework bridges the long‑standing gap between accuracy and performance. While certain scalability challenges remain, the methodology offers a solid foundation for next‑generation architectural exploration tools.

Comments & Academic Discussion

Loading comments...

Leave a Comment