Detecting Alzheimers Disease Using Gated Convolutional Neural Network from Audio Data

We propose an automatic detection method of Alzheimer's diseases using a gated convolutional neural network (GCNN) from speech data. This GCNN can be trained with a relatively small amount of data and can capture the temporal information in audio par…

Authors: Tifani Warnita, Nakamasa Inoue, Koichi Shinoda

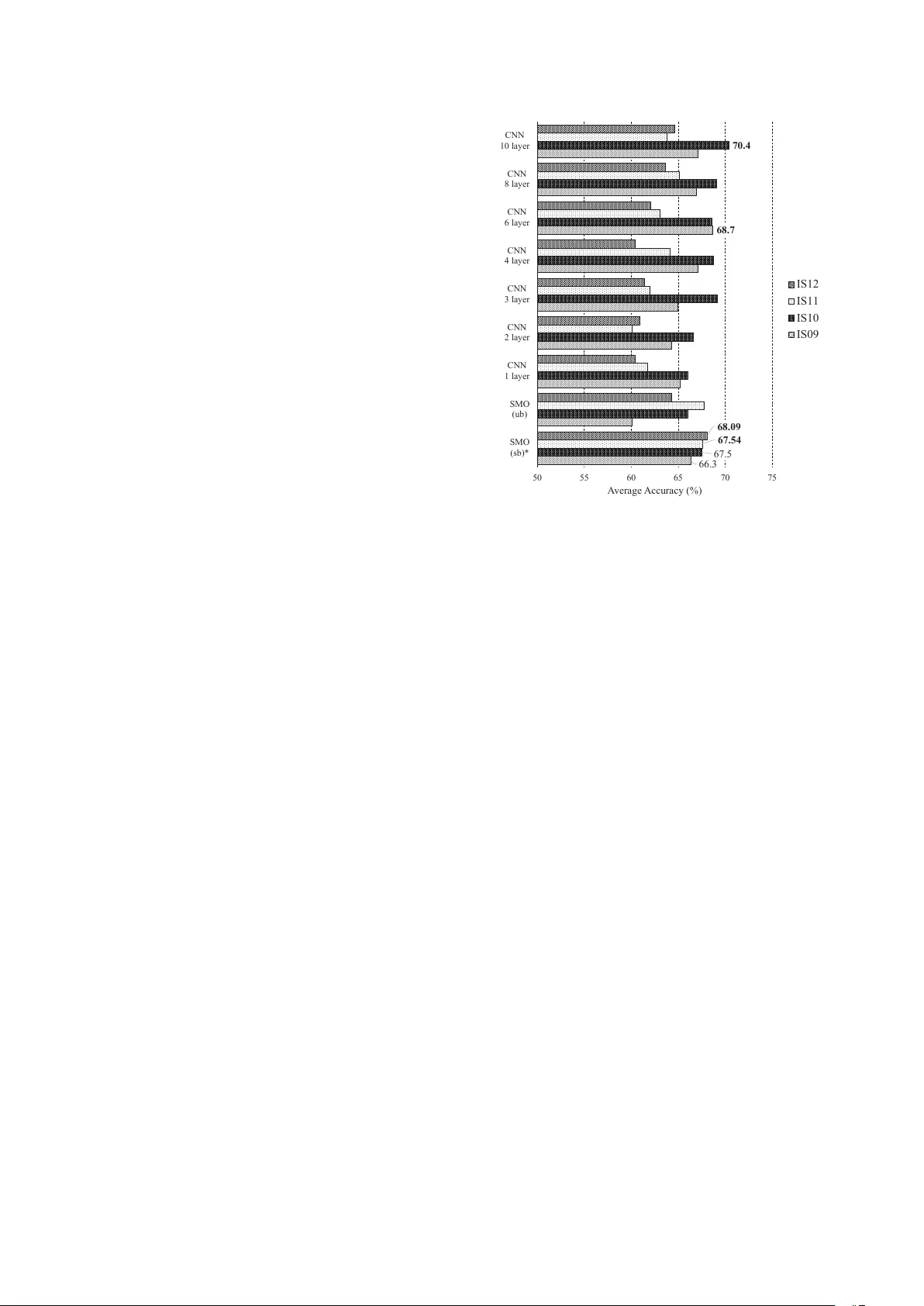

Detecting Alzheimer’ s Disease Using Gated Con volutional Neural Netw ork fr om A udio Data T ifani W arnita, Nakamasa Inoue, K oichi Shinoda T okyo Institute of T echnology , T okyo, Japan { tifani,inoue } @ks.cs.titech.ac.jp, shinoda@c.titech.ac.jp Abstract W e propose an automatic detection method of Alzheimer’ s diseases using a gated con volutional neural network (GCNN) from speech data. This GCNN can be trained with a relatively small amount of data and can capture the temporal information in audio paralinguistic features. Since it does not utilize any linguistic features, it can be easily applied to any languages. W e ev aluated our method using Pitt Corpus. The proposed method achie ved the accurac y of 73.6%, which is better than the con ventional sequential minimal optimization (SMO) by 7.6 points. Index T erms : Alzheimer’ s disease, computational paralinguis- tics, con volutional neural network, gating mechanism 1. Introduction Alzheimer’ s Disease (AD) is the most common cause of de- mentia [1], a neurodegenerati ve disease strongly related to the reduced functionality or even death of neurons in the central nervous system [2]. As the result of ageing society , we face an increasing number of people being af fected by AD [3] which is estimated to be doubled ev ery 20 years [4]. The most notice- able symptom of AD patients is the memory loss [5] such as in recalling experiences, which results in poor narrati ve memory [6]. They also often become apathy and easy to get depressed [1]. At present, there is no clear protocol on how to detect AD not only in an effecti ve but also accurate way [1]. The most common approach is to monitor the patients, to carefully exam- ine the medical history of the patients, to conduct some cogni- tiv e tests (i.e. a picture description task, a naming task), mental status, and mood test, and to take their brain images. The care- ful diagnosing process can take in days or e ven in weeks which might be v ery cumbersome. Early prediction of AD actually can help its patients to preserve their cognitiv e functions [7]. Some of their causes are treatable and the patients are sometimes fully recov ered [8]. Automatic detection of AD in its early phase has been strongly demanded. T o date, there have been a lot of studies for automatically detecting AD patients. Most of them used linguistic information [3, 9], which is dif ficult to be applied to different languages, es- pecially to low-resource languages. In this study , we propose a non-linguistic approach for detecting AD using acoustic fea- tures from speech data. Inspired by numerous successes with deep learning for paralinguistic tasks such as for emotion recog- nition [10, 11], we employ conv olutional neural networks with a gating mechanism. 2. Related W orks Linguistic information had been widely utilized in order to auto- matically detect AD patients [12, 13, 9]. For instance, W ankerl et al. (2017) [9] employed a pure linguistic approach based on n -gram on Pitt Corpus [14], in which subjects undergo a picture description task. From the study , they found that patients often not only uttered incomplete phrases but also interrupted others. These degraded the intelligibility of their speech [15]. Some studies utilized both of linguistic features and acous- tic features to detect people having AD [15, 3, 16]. Fraser et al. (2016) [3] utilized multilinear logistic regression and se- lected 35 top-ranked features out of 370 features by using Pitt Corpus [14]. Khodabakhsh et al. (2015) [15] used the record- ings of conv ersational speech of 32 AD patients and 51 control subjects for this combination. W einer et al. (2016) [17] em- ployed only acoustic information from German con versational recordings. Ho wev er, the study was relied on the transcription for calculating some features such as silence-to-word ratio and word rate. In contrast with these studies, we focused on non-linguistic approach where only speech audio features of the subjects are utilized. It can be easily applied to other languages and expected to be more robust against en vironmental noise and channel dif- ferences. As features, we used the paralinguistic features of- ten used in emotion recognition task since the previous studies showed that AD people tend to show emotional prosodic im- pairment [18]. Numerous approaches for detecting emotions emerged from people hav e been carried out. Schuller et al. (2009) [19] provided the baseline for emotion recognition in the INTERSPEECH 2009 Emotion Challenge, which uses se- quential minimal optimization (SMO). Huang et al. (2014) [10] employed deep learning based approach in which con volutional layers were employed. Keren and Schuller (2016) [11] used not only con volutional but also recurrent layers. 3. Database In this study , we used the picture description task session data of Pitt Corpus [14], which is a part of DementiaBank, a multime- dia database for studying people having dementia. In the picture description task, patients are asked to describe what happens in a picture, Cookie Theft Picture of the Boston Diagnostic Apha- sia Examination [20]. Pitt Corpus consists of speech data and their transcription from 244 control (healthy) subjects as well as 309 patients having dementia such as mild cogniti ve impair- ment (MCI), vascular dementia, and AD. In this study , we used only the data from AD patients and from patients suspected of having AD (probable AD). It should be noted that, e ven though we used the same database as in [3, 9], the number of the sub- jects in our study was slightly dif ferent from theirs from the following three reasons. First, the size of the database has in- creased over time. Second, we excluded speech data with ov er- lapped sounds from the other intervie w sessions. Third, we only used those data with both audio data and transcription informa- tion. Consequently , the number of sessions is 488 (255 AD, 233 control) from 267 subjects (169 AD, 98 control). Similar to [3], due to a limited amount of data, we employed a 10-fold cross validation scheme for our ev aluation. W e designed the ten sub- sets so that no subject appeared in more than one subset in order to av oid any speaker dependencies. In this study , we performed three preprocessing stages. First, we normalize each audio signal using the average value of decibels relativ e to full scale (dBFS) in the data. Then, we seg- mented the audio data of each subject into utterances according to the transcription information. W e obtained the total of 6267 utterances (3276 AD, 2991 control). Finally , we added 10ms at the beginning and at the end of each utterance segment with 15ms fade-in and fade-out. 4. F eatures In this study we used openSMILE [21] to extract acoustic fea- tures from Pitt Corpus. OpenSMILE consists of se veral differ - ent configurations of acoustic feature extraction. It has been mainly used for emotion recognition but we believ e it is also effecti ve for our purpose. While AD patients can easily get de- pressed, anxious, or ev en upset [1], they find it difficult to ex- press their emotions in prosodies such as tempo alteration and powerful intonation [18]. Based on this finding, we use the fol- lowing feature sets. 1. INTERSPEECH 2009 Emotion Challenge Features (IS09) [19] In this feature set, there are 16 types of low-le vel descrip- tors (LLD) extracted from the frame le vel. The delta co- efficients were also calculated hence producing the total of 32 LLD. In order to get the utterance-le vel features from LLD, 12 functionals are introduced (e.g. the val- ues of minimum and maximum, mean, and range) are applied to each LLD. As a result, 384 features are ex- tracted from one utterance. 2. INTERSPEECH 2010 Paralinguistic Challenge Features (IS10) [22] The additional LLD to IS09 are PCM loudness, eight log Mel frequency band (0-7), eight line spectral pairs (LSP) frequency (0-7), F0 en velope, voicing probability , jitter local, jitter DPP , and shimmer local. In addition, more MFCC features are extracted (0-14 compared to 1-12). Finally , we get 76 LLD for one frame and the total of 1582 features for one utterance. 3. INTERSPEECH 2011 Speaker State Challenge Features (IS11) [23] Compared to the pre vious feature sets, IS11 provides the deriv ed loudness measure and the employment of Rel- ativ e Spectral Analysis (RAST A)-style filtered auditory spectra resulting in 118 LLD for the frame-lev el features. The total number of features in one utterance is 4368. 4. INTERSPEECH 2012 Speaker T rait Challenge Features (IS12) [24] In this feature set, some of the LLD added compare to IS11 includes harmonic-to-noise ratio (HNR), spectral harmonicity , and psychoacoustic spectral sharpness re- sulting in 120 LLD for the frame-lev el features. After being applied by functionals, total number of features in one utterance is 6125 features. Convolution Layer N Time (T) LLD Features (F) σ ) ⵙ ) Gated Convolution Time (T) Number of Filters (K) Time (T) Number of Filters (K) Gated Linear Unit (GLU) Max-Pooling Flatten Fully Connected Layer (256) Time (T) Prediction (AD or Control) Input (OpenSMILE features) Number of Filters (K) Figure 1: GCNN with depth = 1. The kernel window in the con volutional layer is colored in gr ay . 5. Method 5.1. Con volutional Neural Network In this study , assuming that temporal features are well repre- sented in the frame-le vel features, we employed a Conv olutional Neural Network (CNN) [25] as a classifier . A CNN was in- spired by the structure of animal visual cortex for perceiving lights [26] and has yielded supreme results in an abundance of tasks in the past few years [27]. Furthermore, it needs relativ ely small amount of training data compared to the other networks since it has a much smaller number of connection weights [28]. The con volution operation ov er the input aims to extract the temporal information by sliding through the use of a ker- nel (filter). In our study , we feed utterance segment features X ∈ R F × T into the CNN, where F and T represent the di- mension of a feature vector of LLD and the number of time frames, respectively . The size of the sliding window is R F × N where N is the window length of the kernel in time axis. The con volution operation between the kernel and the input will pro- duce a scalar output. Output from the conv olution layer at time i , i = n + 1 , ..., T is then defined as, y i = g F X f =1 N X n =1 w f ,n x f ,i − n + b , (1) where b and g ( · ) denotes the bias and activ ation function re- spectiv ely . x c,d is the ( c, d ) element of the input X , and w c,d is the ( c, d ) element of the weight matrix W . Both W and b are the learnable parameters of the network that we train. When the kernel is sliding through the input matrix ov er the time dimen- sion, we multiply the overlapping element of the two matrices as in Eq. 1. W e employed a rectified linear unit (ReLU) [29] as the activ ation function g . This network is also referred as T ime-Delay Neural Network (TDNN) [30]. Since the input dimension for CNN should be fixed, we set the segment length as that of the longest utterance segment in the dataset. After that, we applied zero padding for the rest of the utterance segment. W e use the number of kernels K = 64 and the window length of N = 3 . Accordingly , the calculation of every patch of the input will produce the complete output Y ∈ R M × K where M is the number of time frames, T − N + 1 . W e added batch normalization before each activation func- tion [31]. W e also used random weight initialization for each con volution layer . After each con volution layer, we put a max- pooling layer [32]. The output of the last con volution layer is flattened into one feature vector . For example, the flattened output Y ∈ R M × N will produce a vector Z ∈ R O where O = M × N . This vec- tor became the input to a fully-connected layer with activation function ReLU consisting 256 hidden neurons. W e also em- ployed batch normalization and initialized the weight matrix as random uniform. Furthermore, we also applied dropout with the value of 0.5 before the output layer . The output layer consists one hidden neuron with a sigmoid function. W e used binary cross-entropy as the loss function and Adam [33] as the opti- mizer . W e trained the network with the maximum number of epochs 200 based on the pair of LLD features of each utterance segment and its corresponding binary label. 5.2. Gating Mechanism In addition to the standard deep con volutional neural network, we introduce a gating mechanism after each con volution. The resulting network is called Gated Conv olution Neural Network (GCNN). A gate represents an information controller which manages the information that flows into the succeeding layer . The gate function can pre vent the gradient from being vanished during backpropagation [34]. Recently , it has been often used in con volutional neural networks such as for conditional im- age modeling [35], language modeling [34], and speech syn- thesis [36]. Previous study [34] showed that gated linear unit (GLU) outperformed gated tanh unit (GTU) which is used in [35]. Therefore, we employed GLU in our study . Similar as in Section 5.1, we give the input of extracted LLD features of each utterance segment X ∈ R T × F . W e used a sigmoid function as the activation function g , which is multi- plied by a linear gate. Eq. 1 is modified as: (2) y i = F X f =1 N X n =1 v f ,n x f ,i − n + e · g F X f =1 N X n =1 w f ,n x f ,i − n + b , 66 .3 68 .7 67 .5 70 .4 68 .09 67 .54 5 0 5 5 6 0 6 5 7 0 7 5 SMO (sb ) * SMO (u b ) CN N 1 l ay er CN N 2 l ay er CN N 3 l ay er CN N 4 l ay er CN N 6 l ay er CN N 8 l ay er CN N 1 0 l ay er A verage Accuracy (%) IS12 IS11 IS10 IS09 Figure 2: Comparison of IS09, IS10, IS11, and IS12 feature sets using standar d CNN with sb and ub denote the subject-le vel and utterance-le vel based classification r espectively . where v c,d represent the element of V at position ( c, d ) . The V ∈ R K × F and e ∈ R are the kernel weight matrix and bias for the linear gate. For GCNN, we used N = 2 and applied the same padding as in [36]. After that, we halved the output length in a max-pooling layer [32]. Figure 1 shows the visualization of our GCNN with the depth = 1 where the depth represents the number of gated con volution layers. In the figure, one gated con volution layer lies between the dotted horizontal line which followed by the max-pooling layer . Deeper network consists of more gated con- volution layers. W e also defined the same layers after the max- pooling layer and used the same number of epochs as in Section 5.1. 5.3. Framework Ov erview While we need to classify each subject based on his/her whole data, we performed the classification for each utterance instead. This is based on our assumption that the information about a patient having AD or not can still be obtained from a smaller but appropriate length of a segment. After the utterance-based classification is performed, we make the final verdict for each subject based on the proportion of each class; we classified a subject into AD if he/she has AD percentage abov e the half. The utterance-le vel subject classification is expected to gi ve more detailed information about the symptoms while most of the previous studies conducted the subject-lev el classification [15, 3, 12, 13, 9, 16]. 6. Experimental Results W e used the av erage accuracy from the 10-fold cross validation scheme on Pitt Corpus [14] (see Section 3 for details) for eval- uating our method. Our first experiment was the employment 61 63 65 67 69 71 73 75 50 0 100 0 2000 400 0 4295 ) % ( y c a r u c c A e g a r e v A Utter an ce L en g th ( m s ) 6 La yer s 8 La yer s 10 La yer s Figure 3: GCNN with differ ent utterance length. T able 1: Comparison of the employment of the standar d CNN and GCNN by using IS10 featur e set r epresented in the aver age accuracy (%) fr om 10-fold cr oss validation. Depth Standard CNN Linear GCNN Utterance Subject Utterance Subject 1 64.2 66.0 62.2 66.2 2 64.2 66.6 62.6 68.7 3 64.2 69.2 61.9 66.4 4 64.9 68.7 63.3 68.9 6 65.5 68.6 65.1 72.2 8 66.1 69.0 66.3 73.6 10 65.2 70.4 65.2 69.8 of the standard deep CNN without gates on the four feature sets, IS09, IS10, IS11, and IS12. Figure 2 shows the classifi- cation result of AD/non AD with different numbers of hidden layers (1, 2, 3, 4, 6, 8, 10). The performance result given is from the subject-level classification after majority voting from the utterance-level classification. Since there is no established baseline for this database and the number of instances used are different from one experiment to another experiment, we giv e the result of the four feature sets with baseline methods, which is the subject-le vel classification using sequential minimal opti- mization (SMO) [19, 22]. In Figure 2, they are marked by a star symbol (*). From Figure 2, the best result is obtained when we use 10- layer CNN with IS10 feature set which is better than SMO by 2.4 points. Furthermore, we can see that the use of CNN with both IS11 and IS12 could not yield better result compared to using SMO while it can improve the performance over using IS09 and IS10. W e can also see that the IS10 feature set outper - formed the rest of the feature sets when we use CNN. When we compare between IS09 and IS10, we can see that IS10 co vers more features in the paralinguistic aspect of speech as it was used as the age-gender and le vel-of-interest classifica- tion. The performance of the feature sets IS09 was worse than that of IS10 especially when the subjects did not have any spe- cific emotion (neutral). Some emotions appeared at the begin- ning before the subject begins to describe the image, in the mid- dle when the subject begins to confuse with the things he/she wants to describe (laughs), and at the end after completing the task. Noticeable differences between IS09 and IS10 include the use of voicing probability in which might more represent the sound-silence pattern in the subjects. Further , jitter and shim- mer in IS10 might give more representation of the hesitation rate in the subjects. Those LLD are also appear in both IS11 and IS12. Howe ver , the higher dimension of the two feature sets might be too big for the input of the CNN. Next, we in vestigated the effectiv eness of the gating mech- anism. The experiment was carried out by using IS10 feature set. The comparison of the standard CNN and the gating mech- anism is shown in T able 1. From the table, we can see that the employment of linear gate improved the average accuracy from the 10-fold cross validation scheme into 73.6%. W e can see that the gating mechanism yields better result. Lastly , we in vestigated the importance of utterance length information in the classification performance. W e tried a set of different segment length L , which are 500ms, 1000ms, 2000ms, 4000ms, and also 4295ms which is the segment length of the longest utterance in the dataset. In this case, we segmented each subject data into segments with a predetermined length L without taking into consideration the oracle utterance length. Accordingly , the zero padding is added only for the last utter- ance segment of the subject if its length is less than L . The experiment was carried out by using the best scheme from the previous experiment, which is GCNN. W e only tried to use the gated CNN with the number of layers as 6, 8, and 10. As depicted in Figure 3, we can see that shorter segment length yields worse results. Howe ver , we can still get close results using the utterance length of 4000ms (69.1%, 70.8%, and 69.8% for 6, 8, and 10 layers respectiv ely) compared to the cases when we use the oracle utterance length (72.2%, 73.6%, and 69.8% for 6, 8, and 10 layers respecti vely). For 10 lay- ers, we got similar result between using the utterance length of 4000ms and the oracle utterance length with 69.8%. This result suggests that we can use the approach even if we do not hav e transcription. 7. Conclusion W e present our study in the non-linguistic approach for detect- ing AD by utilizing only the speech audio data. The employ- ment of GCNN yielded the best result of 73.6%. Since it does not utilize linguistic information, we can easily apply it to low- resource languages. There are still a lot of remaining tasks. In the near future, we will ev aluate our current approach on different languages especially on low-resource languages. Other possible directions include estimating the severity of the disease and also ev aluating its temporal change. 8. Acknowledgements This work was supported by JSPS KAKENHI 16H02845 and by JST CREST Grant Number JPMJCR1687, Japan. 9. References [1] A. Association, “2017 Alzheimer’ s disease facts and figures, ” vol. 13, 2017. [2] T . Y acoubian, “Neurodegenerati ve disorders: Why do we need new therapies?” pp. 1–16, 2017. [3] K. C. Fraser, J. A. Meltzer , and F . Rudzicz, “Linguistic fea- tures identify Alzheimers disease in narrative speech, ” Journal of Alzheimer’ s Disease , vol. 49, no. 2, pp. 407–422, 2000. [4] C. P . Ferri, M. Prince, C. Brayne, H. Brodaty , L. Fratiglioni, M. Ganguli, K. Hall, K. Hasega wa, H. Hendrie, Y . Huang et al. , “Global prev alence of dementia: a Delphi consensus study , ” The lancet , vol. 366, no. 9503, pp. 2112–2117, 2005. [5] A. Burns and S. Iliffe, “ Alzheimer’s disease, ” BMJ , vol. 338, 2009. [6] E. T . Prud’hommeaux and B. Roark, “Extraction of narrativ e re- call patterns for neuropsychological assessment, ” in T welfth An- nual Confer ence of the International Speech Communication As- sociation , 2011. [7] H. Martono, Buku Ajar Boedhi-Darmojo: Geriatri (Ilmu Kese- hatan Usia Lanjut) [Boedhi-Darmojo T extbook: Geriatrics (El- derly P eople Health Science)] . Balai Penerbit FKUI, 2000. [8] T . Manjari, V . Deepti et al. , “Reversible dementias, ” Indian Jour - nal of Psychiatry , v ol. 51, no. 5, pp. 52–55, 2009. [9] S. W ankerl, E. N ¨ oth, and S. Ev ert, “ An N -gram based approach to the automatic diagnosis of Alzheimers disease from spoken lan- guage, ” in Pr oc. INTERSPEECH 2017 , 2017. [10] Z. Huang, M. Dong, Q. Mao, and Y . Zhan, “Speech emotion recognition using CNN, ” in A CM Multimedia , 2014. [11] G. Keren and B. Schuller , “Conv olutional RNN: an enhanced model for extracting features from sequential data, ” 2016 Interna- tional Joint Conference on Neural Networks (IJCNN) , pp. 3412– 3419, 2016. [12] V . C. Zimmerer, M. Wibro w , and R. A. V arle y , “Formulaic lan- guage in people with probable Alzheimers disease: a frequency- based approach, ” Journal of Alzheimer’s Disease , vol. 53, no. 3, pp. 1145–1160, 2016. [13] S. O. Orimaye, J. S. W ong, K. J. Golden, C. P . W ong, and I. N. Soyiri, “Predicting probable Alzheimers disease using linguis- tic deficits and biomarkers, ” BMC bioinformatics , vol. 18, no. 1, p. 34, 2017. [14] J. T . Becker, F . Boiler, O. L. Lopez, J. Saxton, and K. L. McGo- nigle, “The natural history of Alzheimer’ s disease: description of study cohort and accuracy of diagnosis, ” Arc hives of Neur ology , vol. 51, no. 6, pp. 585–594, 1994. [15] A. Khodabakhsh, F . Y esil, E. Guner, and C. Demiroglu, “Evalua- tion of linguistic and prosodic features for detection of Alzheimers disease in T urkish conv ersational speech, ” EURASIP Journal on Audio, Speech, and Music Pr ocessing , vol. 2015, no. 1, p. 9, 2015. [16] R. Sadeghian, J. D. Schaffer , and S. A. Zahorian, “Speech pro- cessing approach for diagnosing dementia in an early stage, ” Proc. INTERSPEECH 2017 , pp. 2705–2709, 2017. [17] J. W einer , C. Herf f, and T . Schultz, “Speech-based detection of Alzheimer’ s disease in con versational german. ” pp. 1938–1942, 2016. [18] G. T osto, M. Gasparini, G. Lenzi, and G. Bruno, “Prosodic im- pairment in Alzheimer’ s disease: assessment and clinical rel- ev ance, ” The Journal of neuropsyc hiatry and clinical neuro- sciences , vol. 23, no. 2, pp. E21–E23, 2011. [19] B. Schuller, S. Steidl, and A. Batliner , “The INTERSPEECH 2009 emotion challenge, ” Pr oc. INTERSPEECH 2009 , pp. 312–315, 2009. [20] E. Kaplan, H. Goodglass, S. W eintraub, and S. Brand, Boston Naming T est . Lea & Febiger, 1983. [21] F . Eyben, F . W eninger, F . Gross, and B. Schuller, “Recent devel- opments in opensmile, the munich open-source multimedia fea- ture extractor , ” pp. 835–838, 2013. [22] B. Schuller, S. Steidl, A. Batliner, F . Burkhardt, L. De villers, C. M ¨ uller , and S. Narayanan, “The INTERSPEECH 2010 paralin- guistic challenge, ” Proc. INTERSPEECH 2010 , pp. 2794–2797, 2010. [23] B. Schuller , S. Steidl, A. Batliner , F . Schiel, and J. Krajewski, “The INTERSPEECH 2011 speaker state challenge, ” Proc. IN- TERSPEECH 2011 , 2011. [24] B. Schuller , S. Steidl, A. Batliner, E. N ¨ oth, A. V inciarelli, F . Burkhardt, R. v . Son, F . W eninger , F . Eyben, T . Bocklet et al. , “The INTERSPEECH 2012 speaker trait challenge, ” Pr oc. IN- TERSPEECH 2012 , 2012. [25] Y . LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W . Hubbard, and L. D. Jackel, “Backpropagation applied to hand- written zip code recognition, ” Neural computation , vol. 1, no. 4, pp. 541–551, 1989. [26] J. Gu, Z. W ang, J. Kuen, L. Ma, A. Shahroudy , B. Shuai, T . Liu, X. W ang, G. W ang, J. Cai et al. , “Recent advances in con volu- tional neural networks, ” P attern Recognition , vol. 77, pp. 354– 377, 2017. [27] I. Goodfellow , Y . Bengio, and A. Courville, Deep Learning . MIT Press, 2016, http://www .deeplearningbook.org. [28] Y . Bengio et al. , “Learning deep architectures for ai, ” F oundations and tr ends® in Machine Learning , vol. 2, no. 1, pp. 1–127, 2009. [29] R. H. Hahnloser , R. Sarpeshkar, M. A. Maho wald, R. J. Douglas, and H. S. Seung, “Digital selection and analogue amplification coexist in a cortex-inspired silicon circuit, ” Natur e , vol. 405, no. 6789, p. 947, 2000. [30] A. W aibel, T . Hanazawa, G. Hinton, K. Shikano, and K. J. Lang, “Phoneme recognition using time-delay neural networks, ” in Readings in speec h recognition . Elsevier , 1990, pp. 393–404. [31] S. Ioffe and C. Szegedy , “Batch normalization: Accelerating deep network training by reducing internal cov ariate shift, ” pp. 448– 456, 2015. [32] Y . Zhou and R. Chellappa, “Computation of optical flow using a neural network, ” v ol. 27, pp. 71–78, 1988. [33] D. P . Kingma and J. Ba, “ Adam: A method for stochastic opti- mization, ” arXiv pr eprint arXiv:1412.6980 , 2014. [34] Y . N. Dauphin, A. Fan, M. Auli, and D. Grangier , “Language modeling with gated conv olutional networks, ” pp. 933–941, 2017. [35] A. v . d. Oord, N. Kalchbrenner, L. Espeholt, O. V inyals, A. Graves et al. , “Conditional image generation with pixelcnn decoders, ” pp. 4790–4798, 2016. [36] A. v . d. Oord, S. Dieleman, H. Zen, K. Simonyan, O. V inyals, A. Grav es, N. Kalchbrenner , A. Senior, and K. Kavukcuoglu, “W a venet: A generative model for raw audio, ” arXiv pr eprint arXiv:1609.03499 , 2016.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment