An empirical approach to the relationship between emotion and music production quality

In music production, the role of the mix engineer is to take recorded music and convey the expressed emotions as professionally sounding as possible. We investigated the relationship between music production quality and musically induced and perceive…

Authors: David Ronan, Joshua D. Reiss, Hatice Gunes

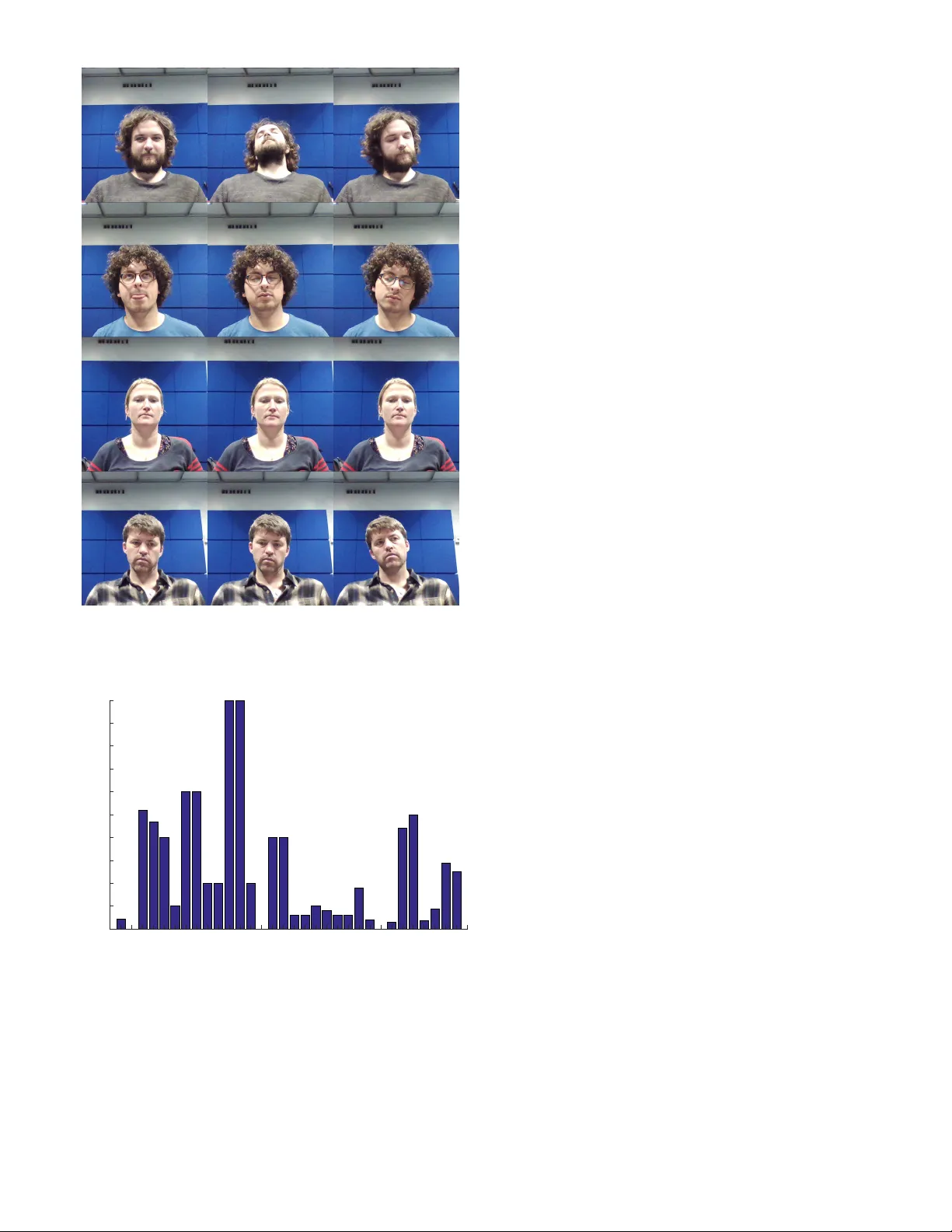

JOURNAL OF LATEX CLASS FILES

Abstract: In music production, the role of the mix engineer is to take recorded music and convey the expressed emotions as professionally sounding as possible. We investigated the relationship between music production quality and musically induced and perceived emotions. A listening test was performed where 10 critical listeners and 10 non-critical listeners evaluated 10 songs. There were two mixes of each song, the low quality mix and the high quality mix. Each participant's subjective experience was measured directly through a questionnaire and indirectly by examining peripheral physiological changes, changes in facial expressions and the number of head nods and shakes they made as they listened to each mix. We showed that music production quality had more of an emotional impact on critical listeners. Also, critical listeners had significantly different emotional responses to non-critical listeners for the high quality mixes and, to a lesser extent, the low quality mixes. The findings suggest that having a high level of skill in mix engineering only seems to matter in an emotional context to a subset of music listeners.

Index Terms: Facial Expression Analysis, Head Nod/Shake Detection, Physiological Measures, Mix Preference, Audio Engineering, Musically Induced and Perceived Emotions

1 INTRODUCTION

There are a number of stages when it comes to producing music for mass consumption. The first step is to record a musical performance using specific microphone placement techniques in a suitable acoustic space. In the post-production stage, the mix engineer combines the recordings through mixing and editing to achieve a final mix. Predominantly, the more skilled the mix engineer is, the better the final mix sounds in terms of production quality.
• D. Ronan is with the Centre for Intelligent Sensing, Queen Mary University of London, UK. E-mail: d.m.ronan@qmul.ac.uk
• J.D. Reiss is with the Centre for Digital Music, Queen Mary University of London, UK. E-mail: joshua.reiss@qmul.ac.uk
• H. Gunes is with the Computer Laboratory, University of Cambridge, UK. E-mail: hatice.gunes@cl.cam.ac.uk

The mixing of audio involves applying signal processing techniques to each recorded audio track, whereby the engineer manipulates the dynamics (balance and dynamic range compression), spatial (stereo or surround panning and reverberation), and spectral (equalisation) characteristics of the source material. Once the final mix has been created, it is sent to a mastering studio where additional processing is applied before it can be distributed for listening in a home or a club environment [1].

There have been several studies that have looked at why people prefer certain mixes over others. [2], [3] conducted a mix experiment where groups of nine mix engineers were asked to mix 10 different songs. The mixes were evaluated in a listening test to infer the quality as perceived by a group of trained listeners. Mix preference ratings were correlated with a large number of low level features in order to explore if there was any relationship, but the findings indicated that, in this particular case, there were no significantly strong correlations.

In [4], we analysed the same tracks used in [2], [3] to ascertain the impact of subgrouping practices on mix preference, where subgrouping involves combining similar instrument tracks for processing and manipulation. We looked at the quantity of subgroups and the type of subgroup effect processing used for each mix, then correlated these findings with mix quality ratings to see the extent of the relationship [5]. [6] claimed that audio production quality is linked to perceived loudness and dynamic range compression.
It also demonstrated that a participant's expertise is not a strong factor in assessing audio quality or musical preference.

To our knowledge, there have been no previous studies that examined the relationship between music production quality and emotional response. This represents a new area of research in music perception and emotion that we intend to explore. In [7], three of the mix engineers that were interviewed mentioned the importance of emotion in the context of mixing and producing music. This indicates that emotion plays a significant role in how a mix engineer tries to achieve a desired mix. [8] states that dynamic contrast in a piece of music has been heralded as one of the most important factors for conveying emotion.

The purpose of the current study is to determine the extent of the link between music production quality and musically induced and perceived emotions. The participants in this study listened to low and high quality mixes (rated in [2], [3]) of the same musical piece. We then measured each participant's subjective experience, peripheral physiological changes, changes in facial expressions, and head nods and shakes as they listened to each mix.

The rest of the paper is organised as follows. Section 2 provides the background to this study with respect to musically induced vs. perceived emotions, psychological emotion models and measuring emotional responses to music. Section 3 provides the methodology used to conduct this experiment. Section 4 presents the results and subsequent analysis. Section 5 discusses the results, Section 7 proposes future work and the paper is concluded in Section 6.

2 BACKGROUND

2.1 Musically Induced vs. Perceived Emotions

In the study of emotion and music listening, induced emotions are those experienced by the listener and perceived emotions are those conveyed in the music, though perceived emotions may also be induced [9], [10], [11].
A listener's perception of emotional expression is mainly related to how they perceive and think about a musical process, in contrast to their emotional response to the music where someone experiences an emotion [11].

Perceived emotion in music can be provoked in a number of ways. It can be associated with the metrical structure of the music, or how a certain song might be perceived as happy or sad because of the chords being played [9]. Numerous studies have shown that any increase in tempo/speed, intensity/loudness or spectral centroid causes higher arousal. These studies have been summarised in [12], where tempo, loudness and timbre were shown to have an impact on how other typical ‘musical’ variables such as pitch and the major-happy minor-sad chord associations are perceived.

The most complete framework of psychological mechanisms for emotional induction is in [13] and its extensions [14], [15]. Until that point, most research in that area had been exploratory, but Juslin et al. posited a theoretical framework of eight different cognitive mechanisms known as BRECVEMA. The eight mechanisms are as follows:

• Brain stem reflex is a hard-wired primordial response that humans have to sudden loud noises and dissonant sounds. A reason given for the brain stem reflex reaction is the dynamic changes in music [15]. This particular mechanism might be related to music production in terms of a recording having good dynamics. A mix that has sudden large bursts in volume should arouse the listener more.
• Rhythmic entrainment is when the listener's internal body rhythm adjusts to an external source, such as a drum beat. This may relate to music production in a similar way as the brain stem reflex, i.e., if the drums in a musical production are loud and have a clear pulse, the listener may be more aroused.
• Evaluative conditioning occurs because a piece of music has been paired repeatedly with a positive or negative experience and an emotion is induced.
• Emotional contagion is when the listener perceives an emotional expression in the music and mimics the emotions internally [16]. This may mean that a better quality mix conveys the emotion in music more clearly than a poorer quality mix, e.g. vocals or lead guitar are more audible in one mix than in the other.
• Visual imagery may occur when a piece of music conjures up a particularly strong image. This could potentially have negative or positive valence and has been linked to feelings of pleasure and deep relaxation [15].
• Episodic memory is when music triggers a particular memory from a listener's past life. When a memory is triggered, so is an attached emotion [13]. A mix engineer might use a certain music production technique from a specific era, which may trigger nostalgia in the listener.
• Musical expectancy is believed to be activated by an unexpected melodic or harmonic sequence. The listener will expect musical structure to be resolved, but suddenly it is violated or changes in an unexpected way [16].
• Aesthetic judgement is the mechanism that induces ‘aesthetic emotion’ such as admiration and awe. This may play a part in music production quality by enhancing musically induced emotions. How well a song has been mixed can be judged on the artistic skill involved as well as how much expression is in the mix. A poor mix is not typically going to be as expressive as a well constructed mix.

How both perceived and induced emotions in music relate to music production quality is an area of music and emotion that has not yet been explored. For both induced and perceived musical emotions we have proposed a number of ways in which a mix engineer may have a direct effect, which we seek to capture from the listener through self-report, physiological measures, facial expression and body movement.

2.2 Psychological Models of Emotion

To describe musical emotions, three well-known models may be employed: discrete, dimensional and music specific.
The discrete or categorical model is constructed from a limited number of universal emotions such as happiness, sadness and fear [17], [18]. One criticism is that the basic emotions in the model are unable to describe many of the emotions found in everyday life and there is not a consistent set of basic emotions [19], [20].

Dimensional models consider all affective terms along broad dimensions. The dimensions are usually related to valence and arousal, but can include other dimensions such as pleasure or dominance [?], [21]. Dimensional models have been criticised for blurring the distinction between certain emotions such as anger and fear, and because participants cannot indicate they are experiencing both positive and negative emotions [11], [19], [20].

In recent years, a music-specific multidimensional model has been constructed. This is derived from the Geneva Emotional Music Scale (GEMS) and has been developed for musically induced emotions. It consists of nine emotional scales: wonder, transcendence, tenderness, nostalgia, peacefulness, power, joyful activation, tension and sadness [11], [22]. The scales have been shown to factor down to three emotional scales: calmness-power, joyful activation-sadness and solemnity-nostalgia [22], [23].

Empirical evidence [24], [25] suggests both discrete and dimensional models are suitable for measuring musically induced and perceived emotions [11]. [22] compared the discrete approach, the dimensional approach and the GEMS approach. It was found that participants preferred to report their emotions using the GEMS approach. Therefore, we adopted the GEMS approach as well as the dimensional model.

2.3 Measuring Emotional Responses to Music

We employed self-report, physiological measures, facial expression analysis and head nod-shake detection for measuring emotional responses to music.
2.3.1 Self-Report Methods

The most common self-report method to measure emotional responses to music is to ask listeners to rate the extent to which they perceive or feel a particular emotion, such as happiness. Affect is typically assessed using a Likert scale or by choosing a visual representation of the emotion the person is feeling. An example visual representation is the Self-Assessment Manikin [26], where the user is asked to rate the scales of arousal, valence and dominance based on an illustrative picture.

Another method is to present listeners with a list of possible emotions and ask them to indicate which one (or ones) they hear. Examples are the Differential Emotion Scale and the Positive and Negative Affect Schedule (PANAS). In PANAS, participants are requested to rate 60 words that characterise their emotion or feeling. The Differential Emotion Scale contains 30 words, 3 for each of the 10 emotions. These would be examples of the categorical approach mentioned previously [27], [28].

A third approach is to require participants to rate pieces on a number of dimensions. These are often arousal and valence, but can include a third dimension such as power, tension or dominance [19], [29].

Self-reporting leads to concerns about response bias. Fortunately, people tend to be attuned to how they are feeling (i.e., to the subjective component of their emotional responses) [30]. Furthermore, Gabrielsson came to the conclusion that self-reports are “the best and most natural method to study emotional responses to music” after conducting a review of empirical studies of emotion perception [9]. One caveat with retrospective self-report is ‘duration neglect’ [31], where the listener may forget the momentary point of intensity of the emotion being measured.

We chose self-report in our experiment due to it being the most reliable measure according to [9].
GEMS-9 was used for measuring induced emotion and Arousal-Valence-Tension for perceived emotion. We selected GEMS-9 due to it being a specialised measure for self-report of musically induced emotions and Arousal-Valence-Tension due to it being a dimensional rather than categorical model.

2.3.2 Physiological Measures

Measures for recording physiological responses to music include heart or pulse rate, galvanic skin response, respiration or breathing rate and facial electromyography. Such measures have been used in recent papers [16], [32], [33].

High arousal or stimulative music tends to cause an increase in heart rate, while calm music tends to cause a decrease [34]. Respiration has been shown to increase in 19 studies on emotional responses to music [34]. These studies found differences between high- and low-arousal emotions but few differences between emotions with positive or negative valence.

One physiological measure that corresponds with valence is facial electromyography (EMG). EMG measurements of cheek and brow facial muscles are associated with processing positive and negative events, respectively [35]. In [36], each participant's facial muscle activity was measured while they listened to different pieces of music that were selected to cover all parts of the valence-arousal space. Results showed greater cheek muscle activity when participants listened to music that was considered high arousal and positive valence. Brow muscle activity increased in response to music that was considered to induce negative valence, irrespective of the arousal level.

Galvanic skin response (GSR) is a measurement of electrodermal activity or resistance of the skin [37]. When a listener is aroused, resistance tends to decrease and skin conductance increases [38], [39]. We used skin conductance measurements in our experiment as it has been used extensively in previous studies related to music and emotion [16], [32], [33], [34].
2.3.3 Facial Expression and Head Movement

The Facial Action Coding System (FACS) [40] provides a systematic and objective way to study facial expressions, representing them as a combination of individual facial muscle actions known as Action Units (AU). Action Units can track brow and cheek activity, which can be linked to arousal and valence when listening to music [36]. [41] examined how schizophrenic patients perceive emotion in music using facial expression, and [42] looked at the role of a musical conductor's facial expression in a musical ensemble. We were unable to find anything directly related to our research questions.

People move their bodies to the rhythms of music in a variety of different ways. This can occur through finger and foot tapping or other rhythmic movements such as head nods and shakes [43], [44]. In human psychology, head nods are typically associated with a positive response and head shakes with a negative one [45]. In one study, participants who gauged the content of a simulated radio broadcast more positively were more inclined to nod their head than those who performed a negatively associated head shaking movement [44], [46]. But for music, a head shake might be considered a positive response as this might simply be a rhythmic response.

We examined facial expression in this experiment since it had not been attempted before in music and emotion or music production quality research. Facial expression analysis is somewhat similar to facial EMG, so we should be able to link results to previous findings [34].

3 METHODOLOGY

3.1 Research questions and hypotheses

Our original hypothesis was that music production quality had a direct effect on the induced and perceived emotions of the listener. However, before we proceeded to the main study, we conducted a short pilot study on six participants, three of whom had critical listening skills.
The feedback from the pilot study indicated that training was required in order for participants to become familiar with the adjectives used to describe induced emotions. We also decided to track head nods and shakes, a typical response to musical enjoyment, based on a review of the recorded videos. Observation of potential differences between critical and non-critical listeners led us to revise our original hypothesis. It was refined to be that music production quality has more effect on the induced and perceived emotions of critical listeners than non-critical listeners.

3.2 Participants

Twenty participants were recruited from within the university. Fourteen were male and six female, and their ages ranged from 26 to 42 ($\mu = 30.4$, $\sigma^2 = 4.4$). Ten participants had critical listening skills, i.e., they knew what critical listening involved and had been trained to do so previously or had worked in a studio, while the other ten did not, i.e., they had no music production experience and were not trained in how to critique a piece of music. A pre-experiment questionnaire established the genre preference of participants, shown in Table 1, since some participants may have bias towards certain genres.

TABLE 1
Genre preference for participants

| Genre | No. of Participants |
|---|---|
| Rock/Indie | 15 |
| Dance/Electronic | 11 |
| Pop | 8 |
| Jazz | 6 |
| Classical | 4 |

3.3 Stimuli

Ten different songs were used, each with nine mixes (90 mixes in total). Songs were split into three study groups, where mixes for songs within a study group were created by 8 student mix engineers and their instructor, who was a professional mix engineer (the same professional mix engineer participated in Groups 1 and 2). These mixes were obtained from the experiment conducted in [2]. Mixes of a song had been rated for mix quality by all the members of the other study groups, so no one rated their own mix. Further details on how the stimuli were obtained can be found in [2].
For our experiment, we selected the lowest and highest quality mix of each song. Table 2 shows the names of each song, the song genre and which group mixed each song. Some song names had to be removed due to copyright issues, but the rest are available on the Open Multitrack Testbed [47]. All mixes were loudness normalised using the ITU-R BS.1770-2 specification [48] to avoid bias towards loud mixes.

TABLE 2
Song titles, song genres and mix groups. Songs in italics are not available online due to copyright restrictions.

| Song Name | Genre | Mixed By |
|---|---|---|
| Red to Blue - (S1) | Pop-Rock | Group 1 |
| Not Alone - (S2) | Funk | Group 1 |
| My Funny Valentine - (S3) | Jazz | Group 1 |
| Lead Me - (S4) | Pop-Rock | Group 1 |
| In the Meantime - (S5) | Funk | Group 1 |
| - (S6) | Soul-Blues | Group 2 |
| No Prize - (S7) | Soul-Jazz | Group 2 |
| - (S8) | Pop-Rock | Group 2 |
| Under a Covered Sky - (S9) | Pop-Rock | Group 2 |
| Pouring Room - (S10) | Rock-Indie | Group 3 |

3.4 Measurements

3.4.1 Physiological Measures

To measure skin conductance we used small (53 mm x 32 mm x 19 mm) wireless GSR sensors developed by Shimmer Research. The GSR module was placed around the wrist of each participant's usually inactive hand, with electrodes strapped to the index and middle fingers. ECG measurements were attempted but discarded due to extreme noise levels in the data, at least partly because participants moved in the rotatable chair provided.

3.4.2 Facial Expression and Head Nod-Shake

To record video for facial expression and head nod/shake detection, we used a Lenovo 720p webcam that was embedded in the laptop used to perform the experiment. Figure 1 shows the automatic facial feature tracking for one of our participants.

Fig. 1. Facial features tracked for detecting facial action units during music listening.

3.4.3 Self-Report

After listening to each piece of music, participants used GEMS-9 to rate the emotions induced while listening.
This was done using a 5-point Likert scale ranging from ‘Not at all’ to ‘Very much’ based on 9 adjectives: wonder, transcendence, power, tenderness, nostalgia, peacefulness, joyful activation, sadness and tension. Each participant also rated the emotions they perceived in each song using three discrete (1-100) sliders for arousal, valence and tension. They were also asked to indicate how much they liked each piece of music they heard based on a 5-point Likert scale ranging from ‘Not at all’ to ‘Very much’.

3.4.4 User Interface

The physiological measurements, self-report scores and video were recorded into a bespoke software program developed for the experiment. It was designed to allow the experiment to run without the need for assistance, and the graphical user interface was designed to be as aesthetically neutral as possible.

3.4.5 Pre- and Post-Experiment Questionnaires

We provided pre- and post-experiment questionnaires. The pre-experiment questionnaire asked simple questions related to age, musical experience, music production experience, music genre preference and critical listening skills. There was also a question clarifying each participant's emotional state as well as how tired they were when they started the study. If any participant indicated that they were very tired, we asked them to attempt the experiment at a later time once rested.

The post-experiment questionnaire asked whether participants could hear an audible difference between the two mixes of each song, whether there was any difference in emotional content between the two mixes of each song, and whether there was any difference in the induced emotions between the two mixes of each song. These were all asked on a 5-point Likert scale ranging from ‘Not at all’ to ‘Very much’.

3.5 Setup

The experiment took place in a dedicated listening room at the university.
The room was very well lit, which was important for facial expression analysis and head nod/shake detection. Each participant was seated at a studio desk in front of the laptop used for the experiment. The audio was heard over a pair of studio quality loudspeakers, and the participant could adjust the volume of the audio to a comfortable level. Figure 2 shows the room in which the experiment was conducted.

Fig. 2. Studio space where the experiment was conducted.

3.6 Tasks

After the pre-experiment questionnaire, we trained each participant in how the interface worked. They were supervised while they listened to two example songs and were shown how to answer each question. Each participant was then asked to relax and listen to the music as they would at home for enjoyment.

Next, three minutes of relaxing sounds were played to each participant in order to get an emotional baseline. They then had to click play in order for one of the mixes to be heard; the order in which mixes were presented was randomised. While the music was playing, GSR measurements and facial and head movements were recorded. Once the music finished, each participant rated the induced emotions using GEMS-9. They then rated perceived emotions on the Arousal-Valence-Tension scale and rated how much they liked each mix. Once answers were submitted, another 30 seconds of relaxing sounds were played for an emotional baseline and the same procedure was repeated for the next mix. The participant was updated on their progress throughout the experiment via the software. Finally, the participant filled out the post-experiment questionnaire and the experiment was concluded. This process is illustrated in Figure 3.

3.7 Data Processing

Skin conductance response (SCR) has been shown to be useful in analysis of GSR data [49], [50]. We used Ledalab 5 to extract the timing and amplitude of SCR events from the raw GSR data (sampled at 5 Hz) using Continuous Decomposition Analysis (CDA) [51].
Interpolation was performed and the mean, standard deviation, positions of maxima and minima, and number of extrema divided by task duration were calculated from the SCR amplitude series for each mix [49], [52]. GSR data of one critical listener was discarded due to poor electrode contact.

We extracted head nod events, head shake events, arousal, expectation, intensity, power and valence from each video clip using the method introduced in [53]. Each 20 frames (0.8 sec) of video provided a value for each of these features. Head nod and head shake events are binary values, while the rest of the features are continuous values. We extracted the total head shake and head nod events and took average and standard deviation values for the rest of the features for each video clip.

Intensity values (0-1) of eight AUs (see Table 3) were extracted every five frames (0.2 sec) for each video, using the method of [54]. We calculated the average and standard deviation values of each AU for each video clip.

TABLE 3
Extracted Action Units

| AU Number | FACS Name |
|---|---|
| AU1 | Inner brow raiser |
| AU2 | Outer brow raiser |
| AU4 | Brow lowerer |
| AU12 | Lip corner puller |
| AU17 | Chin raiser |
| AU25 | Lip raiser |
| AU28 | Lip suck |
| AU45 | Blink |

4 EXPERIMENTS AND RESULTS

Table 4 summarises the conditions tested in our experiment. In conditions C1, C2, C5 and C6, we constrained listener type and tested if there was a statistical difference in emotional response ratings and scores based on mix quality. In conditions C3, C4, C7 and C8 we constrained mix quality type and tested if there was a statistical difference in emotional response ratings and scores based on critical listening skills.

We used two types of weightings for ratings and scores, similar to the approaches in [55], [56], [57]. The audible difference weighting was used in conditions C1 - C4. It weighted participant results by how much they indicated they could hear an audible difference between the high and low quality mix types.
The perceived emotional difference weighting was used in conditions C5 - C8, based on how much participants could perceive an emotional difference between the high and low quality mixes. Weights were calculated based on each participant's response to questions asked in the post-experiment questionnaire. Each participant indicated on a Likert scale how much they could perceive an audible difference between the two mixes of each song and to what extent they could perceive an emotional difference between the mixes of each song. Weighting was applied as

$$W_R = O_R \frac{D_X}{N},$$

where $O_R$ is the original and $W_R$ the weighted result, $D_X$ is the Likert value for either perceived audible difference or perceived emotional difference, and $N$ is the number of points used in the Likert scale.

[Figure 3: flow diagram. Pre-experiment Questionnaire; Training (2 Songs); Baseline (3 mins); then, for each of the 20 mixes: Listen (ECG, GSR, FAUs + Nod-Shake), GEMS-9, A-V-T + Like, Baseline (30 secs); Post-experiment Questionnaire.]

Fig. 3. Tasks involved in the experiment.

In conditions C1, C2, C5 and C6 we used the Wilcoxon Signed Rank non-parametric statistical test because our data is ordinal and we have the same subjects in both datasets. In conditions C3, C4, C7 and C8 we used the Mann-Whitney U non-parametric statistical test because our data is ordinal and we are comparing the medians of two independent groups.

In each table in this section the results shown are p-values from the statistical tests for rejecting the null hypothesis, where the numbers in bold are significant ($p < 0.05$). We have not used the Bonferroni correction because the method is concerned with the general null hypothesis. In this instance, we are investigating how emotions and reactions vary along the many different dimensions tested [?].
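As an illustration of the weighting and of the independent-groups comparison described above, the following is a minimal sketch using only the Python standard library. It is not the analysis code used in the study: the function names, the default of a 5-point scale, and the use of a tie-corrected normal approximation for the p-value are all assumptions made for this example.

```python
from collections import Counter
from math import erfc, sqrt


def weight_result(original, likert_value, likert_points=5):
    """Apply W_R = O_R * D_X / N to one rating or score (names are illustrative)."""
    if not 1 <= likert_value <= likert_points:
        raise ValueError("Likert value must lie between 1 and likert_points")
    return original * likert_value / likert_points


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney_u(group_a, group_b):
    """Return (U for group_a, two-sided p) via a tie-corrected normal approximation."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a == 0 or n_b == 0:
        raise ValueError("both groups need at least one observation")
    pooled = list(group_a) + list(group_b)
    ranks = average_ranks(pooled)
    u_a = sum(ranks[:n_a]) - n_a * (n_a + 1) / 2
    n = n_a + n_b
    # Each run of t tied values reduces the variance of U.
    tie_term = sum(t**3 - t for t in Counter(pooled).values())
    variance = n_a * n_b / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance <= 0:  # every observation is identical
        return u_a, 1.0
    z = (u_a - n_a * n_b / 2) / sqrt(variance)
    return u_a, erfc(abs(z) / sqrt(2))
```

For example, a perceived-arousal rating of 80 from a participant who answered 4 on the 5-point audible difference question would be weighted to `weight_result(80, 4)`. With groups of ten listeners, an exact test may be preferable to the normal approximation used in this sketch.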
The data used for this analysis can be accessed at

4.1 GEMS-9

Table 5 compares the ratings for each of the GEMS-9 emotional adjectives on a song by song basis for conditions C1 to C4. We have removed any p-values that were not significant in order to make the tables easier to read. There are four statistically significant p-values for C1, in contrast to C2 where there are none. These occurred for two songs and for the emotions transcendence, tenderness, joyful activation and tension.

We see many more significant p-values for C3 and C4 than for C1 and C2. We have 47 significant p-values out of a possible 90 for C3 and 43 significant p-values out of 90 for C4. The largest number of significant p-values occurs for the emotions of nostalgia, peacefulness, joyful activation and sadness.

4.2 Arousal-Valence-Tension

Table 4.2 compares the ratings for the Arousal-Valence-Tension dimensions on a song by song basis for conditions C1 to C4. For C1, there are four statistically significant p-values for arousal, two for valence, and two for tension. This is in contrast to C2, where there is one significant p-value for arousal and one for valence. The significant p-values for C1 are related to six songs, in contrast to C2 where they are only related to one song.

For both C3 and C4, there are six significant p-values for arousal, all ten for valence and four for tension. The p-values for both are similar in terms of distribution over the dimensions, but they differ by song.

4.3 GSR

We compared the mean, standard deviation, positions of maxima and minima and frequency of event values for each participant's GSR data on a song by song basis. However, since there were few significant p-values we did not present the results in a table. This was also the only part of the experiment where we tested conditions C1 to C4 as well as conditions C5 to C8, as it was the only time these conditions gave a noticeable number of significant p-values.
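The five per-mix GSR summary values compared in this section could be computed along the following lines. This is a hedged sketch rather than the authors' implementation: the function name `scr_features` is hypothetical, "position" is taken to mean the sample index of the first global maximum or minimum, the standard deviation is the population form, and an extremum is counted only when an interior sample is strictly above or below both neighbours. None of these choices is specified in the text.

```python
from statistics import mean, pstdev


def scr_features(amplitudes, duration_s):
    """Summarise one mix's SCR amplitude series (definitions are assumptions)."""
    if len(amplitudes) < 3 or duration_s <= 0:
        raise ValueError("need at least three samples and a positive duration")
    # Count interior samples that are strict local peaks or troughs.
    extrema = sum(
        1
        for prev, cur, nxt in zip(amplitudes, amplitudes[1:], amplitudes[2:])
        if (cur > prev and cur > nxt) or (cur < prev and cur < nxt)
    )
    return {
        "mean": mean(amplitudes),
        "std": pstdev(amplitudes),
        "max_position": amplitudes.index(max(amplitudes)),
        "min_position": amplitudes.index(min(amplitudes)),
        "extrema_per_second": extrema / duration_s,
    }
```

Five features per song over ten songs gives the 50 p-values per condition that the counts in this section are reported against.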
When we tested C1 and C2, there were only 3 out of 50 statistically significant p-values for critical listeners and 3 out of 50 statistically significant p-values for non-critical listeners. Similar results occurred when we tested conditions C5 and C6. C3 gave 5 out of 50 statistically significant p-values for two songs, and there were 4 out of 50 for C4. When we tested condition C7, there were 9 out of 50 statistically significant p-values. This is in contrast to C8, where there were 2 out of 50 statistically significant p-values.

4.4 Head Nod and Shake

We compared Head Nod and Shake scores on a song by song basis. There were no statistically significant p-values for condition C1, and only 2 out of 70 p-values for C2 were statistically significant. The results for conditions C3 and C4 are summarised in Table 6. For C3, we have 31 significant p-values out of a possible 70. The largest number of significant p-values occurred for shake, expectation and power. C4 gave 35 significant p-values out of 70. The largest number of significant p-values occurred for shake, arousal and power.

4.5 Facial Action Units

We compared the standard deviation for each participant's Facial Action Unit scores on a song by song basis. We saw 3 out of 80 statistically significant p-values for condition C1, whereas C2 gave 7 out of 80 statistically significant p-values. Results for conditions C3 and C4 are summarised in Table 7. For condition C3, there were 23 significant p-values out of a possible 80, mainly for AU1, AU4 and AU45. For condition C4, we have 20 significant p-values out of 80, mostly from AU4 and AU45.

We also examined which AUs had the highest intensity throughout the experiment. We checked every mix that each participant listened to, to see if any of their average AU intensities was $\geq 0.5$. If the average AU intensity was $\geq 0.5$ we marked the AU for that particular mix with a 1, otherwise a 0.
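The marking rule just described, together with a percentage summary over the mixes a listener group heard, can be sketched as follows. The function names and the dictionary layout (AU label mapped to average intensity for one mix) are assumptions made for this illustration only.

```python
def mark_high_intensity(mean_intensities, threshold=0.5):
    """Map each AU's average intensity for one mix to 1 (>= threshold) or 0."""
    return {au: int(value >= threshold) for au, value in mean_intensities.items()}


def percentage_marked(marks_per_mix, au):
    """Percentage of mixes in which the given AU was marked 1."""
    if not marks_per_mix:
        raise ValueError("no mixes supplied")
    return 100.0 * sum(marks[au] for marks in marks_per_mix) / len(marks_per_mix)


# Hypothetical average intensities for two mixes heard by one listener.
mixes = [{"AU1": 0.62, "AU4": 0.50}, {"AU1": 0.31, "AU4": 0.49}]
marks = [mark_high_intensity(m) for m in mixes]
```

Here `percentage_marked(marks, "AU1")` gives the share of the two example mixes in which AU1 reached the threshold; pooling the marks of all listeners in a group gives a per-group figure of the kind summarised for critical and non-critical listeners.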
We summarised the results as a percentage of all the mixes listened to for critical listeners and non-critical listeners in Table 4.5. AU1 and AU4 gave the greatest number of average AU intensities ≥ 0.5. The results for AU12 and AU17 were omitted since all the results were 0. Critical listeners experienced a greater number of average AU intensities ≥ 0.5 than non-critical listeners for all AUs except AU28. However, the difference in the case of AU28 is 0.005, which is negligible.

TABLE 4
Different types of conditions tested

| Condition | Constrained | Varied | Weighting | Statistical Test |
|---|---|---|---|---|
| C1 | Critical Listener | High Quality Mix vs Low Quality Mix | Audible Difference | Wilcoxon Signed-Rank |
| C2 | Non-critical Listener | High Quality Mix vs Low Quality Mix | Audible Difference | Wilcoxon Signed-Rank |
| C3 | High Quality Mix | Critical Listener vs Non-Critical Listener | Audible Difference | Mann-Whitney U |
| C4 | Low Quality Mix | Critical Listener vs Non-Critical Listener | Audible Difference | Mann-Whitney U |
| C5 | Critical Listener | High Quality Mix vs Low Quality Mix | Emotional Difference | Wilcoxon Signed-Rank |
| C6 | Non-critical Listener | High Quality Mix vs Low Quality Mix | Emotional Difference | Wilcoxon Signed-Rank |
| C7 | High Quality Mix | Critical Listener vs Non-Critical Listener | Emotional Difference | Mann-Whitney U |
| C8 | Low Quality Mix | Critical Listener vs Non-Critical Listener | Emotional Difference | Mann-Whitney U |

TABLE 5
GEMS-9 - Audible Difference Weighting for Conditions C1 to C4.
Columns: Wonder, Trans, Power, Tender, Nostal, Peace, Joyful, Sadness, Tension. Only significant p-values are listed for each song, in column order; blank cells are not preserved.

C1
S4 0.031 0.031
S7 0.031 0.031

C2
(no significant p-values)

C3
S1 0.030 0.042 0.014 0.043 0.011 0.023
S2 0.039 0.028 0.007 0.034
S3 0.024 0.005 0.041
S4 0.022 0.018 0.038 0.028 0.007 0.027
S5 0.042 0.031 0.035
S6 0.039 0.028 0.041 0.014
S7 0.006 0.038 0.038
S8 0.035 0.036 0.038 0.031 0.013 0.027
S9 0.027 0.043 0.030 0.008 0.035 0.042 0.017
S10 0.017 0.022 0.031 0.020 0.027

C4
S1 0.010 0.033 0.029 0.006 0.025 0.049
S2 0.011 0.023 0.009
S3 0.028 0.014 0.005 0.026
S4 0.039
S5 0.042 0.024
S6 0.034 0.010 0.018 0.028 0.020
S7 0.020 0.034 0.004 0.023
S8 0.017 0.015 0.045 0.021 0.007 0.006
S9 0.049 0.018 0.039 0.031
S10 0.004 0.016 0.041 0.006 0.011 0.008 0.007 0.032

5 DISCUSSION

5.1 Findings

5.1.1 GEMS-9

With GEMS-9 we investigated whether there was a significant difference in the distribution of induced emotions for each listener type. The results in Table 5 indicate that the critical listeners were the only group for which there were significant differences in the distribution of induced emotions between the two mix types. This suggests that our hypothesis holds. However, since there are so few significant p-values in comparison to the number of tests, we cannot draw a strong conclusion from this. Results also indicate that the high quality mixes produced more significant differences in the distribution of induced emotions between the two listener types.
These results support our hypothesis, in that the high quality mix had more of an emotional impact on one listener type than on the other. They also imply that there was a greater difference in the indicated levels of joyful activation and sadness between critical and non-critical listeners for the high quality mixes (C3). Joyful activation and sadness would be synonymous with positive and negative valence, implying that the quality of the mix may have an impact on how happy or sad a critical listener may feel.

Arousal-Valence-Tension - Audible Difference Weighting for Conditions C1 to C4.
Columns: A (arousal), V (valence), T (tension). Only significant p-values are listed for each song, in column order; blank cells are not preserved and songs with no significant p-values are omitted.

C1
S3 0.021
S4 0.002
S7 0.039
S8 0.035
S9 0.027 0.016
S10 0.016 0.031

C2
S2 0.047 0.039

C3
S1 0.019 0.013 0.045
S2 0.011
S3 0.004 0.021
S4 0.018
S5 0.008 0.017
S6 0.021 0.009 0.049
S7 0.002
S8 0.008 0.006 0.038
S9 0.009 0.002
S10 0.019 0.004

C4
S1 0.026 0.011
S2 0.007 0.005
S3 0.038 0.006
S4 0.014
S5 0.004 0.010 0.010
S6 0.005 0.026
S7 0.006
S8 0.011 0.021 0.041
S9 0.007 0.015 0.028
S10 0.013

5.1.2 Arousal-Valence-Tension

We investigated whether there was a significant difference in the distribution of emotions perceived by each listener type along the Arousal-Valence-Tension dimensions. Table 4.2 indicates that for critical listeners there are more cases of significant differences in the distribution of perceived emotions, especially with respect to arousal. This was the only time a noticeable number of significant p-values occurred when we compared the critical listeners' high quality mixes to the critical listeners' low quality mixes. This also occurred in the case of non-critical listeners (C2), but to a lesser extent.
These results support our hypothesis, in that critical listeners were able to perceive an emotional difference between the two mixes much more than non-critical listeners, mostly with respect to arousal and tension.

Table 4.2 showed many significant p-values for conditions C3 and C4 in comparison to C1 and C2. Interestingly, we have the same number of significant values in each dimension for both conditions C3 and C4. This implies that there is the same number of significant differences in the distribution of emotions for both listener types due to mix quality, but that it varies by song. The two listener types are perceiving different levels of arousal and tension, but on different songs. However, this may have something to do with the participants' genre preferences. These results are similar to those seen in Table 5 (iii) and (iv), in the respect that joyful activation corresponds to positive valence and sadness corresponds to negative valence.

5.1.3 GSR

Overall, GSR gave largely inconclusive results, except when we examined the responses of critical and non-critical listeners to high quality mixes (C3, C7). There is also a trend when we compare the results for C3 and C7 against the results for critical and non-critical listeners' low quality mixes (C4, C8). There are more significant results in this comparison than when comparing the responses of critical listeners to high and low quality mixes (C1, C5) against the responses of non-critical listeners to high and low quality mixes (C2, C6). We also saw this for GEMS-9 and Arousal-Valence-Tension. Thus, testing critical versus non-critical listener responses to high versus low quality mixes supported our hypothesis.

5.1.4 Head Nod and Shake

Head nod/shake results proved to be conclusive and supported our hypothesis. The difference in nodding is far more apparent for low quality mixes (C4) than high quality mixes (C3).
Notably, on low quality mixes, non-critical listeners nodded their heads more than critical listeners. This could mean that non-critical listeners might enjoy the mix regardless of mix quality. We also see something similar for arousal and power, where there are slightly more significant p-values for the low quality mixes than for the high quality mixes. Power, expectation and arousal seem to be divisive features when comparing the types of listeners. Power is based on the sense of control, expectation on the degree of anticipation and arousal on the degree of excitement or apathy [53]. These are features based on tracking emotional cues when conversing with someone, so it is interesting to see them having such an effect during music listening.

Having examined the participants' videos, we found that since they were sitting in a chair that could rotate, they sometimes moved the chair in time with the music. The classifier detected this as a head shake, which would normally be viewed as a negative response [45], but in this case it could indicate that the participant is engaged with the music and most likely enjoying it. It is also worth noting that music is very cultural and certain individuals might react differently than others with respect to head nods and shakes.

5.1.5 Facial Action Units

Results indicated that the high quality mixes had a greater effect than low quality mixes on the distribution of AU1 and AU4 between the two listener types. AU1 corresponds to the inner brow raiser and AU4 corresponds to brow lowering, so this is similar to research on facial EMG and music, where the brow is associated with the processing of negative events [35], [36]. AU45 corresponds to blinking. There is one more significant AU45 result for condition C4 than there is for condition C3, which might imply that there is a difference in intensity of blinking for critical and non-critical listeners. The percentage total of average AU intensities ≥ 0.5 for AU45 is small, but AU45 provided a large number of significant p-values in Table 7. This suggests that the differences in blink intensity between listener types may have been very subtle.

This is the first experiment of its kind that has looked at automatic facial expression recognition and tracking head nods/shakes in a music production quality context. By inspecting the videos we found that some participants were much more expressive in their face than others, or might be a lot more inclined to nod and shake their head than use facial expressions. Some critical listeners gazed left or right of the camera, closed their eyes while listening for a prolonged duration, placed their hand under their chin, looked down, looked up, moved their head back and forth, tilted their head or sucked their lip. For non-critical listeners, there were not as many AUs activated, except in one case where the participant was looking away, moving their body on the chair left and right, moving their head back and forth and moving their head left and right. Some stills from the videos can be seen in Figure 4, where the top two participants are critical listeners and the bottom two are non-critical listeners.

Fig. 4. Still images of four participants from the videos made during the experiment. Top two rows are critical listeners and the bottom two are non-critical listeners.

TABLE 6
Head Nod and Shake - Audible Difference Weighting for Conditions C3 and C4.
Columns: Nod, Shake, Arousal, Expectation, Intensity, Power, Valence. Only significant p-values are listed for each song, in column order; blank cells are not preserved.

C3
S1 0.023 0.041 0.006 0.006 0.006
S2 0.017 0.034
S3 0.002 0.009 0.004 0.017 0.000
S4 0.009 0.002
S5 0.026 0.006 0.006
S6 0.013 0.026 0.038
S7 0.014
S8 0.011 0.021 0.009 0.031
S9 0.005 0.001 0.001 0.002 0.003
S10 0.002

C4
S1 0.005 0.026
S2 0.006 0.010 0.009 0.010
S3 0.028 0.014
S4 0.045 0.007
S5 0.034 0.036 0.017
S6 0.007 0.038 0.031 0.045 0.031
S7 0.005 0.007 0.011 0.005
S8 0.001 0.017 0.000 0.021 0.004
S9 0.017 0.017 0.021 0.034
S10 0.006 0.028 0.023 0.013

TABLE 7
FACS - Audible Difference Weighting for Conditions C3 and C4.
Columns: AU1, AU2, AU4, AU12, AU17, AU25, AU28, AU45. Only significant p-values are listed for each song, in column order; blank cells are not preserved.

C3
S1 0.011 0.021
S2 0.038 0.006
S3 0.026 0.038
S4 0.026 0.014 0.038
S5 0.045 0.007
S6 0.004 0.045
S7 0.038 0.006 0.011 0.031
S8 0.038 0.004 0.026
S9 0.038 0.014
S10 0.021

C4
S1 0.009 0.045
S2 0.031 0.045 0.045
S3 0.003 0.007
S4 0.009 0.038
S5 0.006
S6 0.002 0.031 0.011
S7 0.021
S8 0.045 0.014 0.011
S9 0.009
S10 0.006 0.026

Percentage of mixes where average AU intensity was ≥ 0.5. (i) Non-critical listeners (ii) Critical listeners
Columns: AU1, AU2, AU4, AU25, AU28, AU45. Only non-zero entries are listed for each participant, in column order; blank cells are not preserved.

(i)
A 0.9
B 0.85 0.85
C 0.55 0.7
D
E 0.05 0.95 0.05
F 0.75 0.05
G 0.25 0.55
H 1 0.75 0.05
I 0.75 0.7 0.05
J 0.85

(ii)
K 1 1
L 0.95 0.05 0.25 0.25 0.1
M 0.1 0.95 0.2
N 0.55 0.35
O 1
P 0.9 1
Q 0.45 1
R 0.2 0.35 0.1 0.15
S 0.75 0.45 1
T 0.8 0.05

| Total % | AU1 | AU2 | AU4 | AU25 | AU28 | AU45 |
|---|---|---|---|---|---|---|
| (i) Non-critical listeners | 0.43 | 0.005 | 0.61 | 0.01 | 0.005 | 0.005 |
| (ii) Critical listeners | 0.57 | 0.05 | 0.695 | 0.035 | 0 | 0.045 |

5.2 Measures

Self-report measures proved to be the most revealing when comparing mixes and when comparing listener types. We expected the GSR results to be more telling, but found them to be mostly inconclusive. This might have been due to noise in the data as a result of poor electrode contact, which is similar to what happened in [33].

The values for the AUs only became interesting when we looked at the standard deviation. This is expected, since someone who is more excited by music tends to be more expressive in their face as the music is played. Head nod/shake detection proved to be very interesting when comparing the types of listeners. Non-critical listeners nodded their heads more than critical listeners when listening to the poor quality mix, which was something we decided to analyse based on our initial findings in the pilot study.

[Figure 5 axes: x-axis, Statistical Test (GEMS9, AROUSAL, VALENCE, TENSION, GSRAUD, GSREMO, NOD-SHK and FAU, each for C1 to C4); y-axis, Percentage of Significant Results, 0 to 1]

Fig. 5. The percentage of significant results for each statistical test performed for each condition. The highest percentage of significant results occurred for GEMS9 (Felt emotion), Arousal-Valence-Tension (Perceived emotion), Head Nod/Shake and Facial Action Units.

5.3 Design

As beneficial as it was to have a pilot study, we learned a lot about experimental design from the main part of the experiment, which could be used to help future studies.

One participant reported that most of the emotions that music induces for them come from the lyrics. They reported that if they disliked the lyrics, then they tended to dislike the song, potentially leading to a negative emotional response or a lack of one. This aspect of music listening may have had an impact on the emotional responses of non-native English speakers. Ten of the participants were non-native speakers and may not have fully understood all lyrics, so this is a confounding variable we had not considered [?].

Recent research on perceptual evaluation of high resolution audio found that providing training before conducting perceptual experiments greatly improved the reliability of results [58]. In our experiment we provided two training songs, but these were only intended to familiarise participants with the experimental interface. However, it could be argued that training would have blurred the distinction between critical and non-critical listeners.

Ideally we would have used songs in the experiment that came from a wider variety of genres.
A number of participants were dissatisfied with the songs because they simply did not like the genre. However, this was out of our control, since we used songs rated in a previous experiment [3]. We would also have liked a bigger sample size for our experiment, to further generalise the results.

We would also suggest that each participant be seated on a chair that does not rotate or have wheels. When some participants were enjoying a song they tended to move around, which sometimes caused sensors to become dislodged and rendered the acquired data unusable.

6 CONCLUSION

Our exploratory study provides an insight into the relationship between music production quality and musically induced and perceived emotions. We highlighted some of the challenges of working with physiological sensors and conducting listening tests when trying to measure emotional responses in a musical context. We conducted the first experiment of its kind using facial expression analysis and head nod-shake detection in conjunction with a perceptual listening test.

When we tested to see if critical listeners and non-critical listeners had different emotional responses based on the difference in music production quality, the results were inconclusive for GSR, facial expression and head nod-shake detection. Results strongly agreed with our hypothesis only when we looked at the self-report of perceived emotion.

When we examined just high quality mixes and looked at the difference in emotions of critical and non-critical listeners, we found significant p-values in most cases. This was most evident for self-report, head nods/shakes and facial expression. When we examined low quality mixes and looked at the difference in emotions of critical and non-critical listeners, we also found many significant p-values, but to a lesser extent than for the high quality mixes.
This was also most evident for self-report, head nods/shakes and facial expression.

The results implied that emotion in a mix, whether induced or perceived, mattered the most to those with critical listening skills, which agrees with our hypothesis. This was most evident from the GEMS-9, Arousal-Valence-Tension, Head Nod/Shake Detection and Facial Action Unit results, since they had the largest number of significant p-values.

If one were to take a cynical view, it could be said that using a more professional and experienced mix engineer to mix a piece of music only really matters to those who have been trained to listen for mix defects, and that mix quality has little emotional bearing on the layperson. This is an important result for audio engineers, specifically in the context of automatic mixing systems. The perceived quality of an automatically generated mix may not be important to those without critical listening skills, which suggests that automatically generated mixes may be good enough for the general public.

7 FUTURE WORK

It would be interesting to perform pairwise ranking between the two mix types, as Likert scales may not be the best tool for affect studies since the values they ask people to rate may mean different things to each participant [59]. However, one argument against pairwise testing is that it is time consuming, e.g. for 10 samples, one might need 10*9/2 = 45 comparisons [60], [61].

It would also be interesting to see if we get similar results when non-critical listeners are provided with training before the experiment, i.e. trained to spot common mix defects. This would help identify whether the trained non-critical listeners exhibit emotions based on what they think is expected of them due to the training.

We would like to track whether a participant is singing along to the music being played, as this could be regarded as a measure of engagement and potential enjoyment of the music. This could be achieved by tracking the Action Units that correspond to the mouth, as well as having a microphone near the participant to verify whether they were actually singing. We would also recommend tracking foot or finger tapping, as this is a common form of movement to music [43]. This could be achieved by attaching accelerometers to the participant's feet and placing small piezo contact microphones on their fingertips.

We hope this work will inspire future research. In particular, there is a need to use more varied genres of music for evaluation and to see if emotional measures correlate well with low to high level audio features. This could potentially be used in automatic mixing systems such as [62], [63], [64], [65].

Acknowledgements: The authors would like to thank all the participants of this study and EPSRC UK for funding this research. We would also like to thank Elio Quinton, Dave Moffat and Emmanuel Deruty for providing valuable feedback.

REFERENCES

[1] A. U. Case, Mix smart. Focal Press, 2011.
[2] B. De Man, M. Boerum, B. Leonard, R. King, G. Massenburg, and J. D. Reiss, “Perceptual evaluation of music mixing practices,” in 138th Convention of the Audio Engineering Society, May 2015.
[3] B. De Man, B. Leonard, R. King, and J. D. Reiss, “An analysis and evaluation of audio features for multitrack music mixtures,” in 15th International Society for Music Information Retrieval Conference (ISMIR 2014), October 2014.
[4] D. Ronan, D. Moffat, H. Gunes, and J. D. Reiss, “Automatic subgrouping of multitrack audio,” in Proc. 18th International Conference on Digital Audio Effects (DAFx-15), 2015.
[5] D. Ronan, B. De Man, H. Gunes, and J. D. Reiss, “The impact of subgrouping practices on the perception of multitrack mixes,” in 139th Convention of the Audio Engineering Society, 2015.
[6] A. Wilson and B. M.
Fazenda, “Perception of audio quality in productions of popular music,” Journal of the Audio Engineering Society, 2015.
[7] P. Pestana and J. Reiss, “Intelligent audio production strategies informed by best practices,” in Audio Engineering Society Conference: 53rd International Conference: Semantic Audio, Audio Engineering Society, 2014.
[8] A. Ross, The rest is noise: Listening to the twentieth century. Macmillan, 2007.
[9] A. Gabrielsson, “Emotion perceived and emotion felt: Same or different?,” Musicae Scientiae, vol. 5, no. 1 suppl, pp. 123–147, 2002.
[10] T. Eerola and J. K. Vuoskoski, “A comparison of the discrete and dimensional models of emotion in music,” Psychology of Music, 2010.
[11] Y. Song, S. Dixon, M. T. Pearce, and A. R. Halpern, “Perceived and induced emotion responses to popular music,” Music Perception: An Interdisciplinary Journal, vol. 33, no. 4, pp. 472–492, 2016.
[12] A. Gabrielsson and E. Lindström, “The role of structure in the musical expression of emotions,” Handbook of music and emotion: Theory, research, applications, pp. 367–400, 2010.
[13] P. N. Juslin and D. Västfjäll, “Emotional responses to music: The need to consider underlying mechanisms,” Behavioral and brain sciences, vol. 31, no. 05, pp. 559–575, 2008.
[14] P. N. Juslin, S. Liljeström, D. Västfjäll, and L.-O. Lundqvist, “How does music evoke emotions? Exploring the underlying mechanisms,” in Handbook of music and emotion, pp. 605–642, Oxford Press, 2010.
[15] P. N. Juslin, “From everyday emotions to aesthetic emotions: towards a unified theory of musical emotions,” Physics of life reviews, vol. 10, no. 3, pp. 235–266, 2013.
[16] P. N. Juslin, L. Harmat, and T. Eerola, “What makes music emotionally significant? Exploring the underlying mechanisms,” Psychology of Music, p. 0305735613484548, 2013.
[17] P. Ekman, “An argument for basic emotions,” Cognition & emotion, vol. 6, no. 3-4, pp. 169–200, 1992.
[18] J. Panksepp, Affective neuroscience: The foundations of human and animal emotions. Oxford University Press, 1998.
[19] T. Eerola, O. Lartillot, and P. Toiviainen, “Prediction of multidimensional emotional ratings in music from audio using multivariate regression models,” in 10th International Society for Music Information Retrieval Conference (ISMIR 2009), pp. 621–626, 2009.
[20] J. A. Sloboda and P. N. Juslin, “Psychological perspectives on music and emotion,” 2001.
[21] J. A. Russell, “A circumplex model of affect,” Journal of personality and social psychology, vol. 39, no. 6, p. 1161, 1980.
[22] M. Zentner, D. Grandjean, and K. R. Scherer, “Emotions evoked by the sound of music: characterization, classification, and measurement,” Emotion, vol. 8, no. 4, p. 494, 2008.
[23] M. T. Pearce and A. R. Halpern, “Age-related patterns in emotions evoked by music,” 2015.
[24] G. Kreutz, U. Ott, D. Teichmann, P. Osawa, and D. Vaitl, “Using music to induce emotions: Influences of musical preference and absorption,” Psychology of music, 2007.
[25] S. Vieillard, I. Peretz, N. Gosselin, S. Khalfa, L. Gagnon, and B. Bouchard, “Happy, sad, scary and peaceful musical excerpts for research on emotions,” Cognition & Emotion, vol. 22, no. 4, pp. 720–752, 2008.
[26] M. M. Bradley and P. J. Lang, “Measuring emotion: the self-assessment manikin and the semantic differential,” Journal of behavior therapy and experimental psychiatry, vol. 25, no. 1, pp. 49–59, 1994.
[27] C. E. Izard, “Basic emotions, natural kinds, emotion schemas, and a new paradigm,” Perspectives on psychological science, vol. 2, no. 3, pp. 260–280, 2007.
[28] D. Watson, L. A. Clark, and A. Tellegen, “Development and validation of brief measures of positive and negative affect: the PANAS scales,” Journal of personality and social psychology, vol. 54, no. 6, p. 1063, 1988.
[29] O. Grewe, F. Nagel, R. Kopiez, and E. Altenmüller, “Emotions over time: Synchronicity and development of subjective, physiological, and facial affective reactions to music,” Emotion, vol. 7, no. 4, p. 774, 2007.
[30] P. G. Hunter and E. G. Schellenberg, “Music and emotion,” in Music perception, pp. 129–164, Springer, 2010.
[31] E. Schubert, “Continuous self-report methods,” Handbook of music and emotion: Theory, research, applications, vol. 2, pp. 223–253, 2010.
[32] H. Egermann, M. T. Pearce, G. A. Wiggins, and S. McAdams, “Probabilistic models of expectation violation predict psychophysiological emotional responses to live concert music,” Cognitive, Affective, & Behavioral Neuroscience, vol. 13, no. 3, pp. 533–553, 2013.
[33] E. Morgan, H. Gunes, and N. Bryan-Kinns, “Using affective and behavioural sensors to explore aspects of collaborative music making,” International Journal of Human-Computer Studies, vol. 82, pp. 31–47, 2015.
[34] D. Hodges, “Psychophysiological measures,” Handbook of music and emotion, pp. 279–312, 2010.
[35] G. E. Schwartz, S.-L. Brown, and G. L. Ahern, “Facial muscle patterning and subjective experience during affective imagery: Sex differences,” Psychophysiology, vol. 17, no. 1, pp. 75–82, 1980.
[36] C. V. Witvliet and S. R. Vrana, “Play it again Sam: Repeated exposure to emotionally evocative music polarises liking and smiling responses, and influences other affective reports, facial EMG, and heart rate,” Cognition and Emotion, vol. 21, no. 1, pp. 3–25, 2007.
[37] J. L. Andreassi, Psychophysiology: Human behavior & physiological response. Psychology Press, 2013.
[38] L.-O. Lundqvist, F. Carlsson, P. Hilmersson, and P. Juslin, “Emotional responses to music: experience, expression, and physiology,” Psychology of Music, 2008.
[39] M. Roy, J.-P. Mailhot, N. Gosselin, S. Paquette, and I. Peretz, “Modulation of the startle reflex by pleasant and unpleasant music,” International Journal of Psychophysiology, vol. 71, no. 1, pp. 37–42, 2009.
[40] J. C. Hager, P. Ekman, and W. V. Friesen, “Facial action coding system,” Salt Lake City, UT: A Human Face, 2002.
[41] A. Weisgerber, N. Vermeulen, I. Peretz, S. Samson, P. Philippot, P. Maurage, D. Catherine De Graeuwe, A. De Jaegere, B. Delatte, B. Gillain, et al., “Facial, vocal and musical emotion recognition is altered in paranoid schizophrenic patients,” Psychiatry research, 2015.
[42] B. A. Silvey, “The role of conductor facial expression in students evaluation of ensemble expressivity,” Journal of Research in Music Education, p. 0022429412462580, 2012.
[43] N. L. Wallin and B. Merker, The origins of music. MIT Press, 2001.
[44] P. Sedlmeier, O. Weigelt, and E. Walther, “Music is in the muscle: How embodied cognition may influence music preferences,” Music Perception: An Interdisciplinary Journal, vol. 28, no. 3, pp. 297–306, 2011.
[45] G. Tom, P. Pettersen, T. Lau, T. Burton, and J. Cook, “The role of overt head movement in the formation of affect,” Basic and Applied Social Psychology, vol. 12, no. 3, pp. 281–289, 1991.
[46] G. L. Wells and R. E. Petty, “The effects of overt head movements on persuasion: Compatibility and incompatibility of responses,” Basic and Applied Social Psychology, vol. 1, no. 3, pp. 219–230, 1980.
[47] B. De Man, M. Mora-Mcginity, G. Fazekas, and J. D. Reiss, “The Open Multitrack Testbed,” in 137th Convention of the Audio Engineering Society, October 2014.
[48] R. ITU-R, “ITU-R BS.1770-2, algorithms to measure audio programme loudness and true-peak audio level,” International Telecommunications Union, Geneva, 2011.
[49] J. Kim and E. André, “Emotion recognition based on physiological changes in music listening,” IEEE transactions on pattern analysis and machine intelligence, vol. 30, no. 12, pp. 2067–2083, 2008.
[50] K. H. Kim, S. Bang, and S. Kim, “Emotion recognition system using short-term monitoring of physiological signals,” Medical and biological engineering and computing, vol. 42, no. 3, pp. 419–427, 2004.
[51] M. Benedek and C. Kaernbach, “A continuous measure of phasic electrodermal activity,” Journal of neuroscience methods, vol. 190, no. 1, pp. 80–91, 2010.
[52] I. Daly, A. Malik, J. Weaver, F. Hwang, S. J. Nasuto, D. Williams, A. Kirke, and E. Miranda, “Towards human-computer music interaction: Evaluation of an affectively-driven music generator via galvanic skin response measures,” in Computer Science and Electronic Engineering Conference (CEEC), 2015 7th, pp. 87–92, IEEE, 2015.
[53] H. Gunes and M. Pantic, “Dimensional emotion prediction from spontaneous head gestures for interaction with sensitive artificial listeners,” in Intelligent virtual agents, pp. 371–377, Springer, 2010.
[54] S. Jaiswal and M. F. Valstar, “Deep learning the dynamic appearance and shape of facial action units,” Winter Conference on Applications of Computer Vision (WACV), 7-9 March 2016, Lake Placid, USA, 2016.
[55] M. Grimm and K. Kroschel, “Evaluation of natural emotions using self assessment manikins,” in Automatic Speech Recognition and Understanding, 2005 IEEE Workshop on, pp. 381–385, IEEE, 2005.
[56] E. Perez-Gonzalez and J. D. Reiss, “A real-time semiautonomous audio panning system for music mixing,” EURASIP Journal on Advances in Signal Processing, vol. 2010, no. 1, p. 436895, 2010.
[57] M. A. Nicolaou, H. Gunes, and M. Pantic, “Continuous prediction of spontaneous affect from multiple cues and modalities in valence-arousal space,” IEEE Transactions on Affective Computing, vol. 2, no. 2, pp. 92–105, 2011.
[58] J. D. Reiss, “A meta-analysis of high resolution audio perceptual evaluation,” Journal of the Audio Engineering Society, 2016.
[59] G. N. Yannakakis and J. Hallam, “Ranking vs. preference: a comparative study of self-reporting,” in International Conference on Affective Computing and Intelligent Interaction, pp. 437–446, Springer, 2011.
[60] R. Schatz, S. Egger, and K. Masuch, “The impact of test duration on user fatigue and reliability of subjective quality ratings,” Journal of the Audio Engineering Society, vol. 60, no. 1/2, pp. 63–73, 2012.
[61] P. L. Ackerman and R. Kanfer, “Test length and cognitive fatigue: An empirical examination of effects on performance and test-taker reactions,” Journal of Experimental Psychology: Applied, vol. 15, no. 2, p. 163, 2009.
[62] S. Hafezi and J. D. Reiss, “Autonomous multitrack equalization based on masking reduction,” Journal of the Audio Engineering Society, vol. 63, no. 5, pp. 312–323, 2015.
[63] J. D. Reiss, “Intelligent systems for mixing multichannel audio,” in Digital Signal Processing (DSP), 2011 17th International Conference on, pp. 1–6, IEEE, 2011.
[64] D. Ronan, D. Moffat, H. Gunes, and J. D. Reiss, “Automatic subgrouping of multitrack audio,” in Proc. 18th International Conference on Digital Audio Effects (DAFx-15), 2015.
[65] Z. Ma, B. De Man, P. D. Pestana, D. A. A. Black, and J. D. Reiss, “Intelligent multitrack dynamic range compression,” Journal of the Audio Engineering Society, 2015.
