Comprehending Real Numbers: Development of Bengali Real Number Speech Corpus

Speech recognition has received a less attention in Bengali literature due to the lack of a comprehensive dataset. In this paper, we describe the development process of the first comprehensive Bengali speech dataset on real numbers. It comprehends al…

Authors: Md Mahadi Hasan Nahid, Md. Ashraful Islam, Bishwajit Purkaystha

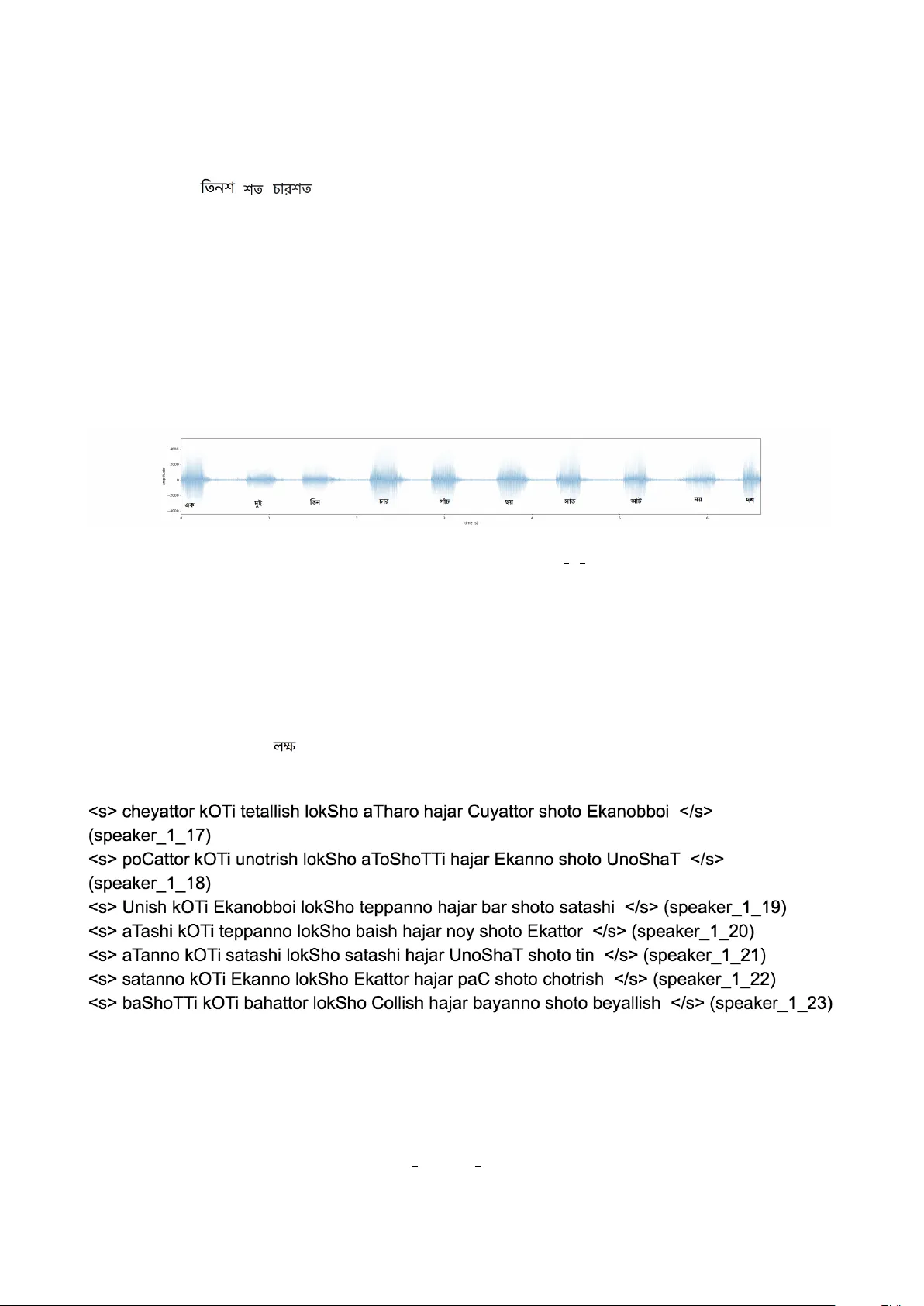

Comprehending Real Num b ers: Dev elopmen t of Bengali Real Num b er Sp eec h Corpus Md Mahadi Hasan Nahid *,1 , Md. Ashraful Islam *,2 , Bish wa jit Purk a ystha *,2 , and Md Saiful Islam *,1 * Departmen t of Computer Science and Engineering Shahjalal Univ ersity of Science and T ec hnology , Sylhet-3114. *,1 { nahid-cse,saiful-cse } @sust.e du *,2 { ashr afulcse.sust, bishwa420 } @gmail.c om Abstract Sp eec h recognition has receiv ed a less atten tion in Bengali literature due to the lac k of a comprehensiv e dataset. In this pap er, we describe the developmen t pro cess of the first comprehensiv e Bengali sp eec h dataset on real n umbers. It comprehends all the p ossible w ords that may arise in uttering an y Bengali real num b er. The corpus has ten sp eak ers from the different regions of Bengali nativ e p eople. It comprises of more than t w o thousands of sp eec h samples in a total duration of closed to four hours. W e also pro vide a deep analysis of our corpus, highlight some of the notable features of it, and finally ev aluate the p erformances of t wo of the notable Bengali speech recognizers on it. 1 In tro ductions Automatic Sp eech Recognition (ASR) capabilit y of machines is v ery imp ortan t as it reduces the cost of communication betw een h umans and mac hines. The recent resurgence in ASR [1, 2] is significan t and due to the large recurren t neural net works [3]. The neural netw orks are most useful when they hav e large datasets. The improv emen t in automatic sp eech recognition is not reflected in Bengali literature. This is due to a lack of comprehensive corpus in the literature. There are few corp ora in the literature [4], but they are not comprehensiv e, meaning that they do not encompass all the p ossible words in a sp ecific portion of the literature. In this pap er, we address this issue by describing the developmen t of a Bengali sp eec h corpus which encompasses all p ossible words that ma y app ear in a Bengali real n umber. W e call our corpus “Bengali real n umber sp eech corpus”. This corpus was developed to cov er all the words that could p ossibly inv olv e in an y Bengali real n umber. The corpus comprises the sp eec hes of sev eral Bengali nativ e sp eak ers from differen t regions. The summary of our corpus is given in T able 1. One of the limitations of our corpus is that its sp eakers are all male sp eak ers and the standard deviation in the ages of the sp eakers is relativ ely small. The vocabulary of the corpus, men tioned in T able 1, con tains all the Bengali n umbers from zero to h undred. Eac h n um b er from zero to hundred in Bengali is uttered in a singular wa y , except for the num b er ‘45’. It is uttered as b oth ‘/p/o/y/t/a/l/l/i/sh’ and ‘/p/o/y/c h/o/l/l/i/sh’. Therefore, 102 w ords in our vocabulary stand for n um b er 0 to n umber 1 T able 1: Bengali real num b er audio corpus summary Num b er of sp eakers 10 Age range 20-23 y ears Num b er of sp eech samples 2302 samples Duration 3.79 hours V o cabulary size 115 w ords Num b er of distinct phonemes 30 Av erage num b er of words p er sample ≈ 8 w ords T able 2: Sp ecial t welv e w ords # Bengali w ord English corresp ondence Bengali utterance 1 One h undred ekSho 2 Tw o hundred duiSho 3 Three h undred tinSho 4 F our h undred c harSho 5 Fiv e hundred pac hSho 6 Six h undred c hoySho 7 Sev en hundred satSho 8 Eigh t hundred aTSho 9 Nine h undred no ySho 10 Thousand ha jar 11 Lakh lokSho 12 Crore k oTi 13 Decimal doShomik 100. The details about the rest of tw elv e w ords are giv en in T able 2. All the w ords inv olv ed in uttering an y Bengali real num b er fall to either the category of first 102 w ords or to the category of the words men tioned in T able 2. The main motiv ation b ehind this w ork was to dev elop a balanced corpus, meaning that all unique words would o ccur with more or less an equal probability . This will b e useful for an y learning metho d mo deling the sp eech samples, but this may b e a shortcoming in terms of representing the actual prior probabilities of o ccurring the individual words. The prior probabilities were not considered b ecause we did not ha ve an y authen tic information about them. The rest of the pap er is organized as follo ws. In Section 2, w e describ e ho w w e ha ve developed the corpus. In the same section w e also describ e some k ey features of our corpus. In the next section, Section 3, we ev aluate tw o metho ds of automatic sp eech recognition on our corpus. And finally , in Section 4, we dra w the conclusion to our pap er. 2 Dev elopmen t of Bengali Real Num b er Sp eec h Dataset In this section, we giv e a detailed description of our developmen t pro cess and some key features of it. 2 2.1 Data Preparation W e dev elop ed the corpus in tw o steps. At first, w e had to decide what real n um b ers should b e present in the dataset. Therefore, we generated individual random strings containing the Bengali real n umbers. W e had also generated some strings that did not con tain the real num- b ers, rather, they only contained sequences of n umbers (lik e, “One Two Three ... T en”). The algorithm of generating the strings is giv en in Algorithm 1. Algorithm 1 String generation algorithm 1: pro cedure StringGenera tion 2: w 1 ← list of each w ord for num b ers from 0 to 99 . size = 101 3: w 2 ← list of each w ord for num b ers { 100,200,300, ..., 900 } . size = 9 4: w 3 ← { , , , } . { h undred, thousand, lakh, crore } 5: w 4 ← { } . { decimal } 6: N ← total n umber of strings to generate 7: counter ← 0 8: S ← {} . final set of generated strings to return 9: lo op outer : 10: L ← r and (2 , 4) . a random in teger in range [2,4] 11: L ← L × 2 . the string will hav e 4 to 8 w ords 12: i ← 0 13: s ← “” . generated string in this iteration 14: lo op inner : 15: i ← i + 2 16: r 1 ← r and (1 , 14) 17: if r 1 > 1 then 18: s ← s + w 1 [ r and (1 , l en ( w 1 ))] 19: else 20: s ← s + w 2 [ r and (1 , l en ( w 2 ))] 21: end if 22: r 2 ← r and (1 , 7) 23: if r 2 ≤ 6 or i = L then . the utterance should not end in ‘decimal’ 24: s ← s + w 3 [ r and (1 , l en ( w 3 ))] 25: else 26: s ← s + w 4 [ r and (1 , l en ( w 4 ))] 27: end if 28: if i < L then 29: goto lo op inner 30: add s to S 31: counter ← counter + 1 32: if counter < N then 33: goto lo op outer 34: end if 35: return S In the algorithm, w e made tw o clusters (i.e., { w 1 , w 2 } and { w 3 , w 4 } ) to comprehend all the w ords in the v o cabulary . W e constricted the length of the strings to b e either four, or six, or eigh t. A string can b e logically split in to a num b er of groups (dep ending on the length of the string) where eac h group has exactly tw o words. The first w ord comes from either w 1 or w 2 and the last word comes from either w 3 or w 4 . This reflects the real w orld scenario. The 3 w ord ‘decimal’ do es not app ear as the final w ord in the sen tence, so we hav e restricted it from app earing as the final w ord in line n umber 23 in the algorithm. Th us, we ha ve generated the strings for our corpus. All the generated strings are grammatically v alid, but still, there can b e a very few strings which are semantically inv alid. F or example, a seman tically inv alid string ma y be like: ‘ ’. F or suc h strings, we hav e also incorp orated them in our corpus as these strings should not imp ede the learning pro cess of a recognizer. After generating the strings, a set of different recording en vironments was set. This included lab ro oms, class ro oms, closed ro oms, and etc. The noise in the en vironmen t was kept to a minim um lev el. The v olun teers w ere giv en the scripts (which con tained the randomly generated strings) and were asked to read out in front of a microphone. Their sp eeches were recorded and filtered using a band-pass filter with the cutoff frequencies 300 Hz and 3 KHz. Finally , we stored the filtered sp eech samples in “.wa v” file format with the original bit rate of 256 kbps. A sample sp eech, in its final represen tation, is shown in Figure 1. Figure 1: Con ten ts of file speaker 1 1.wav 2.2 Data Organization 2.2.1 T ranscripts of the Sp eech Samples Although we hav e mentioned b efore that w e had not taken actual prior probabilities in to accoun t, but still we made sure that an y imp ossible com bination of the w ords do es not occur. F or example, the word nev er o ccurs at the b eginning of any sp eec h sample throughout the corpus. Figure 2 sho ws a snapshot of the con ten ts of text-data.txt file of the corpus. This file Figure 2: T ranscript file: text-data.txt con tains transcripts of all the sp eec h samples. Eac h line contains the transcript of one sp eec h sample. Each line has mainly tw o important parts. The actual con tent contained in the audio file is transcrib ed within the

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment