MTGAN: Speaker Verification through Multitasking Triplet Generative Adversarial Networks

In this paper, we propose an enhanced triplet method that improves the encoding process of embeddings by jointly utilizing generative adversarial mechanism and multitasking optimization. We extend our triplet encoder with Generative Adversarial Netwo…

Authors: Wenhao Ding, Liang He

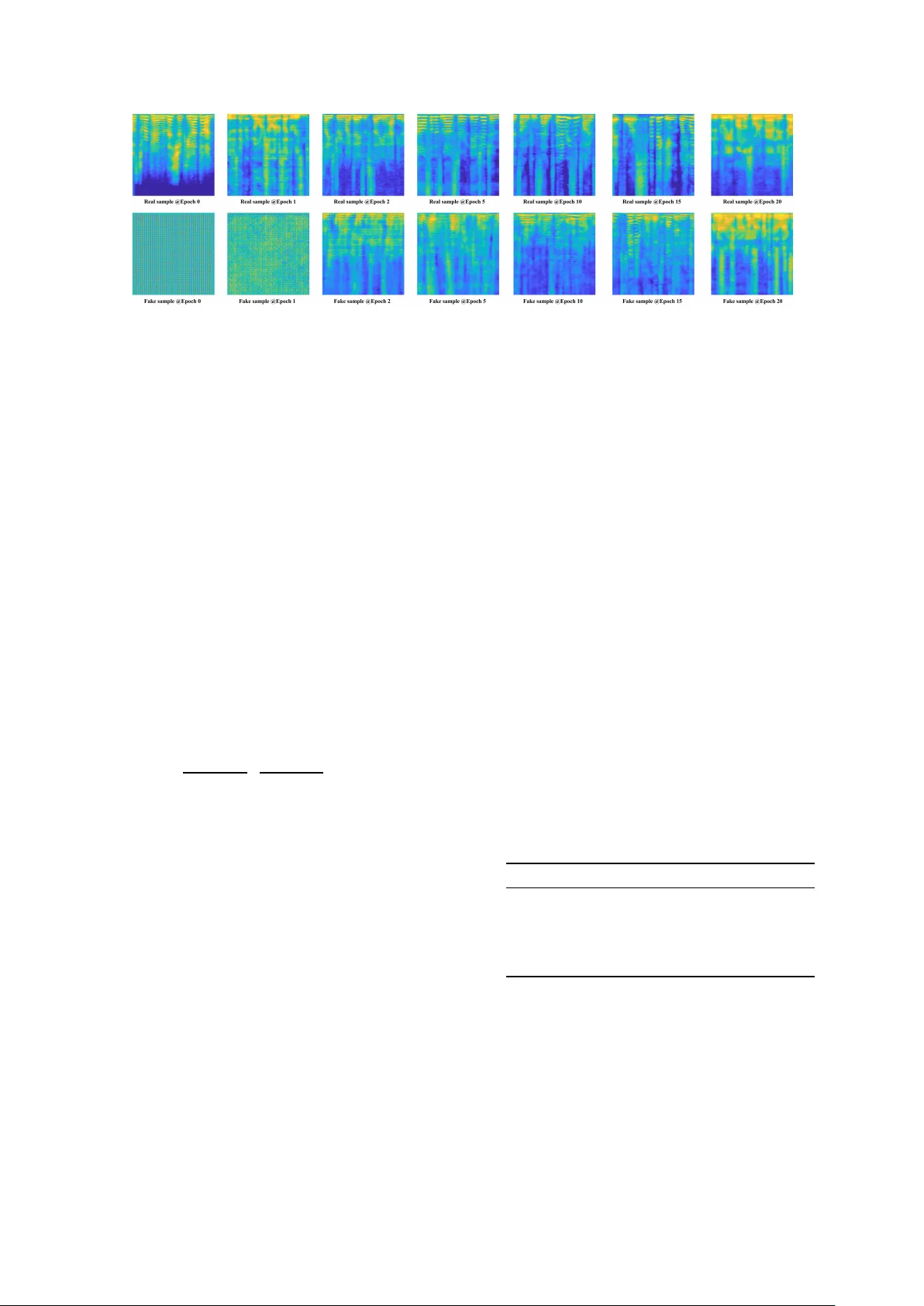

Wenhao Ding and Liang He
Department of Electronic Engineering, Tsinghua University, China
dwh14@mails.tsinghua.edu.cn, heliang@mail.tsinghua.edu.cn

Abstract

In this paper, we propose an enhanced triplet method that improves the encoding process of embeddings by jointly utilizing a generative adversarial mechanism and multitasking optimization. We extend our triplet encoder with Generative Adversarial Networks (GANs) and a softmax loss function. The GAN is introduced to increase the generality and diversity of samples, while the softmax loss reinforces speaker-related features. For simplicity, we term our method Multitasking Triplet Generative Adversarial Networks (MTGAN). Experiments on short utterances demonstrate that MTGAN reduces the verification equal error rate (EER) by 67% (relative) and 32% (relative) over the conventional i-vector method and the state-of-the-art triplet loss method, respectively. This indicates that MTGAN outperforms triplet methods in expressing high-level speaker information.

Index Terms: generative adversarial networks, speaker verification, triplet loss

1. Introduction

Automatic Speaker Verification (ASV) refers to the process of identifying the speaker's ID of an unknown utterance given a registered voice database. As an important non-contact biometric identification technique, it has been widely studied [1, 2, 3, 4]. In the past few years, i-vector/PLDA [2, 5] has become the mainstream approach in ASV. However, many works have found that end-to-end systems built from Deep Neural Networks (DNNs) surpass traditional methods in some respects, especially under short-utterance conditions. Moreover, speaker verification on short speech is of great practical value, which motivates us to study DNN methods.
Recently, metric learning methods with DNNs have attracted a lot of attention. Triplet loss is one of them and became popular in pattern recognition because of FaceNet [6], a novel face recognition method. Zhang et al. [4] later applied this method to speaker verification. The triplet method has proved useful, and a large number of works [7, 8, 9] build on it.

The essential idea of triplet loss is to minimize intra-class distance while maximizing inter-class distance. In theory it is effective for all classification tasks, but given limited training samples, reverberation and ambient noise during recording, triplet loss has limitations for speaker verification. In the absence of any guidance or restriction, encoders trained with vanilla triplet loss often extract features unrelated to the speaker's ID, resulting in poor performance. Furthermore, generalization ability is important for zero-shot learning. Training encoders entirely on the training set without any augmentation makes triplet methods generalize less well to a test set. To address these issues, we propose to enhance triplet loss with multitask learning and a generative adversarial mechanism.

As for our architecture (shown in Figure 1), two more modules are introduced in addition to the basic encoder. First, we attach a conditional GAN behind the encoder. The generator produces new samples from the embeddings of the encoder and random noise. Merging encoders with a GAN is similar to the frameworks of [10] and [11], which have proved their superiority. After passing through an encoder-decoder structure with noise, the new samples have more generality and variety in terms of speech context and unrelated environment information.
The discriminator guarantees the authenticity and similarity of the generated samples, while the speaker features are preserved because of the following restriction. A classifier takes samples from both the generator and the raw data as input. The last layer of this classifier is used for the softmax loss, whose labels are the speaker IDs of the training set. Such a module improves the ability of the encoder to extract distinctive speaker features.

We train and test our method on two different datasets to analyse the transferability of the algorithm. Our baselines include an i-vector/PLDA system, a softmax method [3] and a triplet method [4]. Experimental results show that our algorithm achieves an EER of 1.81% and an accuracy of 92.65%, both much better than the baseline systems. Through more extensive experiments (see the experiments section), we confirm that MTGAN is better at extracting speaker-related features than vanilla triplet loss methods.

2. Related Works

2.1. Deep Neural Networks

The appearance of the d-vector [3] marks the birth of ASV systems built entirely within the DNN framework. It is a milestone in the field of ASV and has led to a large amount of work on DNNs. Since then, more and more works [12, 13, 14] have achieved results as good as i-vector/PLDA methods. For instance, [14] presents a convolutional time-delay deep neural network structure (CT-DNN) and claims that it performs much better than i-vector systems on short speech.

In this area, many works focus on adjusting the network structure and on new training techniques. However, for zero-shot tasks like ASV, where the speakers in the training set and the test set are unrelated, more suitable methods should be proposed rather than only optimizing the network structure. [13] claims that using only a softmax loss, as in [3] and [14], leads to poor performance on test sets that are very different from the training sets.

2.2. Triplet Metric Learning

To tackle zero-shot problems, triplet loss was first proposed in [15]. Although it has been around for a long time, there are still many follow-up works [7, 8, 9]. [7] adopted a multi-channel approach to enhance the tightness of intra-class samples. [8] proposed a quadruplet network structure to improve the transferability of triplet loss to the test set. Other works such as [9] directly modify the definition of distance and margin.

Figure 1: Architecture of Multitasking Triplet Generative Adversarial Networks. Parameters are shared among the networks for triplet samples. In the enroll/test phase, embeddings produced by the encoder are used to calculate the distance. Best viewed in color.

Inspired by FaceNet [6], which improved the sampling method of triplet loss, [4] combined triplet loss with ResNet [16] and applied it to ASV for the first time. After that, [17] proposed a structure called TristouNet for speaker verification, combining a bidirectional LSTM with triplet loss. [13] proposed Deep Speaker to tackle both text-dependent and text-independent tasks. Deep Speaker also shows that a pre-trained softmax network helps improve triplet methods.

The methods mentioned above adopt a variety of improvements, but none of them combines triplet loss with other tasks in a multitasking framework. Although Deep Speaker uses a pre-trained softmax network, there is only one loss term during its training process.

2.3. Generative Adversarial Network

GAN [18] is a framework based on game theory that was presented in 2014. After the original GAN was proposed, dozens of variants [19, 20, 21, 22] appeared, and they are widely used in many fields.

The GAN framework contains two players: a generator and a discriminator.
The generator and the discriminator play the following minimax game with value function $V(G, D)$:

$$\min_G \max_D V(G, D) = \mathbb{E}_{x \sim p_{data}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))] \quad (1)$$

where $z$ is random noise that is introduced to avoid mode collapse, and $G(z)$ denotes the fake samples produced by the generator. The first term of the equation corresponds to the discriminator judging real samples as real, and the second term corresponds to the discriminator judging fake samples as fake.

Intuitively, GANs are mostly used for generative tasks, but some recent works use GANs for classification tasks [10, 11]. Our architecture is similar to [10], which combines an encoder with a GAN.

Most applications of GANs are related to computer vision. However, researchers have recently started to use GANs in the field of speech. [23] and [24] apply GANs to denoise and enhance speech. [25] improves speech recognition with a GAN. Some works also combine triplet loss with GANs [26, 27] to explore new applications. Concretely, [26] proposed a triplet network that generates samples specifically for triplet loss. [27] proposed TripletGAN to minimize the distance between real data and fake data while maximizing the distance between different fake data.

In the field of speech, most previous work with GANs is about data enhancement. To the best of our knowledge, no one has proposed to enhance triplet loss with a GAN for the task of speaker verification.

3. Multitasking Triplet Generative Adversarial Network

3.1. Network Architecture

Figure 1 displays the architecture of our network. It consists of four modules (the encoder, the generator and discriminator of the GAN, and the classifier), each marked with a different color.

• Encoder: This module is used to extract features from samples. Its last fully-connected layer outputs a 512-dimensional embedding, which represents the speaker information of the original sample. In the enroll/test stage, this embedding is used to calculate the distance between an unknown utterance and the registered utterances.
• GAN: More specifically, this is a GAN with a conditional architecture. The inputs of the generator are not only the random noise but also the embeddings from the encoder. The output of the generator is fake samples that are expected to look like the original samples. The discriminator has two kinds of inputs: real samples and fake samples from the generator.
• Classifier: Similarly, we feed both fake samples and real samples into the classifier module. The output of this module is a one-hot vector whose size is equal to the number of speakers in the training set.

In the whole framework, we only use convolution layers and fully-connected layers. The kernel size of all convolution layers is 5 × 5, and we also utilize batch normalization [28].

Figure 2: Generated samples during the training process. The first row contains the fbanks that are fed into the encoder, and the second row contains the fake samples produced by the generator. Training progresses from left to right.

3.2. Loss Function

The loss function of our algorithm has four components, each with a weight coefficient. The first one is a standard triplet loss, which is fully explained in [4]:

$$\sum_{i}^{N} \left[ \| f(x_i^a) - f(x_i^p) \|_2^2 - \| f(x_i^a) - f(x_i^n) \|_2^2 + \alpha \right]_+ \quad (2)$$

where $a$ (anchor) and $p$ (positive) are samples from the same class, while $a$ (anchor) and $n$ (negative) are samples from different classes. $\alpha$ is a margin hyper-parameter, which defines the required gap between intra-class distances and inter-class distances. It is set to 0.2 in our experiments. In our algorithm, we use cosine distance to measure the differences between the embeddings produced by the encoder. The second term is the softmax loss of the classifier, whose labels are the speaker IDs of the training set.
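The triplet term of Eq. (2) can be illustrated with a short NumPy sketch. This is a minimal illustration rather than the authors' implementation: it assumes that the cosine distance mentioned in the text simply replaces the squared Euclidean distance inside the hinge, and the function names `cosine_distance` and `triplet_loss` are hypothetical.

```python
import numpy as np


def cosine_distance(u, v, eps=1e-12):
    """Row-wise cosine distance 1 - cos(u, v) for arrays of shape (N, D)."""
    u_norm = np.linalg.norm(u, axis=1)
    v_norm = np.linalg.norm(v, axis=1)
    # eps guards against division by zero for all-zero embeddings.
    cos_sim = np.sum(u * v, axis=1) / np.maximum(u_norm * v_norm, eps)
    return 1.0 - cos_sim


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Hinge-style triplet loss summed over N triplets.

    Assumption: cosine distance is used in place of the squared
    Euclidean norm written in Eq. (2); margin=0.2 follows the text.
    """
    d_ap = cosine_distance(anchor, positive)  # intra-class distance
    d_an = cosine_distance(anchor, negative)  # inter-class distance
    return float(np.sum(np.maximum(d_ap - d_an + margin, 0.0)))


# A triplet that already satisfies the margin contributes zero loss.
easy = triplet_loss(np.array([[1.0, 0.0]]),
                    np.array([[1.0, 0.0]]),
                    np.array([[0.0, 1.0]]))

# A triplet whose negative is closer than its positive is penalized.
hard = triplet_loss(np.array([[1.0, 0.0]]),
                    np.array([[0.0, 1.0]]),
                    np.array([[1.0, 0.0]]))
```

Once every triplet in a batch satisfies the margin, the summed loss is zero and provides no further gradient, which is consistent with the observation in Section 4.2 that the triplet loss of the baseline approaches zero late in training.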
The sum of the triplet loss and the softmax loss is called the encoder loss, and it measures the encoder's ability to extract features. The last two loss functions come from the generator and the discriminator of the GAN, so the whole loss function is expressed as:

$$L_{MTGAN} = \underbrace{\omega_1 L_{Triplet} + \omega_2 L_{Softmax}}_{\text{Encoder Loss}} + \omega_3 L_G + \omega_4 L_D \quad (3)$$

where $\omega_i$ is the weight coefficient of each term. To promote diversity in the generated samples, we optimize the generator more times than the discriminator. All of the $\omega_i$ are determined through experiments, and we set them to 0.1, 0.2, 0.2 and 0.5, respectively.

3.3. Triplet Sampling Method

The accuracy and convergence speed of the triplet approach depend heavily on the sampling method, a problem discussed in detail in [29]. The number of possible combinations over all utterances is enormous, so it is impossible to consider every one of them. [6] proposed semi-hard negative exploration to sample triplets, and [4] followed it. This method searches for triplets inside one mini-batch, so it is effective and time-saving. Deep Speaker [13] also proposes to search for anchor-negative pairs across multiple GPUs.

After comparing random selection with semi-hard negative selection [6] (details in the experiments section), we find that the selection method does not matter as long as a large number of people are used in one epoch. Thus, we directly use random sampling in our algorithm. In total, we obtain n*A*P*K*J triplets in one epoch, where n is the number of people selected, A is the number of anchors, P is the number of positives, K is the number of other classes among the n people, and J is the number of negatives drawn from each of the K classes.

3.4. Details about Training Networks

In the preprocessing stage, we extract mel-fbank features from raw audio slices. The length of each slice is 2 s and we use 128 mel-filters, so the dimension of the input is 128 × 128.
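Returning to the weighted objective of Eq. (3), the arithmetic can be made concrete with a small sketch. The weights 0.1, 0.2, 0.2 and 0.5 come from the text; the names `LossWeights`, `encoder_loss` and `mtgan_loss` are hypothetical, and the four per-term loss values are assumed to be computed elsewhere. The sketch only shows how the terms are combined; it does not model the alternating generator and discriminator updates that GAN training normally involves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LossWeights:
    """Weight coefficients of Eq. (3); defaults follow the values in the text."""
    triplet: float = 0.1        # omega_1
    softmax: float = 0.2        # omega_2
    generator: float = 0.2      # omega_3
    discriminator: float = 0.5  # omega_4


def encoder_loss(l_triplet, l_softmax, w=LossWeights()):
    """Encoder loss: weighted sum of the triplet and softmax terms."""
    return w.triplet * l_triplet + w.softmax * l_softmax


def mtgan_loss(l_triplet, l_softmax, l_g, l_d, w=LossWeights()):
    """Full objective: encoder loss plus weighted generator and discriminator terms."""
    return (encoder_loss(l_triplet, l_softmax, w)
            + w.generator * l_g
            + w.discriminator * l_d)


# Example with made-up per-term values, purely to show the weighting.
total = mtgan_loss(l_triplet=2.0, l_softmax=1.0, l_g=0.5, l_d=0.0)
```

With these weights the four coefficients sum to 1, so the total is a convex combination of the individual loss terms.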
Admittedly, a GAN is difficult to train because of its instability, especially in our multitasking setting. Like most works, we modify the DCGAN architecture proposed by [20] and use the state-of-the-art training techniques of WGAN-GP [22]. Some generated samples from the training process are shown in Figure 2.

4. Experiments and Discussion

4.1. Datasets and Baselines

The dataset that we use for training is Librispeech [30], which consists of a "clean" part and an "other" part. We use the "other" part only for the experiment that explores the influence of the number of speakers. The test dataset is TIMIT [31], because this dataset covers all English phonemes. We train and test on different datasets in order to explore the transferability of the algorithms. For evaluation, we randomly choose 3 utterances for enrollment and 7 utterances for testing.

Table 1: EER (%) and accuracy (%) of different systems

| Methods | Equal Error Rate | Accuracy |
|---|---|---|
| i-vector/Cosine | 8.48% | 81.92% |
| i-vector/PLDA | 5.61% | 85.78% |
| Softmax loss [3] | 3.61% | 88.23% |
| Triplet loss [4] | 2.68% | 90.45% |
| MTGAN | 1.81% | 92.65% |

We have four baselines for comparison. Two of them are i-vector systems, another is a supervised softmax system [3] and the last one is a triplet system [4].

4.2. Performance Comparison Experiments

In this section, we compare our method with the baselines under the same experimental settings (training with 1252 people from Librispeech). The results are displayed in Table 1. We use EER and accuracy (ACC) as our evaluation criteria. EER evaluates the overall performance of the system, and ACC reflects the best result the system achieves. For a more comprehensive assessment, we plot detection error trade-off (DET) curves for all five systems (shown in the left part of Figure 3).

Figure 3: Left: DET curves. Results of five methods are displayed. Two back-end methods are used for the i-vector system. Right: EER (%) with different embedding dimensions. Five embedding dimensions are displayed.

Figure 4: Changes of ACC (%) and EER (%) during training. We show the first 80 epochs to illustrate the trend.

From the results in Table 1, we conclude that the triplet method [4] indeed outperforms the i-vector and softmax methods. However, our method achieves a better result than [4] and converges faster. Our analysis suggests that the simple triplet method is limited by its feature extraction ability and transfers poorly to new data. In the later period of training, the triplet loss of [4] is close to zero (without overfitting). This indicates that it has reached the limit of what it can achieve on the speaker verification task with its current features. The encoder extracts features not only from the speaker information but also from other, unrelated factors.

4.3. Ablation Experiments

In this section, we carry out ablation experiments to show that our framework is feasible. Results under different conditions are shown in Table 2. First, we verify the necessity of each module in our structure. We removed the three modules one at a time and carried out experiments under the same settings. The results show that the structure with a module removed cannot perform as effectively as MTGAN. Among the three cases, removing the classifier has the greatest impact, which means that the softmax loss is important for improving feature extraction.

Then we compared the random sampling method with the semi-hard negative method proposed by [6]. The network architecture we applied was Inception-Resnet-v1, and we tested both methods when selecting 60 and 600 people in each epoch. The results reported in Table 2 show that the gap between the two methods is very small when a large number of people is selected.
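For readers less familiar with the EER metric used in Section 4.2, the sketch below shows one simple way to approximate it from verification scores, together with the relative-reduction arithmetic applied to the Table 1 values. It is a minimal illustration under stated assumptions, not the evaluation code of the paper: scores are assumed to be similarities (higher means more likely the same speaker), the threshold sweep is a coarse approximation without interpolation, and the function names are hypothetical.

```python
import numpy as np


def equal_error_rate(target_scores, impostor_scores):
    """Approximate EER by sweeping a threshold over all observed scores.

    Assumption: higher score means the trial is more likely a target trial.
    A trial is accepted when its score is >= the threshold.
    """
    target = np.asarray(target_scores, dtype=float)
    impostor = np.asarray(impostor_scores, dtype=float)
    thresholds = np.sort(np.concatenate([target, impostor]))
    # False rejection rate: target trials scored below the threshold.
    frr = np.array([(target < t).mean() for t in thresholds])
    # False acceptance rate: impostor trials scored at or above the threshold.
    far = np.array([(impostor >= t).mean() for t in thresholds])
    # The EER is the operating point where the two error rates meet.
    idx = int(np.argmin(np.abs(far - frr)))
    return float((far[idx] + frr[idx]) / 2.0)


def relative_reduction(baseline, improved):
    """Relative reduction of an error rate with respect to a baseline."""
    return (baseline - improved) / baseline


# Relative EER reductions computed from the Table 1 values.
vs_ivector_plda = relative_reduction(5.61, 1.81)  # about 0.677
vs_triplet = relative_reduction(2.68, 1.81)       # about 0.325
```

The two relative reductions come out at roughly 67.7% and 32.5%, which is consistent with the 67% and 32% figures quoted in the abstract if those are taken relative to the i-vector/PLDA and triplet baselines of Table 1.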
We also find that the number of selected people has more influence than the number of samples from the same person. The EER and ACC of each epoch are displayed in Figure 4.

The embedding dimension is also an important factor that influences the expressive ability of the system. Therefore, we compared the EERs of five different dimensions; the results are displayed in the right part of Figure 3.

Table 2: Ablation experiments with different conditions

| Conditions | EER | ACC | Convergence |
|---|---|---|---|
| w/o GAN | 2.04% | 90.17% | 60 epoch |
| w/o softmax loss | 3.34% | 88.63% | 80 epoch |
| w/o triplet loss | 2.71% | 89.51% | 60 epoch |
| MTGAN | 1.81% | 92.65% | 100 epoch |
| Random (#60) | 3.13% | 85.26% | 550 epoch |
| Semi-hard (#60) | 2.90% | 88.73% | 500 epoch |
| Random (#600) | 2.75% | 90.03% | 250 epoch |
| Semi-hard (#600) | 2.68% | 90.45% | 200 epoch |
| 1252 people | 1.81% | 92.65% | 70 epoch |
| 2484 people | 1.33% | 94.27% | 100 epoch |

The last experiment explores the impact of the number of people in the training set. We added the "other" part of Librispeech to the training set (2484 people in total) and repeated the experiment that had used 1252 people. Although convergence became slower, EER decreased and ACC increased after enlarging the training set. One point is worth noting: the output layer of the classifier is tied to the number of training speakers, so the size of the network increases if we use a larger dataset to train the model.

5. Conclusion

In this study, we present a novel end-to-end text-independent speaker verification system for short utterances, named MTGAN. We extend triplet loss with a classifier and generative adversarial networks to form a multitasking framework. Triplet loss is designed for clustering, while the GAN and the softmax loss help to extract features about speaker information.

Experimental results demonstrate that our algorithm achieves a lower EER and a higher accuracy than i-vector methods and triplet methods.
Besides, our method converges faster than vanilla triplet methods. Through further ablation experiments, we draw additional conclusions. We confirm that the softmax loss plays a significant role in extracting features, and that the gap between the semi-hard negative method and the random method is small when a large number of people is selected in one batch. We also observe that, as expected, training with more people helps improve the performance.

We believe this work provides more ideas and inspiration for the speaker verification community and introduces more DNN methods. Although our framework has much room for improvement, we think our experimental results will help others understand the task of speaker verification more clearly.

6. References

[1] D. Reynolds, T. F. Quatieri, and R. B. Dunn, "Speaker verification using adapted Gaussian mixture models," Digital Signal Processing, vol. 10, no. 1, pp. 19–41, 2000.
[2] N. Dehak, P. J. Kenny, R. Dehak, P. Dumouchel, and P. Ouellet, "Front-end factor analysis for speaker verification," IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 4, pp. 788–798, 2011.
[3] E. Variani, X. Lei, E. McDermott, I. L. Moreno, and J. Gonzalez-Dominguez, "Deep neural networks for small footprint text-dependent speaker verification," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Florence, Italy, 2014.
[4] C. Zhang and K. Koishida, "End-to-end text-independent speaker verification with triplet loss on short utterances," in Interspeech, Stockholm, Sweden, 2017.
[5] S. J. D. Prince and J. H. Elder, "Probabilistic linear discriminant analysis for inferences about identity," in International Conference on Computer Vision (ICCV), Rio de Janeiro, Brazil, 2007.
[6] F. Schroff, D. Kalenichenko, and J. Philbin, "FaceNet: A unified embedding for face recognition and clustering," in IEEE International Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 2015.
[7] D. Cheng, Y. Gong, S. Zhou, J. Wang, and N. Zheng, "Person re-identification by multi-channel parts-based CNN with improved triplet loss function," in IEEE International Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, Nevada, USA, 2016.
[8] W. Chen, X. Chen, J. Zhang, and K. Huang, "Beyond triplet loss: A deep quadruplet network for person re-identification," in IEEE International Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, Hawaii, USA, 2017.
[9] A. Hermans, L. Beyer, and B. Leibe, "In Defense of the Triplet Loss for Person Re-Identification," arXiv preprint arXiv:1703.07737, 2017.
[10] L. Tran, X. Yin, and X. Liu, "Representation learning by rotating your faces," arXiv preprint, 2017.
[11] A. Makhzani, J. Shlens, N. Jaitly, I. Goodfellow, and B. Frey, "Adversarial Autoencoders," arXiv preprint, 2015.
[12] D. Snyder, D. Garcia-Romero, D. Povey, and S. Khudanpur, "Deep neural network embeddings for text-independent speaker verification," in Interspeech, Stockholm, Sweden, 2017.
[13] C. Li, X. Ma, B. Jiang, X. Li, X. Zhang, X. Liu, Y. Cao, A. Kannan, and Z. Zhu, "Deep Speaker: an End-to-End Neural Speaker Embedding System," arXiv preprint, 2017.
[14] L. Li, Y. Chen, Y. Shi, Z. Tang, and D. Wang, "Deep speaker feature learning for text-independent speaker verification," in Interspeech, Stockholm, Sweden, 2017.
[15] K. Q. Weinberger and L. K. Saul, "Distance metric learning for large margin nearest neighbor classification," Journal of Machine Learning Research, vol. 10, pp. 207–244, 2009.
[16] K. He, X. Zhang, S. Ren, and J. Sun, "Deep residual learning for image recognition," in IEEE International Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 2016.
[17] H. Bredin, "TristouNet: Triplet loss for speaker turn embedding," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, USA, 2017.
[18] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, "Generative Adversarial Networks," arXiv preprint, 2014.
[19] M. Mirza and S. Osindero, "Conditional Generative Adversarial Nets," arXiv preprint, 2014.
[20] A. Radford, L. Metz, and S. Chintala, "Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks," in International Conference on Learning Representations (ICLR), San Juan, Puerto Rico, 2016.
[21] X. Chen, Y. Duan, R. Houthooft, J. Schulman, I. Sutskever, and P. Abbeel, "InfoGAN: Interpretable Representation Learning by Information Maximizing Generative Adversarial Nets," in Neural Information Processing Systems (NIPS), Barcelona, Spain, 2016.
[22] I. Gulrajani, F. Ahmed, M. Arjovsky, V. Dumoulin, and A. Courville, "Improved Training of Wasserstein GANs," arXiv preprint arXiv:1704.00028, 2017.
[23] L. Yu, W. Zhang, J. Wang, and Y. Yu, "SeqGAN: Sequence Generative Adversarial Nets with Policy Gradient," in AAAI Conference on Artificial Intelligence, San Francisco, California, USA, 2017.
[24] D. Michelsanti and Z. Tan, "Conditional generative adversarial networks for speech enhancement and noise-robust speaker verification," in Interspeech, Stockholm, Sweden, 2017.
[25] A. Sriram, H. Jun, Y. Gaur, and S. Satheesh, "Robust Speech Recognition Using Generative Adversarial Networks," arXiv preprint arXiv:1711.01567, 2017.
[26] M. Zieba and L. Wang, "Training Triplet Networks with GAN," arXiv preprint arXiv:1704.02227, 2017.
[27] G. Cao, Y. Yang, J. Lei, C. Jin, Y. Liu, and M. Song, "TripletGAN: Training Generative Model with Triplet Loss," arXiv preprint arXiv:1711.05084, 2017.
[28] S. Ioffe and C. Szegedy, "Batch normalization: Accelerating deep network training by reducing internal covariate shift," in International Conference on Machine Learning (ICML), Lille, France, 2015.
[29] C. Wu, R. Manmatha, A. J. Smola, and P. Krähenbühl, "Sampling Matters in Deep Embedding Learning," arXiv preprint arXiv:1706.07567, 2017.
[30] V. Panayotov, G. Chen, D. Povey, and S. Khudanpur, "Librispeech: An ASR corpus based on public domain audio books," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brisbane, QLD, Australia, 2015.
[31] J. S. Garofolo, L. F. Lamel, W. M. Fisher, J. G. Fiscus, D. S. Pallett, N. L. Dahlgren, and V. Zue, "TIMIT acoustic-phonetic continuous speech corpus," Linguistic Data Consortium, vol. 10, no. 5, 1993.
