Style Tokens: Unsupervised Style Modeling, Control and Transfer in End-to-End Speech Synthesis

In this work, we propose "global style tokens" (GSTs), a bank of embeddings that are jointly trained within Tacotron, a state-of-the-art end-to-end speech synthesis system. The embeddings are trained with no explicit labels, yet learn to model a larg…

Authors: Yuxuan Wang, Daisy Stanton, Yu Zhang

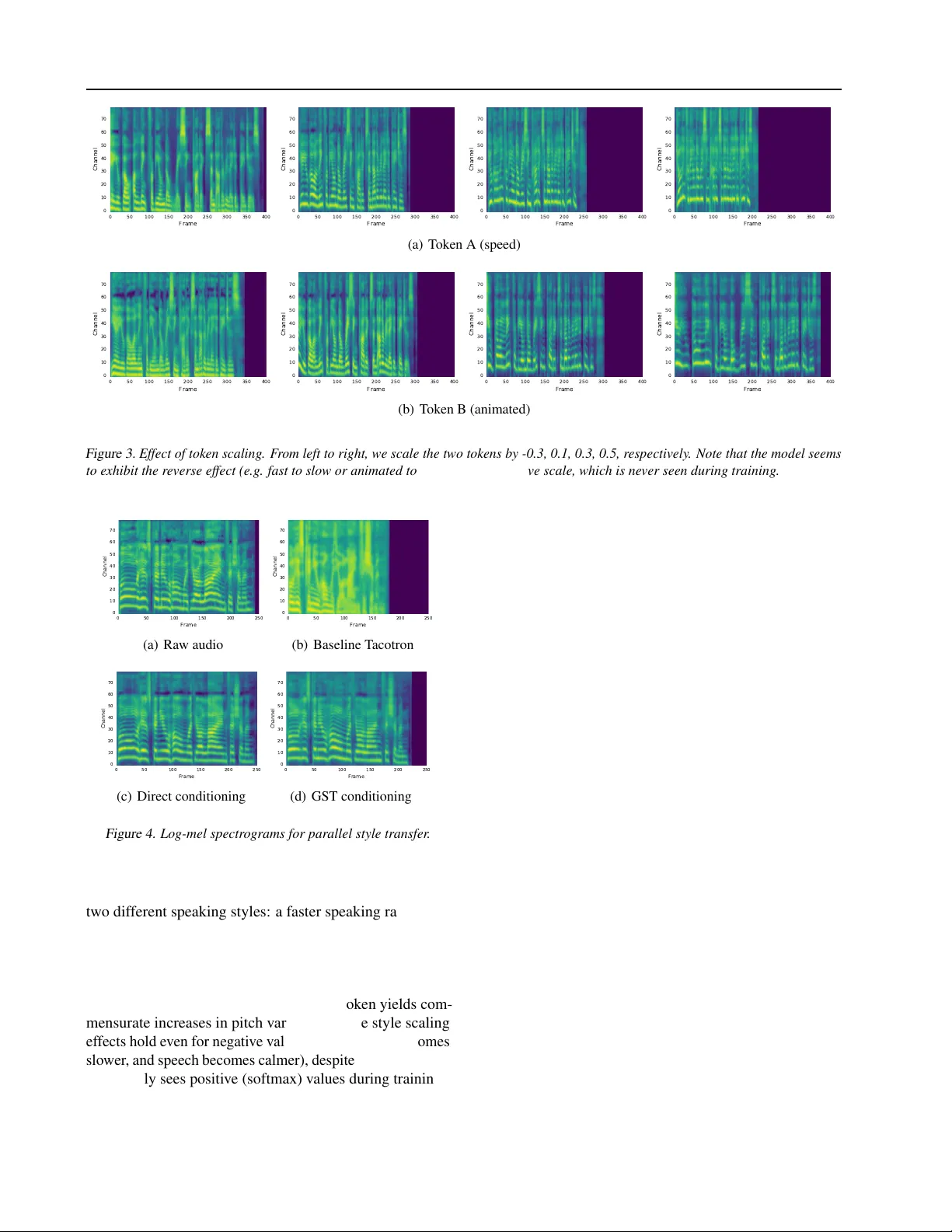

Yuxuan Wang¹, Daisy Stanton¹, Yu Zhang¹, RJ Skerry-Ryan¹, Eric Battenberg¹, Joel Shor¹, Ying Xiao¹, Fei Ren¹, Ye Jia¹, Rif A. Saurous¹

¹ Google, Inc. Correspondence to: Yuxuan Wang <yxwang@google.com>. Sound demos can be found at https://google.github.io/tacotron/publications/global_style_tokens

Abstract

In this work, we propose “global style tokens” (GSTs), a bank of embeddings that are jointly trained within Tacotron, a state-of-the-art end-to-end speech synthesis system. The embeddings are trained with no explicit labels, yet learn to model a large range of acoustic expressiveness. GSTs lead to a rich set of significant results. The soft interpretable “labels” they generate can be used to control synthesis in novel ways, such as varying speed and speaking style, independently of the text content. They can also be used for style transfer, replicating the speaking style of a single audio clip across an entire long-form text corpus. When trained on noisy, unlabeled found data, GSTs learn to factorize noise and speaker identity, providing a path towards highly scalable but robust speech synthesis.

1. Introduction

The past few years have seen exciting developments in the use of deep neural networks to synthesize natural-sounding human speech (Zen et al., 2016; van den Oord et al., 2016; Wang et al., 2017a; Arik et al., 2017; Taigman et al., 2017; Shen et al., 2017). As text-to-speech (TTS) models have rapidly improved, there is a growing opportunity for a number of applications, such as audiobook narration, news readers, and conversational assistants. Neural models show the potential to robustly synthesize expressive long-form speech, and yet research in this area is still in its infancy.

To deliver true human-like speech, a TTS system must learn to model prosody. Prosody is the confluence of a number of phenomena in speech, such as paralinguistic information, intonation, stress, and style. In this work we focus on style modeling, the goal of which is to provide models the capability to choose a speaking style appropriate for the given context. While difficult to define precisely, style contains rich information, such as intention and emotion, and influences the speaker’s choice of intonation and flow. Proper stylistic rendering affects overall perception (see e.g. “affective prosody” in (Taylor, 2009)), which is important for applications such as audiobooks and newsreaders.

Style modeling presents several challenges. First, there is no objective measure of “correct” prosodic style, making both modeling and evaluation difficult. Acquiring annotations for large datasets can be costly and similarly problematic, since human raters often disagree. Second, the high dynamic range in expressive voices is difficult to model. Many TTS models, including recent end-to-end systems, only learn an averaged prosodic distribution over their input data, generating less expressive speech especially for long-form phrases. Furthermore, they often lack the ability to control the expression with which speech is synthesized.

This work attempts to address the above issues by introducing “global style tokens” (GSTs) to Tacotron (Wang et al., 2017a; Shen et al., 2017), a state-of-the-art end-to-end TTS model. GSTs are trained without any prosodic labels, and yet uncover a large range of expressive styles. The internal architecture itself produces soft interpretable “labels” that can be used to perform various style control and transfer tasks, leading to significant improvements for expressive long-form synthesis. GSTs can be directly applied to noisy, unlabeled found data, providing a path towards highly scalable but robust speech synthesis.

2. Model Architecture

Our model is based on Tacotron (Wang et al., 2017a; Shen et al., 2017), a sequence-to-sequence (seq2seq) model that predicts mel spectrograms directly from grapheme or phoneme inputs. These mel spectrograms are converted to waveforms either by a low-resource inversion algorithm (Griffin & Lim, 1984) or a neural vocoder such as WaveNet (van den Oord et al., 2016). We point out that, for Tacotron, the choice of vocoder does not affect prosody, which is modeled by the seq2seq model.

Our proposed GST model, illustrated in Figure 1, consists of a reference encoder, style attention, style embedding, and sequence-to-sequence (Tacotron) model.

Figure 1. Model diagram. During training, the log-mel spectrogram of the training target is fed to the reference encoder followed by a style token layer. The resulting style embedding is used to condition the Tacotron text encoder states. During inference, we can feed an arbitrary reference signal to synthesize text with its speaking style. Alternatively, we can remove the reference encoder and directly control synthesis using the learned interpretable tokens.

2.1. Training

During training, information flows through the model as follows:

• The reference encoder, proposed in (Skerry-Ryan et al., 2018), compresses the prosody of a variable-length audio signal into a fixed-length vector, which we call the reference embedding.
During training, the reference signal is ground-truth audio.

• The reference embedding is passed to a style token layer, where it is used as the query vector to an attention module. Here, attention is not used to learn an alignment. Instead, it learns a similarity measure between the reference embedding and each token in a bank of randomly initialized embeddings. This set of embeddings, which we alternately call global style tokens, GSTs, or token embeddings, is shared across all training sequences.

• The attention module outputs a set of combination weights that represent the contribution of each style token to the encoded reference embedding. The weighted sum of the GSTs, which we call the style embedding, is passed to the text encoder for conditioning at every timestep.

• The style token layer is jointly trained with the rest of the model, driven only by the reconstruction loss from the Tacotron decoder. GSTs thus do not require any explicit style or prosody labels.

2.2. Inference

The GST architecture is designed for powerful and flexible control in inference mode. In this mode, information can flow through the model in one of two ways:

1. We can directly condition the text encoder on certain tokens, as depicted on the right-hand side of the inference-mode diagram in Figure 1 (“Conditioned on Token B”). This allows for style control and manipulation without a reference signal.

2. We can feed a different audio signal (whose transcript does not need to match the text to be synthesized) to achieve style transfer. This is depicted on the left-hand side of the inference-mode diagram in Figure 1 (“Conditioned on audio signal”).

These will be discussed in more detail in Section 6.

3. Model Details

3.1. Tacotron Architecture

For our baseline and GST-augmented Tacotron systems, we use the same architecture and hyperparameters as (Wang et al., 2017a) except for a few details.
We use phoneme inputs to speed up training, and slightly change the decoder, replacing GRU cells with two layers of 256-cell LSTMs; these are regularized using zoneout (Krueger et al., 2017) with probability 0.1. The decoder outputs 80-channel log-mel spectrogram energies, two frames at a time, which are run through a dilated convolution network that outputs linear spectrograms. We run these through Griffin-Lim for fast waveform reconstruction. It is straightforward to replace Griffin-Lim by a WaveNet vocoder to improve the audio fidelity (Shen et al., 2017).

The baseline model achieves a 4.0 mean opinion score (MOS), outperforming the 3.82 MOS reported in (Wang et al., 2017a) on the same evaluation set. It is thus a very strong baseline.

3.2. Style Token Architecture

3.2.1. Reference Encoder

The reference encoder is made up of a convolutional stack, followed by an RNN. It takes as input a log-mel spectrogram, which is first passed to a stack of six 2-D convolutional layers with 3 × 3 kernel, 2 × 2 stride, batch normalization and ReLU activation function. We use 32, 32, 64, 64, 128 and 128 output channels for the 6 convolutional layers, respectively. The resulting output tensor is then shaped back to 3 dimensions (preserving the output time resolution) and fed to a single-layer 128-unit unidirectional GRU. The last GRU state serves as the reference embedding, which is then fed as input to the style token layer.

3.2.2. Style Token Layer

The style token layer is made up of a bank of style token embeddings and an attention module. Unless stated otherwise, our experiments use 10 tokens, which we found sufficient to represent a small but rich variety of prosodic dimensions in the training data. To match the dimensionality of the text encoder state, each token embedding is 256-D.
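As a quick check on the reference encoder dimensions given in Section 3.2.1, the sketch below traces tensor shapes through the six stride-2 convolutions. It assumes an 80-channel log-mel input (matching the decoder output) and "same"-style padding, so each layer halves both axes with rounding up; neither detail is stated for the reference encoder in the text, and the function names are hypothetical.

```python
import math

def conv_out_len(n: int, stride: int = 2) -> int:
    """Output length of a strided convolution, assuming 'same' padding."""
    return math.ceil(n / stride)

def reference_encoder_shapes(num_frames: int, num_mels: int = 80):
    """Trace (time, freq, channels) through the six-layer conv stack."""
    channels = [32, 32, 64, 64, 128, 128]  # output channels from Section 3.2.1
    t, f = num_frames, num_mels
    shapes = []
    for c in channels:
        # 2 x 2 stride: both the time and the frequency axis are downsampled.
        t, f = conv_out_len(t), conv_out_len(f)
        shapes.append((t, f, c))
    # Reshaping to 3 dimensions merges the frequency and channel axes,
    # giving the per-step feature size seen by the 128-unit GRU.
    gru_input_dim = f * channels[-1]
    return shapes, gru_input_dim

shapes, gru_input_dim = reference_encoder_shapes(num_frames=400)
for layer, (t, f, c) in enumerate(shapes, start=1):
    print(f"conv{layer}: time={t}, freq={f}, channels={c}")
print("GRU input features per step:", gru_input_dim)
```

Under these assumptions, a 400-frame input leaves 7 time steps of 256 features each, which the GRU then summarizes into the 128-D reference embedding.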
The text encoder state also uses a tanh activation; we found that applying a tanh activation to GSTs before applying attention led to greater token diversity. The content-based tanh attention uses a softmax activation to output a set of combination weights over the tokens; the resulting weighted combination of GSTs is then used for conditioning. We experimented with different combinations of conditioning sites, and found that replicating the style embedding and simply adding it to every text encoder state performed the best.

While we use content-based attention as a similarity measure in this work, it is trivial to substitute alternatives. Dot-product attention, location-based attention, or even combinations of attention mechanisms may learn different types of style tokens. In our experiments, we found that using multi-head attention (Vaswani et al., 2017) significantly improves style transfer performance, and, moreover, is more effective than simply increasing the number of tokens. When using h attention heads, we set the token embedding size to be 256/h and concatenate the attention outputs, such that the final style embedding size remains the same.

4. Model Interpretation

4.1. End-to-End Clustering/Quantization

Intuitively, the GST model can be thought of as an end-to-end method for decomposing the reference embedding into a set of basis vectors or soft clusters, i.e. the style tokens. As mentioned above, the contribution of each style token is represented by an attention score, but can be replaced with any desired similarity measure. The GST layer is conceptually somewhat similar to the VQ-VAE encoder (van den Oord et al., 2017), in that it learns a quantized representation of its input. We also experimented with replacing the GST layer with a discrete, VQ-like lookup table layer, but have not seen comparable results yet.

This decomposition concept can also be generalized to other models, e.g.
the factorized variational latent model in (Hsu et al., 2017), which exploits the multi-scale nature of a speech signal by explicitly formulating it within a factorized hierarchical graphical model. Its sequence-dependent priors are formulated by an embedding table, which is similar to GSTs but without the attention-based clustering. GSTs could potentially be used to reduce the number of samples required to learn each prior embedding.

4.2. Memory-Augmented Neural Network

GST embeddings can also be viewed as an external memory that stores style information extracted from training data. The reference signal guides memory writes at training time, and memory reads at inference time. We may leverage recent advances from memory-augmented networks (Graves et al., 2014) to further improve GST learning.

5. Related Work

Prosody and speaking style models have been studied for decades in the TTS community. However, most existing models require explicit labels, such as emotion or speaker codes (Luong et al., 2017). While a small amount of research has explored automatic labeling, learning is still supervised, requiring expensive annotations for model training. AuToBI (Rosenberg, 2010), for example, aims to produce ToBI (Silverman et al., 1992) labels that can be used by other TTS models. However, AuToBI still needs annotations for training, and ToBI, as a hand-designed label system, is known to have limited performance (Wightman, 2002).

Cluster-based modeling (Eyben et al., 2012; Jauk, 2017) is related to our work. Jauk (2017), for example, uses i-vectors (Dehak et al., 2011) and other acoustic features to cluster the training set and train models in different partitions. These methods rely on a complex set of hand-designed features, however, and require training a neutral voice model in a separate step.

As mentioned previously, (Skerry-Ryan et al., 2018) introduces the reference embedding used in this work, and shows that it can be used to transfer prosody from a reference signal. This embedding does not enable interpretable style control, however, and we show in Section 6 that it generalizes poorly on some style transfer tasks.

Our work substantially extends the research in (Wang et al., 2017b), but there are several fundamental differences. First, (Wang et al., 2017b) uses a single frame from the Tacotron decoder as the query to learn tokens. It thus only models “local” variations that primarily correspond to F0. GSTs instead use a summary of the entire reference signal as input, and are thus able to uncover both local and global attributes that are essential for expressive synthesis. Second, in contrast to the decoder-side conditioning in (Wang et al., 2017b), the design of GSTs allows textual input to be conditioned on a disentangled style embedding. We show crucial implications of this for style control and transfer in Section 6.2. Finally, GSTs can be applied to both clean recordings and noisy found data. We discuss this and its significance in detail in Section 7.

6. Experiments: Style Control and Transfer

In this section, we measure the ability of GSTs to control and transfer speaking style, using the inference methods from Section 2.2.

We train models using 147 hours of American English audiobook data. These are read by the 2013 Blizzard Challenge speaker, Catherine Byers, in an animated and emotive storytelling style. Some books contain very expressive character voices with high dynamic range, which are challenging to model.

As is common for generative models, objective metrics often do not correlate well with perception (Theis et al., 2015).
While we use visualizations for some experiments below, we strongly encourage readers to listen to the samples provided on our demo page.

6.1. Style Control

6.1.1. Style Selection

The simplest method of control is conditioning the model on an individual token. At inference time, we simply replace the style embedding with a specific, optionally scaled token.

Conditioning in this manner has several benefits. First, it allows us to examine which style attributes each token encodes. Empirically, we find that each token can represent not just pitch and intensity, but also a variety of other attributes, such as speaking rate and emotion. This can be seen in Figure 2, which shows two sentences synthesized with three different style tokens (scale=0.3) from a 10-token GST model. The plots show that F0 and C0 (energy) curves are quite different across style tokens. However, the F0 and C0 contours generated by each token follow a clear relative trend, despite the fact that input sentences A and B are completely different.

Figure 2. F0 and C0 (log scale) of two different sentences, (a) Sentence A and (b) Sentence B, synthesized using three tokens (A, B and C). Independent of the text content, the same token exhibits the same F0/C0 trend relative to the other tokens.

Indeed, perceptually, the red token corresponds to a lower-pitch voice, the green token to a decreasing pitch, and the blue token to a faster speaking rate (note the total audio duration in both plots).
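The single-token conditioning described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: `token_bank` and `text_encoder_states` are random stand-ins for trained parameters and real encoder outputs, `condition_on_token` is a hypothetical helper, and applying the scale after the tanh is an assumption about ordering that the text does not spell out.

```python
import numpy as np

rng = np.random.default_rng(0)

NUM_TOKENS, TOKEN_DIM = 10, 256  # sizes from Section 3.2.2

# Hypothetical stand-ins for a trained token bank and for text encoder outputs.
token_bank = rng.normal(size=(NUM_TOKENS, TOKEN_DIM))
text_encoder_states = np.tanh(rng.normal(size=(37, TOKEN_DIM)))  # 37 input steps

def condition_on_token(encoder_states, tokens, index, scale=0.3):
    """Use one scaled token as the style embedding and add it to every state."""
    # tanh is applied to the GSTs, as in Section 3.2.2; scaling afterwards is assumed.
    style_embedding = scale * np.tanh(tokens[index])
    # Broadcasting replicates the same style embedding across all time steps.
    return encoder_states + style_embedding[None, :]

conditioned = condition_on_token(text_encoder_states, token_bank, index=1, scale=0.3)
print(conditioned.shape)
```

Changing `index` selects a different style, and changing `scale` (including to negative values) corresponds to the scaling experiments of Section 6.1.2.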
Single-token conditioning also reveals that not all tokens capture single attributes: while one token may learn to represent speaking rate, others may learn a mixture of attributes that reflect stylistic co-occurrence in the training data (a low-pitched token, for example, can also encode a slower speaking rate). Encouraging more independent style attribute learning is an important focus of ongoing work.

In addition to providing interpretability, style token conditioning can also improve synthesis quality. Consider the problem of long-form synthesis on training data with lots of prosodic variation. Many TTS models learn to generate the “average” prosodic style, which can be problematic for expressive datasets, since the very variation that characterizes them is collapsed. This can also lead to undesirable side effects, such as pitch continuously declining towards the end of each sentence. We find that conditioning on “lively”-sounding tokens can address both of these problems, significantly improving the prosodic variation.

Audio examples of style selection can be found here.

6.1.2. Style Scaling

Another method for controlling style token output is via scaling. We find that multiplying a token embedding by a scalar value intensifies its style effect. (Note that large scaling values may lead to unintelligible speech, which suggests future work on improving stability.) This is illustrated in Figure 3, which shows spectrograms of utterances synthesized by two different tokens.
Perceptually, these tokens encode two different speaking styles: a faster speaking rate (3(a)), and more animated speech (3(b)). Figure 3(a) shows that increasing the scaling factor of the faster speaking rate token causes a gradual compression of the spectrogram in the time domain. Similarly, Figure 3(b) shows that increasing the scaling factor of the animated speech token yields commensurate increases in pitch variation. These style scaling effects hold even for negative values (speaking rate becomes slower, and speech becomes calmer), despite the fact that the model only sees positive (softmax) values during training.

Figure 3. Effect of token scaling for (a) Token A (speed) and (b) Token B (animated). From left to right, we scale the two tokens by -0.3, 0.1, 0.3, 0.5, respectively. Note that the model seems to exhibit the reverse effect (e.g. fast to slow or animated to calm) with a negative scale, which is never seen during training.

Figure 4. Log-mel spectrograms for parallel style transfer: (a) raw audio, (b) baseline Tacotron, (c) direct conditioning, (d) GST conditioning.

Audio examples of style scaling can be found here.

6.1.3. Style Sampling

We can also control synthesis during inference by modifying the attention module weights inside the style token layer. Since the GST attention produces a set of combination weights, these may be refined manually to yield a desired interpolation. We can also use randomly generated softmax weights to sample the style space. The sampling diversity can be controlled by tuning the softmax temperature.

6.1.4. Text-Side Style Control/Morphing

While the same style embedding is added to all text encoder states during training, this doesn’t need to be the case in inference mode. As our audio samples demonstrate, this allows us to do piecewise style control or morphing by conditioning on one or more tokens for different segments of input text.

Audio examples of style morphing can be found here.

6.2. Style Transfer

Style transfer is an active area of research that aims to synthesize a phrase in the prosodic style of a reference signal (Wu et al., 2013; Nakashika et al., 2016; Kinnunen et al., 2017). The property that a GST model can be conditioned on any convex combination of style tokens lends itself well to this task; at inference time (method 2 of Section 2.2), we can simply feed a reference signal to guide the choice of token combination weights. The experiments below use 4-head GST attention.
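To make the reference-guided choice of combination weights concrete, here is a minimal NumPy sketch of a 4-head style token layer. It is not the authors' implementation: it assumes scaled dot-product attention in the style of (Vaswani et al., 2017) with per-head query, key and value projections, a shared bank of 10 tokens of size 256/4 = 64, and random weights in place of trained ones. `init_params` and `style_token_layer` are hypothetical names.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def init_params(rng, ref_dim=128, style_dim=256, num_tokens=10, num_heads=4):
    """Random stand-ins for trained parameters (token size is style_dim / num_heads)."""
    head_dim = style_dim // num_heads
    return {
        "tokens": rng.normal(scale=0.5, size=(num_tokens, head_dim)),
        "w_query": rng.normal(scale=ref_dim ** -0.5, size=(num_heads, ref_dim, head_dim)),
        "w_key": rng.normal(scale=head_dim ** -0.5, size=(num_heads, head_dim, head_dim)),
        "w_value": rng.normal(scale=head_dim ** -0.5, size=(num_heads, head_dim, head_dim)),
    }

def style_token_layer(params, reference_embedding):
    """Map a reference embedding to a style embedding and per-head token weights."""
    tokens = np.tanh(params["tokens"])                                          # (N, d)
    queries = np.einsum("r,hrd->hd", reference_embedding, params["w_query"])    # (H, d)
    keys = np.einsum("nd,hde->hne", tokens, params["w_key"])                    # (H, N, d)
    values = np.einsum("nd,hde->hne", tokens, params["w_value"])                # (H, N, d)
    scores = np.einsum("hd,hnd->hn", queries, keys) / np.sqrt(keys.shape[-1])
    weights = softmax(scores, axis=-1)            # each head: convex weights over tokens
    heads = np.einsum("hn,hnd->hd", weights, values)                            # (H, d)
    return heads.reshape(-1), weights             # concatenate heads -> style embedding

rng = np.random.default_rng(0)
params = init_params(rng)
reference_embedding = rng.normal(size=128)        # stand-in for the last GRU state
style_embedding, weights = style_token_layer(params, reference_embedding)
print(style_embedding.shape, weights.shape)       # (256,) (4, 10)
```

Because each head's weights come from a softmax, they are non-negative and sum to one, which is the convex-combination property the paragraph above relies on. Replacing `weights` with hand-chosen or sampled values would correspond to the control methods of Section 6.1.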
6.2.1. Parallel Style Transfer

Figure 4 shows spectrograms for a parallel transfer task, where the text to synthesize matches the text of the reference signal. The GST model spectrogram is at the bottom right (d), compared to three other baselines: (a) the ground-truth input signal (i.e. the reference); (b) inference performed by a baseline Tacotron model (which infers acoustics only from text); and (c) inference as performed by (Skerry-Ryan et al., 2018), a Tacotron system which conditions the text encoder directly on a 128-D reference embedding.

Figure 5. Robustness in non-parallel style transfer. Rows: (a) 10-token GST, (b) direct conditioning (128-D), (c) 256-token GST. Left to right: attention alignments obtained from feeding three references whose text lengths are 10, 96, 321 characters, respectively. The target text length is 258 characters.

We see that, given only text input, the baseline Tacotron model does not closely match the prosodic style of the reference signal. By contrast, the direct conditioning method of (Skerry-Ryan et al., 2018) results in nearly time-aligned fine prosody transfer. The GST model is somewhere in between: while its output duration and formant transitions don’t precisely match those of the reference, the overall spectrotemporal envelopes do. Perceptually, GSTs resemble the prosodic style of the reference.
Audio examples of parallel style transfer can be found here.

6.2.2. Non-Parallel Style Transfer

We next show results for a non-parallel transfer task, in which a TTS system must synthesize arbitrary text in the prosodic style of a reference signal. We chose three different reference signals for this task, and tested how well a GST model replicated each style when synthesizing the same target phrase. Since long-form synthesis can benefit significantly from proper stylistic rendering, we used a long (258-character) target phrase. We chose source phrases of varying lengths (10, 96, and 321 characters, respectively). Figure 5 shows alignment matrices for synthesis conditioned on each source signal.

The top row shows a 10-token GST model. This model robustly generalizes to all three conditioning inputs, as evidenced by the good alignment plots. The bottom row shows a 256-token GST model exhibiting the same behavior; we include this model to show that GSTs remain robust even when the number of tokens (256) is larger than the reference embedding dimensionality (128).

The middle row shows a model with direct reference embedding conditioning. The attention matrices show that this model fails when conditioned on the shorter source phrases, since it tries to squeeze its synthesis into the same time interval as that of the reference. While the model successfully aligns when conditioned on the longest input, intelligibility is poor for some words: the per-utterance embedding captures too much information (such as timing and phonetics) from the source, hurting generalization.

Table 1. SxS subjective preference (%) and p-values of GST audiobook synthesis against a Tacotron baseline. Each row shows GST inference conditioned on a different reference signal (A and B). p-values are given for both a 3-point and 7-point rating system.

| | Preference (%): Base | Preference (%): Neutral | Preference (%): GST | p-value (3-point) | p-value (7-point) |
|---|---|---|---|---|---|
| Signal A | 32.9 | 26.5 | 40.6 | p = 0.0552 | p = 0.0131 |
| Signal B | 33.1 | 21.9 | 45.0 | p = 0.0038 | p = 0.0003 |

Table 2. Robust MOS as a function of the percentage of interference in the training set. The total training set size is the same.

| Noise % | Baseline Tacotron | GST |
|---|---|---|
| 50% | 2.819 ± 0.269 | 4.080 ± 0.075 |
| 75% | 1.819 ± 0.227 | 3.993 ± 0.074 |
| 90% | 1.609 ± 0.131 | 4.031 ± 0.082 |
| 95% | 1.353 ± 0.090 | 3.997 ± 0.066 |

To evaluate the quality of this method at scale, we ran side-by-side subjective tests of non-parallel GST style transfer against a Tacotron baseline. We used an evaluation set of 60 audiobook sentences, including many long phrases. We generated two sets of GST output by conditioning the model on two different narrative-style reference signals, unseen during training. A side-by-side subjective test indicated that raters preferred both sets of GST synthesis against a Tacotron baseline, as shown in Table 1.

The performance of GSTs on non-parallel style transfer is significant, since it allows using a source signal to guide robust stylistic synthesis of arbitrary text.

Audio examples of non-parallel style transfer can be found here.

7. Experiments: Unlabeled Noisy Found Data

Studio-quality data can be both expensive and time-consuming to record. While the internet holds vast amounts of rich real-life expressive speech, it is often noisy and difficult to label. In this section, we demonstrate how GSTs can be used to train robust models directly from noisy found data, without modifications.

7.1. Artificial Noisy Data

As a first experiment, we artificially generate training sets by adding noise to clean speech. The motivation here is to simulate real noisy data while performing controlled experiments.
To achieve this, we pass the single-speaker US English proprietary dataset from (Wang et al., 2017a) into a room simulator (Kim et al., 2017), which adds varying types of background noise and room reverberations. The signal-to-noise ratio (SNR) ranges from 5 to 25 dB, and the T60 of room reverberation ranges from 100 to 900 ms. We create four different training sets where 50%, 75%, 90% and 95% of the input is noisified, respectively.

After training a GST-augmented Tacotron on these datasets, we run inference in the first mode described in Section 2.2. Instead of providing a reference signal, we condition the model on each individual style token, which gives us an interpretable, audible sense of what each token has learned. Interestingly, we find that different noises are treated as styles and “absorbed” into different tokens. We illustrate the spectrograms from a few tokens in Figure 6. We can see (and hear) that these tokens clearly correspond to different interference types, such as music, reverberation and general background noise. Importantly, this method reveals that a subset of the learned tokens also correspond to completely clean speech. This means that we can synthesize clean speech for arbitrary text input by conditioning the model on a single, clean style token.

Figure 6. Noisy and clean tokens uncovered: (a) “Music” token, (b) “Reverb.” token, (c) “Noise” token, (d) clean token.

To demonstrate this, we run inference using a manually-identified clean style token (scaled to 0.3), and then evaluate the output using MOS naturalness tests. We use the same 100-phrase evaluation set as (Wang et al., 2017a), collecting 8 ratings each from crowdsourced native speakers.
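The artificial training sets in this subsection are built by mixing interference into clean speech at an SNR between 5 and 25 dB. The sketch below shows only the additive-noise part of that idea; it is not the room simulator of (Kim et al., 2017) and ignores reverberation. The sine wave standing in for speech, the 16 kHz sample rate, and the helper names `mix_at_snr` and `measured_snr_db` are all assumptions made for illustration.

```python
import numpy as np

def mix_at_snr(clean, noise, snr_db):
    """Scale `noise` so the clean-to-noise power ratio equals `snr_db`, then add it."""
    noise = np.resize(noise, clean.shape)          # loop or trim noise to the clean length
    clean_power = np.mean(clean ** 2)
    noise_power = np.mean(noise ** 2)
    target_noise_power = clean_power / (10.0 ** (snr_db / 10.0))
    gain = np.sqrt(target_noise_power / noise_power)
    return clean + gain * noise

def measured_snr_db(clean, noisy):
    """SNR in dB of `noisy` relative to the known clean signal."""
    residual = noisy - clean
    return 10.0 * np.log10(np.mean(clean ** 2) / np.mean(residual ** 2))

rng = np.random.default_rng(0)
sample_rate = 16000                                # assumed sample rate
t = np.arange(sample_rate) / sample_rate
clean = 0.5 * np.sin(2.0 * np.pi * 220.0 * t)      # synthetic stand-in for speech
noise = rng.normal(size=sample_rate // 2)          # shorter than the clean signal
snr_db = rng.uniform(5.0, 25.0)                    # SNR range quoted in the text
noisy = mix_at_snr(clean, noise, snr_db)
print(round(snr_db, 2), round(measured_snr_db(clean, noisy), 2))
```

Applying such a function to a chosen fraction of utterances (50%, 75%, 90% or 95%) would give training sets with the noise proportions studied here, although the paper's simulator also varies noise type and room reverberation.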
Table 2 shows MOS results for both a baseline Tacotron and a “clean-token” GST model. While the baseline Tacotron achieves a 4.0 MOS when the dataset is 100% clean, MOS decreases as interference increases, dropping to a low score of 1.353. Because the model has no prior knowledge of speech or noise, it blindly models all statistics in the training set, resulting in substantial amounts of noise during synthesis.

By contrast, the GST model achieves about 4.0 MOS in all noise conditions. Note that the number of tokens needs to increase along with the percentage of noise to achieve this result. For example, a 10-token GST model yields clean tokens when trained on a 50% noise dataset, but the noisier datasets required a 20-token model. Future work may explore how to adapt the number of tokens automatically to a given data distribution.

Audio examples from these models can be found here.

Figure 7. Log-mel spectrograms (overlaid with F0 tracks) of two randomly chosen tokens, (a) Token A and (b) Token B, from a GST model trained on the TED data. The two tokens uncover two different speakers.

7.2. Real Multi-Speaker Found Data

Our second experiment uses real data. This dataset is made up of audio tracks mined from 439 official TED YouTube channel videos. The tracks contain significant acoustic variations, including channel variation (near- and far-field speech), noise (e.g. laughs), and reverberation. We use an endpointer to segment the audio tracks into short clips, followed by an ASR model to create <text, audio> training pairs. Despite the fact that the ASR model generates a significant number of transcription and misalignment errors, we perform no other preprocessing.
The final training set is about 68 hours long and contains about 439 speakers. Without using any metadata as labels, we train a baseline Tacotron and a 1024-token GST model for comparison. As expected, the baseline fails to learn, since the multi-speaker data is too varied. The GST model results are presented in Figure 7, which shows spectrograms for the same phrase overlaid with F0 tracks, generated by conditioning the model on two randomly chosen tokens. Examining the trained GSTs, we find that different tokens correspond to different speakers. This means that, to synthesize with a specific speaker's voice, we can simply feed audio from that speaker as a reference signal. See Section 7.3 for more quantitative evaluations.

Finally, we exploit the fact that most of the talks are in English, but a small fraction are in Spanish. For this experiment, we compare baseline and GST-enabled noisy data models on a cross-lingual style transfer task. For a baseline, we train a multi-speaker Tacotron similar to (Ping et al., 2017), using video IDs as a proxy for speaker labels. Conditioned on a Spanish speaker label, we then synthesize 100 English phrases. For the GST system, we feed a reference signal from the same Spanish speaker and synthesize the same 100 English phrases. While the Spanish accent from the speaker is not preserved, we find that the GST model produces completely intelligible English speech with a pitch range similar to that of the speaker. By contrast, the multi-speaker Tacotron output is much less intelligible.

Table 3. WER for the Spanish to English unsupervised language transfer experiment. Note that WER is an underestimate of the true intelligibility score; we only care about the relative differences.

| Model | WER (INS / DEL / SUB) |
| --- | --- |
| GST | 18.68 (6.13 / 2.37 / 10.18) |
| Multi-speaker | 56.18 (3.75 / 20.27 / 32.14) |
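Table 3 reports WER together with an insertion/deletion/substitution breakdown. As a minimal sketch of how such a breakdown can be obtained for a single reference/hypothesis pair, the following uses a word-level edit-distance alignment. The helper `wer_breakdown` is hypothetical and is not the scoring tool used for the paper; when several alignments have the same minimum cost, the split between error types depends on an arbitrary tie-break.

```python
def wer_breakdown(reference: str, hypothesis: str) -> dict:
    """Word error rate (in percent) with insertion/deletion/substitution rates.

    All rates are normalized by the number of reference words.
    """
    ref, hyp = reference.split(), hypothesis.split()

    # table[i][j] = (errors, insertions, deletions, substitutions)
    # for aligning the first i reference words with the first j hypothesis words.
    table = [[(0, 0, 0, 0)] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(1, len(ref) + 1):
        table[i][0] = (i, 0, i, 0)          # only deletions
    for j in range(1, len(hyp) + 1):
        table[0][j] = (j, j, 0, 0)          # only insertions

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            e, n_ins, n_del, n_sub = table[i - 1][j - 1]
            if ref[i - 1] == hyp[j - 1]:
                diagonal = (e, n_ins, n_del, n_sub)          # match, no cost
            else:
                diagonal = (e + 1, n_ins, n_del, n_sub + 1)  # substitution
            e, n_ins, n_del, n_sub = table[i][j - 1]
            insertion = (e + 1, n_ins + 1, n_del, n_sub)
            e, n_ins, n_del, n_sub = table[i - 1][j]
            deletion = (e + 1, n_ins, n_del + 1, n_sub)
            # Keep the cheapest path; ties are broken by argument order.
            table[i][j] = min(diagonal, insertion, deletion, key=lambda c: c[0])

    errors, n_ins, n_del, n_sub = table[-1][-1]
    n_ref = max(len(ref), 1)  # avoid division by zero for an empty reference
    return {
        "wer": 100.0 * errors / n_ref,
        "ins": 100.0 * n_ins / n_ref,
        "del": 100.0 * n_del / n_ref,
        "sub": 100.0 * n_sub / n_ref,
    }


if __name__ == "__main__":
    print(wer_breakdown("a b c d", "a x c"))
```

In a corpus-level evaluation the error counts would normally be summed over all utterances before dividing by the total number of reference words, rather than averaging per-utterance rates.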
To evaluate this result objectively, we compute word error rates (WER) of an English ASR model on the synthesized speech. As shown in Table 3, the WER of the GST utterances is much lower than that of the multi-speaker model. The results strongly corroborate that GSTs learn embeddings disentangled from text content. Though this is an exciting early result, an in-depth study of using GST for prosody-preserving language transfer is in order.

7.3. Quantitative Evaluations

We use t-SNE (Maaten & Hinton, 2008) to visualize the style embeddings learned from both the artificial noise and TED datasets. Figure 8(a) shows that the embeddings learned from the artificial noisy dataset (50% clean) are clearly separated into two classes. Figure 8(b) shows style embeddings for 2,000 randomly drawn samples containing 14 TED talk data speakers. We see that samples are well separated into 14 clusters, each corresponding to an individual speaker. Female and male speakers are linearly separable.

We also use style embeddings as features to perform noise and speaker classification with linear discriminant analysis (LDA). Results are shown in Table 4. For noise classification, GSTs uncover the true label with 99.2% accuracy. For speaker classification, we use TED video IDs as true labels and compare with the i-vector method (Dehak et al., 2011), a standard representation used in modern speaker verification systems. For this task, the test set contains 431 speakers. Although both are trained and tested on short utterances (mean duration 3.75 s), we can see that GSTs are comparable with i-vectors. This is an encouraging result, given that i-vectors were specifically designed for speaker classification. We speculate that GST has the potential to be applied to speaker diarization.

[Figure 8 panels: (a) 50% noisy data (Clean, Noise); (b) Multi-speaker TED (Female, Male).]

Figure 8.
Style embedding visualization using t-SNE.

Table 4. Classification accuracy (noise-vs-clean and TED speaker ID) using GSTs and i-vectors. Despite being trained within a generative model, GSTs encode rich discriminative information.

| Embedding | Artificial data | TED (431 speakers) |
| --- | --- | --- |
| GST | 99.2% | 75.0% |
| i-vector | / | 73.4% |

7.4. Implications

The results above have important implications for future TTS research on found data. First, due to the robustness of GSTs to both acoustic and textual noise, the design of automated data mining pipelines may be greatly simplified. Accurate segmentation and ASR models, for example, are no longer necessary to build high-quality TTS models. Second, style attributes, such as emotion, are often very difficult to label for large-scale noisy data. Using GSTs or their weights to automatically generate style annotations may substantially reduce the human-in-the-loop effort.

8. Conclusions and Discussions

This work has introduced Global Style Tokens, a powerful method for modeling style in end-to-end TTS systems. GSTs are intuitive, easy to implement, and learn without explicit labels. We have shown that, when trained on expressive speech data, a GST model yields interpretable embeddings that can be used to control and transfer style. We have also demonstrated that, while originally conceived to model speaking styles, GSTs are a general technique for uncovering latent variations in data. This was corroborated by experiments on unlabeled noisy found data, which showed that the GST model learns to decompose various noise and speaker factors into separate style tokens.

There is still much to be investigated, including improving the learning of GST, and using GST weights as targets to predict from text. Finally, while we only applied GST to Tacotron in this work, we believe it can be readily used by other types of end-to-end TTS models.
More generally, we envision that GST can be applied to other problem domains that benefit from interpretability, controllability and robustness. For example, GST may be similarly employed in text-to-image and neural machine translation models.

Acknowledgements

The authors thank Aren Jansen, Rob Clark, Zhifeng Chen, Ron J. Weiss, Mike Schuster, Yonghui Wu, Patrick Nguyen, and the Machine Hearing, Google Brain and Google TTS teams for their helpful discussions and feedback.

References

Arik, Sercan O, Chrzanowski, Mike, Coates, Adam, Diamos, Gregory, Gibiansky, Andrew, Kang, Yongguo, Li, Xian, Miller, John, Raiman, Jonathan, Sengupta, Shubho, et al. Deep voice: Real-time neural text-to-speech. ICML, 2017.

Dehak, Najim, Kenny, Patrick J, Dehak, Réda, Dumouchel, Pierre, and Ouellet, Pierre. Front-end factor analysis for speaker verification. IEEE Transactions on Audio, Speech, and Language Processing, 19(4):788–798, 2011.

Eyben, Florian, Buchholz, Sabine, and Braunschweiler, Norbert. Unsupervised clustering of emotion and voice styles for expressive tts. In ICASSP, pp. 4009–4012. IEEE, 2012.

Graves, Alex, Wayne, Greg, and Danihelka, Ivo. Neural turing machines. arXiv preprint, 2014.

Griffin, Daniel and Lim, Jae. Signal estimation from modified short-time fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing, 32(2):236–243, 1984.

Hsu, Wei-Ning, Zhang, Yu, and Glass, James. Unsupervised learning of disentangled and interpretable representations from sequential data. In Advances in Neural Information Processing Systems, 2017.

Jauk, Igor. Unsupervised learning for expressive speech synthesis. PhD thesis, Universitat Politècnica de Catalunya, 2017.

Kim, Chanwoo, Misra, Ananya, Chin, Kean, Hughes, Thad, Narayanan, Arun, Sainath, Tara, and Bacchiani, Michiel.
Generation of large-scale simulated utterances in virtual rooms to train deep-neural networks for far-field speech recognition in google home. Proc. INTERSPEECH. ISCA, 2017.

Kinnunen, Tomi, Juvela, Lauri, Alku, Paavo, and Yamagishi, Junichi. Non-parallel voice conversion using i-vector plda: Towards unifying speaker verification and transformation. In ICASSP, 2017.

Krueger, David, Maharaj, Tegan, Kramár, János, Pezeshki, Mohammad, Ballas, Nicolas, Ke, Nan Rosemary, Goyal, Anirudh, Bengio, Yoshua, Larochelle, Hugo, Courville, Aaron, et al. Zoneout: Regularizing RNNs by randomly preserving hidden activations. In Proc. ICLR, 2017.

Luong, Hieu-Thi, Takaki, Shinji, Henter, Gustav Eje, and Yamagishi, Junichi. Adapting and controlling dnn-based speech synthesis using input codes. In ICASSP, pp. 4905–4909. IEEE, 2017.

Maaten, Laurens van der and Hinton, Geoffrey. Visualizing data using t-sne. Journal of machine learning research, 9(Nov):2579–2605, 2008.

Nakashika, Toru, Takiguchi, Tetsuya, and Minami, Yasuhiro. Non-parallel training in voice conversion using an adaptive restricted boltzmann machine. IEEE/ACM Trans. Audio, Speech and Lang. Proc., 24(11):2032–2045, November 2016.

Ping, Wei, Peng, Kainan, Gibiansky, Andrew, Arik, Sercan O, Kannan, Ajay, Narang, Sharan, Raiman, Jonathan, and Miller, John. Deep voice 3: 2000-speaker neural text-to-speech. arXiv preprint arXiv:1710.07654, 2017.

Rosenberg, Andrew. AuToBI-a tool for automatic ToBI annotation. In Interspeech, pp. 146–149, 2010. URL http://eniac.cs.qc.cuny.edu/andrew/autobi/.

Shen, Jonathan, Pang, Ruoming, Weiss, Ron J, Schuster, Mike, Jaitly, Navdeep, Yang, Zongheng, Chen, Zhifeng, Zhang, Yu, Wang, Yuxuan, Skerry-Ryan, RJ, et al. Natural tts synthesis by conditioning wavenet on mel spectrogram predictions. arXiv preprint arXiv:1712.05884, 2017.
Silverman, Kim, Beckman, Mary, Pitrelli, John, Ostendorf, Mori, Wightman, Colin, Price, Patti, Pierrehumbert, Janet, and Hirschberg, Julia. ToBI: A standard for labeling english prosody. In Second International Conference on Spoken Language Processing, 1992.

Skerry-Ryan, RJ, Battenberg, Eric, Xiao, Ying, Wang, Yuxuan, Stanton, Daisy, Shor, Joel, Weiss, Ron J., Clark, Rob, and Saurous, Rif A. Towards end-to-end prosody transfer for expressive speech synthesis with Tacotron. arXiv preprint, 2018.

Taigman, Yaniv, Wolf, Lior, Polyak, Adam, and Nachmani, Eliya. Voice synthesis for in-the-wild speakers via a phonological loop. arXiv preprint arXiv:1707.06588, 2017.

Taylor, Paul. Text-to-speech synthesis. Cambridge university press, 2009.

Theis, Lucas, Oord, Aäron van den, and Bethge, Matthias. A note on the evaluation of generative models. arXiv preprint arXiv:1511.01844, 2015.

van den Oord, Aäron, Dieleman, Sander, Zen, Heiga, Simonyan, Karen, Vinyals, Oriol, Graves, Alex, Kalchbrenner, Nal, Senior, Andrew, and Kavukcuoglu, Koray. Wavenet: A generative model for raw audio. CoRR abs/1609.03499, 2016.

van den Oord, Aaron, Vinyals, Oriol, et al. Neural discrete representation learning. In Advances in Neural Information Processing Systems, pp. 6309–6318, 2017.

Vaswani, Ashish, Shazeer, Noam, Parmar, Niki, Uszkoreit, Jakob, Jones, Llion, Gomez, Aidan N, Kaiser, Łukasz, and Polosukhin, Illia. Attention is all you need. In Advances in Neural Information Processing Systems, pp. 6000–6010, 2017.

Wang, Yuxuan, Skerry-Ryan, RJ, Stanton, Daisy, Wu, Yonghui, Weiss, Ron J., Jaitly, Navdeep, Yang, Zongheng, Xiao, Ying, Chen, Zhifeng, Bengio, Samy, Le, Quoc, Agiomyrgiannakis, Yannis, Clark, Rob, and Saurous, Rif A. Tacotron: Towards end-to-end speech synthesis. In Proc. Interspeech, pp. 4006–4010, August 2017a. URL .
Wang, Yuxuan, Skerry-Ryan, RJ, Xiao, Ying, Stanton, Daisy, Shor, Joel, Battenberg, Eric, Clark, Rob, and Saurous, Rif A. Uncovering latent style factors for expressive speech synthesis. ML4Audio Workshop, NIPS, 2017b.

Wightman, Colin W. Tobi or not tobi? In Speech Prosody 2002, International Conference, 2002.

Wu, Zhizheng, Chng, Eng Siong, and Li, Haizhou. Conditional restricted boltzmann machine for voice conversion. In ChinaSIP, 2013.

Zen, Heiga, Agiomyrgiannakis, Yannis, Egberts, Niels, Henderson, Fergus, and Szczepaniak, Przemysław. Fast, compact, and high quality LSTM-RNN based statistical parametric speech synthesizers for mobile devices. Proceedings Interspeech, 2016.
