Fastest Rates for Stochastic Mirror Descent Methods

Relative smoothness, a notion introduced by Birnbaum et al. (2011) and rediscovered by Bauschke et al. (2016) and Lu et al. (2016), generalizes the standard notion of smoothness typically used in the analysis of gradient-type methods. In this work we take ideas from the well-studied field of stochastic convex optimization and use them to obtain faster algorithms for minimizing relatively smooth functions. We propose and analyze two new algorithms: Relative Randomized Coordinate Descent (relRCD) and Relative Stochastic Gradient Descent (relSGD), both generalizing famous algorithms in the standard smooth setting. The methods we propose can in fact be seen as particular instances of stochastic mirror descent algorithms. One of them, relRCD, is the first stochastic variant of the mirror descent algorithm with a linear convergence rate.

💡 Research Summary

The paper “Fastest Rates for Stochastic Mirror Descent Methods” investigates how to accelerate first‑order optimization for convex problems that are not smooth in the classical Lipschitz‑gradient sense, but satisfy a more general notion called relative smoothness. Relative smoothness, introduced by Birnbaum et al. (2011) and later rediscovered by Bauschke et al. (2016) and Lu et al. (2016), replaces the Euclidean quadratic upper bound with a Bregman‑type bound based on a reference function h. Formally, a convex differentiable function f is L‑smooth relative to h if for all x, y in the feasible set Q,

f(x) ≤ f(y) + ⟨∇f(y), x‑y⟩ + L D_h(x, y),

where D_h(x, y) = h(x) - h(y) - ⟨∇h(y), x-y⟩ is the Bregman distance generated by h. When h(x)=½‖x‖², D_h(x, y)=½‖x-y‖² and the condition reduces to standard L‑smoothness. The authors also use the companion concept of relative strong convexity: f is μ‑strongly convex relative to h if

f(y) ≥ f(x) + ⟨∇f(x), y‑x⟩ + μ D_h(y, x).
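To make the two definitions above concrete, the following is a minimal sketch of the Bregman distance D_h for two reference functions. The function names and test vectors are illustrative assumptions, not taken from the paper. It checks numerically that the Euclidean choice of h recovers half the squared distance and that negative entropy recovers the Kullback-Leibler divergence on probability vectors.

```python
import math

def bregman_distance(h, grad_h, x, y):
    """D_h(x, y) = h(x) - h(y) - <grad h(y), x - y>."""
    inner = sum(g * (xi - yi) for g, xi, yi in zip(grad_h(y), x, y))
    return h(x) - h(y) - inner

# Euclidean reference function h(x) = 0.5 * ||x||^2.
def h_euclid(x):
    return 0.5 * sum(xi * xi for xi in x)

def grad_h_euclid(x):
    return list(x)

# Negative entropy h(x) = sum_i x_i log x_i (requires x_i > 0).
def h_entropy(x):
    return sum(xi * math.log(xi) for xi in x)

def grad_h_entropy(x):
    return [math.log(xi) + 1.0 for xi in x]

# Illustrative points; both sum to 1 so the entropy case equals KL(x || y).
x = [0.2, 0.5, 0.3]
y = [0.4, 0.4, 0.2]

d_euclid = bregman_distance(h_euclid, grad_h_euclid, x, y)
d_entropy = bregman_distance(h_entropy, grad_h_entropy, x, y)

half_sq_dist = 0.5 * sum((xi - yi) ** 2 for xi, yi in zip(x, y))
kl = sum(xi * math.log(xi / yi) for xi, yi in zip(x, y))
```

Here `d_euclid` agrees with `half_sq_dist` and `d_entropy` agrees with `kl` up to floating-point error, which is why the relative conditions reduce to the classical ones for the Euclidean h.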

The paper’s main contribution is the design and analysis of two stochastic algorithms that operate under these relative conditions:

- Relative Randomized Coordinate Descent (relRCD).

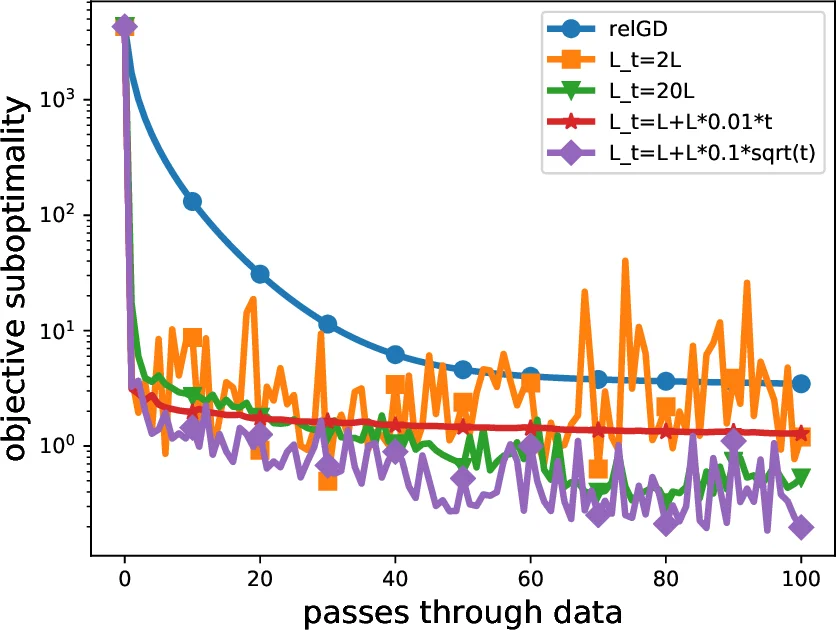

At each iteration a random subset S_t of τ coordinates is selected (τ may be 1 or larger). The algorithm updates only those coordinates by solving a Bregman‑proximal subproblem that mirrors the deterministic relative gradient descent (relGD) step. The analysis relies on an Expected Separable Overapproximation (ESO) inequality, which provides a bound on the expected Bregman distance decrease in terms of per‑coordinate parameters v(i) (≤ L). With a stepsize L_t chosen according to the ESO bound, the authors prove a linear convergence rate under relative strong convexity:

E
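The coordinate update described above can be illustrated with a small sketch. This is not the paper's exact relRCD: it assumes a single coordinate per iteration (τ = 1), a separable reference function h(x) = Σ h_i(x_i), and a constant stepsize parameter L rather than the ESO-based L_t. Under these assumptions the one-dimensional Bregman-proximal subproblem min_z g_i z + L D_{h_i}(z, x_i) has the closed-form solution h_i'(z) = h_i'(x_i) - g_i / L. The function name `coordinate_bregman_step` and the toy quadratic are hypothetical.

```python
import math
import random

def coordinate_bregman_step(x, grad, i, L, kind="euclid"):
    """Update coordinate i only, solving min_z grad[i]*z + L*D_{h_i}(z, x[i])."""
    x_new = list(x)
    if kind == "euclid":
        # h_i(z) = z^2 / 2, so h_i'(z) = z: a plain coordinate gradient step.
        x_new[i] = x[i] - grad[i] / L
    elif kind == "entropy":
        # h_i(z) = z log z - z, so h_i'(z) = log z: a multiplicative update.
        x_new[i] = x[i] * math.exp(-grad[i] / L)
    else:
        raise ValueError(f"unknown reference function: {kind}")
    return x_new

# Toy separable quadratic f(x) = 0.5 * sum_i a_i (x_i - b_i)^2 (assumed example).
a = [1.0, 2.0, 4.0]
b = [1.0, -2.0, 3.0]

def f(x):
    return 0.5 * sum(ai * (xi - bi) ** 2 for ai, xi, bi in zip(a, x, b))

def grad_f(x):
    return [ai * (xi - bi) for ai, xi, bi in zip(a, x, b)]

rng = random.Random(0)       # fixed seed for reproducibility
x = [0.0, 0.0, 0.0]
f_start = f(x)
L = max(a)                   # f is L-smooth relative to the Euclidean h
for _ in range(300):
    i = rng.randrange(len(x))            # sample one coordinate uniformly
    x = coordinate_bregman_step(x, grad_f(x), i, L)
f_final = f(x)
```

With the Euclidean h, each step contracts the error in the sampled coordinate by the factor 1 - a_i / L, so `f_final` is never larger than `f_start`. The entropy branch shows how a different h changes the update into a multiplicative one that keeps iterates positive.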
