Water Residence Time Estimation by 1D Deconvolution in the Form of a l2-Regularized Inverse Problem With Smoothness, Positivity and Causality Constraints

The Water Residence Time distribution is the equivalent of the impulse response of a linear system allowing the propagation of water through a medium, e.g. the propagation of rain water from the top of the mountain towards the aquifers. We consider t…

Authors: Alina G. Meresescu, Matthieu Kowalski, Frederic Schmidt

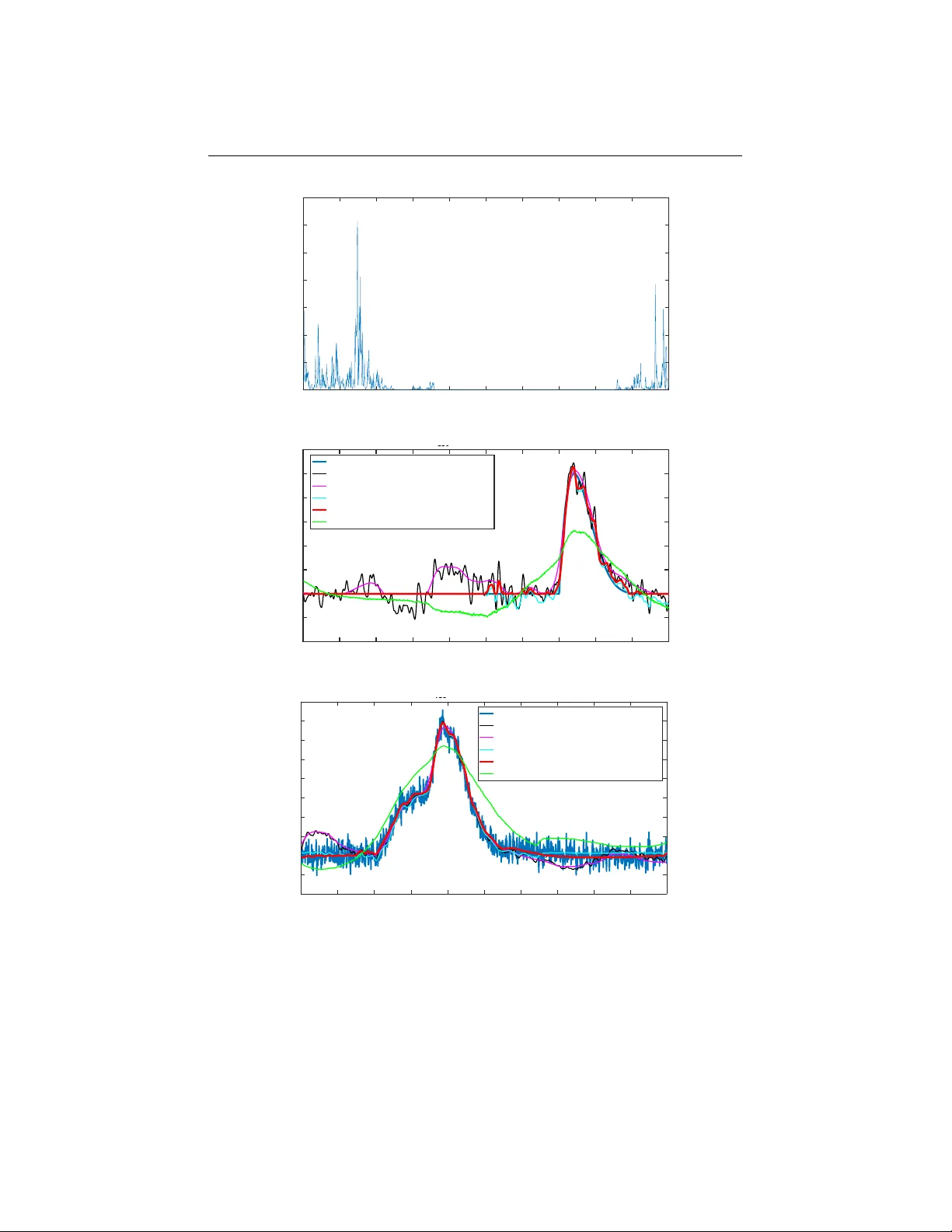

W ater Residence T ime Estimation by 1D Decon v olution in the Form of a l 2 -Regularized In verse Problem W ith Smoothness, Positi vity and Causality Constraints Alina G. Meresescu 1,2 , Matthieu K owalski 2 , Fr ´ ed ´ eric Schmidt 1 , Franc ¸ ois Landais 1 Abstract The W ater Residence T ime distribution is the equi valent of the impulse response of a linear system allo wing the propagation of water through a medium, e.g. the propaga- tion of rain water from the top of the mountain tow ards the aquifers. W e consider the output aquifer le vels as the con volution between the input rain le vels and the W ater Residence T ime, starting with an initial aquifer base lev el. The estimation of W ater Residence T ime is important for a better understanding of hydro-bio-geochemical pro- cesses and mixing properties of wetlands used as filters in ecological applications, as well as protecting fresh water sources for wells from pollutants. Common methods of estimating the W ater Residence Time focus on cross-correlation, parameter fitting and non-parametric decon volution methods. Here we propose a 1D full-decon volution, regularized, non-parametric in verse problem algorithm that enforces smoothness and uses constraints of causality and positivity to estimate the W ater Residence T ime curv e. Compared to Bayesian non-parametric decon volution approaches, it has a f ast runtime per test case; compared to the popular and fast cross-correlation method, it produces a more precise W ater Residence T ime curve ev en in the case of noisy measurements. The algorithm needs only one re gularization parameter to balance between smoothness of the W ater Residence T ime and accuracy of the reconstruction. W e propose an ap- proach on how to automatically find a suitable value of the regularization parameter from the input data only . T ests on real data illustrate the potential of this method to analyze hydrological datasets. K eywor ds: Hydrology , W ater Residence T ime, 1D deconv olution, transit time, catchment ∗ Corresponding author Email addr ess: alina-georgiana.meresescu@u-psud.fr (Alina G. Meresescu) 1 GEOPS, Univ . Paris-Sud, CNRS, Univ ersite Paris-Saclay , Rue du Belvedere, Bat. 504-509, 91405 Orsay , France 2 L2S, Univ . Paris-Sud, Supelec, CNRS, Uni versite Paris-Saclay , 3 rue Joliot Curie, 91192 Gif-sur -Yvette, France Pr eprint submitted to Computers and Geosciences September 3, 2018 1 INTR ODUCTION 1. Introduction The hydrological W ater Residence T ime distribution (named in this article simply as residence time) is a measure allo wing the analysis of the transit of water through a giv en medium. Its estimation is necessary when using wetlands as a natural treatment plant for pollutants that are already in the water W erner & Kadlec (2000), to better manage and protect drinking water sources from pollution Cirpka et al. (2007), to study the water transport of dissolved nutrients Gooseff et al. (2011). F or a more compre- hensiv e application range, including deciphering hydro-bio-geochemical processes or riv er monitoring, the re view done in McGuire & McDonnell (2006) is a useful starting point. W e call here the residence time the linear response of the aquifer system. In this context it refers to wa ve propagation of the water dynamics, not to the actual molecular trav el time Botter et al. (2011). T o obtain the residence time, one can distinguish two families of methods: active and passive. The active methods are carried out by releasing tracers at the entrance of the system at a gi ven time, like artificial dyes, and then by tracing the curve while mea- suring the tracer levels at the exit of the system Dziko wski & Delay (1992); W erner & Kadlec (2000); Payn et al. (2008); Robinson et al. (2010). Although robust, this methodology inv olves high effort and high operational costs. It could also perturb the water channel and this may lead to biased results. The passiv e methodology consists of recording data at the inlet and outlet of the water channel by specific water iso- topes McGuire & McDonnell (2006), water electrical conducti vity Cirpka et al. (2007) or by simply recording the rainfall le vels at high altitude grounds and the aquifer levels at the base Delbart et al. (2014). In the passi ve case, the residence time is not measured directly but must be retriev ed by decon volution. Some authors also use decon volution in the active methodology when the release of tracer cannot be considered as instan- taneous McGuire & McDonnell (2006); Cirpka et al. (2007); Payn et al. (2008). The residence time can then be approximated as the impulse response of the system and this in turn can be estimated by deconv olution Neuman et al. (1982); Skaggs et al. (1998); Fienen et al. (2006). The method can also be used for enhancing geophysical models, although not targeted explicitly for W ater Residence Time estimation Zuo & Hu (2012). Decon volution methods can be parametric Neuman & De Marsily (1976); Long & De- rickson (1999); Etchev erry & Perrochet (2000); W erner & Kadlec (2000); Luo et al. (2006); McGuire & McDonnell (2006) or non-parametric Neuman et al. (1982); Di- etrich & Chapman (1993); Skaggs et al. (1998); Michalak & Kitanidis (2003); Cirpka et al. (2007); Fienen et al. (2008); Gooseff et al. (2011); Delbart et al. (2014). Parametric methodology has the adv antage of always providing a result with ex- pected properties such as correct shape and positiveness but with the cav eat of being insensitiv e to unexpected results for real data (for instance a second peak in the res- idence time). The non-parametric decon volution has the advantage of being ”blind”, meaning that no strong a priori are being set on the estimated curve, but in the ab- sence of adapted mathematical constraints, the results may not reflect the physics of the residence time curve (these are sometimes ne gative or non-causal). Our method is non-parametric and takes into account limitations of pre vious meth- ods from the same category: variable-sized rainfall time series as input compared to Neuman et al. (1982), a more compact direct model formulation than in Neuman c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 2 1 INTR ODUCTION et al. (1982); Cirpka et al. (2007), less computational ef fort and less time consuming than for a Bayesian Monte-Carlo inv erse problem methodology Fienen et al. (2006, 2008), strictly using a passive method with respect to mixed methods like the ones in Gooseff et al. (2011). In contrast to the cross-correlation V ogt et al. (2010); Delbart et al. (2014) we av oid the unrealistic hypothesis that the rain signal can be considered as white noise. In fact, rainfall datasets hav e long range memory properties and therefore we simulate the input rainfall for synthetic tests as a multifractal signal T essier et al. (1996). One important dif ference from other non-parametric deconv olution methods is that we enforce causality e xplicitly through projection. W e also discuss the im- portance of this aspect to av oid a sub-optimal solution when using a Fourier Domain based conv olution McCormick (1969). In Neuman et al. (1982); Dietrich & Chapman (1993); Delbart et al. (2014) the causality constraint was not mentioned. In Skaggs et al. (1998); Cirpka et al. (2007); Payn et al. (2008); Goosef f et al. (2011), causality is taken into account through a carefully constructed T oeplitz matrix for the conv olution operation. W e propose a new algorithm to estimate the residence time with the following properties: • passiv e: only input rainfall and output aquifer le vels are required; • flexible: in the sense that it handles ev en une xpected solutions (double peaks or unexpected shapes of the residence time). It can handle Dirac-like rain events as inputs but also clustered rain ev ents over a longer time period (for instance a whole season); • constrained: by physical and mathematical aspects of the residence time (posi- tivity , smoothness and causality); • automatic: providing a simple and accurate way of choosing the best hyper- parameter that governs the smoothness of the residence time curve, without hu- man operation; • efficient/accurate: a fast algorithm that provides a good signal-to-noise ratio (SNR), av oiding noise amplification. This last property is important in order to deal with non-linearity and non-stationarity of the water channel, a known difficulty in residence time estimation Neuman & De Marsily (1976); Massei et al. (2006); McGuire & McDonnell (2006); Payn et al. (2008) The rest of this article is organized as follows: Section 2 presents the direct prob- lem and the in verse problem formulation, Section 3 depicts the algorithm used to solv e this inv erse problem formulation. Some important implementation details are dis- cussed in Section 4. W e also discuss differences between our solution and previous non-parametric 1D deconv olution methods used as benchmarks in Section 5. In Sec- tion 6 we present results obtained from synthetic data and we discuss the choice of the hyper-parameter that controls the smoothness of the residence time. Finally , we present results obtained from real data in Section 7, while Section 8 concludes the paper . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 3 2 MODEL 2. Model 2.1. Dir ect Problem The direct model for water propagation through a channel can be written as a linear system Neuman et al. (1982): y = 1 c + x ∗ k + n , (1) with: • y ∈ R + T , y = ( y 0 , ..., y T ) output of the linear system: aquifer basin level (known), real, positiv e signal, of length T , • 1 vector of all ones of length T , • c ≥ 0 initial aquifer basin level (to estimate), real, positi ve, constant • x ∈ R + T , x = ( x 0 , ..., x T ) input of the linear system: rainfall lev el (kno wn), real, positiv e signal, of length T • ∗ con volution • k ∈ R + K , k = ( k − K 2 , ... k 0 , k 1 , ... k K 2 ) impulse response to be estimated, real, posi- tiv e signal, of length K • n ∈ R T white gaussian noise, real, signal of length T . The impulse response of the system – k – as well as the mean le vel of the aquifer – c – must be estimated. It is required that k be positiv e, causal, and smooth. If positivity is obvious for the residence time, causality refers to the delayed, unidirectional flo w of water from the point of entry to the aquifer , thus the idea that k must progress only in the positi ve time domain (negati ve time domain elements of k are zero). Smoothness regularization is used in order to a void noise amplification in the decon volution. 2.2. In verse Pr oblem T o estimate k , we propose to solve the follo wing constrained optimization problem: ˆ k , ˆ c = argmin k ∈ R K + , c 1 2 k y − x ∗ k − c 1 k 2 2 + λ k ∇ k k 2 2 (2) s . t . causality is enforced: ∀ i ∈ {− K / 2 , . . . , − 1 } k i = 0 This function classically introduces a ”fidelity term” (attachment to the data) corre- sponding to the white Gaussian noise, as well as a ` 2 ”regularization term” on the gra- dient of k in order to fav or ”smooth” solutions. The smoothness de gree of the estimate is controlled by the hyper -parameter λ . A bigger λ will stress more the smoothness of the solution, while a smaller λ will better fit the solution to the data. A main goal of this work is also to find the optimal λ range that consistently gi ves accurate estimates c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 4 3 AL TERNA TING MINIMIZA TION FOR 1D DECONVOLUTION while taking into account both good data representation and smoothness a priori . In the following, we re write the functional (2) using matrix operators: J ( k , c ) = 1 2 k y − Xk − c 1 k 2 2 + λ k Dk k 2 2 (3) s . t . ∀ i ∈ {− K / 2 , . . . , − 1 } k i = 0 and ∀ i k i ≥ 0 where X is the T oeplitz matrix corresponding to the con volution by the signal x , while D is the finite-difference matrix corresponding to the gradient used for applying smoothness on the estimated signal. The minimization of J ( k , c ) can be interpreted as a Maximum A Posteriori (MAP) estimation in a Bayesian context with a Gaussian prior on the noise and an exponential family on the smoothness. Since the problem is conv ex, we estimate k and c by an Alternating Minimization algorithm (shortened throughout as AM), that ensures a global minimization for the two items to be estimated. A historical overvie w is av ailable from OSulliv an (1998). W ith a fixed c , the problem is a simple quadratic optimization with constraints that is solved using the Projected Newton Method Bertsekas (1982), chosen for computational speed. With a fix ed k , the estimate of c is giv en by an analytic formula. The AM algorithm will ev aluate k to conv ergence while applying an orthogonal projection P on the positi vity and causality constraints in each iteration. The analytic solution for k is computed and used as an initial step for the iterati ve AM algorithm. 3. Alternating Minimization f or 1D Deconv olution After replacing the con volution operator with the equiv alent T oeplitz matrix X , we introduce the functional J ( k , c ) to minimize: J ( k , c ) = P 1 2 || y − X · k − c 1 || 2 2 + λ || Dk || 2 2 , (4) where P ( k ) is the orthogonal projection ov er the constraints, ∀ t k t = 0 if k t < 0 or if t < 0. Considering that both k and c must be estimated, we propose an AM algorithm where in a first step k est is estimated, then in the second step c est is updated. 3.1. Estimation of k est with the Pr ojected Newton Method The update of k est by the Projected Newton Method with c fixed is gi ven by: k t + 1 = P k t + α t · ( − ∇ 2 J ( k , c ) − 1 · ∇ J ( k , c )) = P ( 1 − α t ) k t + α t · ( X T X + λ D T D ) − 1 · X T ( y − c 1 ) , (5) where α t > 0 is the descent step size. For k = k − K / 2 , . . . , k 0 , k K / 2 , we have P ( k ) = 0 , . . . , 0 , ( k 0 ) + , . . . , ( k K / 2 ) + , where ( x ) + = max ( 0 , x ) . By replacing the Hessian and the Jacobian of (3) in (5), we see that only the step size α t can ev olve at each iteration, while k t is changed by a constant called Newton’ s step. k t + 1 = ( 1 − α t ) k t + α t · ( X T X + λ D T D ) − 1 · X T ˜ y k t + 1 = ( 1 − α t ) k t + α t · M t n , (6) c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 5 3.2 Estimation of c 3 AL TERNA TING MINIMIZA TION FOR 1D DECONVOLUTION where α t is the variable step size, M t n = ( X T X + λ D T D ) − 1 · X T ˜ y is Ne wton’ s step. 3.2. Estimation of c T aking the deri vati ve of (3) with respect to c 1 leads to: ∇ J ( k , c ) = − y + 1 T Xk + c 1 ! = 0 (7) W ith k fixed, the estimation of c is gi ven by: c 1 = y − 1 T Xk , (8) where ¯ m is the empirical mean of vector m . The AM algorithm for estimating k and c is summarized in Alg. 1. Algorithm 1 Alternating Minimization Input: x , y , λ , D , α min , k er r min , y err min , s max , t max Output: k est , c est , y rec 1: c est = y , ˆ y = y − c est 2: M t n = ( X T X + λ D T D ) − 1 · X T ˆ y , k est = M t n 3: k err rel = 1 , y err rel = 1 , s = 0 , t = 0, J re f = 1 2 || ˆ y || 2 , y rec = 1 4: while s != s max and y er r rel > y er r min do 5: α = 1, s = s + 1 6: k est ol d = k est , y rec ol d = y rec , ˆ y = y − c est 7: while t != t max and k err rel > k err min and α > α min do 8: t = t + 1 9: k est = P (( 1 − α ) k est ol d + α M t n ) 10: J ( k ) t + 1 = 1 2 || ˆ y − x ∗ k est || 2 2 + λ || D k est || 2 2 11: if ( J ( k ) t + 1 > J re f ) then 12: k est ol d = k est , α = 0 . 9 · α 13: else 14: J re f = J ( k ) t + 1 , t = 0 15: break; 16: end if 17: k er r rel = || k est − k est ol d || 2 2 || k est || 2 2 18: end while 19: ˜ y rec = x ∗ k est 20: c est = y − ˜ y rec 21: y rec = ˜ y rec + c est , y er r rel = || y rec − y rec ol d || 2 2 || y rec || 2 2 22: end while 23: return k est , y rec , c est c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 6 4 IMPLEMENT A TION DET AILS 4. Implementation Details W e provide a distribution package in Matlab for our algorithm and the download link can be found at the end of this article. Although in the pre vious sections the model and the solution are written in matrix form, the Matlab implementation of the con volution for our AM algorithm is done through dot product multiplication in the Fourier Domain with appropriate zero padding, meaning that no T oeplitz matrix is explicitly defined here for the con volution. It is also possible to carefully implement a causal con volution by designing a proper T oeplitz matrix. Ho wever , the con volution in the Fourier Domain appears to be more ef ficient in general. This implementation also allows for the estimation of a k residence time longer than the inputs x and y , although this w ould be under -determined. Once that non-circularity is enforced through this particular implementation of the conv olution, another aspect that is dealt with is the causality constraint. In Figure 1, we present the con volution of two rainfall Diracs with a residence time curve. W e con volve the rainfall time series once with a residence time curve found in the negati ve time domain (causality is not respected) and once when this curve is in the positiv e time domain (causality is respected). The resulting breakthrough curve appears before the rain ev ents in the first case which is wrong. In the second case the breakthrough curv e appears after these rainfall e vents as expected for real applications. In the non-causal case lobes can appear in the ne gative time domain also, incorporating energy that should be present in the residence time curve thus reducing its amplitude and distorting its shape. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 7 4 IMPLEMENT A TION DET AILS 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 Rainfall [mm] Rainfall Measurements, x -500 -400 -300 -200 -100 0 100 200 300 400 500 time [hours] 0 0.5 1 WRT [1] Water Residence Time, Non Causal k 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 10 Basin [mm] Basin Measurements, y (a) 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 Rainfall [mm] Rainfall Measurements, x -500 -400 -300 -200 -100 0 100 200 300 400 500 time [hours] 0 0.5 1 WRT [1] Water Residence Time, Causal k 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 10 Basin [mm] Basin Measurements, y (b) Figure 1: Enforcing causality while doing the conv olution in the Fourier Domain needs to include the nega- tiv e time domain interval of the residence time. In Figure 2 we estimate with the AM algorithm all the possible residence time curves: with no positi vity and no causality constraints applied, only the positivity con- straint applied, only the causality constraint applied, and both positivity and causality constraints applied. In all cases, the conv olution between the rainfall and these resi- dence time curves giv e a reconstructed breakthrough curve that is similar in general shape with the real one. The best residence time estimation and breakthrough curve reconstruction are nonetheless the ones where both positivity and causality constraints are applied in the algorithm. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 8 4 IMPLEMENT A TION DET AILS 0 100 200 300 400 500 600 700 800 900 1000 t [hours] 0 0.5 1 1.5 2 2.5 3 3.5 Rainfall [mm] x (a) -500 -400 -300 -200 -100 0 100 200 300 400 500 t [hours] -0.4 -0.2 0 0.2 0.4 0.6 0.8 1 1.2 WRT [1/t] k true, k est s for an input SNR of 15 dB true No Constraints, SNR = 8.31 dB Just Positivity, SNR = 9.51 dB Just Causality, SNR = 16.3 dB Positivity and Causality, SNR = 19.2 dB XCORR, SNR = 4.72 dB (b) 0 100 200 300 400 500 600 700 800 900 1000 t [hours] 90 95 100 105 110 115 120 125 130 135 140 Basin [mm] y true, y rec s for an input SNR of 15 dB true No Constraints, SNR = 31.4 dB Just Positivity, SNR = 31.1 dB Just Causality, SNR = 34.1 dB Positivity And Causality, SNR = 34.4 dB XCORR, SNR = 26.8 dB (c) Figure 2: Different results for the k est for different constraints applied during the AM algorithm. All give a similar y rec but the best y rec and k est are those where both positivity and causality constraints are applied. Furthermore, not applying the causality constraint all along the AM algorithm, and setting the negati ve time domain of k est to zero only at the end, would lead to a sub- c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 9 5 DISCUSSION ON RELA TED WORK optimal solution caused by the way in which the AM algorithm na vigates through the optimality map attached to the gi ven functional: any change in the estimated vector k est at the end of the algorithm moves the value of the functional away from the optimal point that was estimated in the last iteration McCormick (1969); Bertsekas (1982). 5. Discussion on Related W ork Non-parametric deconv olution techniques with/without positivity constraints exist from the 1980s. Ho w is our method different from those and why benchmarking it against the cross-correlation? 5.1. Comparison to Pr evious W orks As a first example, let’ s take Neuman et al. (1982) which does a regularized non- parametric decon volution and uses a bi-criterion curve; it navigates the optimality map to find the optimal estimation of the residence time by using a lag-one auto-correlation coefficient between the two error criteria. W e consider this to be similar to our approach but our functional has a simpler , unified formulation from the direct model’ s point of vie w and a different method to navigate the optimality map through the Projected Newton method in the AM algorithm. Also in the cited article there is no discussion about positivity , smoothness and causality of the estimated residence time. In the case of the Skaggs et al. (1998) article, the direct model is similar to ours with some differences in its formulation: ( ˆ f , ˆ α ) = argmin f ∈ R K + , α = 1 2 k c − A · f k 2 2 + α 2 k ∇ 2 f k 2 2 with f ≥ 0 , a 0 f = 1 , (9) where • c is the output of the system, known; • a is the input of the system, known; • A is the T oeplitz matrix of the input of the system; • f is the impulse response of the system, to estimate; • α is the hyper-parameter to estimate with Fischer’ s Statistic method; • ∇ 2 f denotes the Hessian of f The hyper-parameter α is here squared and determined with Fischer’ s Statistic method ( F ), while smoothness is implemented by a second deri vati ve applied on f . There is a constraint for positivity and the condition that the integral of the obtained curve sums up to 1. The solutions are ev aluated with Provencher (1982) Fischer’ s Statistic method and visual inspection. Another aspect here is the multiple peak prob- lem, where Prov encher (1982) argues to in vestigate separately for certain values of F . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 10 5.1 Comparison to Pre vious W orks 5 DISCUSSION ON RELA TED WORK Also, to av oid computational difficulties in the test runs, a basis function representa- tion of f was introduced to ensure linearity between the probability density function (pdf) representation and the transport model. A causality constraint is not discussed here. In contrast, we estimate the α hyper-parameter ( λ in our case) by using the SN R values between the reconstructed breakthrough curve and the original one. A bigger SN R means a better reconstruction and also a better estimation of k through the con- straints, and this is realized through the λ hyper-parameter possible choice strategies ( α equiv alent). A hydrologist can then estimate the same curve with a range of values for λ , for multiple time series and time series lengths, and then see what λ v alue best fits for that particular tested site. W e do smoothness regularization with a first-order deriv ativ e since testing with a second-order deri vati ve does not sho w any improv ement on the estimate, thus our direct model is slightly simpler . Our algorithm does not make an a priori assumption about the shape of the estimated residence time, therefore mul- tiple lobes can appear without ha ving to set any fixed number of these beforehand. The estimation of f ( k in our case) is also free of being modeled with basis functions. The sole observation here is that the channel needs to be short enough so that it can be considered linear . In the case of Fienen et al. (2006) the presented method is a Bayesian Monte-Carlo non-parametric decon volution method that giv es as result the full shape of the residence time distribution curve containing all possible residence time curves for that channel with zones of interest curves and the average curve. The method can yield multiple peaks in the transfer function with some computational cost – ” Using the MCMC Gibbs sampler with r eflected Br ownian motion requir es some computational ef fort (CPU time up to several days on a typical desktop computer) ” Fienen et al. (2006). There is a constraint for positivity and for causality through Michalak & Kitanidis (2003). Ex- pectation Maximization is used to estimate the parameters. The algorithm is tested on uni-modal and bi-modal cases. In comparison, our method provides f aster estimates of the residence time curve for a Dirac-like rainfall e vent or for a clustered rainfall event. The computational cost per tested hyper -parameter λ is small. There is no constraint on the shape of the residence time curve other than smoothness (controlled by λ ), and positivity and causality which we implement throughout the algorithm. On the down- side, our algorithm does not estimate the uncertainties attached to the residence time like in a Bayesian approach. Another example is Dietrich & Chapman (1993) with an algorithm based on ridge regression, where the direct model is similar to ours but has two hyper -parameters to be set. Michalak & Kitanidis (2003) is another article where Bayesian Monte-Carlo de- con volution is done through an in verse problem setup. Here positivity and causality are implicitly enforced by the method of images applied to reflected Brownian motion that giv es ” a prior pdf that is non-zer o only in the non-ne gative parameter r ange ” Micha- lak & Kitanidis (2003). The MCMC is here implemented with the Gibbs sampling algorithm. Similar to Fienen et al. (2006) the result is also a pdf with zones of interest for the residence time curve. Even if the computational time for Bayesian MCMC de- con volution methods is deemed ” manag eable ” Michalak & Kitanidis (2003), probably ev en more so with current hardware, the need for a fast method seems necessary for the community , and we expand on this in the next paragraph. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 11 5.2 Comparison to the Cross-Correlation Method 5 DISCUSSION ON RELA TED WORK 5.2. Comparison to the Cr oss-Correlation Method W e use the cross-correlation method as a benchmark to compare the performance of our algorithm. The cross-correlation measures the similarity between two signals, the second one being a shifted version of itself. The AM algorithm also estimates the basin measurements constant lev el, c est , and the estimated residence time amplitude depends on this constant level. It is necessary to obtain this same amplitude for the cross-correlation method, for comparison purposes, and this is done through the following: y rec = x ∗ R xy k est = R xy · σ y σ y rec (10) W e call the cross-correlation method XCORR in our plots. The cross-correlation implicitly assumes that the input rainfall is white noise. In this case, the auto-correlation of each rain fall time series would be a Dirac at the center . Since real rainfall time series hav e actually long-tailed statistics, the cross-correlation method is inexact. Here we use multifractals to simulate realistic rainfall T essier et al. (1996). Therefore, we expect the cross-correlation method to hav e a limited perfor- mance in real life tests. The decision to benchmark against the cross-correlation is due to the fact that it is the preferred method for hydrologists in numerous recent articles: for deter- mining transport of biological constituents in Sheets et al. (2002), or studying river - groundwater interaction with different types of measurements being cross-correlated like in Hoehn & Cirpka (2006). Cross-correlation is also used by V ogt et al. (2010) for estimating mixing ratios and mean residence times, by Delbart et al. (2014) for estimating the pure residence time curve. Therefore, the hydrology community is in- terested in a simple and fast method with minimal implementation time that gives a residence time curve estimation from different time series measurements. In the case of the cross-correlation method, one focuses on analyzing the position of the maximal amplitude and general shape of the curv e. From this curve hydrologists e xtract the characteristics of interest for that particular channel (mean residence time, mixing ra- tios, etc.). In contrast to the cross-correlation method we of fer positi vity , smoothness and causality constraints that giv e a more precise curve and a similar computing time. 5.3. Comparison to Cirpka et al. (2007) Another benchmark method for the AM is the one presented in Cirpka et al. (2007) that uses measurements in fluctuations of electrical-conductivity as inputs, with a direct model similar to (1). The algorithm in Cirpka et al. (2007) is the same as the one used in V ogt et al. (2010) and both articles compare their results with those of the cross- correlation method. In Cirpka et al. (2007) the decon volution algorithm is also an Alternating Minimization algorithm, but this time between estimating the residence time in the first step using a Bayesian Maximum A Posteriori method, and estimating the v ariance of the noise and the slope parameters in the second step. One can notice that Equation (3) is similar to (Cirpka et al., 2007, Eq.(8)). One main advantage of the Cirpka et al. (2007) approach is that it deli vers the uncertainty curves of the full c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 12 6 SYNTHETIC D A T A Bayesian method while not being a full Bayesian decon volution method, thus ha ving a fast computation time. One dra wback is that the two parameters, v ariance of noise and slope, need to hav e well chosen initial values. In a full Bayesian based decon volution these parameters would also need to be estimated and this would be done by Marko v Chain Monte Carlo methods which are computationally intensive. With re gularization based decon volution we try to av oid high computational costs and ha ving multiple parameters that need carefully chosen initial v alues. The optimal v alue for our hyper- parameter λ can be automatically obtained from the inputs. 6. Synthetic Data 6.1. General Discussion and λ Choice Strate gies In the context of a realistic synthetic v alidation we generate the rain signals x with a multifractal simulation based on T essier et al. (1996). W e use the multifractal param- eters H = − 0 . 1 , C 1 = 0 . 4 , α = 0 . 7. Furthermore we simulate k with a Beta distribution B ( x , α = 2 , β = 6 ) . W e choose arbitrarily c = 100. T o e valuate the computed estimates we use the SNR definition, where we replace the noise term with the estimated k est signal or the y rec signal respectiv ely . SN R = 20 log 10 k m k 2 2 k m − m est k 2 2 [ d B ] , (11) where m is the true signal k or y and m est is the estimated k est or reconstructed y rec signal respectiv ely . Examples of results obtained from synthetic data are shown in Figure 3 and Fig- ure 4. The positivity and causality constraints are well respected. In addition, our method always provides a better estimation of the residence time k est in comparison with the standard cross-correlation method. The cross-correlation method manages to preserve the position of the maximum intensity of the residence time distribution but does not match either the shape or the amplitude of the true k . It can be observ ed that for a high noise lev el of y , the λ hyper -parameter must be greater in order to ob- tain better estimates k est and y rec . The greater the λ , the greater the importance of the regularization term in comparison to the fidelity term therefore smoothing is more important, which improv es results when entries are noisy . Therefore, an analysis of the decon volution results is also necessary in order to find the right adaptation of the λ hyper-parameter for a particular noise le vel. W e propose four strategies to automatically tune the λ hyper-parameter . 1. λ oracl e : choosing the λ corresponding to the best estimation of k est by maxi- mizing the k est SNR output (or minimizing the distance between k est and k ). This strategy only works if the solution is known and represents the maximum achiev able value. 2. λ d iscre pancy : choosing the λ giving the residual v ariance between y and y rec clos- est to that of the noise. This method is kno wn as ”Morozov’ s discrepanc y prin- ciple” Perev erzev & Schock (2009). c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 13 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A 3. λ f id el it y : choosing the λ corresponding to the best reconstruction of y rec by maxi- mizing the y rec SNR output (or minimizing the distance between y rec and y ). This is the v alue of the reconstruction optimum. This completely heuristic method au- tomatically selects the hyper -parameter with a performance close to the selection by ”discrepancy principle” as will be seen next, in a completely blind way (with- out a priori knowledge of the v ariance of the noise). 4. λ corrCoe f f : choosing the λ corresponding to the best reconstruction of y rec by maximizing the correlation coefficient v alue between y rec and y . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 14 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 10 Rainfall [mm ] x, lambda = 8.9e+03, inputSNR = 5[dB] -500 -400 -300 -200 -100 0 100 200 300 400 -500 time [hours] -2 0 2 WRT [1] k est , k est AM-SNR = 13.8968dB , k est XCORR-SNR = 5.6996dB true AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 50 100 150 Basin [mm] y rec , y rec AM-SNR = 6.486dB, y rec XCORR-SNR = 4.0128dB true AM XCORR (a) 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 2 4 Rainfall [mm ] x, lambda = 5.5e+05, inputSNR = 5[dB] -500 -400 -300 -200 -100 0 100 200 300 400 -500 time [hours] -1 0 1 WRT [1] k est , k est AM-SNR = 17.1284dB , k est XCORR-SNR = 7.3941dB true AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 100 200 Basin [mm] y rec , y rec AM-SNR = 6.166dB, y rec XCORR-SNR = 3.1343dB true AM XCORR (b) Figure 3: T wo e xamples of the residence time estimation k est and reconstructed aquifer levels y rec from syn- thetic data for a y input SNR of 5 dB (noisy measurements). The input rain is generated with realistic multi- fractal time series. AM stands for the Alternating Minimization, XCORR for the standard cross-correlation, true for the true solution. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 15 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 10 Rainfall [mm ] x, lambda = 8.9e+03, inputSNR = 25[dB] -500 -400 -300 -200 -100 0 100 200 300 400 -500 time [hours] -1 0 1 WRT [1] k est , k est AM-SNR = 23.4961dB , k est XCORR-SNR = 6.8564dB true AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 50 100 150 Basin [mm] y rec , y rec AM-SNR = 22.6149dB, y rec XCORR-SNR = 11.2414dB true AM XCORR (a) 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 2 4 Rainfall [mm ] x, lambda = 7.0e+04, inputSNR = 25[dB] -500 -400 -300 -200 -100 0 100 200 300 400 -500 time [hours] -2 0 2 WRT [1] k est , k est AM-SNR = 23.818dB , k est XCORR-SNR = 7.8686dB true AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 50 100 150 Basin [mm] y rec , y rec AM-SNR = 24.3019dB, y rec XCORR-SNR = 7.4912dB true AM XCORR (b) Figure 4: Same as in Figure 3 for a y input SNR of 25 dB. The four λ strategies gi ve different estimates of k est , whose SNR v alue is compared to the y input SNR (measurements noise level), the goal being to obtain the best pos- sible k est SNR for each giv en y input SNR level. The algorithm is tested for different input SNR values from 0 dB (very high noise level) to 30 dB (almost no noise) and ov er a λ range chosen from 10 − 5 to 10 12 with 20 values dispersed on a logarithmic scale. T o sho w the quality of estimation, for each noise lev el, we run arbitrarily 30 test cases (input rainfall x ). For each randomly chosen x con volv ed with the kno wn k , the resulting y signal has Gaussian noise added to it according to the input SNR test c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 16 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A value. W e apply the AM, XCORR and Cirpka et al. (2007) methods to each test case for all λ s. For each test run we record the k est SNR value, the y rec SNR value and the y rec correlation coef ficient. Since 30 tests are made for each input y SNR, we obtain 30 plots sho wing the e volution of the k est SNR, of y rec SNR and y rec correlation coefficient, depending on the λ choice. By av eraging these plots, mean values and their standard de viation can be com- puted which are shown in Figure 5 for a y input SNR of 5 dB and Figure 6 for 25 dB respectiv ely . W e lose the optimality for each single example due to averaging, but we show the variability of the criteria depending on noise lev el and input data. W e also present graphically the four strategies of λ determination. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 17 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A 10 -6 10 -4 10 -2 10 0 10 2 10 4 10 6 10 8 10 10 10 12 lambda -20 -15 -10 -5 0 5 10 15 Mean k est AM-SNR [dB] Best oracle to maximize k est AM-SNR for y SNR level of 5[dB] oracle (a) 10 -6 10 -4 10 -2 10 0 10 2 10 4 10 6 10 8 10 10 10 12 lambda 0 1 2 3 4 5 6 7 8 Mean y rec AM-SNR Best fidelity to maximize y rec AM-SNR for y SNR level of 5[dB] fidelity discrepancy (b) 10 -4 10 -2 10 0 10 2 10 4 10 6 10 8 10 10 10 12 lambda 0 0.5 1 1.5 Mean y rec AM Correlation Coefficient Best corrCoeff to maximize y rec AM-SNR for y SNR level of 5[dB] Corr Coeff (c) Figure 5: Selection strategy of hyper-parameter λ . W e plot a verage and standard de viation over 30 synthetic examples of: (a) k est SNR, (b) y rec SNR and (c) y rec correlation coefficient as a function of λ . The y input SNR is 5 dB, meaning very noisy measurements. The λ oracl e point in (a) shows the best λ in a verage to maximize the k est SNR for the synthetic tests. This can be computed only when the true solution is known. In (b) the λ f id el it y maximizes the y rec SNR. The λ d iscre pancy is achie ved when y rec SNR is closest to the actual noise lev el. In (c), the λ corrCoe f f is the optimum over the correlation coef ficient between y rec and y . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 18 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A 10 -6 10 -4 10 -2 10 0 10 2 10 4 10 6 10 8 10 10 10 12 lambda -5 0 5 10 15 20 Mean k est AM-SNR [dB] Best oracle to maximize k est AM-SNR for y SNR level of 25[dB] oracle (a) 10 -6 10 -4 10 -2 10 0 10 2 10 4 10 6 10 8 10 10 10 12 lambda 0 5 10 15 20 25 Mean y rec AM-SNR Best fidelity to maximize y rec AM-SNR for y SNR level of 25[dB] fidelity discrepancy (b) 10 -4 10 -2 10 0 10 2 10 4 10 6 10 8 10 10 10 12 lambda 0 0.5 1 1.5 Mean y rec AM Correlation Coefficient Best corrCoeff to maximize y rec AM-SNR for y SNR level of 25[dB] Corr Coeff (c) Figure 6: Same as in Figure 5 with an y input SNR of 25 dB. W e find that λ f id el it y , λ d iscre pancy and λ corrCoe f f approach the optimal λ oracl e in av erage. In Figure 7, we can see how the four strategies compare with the cross-correlation method. For a k est length of 1000 data points to estimate, we sho w in (a) the results for when inputs x and y are 1000 data points long and in (b) the results for when the y are 5000 data points long. The k est SN R is always the best for the λ oracl e strategy as expected. Across the plots, λ corrCoe f f performs closest to it. The λ f id el it y strategy is similar to λ d iscre pancy for SNRs from 10 dB to 30 dB. For the highest noise lev el, y input SN R < 10 dB, λ f id el it y is worst for short time series and λ d iscre pancy is worst for c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 19 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A longer time series. Whatever the strategy , our method is always better than the cross- correlation. The av erage optimal λ value for each strategy , giv en the y input SNR lev el, is pre- sented in Figure 8. In (a) and (b), we see the ev olution of the λ v alues v ersus the y input SNR for the four giv en strategies. The four strategies of the hyper -parameters λ are similar at low noise level, down to 10 dB for both 1000 and 5000 data points. Then, they begin to diver ge b ut λ corrCoe f f always stays in the neighborhood of λ oracl e , meaning it is a v alid strate gy to use in real test cases where k is not known. At v ery high noise le v- els for 1000 data points, λ d iscre pancy increases and provides an ov er-regularized, highly smooth solution that is far from the optimum. F or 5000 data points both λ f id el it y and λ d iscre pancy deliv er smaller λ s. If for λ f id el it y we can still expect that it would deliv er a proper k est , we can suspect that λ d iscre pancy would stress more an attachment to the data. This means that the estimated k est would gi ve a y rec that w ould follo w too closely the shape of y , including its noise. Furthermore we in vestigate the influence of data volume on the k estimate. The aggregated results are presented in Figure 9, (a) for a y input SNR of 5 dB and in (b) for a y input SNR of 25 dB. All of our four strategies show significant improvement when the input time series of rainfall and aquifer measurements are longer, especially when the measurements are noisy . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 20 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A (a) (b) Figure 7: Quality of the residence time estimation k est for the four hyper-parameter selection strategies and the cross-correlation method. Mean and standard deviation of obtained k est SNRs, as a function of the noise lev el of the measurements, for inputs of length: 1000 data points (a) and 5000 data points (b). The cross- correlation method always stands lower indicating a poorer estimation. The correlation coefficient strategy λ corrCoe f f is the best strategy , across noise level and signal length. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 21 6.1 General Discussion and λ Choice Strategies 6 SYNTHETIC D A T A 0 5 10 15 20 25 30 input SNR [dB] 10 -5 10 0 10 5 10 10 10 15 lambda Hyper-Parameter Evolution oracle fidelity corrCoeff discrepancy (a) 0 5 10 15 20 25 30 input SNR [dB] 10 0 10 5 10 10 lambda Hyper-Parameter Evolution oracle fidelity corrCoeff discrepancy (b) Figure 8: The ev olution of the four λ strategies depending on the input SNR. For 1000 data points in (a) and 5000 data points in (b). c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 22 6.2 Comparison to Similar Methods 6 SYNTHETIC D A T A 1000 1500 2000 2500 3000 3500 4000 4500 5000 x length -5 0 5 10 15 20 k est SNR [dB] k est SNR depending on lambda strategy and x length (rain-fall time series length) oracle fidelity corrCoeff discrepancy Cross Correlation Method (a) 1000 1500 2000 2500 3000 3500 4000 4500 5000 x length 2 4 6 8 10 12 14 16 18 20 22 k est SNR [dB] k est SNR depending on lambda strategy and x length (rain-fall time series length) oracle fidelity corrCoeff discrepancy Cross Correlation Method (b) Figure 9: Quality of residence time k est estimation depending on the number of data points contained by x (input rain) and y (output aquifer le vel). W e can observe that more data points lead to a better estimation for our method for all four λ strategies. (a) is for a y input SNR of 5 dB and (b) is for a y input SNR of 25 dB 6.2. Comparison to Similar Methods In Figure 10, we can see ho w our method compares to the cross-correlation method and the algorithm described in Cirpka et al. (2007) for various y input SNRs and 1000 and 5000 data points respectively (positi ve time interval of residence time to be esti- mated of 500 data points). Our method and the Cirpka et al. (2007) algorithm show similarly good results in comparison with the cross-correlation. The method of Cirpka et al. (2007) has a smaller standard de viation than our method, showing a weaker de- pendence of the noise/structure of the dataset. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 23 6.2 Comparison to Similar Methods 6 SYNTHETIC D A T A (a) (b) Figure 10: Comparison between our algorithm, the cross-correlation and the Cirpka et al. (2007) algorithm for 1000 data points (a) and 5000 data points (b) While our proposed approach provides different output results depending on the giv en λ , the best solution being picked automatically , the operator can choose an ap- propriate solution based on his o wn expertise, from an appropriate range around the optimal λ . Moreover , the solution is independent from the initialization due to the con vexity of the J functional. In Figure 11, bar plots illustrate the av erage runtime for 30 test cases, for different y input SNRs, for the three algorithms. The AM algorithm is consistently faster than the Cirpka et al. (2007) algorithm for y input SNRs higher than 15 dB 11(c). It is also faster for the small data sets of 1000 points 11(a),11(b). c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 24 6.2 Comparison to Similar Methods 6 SYNTHETIC D A T A 0 dB 1 dB 5 dB 10 dB 15 dB 20 dB 25dB 30 dB 0 0.5 1 1.5 2 2.5 3 3.5 4 Runtim e AM 1000 2000 3000 4000 5000 input SNR T im e (s) (a) 0 dB 1 dB 5 dB 10 dB 15 dB 20 dB 25dB 30 dB 0 10 20 30 40 50 60 70 80 90 100 Runtim e Cirp ka 1000 2000 3000 4000 5000 input SNR T im e (s) (b) 0 dB 1 dB 5 dB 10 dB 15 dB 20 dB 25dB 30 dB 0 0.5 1 1.5 2 2.5 3 3.5 Ratio AM/CIRP KA Runtim es 1000 2000 3000 4000 5000 input SNR Ratio AM/Ci rpka (c) Figure 11: Analysis of runtimes between the AM algorithm and the Cirpka et al. (2007) algorithm for various lengths of the dataset and various noise le vels. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 25 7 REAL D A T A 7. Real Data The tests on real data are conducted on data sets made av ailable from the ”Base de Donnes des Observatoires en Hydrologie” c Irstea, Irstea (2017). The data is gathered in the Ile de France region, in France. The measurements are from two neighboring sites, one at a higher altitude for rainfall measurements and the second at a lower al- titude for aquifer measurements, taken at e very 1 hour intervals, between January 1 st , 2016 until January 1 st , 2017. For the real data, the estimates are based on the λ corrCoe f f strategy with λ s chosen around the optimal values found with the synthetic data set, between 10 8 to 10 2 . In Figure 12 and in Figure 13, estimates of the residence time for real life measurements of x and y are sho wn. c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 26 7 REAL D A T A 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 Rainfall [mm] x measured , used lambda corrCoeff = 1.000e+06 -500 -400 -300 -200 -100 0 100 200 300 400 500 time [hours] -20 0 20 WRT [1] k est AM, k est XCORR AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] -1500 -1000 -500 Basin [mm] y rec , y rec SNR-AM = 12.6597 dB -- y rec SNR-XCORR = 3.3683 dB true y AM XCORR (a) 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 Rainfall [mm] x measured , used lambda corrCoeff = 3.793e+05 -500 -400 -300 -200 -100 0 100 200 300 400 500 time [hours] -20 0 20 WRT [1] k est AM, k est XCORR AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] -1500 -1000 -500 Basin [mm] y rec , y rec SNR-AM = 10.9845 dB -- y rec SNR-XCORR = 7.718 dB true y AM XCORR (b) Figure 12: Examples of results for real data using the λ corrCoe f f strategy . W e estimate the residence time k est and the aquifer level c est ; we also plot the breakthrough curve y rec in blue. AM stands for the Alter- nating Minimization, XCORR for the standard cross-correlation, the true residence time k is not known. The position of the maximum amplitude of k est is similar for the two methods but the shape of k est varies significantly . Only the AM method has the physical properties of positi vity and causality . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 27 7 REAL D A T A 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 10 Rainfall [mm] x measured , used lambda corrCoeff = 1.000e+06 -500 -400 -300 -200 -100 0 100 200 300 400 500 time [hours] -10 0 10 WRT [1] k est AM, k est XCORR AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] -1500 -1000 -500 Basin [mm] y rec , y rec SNR-AM = 6.8499 dB -- y rec SNR-XCORR = 6.7535 dB true y AM XCORR (a) 0 100 200 300 400 500 600 700 800 900 1000 time [hours] 0 5 10 Rainfall [mm] x measured , used lambda corrCoeff = 1.000e+06 -500 -400 -300 -200 -100 0 100 200 300 400 500 time [hours] -10 0 10 WRT [1] k est AM, k est XCORR AM XCORR 0 100 200 300 400 500 600 700 800 900 1000 time [hours] -1500 -1000 -500 Basin [mm] y rec , y rec SNR-AM = 7.6238 dB -- y rec SNR-XCORR = 3.8163 dB true y AM XCORR (b) Figure 13: Same as in Figure 12. In all cases, the estimated curves honor the given positi vity and causality con- straints. For the cross-correlation, even if the y rec is close to the original y , the curve for the residence time estimated by this method has the disadvantage to not respect the positivity and causality constraints across all of the presented cases. The aquifer level measurements hav e negati ve v alues due to the con ventions of the used measuring instruments. The AM algorithm is also capable of estimating the aquifer average le vel c , and depending on this constant and the amplitude of the rain fall input, the estimated residence time curve k est will also hav e a certain amplitude c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 28 8 CONCLUSION (the curve is not normalized to resemble that of a pdf). The AM algorithm succeeds in reconstructing the y rec with an SNR around 10 dB in the studied cases, using the λ corrCoe f f and pro vides a better reconstruction SNR than the cross-correlation (XCORR) method. W e find small but significant changes in the residence time curv e for dif ferent data sets of the same channel, as also identified in other datasets Delbart et al. (2014). This may be due to the seasonal variability of the inputs (rainfall) and its ef fects on the hydrological process. This aspect would be of interest to study into more detail for specific sites to better understand it. Another observ ation to be made is the fact that if non-linearities of the system are present (in transit or at the aquifer le vel), our approach may also lead to o ver simplifi- cation. Nonetheless the question arises if a hydrological channel could be considered as a linear and stationary system by parts (smaller time series) and therefore allow the use of our method for estimating partial residence time curves which can then be put together in a more complex mapping of the channel. One can also note in the plots that the y rec is slightly better for cases when a heavy rainfall event appears at the beginning of the time series x instead of towards the end, suggesting the fact that the residence time estimation would also be better . Finally , the examples show the appearance of multiple lobes that are considered a sign of reservoirs of the hydrological channel keeping part of the water for some time before releasing it in a later discharge. This demonstrates the usefulness of a non-parametric deconv olution method in comparison with parametric deconv olution methods where such lobes are either ignored or fixed in number . 8. Conclusion W e propose a ne w approach to estimate a smooth residence time taking into account positivity and causality constraints and ha ving a fast runtime. W e highlight why these constraints must be used all along the algorithmic process to reach the expected solu- tion in the case of non-parametric 1D decon volution for the AM algorithm presented here. The estimation of the residence time k est was done using a fast Alternating Min- imization algorithm with two steps: (1) 1D decon volution and (2) estimation of the aquifer initial lev el. All tests hav e been done on a personal laptop, with CPU In- tel(R) Core(TM) i7-6600U CPU @ 2.6GHz 2.81 GHz, 16.0 GB RAM, 64-bit OS, x-64-based processor , using Matlab R . W e validated the approach on synthetic tests and proposed sev eral strate gies to automatically estimate a hyper -parameter , λ , that controls the smoothness of the residence time curv e. W e ha ve found that between these strategies, the correlation coefficient strate gy seems to be very ef ficient to estimate the best value for λ . W e v alidated our AM method on synthetic data and found that the results are better than the standard cross-correlation method and similar to those of the Cirpka et al. (2007) method. W e also demonstrated the capabilities of our AM method on real data. Additionally , our method respects the physical constraints (positivity , causality , non- circularity) which are important for interpretation purposes. The estimation made by c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 29 REFERENCES our method will pro vide better information for hydro-geologists on amplitude and full shape of the residence time, the mean lev el of the aquifer and will also improv e the estimation of the mean residence time (Appendix A shows ho w to compute it). As possible impro vements we propose refining this methodology for the potential non-linear aspects of the water transit time through the medium. The Matlab implementation of the algorithm is available under CECILL license at the following public Git repository: https://git.l2s.centralesupelec.fr/ meresescual/SmoothSignalEstimatorDeconvolution.git . Acknowledgments W e thank the Base de Donnes des Observatoires en Hydr ologie for providing the data acquired in the field. c Irstea, BDOH ORA CLE c , July 24, 2017. W e thank Prof. Olaf A. Cirpka at the department for Hydrogeology , Univ erst ¨ at T ¨ ubingen, Germany , for kindly providing the algorithm referenced in Cirpka et al. (2007). W e thank Amine Hadjyoucef and Christian K uschel for carefully proof-reading this text and of fering us their comments about unclarities and English phrasing. W e also want to thank the editor and the revie wers for their constructive questions and observations throughout the re viewing process. This work is supported by the Center for Data Science, funded by the IDEX Paris- Saclay , ANR-11-IDEX-0003-02. W e acknowledge support from the ”Institut National des Sciences de l’Univ ers” (INSU), the ”Centre National de la Recherche Scientifique” (CNRS) and ”Centre National d’Etude Spatiale” (CNES) and through the ”Programme National de Plantologie” and MEX/PFS Program. Appendix A. Mean Residence Time In order to estimate the mean residence time τ , one has to simply renormalize the estimated transfer function k est and take the mean: τ = t = K 2 ∑ t = 0 k est ( t ) · t t = K 2 ∑ t = 0 k est ( t ) (A.1) References References Bertsekas, D. P . (1982). Projected newton methods for optimization problems with simple constraints. SIAM Journal on contr ol and Optimization , 20 , 221–246. doi: https://doi.org/10.1137/0320018 . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 30 REFERENCES REFERENCES Botter , G., Bertuzzo, E., & Rinaldo, A. (2011). Catchment residence and trav el time distrib utions: The master equation. Geophysical Resear ch Letters , 38 , n/a–n/a. URL: http://dx.doi.org/10.1029/2011GL047666 . doi: 10.1029/ 2011GL047666 . L11403. Cirpka, O. A., Fienen, M. N., Hofer , M., Hoehn, E., T essarini, A., Kipfer , R., & Kitani- dis, P . K. (2007). Analyzing bank filtration by decon voluting time series of electric conductivity . Gr ound W ater , 45 , 318–328. URL: http://dx.doi.org/10.1111/ j.1745- 6584.2006.00293.x . doi: 10.1111/j.1745- 6584.2006.00293.x . Delbart, C., V aldes, D., Barbecot, F ., T ognelli, A., Richon, P ., & Couchoux, L. (2014). T emporal variability of karst aquifer response time established by the sliding-windows cross-correlation method. J ournal of Hydr ology , 511 , 580–588. doi: 10.1016/j.jhydrol.2014.02.008 . Dietrich, C., & Chapman, T . (1993). Unit graph estimation and stabilization using quadratic programming and difference norms. W ater r esour ces r esear ch , 29 , 2629– 2635. Dziko wski, M., & Delay , F . (1992). Simulation algorithm of time-dependent tracer test systems in hydrogeology . Computers & Geosciences , 18 , 697 – 705. URL: http: //www.sciencedirect.com/science/article/pii/009830049290004B . doi: http://dx.doi.org/10.1016/0098- 3004(92)90004- B . Etchev erry , D., & Perrochet, P . (2000). Direct simulation of groundwater transit-time distributions using the reservoir theory . Hydr ogeology Journal , 8 , 200–208. URL: http://dx.doi.org/10.1007/s100400050006 . doi: 10.1007/ s100400050006 . Fienen, M. N., Clemo, T ., & Kitanidis, P . K. (2008). An interactiv e bayesian geostatis- tical in verse protocol for hydraulic tomography . W ater Resour ces Resear ch , 44 . Fienen, M. N., Luo, J., & Kitanidis, P . K. (2006). A bayesian geostatistical transfer function approach to tracer test analysis. W ater Resour ces Resear ch , 42 . Gooseff, M. N., Benson, D. A., Briggs, M. A., W eaver , M., W ollheim, W ., Peterson, B., & Hopkinson, C. S. (2011). Residence time distrib utions in surface transient storage zones in streams: Estimation via signal decon volution. W ater Resour ces Resear ch , 47 , n/a–n/a. URL: http://dx.doi.org/10.1029/2010WR009959 . doi: 10.1029/ 2010WR009959 . W05509. Hoehn, E., & Cirpka, O. A. (2006). Assessing residence times of hyporheic ground water in two alluvial flood plains of the southern alps using water tem- perature and tracers. Hydr ology and Earth System Sciences , 10 , 553–563. URL: https://www.hydrol- earth- syst- sci.net/10/553/2006/ . doi: 10. 5194/hess- 10- 553- 2006 . Irstea (2017). ”base de donnes des observ atoires en hydrologie” c irstea. URL: https://bdoh.irstea.fr/ORACLE/ . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 31 REFERENCES REFERENCES Long, A., & Derickson, R. (1999). Linear systems analysis in a karst aquifer . Journal of Hydr ology , 219 , 206–217. Luo, J., Cirpka, O. A., Fienen, M. N., W u, W .-m., Mehlhorn, T . L., Carle y , J., Jar- dine, P . M., Criddle, C. S., & Kitanidis, P . K. (2006). A parametric transfer function methodology for analyzing reactiv e transport in nonuniform flow . J ournal of con- taminant hydr ology , 83 , 27–41. Massei, N., Dupont, J., Mahler, B., Laignel, B., Fournier , M., V aldes, D., & Ogier , S. (2006). In vestigating transport properties and turbidity dynamics of a karst aquifer using correlation, spectral, and wavelet analyses. Jour - nal of Hydr ology , 329 , 244 – 257. URL: http://www.sciencedirect.com/ science/article/pii/S0022169406000965 . doi: http://doi.org/10.1016/ j.jhydrol.2006.02.021 . McCormick, G. P . (1969). Anti-zig-zagging by bending. Management Science , (pp. 315–320). McGuire, K. J., & McDonnell, J. J. (2006). A revie w and ev aluation of catchment transit time modeling. Journal of Hydr ology , 330 , 543–563. URL: http://dx. doi.org/10.1016/j.jhydrol.2006.04.020 . doi: 10.1016/j.jhydrol.2006. 04.020 . Michalak, A. M., & Kitanidis, P . K. (2003). A method for enforcing parameter non- negati vity in bayesian in verse problems with an application to contaminant source identification. W ater Resour ces Resear ch , 39 . Neuman, S. P ., & De Marsily , G. (1976). Identification of linear systems response by parametric programing. W ater Resour ces Resear ch , 12 , 253–262. URL: http: //dx.doi.org/10.1029/WR012i002p00253 . doi: 10.1029/WR012i002p00253 . Neuman, S. P ., Resnick, S. D., Reebles, R. W ., & Dunbar , D. B. (1982). Dev eloping a ne w deconv olution technique to model rainfall-runof f in arid en vironments. W ater Resour ces Resear ch Center , University of Arizona , . URL: http://hdl.handle. net/10150/305311 . OSulliv an, J. A. (1998). Alternating minimization algorithms: From blahut-arimoto to expectation-maximization. Springer Science+Business Media New Y ork , (pp. 173– 192). doi: 10.1007/978- 1- 4615- 5121- 8_13 . Payn, R. A., Goosef f, M. N., Benson, D. A., Cirpka, O. A., Zarnetske, J. P ., Bo wden, W . B., McNamara, J. P ., & Bradford, J. H. (2008). Comparison of instantaneous and constant-rate stream tracer experiments through non-parametric analysis of res- idence time distributions. W ater Resour ces Resear ch , 44 , n/a–n/a. URL: http:// dx.doi.org/10.1029/2007WR006274 . doi: 10.1029/2007WR006274 . W06404. Perev erzev , S., & Schock, E. (2009). Morozo v’ s discrepancy principle for tikhonov reg- ularization of sev erely ill-posed problems in finite-dimensional subspaces. Numer- ical Functional Analysis and Optimization , . doi: http://dx.doi.org/10.1080/ 01630560008816993 . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 32 REFERENCES REFERENCES Prov encher, S. W . (1982). Contin: a general purpose constrained regularization pro- gram for inv erting noisy linear algebraic and integral equations. Computer Physics Communications , 27 , 229–242. Robinson, B. A., Dash, Z. V ., & Sriniv asan, G. (2010). A particle tracking trans- port method for the simulation of resident and flux-av eraged concentration of solute plumes in groundwater models. Computational Geosciences , 14 , 779– 792. URL: http://dx.doi.org/10.1007/s10596- 010- 9190- 6 . doi: 10.1007/ s10596- 010- 9190- 6 . Sheets, R., Darner, R., & Whitteberry , B. (2002). Lag times of bank filtration at a well field, cincinnati, ohio, usa. Journal of Hydr ology , 266 , 162 – 174. URL: http://www.sciencedirect.com/science/article/ pii/S0022169402001646 . doi: https://doi.org/10.1016/S0022- 1694(02) 00164- 6 . Attenuation of Groundwater Pollution by Bank Filtration. Skaggs, T . H., Kabala, Z., & Jury , W . A. (1998). Deconv olution of a nonparametric transfer function for solute transport in soils. Journal of Hydr ology , 207 , 170–178. T essier , Y ., Lovejo y , S., Hubert, P ., Schertzer, D., & Pecknold, S. (1996). Multifractal analysis and modeling of rainfall and riv er flo ws and scaling, causal transfer func- tions. Journal of Geophysical Researc h: Atmospher es , 101 , 26427–26440. URL: http://dx.doi.org/10.1029/96JD01799 . doi: 10.1029/96JD01799 . V ogt, T ., Hoehn, E., Schneider, P ., Freund, A., Schirmer , M., & Cirpka, O. A. (2010). Fluctuations of electrical conductivity as a natural tracer for bank filtration in a losing stream. Advances in W ater Resour ces , 33 , 1296–1308. W erner , T . M., & Kadlec, R. H. (2000). W etland residence time distribution model- ing. Ecological Engineering , 15 , 77–90. URL: http://dx.doi.org/10.1016/ S0925- 8574(99)00036- 1 . doi: 10.1016/s0925- 8574(99)00036- 1 . Zuo, B., & Hu, X. (2012). Geophysical model enhancement technique based on blind decon volution. Computers & Geosciences , 49 , 170 – 181. URL: http://www. sciencedirect.com/science/article/pii/S0098300412002129 . doi: http: //doi.org/10.1016/j.cageo.2012.06.017 . c 2018. Preprint made av ailable under the Creativ e Commons CC-BY -NC-ND 4.0 license http://creativ ecommons.org/licenses/by-nc-nd/4.0/ 33

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment