A Read-Write Memory Network for Movie Story Understanding

We propose a novel memory network model named Read-Write Memory Network (RWMN) to perform question answering tasks for large-scale, multimodal movie story understanding. The key focus of our RWMN model is to design the read network and the write network that consist of multiple convolutional layers, which enable memory read and write operations to have high capacity and flexibility. While existing memory-augmented network models treat each memory slot as an independent block, our use of multi-layered CNNs allows the model to read and write sequential memory cells as chunks, which is better suited to representing a sequential story because adjacent memory blocks often have strong correlations. For evaluation, we apply our model to all six tasks of the MovieQA benchmark, and achieve the best accuracies on several tasks, especially on the visual QA task. Our model shows potential to better understand not only the content in the story, but also more abstract information, such as relationships between characters and the reasons for their actions.

💡 Research Summary

The paper introduces the Read‑Write Memory Network (RWMN), a novel architecture designed to tackle the challenges of long‑duration, multimodal movie story understanding required by the MovieQA benchmark. Traditional recurrent models struggle with vanishing gradients over two‑hour videos, and existing external‑memory networks treat each memory slot as an independent unit, ignoring the strong temporal correlations between adjacent story elements. RWMN addresses these issues by equipping both the write and read phases with dedicated convolutional neural networks (CNNs) that operate on chunks of memory cells rather than on single slots.

In the preprocessing stage, each movie is split into aligned video subshots and subtitle sentences. Video frames are encoded with a pretrained ResNet‑152 and the frame features are averaged; subtitles are embedded with Word2Vec plus position encoding. Compact bilinear pooling (CBP) fuses the visual and textual vectors into a 4,096‑dimensional multimodal embedding for each subshot, forming a matrix E of size n × 4096 (n ≈ 1,500 on average).
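The fusion step can be illustrated with a minimal sketch of compact bilinear pooling in its usual count-sketch form: each input vector is hashed into a shorter signed sketch, and the two sketches are combined by circular convolution. The function names, the tiny dimensions, and the direct (non-FFT) convolution are assumptions made for readability; the summary does not describe the authors' implementation.

```python
import random

def make_sketch_params(in_dim, out_dim, rng):
    """Draw a random bucket index and a random sign for every input coordinate."""
    buckets = [rng.randrange(out_dim) for _ in range(in_dim)]
    signs = [rng.choice((-1.0, 1.0)) for _ in range(in_dim)]
    return buckets, signs

def count_sketch(x, buckets, signs, out_dim):
    """Project x into out_dim buckets, adding each signed value to its bucket."""
    sketch = [0.0] * out_dim
    for value, bucket, sign in zip(x, buckets, signs):
        sketch[bucket] += sign * value
    return sketch

def circular_convolve(a, b):
    """Circular convolution of two equal-length vectors (direct O(d^2) form)."""
    d = len(a)
    out = [0.0] * d
    for i, ai in enumerate(a):
        if ai == 0.0:
            continue
        for j, bj in enumerate(b):
            out[(i + j) % d] += ai * bj
    return out

def compact_bilinear_pool(x, y, params_x, params_y, out_dim):
    """Fuse x and y: sketch each vector, then circularly convolve the sketches."""
    sx = count_sketch(x, *params_x, out_dim)
    sy = count_sketch(y, *params_y, out_dim)
    return circular_convolve(sx, sy)

# Toy usage: a 3-d "visual" vector and a 4-d "text" vector fused into 8 dims.
rng = random.Random(0)
params_visual = make_sketch_params(3, 8, rng)
params_text = make_sketch_params(4, 8, rng)
fused = compact_bilinear_pool([1.0, 2.0, -1.0], [0.5, -1.0, 2.0, 3.0],
                              params_visual, params_text, 8)
```

In practice the convolution is usually computed with FFTs, and the output dimension would be 4,096 as stated above rather than the toy value of 8.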

The write network first reduces each embedding to a lower dimension d (e.g., 256) via a fully‑connected layer, then applies a 1‑D convolution with a vertical filter size of 40 and stride 30. This operation aggregates information from 40 consecutive subshots into a single memory slot, mimicking human episodic encoding. Multiple convolutional layers (typically two) can be stacked to capture higher‑level abstractions, producing a memory tensor M of shape m × d × 3.
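To make the chunking arithmetic concrete, the sketch below counts how many memory slots a filter of height 40 with stride 30 produces, and builds those slots by averaging each window. It assumes no padding and replaces the learned filter weights with a uniform average, so it illustrates only the windowing, not the trained write network; the function names are hypothetical and the third (channel) axis of M is ignored.

```python
def num_memory_slots(n, kernel=40, stride=30):
    """Output length of a 1-D convolution over n rows, assuming no padding."""
    if n < kernel:
        return 0
    return (n - kernel) // stride + 1

def write_memory(E, kernel=40, stride=30):
    """Collapse each window of `kernel` consecutive rows of E into one slot.

    Uniform averaging is a stand-in for the learned convolution weights.
    """
    d = len(E[0])
    slots = []
    for start in range(0, len(E) - kernel + 1, stride):
        window = E[start:start + kernel]
        slots.append([sum(row[k] for row in window) / kernel for k in range(d)])
    return slots

# With about 1,500 subshots, one such layer yields 49 slots under these assumptions.
slots_for_average_movie = num_memory_slots(1500)

# Toy input: 100 one-dimensional "embeddings" 0.0, 1.0, ..., 99.0.
toy_memory = write_memory([[float(i)] for i in range(100)])
```

Because the stride (30) is smaller than the filter height (40), consecutive slots overlap by 10 subshots, so material near a chunk boundary contributes to both neighbouring slots.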

For answering a question, the query sentence is embedded with the same Word2Vec and fully‑connected projection to obtain a d‑dimensional vector u. RWMN then creates a query‑dependent memory M_q by applying CBP between u and each cell of M. A second CNN (vertical filter size 3, stride 1) reconstructs the memory into M_r, allowing the model to attend to contiguous chunks that are most relevant to the query. An attention matrix is computed by softmax over the dot product between u and each cell of M_r; the resulting weighted sum yields a context vector o.
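The final attention step of the read phase can be sketched as follows: score each cell of M_r by its dot product with u, normalize the scores with a softmax, and take the weighted sum of the cells. This is a minimal illustration with plain Python lists; the function names are hypothetical, and the CBP and convolution stages that produce M_r are assumed to have run already.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def read_context(u, M_r):
    """Return attention weights over the cells of M_r and the context vector o."""
    scores = [sum(ui * ci for ui, ci in zip(u, cell)) for cell in M_r]
    weights = softmax(scores)
    d = len(u)
    o = [sum(weights[i] * M_r[i][k] for i in range(len(M_r))) for k in range(d)]
    return weights, o

# Toy usage: the first cell aligns with the query and receives the most weight.
u = [1.0, 0.0]
M_r = [[2.0, 0.0], [0.0, 2.0], [0.0, 0.0]]
weights, o = read_context(u, M_r)
```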

Answer candidates are embedded identically to the question, producing a matrix g (5 × d). The final confidence scores are computed as a softmax over the similarity between g and a convex combination α o + (1 − α) u, where α is a learnable scalar that balances the contribution of the retrieved memory and the original query. The answer with the highest score is selected.
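The scoring rule can be written out as a short sketch: mix o and u with α, take the dot product of each candidate embedding with the mixture, and apply a softmax. Here α is passed in as a fixed number, whereas in the model it is learned; the function name and the toy vectors are illustrative assumptions.

```python
import math

def select_answer(g, o, u, alpha):
    """Score each candidate row of g against alpha*o + (1-alpha)*u.

    Returns the index of the best candidate and the softmax scores.
    """
    mixed = [alpha * oi + (1.0 - alpha) * ui for oi, ui in zip(o, u)]
    scores = [sum(gk * mk for gk, mk in zip(cand, mixed)) for cand in g]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return best, probs

# Toy usage with five 2-d candidate embeddings.
g = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
best, probs = select_answer(g, o=[1.0, 0.0], u=[0.0, 1.0], alpha=0.75)
```

Setting α to 0 would ignore the retrieved memory and score candidates against the question alone, which is why the balance between the two terms matters.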

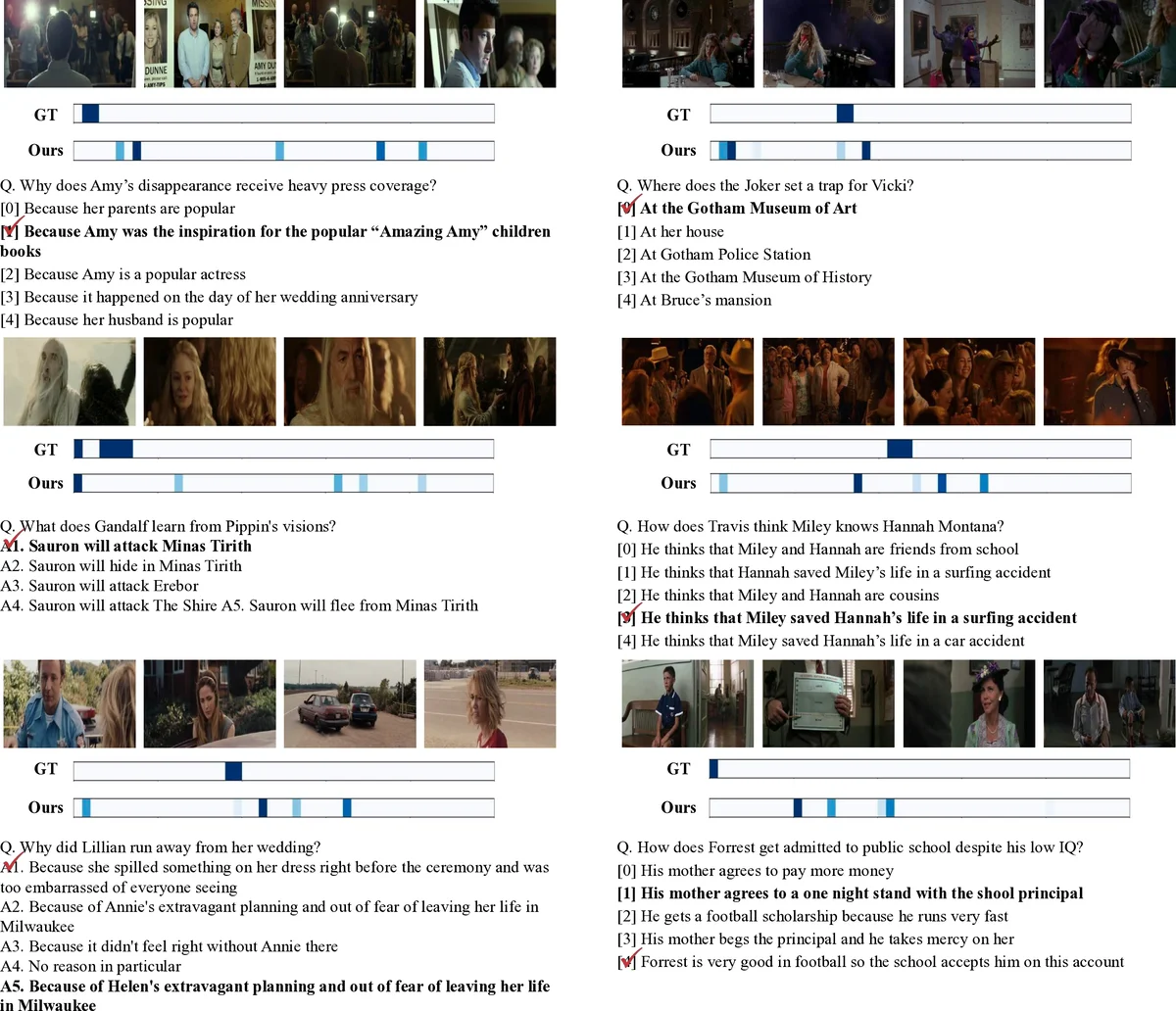

Training minimizes softmax cross‑entropy using Adagrad (learning rate 0.001, batch size 32). Extensive experiments on all six MovieQA tasks demonstrate that RWMN achieves state‑of‑the‑art performance, especially on the visual QA task (video + subtitle) where it surpasses previous best results by over 4 percentage points. Ablation studies reveal that two stacked convolutional layers in both write and read networks provide the optimal trade‑off between capacity and over‑fitting, and that the learnable α parameter significantly improves answer selection.
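For readers unfamiliar with the optimizer, the following sketch shows the cross-entropy loss for one question and a single textbook Adagrad update with the learning rate quoted above. The function names, the flat parameter list, and the placement of the `eps` term are assumptions; deep-learning libraries differ in such details, and the summary does not say which implementation the authors used.

```python
import math

def cross_entropy(probs, correct_index):
    """Negative log-probability assigned to the correct answer."""
    return -math.log(probs[correct_index])

def adagrad_step(params, grads, accum, lr=0.001, eps=1e-8):
    """Apply one Adagrad update in place.

    Each parameter's step is scaled by the root of its accumulated
    squared gradients, so frequently updated parameters move less.
    """
    for i, grad in enumerate(grads):
        accum[i] += grad * grad
        params[i] -= lr * grad / (math.sqrt(accum[i]) + eps)
    return params, accum

# Toy usage: one parameter, one gradient, empty history.
loss = cross_entropy([0.5, 0.5], 0)
params, accum = adagrad_step([1.0], [2.0], [0.0])
```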

The authors discuss two limitations. First, memory size grows linearly with video length, making very long movies (>3 hours) computationally demanding. Second, the current visual and textual encoders are fixed (ResNet‑152, Word2Vec), suggesting that replacing them with modern transformer‑based multimodal encoders could further boost performance. Nonetheless, RWMN's core contribution, the use of CNN‑based chunked read/write operations to capture sequential dependencies, offers a compelling new direction for large‑scale story understanding and could be extended to other domains such as long‑form video QA, narrative generation, or multimodal retrieval.
