Investigating the Effect of Music and Lyrics on Spoken-Word Recognition

Background music in social interaction settings can hinder conversation. Yet, little is known of how specific properties of music impact speech processing. This paper addresses this knowledge gap by investigating 1) whether the masking effect of back…

Authors: Odette Scharenborg, Martha Larson

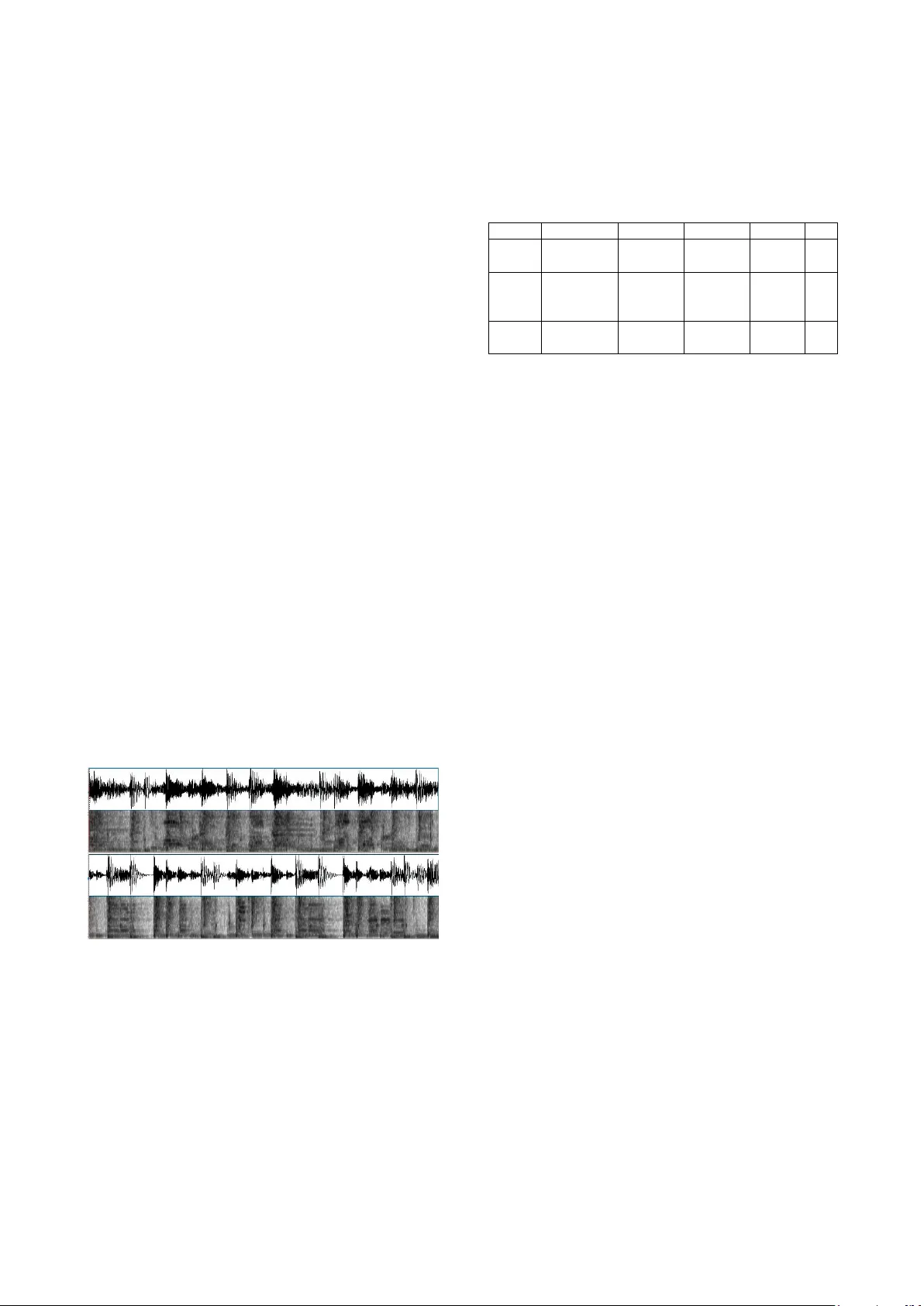

Investigatin g the Effect of Music and L yrics on Spok en-W ord Recognition Odette Scharenborg 1 ,2 and Martha Larson 1,3 ,4 1 Centre for Language Studies, Radboud University Nijmegen, Netherlands 2 Donders I nstitute for Brain, Cognition, & Behavior, Radboud University Nijmegen, Netherlands 3 I nstitute for Computing and Information Sciences, Radboud University Nijmegen, Netherlands 4 I ntelligent S y stems Department, Delft University of Technology, Netherlands o.scharenborg@let.ru.nl, m.larson@let.ru.nl Abstract Background music in so cial inte raction settings can hinder conversation. Yet, little is known of how speci fic properties of music impact speech processing . This paper addresses this knowledge gap by investigating 1 ) whether the masking effect of background music with lyrics is larger than that of music without lyrics, and 2) whether the masking effect is larger for more complex music. To answer th ese questions, a word identification experiment was run in which Dutch participants listened to Dutch CVC words embedded in stretches of background music in tw o conditions, with and w ithout lyrics, and at three SNRs. Three song s were used of d ifferent genres and complexities. Music stretches with and without ly rics were sampled from the same song in order to control for factors beyond the prese nce of lyrics. The results showed a clear negative i mpact of the presence of lyrics in background music on sp oken-word recognition. T his impact is independent of complexity. The results suggest that social spaces (e.g., restaurants, cafés and bars) should make careful choices of music to promote conversation, and op en a p ath for future work. Index Te r m s : spoken-word re cognition, b ackg ro und music, social settings 1. Introduction Music is an important part of the soundscape of social interaction settings. In b ars, restaurants, and ca fés, m usic se r ves to communicate information about the settin g [1 ] , thus cre atin g an atmosphere. It also promotes con versational privacy [2] . However, the wrong soundscape choices may cause fatigue by increasing the effort n ecess ary to carr y o n conversation [3], or even disrupt conversation entirely . This work contribu tes towards the goal of identifying t he properties of b ackg ro und music th at optimally allow conversations to continue unhindered in social settings. Despite the large body of work on the eff ect of the presence of background n o ise on speech processing (see for a r eview [4]), the influence of specific properties of music on speech pro cessing is not well understood. Here, w e focus on t h e im pact of the presence of lyrics and of music complexity. We investigate the effect of music an d lyrics on spoken-word recognition, which is kno wn to be a central building block of speech perception (e.g., [5] ). Previous studies have established that music may in terfere with speech processing [ 6],[7],[8],[9]. By masking acoustic information completely or p artially , music and sung lyrics can make the speech signal less in telligible. Th is type of masking is called energetic masking [4],[10],[11],[12]. Energetic masking occurs due to the direct in teraction o f the b ackg ro und m usic and the speech signal in the sam e ear [10],[11]. The severity of the masking effe ct , and th us the reduction in intelligibility of th e speech signal, is dependent o n the n umber o f “gli mpses” still available to the listener [1 3] . “Glim p ses” are ti me-fre q uency regions not masked by the background noise that can be used by the listener for speech recognition. Further, informational masking [4],[10],[11],[12] can also occur. Inf o rm atio nal masking is the remainin g interference after th e effect of en ergetic ma s king has been taken into account (e.g., [4],[11]) . In our work, sources of infor m atio nal masking can be the music itself, bu t also linguistic in form ation in the form of lyrics . Given the ongoing neuroscience discussion on neural resources sh aring between speech and music pro cessing in the brai n, cf. , [14],[15], one could possibly ex p ect both musical co mplexity and lyrics to interfere eq ually with speech perception. How ev er, g iven the findings on the impact of speech b ackg ro und n oise (e.g., [4]), it is also plausible that lyrics in music pose a unique p roblem for perc ep tion. Our work focuses o n the questions: Is th e masking effect of music with sung lyrics la rger than that of m usic without lyrics; and what is the role of the music complexity ? To in vestigate the se questions, a word id entifica tio n experiment was se t -up in w hich Dutch liste ners listened to short, CVC Dutch w ord s e mbedded in background music. Two listening conditions were created : the Lyrics con dition (music with lyrics) and the Music-Only (music fro m the same song without lyrics). We e xpect a larger detrimental effect of the presence o f lyrics in the background music on spo ken-word recognition than when there are no lyrics present in the background m u sic due to 1) an increase in energetic m asking in the Lyrics cond ition compared to the Music-Only condition, and 2) a potential informational masking effect o f th e lyrics (where informational masking has a larger d etrim ental effect on intelligibility than energetic masking (at a similar SNR) [16]) . Note, we do not exclude the possibility that other properties o f music cause in formational masking. Specifically, we also expect to observe effects re lated to music complexity. Next, we cover related work, mentioning how we extend the current understanding of speech processing in background music. Then we explain ou r experimental set-up an d results. Finally, we p resent an outlook on other specif ic p roperties of music promising for future study. 2. Related Work Bars, restaurant and cafés are devoti ng in creasing amounts of effort to designi n g their soundscape. The fact th at music can be controlled [1], makes it a p articularly important soundscape element. Work until now on music in restaurant settings h as focused on its ability to mask other so unds e.g., [2]. This work proposes a m usic recommender sy ste m to support the choice of music that is an effective masker of speech noise. Evidence that lyrics are a p otential source of informational masking comes from studies that have investigated h ow l yrics in background music aff e ct cognitive tasks. The impact of lyrical vs. n on-lyrical music on foreign language vocabulary learning has been studied by [ 17]. This work found a short-term effect when the language o f the sung ly rics was familiar to the learner. The i mpact of music o n work attention was studied by [18]. This work recommends that music with lyrics should be avoided to avoid impact on worker efficie n cy. We know o f th ree studies th at in vestiga ted th e effect o f background music on speech pro cessing and in cluded background music with lyric s [ 6],[7],[9]. Howev er, none has investigated th e role of lyrics, specifically. In con trast to preceding work, w e isolate th e effect o f l yrics. Specif i cally, we aim to control for o ther f actors in the music in o ur Music-Only and Lyrics conditions by using instrumental music and music containing lyrics taken from the same song. 3. Experimental set- up 3.1. Participants Twenty native Dutch listeners ( 11 females; mean age = 24.9 , SD = 5.1) from the Ra dboud University s ubject pool participated in the experiment. None of the participants reported a history of language, speech, or hearing problems. The participants were paid 5 Euros for their participation. 3.2. Materials 3.2.1. Word stimuli The stimuli consisted o f 150 Dutch CVC word s spoken by a native speaker of Dutch, and were taken from an earlier study [19] i n vestig atin g the ro le of word f requ ency and n eighborhood density o n native spoken-word recognition . The word frequency and neighborhood density of th e 1 50 words, which were ob tained from [2 0], were orthogonally varied (but not further investigated in this study) . Figure 1 . Waveform and spectrogram of 4 seconds of the song “E go betta” (Song 1). Top panels with sung lyrics and bottom panels without lyrics. 3.2.2. Background music The CVC words were embedded in background music. S ince our ultim ate goal is to understand how music influences spee ch comprehension in bars and restaura n ts, we chose music from a sp ecific restaurant in Amsterdam . A dedicated curator selects the music for the restaurant. The restaurant is popular and the curator ensures that the music songs both fit the atmosphere o f the restaurant, and are fresh. By focusing on this restaurant , we co uld choose music that is varied in style, but not radically so. In this way, we could both ensure that we were experimenting with realistic restaurant music, and also minimize the impact of style differences between songs in our experiments. We chose the three song s listed in Table 1. Table 1 . Bar/restaurant music used in the experiment Name Artist Genre Rhythm bpm Song 1 [21] E go betta Dele Sosimi Afrobeat, Funk complex 110 Song 2 [22] Purple Crustation Down- tempo, Trip Hop simple 76 Song 3 [23] Stay away from music Stephen Colebroo k Funk simple 118 In order to control as much as p o ssible for the m usic instruments and the presence of beats in the songs between th e Music-Only an d Lyrics conditions, we chose songs that contained stretches with a nd without lyrics an d where the instrumental music for these stretches was approximately the same. This was investigated by listening and visual inspection of the spectrograms of the songs. Of each song, two versions were created: on e with and one without lyrics. T h e longest stretches with and without lyrics were select ed fro m each song by c arefully cuttin g th e app ropri ate stretches o n the positive - going zero-crossings using Praat [24]. We needed stretches of background m u sic of approximately one minute in len gth. If no such stretch was present in the son g, these were created by hand, by combining d iff erent stretches of the same song, while taking care that no abrupt chan ges in the music or lyrics would occur. This was chec ked both by listening and looking at the spectrograms. Figure 1 pro vides an example o f a 4 seconds stretch for the Song 1 “E go betta”. The top two panels show the condition with sung lyrics and the bottom two panels the condition without ly rics. As is clear, the overa ll struct u re of the music and beats is the same for the two conditions. The resulting six background music files (3 songs , each in a Lyrics and a Music-Only con dition) were th en added to the stimuli at three different SNRs, i.e., SNR +15, +5, and 0 dB, using a custom-made Praat script. Each word stimulus was preceded by 200 ms of leading b ackg ro und m usic and f ollowed by 200 ms o f trailing backgrou nd music. The stretch o f background m u sic w as randomly se lected from the background music files. A H amming window was a p plied to th e background music, with a fade in / fade out of 10 ms. The S NRs were determined o n the basis of a pilot study with 12 Dutch participants, none o f whom participated in the current study. The SNRs were chosen such th at for th e easiest SN R, the back grou nd m usic is indeed perceived as b eing in the background, and at a level often found in c offee bars. The m ore difficult SNRs w er e c h osen as to ref le ct a situ ation that is m ore to be expected in a pub or disco, as we were also interested in whether we could observe a point where the performance would ‘break’, i.e., would be severely im paired. 3.3. Procedure Twelve experimental lists were created. Each list consisted o f 150 items, with 5 0 items in each of th e three SNR conditions. Half of th e items in each SNR con dition was assigned to the Lyrics con dition (= 25 items per SNR condition) and the o ther half to the Music-Only con dition. Finally, the three different songs w ere randomly ass igned to on e of the items. The o rder o f the SNR and Lyrics/Music-Only blocks were randomized and counterbalanced across participants. Each participant was randomly assigned one list. Participants were test ed individually in a sou nd-treated booth. The stimuli were presented o ver closed headphones at a comfortable sound level. Participants listened to the 150 words and were a sked to ty p e in the word they tho ught they had heard. After pressing the return key, the next item was played. 4. Results The top -left panel of Figure 2 shows the proportion o f words correctly recognized for each of the SNR conditions for the two music b ackgrounds separately, averaged o ver the three songs . The do tted line show s the proportion c o rrect for th e music background without ly rics, the o pen-square line s ho w s the proportion correc t for th e music background with lyrics. There was no perform an ce difference for the easiest, 15 dB, listening condition, but Figure 2 shows a clear difference in recognitio n performance by the listeners between the two m u sic conditions for the two more adverse listenin g co nditions: fewer words were correctly recognized for the two worst listening conditions when the music co ntained lyrics compared to the condition where no lyrics were present. Statistical analyses u sing generalized linear mixed-effect models (e.g . , [25]), containing fixed and random effects, on the accuracy of the recognized words we re carried out to investigate these o bservations. The dependent v ariable w as whether the word stimulus was correctly identi fied (‘1’) or not (‘0’). Fixed factors we re SNR (3 levels: +15 dB (on the intercept) , +5 and 0 dB ; nominal variable, as this model (AIC=2513.9) significantly outperformed th e model including SNR as a conti nuous variable (A I C=2526.0)), and cr u cially the absence (on the intercept) or presence of lyrics in the background noise . Stimulus, Subject, and Song were entered as random factors. Rand om by-Subject, by -Stimulus, and by-Song slopes f o r SNR we re added, on ly the ra ndo m by-Stimulus slope for SNR remained in the best-fitting model. Figure 2. Proportion of correct responses for the three SNR conditions for the two music backgrounds separately , averaged over the three songs (top left panel) and for each song separately (top right and bottom panels). Table 2 . Fixed effect estimates for the best-fitting model for the overall analysis, n=3 00 0. Fixed eff ect β SE p Intercept 2.958 .448 <.001 SNR +5 -.719 .2 88 .012 SNR 0 -1.121 .312 <.001 Lyrics -.0 50 .2 35 .83 SNR +5 × Lyrics -1.067 .303 <.001 SNR 0 × Lyrics -1.4 59 .314 <.001 Table 3 . Fixed effect estimates for the best-fitting model for Song 1, n=1000. Fixed eff ect β SE p Intercept 2.474 .415 <.001 SNR +5 -1.326 .390 <.001 SNR 0 -1.603 .478 <.001 Lyrics -.640 .34 .066 SNR +5 × Lyrics -.325 .459 .478 SNR 0 × Lyrics -1.375 .523 .009 Table 4 . Fixed effect estimates for the best-fitting model for Song 2, n=1000. Fixed eff ect β SE p Intercept 4.433 .902 <.001 SNR +5 -1.897 .845 .025 SNR 0 -1.910 .879 .030 Lyrics .640 .561 .254 SNR +5 × Lyrics -1.257 .655 .055 SNR 0 × Lyrics -2.488 .681 <.001 Table 5 . Fixed effect estimates for the best-fitting model for Song 3, n=1000. Fixed eff ect β SE p Intercept 2. 491 .380 <.001 SNR +5 .832 .556 .135 SNR 0 -.450 .529 .395 Lyrics .381 .399 .340 SNR +5 × Lyrics -2.411 .575 <.001 SNR 0 × Lyrics -1.173 .528 .026 Table 2 shows the fixed effect estima t es for the best -fitting model of the overall analy sis. The statistical analysis confirmed the visual observations. Overa ll , at th e two m o re adverse SNR levels, recognition accuracy was significantly worse than at the easier SNR level (SNR effects in Table 2 ). Regarding our cru cia l manipulation of the presence or absence of sung lyrics, for SNR +15 dB, there wa s no significant difference between the two music b ackg ro unds , but as shown by the two interactio ns between SNR and Lyrics, significantly fewer words were recogni zed when th e background music con tained lyrics co mpared to w h en th ere were no lyrics at the two m o st difficult SNR conditions. To in vestiga te th e influence of th e so ngs, in particular that of rhythmic complexity, o n spoken-word recognition, we carried out statistical an aly ses for the three songs separately. The to p-right and bottom panels of F igure 2 show the proportion of words correctly reco gnized for each of th e SNR conditions for t he two music b ackg ro unds f o r eac h o f th e s o ngs separately. Comparing the top right panel of Figure 2 (Song 1) and the bottom panels (Songs 2 and 3) sh o ws that the word recog nition performance for Song 1 was l ower than that for the o ther two 0 +5 +15 0 +5 +15 0 +5 +15 0 +5 +15 No lyrics Lyrics songs. Song 1 is th us an inherently better m asker than Songs 2 and 3. We get back to this finding in the General Discussion . The key observation is that for all so n gs, the Music-On ly condition outperforms the Lyrics condition. Tables 2-4 show the fixed effect estimates for the best - fitting model o f the per-song analyses. The biggest di fference between the th ree s on gs is in the interactions between SNR and Lyrics. Fo r all songs, at SNR 0 dB significantly fewer words were recognized when the background music contained lyrics compared to when th ere were no ly ric s. For Song 3, this effect was also found for SNR +5 dB, and it was marginally present for Song 2 at SNR +5 dB. 5. General discussion This work investigates the in fluence of the presence of lyrics and th e complexity of the music in background m usic on spoken-word recognition. To that end, Dutch n ative listeners were tested on a CVC word-identification task in Dutch with background music, crucially with and without the pre sence o f lyrics, at three different SNRs, and u sing three diff erent so ngs with d ifferences in music complex it y. The key finding is that at the two worst SNR conditions, words in the Music -Only condition were significantly b etter recognized than w ord s in th e Lyrics condition. So, indeed, background m usic w ith sung lyrics has a larger masking effect on spoken-word recognitio n than background music without lyrics . Additionally, d iff erences were observe d between the masking e ffe cts of the th ree songs t hat served as background music. Overall, listeners gav e fewer correct answers to Song 1 than to the other two songs. The complex rhythm, involving swing ti ming, of Song 1 could be the source of a larger energetic masking effect compared to the m usic structures o f Songs 2 and 3 . Since t he stim u li are 500 -1000 ms in length, and the bea ts per minute (bpm) rates o f th e songs vary b etween 7 6 – 118 bpm, there are only 1 – 2 main beats per stimulus. Howeve r, for Song 1, many percussion notes are present between the main beat s , as reflected by the spac in g of the energy in Figure 1. This explanation would put the observation in line with f ind ings from [8] , which f ou nd a larger m a sking effect for f aster tempos. Note that th e tempo of Song 1 in bpm is slo we r than th at o f Song 3. Ho we ver, the number of percussion notes heard by participants was effecti vely larger for Son g 1. Future research will investig ate the relatio n ship between the pr oportion of ‘glimpses’ that are available to the listener [1 3] and the music complexity to get a grip on the a mount o f energetic masking caused by different music complexities . The effect of the presence of l yrics was o nly found when the m usic was relatively loud i n comparison to the target speech. The ea siest listening condition did not show a difference in w ord recognition performance between the Music - Only a nd the Ly rics condition. Moreover, f or Song 1 th is effect was only found at th e most difficult listening condition, while for the other two songs this diffe r ence was present for the two worst listening conditions (marginally so for Song 2) , suggesting that th e sung lyrics in Songs 2 and 3 are better maskers than the sung lyrics in Song 1. The relative energy of the singers’ voices with respect to the other instruments in the song could play a role, and is an interestin g perspective for future work. We note that t he n umber o f singers do es n ot ap pear to impact speech perception . In contrast to Song 2 and 3, Song 1 h as m u ltiple vocalists si n ging in unison. A n increase in background speakers results in an increase of the masking e ffect [16] , so if the number of vocalists were to play a role, w e would have expected a larger masking effect for Song 1 compared to Songs 2 and 3. Finally, the results on the presence of l yrics appear to hin t at a larger m as king effect of a familiar language. The language in Song 1 appears to be West African Coastal En glish, which implies distinctive phonetics and possibly also an influence of tone. As such, the English of S ong 2 and Song 3 is expected to be more familiar to the ears of the native Dutch language participants, who on a daily basis hear mostly English spo ken in p rofessional settings and Western entertainment. If the language o f Song 2 and Song 3 is indeed more familiar, th e results could point towards a larger masking effect of a known language. This findin g w ou ld then be in lin e with results showing th at listen ers experience a larger masking effect from background babble when they und erstand the language of the speech in the background (e.g., [26],[27]). Note that d ue to the length of the stimuli, only w ord fragments and very rarely complete words are captured. For this reason, we would more readily expect an effect due to the phonetics of the ly rics language than an effect of familiarity with the language . 6. Conclusions and outlook To our k n owledge , this is th e f irst study investigating th e ef fect of background music with and without lyrics on spoken -word recognition. Our ex p erim en tal results ex ten d existing knowledge on th e effect o f different masker types on spoken- word recognition. Importantly, they also p rovide a baseline for the impact o f background music on c onversation in social settings. On one hand, iso lated words are more difficult to recognize th an words in context, so the adverse effects we observed co uld be expected to be worse than in more natural conversational settings. On the other h and, words are easier to recognize in a carefully controlled lab situation where the stimuli are pla yed over headph ones in a sound -proof booth compared to a m ore natural listen ing setting where listeners are typically at a (small) distance from on e another. The process of designing the experiment to isolate the impact of l yrics led to an interesting list of other factors that potentially influence how music affects conversations in social se ttings. Above, we already mentioned listener familiarity w ith the language of the lyrics, and the relative sound p ower of the singers with respect to the instruments as important. Additionally, th ere are factors that are related to the ability o f listeners to separate streams of sounds. In our study , So ng 1 had swing ti m in g, and could be perceived as less predictable to listeners th an Song 2 o r 3, with straight timing. The ability to separate streams has been related to speec h com prehension [28] . To understand how listeners’ ability to anticipate the rhythm impacts word recognition, we can move, in the future, to longer samples with more than 1-2 main beats. Further, th e age of the li stener is also expected to play a role, cf. [ 6],[7], as well as the m u sical background of th e listener, familiarity with the genre, and familiarity w ith the specific song cf . [6],[7],[29]. 7. Acknowledgements Odette Scharenborg was spo nsored by a Vidi -grant f ro m NWO (grant number: 276 - 89 - 003). Martha Larson was su pported in part by EU FP7 pro ject no. 6 10594 (CrowdRec). The authors would like to th ank the student assistants o f the lab of O.S. for help in setting up and running the experiments. We would also like to thank Kolle kt.fm and Coffee and Coconuts for helping us understand music relev a nt for social interac tion environments, and providing us with sample songs. 8. References [1] P.M. Lindborg, “A taxonomy of sound sources in restau rants” , Applied Acoustics , v ol. 110, p p. 297-310, 201 6. [2] T. K ato, M. Oka and H. Mori, “ Music recommendation sy stem to be effectively diff icult to hear the speech noise”, The SICE Annual Conference , Japa n, pp. 2 3 53-2359, 2 013. [3] J. H. Ri n del, “Verbal communicat ion and noise in eating establishments”, Applied Acoustics , vol. 71, pp. 1156-116 1 , 2010. [4] M.L.G . Garcia L ecumberri, M. Cooke, and A. Cutle r, “Non - native speech perception in adverse conditions: A review”, Speech Commu n icatio n , vol. 52, p p .864-886, 2010. [5] J. M. McQ ueen, “Speech perception”, I n K. L amberts & R. Goldstone (Eds.), T he handbook of cognition (p p . 255-275). London: Sage Pu blica t ions, 2 004. [6] F. Russo and M.K. Pichora- Fulle r, “Tune in or tune out: Age - related differences in listening to speech in music” , Ear Hear. , vol. 29, pp. 746-7 6 0, 200 8 . [7] D. Başkent, S . van Engelshoven, and J.J. Galv i n, “ Suscept i bility to interfe r ence by music an d speech maske rs in middle-aged adults” , The Journal of the Acoustical Society of Americ a, vol. 135, EL147, 2 014. [8] S. Eks trom and E. Borg , “He arin g speech in music ” , Noise Health vol. 13, pp. 277-2 8 5, 201 1 . [9] K. Gfeller, C. Turner, J. Oleson, S. Kliethermes, and V. Driscoll, “Accuracy of cochle ar implant recipie nt s on spee ch reception in background music” , Ann. Otol. Rhinol. Laryngol. , vol. 1 21, p p. 782- 791 , 2012. [10] M. Cooke, M.L. Garcia- Lecumberri, and J. Barker, “ The foreign language coc ktail party probl em: Energetic a n d i n formational masking effects in non- native speech perce p tion”, Journal of the Acoustical Soci e ty of America. , vol . 1 23, no. 1, p p. 4 1 4-27, 2 008. [11] S. Mattys, J. Brooks, and M . Cooke, “ Recognizing speech under a processing load: D i ssociat i ng energe t ic f rom infor mational factors”, Cogn. Ps ych., vol. 59, pp. 203-243, 2009. [12] B.G. Shinn- Cunningham, “Object -based auditory and visual attention”, Trends in Cognitive Sciences, vol. 12, pp. 182-186, 2008. [13] M. Cooke, “A glimpsing model of s peech perception in noise”, Journal of the Acoustical Society of America , vol. 119, pp. 1562- 1573, 2006. [14] A.D. P atel, “Language, mu sic, syntax and the brain”, Nature Neuroscience , vol. 6, pp. 67 4 -681, 2003. [15] R. Kunert and L.R. Slev , . “ A commentary on: “Neural overlap in processing mus i c a nd speech”, Fro n t. H u m. Ne u rosc i. , vol . 9, pp. 330, 2015. [16] S. Simpson a nd M . Cooke, “Consona n t ident i fica t ion in N -talker babble is a nonmo notonic f unction o f N”, J. Acoust. Soc. Am. , vol . 118, pp. 2775 – 27 78, 2005. [17] A.M.B. de Groo t and H.E. Smedinga , “ Let the music play! A short-term but no long-term detrimental effect of vocal background music with familiar language lyrics on forei gn language v ocabulary learning”, Studi e s i n Sec ond Language Acquisition , vo l. 36, no. 4 , pp. 681 - 707 , 2014. [18] Y. -N. Shih, R.-H. H uang, a nd H . - Y. Chiang. “Background music: Effects on atte n tion pe rformance”. Work 42 , pp. 573-578, 2 012. [19] F. Hintz and O. Scharenborg, “ Effects of frequency and neighborhood density on spoken-word recognition in noise: Evidence from spoken-word id enti fica t ion in Dutch ” , Architectures a nd Mechanisms f or Langu a ge Process ing (AMLaP) , Bilbao, Spain, 2 016. [20] V. Marian, J. Bartol otti , S. C habal, and A. Shook, “CLEARPO ND: Cro ss - L i nguistic easy-access resource for phonolog i cal and orthogr aphic neighborhood densities”, PLoS ONE , Vol. 7, no. 8 , 2012. [21] https://www.youtube.co m/ w a tch?v = UMZ6T DrsOO0 (acc esse d March 2017) [22] https://www.yo u tube.com/w at ch?v =otzbG7iZ0hc (accesse d March 2017) [23] https://soundcloud.com/ djfryer / ath032b-stephen-col ebrook-stay- away-from- mu sic (accesse d Mar c h 20 1 7) [24] P. Boersma, and D. W eenink, D. “ Praat: doing phone tics by computer [ Computer program] ”, 2013. Retr i eve d from http://www .p raat.org / [25] R. H. Baayen, D. J. Davidson, and D. M. Bates, “ Mixed -effects modeling with crossed random effe cts for subjects and items”, Journal of Mem o ry an d Langua g e , vol. 59, pp . 390-412, 2008 . [26] M.L. Garcia Le cu m berri, and M. Cooke, “Effect of masker type on native and non- n ative co nsonant perce p tion in noise ”, Journ al of the Acoustical Society of America , vol. 119, no. 4, pp. 2445- 2454, 2006. [27] K.J. Van Engen, “ Sim i larity and familiarity: Second language sentence recog n ition in firs t - and se c ond-language multi-talker babble”, Speec h Commun ication , vo l. 52, no. 11-12, pp. 943 – 953, 2010. [28] L. - F. Shi and Y. Law , “Masking effects of speech and music: Does the masker’s hierarchical structure matter?” International Journal of Audio l ogy , 49, p ages 2 96-308, 2010. [29] N. Perham and H. Currie, “ Does listening to preferre d music improve r eadi ng comprehension performance? Applied Cognitive Psychology, Appl . Cog n it. Psyc hol. , ” v ol. 28, 2 79-284, 2014.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment