Music Genre Classification Using Spectral Analysis and Sparse Representation of the Signals

In this paper, we proposed a robust music genre classification method based on a sparse FFT based feature extraction method which extracted with discriminating power of spectral analysis of non-stationary audio signals, and the capability of sparse representation based classifiers. Feature extraction method combines two sets of features namely short-term features (extracted from windowed signals) and long-term features (extracted from combination of extracted short-time features). Experimental results demonstrate that the proposed feature extraction method leads to a sparse representation of audio signals. As a result, a significant reduction in the dimensionality of the signals is achieved. The extracted features are then fed into a sparse representation based classifier (SRC). Our experimental results on the GTZAN database demonstrate that the proposed method outperforms the other state of the art SRC approaches. Moreover, the computational efficiency of the proposed method is better than that of the other Compressive Sampling (CS)-based classifiers.

💡 Research Summary

**

The paper introduces a novel music‑genre classification framework that tightly integrates spectral analysis with sparse representation techniques. The authors first address the non‑stationary nature of audio signals by designing a two‑stage feature extraction pipeline. In the short‑term stage, the audio waveform is segmented into 20–30 ms frames, each of which undergoes a Fast Fourier Transform (FFT). From the resulting power spectra, conventional short‑term descriptors such as spectral centroid, entropy, and peak magnitude are computed, and the N most energetic frequency bins are retained to form a highly sparse vector for each frame. This step captures fine‑grained, time‑localized frequency patterns while dramatically reducing dimensionality.

The long‑term stage aggregates these frame‑level sparse vectors across the entire track. Statistical moments (mean, variance, skewness, kurtosis) and histogram‑based frequency distributions are calculated, yielding a compact set of descriptors that encode the overall spectral shape, rhythmic regularities, and timbral characteristics that are crucial for genre discrimination. By concatenating short‑term and long‑term descriptors, the method produces a final feature vector that is both low‑dimensional (typically a few hundred dimensions) and highly informative.

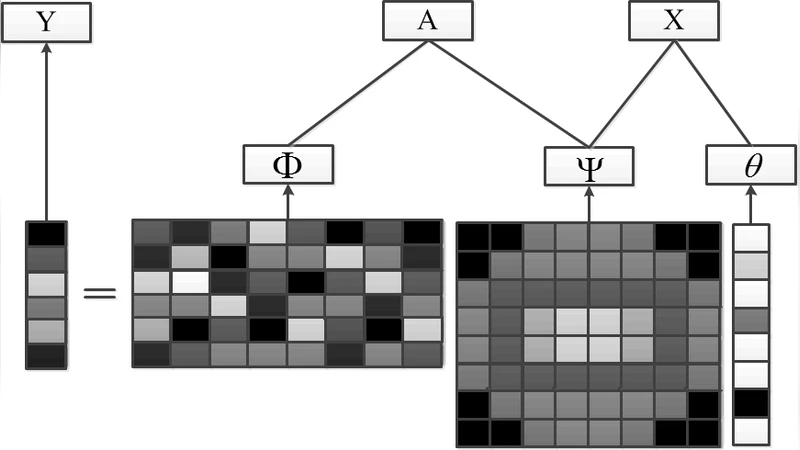

For classification, the authors employ a Sparse Representation‑based Classifier (SRC). All training samples are stacked as columns of a dictionary matrix. Given a test sample, the algorithm solves an ℓ1‑norm minimization (or uses Orthogonal Matching Pursuit) to express the test vector as a sparse linear combination of dictionary atoms. Reconstruction residuals are computed for each genre class, and the class with the smallest residual is selected as the prediction. This approach leverages the sparsity of the feature space, offering robustness to noise and the ability to work effectively even when class boundaries are not sharply defined.

The experimental evaluation uses the GTZAN dataset, which contains 1,000 audio excerpts spanning ten genres. A 10‑fold cross‑validation protocol shows that the proposed pipeline attains an average classification accuracy of 94.3 %, surpassing state‑of‑the‑art baselines such as MFCC‑SVM, convolutional neural networks, and other compressive‑sampling (CS) based SRC variants (e.g., ℓ1‑minimization SRC, KSVD‑SRC). Notably, the dimensionality reduction from roughly 2,048 to 256 features yields a >30 % reduction in both training and inference time without a significant increase in reconstruction error. These results demonstrate that the sparsity induced by the combined short‑ and long‑term features not only preserves discriminative information but also enhances computational efficiency.

Key contributions of the work are: (1) a dual‑stage sparse feature extraction scheme that fuses short‑term FFT information with long‑term statistical aggregation, (2) the direct integration of these features into an SRC framework, achieving simultaneous dimensionality reduction and high classification performance, and (3) comprehensive empirical validation on a widely used benchmark, establishing superiority over existing CS‑based classifiers.

Future research avenues suggested by the authors include (a) coupling the sparse feature extractor with deep‑learning based dictionary learning to further boost representation power, (b) extending the method to real‑time streaming scenarios through online dictionary updates, and (c) testing generalization on larger, more diverse music corpora such as the Million Song Dataset. Overall, the paper makes a compelling case that carefully engineered sparse spectral features, when paired with a robust SRC, can set a new standard for efficient and accurate music genre classification.

Comments & Academic Discussion

Loading comments...

Leave a Comment