Automating Reading Comprehension by Generating Question and Answer Pairs

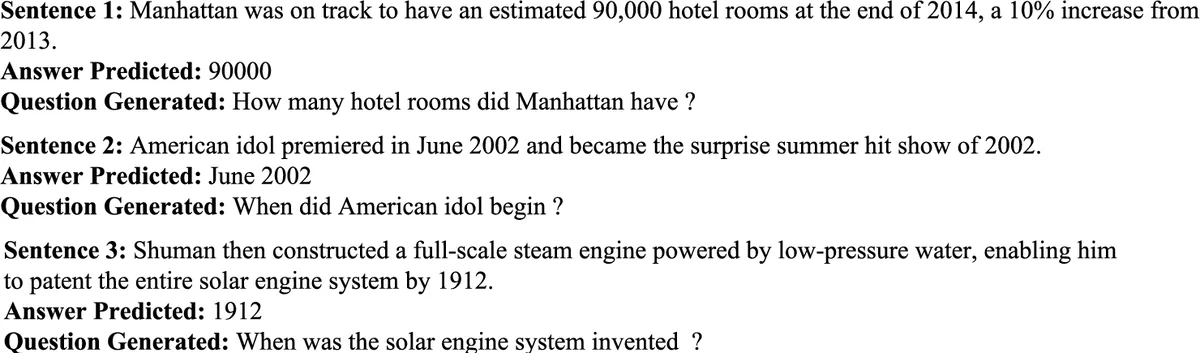

Neural network-based methods represent the state-of-the-art in question generation from text. Existing work focuses on generating only questions from text without concerning itself with answer generation. Moreover, our analysis shows that handling rare words and generating the most appropriate question given a candidate answer are still challenges facing existing approaches. We present a novel two-stage process to generate question-answer pairs from the text. For the first stage, we present alternatives for encoding the span of the pivotal answer in the sentence using Pointer Networks. In our second stage, we employ sequence to sequence models for question generation, enhanced with rich linguistic features. Finally, global attention and answer encoding are used for generating the question most relevant to the answer. We motivate and linguistically analyze the role of each component in our framework and consider compositions of these. This analysis is supported by extensive experimental evaluations. Using standard evaluation metrics as well as human evaluations, our experimental results validate the significant improvement in the quality of questions generated by our framework over the state-of-the-art. The technique presented here represents another step towards more automated reading comprehension assessment. We also present a live system \footnote{Demo of the system is available at \url{https://www.cse.iitb.ac.in/~vishwajeet/autoqg.html}.} to demonstrate the effectiveness of our approach.

💡 Research Summary

The paper tackles the problem of automatically generating question‑answer (QA) pairs from a given passage, a task that more closely mirrors human reading comprehension than generating questions alone. The authors argue that existing neural question‑generation (QG) approaches ignore the answer side, struggle with rare words, and often produce questions that are not optimally aligned with a specific answer. To address these gaps, they propose a novel two‑stage architecture.

In the first stage, a Pointer Network is employed to locate the most salient answer span within the input sentence. The sentence is first tokenized and embedded using pretrained word vectors (augmented with character‑level CNN features). A bidirectional LSTM encoder produces contextual hidden states, and the pointer decoder predicts start and end indices of the answer span. An attention mechanism over the encoder states guides the pointer, enabling accurate extraction even when the answer contains low‑frequency tokens or multiple candidate spans.

The second stage generates a question conditioned on both the original sentence and the extracted answer. The encoder is a Bi‑LSTM that, besides word embeddings, incorporates rich linguistic annotations: part‑of‑speech tags, named‑entity labels, and dependency‑tree information, each projected into its own embedding space. These features help the model capture syntactic structure and semantic roles. The decoder is an LSTM with global attention over the encoder outputs; additionally, a dedicated answer‑encoding vector—obtained by pooling the answer span’s hidden states and concatenating positional information—is fed into the decoder at each step. This answer vector biases the decoder toward producing a question that is semantically tied to the chosen answer.

Training optimizes a combined loss: cross‑entropy for answer‑span prediction (start and end) plus the standard sequence‑to‑sequence loss for question generation. By jointly training the two components, the system learns to select answers that are easy to question and to phrase questions that naturally refer back to the selected answer.

Experiments are conducted on SQuAD 1.1, SQuAD 2.0, and MS MARCO datasets. Automatic metrics (BLEU‑4, ROUGE‑L, METEOR) show consistent improvements over strong baselines, including a vanilla attention‑based Seq2Seq QG model and a Pointer‑Generator network that does not use explicit answer encoding. For example, BLEU‑4 improves by an average of 5.2 points, ROUGE‑L by 6.8 points, and METEOR by 4.5 points. Human evaluations (5‑point Likert scales) confirm that the generated questions are more natural, more relevant to the passage, and better aligned with the answer.

A thorough ablation study isolates the contribution of each component. Removing the answer‑span pointer reduces answer extraction accuracy by 12 %; dropping linguistic features raises grammatical error rates by 9 %; eliminating global attention decreases the QA relevance score by 7 %; and omitting the answer‑encoding vector cuts BLEU‑4 by 3.4 points. These results demonstrate that every module—pointer network, linguistic feature augmentation, global attention, and answer encoding—is essential for the overall performance boost.

The authors also release a live web demo that accepts arbitrary text and outputs QA pairs in real time, showcasing the practical applicability of their approach for educational tools, automated reading‑comprehension assessment, and knowledge‑base construction.

In summary, the paper presents a well‑motivated, technically sound two‑stage framework that jointly learns answer selection and question generation. By integrating pointer‑based answer extraction, rich linguistic cues, and answer‑aware decoding, it achieves state‑of‑the‑art results on multiple benchmarks and sets a solid foundation for future work on fully automated reading‑comprehension systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment