A Characterization of the DNA Data Storage Channel

Owing to its longevity and enormous information density, DNA, the molecule encoding biological information, has emerged as a promising archival storage medium. However, due to technological constraints, data can only be written onto many short DNA molecules that are stored in an unordered way, and can only be read by sampling from this DNA pool. Moreover, imperfections in writing (synthesis), reading (sequencing), storage, and handling of the DNA, in particular amplification via PCR, lead to a loss of DNA molecules and induce errors within the molecules. In order to design DNA storage systems, a qualitative and quantitative understanding of the errors and the loss of molecules is crucial. In this paper, we characterize those error probabilities by analyzing data from our own experiments as well as from experiments of two different groups. We find that errors within molecules are mainly due to synthesis and sequencing, while imperfections in handling and storage lead to a significant loss of sequences. The aim of our study is to help guide the design of future DNA data storage systems by providing a quantitative and qualitative understanding of the DNA data storage channel.

💡 Research Summary

The paper presents a comprehensive characterization of the DNA data‑storage channel, focusing on the quantitative and qualitative understanding of errors that arise during synthesis, storage, handling, amplification, and sequencing. The authors model the channel as an input multiset of M DNA molecules of length L, from which N samples are drawn independently; each sampled molecule is then perturbed by insertions, deletions, and substitutions. This abstraction captures the essential stochastic processes that determine how much information can be reliably stored.

First, the authors review the state of the art in DNA archival storage, citing early demonstrations (Church, Goldman, Grass) and recent large‑scale experiments (Organick et al.). They emphasize two technological constraints: (i) only short oligonucleotides (≈100–200 nt) can be synthesized reliably, and (ii) the molecules are stored in an unordered pool, requiring random sampling for read‑out. Consequently, the system must tolerate both loss of entire molecules and errors within surviving molecules.

In the “Error Sources” section the paper dissects each stage. Synthesis on solid‑phase chips introduces base‑level insertion, deletion, and substitution errors, as well as a termination probability of roughly 0.05 % that limits maximum strand length. The process yields millions of copies per design, but copy numbers are uneven across chip locations and across different sequences, leading to a non‑uniform initial distribution. PCR amplification, typically 10 000‑fold over ~15 cycles, multiplies each molecule by a factor slightly below two, but the factor is sequence‑dependent, creating amplification bias. Although PCR is high‑fidelity, it can still amplify synthesis errors and further skew copy‑number distributions.

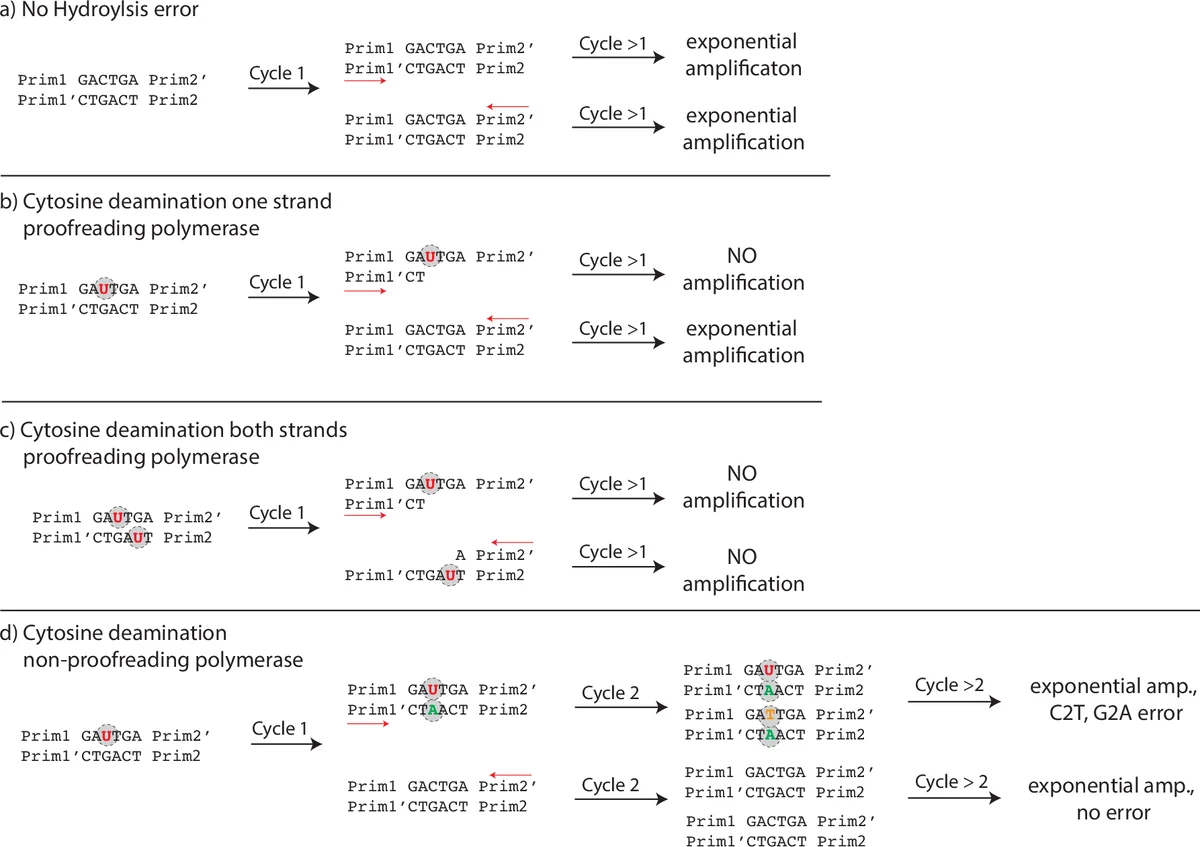

During storage, hydrolytic damage dominates. Depurination causes strand breaks, which render molecules unreadable because broken fragments lack primers on both ends and are eliminated during subsequent PCR steps. Cytosine deamination (C→U) creates uracil; with proofreading polymerases the strand stalls and is not amplified, while with non‑proofreading enzymes the uracil is read as thymine, producing C→T (and complementary G→A) substitutions. The authors note that if both strands of a duplex contain a deaminated base, the entire molecule is lost.

Sequencing errors are examined primarily for Illumina platforms, the most common in DNA‑storage experiments. Substitution rates range from 4 × 10⁻⁴ to 1.5 × 10⁻³ per base, with higher error probability at read ends. Insertions and deletions are rare (≈10⁻⁶). Error rates increase markedly for high‑GC regions and for homopolymer runs longer than six bases. The paper also mentions that low‑GC (<20 %) and very high‑GC (>75 %) fragments suffer reduced coverage due to bridge‑amplification inefficiencies.

The empirical core of the work analyzes three data sets: the authors’ own experiments, the Grass et al. dataset, and the Organick et al. dataset. For each, they compute the distribution of observed molecules, the per‑base substitution, insertion, and deletion rates, and the fraction of molecules lost entirely. Their analysis shows that synthesis and sequencing together account for roughly 70 % of all observed errors, while storage‑related loss accounts for over 60 % of the missing molecules. Longer storage times dramatically increase loss due to depurination, and higher PCR cycle counts exacerbate amplification bias.

Finally, the authors translate these findings into design guidelines for DNA‑storage systems. To mitigate molecule loss, they recommend maintaining a high physical redundancy (large M·copy) and minimizing the number of PCR cycles. To reduce within‑molecule errors, they suggest using shorter oligos (≤150 nt), limiting GC extremes, and avoiding long homopolymers. Selecting proofreading polymerases for all amplification steps helps suppress deamination‑induced substitutions. By quantifying the sampling distribution and error probabilities, the paper provides a concrete framework for evaluating the trade‑off between storage density, cost, and reliability, thereby informing the development of robust encoding/decoding schemes for future DNA‑based archival storage.

Comments & Academic Discussion

Loading comments...

Leave a Comment