Challenges: Bridge between Cloud and IoT

In real-time processing, the need to connect smart devices to the cloud through the Internet has given rise to a new, emerging technology. Information processed by IoT devices must be stored and made accessible wherever it is needed, supported by powerful computing performance, an efficient storage infrastructure for heterogeneous systems, and software that configures and controls these different devices. This emerging technology raises many challenges, not least because it must also be compatible with upcoming 5G wireless devices. This paper presents the benefits and challenges of this innovative paradigm, along with the areas that remain open to research.

💡 Research Summary

The paper examines the emerging paradigm of tightly coupling Internet‑of‑Things (IoT) devices with cloud platforms for real‑time data processing, a trend accelerated by the rollout of 5G networks. It begins by outlining the promise of this integration: massive streams of sensor data can be off‑loaded to powerful cloud resources, enabling ubiquitous storage, on‑demand analytics, and the ability to control heterogeneous devices from a single management plane. However, the authors argue that realizing this vision requires overcoming a suite of inter‑related technical, economic, and security challenges.

First, protocol heterogeneity and lack of standardization are highlighted. IoT devices typically use lightweight protocols such as MQTT, CoAP, or LwM2M, while cloud services often rely on HTTP/REST or gRPC. The mismatch leads to interoperability friction, especially when quality‑of‑service (QoS) guarantees differ across protocols. The paper calls for broader adoption of open standards (e.g., oneM2M, LwM2M) and the development of translation gateways that can preserve security contexts while bridging these stacks.
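As a rough illustration of the translation-gateway idea, the sketch below maps an MQTT-style message onto an HTTP/REST request description. It is not taken from the paper: the `/v1/` path prefix, the `X-Source-Qos` header, and the QoS-to-retry mapping are all hypothetical choices, and no real broker or HTTP client is involved. HTTP has no direct counterpart to MQTT's delivery levels, so the retry count here is only an approximation of the sender's intent.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class MqttMessage:
    topic: str      # e.g. "devices/sensor-17/telemetry"
    payload: bytes  # assumed to be a JSON document
    qos: int        # MQTT delivery level: 0, 1, or 2


@dataclass(frozen=True)
class HttpRequest:
    method: str
    path: str
    headers: dict
    body: bytes
    max_retries: int


# Hypothetical mapping: QoS 0 is fire-and-forget, so no retries;
# QoS 1 and 2 ask for delivery assurance, approximated by retrying.
RETRIES_BY_QOS = {0: 0, 1: 3, 2: 3}


def translate(msg: MqttMessage, bearer_token: str) -> HttpRequest:
    """Build an HTTP request description from an MQTT-style message."""
    if msg.qos not in RETRIES_BY_QOS:
        raise ValueError(f"unsupported QoS level: {msg.qos}")
    reading = json.loads(msg.payload)  # rejects payloads that are not JSON
    headers = {
        "Content-Type": "application/json",
        # The security context travels with the request as a bearer token.
        "Authorization": f"Bearer {bearer_token}",
        # Hypothetical header that records the original delivery level.
        "X-Source-Qos": str(msg.qos),
    }
    return HttpRequest(
        method="POST",
        path="/v1/" + msg.topic.strip("/"),
        headers=headers,
        body=json.dumps(reading).encode(),
        max_retries=RETRIES_BY_QOS[msg.qos],
    )
```

A production gateway would also have to deduplicate retried requests to approach the exactly-once semantics of QoS 2, which this sketch does not attempt.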

Second, the authors discuss data handling efficiency. Raw sensor streams are bandwidth‑intensive and can overwhelm both the wireless link and the cloud’s ingest pipelines. Edge computing is presented as a necessary layer to perform filtering, aggregation, compression, and even preliminary analytics before forwarding only salient events to the cloud. The paper recommends a hierarchical storage architecture that combines time‑series databases for recent high‑frequency data, object storage for bulk archival, and lifecycle‑policy automation to control cost.
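The edge-side filtering and aggregation described above could look something like the following minimal sketch. The paper does not specify an algorithm; the deadband filter and fixed-size window summary are common techniques chosen here for illustration, and the function names and threshold are assumptions.

```python
from statistics import mean


def deadband_filter(readings, threshold):
    """Keep a reading only if it differs from the last kept one by more than threshold."""
    kept = []
    for value in readings:
        if not kept or abs(value - kept[-1]) > threshold:
            kept.append(value)
    return kept


def window_summary(readings, size):
    """Collapse each run of `size` consecutive readings into one summary record."""
    summaries = []
    for start in range(0, len(readings), size):
        window = readings[start:start + size]
        summaries.append({
            "min": min(window),
            "max": max(window),
            "mean": mean(window),
            "count": len(window),
        })
    return summaries


# Example: six raw temperature readings shrink to three forwarded values.
raw = [20.0, 20.1, 20.2, 21.0, 21.05, 19.0]
salient = deadband_filter(raw, threshold=0.5)  # [20.0, 21.0, 19.0]
```

Filtering suits event-driven forwarding, while window summaries suit periodic uploads; an edge node could reasonably use either or both, depending on what the cloud-side analytics need.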

Third, security and privacy are identified as paramount concerns. Massive device populations create a “scale‑out” authentication problem; traditional PKI solutions are too heavyweight for constrained nodes. The authors explore lightweight certificate mechanisms, token‑based schemes, and emerging blockchain‑based trust models as possible mitigations. End‑to‑end encryption, integrity verification, and differential‑privacy techniques for data in transit and at rest are also emphasized.
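To make the token-based option concrete, here is a small sketch of an HMAC-signed device token with an expiry time, built only on Python's standard `hmac` and `hashlib` modules. It is an assumption-laden illustration rather than the authors' scheme: the `device_id|expiry|tag` layout is invented for this example, it assumes each device shares a secret key with the verifier, and it assumes device IDs never contain the `|` separator.

```python
import hashlib
import hmac


def _tag(device_id: str, expires_at: int, key: bytes) -> str:
    """HMAC-SHA256 over the token's claims, hex-encoded."""
    message = f"{device_id}|{expires_at}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def issue_token(device_id: str, key: bytes, expires_at: int) -> str:
    """Create a token of the (hypothetical) form 'device_id|expires_at|tag'."""
    return f"{device_id}|{expires_at}|{_tag(device_id, expires_at, key)}"


def verify_token(token: str, key: bytes, now: int):
    """Return the device ID if the token is authentic and unexpired, else None."""
    try:
        device_id, expires_str, tag = token.split("|")
        expires_at = int(expires_str)
    except ValueError:
        return None  # wrong number of fields or non-numeric expiry
    expected = _tag(device_id, expires_at, key)
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(tag.encode(), expected.encode()):
        return None
    if now >= expires_at:
        return None
    return device_id
```

Verification costs one hash computation and no public-key operations, which is the property that makes this family of schemes attractive for constrained nodes. The trade-off is that symmetric keys must be provisioned and protected on every device.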

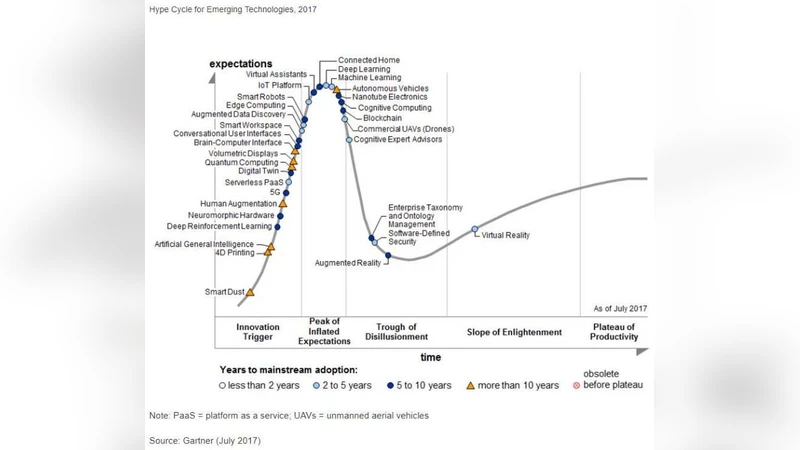

Fourth, resource orchestration and service‑level management are examined. The paper argues that static allocation of workloads to either cloud or edge is insufficient. Dynamic orchestration frameworks—ideally extensions of Kubernetes or lightweight alternatives such as K3s—must be capable of real‑time placement decisions based on latency sensitivity, computational load, and data locality. The authors note current gaps in container orchestration for ultra‑low‑power edge nodes and suggest research into minimal‑footprint schedulers and auto‑scaling policies.
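A placement decision of the kind described here can be sketched as a feasibility check followed by a tie-breaking rule. The model below is deliberately simple and entirely hypothetical: latency is estimated as round-trip time plus input transfer time, and among sites that meet both the latency budget and the CPU request, the one with the most free capacity wins so that scarce edge resources are kept for tasks that need them. It does not use or imitate the Kubernetes or K3s scheduler APIs.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    latency_budget_ms: float
    cpu_millicores: int
    input_mb: float


@dataclass
class Site:
    name: str
    rtt_ms: float          # round-trip time from the data source
    free_millicores: int   # currently unallocated CPU
    uplink_mbps: float     # bandwidth from the data source to this site


def estimated_latency_ms(task: Task, site: Site) -> float:
    """Round-trip time plus the time to ship the task's input to the site."""
    transfer_ms = task.input_mb * 8 / site.uplink_mbps * 1000
    return site.rtt_ms + transfer_ms


def place(task: Task, sites):
    """Pick a feasible site, preferring the one with the most spare CPU."""
    feasible = [
        s for s in sites
        if s.free_millicores >= task.cpu_millicores
        and estimated_latency_ms(task, s) <= task.latency_budget_ms
    ]
    if not feasible:
        return None  # caller may queue, degrade, or reject the task
    return max(feasible, key=lambda s: s.free_millicores)


edge = Site("edge", rtt_ms=5, free_millicores=500, uplink_mbps=100)
cloud = Site("cloud", rtt_ms=60, free_millicores=64000, uplink_mbps=20)

control_loop = Task("control-loop", latency_budget_ms=20, cpu_millicores=200, input_mb=0.1)
batch_report = Task("batch-report", latency_budget_ms=500, cpu_millicores=200, input_mb=0.5)
```

With these illustrative numbers, `place(control_loop, [edge, cloud])` selects the edge site because the cloud cannot meet the 20 ms budget, while `place(batch_report, [edge, cloud])` selects the cloud because both sites are feasible and the cloud has more spare capacity.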

Fifth, scalability and cost efficiency are discussed in the context of billions of devices. The authors point out that traditional vertical scaling is unsustainable; instead, horizontal scaling with automatic provisioning, multi‑tenant isolation, and usage‑based billing (e.g., serverless or Function‑as‑a‑Service) is required. They acknowledge challenges such as cold‑start latency, state management across distributed functions, and the need for predictive cost‑performance models.
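The trade-off between usage-based and provisioned billing can be explored with a very small cost model. The sketch below is only a starting point for the kind of predictive model the authors call for: the formulas follow the usual shape of per-request and per-instance pricing, but every parameter name is an assumption and all prices must be supplied by the caller, since none are given in the paper.

```python
import math


def serverless_cost(invocations, avg_duration_s, memory_gb,
                    price_per_gb_s, price_per_request):
    """Usage-based cost: pay per request plus per GB-second of execution."""
    compute = avg_duration_s * memory_gb * price_per_gb_s
    return invocations * (compute + price_per_request)


def provisioned_cost(peak_rps, rps_per_instance, hours, price_per_instance_hour):
    """Provisioned cost: enough always-on instances to absorb the peak load."""
    instances = math.ceil(peak_rps / rps_per_instance)
    return instances * hours * price_per_instance_hour


def cheaper_option(serverless, provisioned):
    """Name the cheaper billing model for the given totals."""
    return "serverless" if serverless < provisioned else "provisioned"
```

Because provisioned capacity is sized for the peak while serverless cost tracks total invocations, bursty workloads with low average utilization tend to favor the usage-based model. The sketch ignores cold-start latency and state management, both of which the paper flags as open issues.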

Finally, the paper outlines open research directions. These include: (1) establishing robust, globally‑accepted data models and API contracts; (2) designing ultra‑lightweight, provably secure authentication and key‑exchange protocols; (3) optimizing edge‑AI inference pipelines through model quantization and on‑device acceleration; (4) developing automated SLA monitoring and enforcement tools that can reconcile edge and cloud performance metrics; and (5) creating end‑to‑end reference architectures that exploit 5G’s ultra‑reliable low‑latency communications (URLLC) for mission‑critical applications such as autonomous control, digital twins, and smart‑city services.
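As one example of research direction (3), model quantization reduces the memory and compute cost of on-device inference by storing weights as small integers. The following is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. The paper does not prescribe a particular scheme, so this should be read as an illustration of the general technique rather than the authors' method.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.0, 0.5, -1.0, 0.25]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Each restored weight differs from the original by at most half a quantization step (`scale / 2`), while storage drops from 32 bits to 8 bits per weight. Real deployments typically add per-channel scales and calibration data, which are omitted here.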

In conclusion, the authors contend that bridging cloud and IoT is not merely a networking problem but a comprehensive systems engineering challenge that spans protocol design, data lifecycle management, security, orchestration, and economic modeling. By systematically cataloguing these challenges and proposing concrete research avenues, the paper provides a roadmap for academia and industry to collaboratively build the next generation of intelligent, scalable, and secure IoT‑cloud ecosystems.
