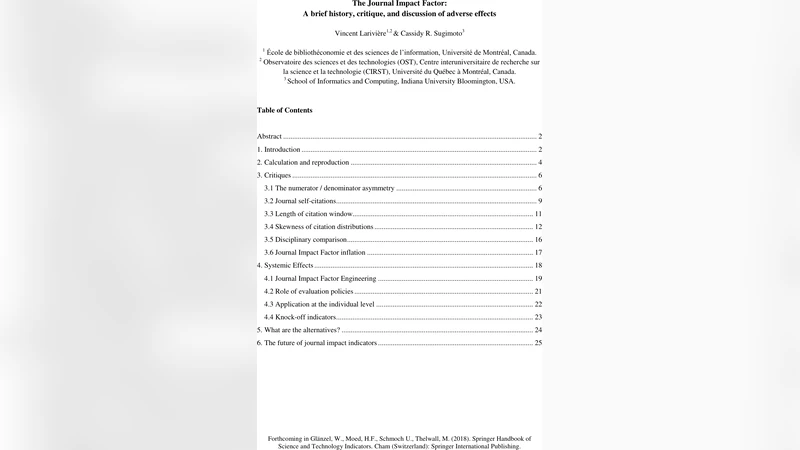

The Journal Impact Factor: A brief history, critique, and discussion of adverse effects

The Journal Impact Factor (JIF) is, by far, the most discussed bibliometric indicator. Since its introduction over 40 years ago, it has had enormous effects on the scientific ecosystem: transforming the publishing industry, shaping hiring practices and the allocation of resources, and, as a result, reorienting the research activities and dissemination practices of scholars. Given both the ubiquity and impact of the indicator, the JIF has been widely dissected and debated by scholars of every disciplinary orientation. Drawing on the existing literature as well as on original research, this chapter provides a brief history of the indicator and highlights well-known limitations, such as the asymmetry between the numerator and the denominator, differences across disciplines, the insufficient citation window, and the skewness of the underlying citation distributions. The inflation of the JIF and its weakening predictive power are discussed, as well as the adverse effects on the behaviors of individual actors and the research enterprise. Alternative journal-based indicators are described, and the chapter concludes with a call for responsible application and a commentary on future developments in journal indicators.

💡 Research Summary

The chapter provides a comprehensive overview of the Journal Impact Factor (JIF), tracing its origins to the 1975 launch of the Journal Citation Reports (JCR) alongside the Science Citation Index. It explains how the JIF quickly became the dominant bibliometric indicator used by scholars, institutions, funding agencies, and publishers to assess journal prestige and researcher performance and to allocate resources. The authors detail the calculation method: the citations received in a given year by items published in the two preceding years, divided by the number of “citable items” (articles and reviews) published in those two years. Through a reproducibility exercise on four high‑profile biology journals, they demonstrate that the official JIF can be closely approximated when the underlying citation data are cleaned and matched.
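The calculation described above can be sketched in a few lines of Python. This is only an illustration of the ratio as the summary states it, not the procedure used to produce the official JCR figures; the function name and all of the numbers are hypothetical.

```python
def journal_impact_factor(citations_in_year, citable_items_prev_two_years):
    """Two-year JIF as described in the summary.

    citations_in_year: citations received in year Y by items the journal
        published in years Y-1 and Y-2.
    citable_items_prev_two_years: number of articles and reviews the journal
        published in years Y-1 and Y-2.
    """
    if citable_items_prev_two_years <= 0:
        raise ValueError("the journal needs at least one citable item")
    return citations_in_year / citable_items_prev_two_years


# Hypothetical journal: 120 + 130 citable items in the two preceding years,
# which together received 500 citations in the census year.
example_jif = journal_impact_factor(500, 120 + 130)
print(example_jif)
```

With these made-up inputs the ratio is 500 / 250, so a journal with this profile would have a JIF of 2.0.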

The core of the chapter is a systematic critique of the JIF. First, the numerator/denominator asymmetry is highlighted: all citations, including those to editorials, letters, news items, and other “front matter,” are counted in the numerator, while only articles and reviews are counted in the denominator. This inflates the metric because non‑citable items can contribute citations without increasing the denominator. Second, the lack of control for journal self‑citations allows journals to boost their scores through strategic editorial policies. Third, the two‑year citation window is argued to be too short, especially for fields such as the humanities and some social sciences where citation peaks occur after three to five years. Fourth, the underlying citation distribution is extremely skewed; a small number of highly cited papers drive the average, rendering the mean‑based JIF a poor representation of typical article performance. Fifth, disciplinary differences in citation practices mean that cross‑field comparisons using JIF are fundamentally flawed.
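Two of these critiques, the numerator/denominator asymmetry and the skewed citation distribution, are easy to see with small numbers. The sketch below uses invented figures and hypothetical function names; it illustrates the arithmetic only and is not taken from the chapter's own data.

```python
from statistics import mean, median


def jif_asymmetric(cites_to_citable, cites_to_front_matter, n_citable):
    """Numerator counts all citations; denominator counts only citable items."""
    return (cites_to_citable + cites_to_front_matter) / n_citable


def jif_symmetric(cites_to_citable, n_citable):
    """Numerator and denominator both restricted to citable items."""
    return cites_to_citable / n_citable


# Hypothetical journal: 200 articles and reviews received 400 citations,
# while editorials, letters, and news items received another 100.
asymmetric = jif_asymmetric(400, 100, 200)
symmetric = jif_symmetric(400, 200)
print(asymmetric, symmetric)

# Hypothetical skewed distribution: one paper collects most of the citations.
per_article_citations = [0, 0, 1, 1, 2, 2, 3, 3, 4, 84]
mean_citations = mean(per_article_citations)
median_citations = median(per_article_citations)
print(mean_citations, median_citations)
```

In this invented case the front-matter citations lift the ratio from 2.0 to 2.5 without any change in the articles themselves, and the single highly cited paper pulls the mean to 10 citations while the median article has only 2.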

Beyond technical flaws, the authors discuss the systemic consequences of the JIF’s dominance. “JIF engineering” describes how authors, editors, and institutions manipulate submission strategies, editorial policies, and even citation practices to improve their impact scores. This leads to a narrowing of research agendas, pressure to publish in high‑JIF venues, and potential neglect of locally relevant or interdisciplinary work. The chapter also documents the phenomenon of JIF inflation: over the past decades the average JIF has risen, yet its ability to predict future citations or research quality has weakened.

In response to these issues, the chapter surveys alternative journal‑level metrics. The Eigenfactor Score and Article Influence Score incorporate the entire citation network and weight citations by the prestige of the citing source. CiteScore, based on Scopus data, uses a three‑year window and includes all document types, offering a broader view of journal activity. The SCImago Journal Rank (SJR) applies a PageRank‑like algorithm to account for citation quality. While each alternative addresses specific shortcomings of the JIF, none fully resolves all concerns, and the authors advocate for a pluralistic, responsible approach to research evaluation.
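The idea of weighting citations by the prestige of the citing source can be illustrated with a generic PageRank-style iteration over a journal-to-journal citation table. This is a minimal sketch under stated assumptions, not the actual Eigenfactor or SJR algorithm: the function name, the damping value of 0.85, the fixed iteration count, and the three-journal citation table are all hypothetical.

```python
def prestige_scores(citations, damping=0.85, iterations=100):
    """Generic PageRank-style scores for journals.

    citations[a][b] is the number of citations from journal a to journal b.
    Assumes every journal appears as a top-level key, even if it cites nothing.
    """
    journals = sorted(citations)
    n = len(journals)
    scores = {j: 1.0 / n for j in journals}

    for _ in range(iterations):
        # Every journal gets a small baseline share each round.
        updated = {j: (1.0 - damping) / n for j in journals}
        for citing in journals:
            outgoing = citations[citing]
            total = sum(outgoing.values())
            if total == 0:
                # A journal that cites nothing spreads its score evenly.
                for j in journals:
                    updated[j] += damping * scores[citing] / n
            else:
                # Pass on score in proportion to where the citations go.
                for cited, count in outgoing.items():
                    updated[cited] += damping * scores[citing] * count / total
        scores = updated
    return scores


# Hypothetical citation counts between three journals.
example_citations = {
    "A": {"B": 10, "C": 30},
    "B": {"C": 20},
    "C": {"A": 5},
}
example_scores = prestige_scores(example_citations)
for name, score in sorted(example_scores.items()):
    print(name, round(score, 3))
```

In this toy network journal C ranks highest because it is cited by both other journals, and journal A outranks B even though A receives fewer raw citations (5 versus 10), because its citations come from the highly ranked C. A plain citation count would order A and B the other way round.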

The conclusion calls for greater transparency in data sources, the inclusion of confidence intervals, and the integration of quantitative and qualitative assessment methods. The authors stress that future developments should move away from a single‑metric culture toward a more nuanced, context‑sensitive evaluation ecosystem that better reflects the diverse ways scholarly work creates impact.
