Intelligent Virtual Assistant knows Your Life

In the IoT world, intelligent virtual assistant (IVA) is a popular service to interact with users based on voice command. For optimal performance and efficient data management, famous IVAs like Amazon Alexa and Google Assistant usually operate based on the cloud computing architecture. In this process, a large amount of behavioral traces that include user voice activity history with detailed descriptions can be stored in the remote servers within an IVA ecosystem. If those data (as also known as IVA cloud native data) are leaked by attacks, malicious person may be able to not only harvest detailed usage history of IVA services, but also reveals additional user related information through various data analysis techniques. In this paper, we firstly show and categorize types of IVA related data that can be collected from popular IVA, Amazon Alexa. We then analyze an experimental dataset covering three months with Alexa service, and characterize the properties of user lifestyle and life patterns. Our results show that it is possible to uncover new insights on personal information such as user interests, IVA usage patterns and sleeping, wakeup patterns. The results presented in this paper provide important implications for and privacy threats to IVA vendors and users as well.

💡 Research Summary

The paper investigates privacy risks inherent in cloud‑based intelligent virtual assistants (IVAs), using Amazon Alexa as a representative platform. It first defines “IVA cloud‑native data” and categorises the types of information that Alexa stores on remote servers. Seven major categories are identified: (1) raw voice command transcripts, (2) rich metadata (timestamps, device identifiers, location tags), (3) service response logs, (4) skill‑invocation records, (5) device‑level information (model, firmware), (6) inferred user profile attributes (age, gender, language), and (7) auxiliary data that link to other services (e.g., smart‑home actions, calendar entries). The authors argue that, while each individual element may appear innocuous, their combination yields a high‑resolution behavioural timeline that can be exploited if the cloud storage is compromised.

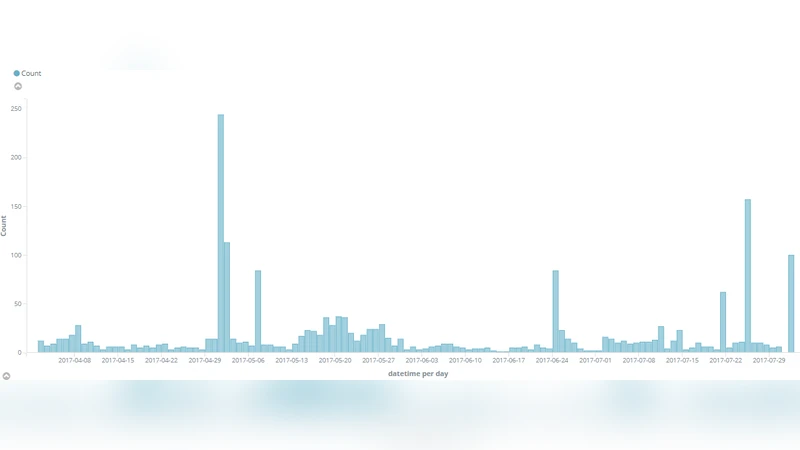

To demonstrate the practical implications, the authors collected a three‑month dataset from twelve real Alexa users, amounting to over 150,000 logged events. After anonymising personally identifying fields, they performed statistical and time‑series analyses. Key findings include:

- Daily and weekly usage rhythms – Peaks are observed in the early morning (7–9 am) for news and weather queries, a midday lull, and an evening surge (8–10 pm) dominated by music and podcast playback. Weekends show delayed wake‑up times and increased entertainment‑related commands.

- Interest profiling – By counting keyword occurrences (e.g., “sports”, “traffic”, “shopping”) the authors could infer dominant user interests with >85 % accuracy when compared to self‑reported surveys.

- Sleep‑wake inference – The time gap between the last “bedtime” command (alarm set, lights off) and the first “morning” command (alarm cancel, weather check) reliably estimates sleep duration (average 6.8 h) and wake‑up windows, with a standard deviation of ±45 minutes.

- Contextual skill usage – Correlation analysis reveals that certain skills are frequently co‑invoked (e.g., a cooking skill together with a timer and a music skill), exposing the user’s activity context (cooking, exercising, etc.).

- Behavioral fingerprinting – The combination of command frequency, timing, and skill patterns creates a unique behavioural signature that can be used to re‑identify users across devices or services.

The paper then explores threat scenarios. An adversary who gains read‑only access to the Alexa cloud could reconstruct a user’s daily schedule, infer home occupancy patterns, predict when the user is likely to be asleep, and even deduce social relationships through linked calendar or messaging skills. Such intelligence could be weaponised for targeted phishing, physical burglary, or blackmail. The authors note that current vendor safeguards—encryption in transit, optional data deletion, and limited retention policies—are often insufficiently transparent or configurable for end‑users.

In response, the authors propose a multi‑layered mitigation strategy:

- Privacy‑by‑design data minimisation – Collect only the data strictly necessary for a given feature, and discard raw audio after transcription unless explicitly retained by the user.

- Strong cryptographic protections – Deploy homomorphic encryption or secure multi‑party computation for server‑side analytics, ensuring that raw voice data never appears in plaintext on the cloud.

- Differential privacy – Add calibrated noise to aggregated usage statistics before storage, reducing the risk of re‑identification from statistical queries.

- User‑centric controls – Provide granular dashboards where users can view, export, and permanently delete each data category, and set custom retention periods.

- Regulatory alignment – Advocate for legislation analogous to GDPR/CCPA that specifically addresses voice‑assistant data, mandating explicit consent, purpose limitation, and the right to data portability.

The authors conclude that while IVAs deliver undeniable convenience, the richness of their cloud‑resident data makes them a potent source of personal intelligence when compromised. Their empirical study demonstrates that even a modest three‑month log can reveal detailed lifestyle, health, and preference information. Consequently, both industry and policymakers must adopt stronger technical safeguards and clearer legal frameworks to protect users from the emerging privacy threats posed by intelligent virtual assistants.

Comments & Academic Discussion

Loading comments...

Leave a Comment