A theory of sequence indexing and working memory in recurrent neural networks

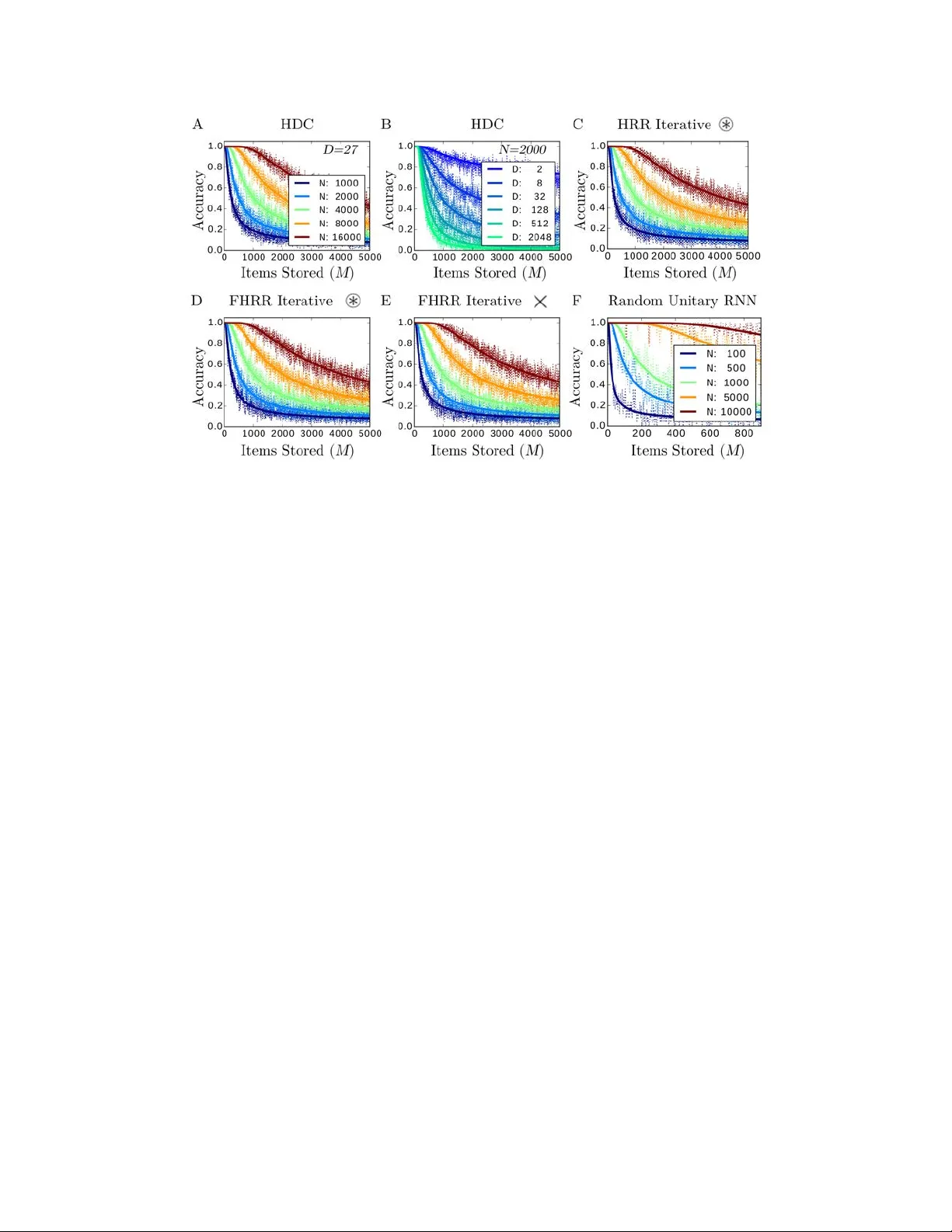

To accommodate structured approaches of neural computation, we propose a class of recurrent neural networks for indexing and storing sequences of symbols or analog data vectors. These networks with randomized input weights and orthogonal recurrent we…
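To make the indexing idea concrete, here is a minimal sketch. It is not the authors' implementation and rests on several assumptions. Random bipolar (+1/-1) vectors stand in for the randomized input weights Φ. A cyclic shift stands in for the orthogonal recurrent weights, since a shift is a permutation matrix P and therefore orthogonal. The state is updated as x(m) = P x(m-1) + Φ a(m). An item stored k steps ago is read out by undoing k shifts and picking the codebook vector with the largest dot product. The function names `make_codebook`, `rotate`, `encode`, and `decode` are hypothetical. The sketch covers only discrete symbols, not the analog data vectors or any capacity analysis.

```python
import random

def make_codebook(num_symbols, dim, rng):
    # Hypothetical random input weights: one bipolar (+1/-1) vector per symbol.
    return [[rng.choice((-1, 1)) for _ in range(dim)] for _ in range(num_symbols)]

def rotate(vec, k):
    # Cyclic shift to the right by k (negative k shifts left).
    # A permutation is orthogonal, and rotate(., -k) inverts rotate(., k).
    k %= len(vec)
    return vec[-k:] + vec[:-k]

def encode(sequence, codebook):
    # Recurrent update x(m) = P x(m-1) + Phi a(m), starting from the zero state.
    state = [0] * len(codebook[0])
    for symbol in sequence:
        state = rotate(state, 1)                                   # recurrent step
        state = [s + c for s, c in zip(state, codebook[symbol])]  # add input
    return state

def decode(state, position, length, codebook):
    # The item at `position` has been shifted (length - 1 - position) times.
    probe = rotate(state, -(length - 1 - position))
    # Nearest codebook vector by dot product; the other items act as crosstalk noise.
    scores = [sum(p * c for p, c in zip(probe, code)) for code in codebook]
    return max(range(len(codebook)), key=scores.__getitem__)

if __name__ == "__main__":
    rng = random.Random(0)
    codebook = make_codebook(num_symbols=10, dim=1000, rng=rng)
    seq = [3, 7, 1, 7, 0]
    state = encode(seq, codebook)
    print([decode(state, m, len(seq), codebook) for m in range(len(seq))])
```

With a large dimension relative to sequence length, the dot product with the correct symbol (about `dim`) dominates the random crosstalk from the other stored items (on the order of the square root of `dim` per item). This separation is what lets a single superposed state index several items by position.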

Authors: The author list stated in the original was not provided, so the exact information cannot be confirmed. Please refer to the original paper.