Chipmunk: A Systolically Scalable 0.9 mm${}^2$, 3.08 Gop/s/mW @ 1.2 mW Accelerator for Near-Sensor Recurrent Neural Network Inference

Recurrent neural networks (RNNs) are state-of-the-art in voice awareness/understanding and speech recognition. On-device computation of RNNs on low-power mobile and wearable devices would be key to applications such as zero-latency voice-based human-machine interfaces. Here we present Chipmunk, a small (<1 mm${}^2$) hardware accelerator for Long Short-Term Memory RNNs in UMC 65 nm technology, capable of operating at a measured peak efficiency of up to 3.08 Gop/s/mW at 1.24 mW peak power. To implement big RNN models without incurring huge memory transfer overhead, multiple Chipmunk engines can cooperate to form a single systolic array. In this way, the Chipmunk architecture in a 75-tile configuration can achieve real-time phoneme extraction on a demanding RNN topology proposed by Graves et al., consuming less than 13 mW of average power.

💡 Research Summary

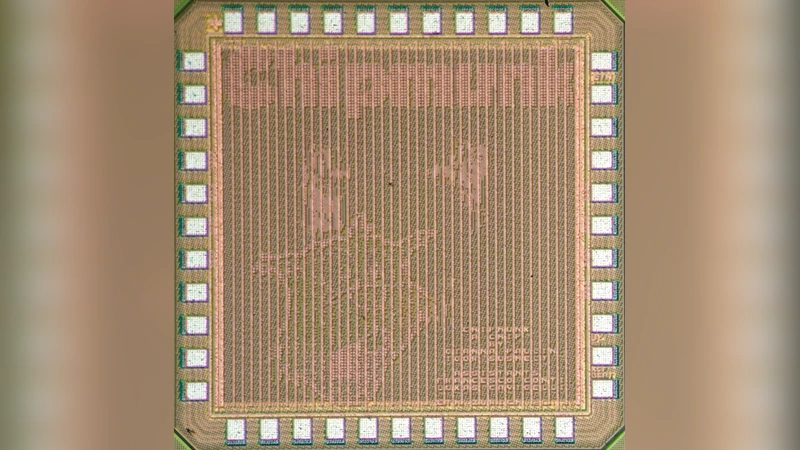

The paper presents Chipmunk, a compact hardware accelerator designed specifically for Long Short‑Term Memory (LSTM) recurrent neural networks (RNNs). Implemented in a 65 nm UMC CMOS process, a single Chipmunk tile has a core area of 0.93 mm² (1.57 mm² total die area) and contains 96 parallel LSTM units. Each unit stores its weights and biases in 8‑bit fixed‑point format, while the multiply‑accumulate (MAC) datapath operates at 16‑bit precision to avoid overflow. The architecture reduces the LSTM computation to three primitive operations (matrix‑vector multiplication, element‑wise multiplication, and non‑linear activation) and implements them in a single, highly pipelined datapath.
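To make the three‑primitive decomposition concrete, here is a minimal floating‑point sketch of one LSTM time step built only from a matrix‑vector product, an element‑wise product, and an activation function. It assumes the standard LSTM formulation without peephole connections; the function names and the `params` layout are illustrative and do not come from the paper, and the sketch ignores the 8‑bit/16‑bit fixed‑point arithmetic of the real datapath.

```python
import math

def matvec(M, v):
    """Primitive 1: matrix-vector multiplication."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def hadamard(a, b):
    """Primitive 2: element-wise multiplication."""
    return [p * q for p, q in zip(a, b)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def activate(fn, v):
    """Primitive 3: non-linear activation applied element by element."""
    return [fn(z) for z in v]

def lstm_step(x, h_prev, c_prev, params):
    """One LSTM step (assumed standard form, no peepholes).

    params maps a gate name ("i", "f", "o", "g") to a (W, b) pair, where W
    acts on the concatenation [x, h_prev]. This layout is hypothetical.
    """
    xh = x + h_prev  # list concatenation of input and previous hidden state
    gates = {}
    for name in ("i", "f", "o", "g"):
        W, b = params[name]
        pre = [a + bias for a, bias in zip(matvec(W, xh), b)]
        gates[name] = activate(math.tanh if name == "g" else sigmoid, pre)
    # New cell state: forget gate scales the old state, input gate scales the candidate.
    c = [u + v for u, v in zip(hadamard(gates["f"], c_prev),
                               hadamard(gates["i"], gates["g"]))]
    # New hidden state: output gate scales the squashed cell state.
    h = hadamard(gates["o"], activate(math.tanh, c))
    return h, c
```

Every arithmetic step in `lstm_step` goes through one of the three primitives plus vector addition, which is what allows a single shared datapath to serve all four gates.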

All weight parameters are kept on‑chip in 81.7 kB of SRAM, eliminating the high‑bandwidth external memory accesses that dominate the energy consumption of many FPGA‑based or ASIC RNN accelerators. Input vectors (current acoustic frame xₜ and previous hidden state hₜ₋₁) are broadcast to all LSTM units. Each unit steps through the column‑wise inner loop of the matrix‑vector product sequentially, while the row‑wise outer loop is executed in parallel across the 96 units. Sigmoid and tanh activations are realized with lookup tables, providing fast 8‑bit to 8‑bit conversion.
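The broadcast dataflow and the lookup‑table activations can be sketched in plain integer arithmetic. The summary gives only the bit widths (8‑bit weights, a 16‑bit accumulator, 8‑bit to 8‑bit tables), so the input and output scales of the table, the saturation behaviour, and the shift used to reduce the accumulator to a table index are all assumptions made for illustration.

```python
import math

def build_lut(fn, in_scale=16.0, out_scale=127.0):
    """256-entry table from signed 8-bit code to signed 8-bit code.

    in_scale and out_scale are assumed fixed-point scales, not values from the paper.
    """
    return [int(round(fn(code / in_scale) * out_scale)) for code in range(-128, 128)]

def lut_lookup(lut, code):
    """Index the table with a signed 8-bit code in [-128, 127]."""
    return lut[code + 128]

def saturate(value, bits):
    """Clamp an integer to the signed range of the given width (assumed behaviour)."""
    lo, hi = -(1 << (bits - 1)), (1 << (bits - 1)) - 1
    return max(lo, min(hi, value))

def matvec_broadcast(weights, vector):
    """Matrix-vector product in the broadcast order described above."""
    acc = [0] * len(weights)
    for col, v in enumerate(vector):        # sequential in time: one broadcast element per step
        for row in range(len(weights)):     # parallel in hardware: one row per LSTM unit
            acc[row] = saturate(acc[row] + weights[row][col] * v, 16)
    return acc

def activate(acc, lut, shift=8):
    """Reduce a 16-bit accumulator to an 8-bit code (assumed shift), then look it up."""
    return lut_lookup(lut, saturate(acc >> shift, 8))
```

A table built with `build_lut(math.tanh)` can be passed to `activate` for each entry returned by `matvec_broadcast`. In this software model the inner `for row` loop stands in for the units that work side by side in hardware, so the number of sequential steps depends on the vector length rather than on the number of rows.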

Measured silicon results show a peak throughput of 32.2 Gop/s at 1.24 V and a maximum energy efficiency of 3.08 Gop/s/mW at 0.75 V, where the chip delivers about 3.8 Gop/s while drawing 1.24 mW. The operating frequency ranges from 20 MHz (0.75 V) to 168 MHz (1.24 V), with power consumption spanning 1.2 mW to 29 mW. These figures translate to an area efficiency of 34.4 Gop/s/mm², surpassing prior ultra‑low‑power designs such as the DNPU and far exceeding FPGA implementations.
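The throughput figures can be approximately reproduced from the unit count and clock frequency. The sketch below assumes that each of the 96 units completes one MAC per cycle and that a MAC counts as two operations; neither assumption is stated in the summary, so this is a plausibility check, not the authors' accounting.

```python
UNITS = 96        # parallel LSTM units per tile (from the summary)
OPS_PER_MAC = 2   # assumption: one multiply plus one add per MAC

def throughput_gops(freq_mhz, units=UNITS, ops_per_mac=OPS_PER_MAC):
    """Peak throughput in Gop/s, assuming one MAC per unit per cycle."""
    return units * ops_per_mac * freq_mhz * 1e6 / 1e9

def efficiency_gops_per_mw(gops, power_mw):
    """Energy efficiency in Gop/s/mW."""
    return gops / power_mw

peak = throughput_gops(168)   # high-voltage operating point, 168 MHz
low = throughput_gops(20)     # low-voltage operating point, 20 MHz
low_efficiency = efficiency_gops_per_mw(low, 1.24)  # 1.24 mW from the abstract
```

Under these assumptions `peak` comes out close to the reported 32.2 Gop/s and `low_efficiency` close to the reported 3.08 Gop/s/mW; the small residual differences presumably reflect rounding in the published figures.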

Scalability is achieved through a systolic array of tiles. In this configuration, the input vector is partitioned and streamed vertically across columns, while each row computes a partial matrix‑vector product and passes the accumulated result horizontally to the next column. The final column produces the updated hidden state, which is then redistributed for the next time step. This approach allows the weight matrices to be split among many tiles, drastically reducing the per‑tile memory requirement and limiting inter‑tile communication to small intermediate results. A 75‑tile array (e.g., a 3 × 5 × 5 layout) can process a demanding 3‑layer LSTM with 421 units per layer (CTC‑3L‑421H‑UNI) in less than the 10 ms frame period required for real‑time speech processing, at an average power of about 12.5 mW.
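The partitioning scheme can be illustrated with a small software model of a tiled matrix‑vector product. Each tile holds only its own block of the weight matrix and sees only its own segment of the input vector, and partial sums travel from left to right along a tile row. The function names and the near‑equal chunking rule are illustrative assumptions; the model captures the data partitioning only, not cycle‑level timing or the redistribution of the hidden state.

```python
def split(indices, parts):
    """Split a list of indices into `parts` contiguous chunks (some may be empty)."""
    size = -(-len(indices) // parts)  # ceiling division
    return [indices[i * size:(i + 1) * size] for i in range(parts)]

def systolic_matvec(W, x, tile_rows, tile_cols):
    """Compute W @ x on a tile_rows x tile_cols grid of tiles (functional model)."""
    row_blocks = split(list(range(len(W))), tile_rows)
    col_blocks = split(list(range(len(x))), tile_cols)
    out = []
    for rows in row_blocks:            # each tile row owns a slice of the output
        partial = [0] * len(rows)      # partial sums entering the leftmost tile
        for cols in col_blocks:        # hand partial sums to the next tile on the right
            # This tile stores only W[rows][cols] and receives only x[cols].
            partial = [p + sum(W[r][c] * x[c] for c in cols)
                       for p, r in zip(partial, rows)]
        out.extend(partial)            # the last tile in the row emits finished sums
    return out
```

For any grid shape, `systolic_matvec(W, x, 5, 5)` returns the same vector as an untiled product, while each tile stores roughly 1/25 of the weights. This is the property that lets a large layer fit into the small per‑tile memory.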

The authors benchmark Chipmunk against state‑of‑the‑art solutions. Compared with the Google TPU, Chipmunk’s raw performance is lower, but its area and power are orders of magnitude smaller, making it suitable for mobile and wearable devices. Against the DNPU, Chipmunk delivers 39 % higher energy efficiency while also providing a dedicated systolic scaling mechanism to address the memory‑bound nature of RNNs.

In summary, Chipmunk demonstrates that a sub‑millimeter‑square accelerator tile, replicated in a systolic array, can deliver real‑time LSTM inference at an average power below 13 mW, making on‑device speech recognition feasible without relying on cloud services. Future work may explore larger systolic fabrics, weight compression or pruning techniques, and support for more complex recurrent structures such as bidirectional LSTMs, further extending the applicability of this ultra‑efficient architecture.
