Directional and Causal Information Flow in EEG for Assessing Perceived Audio Quality

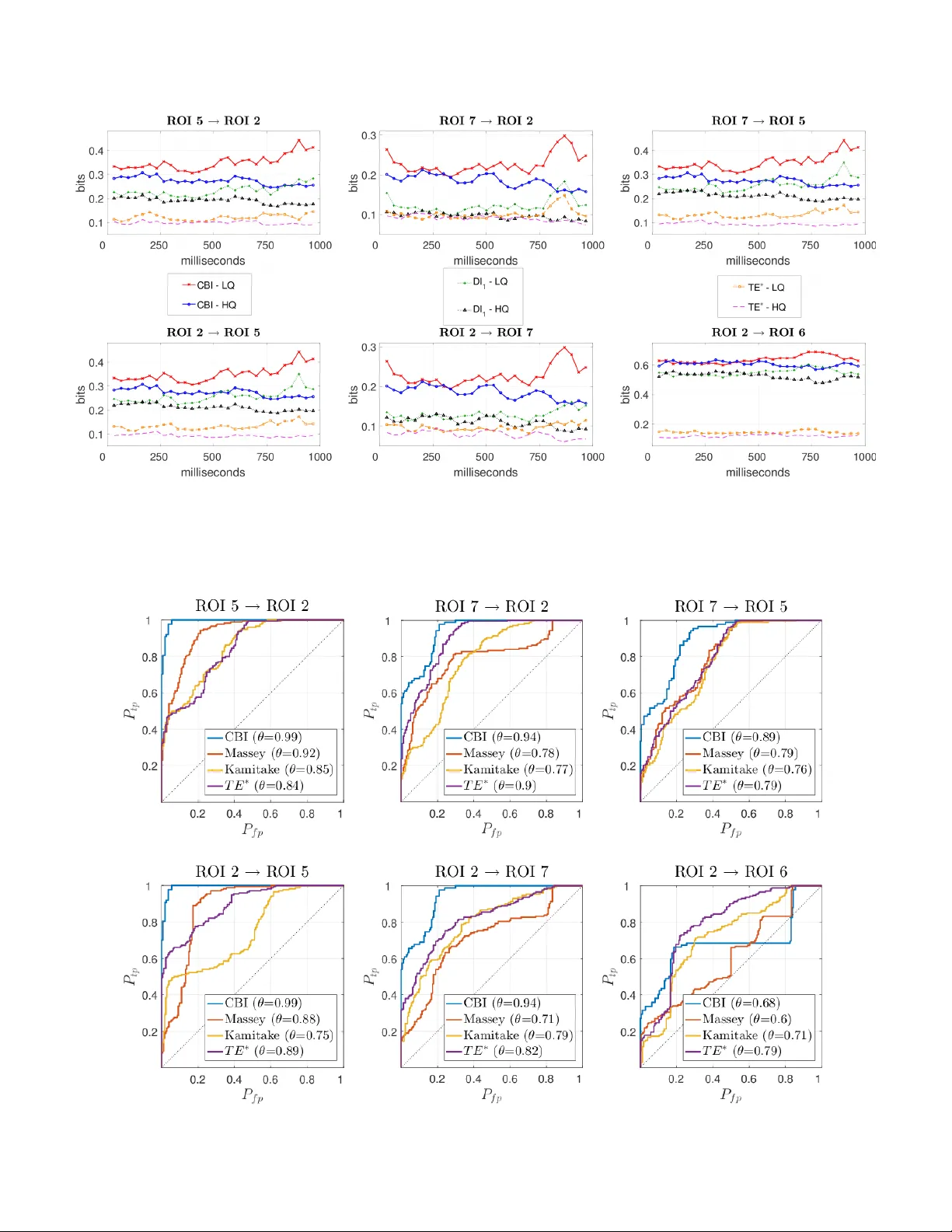

In this paper, electroencephalography (EEG) measurements are used to infer changes in cortical functional connectivity in response to changes in the audio stimulus. Experiments are conducted wherein the EEG activity of human subjects is recorded as they li…

Authors: Ketan Mehta, Joerg Kliewer