Conditioning of three-dimensional generative adversarial networks for pore and reservoir-scale models

Geostatistical modeling of petrophysical properties is a key step in modern integrated oil and gas reservoir studies. Recently, generative adversarial networks (GANs) have been shown to be a successful method for generating unconditional simulations of pore- and reservoir-scale models. This contribution leverages the differentiable nature of neural networks to extend GANs to the conditional simulation of three-dimensional pore- and reservoir-scale models. Building on the previous work of Yeh et al. (2016), we use a content loss to constrain the simulation to the conditioning data and a perceptual loss obtained from the evaluation of the GAN discriminator network. The technique is tested on the generation of three-dimensional micro-CT images of a Ketton limestone constrained by two-dimensional cross-sections, and on the simulation of the Maules Creek alluvial aquifer constrained by one-dimensional sections. Our results show that GANs are a powerful method for generating conditioned pore- and reservoir-scale samples for stochastic reservoir evaluation workflows.

💡 Research Summary

The paper presents a framework for conditional simulation of three‑dimensional geological models using Generative Adversarial Networks (GANs). Traditional geostatistical approaches, such as variogram‑based Gaussian simulation and multiple‑point statistics (MPS) methods including direct sampling, can generate realistic models but often suffer from high computational cost and difficulty conditioning to sparse data. This is especially true at the pore scale, where typically only two‑dimensional thin sections are available. Leveraging the differentiable nature of deep neural networks, the authors extend unconditional 3‑D GANs to a conditional setting: they keep a pre‑trained generator fixed and directly optimize its latent vector z so that the generated volume matches prescribed lower‑dimensional observations.
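To make "lower‑dimensional observations" concrete, here is a small sketch of how a 2‑D cross‑section and a 1‑D vertical well could be turned into the 3‑D indicator mask that a masked content loss needs. It assumes a voxel grid indexed as mask[k][j][i] with k vertical; the helpers `empty_mask`, `add_cross_section` and `add_well` are hypothetical and are not taken from the authors' geogan repository.

```python
from typing import List

Grid3D = List[List[List[int]]]


def empty_mask(nz: int, ny: int, nx: int) -> Grid3D:
    """All-zero indicator mask, indexed as mask[k][j][i] (k = vertical; assumed layout)."""
    return [[[0] * nx for _ in range(ny)] for _ in range(nz)]


def add_cross_section(mask: Grid3D, axis: int, index: int) -> None:
    """Flag the 2-D plane where coordinate number `axis` (0=k, 1=j, 2=i) equals `index`."""
    for k, plane in enumerate(mask):
        for j, row in enumerate(plane):
            for i in range(len(row)):
                if (k, j, i)[axis] == index:
                    row[i] = 1


def add_well(mask: Grid3D, j: int, i: int) -> None:
    """Flag a vertical 1-D column (a well log) at horizontal position (j, i)."""
    for plane in mask:
        plane[j][i] = 1


mask = empty_mask(4, 4, 4)
add_cross_section(mask, axis=0, index=1)  # one 4 x 4 slice -> 16 cells
add_well(mask, j=0, i=0)                  # 4 cells, one already inside the slice
n_cond = sum(v for plane in mask for row in plane for v in row)
print(n_cond)  # 19
```

Voxels flagged 1 contribute to the content loss and all others are left free for the generator to fill in.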

The methodological core combines two loss terms. The “content loss” is a masked mean‑squared error computed only at the locations of conditioning data (e.g., 2‑D cross‑sections or 1‑D well logs); minimizing it drives the generated volume toward agreement with the observed values. The second term, the “perceptual loss,” is derived from the discriminator’s output as log(1 − D(G(z))). Minimizing it pushes D(G(z)) toward 1, which encourages the generated sample to remain realistic with respect to the training distribution. The total loss L_total = L_content + λ·L_perceptual, where λ weights the perceptual term, is minimized with respect to z using stochastic gradient descent. Optimization stops when the content loss falls below a predefined threshold (1e‑3 for gray‑scale images) or, for binary indicator models, when the conditioning points are matched perfectly.
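The conditioning loop described above can be sketched in a few dozen lines under loudly stated assumptions. The "generator" is a hypothetical fixed linear map G(z) = W z standing in for a pre‑trained 3‑D network. The "discriminator" is a hypothetical logistic model D(x) = sigmoid(a·x + b), chosen so that log(1 − D) is defined. Gradients are written out by hand rather than obtained by automatic differentiation, and plain full‑batch gradient descent replaces the stochastic optimizer used in the paper. The names `condition_latent`, `lam`, `lr` and `tol` are illustrative only.

```python
import math
import random
from typing import List, Sequence, Tuple

Vector = List[float]
Matrix = List[List[float]]


def generate(W: Matrix, z: Sequence[float]) -> Vector:
    """Hypothetical stand-in for a pre-trained generator: x = W z (flattened volume)."""
    return [sum(w * zj for w, zj in zip(row, z)) for row in W]


def sigmoid(s: float) -> float:
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def softplus(s: float) -> float:
    """Numerically stable log(1 + exp(s))."""
    return max(s, 0.0) + math.log1p(math.exp(-abs(s)))


def losses(x, y, mask, a, b) -> Tuple[float, float]:
    """Masked-MSE content loss and perceptual loss log(1 - D(x))."""
    content = sum(m * (xi - yi) ** 2 for m, xi, yi in zip(mask, x, y)) / sum(mask)
    s = sum(ai * xi for ai, xi in zip(a, x)) + b  # toy discriminator logit
    perceptual = -softplus(s)  # log(1 - sigmoid(s)) == -log(1 + exp(s))
    return content, perceptual


def condition_latent(W, y, mask, a, b, z0, lam=0.01, lr=0.05, tol=1e-3, max_iter=2000):
    """Gradient descent on z for L_total = L_content + lam * L_perceptual.
    Stops once the content loss drops below tol."""
    z, history = list(z0), []
    m_total = sum(mask)
    for _ in range(max_iter):
        x = generate(W, z)
        content, _ = losses(x, y, mask, a, b)
        history.append(content)
        if content < tol:
            break
        d = sigmoid(sum(ai * xi for ai, xi in zip(a, x)) + b)
        # dL/dx_i: masked-MSE gradient plus lam * d/dx_i log(1 - sigmoid(s)) = -lam * d * a_i
        grad_x = [2.0 * m * (xi - yi) / m_total - lam * d * ai
                  for m, xi, yi, ai in zip(mask, x, y, a)]
        # Chain rule through x = W z: dL/dz_j = sum_i W[i][j] * dL/dx_i
        grad_z = [sum(W[i][j] * grad_x[i] for i in range(len(W))) for j in range(len(z))]
        z = [zj - lr * gj for zj, gj in zip(z, grad_z)]
    return z, history


# Toy problem: 8 "voxels", 4 latent dimensions, conditioning data at 3 voxels.
rng = random.Random(0)
n, k = 8, 4
W = [[rng.uniform(-1.0, 1.0) for _ in range(k)] for _ in range(n)]
a = [rng.uniform(-0.5, 0.5) for _ in range(n)]
y = generate(W, [rng.gauss(0.0, 1.0) for _ in range(k)])  # observations the toy G can reach
mask = [1, 0, 0, 1, 0, 1, 0, 0]
z0 = [rng.gauss(0.0, 1.0) for _ in range(k)]
z_opt, hist = condition_latent(W, y, mask, a, 0.0, z0)
print(f"content loss {hist[0]:.4f} -> {hist[-1]:.6f} in {len(hist)} steps")
```

Keeping λ small lets the content term dominate near the data while the perceptual term still nudges z in directions the conditioning data do not constrain. In a real network, both gradients would come from backpropagation through G and D.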

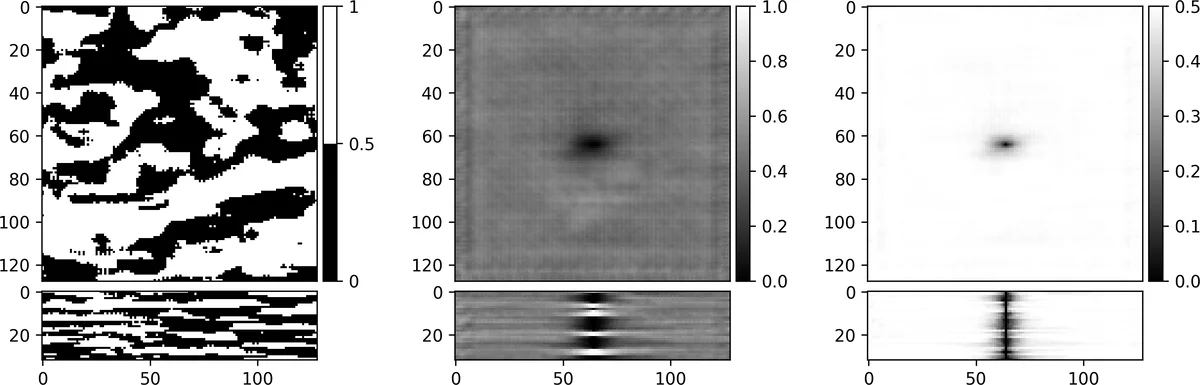

Training employs a Wasserstein‑GAN with a single‑sided gradient penalty to improve stability, and both generator and discriminator are implemented as 3‑D deep convolutional networks (DCGAN).

Two case studies demonstrate the approach. The first uses a micro‑CT scan of Ketton limestone, with orthogonal 2‑D slices extracted from the volume serving as conditioning data. Optimized latent vectors produce 3‑D realizations that reproduce the slices to within the content‑loss threshold while exhibiting diverse pore structures away from the conditioning planes, illustrating how low‑dimensional constraints influence the full volume.

The second case involves the Maules Creek alluvial aquifer training image. Conditioning to a single central well (1‑D data) and generating 1,024 realizations on a single GPU took roughly eight hours. The ensemble mean and standard deviation maps reveal an elliptical region of influence around the well, and each realization matches the well indicator perfectly, confirming the method’s ability to honor point data while preserving variability.
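The single‑sided gradient penalty used in training can be sketched as follows. This assumes it refers to the one‑sided variant of the WGAN‑GP penalty, which penalizes the critic's gradient norm only when it exceeds 1 at points interpolated between real and generated samples. The critic here is a hypothetical toy function with an analytic gradient, whereas a real implementation would obtain the gradient by automatic differentiation. The value lam = 10 is the common WGAN‑GP default, not a value reported by the authors.

```python
import math
import random
from typing import List, Sequence


def critic_grad(w: Sequence[float], x: Sequence[float]) -> List[float]:
    """Analytic gradient of a hypothetical toy critic D(x) = 0.5 * sum(w_i * x_i**2)."""
    return [wi * xi for wi, xi in zip(w, x)]


def gradient_penalty(w, real, fake, eps, lam=10.0, one_sided=True) -> float:
    """Penalty on ||grad D(x_hat)|| at x_hat = eps * real + (1 - eps) * fake."""
    x_hat = [eps * r + (1.0 - eps) * f for r, f in zip(real, fake)]
    norm = math.sqrt(sum(g * g for g in critic_grad(w, x_hat)))
    excess = norm - 1.0
    if one_sided:
        excess = max(0.0, excess)  # gradient norms below 1 are not penalized
    return lam * excess ** 2


def batch_penalty(w, reals, fakes, lam=10.0, one_sided=True, seed=0) -> float:
    """Average penalty over a batch, drawing eps ~ U[0, 1] per sample pair."""
    rng = random.Random(seed)
    terms = [gradient_penalty(w, r, f, rng.random(), lam, one_sided)
             for r, f in zip(reals, fakes)]
    return sum(terms) / len(terms)


w = [1.0, 1.0]
# Gradient norm 0.5 at x_hat = [0.3, 0.4]: only the two-sided form penalizes it.
print(gradient_penalty(w, [0.3, 0.4], [0.0, 0.0], eps=1.0, one_sided=False))  # ~2.5
print(gradient_penalty(w, [0.3, 0.4], [0.0, 0.0], eps=1.0, one_sided=True))   # 0.0
# Gradient norm 5 at x_hat = [3, 4]: both forms give 10 * (5 - 1)**2 = 160.
print(gradient_penalty(w, [3.0, 4.0], [0.0, 0.0], eps=1.0))
```

The one‑sided form only enforces the 1‑Lipschitz bound from above, so a critic with small gradients in some regions is not pushed to steepen them.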

The authors note minor artifacts in the ensemble mean caused by transposed convolution layers in the generator architecture, but overall the conditioned samples retain high geological realism and statistical diversity. By releasing the code (github.com/LukasMosser/geogan) and providing detailed processing steps, the work supports reproducibility and facilitates adoption in reservoir engineering workflows such as upscaling, flow simulation, and uncertainty quantification.
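Artifacts of this kind are commonly attributed to uneven overlap in strided transposed convolutions. The sketch below is a generic 1‑D illustration of that mechanism, not the authors' generator, and the kernel sizes are illustrative. With a constant input, a kernel whose size is not divisible by the stride produces an alternating interior, while a divisible kernel size overlaps evenly. In a 3‑D generator the same effect can occur along each axis.

```python
from typing import List, Sequence


def conv_transpose_1d(x: Sequence[float], kernel: Sequence[float], stride: int) -> List[float]:
    """Naive 1-D transposed convolution (no padding): scatter-add each input
    value, scaled by the kernel, starting at offset i * stride."""
    out = [0.0] * ((len(x) - 1) * stride + len(kernel))
    for i, xi in enumerate(x):
        for k, wk in enumerate(kernel):
            out[i * stride + k] += xi * wk
    return out


flat = [1.0] * 4
uneven = conv_transpose_1d(flat, [1.0, 1.0, 1.0], stride=2)     # kernel 3, stride 2
even = conv_transpose_1d(flat, [1.0, 1.0, 1.0, 1.0], stride=2)  # kernel 4, stride 2
print(uneven)  # [1.0, 1.0, 2.0, 1.0, 2.0, 1.0, 2.0, 1.0, 1.0]
print(even)    # [1.0, 1.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 1.0, 1.0]
```

Common mitigations include choosing kernel sizes divisible by the stride, or replacing transposed convolutions with upsampling followed by a regular convolution.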

In conclusion, the study demonstrates that conditional GANs, equipped with a combined content‑and‑perceptual loss, can efficiently generate three‑dimensional pore‑scale and reservoir‑scale models that satisfy sparse observational constraints. This approach bridges the gap between high‑resolution imaging and large‑scale stochastic modeling, offering a powerful tool for modern integrated reservoir studies.
