Automatic Conflict Detection in Police Body-Worn Audio

Automatic conflict detection has grown in relevance with the advent of body-worn technology, but existing metrics such as turn-taking and overlap are poor indicators of conflict in police-public interactions. Moreover, standard techniques to compute them fall short when applied to such diversified and noisy contexts. We develop a pipeline catered to this task combining adaptive noise removal, non-speech filtering and new measures of conflict based on the repetition and intensity of phrases in speech. We demonstrate the effectiveness of our approach on body-worn audio data collected by the Los Angeles Police Department.

💡 Research Summary

The paper addresses the problem of automatically detecting conflict in police body‑worn audio (BWA), a data source that is increasingly used for accountability but generates far more recordings than can be manually reviewed. Existing work on conflict detection in other domains (e.g., political debates) relies heavily on conversational metrics such as turn‑taking and speech overlap. The authors argue that these metrics are unreliable for police‑public interactions because BWA recordings are heavily contaminated by environmental noise (traffic, sirens, radio chatter) and because conflict often manifests as repeated, loud commands rather than interruptions.

To overcome these challenges the authors propose a three‑stage processing pipeline: (1) adaptive noise reduction, (2) speech‑vs‑non‑speech discrimination, and (3) conflict scoring based on repetition detection and intensity measurement.

Stage 1 – Adaptive Denoising

The authors combine Optimally‑Modified Log‑Spectral Amplitude (OM‑LSA) estimation with Minima‑Controlled Recursive Averaging (MCRA). After a short‑time Fourier transform (STFT) of the observed signal y(n)=x(n)+d(n), they compute a spectral gain G(k,l) that depends on the a‑posteriori speech presence probability p(k,l), the a‑priori SNR ξ(k,l), and a lower gain bound G_min. The gain is applied three times in succession to suppress persistent background noise while preserving weak speech components. Sudden bursts (e.g., sirens) are handled by a separate short‑time suppression step.

Stage 2 – Speech/Non‑Speech Filtering

The denoised signal is segmented into 60 ms frames with 20 ms overlap. For each frame 23 short‑term acoustic features are extracted: 13 MFCCs, zero‑crossing rate, energy, energy entropy, spectral centroid, spread, entropy, flux, roll‑off, fundamental frequency, and harmonic‑to‑noise ratio. Mid‑term features are obtained by averaging over 3 s windows with 0.1 s steps. A radial‑basis‑function (RBF) support vector machine (SVM) is trained on 38 min of labeled speech (22 733 frames) and 47 min of non‑speech (28 239 frames) from BWA recordings. Ten‑fold cross‑validation yields a total error of 2.31 %, substantially better than the 5–12 % reported for comparable methods on cleaner data.

Stage 3 – Conflict Scoring

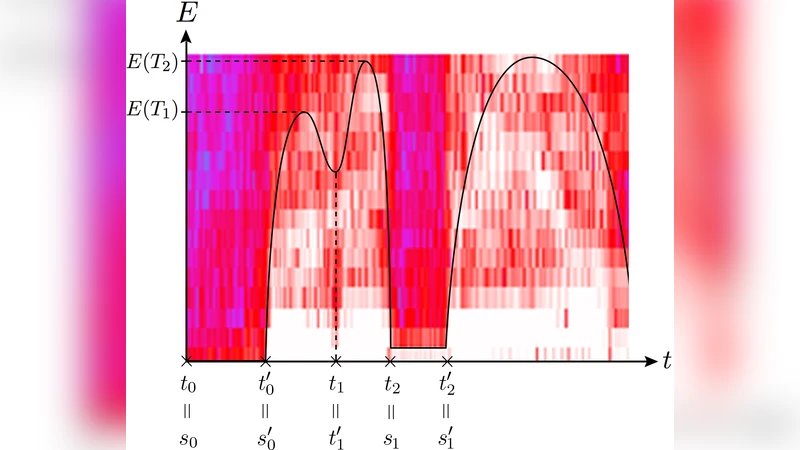

After speech extraction, the authors focus on detecting repeated utterances, which they hypothesize are a strong proxy for conflict (e.g., an officer repeatedly issuing a command). They first isolate candidate regions using an energy‑based segmentation: a band‑pass filter (300–3000 Hz) is applied, windows with energy below 0.05 are discarded, and local minima/maxima of the energy envelope define syllable‑ or word‑length segments. Segments shorter than 0.05 s are removed; longer segments are merged if the gap between them is less than 0.02 s.

Two complementary similarity measures are then computed for every pair of candidate regions:

-

Fingerprint similarity – Each region is divided into N = 0.1 s time windows and M = 32 frequency bands. Second‑order finite differences of the band‑energy matrix are binarized to produce a binary fingerprint F(n,m). The dissimilarity E is the percentage of mismatched bits. Empirically, E < 0.30 indicates a strong repeat, while E > 0.45 signals non‑repeat.

-

Fourier‑coefficient correlation – For each region the short‑time Fourier coefficients (300–3000 Hz) are computed over overlapping windows. For each frequency band m, the Pearson correlation C(m) between the two regions is calculated, and the average over all bands yields C. Values C > 0.55 denote high similarity; 0.25 ≤ C ≤ 0.55 denote moderate similarity.

The two measures are mapped to confidence functions f₁(E) and f₂(C) using piecewise linear formulas derived from empirical observations. The combined repetition score for a pair is S(E,C)=p·f₁(E)·f₂(C), where p is a scaling factor. For an entire audio file, the authors take the average of non‑zero scores among the top 5 % of unique region comparisons, producing a file‑level repetition score.

To capture the “intensity” aspect of conflict, the average energy of the same top‑5 % repeated segments is computed, and the final conflict score is the product of the repetition score and the intensity score.

Evaluation

The pipeline is evaluated on 105 BWA recordings supplied by the Los Angeles Police Department (LAPD), each 3–30 minutes long. Human annotators labeled each file as high conflict (3 files), mild conflict (15 files), or low/no conflict (87 files). The conflict scores produced by the system rank all high‑conflict files within the top 10 positions, and 14 of the 18 mild/high files appear within the top 23 positions. Consequently, reviewing only the top 23 % of files would retrieve 78 % of all conflict instances, demonstrating a substantial reduction in manual workload. Mean scores for the three classes are well separated, confirming that the repetition‑plus‑intensity metric captures the intended phenomenon.

The authors also discuss limitations and future work. Non‑officer speech (e.g., by by‑standers) often degrades performance because it is less intelligible and can generate false positives. They propose integrating speaker diarization or speaker verification (using a short clean voice sample collected from each officer) to isolate officer speech before conflict scoring. Current diarization systems achieve 18–30 % error on clean meeting data and perform worse on noisy BWA; thus, additional labeled data and domain‑specific models are needed.

Conclusion

The paper introduces a novel, noise‑robust pipeline that leverages adaptive denoising, supervised speech detection, and repetition‑based conflict metrics to automatically prioritize police body‑worn audio recordings for human review. Despite a limited number of high‑conflict examples, the system successfully isolates the most conflict‑laden files, offering a practical tool for law‑enforcement agencies. The authors suggest that incorporating officer‑only speech and treating the three sub‑scores (fingerprint similarity, Fourier correlation, intensity) as features for a supervised classifier could further improve discrimination. The work represents a concrete step toward scalable, data‑driven oversight of police‑public interactions.

Comments & Academic Discussion

Loading comments...

Leave a Comment