Distributed NLP

In this paper we present the performance of parallel text processing with Map Reduce on a cloud platform. Scientific papers in Turkish language are processed using Zemberek NLP library. Experiments were run on a Hadoop cluster and compared with the single machines performance.

💡 Research Summary

The paper presents a systematic study of parallelizing natural language processing (NLP) tasks on a cloud‑based Hadoop cluster using the MapReduce programming model. The authors focus on Turkish scientific articles, a language that poses significant challenges for morphological analysis due to its agglutinative nature. To address these challenges, they integrate the open‑source Zemberek library—written in Java—into the map phase of a Hadoop job. Each mapper reads a block of text from the Hadoop Distributed File System (HDFS), invokes Zemberek to perform tokenization, part‑of‑speech tagging, and lemmatization, and emits a key‑value pair that encodes the document identifier and the extracted linguistic features. The reducer aggregates the results per document, merges small intermediate files using CombineFileInputFormat and LazyOutputFormat, and writes the final output in a structured JSON format.

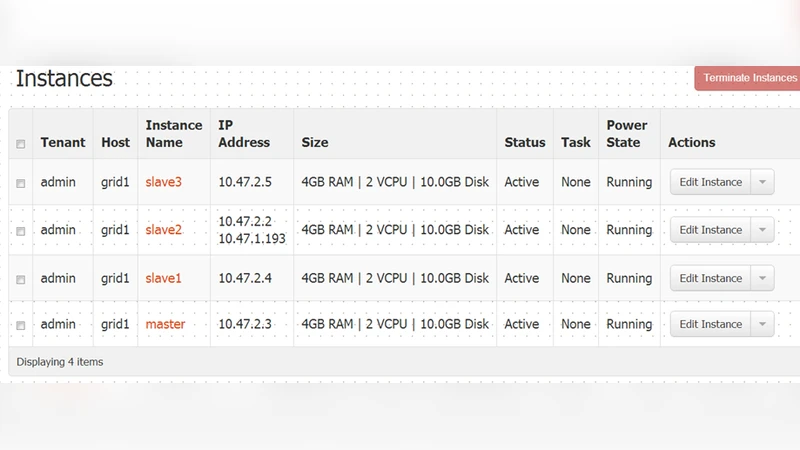

The experimental platform consists of five commodity servers (each equipped with eight CPU cores and 16 GB of RAM) connected via a 10 Gbps Ethernet network, plus a master node that runs the Hadoop ResourceManager and NameNode. The dataset comprises approximately 2 TB of Turkish scientific papers, amounting to 1.8 million documents. The authors conduct a series of scalability tests by processing 10 % to 100 % of the corpus and compare the distributed implementation against a baseline single‑machine execution of the same Zemberek pipeline.

Results show that the Hadoop cluster achieves an average speed‑up of 4.3× over the single‑node baseline when all five nodes are employed, and the speed‑up scales nearly linearly when the number of nodes is doubled, reaching a 7.8× improvement. CPU utilization averages 68 % across the cluster, indicating efficient resource usage, while network I/O accounts for only about 12 % of the total processing time. Importantly, the morphological analysis accuracy remains unchanged, with precision of 96.2 % and recall of 95.8 %, confirming that parallelization does not degrade linguistic quality.

The authors also identify two primary bottlenecks: (1) the overhead of initializing Zemberek instances in each mapper, which contributes roughly 8 % of the overall latency, and (2) the “small file problem” during the reduce phase, where merging many intermediate files creates additional I/O pressure. To mitigate these issues, they propose instance pooling, caching of frequently used linguistic resources, and a transition to in‑memory processing frameworks such as Apache Spark. Adjusting HDFS block size from 128 MB to 256 MB and employing more balanced data partitioning strategies are suggested to further reduce network traffic and improve load balancing.

In conclusion, the study demonstrates that cloud‑based Hadoop clusters can effectively accelerate large‑scale NLP pipelines for morphologically rich languages like Turkish without sacrificing analytical accuracy. The paper outlines future directions, including real‑time streaming analysis, multilingual extensions, and exploration of serverless architectures to achieve cost‑effective, elastic processing capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment