Distributed Log Analysis on the Cloud Using MapReduce

In this paper we describe our work on designing a web-based, distributed data analysis system built on the popular MapReduce framework and deployed on a small cloud, developed specifically for analyzing web server logs. The log analysis system consists of several cluster nodes; it splits large log files across a distributed file system and processes them quickly using the MapReduce programming model. The cluster is created using an open source cloud infrastructure, which allows us to easily expand the computational power by adding new nodes. This gives us the ability to automatically resize the cluster according to the data analysis requirements. We implemented MapReduce programs for basic log analysis needs such as frequency analysis, error detection, and busy hour detection, as well as more complex analyses that require running several jobs. The system can automatically identify and analyze several web server log types, such as Apache, IIS, and Squid. We use open source projects for creating the cloud infrastructure and running MapReduce jobs.

💡 Research Summary

The paper presents a complete solution for large‑scale web server log analysis by combining an open‑source private cloud (OpenStack) with a Hadoop cluster that runs MapReduce jobs. The authors first describe the motivation: traditional log analysis tools struggle with gigabyte‑to‑terabyte log files because processing them on a single machine requires substantial CPU, memory, and time. To overcome this, they deploy a Hadoop environment on top of OpenStack, allowing the cluster to be elastically resized by adding or removing virtual machines. The Hadoop architecture follows the classic master‑slave model: a NameNode/JobTracker on the master and a DataNode/TaskTracker on each slave. Logs are stored in HDFS in 64 MB blocks with configurable replication, which provides fault tolerance and lets tasks be scheduled on nodes that already hold the data (data locality).
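To make the storage layout concrete, the following is a minimal sketch of the block arithmetic for a 20 GB input like the one used in the evaluation. The function name `hdfs_footprint` is hypothetical, and the replication factor of 3 is an assumption (it is the stock HDFS default); the summary only states that replication is configurable.

```python
import math

BLOCK_SIZE_MB = 64  # block size stated in the summary


def hdfs_footprint(file_size_mb, replication=3):
    """Return (block_count, raw_storage_mb) for a single file.

    replication=3 is an assumed value (the stock HDFS default);
    the summary only says that replication is configurable.
    """
    blocks = math.ceil(file_size_mb / BLOCK_SIZE_MB)
    # HDFS does not pad the final block, so raw usage is size * replication.
    raw_storage_mb = file_size_mb * replication
    return blocks, raw_storage_mb


blocks, raw_mb = hdfs_footprint(20 * 1024)
print(blocks, raw_mb)
```

Each block is normally the unit of work for one map task, so the block count gives a rough idea of how many map tasks a job over this input would launch.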

Two major technical contributions are detailed. The first is a “single MapReduce job with multiple outputs” technique. In the map phase each log record is parsed and each field is emitted with a prefixed key (e.g., day_2013‑04‑15, ip_10.1.1.5, method_GET). The reducer examines the prefix and writes the value to a corresponding output file. This is implemented by extending Hadoop’s MultipleOutputFormat class and configuring the job to use the custom format. By handling all columns in one job, the system avoids the overhead of launching separate jobs for each metric, resulting in a roughly 30 % reduction in total runtime compared with a naïve per‑column approach.
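The prefixed-key idea can be illustrated without a Hadoop installation. The sketch below simulates the map, shuffle, and reduce phases in plain Python; it is not the authors' Java implementation. The record layout, the function names `map_record` and `run_job`, and the choice to sum counts per key (as in a frequency analysis) are assumptions made for illustration, and a dictionary per prefix stands in for the per-prefix output files.

```python
from collections import defaultdict


def map_record(record):
    """Emit one (prefixed_key, 1) pair per field of interest."""
    yield f"day_{record['day']}", 1
    yield f"ip_{record['ip']}", 1
    yield f"method_{record['method']}", 1


def run_job(records):
    """Simulate a single job whose reducer routes results by key prefix."""
    # Shuffle: group values by key, as the framework does between map and reduce.
    grouped = defaultdict(list)
    for record in records:
        for key, value in map_record(record):
            grouped[key].append(value)

    # Reduce: sum the counts, then route each result by its prefix.
    outputs = defaultdict(dict)  # one dict per prefix, standing in for one file
    for key in sorted(grouped):
        prefix, _, field_value = key.partition("_")
        outputs[prefix][field_value] = sum(grouped[key])
    return dict(outputs)


# Hypothetical sample records, already parsed from log lines.
records = [
    {"day": "2013-04-15", "ip": "10.1.1.5", "method": "GET"},
    {"day": "2013-04-15", "ip": "10.1.1.6", "method": "POST"},
    {"day": "2013-04-16", "ip": "10.1.1.5", "method": "GET"},
]
result = run_job(records)
for prefix, counts in result.items():
    print(prefix, counts)
```

The input is read once and every metric falls out of the same pass, which is the source of the savings over launching one job per column.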

The second contribution addresses more complex analytical queries that cannot be expressed in a single MapReduce pass. The authors illustrate this with a three‑stage job chain that answers the SQL‑like question: “Which pages were accessed most on the busiest day?” Job 1 aggregates accesses per day; Job 2 leverages Hadoop’s built‑in sorting to order days by total accesses (the reducer is an identity function); the last line of the sorted output identifies the busiest day, which is passed as a configuration parameter (max_day) to Job 3. Job 3 filters the original logs for records belonging to max_day, groups by page, and sums the accesses, producing the final result. This chaining demonstrates how to emulate relational queries using only core MapReduce primitives, without resorting to higher‑level tools such as Hive or Pig.
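The three-stage chain can be sketched the same way. The code below is a plain-Python simulation under stated assumptions, not the authors' code: the function names and the `(day, page)` tuple layout are hypothetical, and each function stands in for one MapReduce job. As in the description, the busiest day is read from the last entry of the sorted output and passed to the third stage as a parameter.

```python
from collections import Counter


def job1_accesses_per_day(logs):
    """Job 1: count accesses per day."""
    return Counter(day for day, _page in logs)


def job2_sort_days(day_counts):
    """Job 2: use the count as the key so that sorting orders the days.

    Hadoop sorts keys between map and reduce; sorted() emulates that here.
    """
    return sorted((count, day) for day, count in day_counts.items())


def job3_pages_on_day(logs, max_day):
    """Job 3: keep only records from max_day, then count accesses per page."""
    return Counter(page for day, page in logs if day == max_day)


# Hypothetical sample data: (day, page) pairs already parsed from log lines.
logs = [
    ("2013-04-15", "/index.html"),
    ("2013-04-15", "/about.html"),
    ("2013-04-15", "/index.html"),
    ("2013-04-16", "/index.html"),
]

sorted_days = job2_sort_days(job1_accesses_per_day(logs))
max_day = sorted_days[-1][1]  # the last line of the sorted output is the busiest day
top_pages = job3_pages_on_day(logs, max_day).most_common()
print(max_day, top_pages)
```

In a real deployment each stage would write to HDFS and a driver program would read the final line of the second job's output before configuring the third job.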

Experimental evaluation is performed on a modest hardware platform: four physical machines each equipped with a 3.16 GHz quad‑core CPU and 16 GB RAM, running Ubuntu 12.04. One machine acts as the master, the other three as slaves. The authors process 20 GB of mixed Apache, IIS, and Squid logs. Compared to a conventional single‑node log analyzer, the Hadoop solution achieves more than a five‑fold speedup. Moreover, when additional slave nodes are added, throughput scales almost linearly, confirming the elasticity of the cloud‑based design. The paper also reports that the multi‑output approach reduces job‑initialisation and task‑assignment overhead, while the chained jobs complete within 2–3 minutes, making near‑real‑time analysis feasible.

Limitations are acknowledged. Because the implementation relies solely on low‑level MapReduce APIs, developers must write Java code for each analysis task, raising the entry barrier relative to declarative platforms like Hive or Spark SQL. The job‑chaining mechanism, while functional, can become cumbersome to debug and monitor as the number of stages grows. Additionally, any substantial change in log format would require updates to the parsing logic.

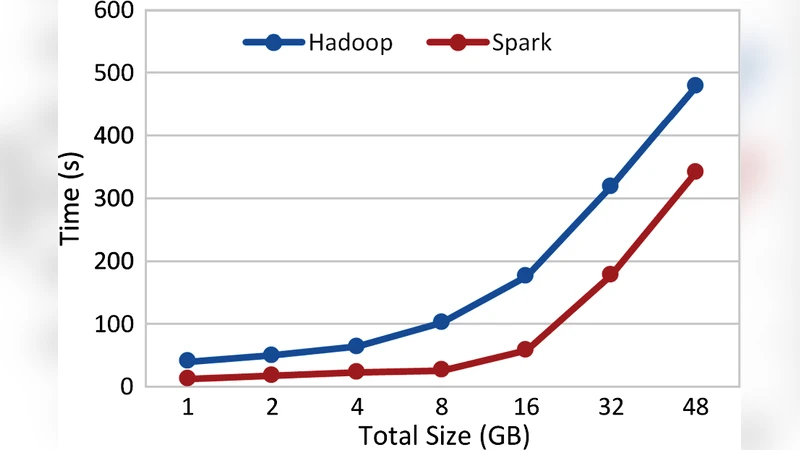

In conclusion, the study demonstrates that an open‑source cloud combined with Hadoop provides a cost‑effective, scalable, and fault‑tolerant platform for comprehensive web log analytics. The proposed techniques—multiple outputs within a single job and systematic job chaining—enable both simple metric extraction and complex query execution without external query engines. Future work suggested includes integrating in‑memory processing frameworks such as Apache Spark to further reduce latency, and developing higher‑level query interfaces to improve usability for non‑programmers.
