Joint Modeling of Accents and Acoustics for Multi-Accent Speech Recognition

The performance of automatic speech recognition systems degrades with increasing mismatch between the training and testing scenarios. Differences in speaker accents are a significant source of such mismatch. The traditional approach to deal with mult…

Authors: Xuesong Yang, Kartik Audhkhasi, Andrew Rosenberg

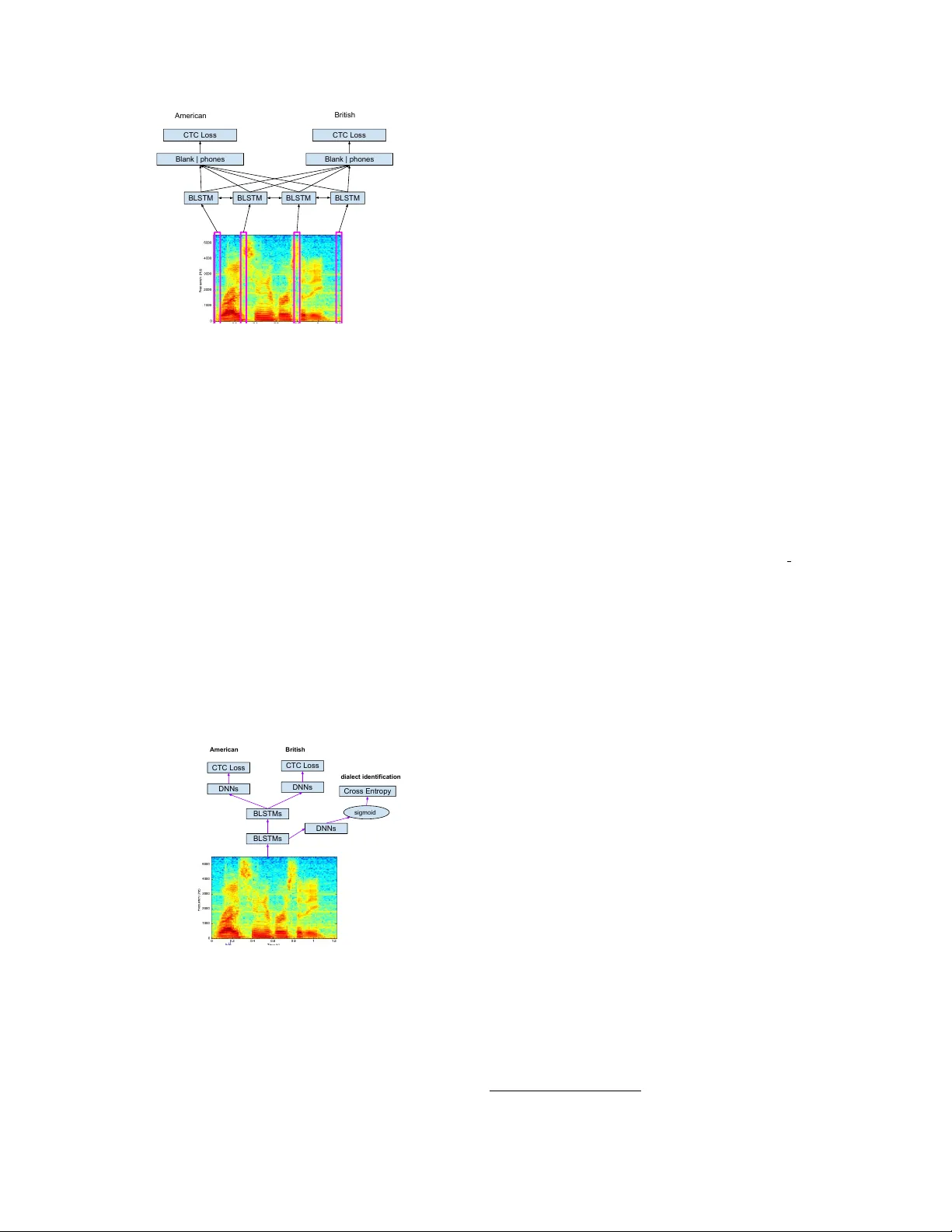

JOINT MODELING OF A CCENTS AND A COUSTICS FOR MUL TI-A CCENT SPEECH RECOGNITION Xuesong Y ang ? , Kartik Audhkhasi † , Andr ew Rosenber g † , Samuel Thomas † , Bhuvana Ramabhadran † , Mark Hase gawa-Johnson ? ? Uni versity of Illinois at Urbana-Champaign, Urbana, IL † IBM T . J. W atson Research Center , Y orkto wn Heights, NY ABSTRA CT The performance of automatic speech recognition systems de- grades with increasing mismatch between the training and testing scenarios. Differences in speaker accents are a significant source of such mismatch. The traditional approach to deal with multiple accents in volves pooling data from sev eral accents during training and building a single model in multi-task f ashion, where tasks cor - respond to indi vidual accents. In this paper , we explore an alternate model where we jointly learn an accent classifier and a multi-task acoustic model. Experiments on the American English W all Street Journal and British English Cambridge corpora demonstrate that our joint model outperforms the strong multi-task acoustic model base- line. W e obtain a 5.94% relati ve improvement in w ord error rate on British English, and 9.47% relati ve improvement on American En- glish. This illustrates that jointly modeling with accent information improv es acoustic model performance. Index T erms — End-to-end models, acoustic modeling, multi- accent speech recognition, multi-task learning 1. INTR ODUCTION Recent breakthroughs in automatic speech recognition (ASR) have resulted in a word error rate (WER) on par with human tran- scribers [1, 2] on the English Switchboard benchmark. Howe ver , dealing with acoustic condition mismatch between the training and testing data is a significant challenge that still remains unsolved. It is well-kno wn that the performance of ASR systems degrades sig- nificantly when presented with speech from speakers with different accents, dialects and speaking styles than those encountered during system training [3]. In this paper, we specifically focus on acoustic modeling for multi-accent ASR. Dialects are defined as v ariations within a language that dif fer in geographical re gions and social groups, which can be distinguished by traits of phonology , grammar , and vocab ulary [4]. Specifically , dialects may be associated with the residence, ethnicity , social class, and nativ e language of speakers. For example, in British and Amer - ican English, same words can have different spellings, like favour and favor ; or different pronunciations, such as "SEdju:l in UK En- glish vs. "skEdZUl in US English for the word schedule ; in Span- ish, vocab ulary may e volve differently between dialects, like for the phrase cell phone , Castilian Spanish uses m ´ ovil while Latin Ameri- can use celular [5]; in English, same phoneme may be realized dif- ferently , phoneme / e / in dress is pronounced as / E / in England and / e / in W ales; in Arabic, dialects may also differ in intonation and rhythm cues [6]. In this paper , we focus on the issue of differing pronunciations, while eschewing considerations of grammatical and vocab ulary differences. Acoustic modeling across multiple accents has been explored for many years, and various approaches can be summarized into three categories - Unified models , Adaptive models , and Ensemble mod- els . A unified model is trained on a limited number of accents, and can be generalized to any accent [7, 8]. An adapti ve model fine-tunes the unified model on accent-specific data assuming that the accent is known [9–11]. An ensemble model aggregates all accent-specific recognizers, and produces an optimal model by selection or combi- nation for recognition [5, 12, 13]. Experiments hav e re vealed that the unified model usually underperforms the adaptiv e model, which in turn underperforms the ensemble model [7, 8]. W e note that these prior approaches do not explicitly include accent information during training, but do so only indirectly , for example, through the dif ferent target phoneme sets for various ac- cents. This contrasts sharply with the way in which humans memo- rize the phonological and phonetic forms of accented speech: “men- tal representations of phonological forms are extremely detailed, ” and include “traces of indi vidual v oices or types of voices” [14]. In this paper , we propose to link the training of ASR acoustic models and accent identification models, in a manner similar to the linking of these two learning processes in human speech perception. W e show that this joint model not only performs well on ASR, but also on accent identification when compared to separately-trained mod- els. Giv en the recent success in end-to-end models [15–25], we use a bidirectional long short-term memory (BLSTM) recurrent neural network (RNN) acoustic model trained with the connectionist tem- poral classification (CTC) loss function for acoustic modeling. The accent identification (AID) network is also a BLSTM, but includes an average pooling layer to compute an utterance-lev el accent em- bedding. W e also introduce a joint architecture where the lo wer lay- ers of the network are trained using AID as the auxiliary task while multi-accent acoustic modeling remains the primary task of the net- work. Next, we use the AID network as a hard switch between the accent-specific output layers of the CTC AM. Preliminary experi- ments on the W all Street Journal American English and Cambridge British English corpora demonstrate that our joint model with the AID-based hard-switch achieves lower WER when compared with the state-of-the-art multi-task AM. W e also show that the AID model also benefits from joint training. The remainder of this paper is organized as follows: Section 2 revie ws relev ant literature. Section 3 introduces our AID model, multi-accent acoustic model, and switching strategy . Section 4 shows experiments and analysis, followed by the conclusion in Section 5. 2. RELA TED WORK The most closely related work to ours is from [8], which illustrated that hierarchical grapheme-based AM with auxiliary phoneme-based AMs in four English dialects trained with CTC significantly outper- formed accent-specific AMs and grapheme-based AM, respectively , while achieving competitive WER with phoneme-based multi-accent AM. Similarly , Y i et al [11] also trained a multi-accent phoneme- based AM with CTC loss, but instead, adapted accent-specific output layer using its target accent. Other rele vant work compared the performance of training ac- cent or dialect specific acoustic models and joint models. These approaches predicted context-dependent (CD) triphone states using DNNs, and used a weighted finite state transducer (WFST)-based decoder . For example, senones on accents of Chinese are predicted by assuming all accents within a language share a common CD state in ventory [9, 10]. Elfek y et al [7] implemented a dialectal multi-task learning (DMTL) frame work on three dialects of Arabic using the prediction of a unified set of CD states across all dialects prediction as the primary task and dialect identification as the secondary task. DMTL model deviated from ours in that it directly predicted CD states using con volutional-LSTM-DNNs (CLDNN), and was trained with either cross-entropy or state-level minimum Bayes risk, while ignoring the secondary dialect identification output at recognition time. This DMTL model was trained on all dialectal data and under - performed the dialect-specific model. Dialectal kno wledge distilled (DKD) model was also designed in [7], which achiev ed results com- petitiv e to, but belo w , dialect-specific models. The effecti veness of dialect-specific models motiv ated in vestiga- tions into how to use ensemble methods on multiple dialect-specific acoustic models for recognition. Soto et al [5] explored approaches of selecting and combining the best decoded hypothesis from a pool of dialectal recognizers. This work is still different from ours in that we make our selection directly using predicted dialect. Huang et al [3] used a similar strategy to ours by identifying accent first followed by acoustic model selection, ho wever , this work only con- sidered GMMs as the classifier . 3. METHOD Our proposed system consists of multiple accent-specific acoustic models and accent identification model. W e will describe these com- ponents and their joint model in this section. Acoustic model se- lection based on the hard-switch between accent-specific models is illustrated in Section 3.4. 3.1. Accent Identification Accurate identification of a speaker’ s accent is essential to the pipelined ASR systems, since accent identification (AID) errors can cause large mismatch to acoustic models. Giv en the hypothesis that accents can be discriminated by spectral features, researchers hav e attempted to model the spectral distribution of each accent using GMMs. Recently , DNNs hav e been explored as a much more expressi ve model compared to GMMs, especially in modeling probability distributions. W e implemented an independent AID that summarizes low-le vel acoustic features of an utterance by a stack of bidirectional LSTMs (BLSTMs) and DNN projection layers. An average-pooling layer is applied on top of transformed acoustic features, because the acous- tic realization of a speaker’ s accent may not be observ able in each frame. Applying av erage-pooling giv es us a more rob ust estimate of accent-dependent acoustic features. W e note that we assume that the speaker’ s accent is fixed ov er the entire utterance. Figure 1 depicts details of this AID model. A single sigmoidal neuron is used at the output layer for classification because we are only classifying between accents of English - US and UK. W e trained the AID network using the cross-entropy loss function. Sigmoid LSTM LSTM LSTM LSTM LSTM LSTM LSTM LSTM Averaged Pooling DNN DNN DNN DNN DNN DNN DNN DNN Cross Entropy Fig. 1 : Proposed accent identification (AID) model with BLSTMs and av erage-pooling. 3.2. Multi-Accent Acoustic Modeling Recently , end-to-end (E2E) systems have achieved comparable performance to traditional pipelined systems such as hybrid DNN- HMM systems. These E2E systems come with the benefit of avoid- ing time-consuming iterations between alignment and model b uild- ing. RNNs using the CTC loss function are a popular approach to E2E systems [15]. The CTC loss computes the total likelihood of the output label sequence giv en the input acoustics over all possible alignments. It achieves this by introducing a special blank symbol that augments the label sequence to make its length equal to the length of the input sequence. Clearly , there are multiple such aug- mented sequences, and CTC uses the forward-backward algorithms to ef ficiently sum the likelihoods of such sequences. The CTC loss is p ( l | x ) = X π ∈B − 1 ( l ) p ( π | x ) (1) where l is the output label sequence, x is the input acoustic sequence, π is a blank-augmented sequence for l , and B − 1 ( l ) is the set of all such sequences. During decoding, the target label sequences can be obtained by either greedy search or a WFST -based decoder . Our multi-accent acoustic model combines two CTC-based AMs, one for each accent. W e applied multiple BLSTM lay- ers shared by two accents to capture accent-independent acoustic features, and placed separate DNNs for each AM to extract accent- specific features. Figure 2 describes the structure of multi-accent acoustic model. Both AMs are jointly trained with an average of the two accent-specific CTC losses. At test time, this multi-accent model requires knowledge of the speaker’ s accent to pick out of the two accent-specific targets. W e experimented with both the oracle accent label, and using a trained AID network to make this decision. BLSTM BLSTM BLSTM BLSTM CTC Loss Blank | phones American CTC Loss Blank | phones British Fig. 2 : This figure sho ws the multi-accent acoustic model. 3.3. Joint Acoustic Modeling with AID The previous multi-accent model assumes that multi-tasking be- tween the phone sets of the two accents is sufficient to make the network learn accent-specific acoustic information. An alternate approach is to explicitly supervise the network with accent informa- tion. This leads us to our joint model, with multi-accent acoustic modeling as primary tasks at higher layers, and with AID as an auxiliary task at lower lev el layers, as shown in Figure 3. This joint model aggregates two modules with the same structures to the fore- mentioned models in Section 3.1 and 3.2, and can be jointly trained in an end-to-end fashion with the objecti ve function, min Θ L Joint (Θ) = (1 − α ) ∗ L AM (Θ) + α ∗ L AID (Θ) where α is an interpolation weight balancing between CTC loss of multi-accent AMs and the cross-entropy loss of AID, and Θ is the model parameters. CTC loss L AM sums up the probabilities of all possible paths corresponding to Equation (1), while AID classifica- tion loss L AID is cross-entropy . The two losses are at dif ferent scales, so the optimal value of α needs to be tuned on development data. BLSTMs BLSTMs DNNs DNNs CTC Loss DNNs CTC Loss American British sigmoid Cross Entropy dialect identification Fig. 3 : Proposed joint model for accent identification and acoustic modeling. 3.4. Model Selection by Hard-Switch Giv en a trained CTC-based multi-accent acoustic model and AID classifier , we apply maximum likelihood estimation to switch be- tween the accent-specific output layers y US and y UK . Let P AID ( US | x ) denote the probability of the US accent estimated by AID. W e threshold this probability at 0 . 5 to obtain the accent hard-switch s AID ( US | x ) . Hence, we pick the output layer as follows: y = y US if s AID ( US | x ) = 1 y UK else W e note that this strategy applies to both the multi-accent model and the joint model. 4. EXPERIMENTS W e perform experiments on two dialects of English corpora–W all Street Journal-1 American English and Cambridge British English. They contain overlapping, but distinct phone sets of 42 and 45 phones respectively . Both corpora contain approximately 15 hours each of audio. W e held-out 5% of the training data as a de velopment set. The window size of each speech frame is 25ms with a frame shift of 10ms. W e extracted 40-dimensional log-Mel scale filter banks and performed per-utterance cepstral mean subtraction. W e did not use any v ocal tract length normalization. W e then stacked neighbor- ing frames and picked e very alternate frame to get a 80-dimensional acoustic feature stream at half the frame rate. V arious models are compared in terms of phone error rate (PER) and word error rate (WER). Particularly , we obtain the PER after simple frame-wise greedy decoding from the DNN projection outputs after removing repeated phones and the blank symbol. The Attila toolkit [26] is used to report WER by applying WFST -based decoding. Ev aluation is performed on eval93 1 American English and si dt5b 2 British English. Our joint model uses four BLSTM layers where the lo west layer is attached to the AID netw ork and the highest single layer connects to two accent-specific softmax layers. A single DNN layer with 320 hidden units is used for each task. The weights for all models are initialized uniformly from [ − 0 . 01 , 0 . 01] . Adam [27] optimizer with initial learning rate 5 e − 4 is used, and the gradients are clipped to the range [ − 10 , 10] . W e discard the training utterances that are longer than 2000 frames. New-bob annealing [28] on the held-out data is used for early stopping, where the learning rate is cut in half whenev er the held-out loss does not decrease. For the purpose of fair comparison, we used a four layer BLSTM for the baseline acoustic models as well. V arious models are briefly described as follows: • ASpec: phoneme-based accent-specific AMs that are trained separately on mono-accent data. • MTLP: phoneme-based multi-accent AMs that are jointly trained on two accents. • Joint: proposed phoneme-based joint acoustic model with AID. 4.1. Empirical weights for balancing differ ent losses Our joint model is sensitiv e to the interpolation weight α between the AM CTC and AID cross-entropy losses. W e tuned α on de- velopment data. Figure 4 depicts relationship between overall PER of two accents and different α v alues. When α goes lar ger, o verall PER increases but with small fluctuations, especially at α of 0.01 and 0.2. The PER tends to be the largest if α is 1.0, which is ex- pected since the weights of neural networks are updated only using the AID errors. W e found the optimal value of α to be 0.001, which 1 catalog.ldc.upenn.edu/ldc93s6a 2 catalog.ldc.upenn.edu/LDC95S24 Fig. 4 : PER of joint acoustic model ov er AID loss weights α Fig. 5 : Accuracy of AID o ver v arious AID weights α achiev ed minimum PER of 12.02%. Figure 5 illustrates the trend of AID accuracy over dif ferent α values. W eights between 0.001 and 0.8 all perform well with accuracies greater than 92%, while tail v al- ues lead to e ven worse performance. When α is 0.5 and 0.005, the best performance is achiev ed with 97.77% accuracy . 4.2. Oracle performance f or multi-accent acoustic models W e first evaluate the oracle performance of various models in T a- ble 1. These results assume that the correct accent of each utterance is provided for all models. In other words, the acoustic model corresponds to the correct accent, i.e. the relev ant target accent- specific softmax layer is used. It can be seen that the proposed joint model significantly outperforms the accent-specific model (ASpec) by 17.97% relative improvement in overall WER, and multi-task ac- cent model (MTLP) by 6.81%. This observation indicates that deep BLSTM layers shared with multiple accent AMs can learn expres- siv e accent-independent features that refine accent-specific AMs. The auxiliary task, accent identification, also helps by introducing extra accent-specific information. The advantage of augmenting general acoustic features with specific information both implicitly learned by our joint model is observed in natural language process- ing [29] tasks as well. The value of implicit feature augmentation is a rich area for future in vestigation. 4.3. Hard-switch using distorted AID The oracle experiments in Section 4.2 demonstrate the value of our proposed joint model and the MTLP model when the AID classifier operates perfectly . This section demonstrates the impact of imper- fect AID on the performance using hard-switch. T able 2 sho ws the T able 1 : Oracle performance in word error rates that assumes that the true accent ID is known in advance. W ord error rates is calculated after decoding with a WFST -graph incorporating a LM; the relati ve improv ement ( r el. ) for each model ov er ASpec are reported in the parenthesis. corpus ASpec MTLP (rel.) Proposed Model (rel.) British 11.5 10.1 (-12.17) 9.5 (-17.39) American 10.2 9.0 (-11.76) 8.3 (-18.63) av erage 10.85 9.55 (-11.98) 8.9 (-17.97) results. Given a well-trained independent AID (ind. AID), our joint model still significantly outperforms the two baseline models, and MTLP achie ves better WER than ASpec. In comparison to oracle WERs of all models, British WERs are relati vely constant without any distortion, howe ver , American English WERs deteriorate ac- cordingly . This is because independent AID has 100% recall for British English utterances on the test data. It is interesting to note that the biggest impro vement over ASpec in WER comes when using the joint model (21.62%) instead of the MTLP model (14.41%) with an independent AID model. The im- prov ement upon further using the AID from the joint model itself is still larger (22.52%). This indicates that the joint model has already learned sufficient accent-specific information through the accent su- pervision in the lower layers. T able 2 : WERs of hard-switch using distorted AID. The r el. shows the relative improv ement o ver ASpec; ind. AID applies an indepen- dent neural AID trained separately . Our Pr oposed Model applies the AID jointly learn with multi-accent AMs. Corpus Pipelines with ind. AID Proposed ASpec MTLP (rel.) Joint (rel.) Model (rel.) British 11.5 10.1 9.5 9.5 (-12.17) (-17.39) (-17.39) American 11.1 9.5 8.7 8.6 (-14.41) (-21.62) (-22.52) 5. CONCLUSION This paper studies state-of-the-art approaches of acoustic modeling across multiple accents. W e note that these prior approaches do not explicitly include accent information during training, but do so only indirectly , for example through the different phone in ventories for v arious accents. W e propose an end-to-end multi-accent acous- tic modeling approach that can be jointly trained with accent iden- tification. W e use BLSTM-RNNs to design acoustic models that can be trained with CTC, and apply an av erage pooling to com- pute utterance-lev el accent embedding. Experiments show that both multi-accent acoustic models and accent identification benefit each other , and our joint model using hard-switch outperforms the state- of-the-art multi-accent acoustic model baseline with a separately- trained AID network. W e obtain a 5.94% relativ e improv ement in WER on British English, and 9.47% on American English. 6. REFERENCES [1] W ayne Xiong, Jasha Droppo, Xuedong Huang, Frank Seide, Mike Seltzer , Andreas Stolcke, Dong Y u, and Geof frey Zweig, “ Achie ving human parity in conv ersational speech recogni- tion, ” arXiv preprint , 2016. [2] George Saon, Gakuto Kurata, T om Sercu, Kartik Audhkhasi, Samuel Thomas, Dimitrios Dimitriadis, Xiaodong Cui, Bhu- vana Ramabhadran, Michael Picheny , L ynn-Li Lim, et al., “English conv ersational telephone speech recognition by hu- mans and machines, ” arXiv preprint , 2017. [3] Chao Huang, T ao Chen, and Eric Chang, “ Accent issues in large vocab ulary continuous speech recognition, ” International Journal of Speech T ec hnology , vol. 7, no. 2, pp. 141–153, 2004. [4] Janet Holmes, An introduction to sociolinguistics , Routledge, 2013. [5] V ictor Soto, Olivier Siohan, Mohamed Elfeky , and Pedro Moreno, “Selection and combination of hypotheses for di- alectal speech recognition, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Confer ence on . IEEE, 2016, pp. 5845–5849. [6] Fadi Biadsy and Julia Hirschberg, “Using prosody and phono- tactics in arabic dialect identification, ” in T enth Annual Confer- ence of the International Speech Communication Association , 2009. [7] Mohamed Elfeky , Meysam Bastani, Xavier V elez, Pedro Moreno, and Austin W aters, “T ow ards acoustic model unifi- cation across dialects, ” in Spoken Langua ge T echnology W ork- shop (SLT), 2016 IEEE . IEEE, 2016, pp. 624–628. [8] Kanishka Rao and Has ¸ im Sak, “Multi-accent speech recogni- tion with hierarchical grapheme based models, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2017 IEEE Interna- tional Confer ence on . IEEE, 2017, pp. 4815–4819. [9] Y an Huang, Dong Y u, Chaojun Liu, and Y ifan Gong, “Multi- accent deep neural network acoustic model with accent- specific top layer using the kld-regularized model adaptation, ” in F ifteenth Annual Conference of the International Speech Communication Association , 2014. [10] Mingming Chen, Zhanlei Y ang, Jizhong Liang, Y anpeng Li, and W enju Liu, “Improving deep neural networks based multi- accent mandarin speech recognition using i-vectors and accent- specific top layer , ” in Sixteenth Annual Conference of the In- ternational Speech Communication Association , 2015. [11] Jiangyan Y i, Hao Ni, Zhengqi W en, Bin Liu, and Jianhua T ao, “Ctc regularized model adaptation for improving lstm rnn based multi-accent mandarin speech recognition, ” in Chi- nese Spoken Language Pr ocessing (ISCSLP), 2016 10th Inter- national Symposium on . IEEE, 2016, pp. 1–5. [12] Y anli Zheng, Richard Sproat, Liang Gu, Izhak Shafran, Haolang Zhou, Y i Su, Daniel Jurafsky , Rebecca Starr, and Su-Y oun Y oon, “ Accent detection and speech recognition for shanghai-accented mandarin., ” in Interspeech , 2005, pp. 217– 220. [13] Mohamed Elfeky , Pedro Moreno, and V ictor Soto, “Multi- dialectical languages effect on speech recognition: T oo much choice can hurt, ” in International Conference on Natural Lan- guage and Speec h Pr ocessing (ICNLSP) , 2015. [14] Janet Pierrehumbert, “Phonological representation: Beyond abstract versus episodic, ” Annu. Rev . Linguist. , vol. 2, pp. 33– 52, 2016. [15] Alex Graves and Navdeep Jaitly , “T ow ards end-to-end speech recognition with recurrent neural networks, ” in Pr oceedings of the 31st International Conference on Machine Learning (ICML-14) , 2014, pp. 1764–1772. [16] Dario Amodei, Sundaram Ananthanarayanan, Rishita Anub- hai, Jingliang Bai, Eric Battenberg, Carl Case, Jared Casper, Bryan Catanzaro, Qiang Cheng, Guoliang Chen, et al., “Deep speech 2: End-to-end speech recognition in english and man- darin, ” in International Conference on Machine Learning , 2016, pp. 173–182. [17] Has ¸ im Sak, Andre w Senior, Kanishka Rao, and Franc ¸ oise Bea- ufays, “Fast and accurate recurrent neural network acoustic models for speech recognition, ” in Proceedings of INTER- SPEECH , 2015. [18] Y ajie Miao, Mohammad Gowayyed, and Florian Metze, “Eesen: End-to-end speech recognition using deep rnn models and wfst-based decoding, ” in Automatic Speech Recognition and Understanding (ASRU), 2015 IEEE W orkshop on . IEEE, 2015, pp. 167–174. [19] A wni Hannun, Carl Case, Jared Casper, Bryan Catanzaro, Greg Diamos, Erich Elsen, Ryan Prenger , Sanjee v Satheesh, Shubho Sengupta, Adam Coates, et al., “Deep speech: Scaling up end- to-end speech recognition, ” arXiv preprint , 2014. [20] Andrew Maas, Ziang Xie, Dan Jurafsky , and Andrew Ng, “Lexicon-free conv ersational speech recognition with neural networks, ” in Pr oceedings of the 2015 Conference of the North American Chapter of the Association for Computational Lin- guistics: Human Language T echnologies , 2015, pp. 345–354. [21] Jan Chorowski, Dzmitry Bahdanau, Kyungh yun Cho, and Y oshua Bengio, “End-to-end continuous speech recognition using attention-based recurrent nn: first results, ” arXiv preprint arXiv:1412.1602 , 2014. [22] Liang Lu, Xingxing Zhang, Kyunghyun Cho, and Steve Re- nals, “ A study of the recurrent neural network encoder-decoder for large vocab ulary speech recognition., ” in INTERSPEECH , 2015, pp. 3249–3253. [23] Dzmitry Bahdanau, Jan Chorowski, Dmitriy Serdyuk, Phile- mon Brakel, and Y oshua Bengio, “End-to-end attention-based large vocabulary speech recognition, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Confer- ence on . IEEE, 2016, pp. 4945–4949. [24] William Chan, Navdeep Jaitly , Quoc Le, and Oriol V inyals, “Listen, attend and spell: A neural network for large vocab u- lary con versational speech recognition, ” in Acoustics, Speech and Signal Processing (ICASSP), 2016 IEEE International Confer ence on . IEEE, 2016, pp. 4960–4964. [25] K. Audhkhasi, B. Ramabhadran, G. Saon, M. Picheny , and D. Nahamoo, “Direct acoustics-to-word models for English con versational speech recognition, ” in Pr oc. Interspeech , 2017, pp. 959–963. [26] H. Soltau, G. Saon, and B. Kingsbury , “The ibm attila speech recognition toolkit, ” in Spoken Language T ec hnology W ork- shop (SLT) , 2010, pp. 97–102. [27] Diederik Kingma and Jimmy Ba, “ Adam: A method for stochastic optimization, ” arXiv pr eprint arXiv:1412.6980 , 2014. [28] H. Bourlard and N. Morgan, “Generalization and parameter es- timation in feedforward nets: Some experiments, ” in Advances in Neural Information Processing Systems , 1990, vol. II, pp. 630–637. [29] Hal Daum ´ e III, “Frustratingly easy domain adaptation, ” arXiv pr eprint arXiv:0907.1815 , 2009.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment