Tool-mediated HCI Modeling Instruction in a Campus-based Software Quality Course

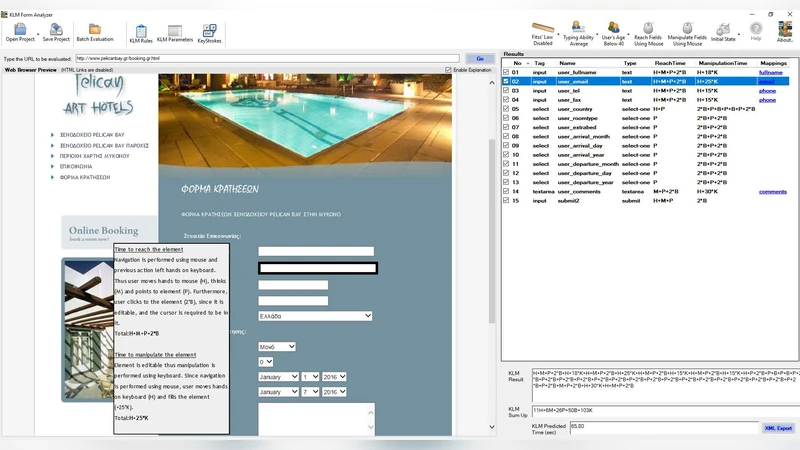

The Keystroke Level Model (KLM) and Fitts' Law constitute core teaching subjects in most HCI courses, as well as many courses on software design and evaluation. The KLM Form Analyzer (KLM-FA) has been introduced as a practitioner's tool to facilitate web form design and evaluation, based on these established HCI predictive models. It was also hypothesized that KLM-FA can be used for educational purposes, since it provides step-by-step tracing of the KLM modeling for any web form filling task, according to various interaction strategies or users' characteristics. In our previous work, we found that KLM-FA supports teaching and learning of HCI modeling in the context of distance education. This paper reports a study investigating the learning effectiveness of KLM-FA in the context of campus-based higher education. Students of a software quality course completed a knowledge test after the lecture-based instruction (pre-test condition) and after being involved in a KLM-FA mediated learning activity (post-test condition). They also provided post-test ratings for their educational experience and the tool's usability. Results showed that KLM-FA can significantly improve learning of HCI modeling. In addition, participating students rated their perceived educational experience as very satisfactory and the perceived usability of KLM-FA as good to excellent.

💡 Research Summary


This paper investigates the instructional impact of the Keystroke Level Model Form Analyzer (KLM‑FA), a software tool that automates the application of two foundational Human‑Computer Interaction (HCI) predictive models—Keystroke Level Model (KLM) and Fitts’ Law—to the design and evaluation of web forms. While prior work demonstrated KLM‑FA’s usefulness in distance‑learning contexts, the authors sought to determine whether the same tool could enhance learning outcomes in a traditional, campus‑based setting.

The study was conducted in the “Software Quality Assurance and Standards” elective (CEID_NE5577) at the University of Patras, Greece. The course is taken by final‑year Computer Engineering and Informatics students and includes 14 lectures, of which lectures 2–5 introduce the Human Information Processor model, KLM, and Fitts’ Law. After the theoretical instruction, students were required to complete a 15‑day essay assignment that involved modeling the interaction time of various web‑based signup forms using KLM‑FA. The tool automatically extracts form elements, allows the user to specify interaction strategies (keyboard vs. mouse), user characteristics (age, typing skill), and then computes a step‑by‑step KLM sequence and the associated time estimates, applying Fitts’ Law where appropriate. A short (6 min 23 s) YouTube tutorial accompanied the assignment.
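The kind of computation the tool automates can be sketched in a few lines of Python. This is a minimal illustration, not KLM-FA's actual implementation: the operator times are the commonly cited textbook KLM values, the Fitts' Law coefficients `a` and `b` are placeholder assumptions, and the function names `fitts_time` and `klm_time` are hypothetical.

```python
import math

# Commonly cited KLM operator times in seconds; the values KLM-FA
# uses (and adapts to age or typing skill) may differ.
OPERATOR_TIMES = {
    "K": 0.28,  # keystroke, average typist
    "H": 0.40,  # homing hands between keyboard and mouse
    "M": 1.35,  # mental preparation
    "B": 0.10,  # mouse button press or release
}


def fitts_time(distance, width, a=0.0, b=0.1):
    """Shannon formulation of Fitts' Law: MT = a + b * log2(D / W + 1).

    a and b are assumed placeholder coefficients (seconds, seconds/bit).
    """
    return a + b * math.log2(distance / width + 1)


def klm_time(sequence):
    """Sum operator times; a ("P", distance, width) tuple is a pointing
    step whose duration is estimated with Fitts' Law."""
    total = 0.0
    for step in sequence:
        if isinstance(step, tuple):
            _, distance, width = step
            total += fitts_time(distance, width)
        else:
            total += OPERATOR_TIMES[step]
    return total


# Hypothetical task: think, reach for the mouse, point at a text field
# 300 px away and 100 px wide, click, return to the keyboard, type 5 keys.
fill_field = ["M", "H", ("P", 300, 100), "B", "B", "H"] + ["K"] * 5
print(f"{klm_time(fill_field):.2f} s")
```

Modeling the pointing step as a tuple keeps target distance and width next to the operator they belong to, which mirrors how a form analyzer would derive them from the extracted form layout.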

Participants: Of the 108 enrolled students, only 22 attended all lectures, completed the essays, and thus qualified for analysis. Their ages ranged from 21 to 26 (M = 22.1, SD = 0.97); 7 were female. The class exhibited high engagement on the university’s e‑learning platform (average 13 messages per student).

Assessment instruments:

- A 14‑item multiple‑choice knowledge test covering KLM and Fitts’ Law concepts, administered twice—once after the lectures (pre‑test) and again after the essay submission (post‑test).

- A five‑item Likert scale (1–5) measuring perceived educational experience with KLM‑FA.

- The System Usability Scale (SUS), a ten‑item standardized questionnaire yielding a 0–100 score.

- A seven‑point adjective rating scale for the tool's overall usability (from "worst imaginable" to "best imaginable").
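For the SUS, the 0–100 score comes from the questionnaire's standard scoring rule: odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the sum is multiplied by 2.5. A short sketch follows; the function name `sus_score` is illustrative and the sample responses are made up.

```python
def sus_score(responses):
    """Return the SUS score for ten 1-5 ratings in questionnaire order."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten item responses")
    total = 0
    for index, rating in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (rating - 1) if index % 2 == 1 else (5 - rating)
    return total * 2.5


# Made-up respondent who answers neutrally (3) on every item.
print(sus_score([3] * 10))  # 50.0
```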

Data analysis: Internal consistency was examined with Cronbach’s α. The full 14‑item knowledge test showed low reliability (α = 0.402); after removing two ambiguous items, reliability improved to α = 0.782 (12 items). The educational experience scale (α = 0.873) and SUS (α = 0.760) were acceptable. Normality of the difference scores was ambiguous (Shapiro‑Wilk non‑significant, Kolmogorov‑Smirnov significant), so both parametric (paired‑samples t) and non‑parametric (Wilcoxon signed‑rank) tests were reported.
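Cronbach's α compares the sum of the individual item variances with the variance of the respondents' total scores. The sketch below uses only the Python standard library; the function name `cronbach_alpha` and the toy score matrix are hypothetical and do not reproduce the study's data.

```python
from statistics import variance


def cronbach_alpha(scores):
    """scores: one list of k item scores per respondent.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(totals))
    """
    k = len(scores[0])
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Toy data: four respondents, two items (not from the study).
toy_scores = [[2, 1], [1, 2], [3, 3], [4, 4]]
print(round(cronbach_alpha(toy_scores), 3))  # 0.889
```

Dropping items that correlate poorly with the rest shrinks the summed item variance relative to the total-score variance, which is how removing two ambiguous items can raise α.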

Key findings:

- Learning performance: Mean pre‑test score was 62.9 % (SD = 15.4); mean post‑test score rose to 72.0 % (SD = 13.0). The paired‑samples t test indicated a significant gain (t(21)=2.890, p = 0.009) with a large effect size (r = 0.533). The Wilcoxon test confirmed this gain (z = 2.601, p = 0.009, r = 0.390).

- Influence of prior knowledge: Participants were split at the median pre‑test score (66.7 %). Low‑performing students (N = 10) improved from 48.1 % to 66.7 % (normalized gain ≈ 34 %). High‑performing students (N = 12) improved from 74.2 % to 80.3 % (normalized gain ≈ 21 %). An independent‑samples t test on post‑test scores showed a significant difference (t(20)=2.589, p = 0.018), indicating that higher‑initial performers still scored higher after the intervention. However, a Mann‑Whitney U test on normalized gains did not reveal a statistically significant difference between the groups, suggesting that KLM‑FA benefits both; the low performers' normalized gain was numerically larger, but not significantly so.

- Educational experience: The five‑item scale averaged 4.0 / 5 (95 % CI
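Two of the derived quantities in the key findings can be checked with short formulas. The sketch below assumes the effect size for the t test was obtained with the common conversion r = sqrt(t² / (t² + df)) and that "normalized gain" follows the usual definition (post − pre) / (100 − pre); neither assumption is confirmed by the summary, and the function names are illustrative.

```python
import math


def effect_size_r_from_t(t, df):
    """Convert a t statistic to r: sqrt(t^2 / (t^2 + df))."""
    return math.sqrt(t * t / (t * t + df))


def normalized_gain(pre, post, max_score=100.0):
    """Fraction of the available improvement that was realized."""
    return (post - pre) / (max_score - pre)


# Paired-samples t test reported above: t(21) = 2.890.
print(round(effect_size_r_from_t(2.890, 21), 3))  # 0.533

# Gains computed from the reported group means (percent scores).
print(normalized_gain(48.1, 66.7))  # low-performing group
print(normalized_gain(74.2, 80.3))  # high-performing group
```

The first value matches the reported r = 0.533. Applied to the group means, the gain formula gives roughly 0.36 and 0.24, close to but not identical to the reported ≈ 34 % and ≈ 21 %; one possible explanation is that the reported figures were computed per student and then averaged.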
