Proposed Spreadsheet Transparency Definition and Measures

Auditors demand that financial models be transparent, yet no consensus exists on what that means precisely. Without a clear definition of modeling transparency, we cannot know when our models are “transparent”. The financial modeling community debates which methods are more or less transparent as though transparency were a quantifiable entity, yet no measures exist. Without a transparency measure, modelers cannot objectively evaluate methods and know which one improves model transparency. This paper proposes a definition of spreadsheet modeling transparency that is specific enough to support measures and automation tools that auditors can use to determine whether a model meets transparency requirements. The definition also gives modelers the ability to objectively compare spreadsheet modeling methods and select the one that best meets their goals.

💡 Research Summary

The paper addresses a long‑standing gap in the audit of spreadsheet‑based financial models: while auditors repeatedly demand that models be “transparent,” the term has never been rigorously defined, nor have any quantitative measures been offered to assess it. Without a clear definition, practitioners debate the relative merits of different modeling techniques as if transparency were a measurable attribute, yet they lack any objective yardstick. The authors therefore set out to (1) formulate a precise, operational definition of spreadsheet modeling transparency, (2) break that definition into measurable components, (3) propose a composite “Transparency Score” (TS) that can be calculated automatically, and (4) demonstrate how the score can be used by auditors and modelers to evaluate, compare, and improve model designs.

Definition and Conceptual Framework

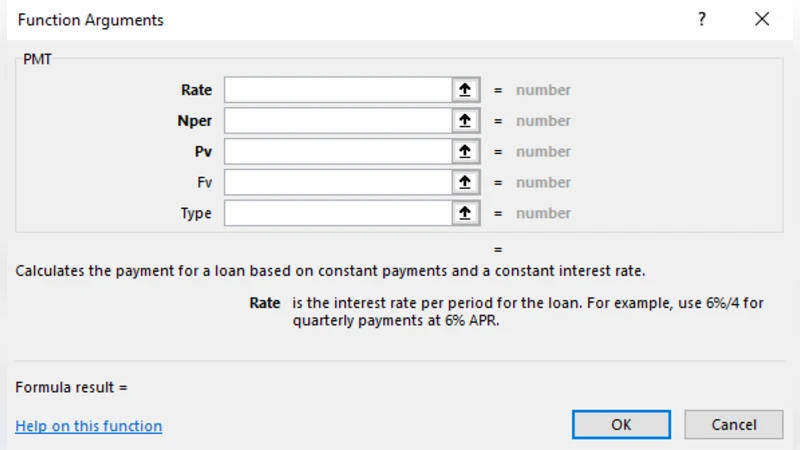

Transparency is defined as “the degree to which a user can, with minimal effort, fully understand a cell’s inputs, the logic that processes those inputs, and the resulting output.” The definition is deliberately user‑centric, emphasizing the cognitive effort required to trace a value from source data through calculation to final presentation. It is decomposed into three orthogonal dimensions:

- Input Visibility – Are the raw data or assumptions clearly labeled, validated, and documented (e.g., through data‑validation rules, comments, or structured input tables)?

- Logic Visibility – How complex is the formula that generates a value, and how long is its dependency chain? Metrics include the number of nested functions, use of array formulas or user‑defined functions, and the count and “distance” (sheet hops) of precedent cells.

- Result Visibility – Is the output presented in an easily interpretable format (summary tables, charts, dashboards) and does the model provide a traceable link back to the underlying inputs and logic?
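Two of the Logic Visibility indicators listed above, function nesting and the sheet hops of precedents, can be approximated from a formula's text alone. The sketch below is a minimal illustration under stated assumptions, not the authors' scanner: it uses simplified regular expressions rather than a full Excel formula grammar, it treats each distinct sheet prefix in a formula as one sheet hop, and the name `formula_metrics` is hypothetical.

```python
import re

# Simplified patterns: an approximation, not a full Excel formula grammar.
STRING_LITERAL = re.compile(r'"[^"]*"')                      # "text" constants
SHEET_PREFIX = re.compile(r"(?:'[^']+'|[A-Za-z_][\w.]*)!")   # Sheet1! or 'My Sheet'!
PAREN_TOKEN = re.compile(r"[A-Za-z_][\w.]*\(|[()]")          # NAME( or a bare paren


def formula_metrics(formula: str) -> dict:
    """Rough Logic Visibility indicators for a single Excel formula string."""
    # Blank out string constants so parentheses inside quotes are ignored.
    body = STRING_LITERAL.sub('""', formula.lstrip("="))

    # Proxy for sheet hops: the number of distinct sheets the formula reads.
    sheets = {m.group(0)[:-1].strip("'") for m in SHEET_PREFIX.finditer(body)}
    # Drop the prefixes so quoted sheet names cannot contribute parentheses.
    body = SHEET_PREFIX.sub("", body)

    stack = []        # True for a function-call paren, False for a grouping paren
    depth = 0         # function calls currently open
    max_nesting = 0   # deepest function nesting seen
    n_functions = 0   # total function calls in the formula
    for token in PAREN_TOKEN.finditer(body):
        text = token.group(0)
        if text == ")":
            if stack and stack.pop():
                depth -= 1
        elif text == "(":
            stack.append(False)
        else:  # a name immediately followed by "(" is a function call
            stack.append(True)
            depth += 1
            n_functions += 1
            max_nesting = max(max_nesting, depth)

    return {"functions": n_functions, "max_nesting": max_nesting, "sheet_hops": len(sheets)}
```

For example, `formula_metrics('=IF(Inputs!B3>0, ROUND(SUM(Calc!C1:C9)/Inputs!B3, 2), 0)')` reports three function calls nested three deep and two sheet hops. A production tool would more likely walk a parsed formula tree, which would also handle defined names and structured references that these patterns ignore.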

Each dimension is scored on a 0‑10 scale. The authors recommend a default weighting of 30 % for Input Visibility, 40 % for Logic Visibility, and 30 % for Result Visibility, but they explicitly allow auditors to adjust the weights to reflect regulatory focus, risk appetite, or model complexity. The weighted average, rescaled from the 0‑10 range to 0‑100, yields the Transparency Score (TS), a single numeric indicator ranging from 0 (completely opaque) to 100 (fully transparent).
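The scoring rule can be written out directly. This is a minimal sketch, assuming the three sub‑scores are already available on the 0‑10 scale and that the weighted average is multiplied by 10 to reach the 0‑100 range; the dimension keys and the function name `transparency_score` are illustrative, not taken from the paper.

```python
import math

# Default weights recommended in the summary; auditors may supply others.
DEFAULT_WEIGHTS = {"input": 0.30, "logic": 0.40, "result": 0.30}


def transparency_score(subscores: dict, weights: dict = DEFAULT_WEIGHTS) -> float:
    """Weighted average of 0-10 sub-scores, rescaled to the 0-100 TS range."""
    if set(subscores) != set(weights):
        raise ValueError("sub-scores and weights must cover the same dimensions")
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must sum to 1")
    for name, value in subscores.items():
        if not 0 <= value <= 10:
            raise ValueError(f"{name} sub-score {value} is outside the 0-10 scale")
    weighted = sum(weights[k] * subscores[k] for k in weights)  # still on 0-10
    return round(10 * weighted, 1)  # x10 maps 0-10 onto 0-100
```

With the default weights, sub‑scores of 8, 6 and 7 give 0.3×8 + 0.4×6 + 0.3×7 = 6.9, i.e. a TS of 69. Shifting weight toward Logic Visibility (0.2/0.6/0.2) lowers the same model to 66, because its weakest dimension now counts for more.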

Automation Prototype

To operationalize the framework, the authors built a prototype tool that combines VBA macros for in‑Excel scanning with a Python back‑end for data aggregation and visualization. The tool parses every worksheet, extracts formula trees, counts validation rules, gathers comments, and maps the precedent and dependent relationships between cells. It then computes the three sub‑scores for each cell, aggregates them into sheet‑level and workbook‑level TS values, and presents the results in an interactive dashboard. The dashboard highlights “transparency hotspots” (cells or regions whose scores fall below a user‑defined threshold) and automatically generates remediation suggestions (e.g., “add comment to cell B12,” “split nested IF statements,” “move input data to a dedicated Input sheet”).
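The aggregation and hotspot steps can be illustrated without Excel at all, given per‑cell sub‑scores. This sketch rests on assumptions the paper does not state: sheet and workbook scores are plain means of cell scores, a hotspot is any cell whose TS falls below a threshold (60 here), and the remediation hint is chosen from the cell's weakest dimension. The class `CellScore`, the function `aggregate`, and the hint texts are hypothetical; the hints paraphrase the examples quoted above.

```python
from dataclasses import dataclass
from statistics import mean

WEIGHTS = {"input_vis": 0.30, "logic_vis": 0.40, "result_vis": 0.30}

# Hypothetical remediation hints, keyed by a cell's weakest dimension.
HINTS = {
    "input_vis": "label or comment the input, or move it to a dedicated Input sheet",
    "logic_vis": "break up the formula, e.g. split nested IF statements",
    "result_vis": "route the value to a summary table or the Output dashboard",
}


@dataclass
class CellScore:
    sheet: str
    address: str
    input_vis: float   # 0-10
    logic_vis: float   # 0-10
    result_vis: float  # 0-10

    def ts(self) -> float:
        """Cell-level TS on the 0-100 scale (weighted average x 10)."""
        return 10 * sum(w * getattr(self, k) for k, w in WEIGHTS.items())


def aggregate(cells: list[CellScore], threshold: float = 60.0):
    """Return (sheet-level TS, workbook-level TS, hotspot suggestions)."""
    by_sheet: dict[str, list[float]] = {}
    for cell in cells:
        by_sheet.setdefault(cell.sheet, []).append(cell.ts())
    sheet_ts = {sheet: round(mean(scores), 1) for sheet, scores in by_sheet.items()}
    # Workbook TS as the mean over all scored cells (not the mean of sheet means).
    workbook_ts = round(mean(cell.ts() for cell in cells), 1)

    hotspots = []
    for cell in cells:
        if cell.ts() < threshold:
            weakest = min(WEIGHTS, key=lambda k: getattr(cell, k))
            hotspots.append(f"{cell.sheet}!{cell.address}: {HINTS[weakest]}")
    return sheet_ts, workbook_ts, hotspots
```

With three scored cells, say `Calc!B12` at sub‑scores (3, 4, 6), `Calc!C5` at (9, 8, 8) and `Inputs!B3` at (9, 9, 7), the sketch reports Calc at 63, Inputs at 84 and the workbook at 70, and flags only `Calc!B12` (TS 43), suggesting an input fix because Input Visibility is its weakest dimension.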

Empirical Validation

The authors applied the methodology to two versions of a cash‑flow forecasting model built for a mid‑size manufacturing firm. Version A was a monolithic sheet where all inputs, calculations, and outputs co‑existed. Version B employed a modular architecture: a dedicated Input sheet, separate calculation sheets for each functional area, and a clean Output dashboard. After scanning both workbooks, Version B achieved a TS of 78 versus 52 for Version A. The most pronounced difference lay in Logic Visibility: Version B’s formulas averaged three nested functions and a dependency depth of two, whereas Version A’s formulas often contained six or more nested functions and dependency depths of five or more.
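The dependency depths quoted for the two versions presuppose a rule for measuring chain length. One plausible rule, assumed here rather than taken from the paper, counts the longest chain of formula steps from a cell back to raw inputs, where an input is a cell with no precedents. The sketch takes a precedent map (each cell mapped to the cells its formula reads), such as one the scanning step might build, and raises an error on circular references instead of looping forever; `dependency_depth` is a hypothetical name.

```python
def dependency_depth(precedents: dict[str, list[str]], cell: str) -> int:
    """Longest chain of formula steps from `cell` back to a raw input.

    `precedents` maps each cell to the cells its formula reads. A cell that is
    missing from the map, or maps to an empty list, is an input (depth 0).
    """
    memo: dict[str, int] = {}
    on_path: set[str] = set()  # cells on the current search path, for cycle checks

    def depth(c: str) -> int:
        if c in memo:
            return memo[c]
        if c in on_path:
            raise ValueError(f"circular reference through {c}")
        on_path.add(c)
        # One step more than the deepest precedent; inputs fall back to -1 + 1 = 0.
        result = 1 + max((depth(p) for p in precedents.get(c, [])), default=-1)
        on_path.discard(c)
        memo[c] = result
        return result

    return depth(cell)
```

Under this rule, an output that reads a calculation cell which in turn reads the Input sheet has depth 2, while a chain of five intermediate calculations on a single sheet has depth 5, the kind of contrast the study reports between Version B and Version A.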

A follow‑up audit simulation showed that after auditors used the tool to identify low‑scoring cells and applied the suggested fixes (adding labels, breaking complex formulas, consolidating outputs), the TS for Version A rose by an average of 15 points, narrowing the gap with the modular design. This case study demonstrates that the TS is sensitive to concrete design choices and can guide iterative improvement.

Limitations and Future Work

The authors acknowledge that the current framework focuses on quantitative, structural aspects of transparency and does not directly capture user expertise, organizational culture, or the semantic richness of documentation. They propose extending the model with survey‑based weighting, incorporating natural‑language processing to evaluate comment quality, and exploring machine‑learning models that predict transparency based on historical audit outcomes.

Conclusion

By providing a clear, measurable definition of spreadsheet transparency and a practical scoring system that can be automated, the paper equips auditors with an objective tool for compliance verification and gives modelers a concrete benchmark for design decisions. The Transparency Score bridges the gap between vague “best practice” advice and actionable, data‑driven evaluation, promising higher‑quality financial models and more efficient audit processes.
