Comparing approaches for mitigating intergroup variability in personality recognition


Authors: Guozhen An, Rivka Levitan

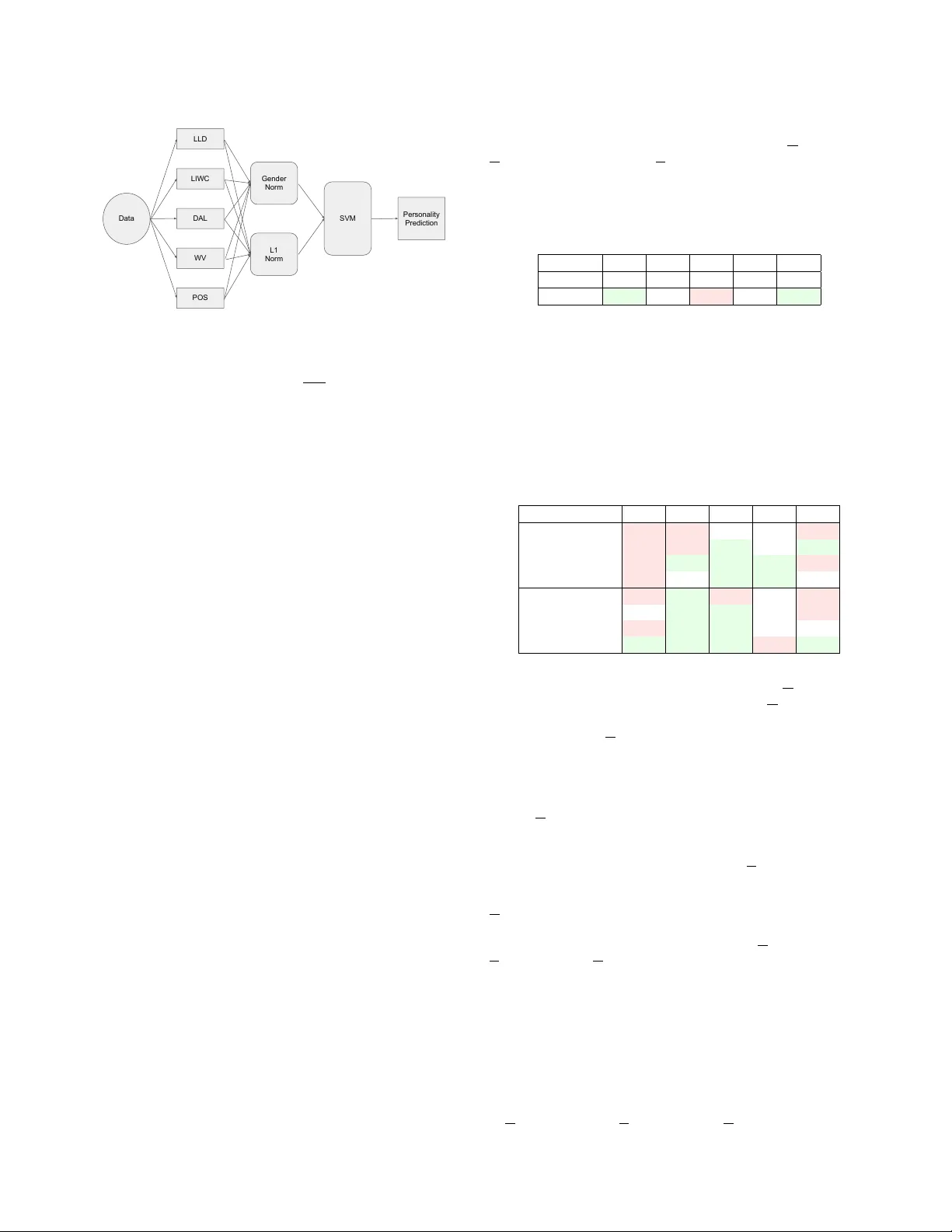

Guozhen An (1, 2), Rivka Levitan (1, 3)
(1) Department of Computer Science, CUNY Graduate Center, USA
(2) Department of Mathematics and Computer Science, York College (CUNY), USA
(3) Department of Computer and Information Science, Brooklyn College (CUNY), USA

ABSTRACT

Personality has been found to predict many life outcomes, and there has been great interest in automatic personality recognition from a speaker's utterances. Previously, we achieved accuracies between 37% and 44% for three-way classification of high, medium, or low for each of the Big Five personality traits (Openness to Experience, Conscientiousness, Extraversion, Agreeableness, Neuroticism). We show here that we can improve performance on this task by accounting for the heterogeneity of gender and L1 in our data, which contains English speech from female and male native speakers of Chinese and Standard American English (SAE). We experiment with personalizing models by L1 and gender and with normalizing features by speaker, L1 group, and/or gender.

Index Terms: personality recognition, self-reported personality, deceptive speech, homogenized model, normalized model

1 Introduction

“Personality” is defined by the Encyclopedia of Psychology [1] as “individual differences in characteristic patterns of thinking, feeling and behaving,” and is considered a primary source of interpersonal variation. The NEO-FFI five-factor model of personality traits, also known as the Big Five, is the most commonly used model of personality: Openness to Experience (having wide interests, imaginative, insightful), Conscientiousness (organized, thorough, a planner), Extraversion (talkative, energetic, assertive), Agreeableness (sympathetic, kind, affectionate), and Neuroticism (tense, moody, anxious) [2].
These traits were originally identified by several researchers working independently [3], and the model has been employed to characterize personality in multiple cultures [4].

Of particular interest to researchers is the potential for personality traits to account for, or mitigate, the interpersonal variation that makes deception detection a uniquely difficult task. Previous work has found personality-based differences in deception as well as in deception detection [5, 6]. This work, which is based on data collected in the context of a deception task, can be used to improve deception detection by incorporating automatically detected information about personality.

Our corpus includes English speech from female and male speakers who are native speakers of Standard American English (SAE) and Mandarin Chinese (MC). We hypothesize that intra-group differences inhibit the performance of models trained on this heterogeneous data. Female and male speakers are known to have different vocal characteristics and to use language differently (e.g. [7, 8, 9, 10]). The same is true for speakers with different native languages (L1) [11]. Furthermore, additional intra-group variability may stem from the fact that personality can be expressed differently in different cultures [12].

In our current work we experiment with ways to automatically identify the NEO-FFI Big Five personality traits from speech, which will be useful for applications such as dialogue systems. Although there is previous research on this task, most of it has focused on predicting personality scores assigned by annotators asked to identify the personality traits of others, rather than scores from self-reported personality inventories. Although ratings by observers who know the subject well are considered valid in personality research, ratings by strangers have been shown to correlate only weakly with self-reports and to have moderate to weak internal consistency (as measured by Cronbach's alpha) [13].
We experiment with two different methods for accounting for this variation: homogenization (partitioning the training data to train homogeneous models) and normalization. Homogenization ensures that we train and test on data of the same kind, at the cost of less training data per model. Normalization accounts for differences in vocal characteristics and language use while allowing the use of the entire dataset, but does not handle differences in how personality is expressed. We compare the two approaches for each personality trait and gender/L1 group and show that partitioning the data into homogeneous models improves performance in most cases.

2 Related Work

Numerous studies have successfully predicted observer reports of personality from speech and text [14]. Mohammadi et al. [15] used prosodic features to detect personality from ten-second audio clips labeled for personality by human judges based on the audio clips alone, and reported recognition rates ranging from 64.7% (Agreeableness) to 79.4% (Extraversion) for the binary classification problem derived from splitting personality scores at the mean. Argamon et al. [8] used stylistic lexical features to classify student essays as high or low (top or bottom third) for Extraversion and Neuroticism. Linguistic Inquiry and Word Count (LIWC) [16] categories have been shown to correlate with Big Five personality traits, both in writing samples [17] and in spoken dialogue [18]. In a very comprehensive study, Mairesse et al. [33] predicted self-reported and observer-rated personality scores from essay and conversational data, using LIWC, psycholinguistic, and prosodic feature sets. Their results indicate that observer reports are easier to predict: they achieved good results with models of observed personality but no results above baseline with models of self-reported personality.
In previous work [19], we predicted self-reports of personality scores using acoustic-prosodic and language features and found that the best performance for each trait was achieved by models with different combinations of feature sets. Performance ranged from 37% to 44%, significantly above the baseline for each trait.

This work similarly predicts self-reports, a more difficult task than predicting stranger/observer reports; as noted in Section 1, stranger ratings correlate only weakly with self-reports and have moderate to weak internal consistency [13]. Additionally, the HI/ME/LO classes we predict are determined by population norms, a better-motivated criterion than the naive statistical thresholds used by most studies.

Our hypothesis that intra-group differences in gender and L1 affect personality expression is motivated by Scherer's survey of personality markers in speech [12], which cites studies showing that f0 (pitch), for example, is associated with dominance in American males, discipline in German males, and dullness in American females, among other similar findings.

3 Data

The collection and design of the corpus analyzed here are described in more detail in [6, 20, 7]. It contains within-subject deceptive and non-deceptive English speech from native speakers of Standard American English (SAE) and Mandarin Chinese (MC), with native language defined as the language spoken at home until age five. There are approximately 125 hours of speech in the corpus from 173 subject pairs and 346 individual speakers. The data was collected using a fake resume paradigm, in which pairs of subjects took turns interviewing their partner and being interviewed from a set of 24 biographical questions such as “What is your mother's job?” Subjects were instructed to lie in their answers to a predetermined subset of the questions.
The interviews took place in a soundproof booth, and the subjects were recorded with close-talk headsets. Before the recorded interviews, subjects filled out the NEO-FFI (Five Factor) personality inventory [2], yielding scores for Openness to Experience (O), Conscientiousness (C), Extraversion (E), Agreeableness (A), and Neuroticism (N).

We also collected a baseline speech sample of 3 to 4 minutes from each subject for use in speaker normalization, in which the experimenter asked the subject open-ended questions (e.g., “What do you like best/worst about living in NYC?”). Subjects were instructed to be truthful in answering. Once both subjects had completed all the questionnaires and we had collected both baselines, they began the lying game.

Transcripts for the recordings were obtained using Amazon Mechanical Turk¹ (AMT). Three transcripts for each audio segment were obtained from different “Turkers” and combined using ROVER [21], producing a ROVER output score that measures the agreement between the three initial transcripts. For clips with a score lower than 70% (9.7% of the clips), transcripts were manually corrected.

¹ https://www.mturk.com

4 Method

4.1 Features

We use the feature sets described in our previous work [19], which include acoustic-prosodic low-level descriptor features (LLD); word category features from LIWC (Linguistic Inquiry and Word Count) [16]; and word scores for pleasantness, activation and imagery from the Dictionary of Affect in Language (DAL) [22]. We also add two new feature sets, word vectors (WV) and part-of-speech counts (POS).

[Figure 1: Homogeneous model. The LIWC, LLD, WV, POS and DAL features are filtered by gender/L1, and separate SVMs (En/Male, En/Female, Ch/Male, Ch/Female) produce the personality prediction.]

Word vectors (WV). Continuous vector representations of words (word vectors) have been used with considerable success in statistical language modeling [23], speech recognition [24] and a wide range of NLP tasks [25].
Motivated by these findings, we use the Gensim library [26] to extract word vector features with Google's pre-trained word vector model [27]. To calculate the vector representation of a turn-level segment, we extract a 300-dimensional word vector for each word in the turn and then average them, giving a 300-dimensional vector representation of the entire turn.

Part-of-speech counts (POS). Previous research has shown that part-of-speech (POS) features predict personality effectively [28]. To capture this information, we tag each turn using the Natural Language Toolkit (NLTK) [29] with the Stanford POS tagger [30] and count the occurrences of each of 45 POS types, yielding 45 POS features per turn.

4.2 Homogeneous models

Our data includes approximately equal amounts of English speech from female and male speakers of Standard American English (SAE) and Mandarin Chinese (MC). To mitigate the effect of gender- and L1-based acoustic-prosodic and language variation, as well as variation in the expression of personality, we train four separate models for each personality trait, each using roughly half the data and homogeneous with respect to either gender or L1. That is, we train one model using only female speech, another using only male speech, and two more using only speech from native SAE or MC speakers, respectively. We also train models that are homogeneous with respect to both gender and L1 (Figure 1), e.g. one using only speech from female native SAE speakers. At test time, the personality of each speaker is predicted using the corresponding homogeneous model. To evaluate the effectiveness of this technique for mitigating data heterogeneity, we match speakers to models using the gold-standard gender and L1 labels recorded during data collection. In an online application where such labels might not be available, they could be predicted automatically; accuracy for gender prediction is as high as 95% in deployed systems [31, 9], while L1 detection reaches accuracies of about 80% on essay data [32, 11].
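The train-per-group, route-at-test-time scheme above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the paper trains Weka SVMs, whereas here a tiny nearest-centroid classifier (a hypothetical stand-in, used only so the sketch runs with the standard library) plays that role. The names `train_homogeneous`, `predict_homogeneous` and the toy data are illustrative. As in the paper, test turns are routed by their gold-standard group label.

```python
from collections import defaultdict
from statistics import mean


class NearestCentroid:
    """Hypothetical stand-in for the per-group SVM: predicts the label of the closest class mean."""

    def fit(self, X, y):
        by_label = defaultdict(list)
        for x, label in zip(X, y):
            by_label[label].append(x)
        # One centroid (per-feature mean) for each label seen in this group's data.
        self.centroids = {
            label: [mean(col) for col in zip(*rows)] for label, rows in by_label.items()
        }
        return self

    def predict_one(self, x):
        def sq_dist(label):
            return sum((a - b) ** 2 for a, b in zip(x, self.centroids[label]))

        return min(self.centroids, key=sq_dist)


def train_homogeneous(X, y, groups, make_model=NearestCentroid):
    """Train one model per group value (e.g. 'female' or 'female/SAE') on that group's turns only."""
    buckets = defaultdict(lambda: ([], []))
    for x, label, g in zip(X, y, groups):
        buckets[g][0].append(x)
        buckets[g][1].append(label)
    return {g: make_model().fit(xs, ys) for g, (xs, ys) in buckets.items()}


def predict_homogeneous(models, X, groups):
    """Route each test turn to the model trained on its (gold-standard) group label."""
    return [models[g].predict_one(x) for x, g in zip(X, groups)]


# Toy data: two features per turn, with the male group's features shifted upward.
X_train = [[0.0, 0.0], [0.2, 0.1], [1.0, 1.0], [0.9, 1.1],
           [5.0, 5.0], [5.2, 4.9], [6.0, 6.0], [6.1, 5.8]]
y_train = ["LO", "LO", "HI", "HI", "LO", "LO", "HI", "HI"]
g_train = ["female"] * 4 + ["male"] * 4

models = train_homogeneous(X_train, y_train, g_train)
X_test = [[0.1, 0.0], [1.0, 0.9], [5.1, 5.0], [6.0, 5.9]]
g_test = ["female", "female", "male", "male"]
predictions = predict_homogeneous(models, X_test, g_test)
print(predictions)  # ['LO', 'HI', 'LO', 'HI']

# For contrast: a single pooled model trained on all turns.
pooled = NearestCentroid().fit(X_train, y_train)
print([pooled.predict_one(x) for x in X_test])  # ['LO', 'LO', 'HI', 'HI']
```

The toy data is constructed so that the two groups occupy different feature ranges; the pooled model then misclassifies two of the four test turns while the per-group models do not. This only illustrates the motivation; on real data the cost is the smaller training set available to each model.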
4.3 Normalization

The disadvantage of partitioning the training data into homogeneous models is the consequent reduction in training data size. We experiment with an alternative approach (Figure 2): using all the training data in a single model, but first normalizing the data by speaker, gender, or L1. Normalization can account for population-characteristic differences in vocal qualities and language use, but does not directly mitigate differential expression of personality traits.

We use z-score normalization, which represents each value in terms of standard deviations from the population mean, so that a high-pitched female voice, for example, would be comparable to a high-pitched male voice, disregarding that the raw female pitch value may be as much as 200 Hz higher than the raw male pitch value. For each value $x$, the normalized value is

$$z = \frac{x - \mu}{\sigma},$$

where $\mu$ and $\sigma$ are the corresponding population mean and standard deviation, respectively.

[Figure 2: Normalization model. The LIWC, LLD, WV, POS and DAL features are normalized by gender or L1 and passed to a single SVM for personality prediction.]

We experiment with speaker, gender, and L1 normalization. For gender and L1 normalization, $\mu$ and $\sigma$ are calculated from the portion of the training data corresponding to that gender or L1, and are used to normalize both the training and test data. For speaker normalization, $\mu$ and $\sigma$ are calculated separately for each speaker in both the training and test data. This corresponds to a likely use of these models, in which the target speaker's data is available but an entire population's is not.

4.4 Machine learning experiments

Mairesse et al. [33] experimented with personality recognition as a classification, regression, and ranking problem, finding that ranking models performed best overall. We believe that classification is the most useful format for using personality scores in downstream applications such as deception detection or adapting dialogue systems.
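The z-score normalization described in Section 4.3 can be sketched as follows, assuming each turn's features are stored as a list of floats. For gender or L1 normalization, the statistics are fitted on the training portion and then applied to both training and test turns; for speaker normalization, the same functions are applied with speaker IDs as the grouping key, using each speaker's own turns. The choice of the population standard deviation (`statistics.pstdev`) and the mapping of zero-variance features to 0 are assumptions, since the paper specifies neither; `fit_group_stats` and `zscore` are illustrative names.

```python
from collections import defaultdict
from statistics import mean, pstdev


def fit_group_stats(X, groups):
    """Per-group, per-feature (mu, sigma), computed from the turns of each group."""
    by_group = defaultdict(list)
    for x, g in zip(X, groups):
        by_group[g].append(x)
    stats = {}
    for g, rows in by_group.items():
        columns = list(zip(*rows))
        stats[g] = ([mean(c) for c in columns], [pstdev(c) for c in columns])
    return stats


def zscore(X, groups, stats):
    """Return (x - mu) / sigma per feature, using the statistics of each turn's group."""
    normalized = []
    for x, g in zip(X, groups):
        mu, sigma = stats[g]
        # Assumption: a feature with zero variance in a group is mapped to 0.0.
        normalized.append([(v - m) / s if s > 0 else 0.0 for v, m, s in zip(x, mu, sigma)])
    return normalized


# Toy example with one feature (mean f0 in Hz); the female turns sit 100 Hz higher.
X_train = [[200.0], [220.0], [240.0], [100.0], [120.0], [140.0]]
gender_train = ["F", "F", "F", "M", "M", "M"]

# Gender (or L1) normalization: fit on training data only, apply to train and test.
gender_stats = fit_group_stats(X_train, gender_train)
X_test = [[240.0], [140.0]]
z_test = zscore(X_test, ["F", "M"], gender_stats)
print(z_test)  # both about 1.22: equally "high-pitched" relative to their own gender

# Speaker normalization: each speaker's own turns supply mu and sigma,
# so a new test speaker needs no population statistics.
new_turns = [[300.0], [310.0], [320.0]]
new_ids = ["spk_test"] * 3
z_speaker = zscore(new_turns, new_ids, fit_group_stats(new_turns, new_ids))
print(z_speaker)  # approximately [[-1.22], [0.0], [1.22]]
```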
Rather than splitting scores into equal bins, we label the NEO scores as “High” (HI), “Medium” (ME), or “Low” (LO) for each dimension, based on thresholds derived from population norms from a large sample of administered NEO-FFI tests. We used Weka's SVM classifier for these experiments. The unit of classification is the turn, which was extracted as follows: the manual orthographic transcription was force-aligned with the audio, and the speech was segmented wherever there was a silence of more than 0.5 seconds. In total, there are approximately 30,000 turn-level instances, which are upsampled during training so that the HI, ME and LO labels, whose frequencies are unequal because the underlying scores are normally distributed, are balanced in the training set. The average duration of an instance is 3.77 s, though there are some particularly long or short outliers.

To focus the analysis on the homogenization and normalization techniques discussed here, we combine all feature sets, even though we previously found [19] that performance was higher for some traits using a subset of the features. Future work will explore more effective methods for combining feature sets. As in previous work, we predict each trait separately.

5 Results

5.1 Baseline

The baseline for these experiments is a model for each personality factor that includes all feature sets (LLD, LIWC, DAL, WV, POS) and all genders and L1s, without normalization. The accuracies for each trait are shown in Table 1. In this and all subsequent tables, highlighted results are significant at the 95% confidence level (two-tailed). The baseline surpasses the chance baseline of 0.33 (for three-way classification of HI vs. ME vs. LO) only for Openness and Neuroticism. The baseline Extraversion model performs worse than chance.

Table 1. Baseline accuracy for each trait. Green and bold cells are significantly better than chance (with 95% confidence); red cells are significantly worse than chance.
| | O | C | E | A | N |
|---|---|---|---|---|---|
| Chance | 0.33 | 0.33 | 0.33 | 0.33 | 0.33 |
| All features | 0.39 | 0.33 | 0.31 | 0.33 | 0.35 |

5.2 Homogeneous models

Table 2 shows the performance of the homogeneous models. The disparate results reinforce our previous observation [19] that each personality factor can be considered a separate task requiring its own approach.

Table 2. Accuracies for homogeneous models. Green and bold cells are significantly better than the baseline model (with 95% confidence); red cells are significantly worse than the baseline model.

| Training data | O | C | E | A | N |
|---|---|---|---|---|---|
| male | 0.32 | 0.31 | 0.31 | 0.34 | 0.29 |
| female | 0.34 | 0.26 | 0.35 | 0.32 | 0.43 |
| chinese | 0.34 | 0.37 | 0.35 | 0.39 | 0.26 |
| english | 0.36 | 0.34 | 0.35 | 0.36 | 0.34 |
| male/chinese | 0.35 | 0.39 | 0.26 | 0.34 | 0.25 |
| male/english | 0.38 | 0.36 | 0.37 | 0.33 | 0.32 |
| female/chinese | 0.35 | 0.37 | 0.33 | 0.32 | 0.36 |
| female/english | 0.48 | 0.38 | 0.47 | 0.27 | 0.40 |

Seven of the eight homogeneous Openness models performed worse than the baseline heterogeneous Openness model, which had the highest baseline accuracy of any trait (0.39), as in our previous work [19]. Neuroticism, which had the second-highest baseline (heterogeneous) accuracy, had worse performance for the male, Chinese-L1, and male/L1 models, but improved by 8 percentage points for the female model, yielding an accuracy of 0.43, and by 5 percentage points for the female/English model. Conscientiousness had worse performance for the gender-homogeneous models, but improved for the L1-homogeneous and gender/L1-homogeneous models. The female, Chinese, English, and all the gender/L1-homogeneous Extraversion models except male/Chinese outperformed chance, a threshold the baseline Extraversion model failed to clear; the L1-homogeneous Agreeableness models likewise outperformed chance.

It is especially noteworthy that the Chinese-L1 models outperformed the heterogeneous baseline for Conscientiousness, Extraversion and Agreeableness.
The subset of the corpus that was available at the time of analysis was not balanced with respect to L1, and the Chinese-L1 training data amounted to only 30-39% of the heterogeneous training data (the exact percentage differed for each trait). That the Chinese-L1 models achieved better performance with approximately a third of the training data indicates that the English-L1 instances did not contribute information useful for classifying the Chinese-L1 instances, and that future work should not combine data from speakers with different L1s indiscriminately. These results also suggest that the expression of Conscientiousness, Extraversion and Agreeableness is affected by cultural differences between native speakers of MC and SAE. Conversely, the success of the heterogeneous Openness model compared to the four corresponding homogeneous models suggests that Openness is expressed similarly across cultural backgrounds.

The results for the gender-homogeneous models are mixed. The male models do not outperform the baseline for any trait, and perform worse in most cases; the female models fall short of the baseline for Openness and Conscientiousness but outperform it significantly for Extraversion and Neuroticism. It is unclear whether data from the other gender contributes valuable information in cases where the baseline dominates, or whether the benefits of a homogeneous model were not enough to outweigh the reduction in training data, which is 40-49% for the male models.

When the data is broken down further to train models homogeneous with respect to both gender and L1, performance improves notably for Conscientiousness for all four groups, and for all traits except Agreeableness for English-speaking females. The improvement in Openness for female/English speakers (10 percentage points) is the only improvement we achieve for that trait across all experiments.
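The tables mark accuracies that differ from chance or from the baseline at 95% confidence (two-tailed), but the paper does not name the test used. One common choice for comparing an accuracy with a fixed chance rate is a normal-approximation test of a binomial proportion, sketched below as an assumption rather than as the authors' procedure. The evaluation size `n` is also hypothetical: 30,000 is used only because Section 4.4 reports roughly 30,000 turns, and `compare_to_chance` is an illustrative name.

```python
from math import sqrt
from statistics import NormalDist


def compare_to_chance(accuracy, n, chance=1 / 3, alpha=0.05):
    """Two-tailed z-test of an observed accuracy over n independent trials against a chance rate."""
    se = sqrt(chance * (1 - chance) / n)  # standard error of the proportion under H0
    z = (accuracy - chance) / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p, p < alpha


# Table 1 baseline accuracies (rounded), with a hypothetical n of 30,000 turns.
for trait, acc in {"O": 0.39, "C": 0.33, "E": 0.31, "A": 0.33, "N": 0.35}.items():
    z, p, significant = compare_to_chance(acc, n=30_000)
    print(f"{trait}: z={z:+.2f}, p={p:.3g}, significant={significant}")
```

The outcome depends heavily on `n`: with n = 1,000, an accuracy of 0.35 gives p ≈ 0.26 and would not be significant. The sketch also treats turns as independent, which they are not when several come from the same speaker, and comparing two models on the same test turns (as in Tables 2 and 3) is usually done with a paired test such as McNemar's.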
5.3 Normalization

Table 3 shows the results for speaker, gender and L1 normalization.

Table 3. Accuracies for normalized models. Green and bold cells are significantly better than the baseline model (with 95% confidence); red cells are significantly worse than the baseline model.

| Normalization | O | C | E | A | N |
|---|---|---|---|---|---|
| Speaker | 0.33 | 0.33 | 0.33 | 0.33 | 0.33 |
| Gender | 0.38 | 0.35 | 0.33 | 0.33 | 0.33 |
| L1 | 0.34 | 0.36 | 0.32 | 0.32 | 0.33 |

The speaker-normalized models do not outperform chance for any trait, though the Extraversion model does outperform the below-chance heterogeneous baseline; the baseline surpasses the Openness and Agreeableness models. Of the gender-normalized models, only the Conscientiousness model outperforms the baseline. The gender-normalized Openness model has the highest accuracy among the gender-normalized models (0.38), but does not outperform its relatively strong baseline (0.39). Similarly, the L1-normalized Conscientiousness model outperforms its baseline; the L1-normalized Openness model does worse than its baseline, and L1 normalization does not affect the performance of the Extraversion, Agreeableness and Neuroticism models.

5.4 Homogenization vs. normalization

Table 4 shows the differences in performance between homogeneous and normalized models. Since there are eight separate homogeneous models, each trained on a subset of the training data, we compare each normalization method with both the best homogeneous model and the average homogeneous performance on that trait.

For every normalization model, there is at least one homogeneous model, representing one subset of the population in the corpus, that significantly outperforms it. Furthermore, none of the normalization models significantly outperforms the average homogeneous performance, and several do significantly worse. Overall, our analysis shows that breaking down the data into homogeneous models is preferable to normalization almost across the board, despite the reduction in training data.
An interpretation of this finding is that intra-group differences in the expression of personality are more detrimental to classification accuracy than differences in vocal characteristics or language use.

Table 4. Performance differences between homogeneous and normalized models (homogeneous accuracy minus normalized accuracy; positive values favor the homogeneous models).

| Homogeneous performance | Normalization | O | C | E | A | N |
|---|---|---|---|---|---|---|
| Best | Speaker | +0.15 | +0.06 | +0.14 | +0.06 | +0.11 |
| Best | Gender | +0.10 | +0.03 | +0.14 | +0.06 | +0.10 |
| Best | Language | +0.14 | +0.03 | +0.15 | +0.06 | +0.10 |
| Average | Speaker | +0.04 | +0.02 | +0.02 | 0.00 | 0.00 |
| Average | Gender | -0.01 | -0.01 | +0.02 | 0.00 | 0.00 |
| Average | Language | +0.02 | -0.01 | +0.03 | +0.01 | 0.00 |

6 Discussion and Conclusions

This paper discusses two approaches for dealing with data heterogeneity, along two dimensions of heterogeneity, for five orthogonal personality traits. From our comparisons between the approaches, we conclude the following:

• Performance on this task can be improved by mitigating the heterogeneity of the training data.
• Partitioning the data into homogeneous models works better for this purpose than normalization.
• Partitioning with respect to both gender and L1 works better than partitioning along just one dimension.

More specific trends that we observe, which we present as hypotheses to be verified on other datasets rather than as firm conclusions, include:

• Openness is better predicted with a full heterogeneous dataset than with reduced homogeneous models.
• Conversely, Conscientiousness is best predicted with homogeneous models.
• The personality of female native speakers of SAE is predicted better with homogeneous models.
• The personality of native MC speakers is predicted better with homogeneous models.
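The comparison in Table 4 can be recomputed from Tables 2 and 3: for each trait, take the best and the mean accuracy over the eight homogeneous models and subtract each normalized model's accuracy. The Python sketch below does only this arithmetic, using the rounded values printed in the tables. Because of that rounding, a few recomputed cells differ from Table 4 by about 0.01 (for example, best homogeneous minus speaker-normalized Neuroticism is 0.43 - 0.33 = 0.10 here versus +0.11 in the table), presumably because the published values come from unrounded accuracies. The function name is illustrative.

```python
from statistics import mean

# Table 2: accuracies of the eight homogeneous models (columns O, C, E, A, N).
HOMOGENEOUS = {
    "male":           [0.32, 0.31, 0.31, 0.34, 0.29],
    "female":         [0.34, 0.26, 0.35, 0.32, 0.43],
    "chinese":        [0.34, 0.37, 0.35, 0.39, 0.26],
    "english":        [0.36, 0.34, 0.35, 0.36, 0.34],
    "male/chinese":   [0.35, 0.39, 0.26, 0.34, 0.25],
    "male/english":   [0.38, 0.36, 0.37, 0.33, 0.32],
    "female/chinese": [0.35, 0.37, 0.33, 0.32, 0.36],
    "female/english": [0.48, 0.38, 0.47, 0.27, 0.40],
}

# Table 3: accuracies of the normalized models (columns O, C, E, A, N).
NORMALIZED = {
    "Speaker":  [0.33, 0.33, 0.33, 0.33, 0.33],
    "Gender":   [0.38, 0.35, 0.33, 0.33, 0.33],
    "Language": [0.34, 0.36, 0.32, 0.32, 0.33],
}


def homogeneous_minus_normalized(homogeneous, normalized):
    """Best and mean homogeneous accuracy minus each normalized accuracy, per trait."""
    per_trait = list(zip(*homogeneous.values()))  # one tuple of 8 accuracies per trait
    best = [max(col) for col in per_trait]
    average = [mean(col) for col in per_trait]
    return {
        method: {
            "best": [b - a for b, a in zip(best, accs)],
            "average": [m - a for m, a in zip(average, accs)],
        }
        for method, accs in normalized.items()
    }


diffs = homogeneous_minus_normalized(HOMOGENEOUS, NORMALIZED)
for method, rows in diffs.items():
    for kind, values in rows.items():
        print(f"{kind:>7} vs {method:<8}", " ".join(f"{v:+.2f}" for v in values))
```

Positive values mean the homogeneous models are more accurate; the consistently positive "best" rows and the near-zero "average" rows reproduce the pattern described in Section 5.4.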
In future work we will explore the fusion of these findings with more sophisticated machine learning models, including late-fusion techniques for combining the various feature sets and multi-task learning for modeling the prediction of the five personality traits together, and will evaluate whether the gains achieved here are orthogonal to other methods for improving performance.

7 Acknowledgements

We thank Julia Hirschberg, Andrew Rosenberg, Sarah Ita Levitan, and Michelle Levine. This work was partially funded by AFOSR FA9550-11-1-0120.

8 References

[1] Alan E Kazdin, “Encyclopedia of psychology,” 2000.
[2] Paul T Costa and Robert R McCrae, Revised NEO Personality Inventory (NEO PI-R) and NEO Five-Factor Inventory (NEO FFI): Professional Manual, Psychological Assessment Resources, 1992.
[3] John M Digman, “Personality structure: Emergence of the five-factor model,” Annual Review of Psychology, vol. 41, no. 1, pp. 417–440, 1990.
[4] Robert R McCrae, “Trait psychology and culture: Exploring intercultural comparisons,” Journal of Personality, vol. 69, no. 6, pp. 819–846, 2001.
[5] Frank Enos, Stefan Benus, Robin L Cautin, Martin Graciarena, Julia Hirschberg, and Elizabeth Shriberg, “Personality factors in human deception detection: comparing human to machine performance,” in INTERSPEECH, 2006.
[6] Sarah Ita Levitan, Michelle Levine, Julia Hirschberg, Nishmar Cestero, Guozhen An, and Andrew Rosenberg, “Individual differences in deception and deception detection,” 2015.
[7] Sarah Ita Levitan, Yocheved Levitan, Guozhen An, Michelle Levine, Rivka Levitan, Andrew Rosenberg, and Julia Hirschberg, “Identifying individual differences in gender, ethnicity, and personality from dialogue for deception detection,” in Proceedings of NAACL-HLT, 2016, pp. 40–44.
[8] Shlomo Argamon, Sushant Dhawle, Moshe Koppel, and James W Pennebaker, “Lexical predictors of personality type,” in Proceedings of the 2005 Joint Annual Meeting of the Interface and the Classification Society of North America, 2005.
[9] Izhak Shafran, Michael Riley, and Mehryar Mohri, “Voice signatures,” in Automatic Speech Recognition and Understanding, 2003. ASRU'03. 2003 IEEE Workshop on. IEEE, 2003, pp. 31–36.
[10] Yu-Min Zeng, Zhen-Yang Wu, Tiago Falk, and Wai-Yip Chan, “Robust GMM based gender classification using pitch and RASTA-PLP parameters of speech,” in Machine Learning and Cybernetics, 2006 International Conference on. IEEE, 2006, pp. 3376–3379.
[11] Joel Tetreault, Daniel Blanchard, and Aoife Cahill, “A report on the first native language identification shared task,” in Proceedings of the Eighth Workshop on Innovative Use of NLP for Building Educational Applications, 2013, pp. 48–57.
[12] Klaus Rainer Scherer, Personality Markers in Speech, Cambridge University Press, 1979.
[13] Peter Borkenau and Anette Liebler, “Trait inferences: Sources of validity at zero acquaintance,” Journal of Personality and Social Psychology, vol. 62, no. 4, pp. 645, 1992.
[14] Guozhen An, Literature Review for Deception Detection, Ph.D. thesis, The City University of New York, 2015.
[15] Gelareh Mohammadi, Alessandro Vinciarelli, and Marcello Mortillaro, “The voice of personality: Mapping nonverbal vocal behavior into trait attributions,” in Proceedings of the 2nd International Workshop on Social Signal Processing. ACM, 2010, pp. 17–20.
[16] James W Pennebaker, Martha E Francis, and Roger J Booth, “Linguistic inquiry and word count: LIWC 2001,” Mahwah: Lawrence Erlbaum Associates, vol. 71, pp. 2001, 2001.
[17] James W Pennebaker and Laura A King, “Linguistic styles: language use as an individual difference,” Journal of Personality and Social Psychology, vol. 77, no. 6, pp. 1296, 1999.
[18] Matthias R Mehl, Samuel D Gosling, and James W Pennebaker, “Personality in its natural habitat: manifestations and implicit folk theories of personality in daily life,” Journal of Personality and Social Psychology, vol. 90, no. 5, pp. 862, 2006.
[19] Guozhen An, Sarah Ita Levitan, Rivka Levitan, Andrew Rosenberg, Michelle Levine, and Julia Hirschberg, “Automatically classifying self-rated personality scores from speech,” Interspeech 2016, pp. 1412–1416, 2016.
[20] Sarah I Levitan, Guozhen An, Mandi Wang, Gideon Mendels, Julia Hirschberg, Michelle Levine, and Andrew Rosenberg, “Cross-cultural production and detection of deception from speech,” in Proceedings of the 2015 ACM on Workshop on Multimodal Deception Detection. ACM, 2015, pp. 1–8.
[21] Jonathan G Fiscus, “A post-processing system to yield reduced word error rates: Recognizer output voting error reduction (ROVER),” in Automatic Speech Recognition and Understanding, 1997. Proceedings., 1997 IEEE Workshop on. IEEE, 1997, pp. 347–354.
[22] Cynthia Whissell, Michael Fournier, René Pelland, Deborah Weir, and Katherine Makarec, “A dictionary of affect in language: IV. Reliability, validity, and applications,” Perceptual and Motor Skills, vol. 62, no. 3, pp. 875–888, 1986.
[23] Yoshua Bengio, Réjean Ducharme, Pascal Vincent, and Christian Jauvin, “A neural probabilistic language model,” Journal of Machine Learning Research, vol. 3, no. Feb, pp. 1137–1155, 2003.
[24] Holger Schwenk, “Continuous space language models,” Computer Speech & Language, vol. 21, no. 3, pp. 492–518, 2007.
[25] Xavier Glorot, Antoine Bordes, and Yoshua Bengio, “Domain adaptation for large-scale sentiment classification: A deep learning approach,” in Proceedings of the 28th International Conference on Machine Learning (ICML-11), 2011, pp. 513–520.
[26] Radim Řehůřek and Petr Sojka, “Software Framework for Topic Modelling with Large Corpora,” in Proceedings of the LREC 2010 Workshop on New Challenges for NLP Frameworks, Valletta, Malta, May 2010, pp. 45–50, ELRA.
[27] Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg S Corrado, and Jeff Dean, “Distributed representations of words and phrases and their compositionality,” in Advances in Neural Information Processing Systems, 2013, pp. 3111–3119.
[28] William R Wright and David N Chin, “Personality profiling from text: introducing part-of-speech n-grams,” in International Conference on User Modeling, Adaptation, and Personalization. Springer, 2014, pp. 243–253.
[29] Steven Bird, “NLTK: the natural language toolkit,” in Proceedings of the COLING/ACL on Interactive Presentation Sessions. Association for Computational Linguistics, 2006, pp. 69–72.
[30] Kristina Toutanova, Dan Klein, Christopher D Manning, and Yoram Singer, “Feature-rich part-of-speech tagging with a cyclic dependency network,” in Proceedings of the 2003 Conference of the North American Chapter of the Association for Computational Linguistics on Human Language Technology, Volume 1. Association for Computational Linguistics, 2003, pp. 173–180.
[31] Sarah Ita Levitan, Taniya Mishra, and Srinivas Bangalore, “Automatic identification of gender from speech,” in Speech Prosody, 2016.
[32] Julian Brooke and Graeme Hirst, “Native language detection with cheap learner corpora,” in Twenty Years of Learner Corpus Research. Looking Back, Moving Ahead: Proceedings of the First Learner Corpus Research Conference (LCR 2011). Presses universitaires de Louvain, 2013, vol. 1, p. 37.
[33] François Mairesse, Marilyn A Walker, Matthias R Mehl, and Roger K Moore, “Using linguistic cues for the automatic recognition of personality in conversation and text,” Journal of Artificial Intelligence Research, pp. 457–500, 2007.
