The SARptical Dataset for Joint Analysis of SAR and Optical Image in Dense Urban Area

The joint interpretation of very high resolution SAR and optical images in dense urban areas is not trivial due to the distinct imaging geometries of the two types of images. In particular, the inevitable layover caused by the side-looking SAR imaging geometry renders this task even more challenging. Only recently has the “SARptical” framework [1], [2] offered a promising solution to this problem. SARptical can trace individual SAR scatterers in corresponding high-resolution optical images via rigorous 3-D reconstruction and matching. This paper introduces the SARptical dataset, a collection of over 10,000 pairs of corresponding SAR and optical image patches extracted from TerraSAR-X high-resolution spotlight images and aerial UltraCAM optical images. This dataset opens new opportunities for multisensor data analysis. One can analyze the geometry, material, and other properties of the imaged objects in both the SAR and optical image domains. More advanced applications, such as SAR and optical image matching via deep learning [3], are now also possible.

💡 Research Summary

The paper presents the SARptical dataset, a large‑scale collection of precisely co‑registered very‑high‑resolution synthetic aperture radar (SAR) and optical image patches for dense urban environments. The authors begin by outlining the fundamental challenges of jointly interpreting SAR and optical data: SAR’s side‑looking geometry induces layover and shadowing, while optical imagery suffers from occlusions and illumination variability. Traditional 2‑D co‑registration is insufficient without an accurate three‑dimensional (3‑D) model of the scene.

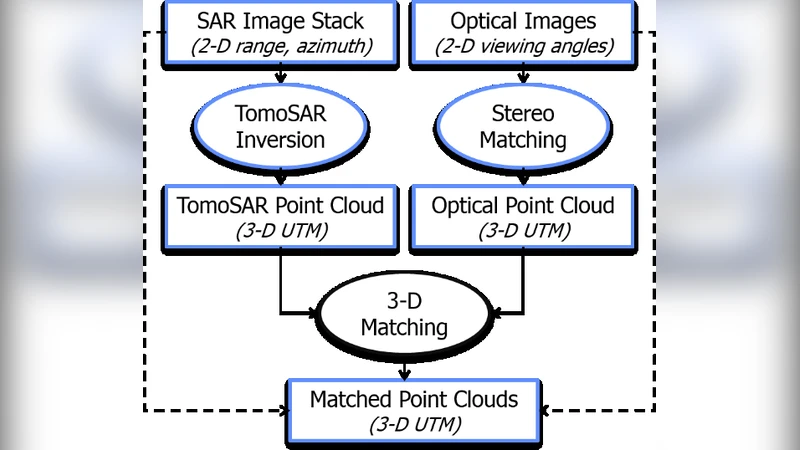

To overcome these obstacles, the authors build upon their previously introduced SARptical framework, which fuses 3‑D reconstructions derived independently from SAR and optical data. The SAR component uses differential SAR tomography (D‑TomoSAR) on a stack of 109 TerraSAR‑X spotlight images acquired over Berlin between 2009 and 2013, each with approximately 1 m ground resolution. This yields a dense point cloud with meter‑level height accuracy. The optical component employs multi‑view stereo matching on nine UltraCAM aerial photographs (20 cm ground spacing) covering the same area, producing a complementary high‑density optical point cloud.

The two point clouds are aligned in a common coordinate system without requiring an external digital elevation model. The alignment accuracy, governed by the number and quality of SAR and optical acquisitions, typically reaches 1–2 m. Once the 3‑D correspondence is established, any SAR pixel can be projected into the optical domain (and vice‑versa). For each selected SAR pixel, a square patch of 112 × 112 pixels (≈ 100 × 100 m on the ground) is extracted, and a matching optical patch is cropped around the projected location. Rotation and pixel‑spacing adjustments are applied so that the two patches are approximately aligned at the pixel level. Because the optical dataset contains multiple viewing angles, a single SAR patch may correspond to up to nine optical patches, each capturing a different façade or side of a building.
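The projection-and-crop step described above can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation: it assumes the optical image can be modeled by a 3 × 4 pinhole projection matrix, and the names `project_point` and `crop_patch` are hypothetical. Only the 112-pixel patch size comes from the text; the rotation and pixel-spacing adjustments are omitted.

```python
import numpy as np

PATCH_SIZE = 112  # patch edge length in pixels, as stated in the text


def project_point(P, xyz):
    """Project a 3-D point into an image.

    P is a 3x4 projection matrix (assumed pinhole camera model);
    xyz is the 3-D point in the common coordinate system.
    Returns the (column, row) image coordinates in pixels.
    """
    u, v, w = P @ np.append(np.asarray(xyz, dtype=float), 1.0)
    return u / w, v / w


def crop_patch(image, row, col, size=PATCH_SIZE):
    """Crop a size x size patch around (row, col).

    Returns None when the patch would extend past the image border,
    so incomplete patches are skipped rather than padded.
    """
    half = size // 2
    r0 = int(round(row)) - half
    c0 = int(round(col)) - half
    if r0 < 0 or c0 < 0:
        return None
    if r0 + size > image.shape[0] or c0 + size > image.shape[1]:
        return None
    return image[r0:r0 + size, c0:c0 + size]


# Hypothetical camera and scatterer position, for illustration only.
P = np.array([[100.0, 0.0, 500.0, 0.0],
              [0.0, 100.0, 400.0, 0.0],
              [0.0, 0.0, 1.0, 0.0]])
optical = np.zeros((800, 1000))
col, row = project_point(P, (1.0, -0.5, 10.0))
patch = crop_patch(optical, row, col)
```

With several optical views, the same 3-D point would be projected once per view using that view's own projection matrix, which is how one SAR patch can end up paired with multiple optical patches.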

The final dataset comprises 32 446 SAR pixels, which generate 89 502 optical patches, resulting in over 10 000 paired SAR‑optical patches. Each pair is accompanied by comprehensive metadata, including geographic coordinates, elevation, SAR amplitude and phase, and the viewing geometry of the optical image. The authors provide illustrative examples: one pair shows the roof of Berlin Central Station, and another captures a curved building at the intersection of Reinhardtstraße and Luisenstraße. A schematic figure demonstrates the workflow from raw data to the final co‑registered patches.

The SARptical dataset is released publicly (http://www.sipeo.bgu.tum.de/downloads) to foster research in multimodal remote sensing. Potential applications include joint classification of urban features using both SAR and optical cues, transfer learning between modalities, deep‑learning based SAR‑optical patch matching, and urban change detection that leverages multi‑view optical information. The authors emphasize that the dataset enables new scientific inquiries into the geometry, material properties, and structural dynamics of urban objects, which were previously difficult to study due to SAR layover and optical occlusion.

In conclusion, the paper contributes a valuable resource that bridges the gap between SAR and optical remote sensing in complex urban settings. By providing a rigorously validated, large‑scale set of co‑registered image patches, the work opens avenues for advanced data‑fusion algorithms, deep‑learning models, and practical urban monitoring applications. Future work will focus on extending the methodology to other cities, improving 3‑D reconstruction accuracy, and exploring novel multimodal learning paradigms that exploit the rich information contained in the SARptical dataset.
