Introducing ReQuEST: an Open Platform for Reproducible and Quality-Efficient Systems-ML Tournaments

Co-designing efficient machine-learning-based systems across the whole hardware/software stack to trade off speed, accuracy, energy, and cost is becoming extremely complex and time-consuming. Researchers often struggle to evaluate and compare published work across rapidly evolving software frameworks, heterogeneous hardware platforms, compilers, libraries, algorithms, data sets, models, and environments. We present our community effort to develop an open co-design tournament platform with an online public scoreboard. It will gradually incorporate best research practices while providing a common way for multidisciplinary researchers to optimize and compare the quality vs. efficiency Pareto optimality of various workloads on diverse and complete hardware/software systems. We want to leverage the open-source Collective Knowledge framework and the ACM artifact evaluation methodology to validate and share complete machine learning system implementations in a standardized, portable, and reproducible fashion. We plan to hold regular multi-objective optimization and co-design tournaments for emerging workloads such as deep learning, starting with ASPLOS'18, the ACM conference on Architectural Support for Programming Languages and Operating Systems, which is the premier forum for multidisciplinary systems research spanning computer architecture and hardware, programming languages and compilers, operating systems, and networking. The goal is to build a public repository of the most efficient machine learning algorithms and systems, which can be easily customized, reused, and built upon.

💡 Research Summary

The paper addresses the growing complexity of co-designing machine-learning (ML) systems that span the entire hardware/software stack. Researchers must navigate many interacting choices (network architectures, frameworks, libraries, compiler optimizations, and target platforms), each of which can dramatically affect speed, accuracy, energy consumption, and cost. Reproducing results across different environments is also increasingly difficult, which hampers fair comparison and slows progress.

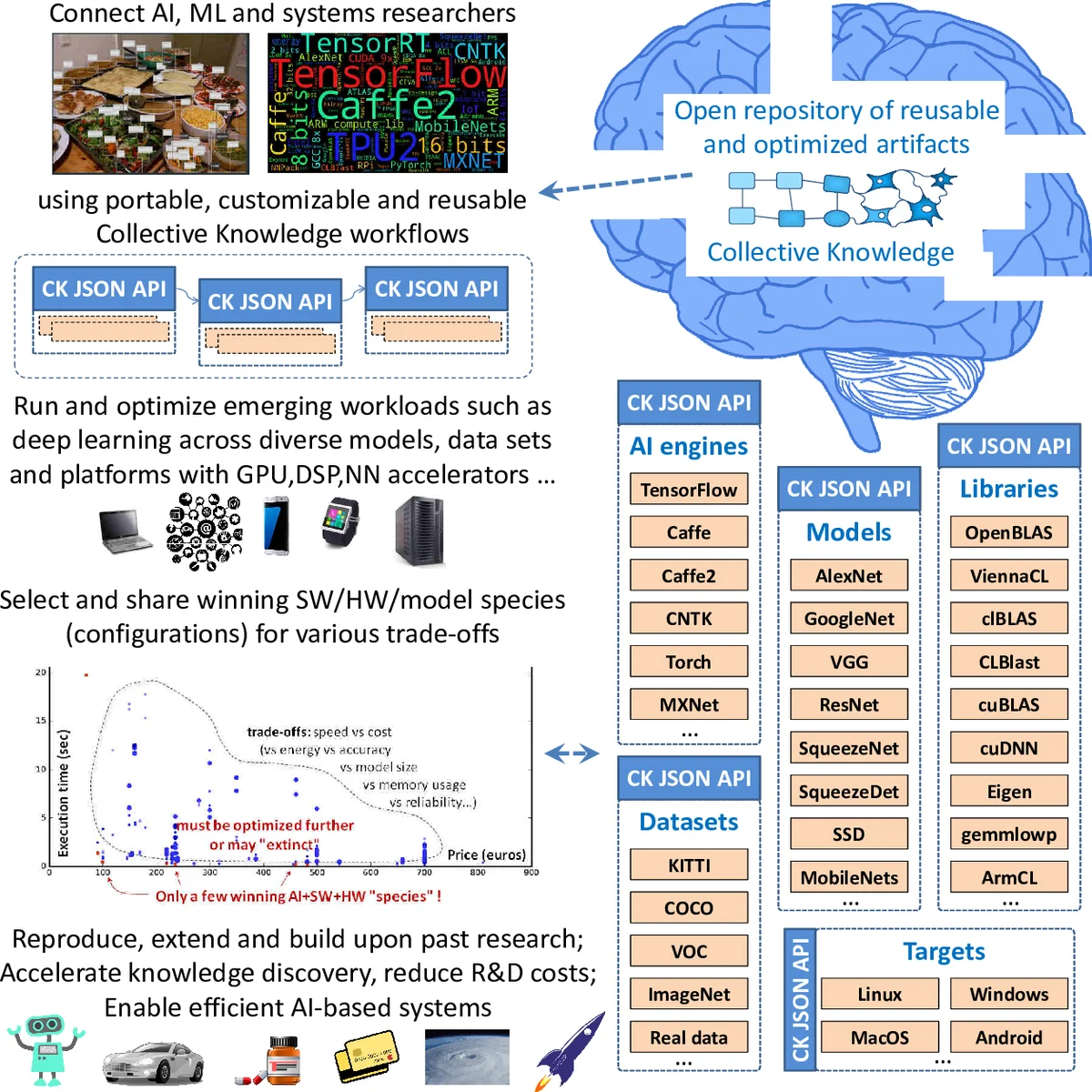

To tackle these challenges, the authors propose ReQuEST (Reproducible and Quality-Efficient Systems-ML Tournaments), an open, community-driven platform that combines two established infrastructures: the Collective Knowledge (CK) framework and the ACM Artifact Evaluation methodology. CK provides a Python-based wrapper that packages code, data, libraries, and hardware configuration into reusable, version-controlled plugins described by a common JSON schema. This enables automated deployment, benchmarking, and multi-objective optimization across heterogeneous platforms (CPU, GPU, DSP, FPGA, analog accelerators, etc.) and operating systems (Linux, Windows, macOS, Android).
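To make the idea of a JSON-described artifact more concrete, here is a minimal sketch in plain Python. It does not use the CK API, and the field names (`dependencies`, `supported_platforms`, `run`) and the helpers `load_meta` and `can_run_on` are hypothetical, chosen only to illustrate how a shared meta-description could let tooling decide where an artifact can be deployed.

```python
import json

# Hypothetical meta-description: the field names are illustrative
# assumptions, not the actual CK schema.
META_JSON = """
{
  "name": "image-classification-demo",
  "version": "1.0.0",
  "dependencies": {"framework": "tensorflow", "dataset": "imagenet-val-subset"},
  "supported_platforms": ["linux", "android"],
  "run": {"command": "python classify.py", "metrics": ["accuracy", "latency_ms"]}
}
"""

# Keys that every meta-description must provide in this sketch.
REQUIRED_KEYS = {"name", "version", "dependencies", "supported_platforms", "run"}


def load_meta(text):
    """Parse a meta-description and reject it if required keys are missing."""
    meta = json.loads(text)
    missing = REQUIRED_KEYS - meta.keys()
    if missing:
        raise ValueError(f"meta-description is missing keys: {sorted(missing)}")
    return meta


def can_run_on(meta, platform):
    """Return True if the artifact declares support for the given platform."""
    return platform.lower() in meta["supported_platforms"]


meta = load_meta(META_JSON)
print(meta["name"], "runs on Android:", can_run_on(meta, "Android"))
```

Because the description is plain data, the same file could in principle drive deployment, benchmarking, and result reporting without special handling for each artifact.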

The ACM Artifact Evaluation process supplies a rigorous, standardized validation pipeline: submitted artifacts are checked for completeness, reproducibility, and compliance with a predefined set of metrics before they are admitted to the public leaderboard. This ensures that every reported result has been independently verified.
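As a rough illustration of such an admission check, the sketch below compares the metrics an author reports against values obtained from independent re-runs. The metric names, the 5% relative tolerance, and the function `check_submission` are assumptions made for this example; the paper does not specify the evaluation committee's exact criteria.

```python
from statistics import mean

# Assumed set of metrics every submission must report (illustrative only).
REQUIRED_METRICS = ("accuracy", "latency_ms", "energy_j")


def check_submission(reported, reproduced_runs, rel_tol=0.05):
    """Return a list of problems; an empty list means the check passed.

    reported        -- dict of metric name -> value claimed by the authors
    reproduced_runs -- list of dicts with values measured by evaluators
    rel_tol         -- allowed relative deviation (assumed 5% here)
    """
    problems = []
    for metric in REQUIRED_METRICS:
        if metric not in reported:
            problems.append(f"missing metric: {metric}")
            continue
        values = [run[metric] for run in reproduced_runs if metric in run]
        if not values:
            problems.append(f"no reproduced value for: {metric}")
            continue
        observed = mean(values)
        if abs(observed - reported[metric]) > rel_tol * abs(reported[metric]):
            problems.append(
                f"{metric}: reported {reported[metric]}, reproduced {observed:.3f}"
            )
    return problems


reported = {"accuracy": 0.76, "latency_ms": 100.0, "energy_j": 2.0}
runs = [
    {"accuracy": 0.76, "latency_ms": 102.0, "energy_j": 2.1},
    {"accuracy": 0.75, "latency_ms": 104.0, "energy_j": 1.9},
]
print(check_submission(reported, runs))
```

A real evaluation would also involve human review of documentation and build steps; this sketch covers only the numeric comparison.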

ReQuEST’s central feature is a live, multi‑dimensional scoreboard that visualizes Pareto‑optimal trade‑offs among several key objectives: model accuracy, inference latency, power/energy consumption, hardware footprint, and monetary cost. The paper illustrates this with a prototype dashboard (Figure 2) that aggregates inference speed versus platform cost across multiple deep‑learning frameworks (TensorFlow, Caffe, MXNet, etc.), models (AlexNet, ResNet, MobileNet, etc.), datasets, and Android devices contributed by volunteers. Red dots on the plot indicate configurations that lie on the Pareto frontier, allowing participants to instantly see which software/hardware/model combinations dominate under specific constraints.
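The Pareto frontier highlighted on the scoreboard can be computed with a simple dominance test. The following is a minimal sketch for two objectives that are both minimized (latency and cost). The `Config` type and the sample values are invented for illustration and are not data from the paper.

```python
from typing import NamedTuple


class Config(NamedTuple):
    name: str
    latency_ms: float  # lower is better
    cost_usd: float    # lower is better


def dominates(a, b):
    """a dominates b if it is no worse in every objective and strictly
    better in at least one."""
    no_worse = a.latency_ms <= b.latency_ms and a.cost_usd <= b.cost_usd
    better = a.latency_ms < b.latency_ms or a.cost_usd < b.cost_usd
    return no_worse and better


def pareto_frontier(configs):
    """Keep only configurations that no other configuration dominates."""
    return [c for c in configs if not any(dominates(o, c) for o in configs)]


# Made-up sample points, not measurements from the paper.
configs = [
    Config("A", 120.0, 50.0),
    Config("B", 80.0, 200.0),
    Config("C", 150.0, 60.0),   # dominated by A
    Config("D", 40.0, 600.0),
    Config("E", 90.0, 250.0),   # dominated by B
]
frontier = pareto_frontier(configs)
print([c.name for c in frontier])  # prints ['A', 'B', 'D']
```

This pairwise comparison is quadratic in the number of configurations, which is fine for a scoreboard-sized data set; adding more objectives such as accuracy or energy only requires extending `dominates`, taking care to flip the comparison for objectives that are maximized.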

ReQuEST is organized as a bi‑annual tournament alternating between systems‑focused and ML‑focused conferences. The inaugural event is scheduled for ASPLOS 2018, where participants will submit complete ML system artifacts for inference workloads. Submissions will be evaluated by an artifact‑evaluation committee, and top‑performing “quality‑efficient” implementations will receive awards and potentially be published as ACM proceedings papers. An industrial panel will discuss technology transfer, helping shape future tournament themes, datasets, workloads, and target hardware (including emerging analog, neuromorphic, stochastic, and quantum accelerators).

Beyond the initial tournament, the authors envision a sustainable ecosystem: a public repository of reusable artifacts, continuous expansion of supported workloads (vision, speech, NLP, etc.), and inclusion of a broad spectrum of hardware from data‑center GPUs to edge‑device SoCs. Planned future enhancements include standardizing APIs and meta‑descriptions, integrating architectural simulators for pre‑silicon evaluation, exposing lower‑level optimization knobs, and adding richer metrics such as memory bandwidth or thermal envelope.

In summary, ReQuEST contributes (1) a reproducible artifact packaging and sharing mechanism, (2) a rigorous, community‑validated evaluation workflow, (3) a transparent multi‑objective benchmarking platform that highlights Pareto‑optimal solutions, and (4) a recurring, community‑driven forum that bridges academia and industry. By automating and standardizing the co‑design process, ReQuEST aims to accelerate research productivity, reduce R&D costs, and facilitate the transfer of state‑of‑the‑art ML systems into real‑world applications.
