A methodology for calculating the latency of GPS-probe data

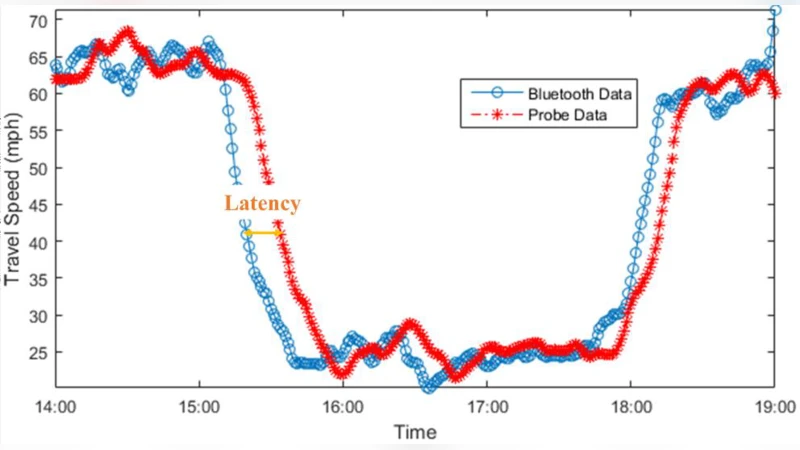

Crowdsourced GPS probe data has been gaining popularity in recent years as a source of real-time traffic information. Efforts have been made to evaluate the quality of such data from different perspectives. One quality indicator of any traffic data source is latency, which describes the punctuality of the data and is critical for real-time operations, emergency response, and traveler information systems. This paper offers a methodology for measuring probe data latency with respect to a selected reference source. Although Bluetooth re-identification data is used as the reference source, the methodology can be applied to any other ground-truth data source of choice (e.g., Automatic License Plate Readers or Electronic Toll Tags). The core of the methodology is a maximum pattern matching algorithm that works with three different fitness objectives. To test the methodology, sample field reference data were collected on multiple freeway segments for a two-week period using portable Bluetooth sensors as ground truth. Equivalent GPS probe data was obtained from a private vendor, and its latency was evaluated. Latency at different times of the day, the impact of the road segmentation scheme on latency, and the sensitivity of latency to both speed slowdowns and recovery from slowdown episodes are also discussed.

💡 Research Summary

The paper presents a systematic methodology for quantifying the latency of commercial GPS‑probe traffic data by using Bluetooth re‑identification measurements as a ground‑truth reference. Recognizing that latency—the time lag between a traffic disturbance on the road and its appearance in a data feed—is a critical quality metric for real‑time traffic management, the authors develop a step‑by‑step procedure that can be applied to any probe data source.

First, Bluetooth sensors are installed at the upstream and downstream ends of selected freeway segments. Each sensor records the MAC address and detection time of every Bluetooth‑enabled device. By matching MAC addresses between the two sensors, individual vehicle travel times are obtained; dividing the known segment length by each travel time yields space‑mean speeds. These speeds are aggregated into one‑minute intervals, forming the reference time series.
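The re-identification step can be illustrated with a minimal Python sketch. It is not the authors' implementation. The function names, the `(mac, time)` tuple layout, the rule of keeping the earliest upstream detection per device, and the harmonic-mean aggregation within each minute are all assumptions made for illustration.

```python
from collections import defaultdict


def match_speeds(upstream, downstream, length_miles):
    """Match MAC addresses across two sensors; return (downstream_time_s, speed_mph) pairs.

    upstream / downstream: lists of (mac, detection_time_seconds) tuples
    (a hypothetical record layout).
    """
    first_seen = {}
    for mac, t in upstream:
        # Assumption: keep the earliest upstream detection of each device.
        if mac not in first_seen or t < first_seen[mac]:
            first_seen[mac] = t

    speeds = []
    for mac, t_down in downstream:
        t_up = first_seen.get(mac)
        if t_up is None or t_down <= t_up:
            continue  # device not seen upstream, or non-positive travel time
        travel_time_h = (t_down - t_up) / 3600.0
        speeds.append((t_down, length_miles / travel_time_h))
    return speeds


def aggregate_per_minute(speeds):
    """Group speeds by downstream minute and return {minute: speed_mph}.

    Assumption: the per-minute value is the harmonic mean of the individual
    speeds, i.e. total distance divided by total travel time.
    """
    bins = defaultdict(list)
    for t, v in speeds:
        bins[int(t // 60)].append(v)
    return {m: len(vs) / sum(1.0 / v for v in vs) for m, vs in sorted(bins.items())}


if __name__ == "__main__":
    up = [("aa", 0), ("bb", 10), ("cc", 20)]
    down = [("aa", 360), ("bb", 370), ("dd", 400)]
    print(aggregate_per_minute(match_speeds(up, down, length_miles=6.0)))
```

Devices seen at only one sensor (`cc` and `dd` in the usage example) simply drop out, which is why low Bluetooth penetration leads to sparse reference samples.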

To ensure the reference data are reliable, a multi‑stage filtering process is applied: (1) speeds outside ±1.5 standard deviations of the interval mean are discarded, (2) intervals with a coefficient of variation greater than 1 are removed, and (3) intervals with fewer than a minimum number of observations are excluded. Missing minutes are filled by linear interpolation only when the gap is five minutes or less; longer gaps are omitted from the analysis. Both the Bluetooth and probe series undergo smoothing with a zero‑phase 5‑minute moving‑average filter (filtfilt in MATLAB) to suppress random spikes while avoiding phase shift.
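The filtering, gap-filling, and smoothing steps might look like the following Python sketch. Several details are assumptions rather than facts from the paper: the `min_obs=3` threshold (the summary does not give the minimum count), the use of the population standard deviation, and a centered moving average standing in for MATLAB's `filtfilt`. A centered window is also zero-phase, but its output is not numerically identical to forward-backward filtering.

```python
from statistics import mean, pstdev


def clean_interval(speeds, k=1.5, max_cv=1.0, min_obs=3):
    """Return the filtered mean speed of one interval, or None if it is rejected."""
    if not speeds:
        return None
    mu, sd = mean(speeds), pstdev(speeds)
    # (1) discard speeds farther than k standard deviations from the interval mean
    kept = [v for v in speeds if abs(v - mu) <= k * sd]
    if not kept:
        return None
    mu_kept = mean(kept)
    # (2) reject intervals whose coefficient of variation exceeds max_cv
    if mu_kept <= 0 or pstdev(kept) / mu_kept > max_cv:
        return None
    # (3) reject intervals with too few observations (min_obs is hypothetical)
    if len(kept) < min_obs:
        return None
    return mu_kept


def fill_short_gaps(series, max_gap=5):
    """Linearly interpolate runs of None no longer than max_gap; leave longer runs."""
    out = list(series)
    i, n = 0, len(out)
    while i < n:
        if out[i] is not None:
            i += 1
            continue
        j = i
        while j < n and out[j] is None:
            j += 1
        gap = j - i
        # Interpolate only interior gaps that have a known value on both sides.
        if i > 0 and j < n and gap <= max_gap:
            left, right = out[i - 1], out[j]
            for step in range(gap):
                out[i + step] = left + (right - left) * (step + 1) / (gap + 1)
        i = j
    return out


def centered_moving_average(series, window=5):
    """Zero-phase smoothing with a centered window that is truncated at the edges."""
    half = window // 2
    out = []
    for i, v in enumerate(series):
        if v is None:
            out.append(None)  # minutes in long gaps stay missing
            continue
        vals = [x for x in series[max(0, i - half): i + half + 1] if x is not None]
        out.append(sum(vals) / len(vals))
    return out
```

For example, `clean_interval([60, 61, 59, 62, 5])` drops the 5 mph reading as an outlier and returns 60.5.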

The core latency estimation is an iterative time‑shift search. Starting from a zero‑minute offset, the probe series is shifted in one‑minute steps up to a predefined upper bound (e.g., 15 minutes). For each offset, three fitness metrics are computed: (a) Absolute Vertical Distance (AVD) – the sum of absolute differences between Bluetooth and shifted probe speeds; (b) Square Vertical Distance (SVD) – the sum of squared differences, which penalizes larger mismatches; and (c) Pearson Correlation (COR) – the linear correlation coefficient between the two series. For each metric, the offset that gives the best fit (minimum AVD, minimum SVD, or maximum COR) is taken as the estimated latency. Using speed rather than travel time aligns the metric with the segment‑based nature of probe data.
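The time-shift search can be sketched in a few lines of Python. This is an illustrative version under stated assumptions, not the authors' code. It assumes both series are gap-free lists on the same one-minute grid, and it compares a fixed-length window at every offset so that the AVD and SVD sums stay comparable. The function names and return format are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def estimate_latency(reference, probe, max_shift=15):
    """Return the best offset in minutes under each fitness metric.

    The probe series is assumed to lag the reference, so probe[t + shift]
    is compared with reference[t] for shift = 0..max_shift.
    """
    if len(reference) != len(probe):
        raise ValueError("series must have equal length")
    n = len(reference) - max_shift  # fixed comparison window for every offset
    if n < 2:
        raise ValueError("series too short for the requested max_shift")

    ref = reference[:n]
    scores = {}
    for shift in range(max_shift + 1):
        shifted = probe[shift:shift + n]
        avd = sum(abs(r - p) for r, p in zip(ref, shifted))    # Absolute Vertical Distance
        svd = sum((r - p) ** 2 for r, p in zip(ref, shifted))  # Square Vertical Distance
        cor = pearson(ref, shifted)                            # Pearson Correlation
        scores[shift] = (avd, svd, cor)

    return {
        "AVD": min(scores, key=lambda s: scores[s][0]),
        "SVD": min(scores, key=lambda s: scores[s][1]),
        "COR": max(scores, key=lambda s: scores[s][2]),
    }


if __name__ == "__main__":
    # Synthetic check: a slowdown in the reference, reported 4 minutes late by the probe.
    reference = [60.0] * 20 + [30.0] * 10 + [60.0] * 30
    probe = [60.0] * 4 + reference[:-4]
    print(estimate_latency(reference, probe))
```

On real data the three metrics need not agree exactly; returning one offset per metric makes any disagreement visible instead of hiding it behind a single number.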

The methodology is validated on two freeway corridors in South Carolina: a 7.07‑mile stretch of I‑85 and a 4.67‑mile stretch of I‑26. Data were collected over a two‑week period (December 3–15, 2015) for both directions. GPS‑probe speeds, supplied by a private vendor at a one‑minute resolution, were processed to match the Bluetooth aggregation. Results show an overall average latency of about 5 minutes, with notable variations: during peak‑hour congestion the latency drops to 4–6 minutes, while off‑peak it rises to 6–8 minutes. When the segment is subdivided into shorter (≈0.5 mile) pieces, latency increases by roughly one minute, reflecting the additional aggregation delay inherent in probe data. Moreover, the latency is asymmetric with respect to traffic dynamics: it shortens (2–3 minutes) during rapid speed drops (e.g., incidents) but lengthens (5–7 minutes) during recovery phases, suggesting that the probe data processing pipeline reacts faster to deceleration than to acceleration. All three fitness functions converge on similar optimal offsets, and the correlation values during peaks exceed 0.92, confirming the robustness of the approach.

The study’s contributions are threefold. First, it provides an empirical benchmark for GPS‑probe latency that moves beyond anecdotal estimates (e.g., “≤ 8 minutes”) by grounding the measurement in field‑collected vehicle trajectories. Second, the use of multiple fitness criteria mitigates the risk of relying on a single, potentially biased metric. Third, the detailed sensitivity analysis (time‑of‑day, segment length, traffic state) offers practical guidance for agencies that need to compensate for latency in real‑time applications such as incident detection, traveler information, and adaptive signal control.

Limitations include dependence on sufficient Bluetooth re‑identification rates (low penetration leads to sparse samples), possible distortion of the true latency by the interpolation and smoothing steps applied to the one‑minute series, and the fact that Pearson correlation captures only linear similarity, which may be inadequate for highly nonlinear traffic patterns. Future work is suggested in three directions: (i) integrating additional ground‑truth sources (loop detectors, video analytics) to strengthen the reference; (ii) adopting Bayesian or Kalman filtering frameworks to quantify uncertainty and provide probabilistic latency estimates; and (iii) automating the entire pipeline for real‑time deployment, enabling dynamic latency correction in operational traffic management systems.
