An Analysis of Two Common Reference Points for EEGs

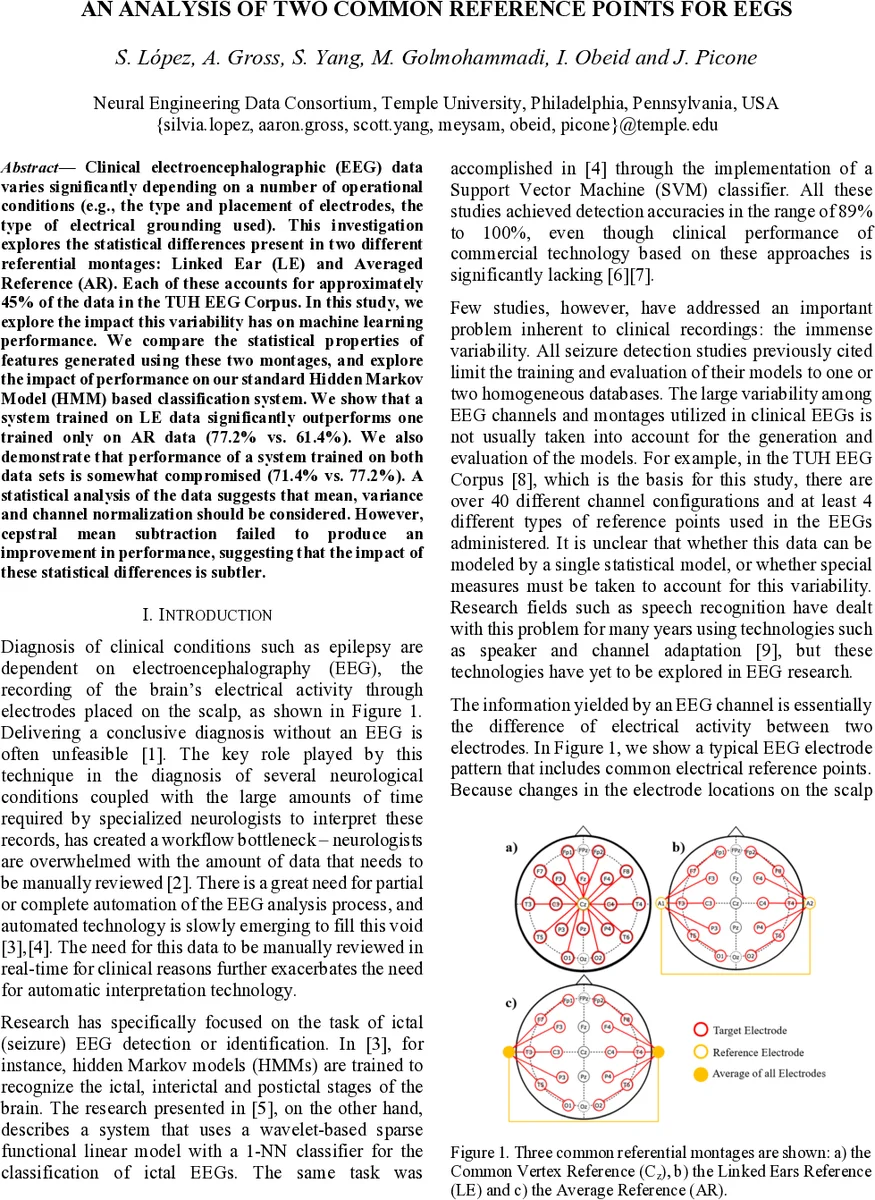

Clinical electroencephalographic (EEG) data varies significantly depending on a number of operational conditions (e.g., the type and placement of electrodes, the type of electrical grounding used). This investigation explores the statistical differences present in two different referential montages: Linked Ear (LE) and Averaged Reference (AR). Each of these accounts for approximately 45% of the data in the TUH EEG Corpus. In this study, we explore the impact this variability has on machine learning performance. We compare the statistical properties of features generated using these two montages, and explore the impact of performance on our standard Hidden Markov Model (HMM) based classification system. We show that a system trained on LE data significantly outperforms one trained only on AR data (77.2% vs. 61.4%). We also demonstrate that performance of a system trained on both data sets is somewhat compromised (71.4% vs. 77.2%). A statistical analysis of the data suggests that mean, variance and channel normalization should be considered. However, cepstral mean subtraction failed to produce an improvement in performance, suggesting that the impact of these statistical differences is subtler.

💡 Research Summary

This paper investigates how the choice of reference electrode montage—Linked Ears (LE) versus Average Reference (AR)—affects the statistical properties of EEG signals and the performance of a hidden Markov model (HMM)–based seizure detection system. Using the publicly available TUH EEG Corpus, which contains roughly equal amounts of LE (≈43.8 %) and AR (≈46.5 %) recordings, the authors extracted a standard 26‑dimensional feature set (9 base spectral features plus first and second derivatives) with 0.1 s frames and 0.2 s windows. For the statistical analysis only the 9 base features were considered.

First, descriptive statistics (mean and variance) were computed for each feature across the two reference groups. The results showed substantial differences in both central tendency and dispersion, indicating that the reference point materially alters the observed spectral characteristics.

Second, a principal component analysis (PCA) was performed on the 9‑dimensional base feature vectors. The covariance matrices yielded eigenvalues and eigenvectors that revealed distinct variance structures: the first principal component accounted for ~45 % of the total variance in LE data but only ~30 % in AR data, suggesting that LE recordings are more dominated by a single underlying factor. Eigenvector comparisons highlighted that low‑order cepstral coefficients and energy features behaved similarly across montages, whereas higher‑order cepstral coefficients (associated with β‑band activity) exhibited opposite polarity, reflecting montage‑dependent phase and amplitude shifts.

Third, the authors evaluated three HMM‑based classifiers: one trained exclusively on LE data, one on AR data, and one on a combined LE + AR dataset. Training used 44 recordings per class, and testing used 10 recordings per class, all from distinct patients (total 108 patients). Detection performance was measured with DET curves and overall accuracy. The LE‑only model achieved the highest accuracy (77.2 %), the AR‑only model performed considerably worse (61.4 %), and the mixed‑data model attained an intermediate accuracy (71.4 %). Notably, the mixed model performed best when evaluated on LE data but suffered a drop on AR data, confirming that the statistical bias between montages hampers a single model’s generalization.

The study also attempted cepstral mean normalization (CMN), a technique successful in speech recognition for mitigating channel effects. Contrary to expectations, CMN either failed to improve or actually degraded performance across most configurations, suggesting that EEG’s non‑stationary, non‑linear nature renders simple mean subtraction insufficient for eliminating montage bias.

In discussion, the authors argue that reference choice influences mean, variance, and higher‑order structure of EEG features, and that machine‑learning systems must either incorporate montage‑specific adaptation or develop reference‑independent representations. While their HMM baseline shows some robustness, the observed performance gap underscores the need for more sophisticated normalization or domain‑adaptation strategies.

The paper concludes with three key take‑aways: (1) LE and AR recordings exhibit statistically significant differences; (2) these differences translate into measurable drops in seizure detection accuracy when a single model is applied to both; and (3) techniques borrowed from speech processing, such as CMN, do not directly solve the problem for EEG. Future work is recommended to explore adaptive normalization, multi‑domain training, or feature extraction methods that are inherently reference‑agnostic, thereby enabling the full TUH corpus to be leveraged without sacrificing detection performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment