Evaluation of Machine Learning Algorithms for Intrusion Detection System

Intrusion detection system (IDS) is one of the implemented solutions against harmful attacks. Furthermore, attackers always keep changing their tools and techniques. However, implementing an accepted IDS system is also a challenging task. In this paper, several experiments have been performed and evaluated to assess various machine learning classifiers based on KDD intrusion dataset. It succeeded to compute several performance metrics in order to evaluate the selected classifiers. The focus was on false negative and false positive performance metrics in order to enhance the detection rate of the intrusion detection system. The implemented experiments demonstrated that the decision table classifier achieved the lowest value of false negative while the random forest classifier has achieved the highest average accuracy rate.

💡 Research Summary

The paper investigates the application of several supervised machine learning classifiers to intrusion detection, using the well‑known KDD‑99 benchmark dataset. The authors implement seven algorithms—J48 (C4.5 decision tree), Random Forest, Random Tree, Decision Table, Multi‑Layer Perceptron (MLP), Naïve Bayes, and Bayes Network—and evaluate them primarily on four performance metrics: overall accuracy, average accuracy, false‑positive rate, and false‑negative rate. The motivation is to identify a model that not only achieves high detection accuracy but also minimizes missed attacks (false negatives), which are especially critical in security contexts.

The introductory sections outline the evolution of network attacks (DoS, R2L, U2R, Probe) and the two main IDS paradigms (host‑based vs. network‑based, anomaly‑based vs. misuse‑based). A concise literature review highlights prior work that employed SVM, neural networks, fuzzy ART‑MAP, genetic algorithms, and various feature‑selection techniques on the same dataset, emphasizing that most studies focus on a single classifier or a limited set of attack types.

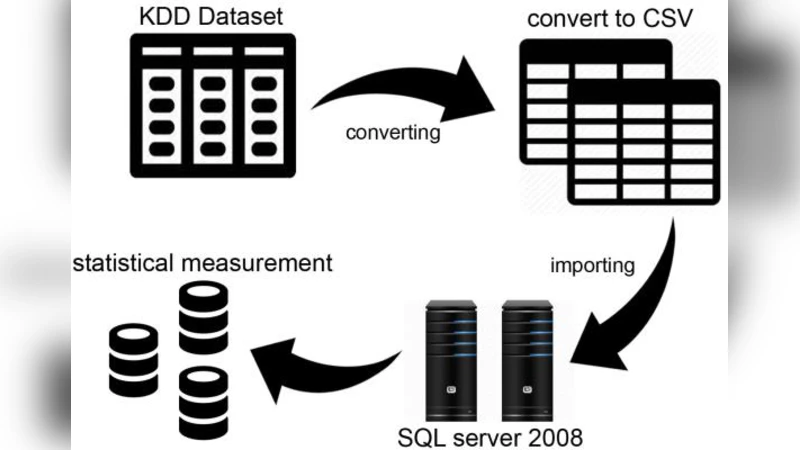

In the methodology, the authors import the full KDD‑99 dataset (≈4.9 million records, 41 attributes) into Microsoft SQL Server 2008, perform basic statistical profiling, and then extract a relatively small subset of 1,487,53 records for training and testing. The paper provides tables describing the distribution of attack categories (DoS dominates with ~79 % of instances) and the 41 basic TCP/IP attributes. However, the preprocessing steps (handling of missing values, encoding of categorical features, normalization, or class‑imbalance mitigation) are not described in detail.

Each classifier is briefly introduced: J48 as an improved C4.5 tree, Random Forest as an ensemble of decision trees, Random Tree as a single tree built on randomly selected attributes, Decision Table as a rule‑based lookup table, MLP as a feed‑forward neural network with various activation functions, Naïve Bayes as a probabilistic classifier based on Bayes’ theorem, and Bayes Network as a graphical model representing conditional dependencies. The authors note the theoretical strengths of each method but do not discuss hyper‑parameter tuning, cross‑validation, or computational cost.

Experimental results show that Random Forest achieves the highest average accuracy among the tested models, while the Decision Table yields the lowest false‑negative rate, indicating superior capability to avoid missing actual attacks. Naïve Bayes and Bayes Network perform comparatively poorly, with higher false‑positive rates. The paper concludes that a combination of high‑accuracy (Random Forest) and low‑miss (Decision Table) characteristics could be beneficial for practical IDS deployment.

Critical analysis reveals several methodological shortcomings. The use of a tiny sample from the original dataset raises concerns about representativeness and overfitting; the extreme class imbalance is not addressed through techniques such as SMOTE, cost‑sensitive learning, or class weighting, potentially biasing accuracy toward the majority DoS class. The evaluation lacks comprehensive metrics like precision, recall, F1‑score, and ROC‑AUC, which are essential for security‑oriented classification where false alarms and missed detections have asymmetric costs. Moreover, the absence of k‑fold cross‑validation or repeated experiments prevents assessment of statistical significance and model stability. The reliance on the outdated KDD‑99 dataset, without comparison to newer benchmarks (e.g., NSL‑KDD, UNSW‑NB15, CIC‑IDS2017), limits the relevance of the findings to contemporary network environments. Finally, the paper contains numerous typographical and grammatical errors that impede readability.

In summary, the study provides a straightforward comparative snapshot of several classic machine‑learning classifiers on a subset of the KDD‑99 data, highlighting that Random Forest excels in overall accuracy while Decision Table minimizes false negatives. However, to draw robust conclusions applicable to real‑world IDS, future work should employ larger, balanced datasets, adopt rigorous validation protocols, incorporate a broader set of performance metrics, and explore modern algorithms such as deep learning or ensemble hybrids.

Comments & Academic Discussion

Loading comments...

Leave a Comment