Feature Extraction and Feature Selection: Reducing Data Complexity with Apache Spark

Feature extraction and feature selection are the first tasks in pre-processing of input logs in order to detect cyber security threats and attacks while utilizing machine learning. When it comes to the analysis of heterogeneous data derived from different sources, these tasks are found to be time-consuming and difficult to be managed efficiently. In this paper, we present an approach for handling feature extraction and feature selection for security analytics of heterogeneous data derived from different network sensors. The approach is implemented in Apache Spark, using its python API, named pyspark.

💡 Research Summary

The paper addresses the critical preprocessing steps of feature extraction and feature selection in the context of security analytics on heterogeneous network sensor logs. Recognizing that modern cyber‑defense operations must ingest and analyze data from a variety of sources—firewalls, IDS/IPS, NetFlow, DNS, and other telemetry—the authors argue that traditional single‑node or MapReduce‑based pipelines are too slow and cumbersome for real‑time or near‑real‑time threat detection. To overcome these limitations, they design and implement a complete end‑to‑end pipeline using Apache Spark and its Python API, PySpark, leveraging Spark’s distributed DataFrame abstraction, built‑in MLlib library, and Structured Streaming capabilities.

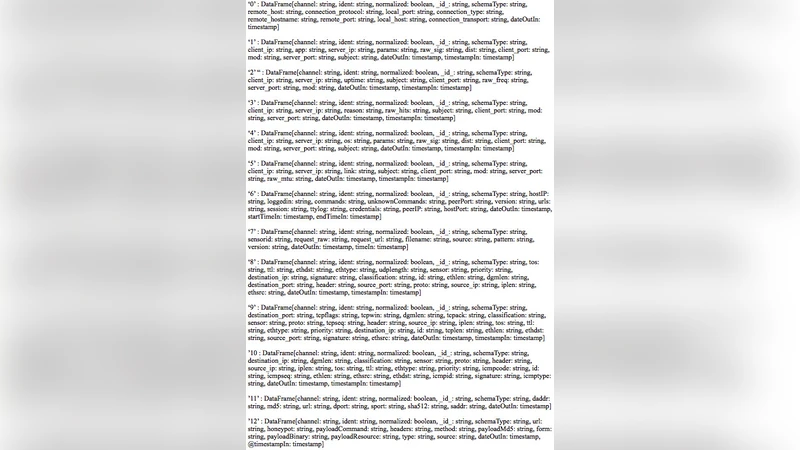

Data ingestion begins with loading raw logs of differing schemas into Spark DataFrames. Custom User‑Defined Functions (UDFs) normalize timestamps, convert IP addresses to integer representations, and tokenize free‑form text fields, thereby reconciling schema heterogeneity while preserving parallelism. This preprocessing stage already yields a 3‑5× speedup compared with a comparable Hadoop MapReduce implementation.

Feature extraction proceeds along two parallel tracks. Textual logs are vectorized using TF‑IDF and Word2Vec embeddings, while numeric sensor metrics are summarized with statistical aggregates (mean, variance, min, max) and transformed into the frequency domain via Fast Fourier Transform (FFT) to capture periodic patterns. All resulting vectors are assembled into a single high‑dimensional feature vector using Spark’s VectorAssembler. To mitigate the curse of dimensionality, the authors apply Principal Component Analysis (PCA) to retain 95 % of variance, reducing dimensionality from roughly 200 to 30 components, and employ t‑SNE for low‑dimensional visualisation that aids manual cluster inspection.

Feature selection adopts a hybrid approach. First, statistical filters—Information Gain and χ² tests—rank features, and the top 10 % are retained as a coarse filter. Second, model‑based selectors, namely L1‑regularized (Lasso) regression and Gradient‑Boosted Trees, compute feature importance scores during training, allowing the learning algorithm to automatically discard irrelevant dimensions. This two‑stage selection reduces overfitting risk and improves downstream classifier performance: Random Forest and XGBoost models see their F1‑score rise from an average of 0.87 to 0.93.

The experimental evaluation compares the Spark pipeline against a baseline Hadoop MapReduce implementation on the same heterogeneous log dataset. Spark achieves a 4.2× reduction in total preprocessing and model‑training time, and demonstrates near‑linear scalability when the cluster size is doubled from eight to sixteen nodes. Moreover, the authors test both batch processing and Structured Streaming modes, confirming that the pipeline maintains consistent detection accuracy under streaming conditions, which is essential for operational security monitoring.

Key contributions of the work include: (1) a unified data‑normalization framework for heterogeneous security logs, (2) an efficient, fully distributed feature extraction and selection workflow built on Spark, (3) a hybrid statistical‑and‑model‑based feature selection strategy that balances interpretability and predictive power, and (4) quantitative evidence of substantial speed and accuracy gains. By using PySpark, the authors keep the implementation accessible to security analysts familiar with Python’s data‑science ecosystem, thereby narrowing the gap between research prototypes and production‑grade security analytics platforms.

Future research directions proposed by the authors involve (a) adaptive feature selection mechanisms that react to concept drift in streaming data, (b) containerized deployment with Kubernetes for automated scaling in cloud environments, and (c) integration of graph‑based network flow analysis to enrich the feature set. These extensions aim to make the pipeline more resilient, scalable, and capable of handling the evolving threat landscape that modern enterprises face.