A Cascade Architecture for Keyword Spotting on Mobile Devices

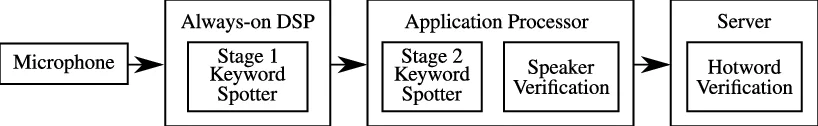

We present a cascade architecture for keyword spotting with speaker verification on mobile devices. By pairing a small computational footprint with specialized digital signal processing (DSP) chips, we are able to achieve low power consumption while continuously listening for a keyword.
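The gating idea behind the cascade can be sketched as follows. This is an illustrative sketch, not the authors' implementation. It assumes 16 kHz audio, a 10 ms hop, and the roughly two-second buffer mentioned in the summary. `Cascade`, `stage1_score`, and `stage2_accept` are hypothetical names. The cheap first stage sees every hop of audio. The expensive second stage is called only when the first-stage score crosses a threshold, and it then receives the whole buffered window.

```python
from collections import deque
from typing import Callable, Deque, List, Optional, Sequence

SAMPLE_RATE_HZ = 16_000   # assumption: 16 kHz mono audio (not stated in the source)
BUFFER_SECONDS = 2.0      # roughly two seconds of audio kept for the second stage
HOP_SAMPLES = 160         # assumption: 10 ms hop between 25 ms analysis frames


class Cascade:
    """Two-stage cascade: an always-on cheap detector gates an expensive second stage."""

    def __init__(
        self,
        stage1_score: Callable[[Sequence[float]], float],
        stage2_accept: Callable[[List[float]], bool],
        stage1_threshold: float,
    ) -> None:
        self.stage1_score = stage1_score        # hypothetical tiny always-on (DSP) model
        self.stage2_accept = stage2_accept      # hypothetical larger model plus speaker check
        self.stage1_threshold = stage1_threshold
        # Ring buffer: once full, the oldest samples are dropped automatically.
        self.buffer: Deque[float] = deque(maxlen=int(SAMPLE_RATE_HZ * BUFFER_SECONDS))
        self.stage2_calls = 0                   # how often the second stage was woken

    def push(self, hop: Sequence[float]) -> Optional[bool]:
        """Feed one hop of audio; return None while the second stage stays asleep."""
        self.buffer.extend(hop)
        if self.stage1_score(hop) < self.stage1_threshold:
            return None                         # no candidate keyword: second stage not woken
        self.stage2_calls += 1
        # Hand the whole buffered window (keyword plus preceding context) to stage 2.
        return self.stage2_accept(list(self.buffer))
```

With a toy first stage that thresholds peak amplitude, long stretches of silence never invoke `stage2_accept`. A single loud hop wakes it exactly once, with the full 32,000-sample window. A real first stage would score a window of log-mel features rather than raw samples from a single hop.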

💡 Research Summary

The paper introduces a two‑stage cascade architecture for keyword spotting (KWS) on mobile devices that meets stringent power budgets while delivering high detection accuracy. The first stage runs continuously on a low‑power digital signal processor (DSP) using a tiny neural network model (≈13 kB) that processes log‑mel filterbank features extracted from 25 ms frames. This stage buffers roughly two seconds of audio and wakes the second stage only when it detects a potential keyword. Its false‑accept rate (FAR) is held to a few events per hour, and its current draw stays below 1 mA.

The second stage runs on the main application processor (AP) with a larger, more expressive model and adds speaker verification. Enrollment consists of three keyword utterances from the authorized user. At runtime, an LSTM‑based verifier computes a speaker embedding from the same front‑end features and compares it with the stored enrollment embedding using cosine similarity. Verification reduces the overall FAR by a factor of 5–10, at the cost of less than a 1 % absolute increase in false‑reject rate (FRR).

Both stages are quantized to 8‑bit fixed‑point representations using a uniform linear quantizer. This lets them fit the DSP's limited memory (≈128 kB) and exploit fast integer arithmetic. Platform‑specific DSP instruction sets and precision differences are handled by a C‑based emulation library that ensures bit‑exact behavior during training and inference.

Experiments on 924 hours of TV background noise and 65,581 keyword utterances show that the cascade achieves a FAR of 0.02 FA/hr while keeping the FRR below 3.5 %. An optional server‑side validation stage, integrated with a full speech recognizer, can lower false alarms further.
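The verification step compares a test embedding against a stored speaker model by cosine similarity. The sketch below shows this under stated assumptions and is not the paper's exact procedure. The summary does not say how the three enrollment embeddings are combined, so averaging their L2-normalized vectors is an assumption. The 0.7 threshold is a hypothetical operating point. The LSTM that would produce the embeddings is out of scope here, so plain vectors stand in for them.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def l2_normalize(v: Vector) -> List[float]:
    """Scale a vector to unit length so only its direction matters."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two embeddings, in [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("embedding dimensions differ")
    return sum(x * y for x, y in zip(l2_normalize(a), l2_normalize(b)))


def enroll(utterance_embeddings: List[Vector]) -> List[float]:
    """Assumption: the speaker model is the mean of normalized enrollment embeddings."""
    if not utterance_embeddings:
        raise ValueError("need at least one enrollment utterance")
    normed = [l2_normalize(e) for e in utterance_embeddings]
    dim = len(normed[0])
    return [sum(e[i] for e in normed) / len(normed) for i in range(dim)]


def verify(test_embedding: Vector, speaker_model: Vector, threshold: float = 0.7) -> bool:
    """Accept if similarity reaches the (hypothetical) threshold."""
    return cosine_similarity(test_embedding, speaker_model) >= threshold
```

For example, suppose a user enrolls with three nearly parallel embeddings. A test embedding pointing the same way is accepted, while an orthogonal one (a different speaker, in this toy setup) is rejected. Raising the threshold trades a lower FAR for a higher FRR, which is the trade-off the summary quantifies.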
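A uniform linear quantizer maps real values onto 256 evenly spaced levels. The sketch below assumes an affine scheme with one scale and offset per tensor, taken from the tensor's minimum and maximum. The summary does not specify the fixed-point format or how ranges are chosen, so treat those details as assumptions. `quantize_uint8` and `dequantize_uint8` are hypothetical helper names.

```python
from typing import List, Tuple


def quantize_uint8(values: List[float]) -> Tuple[List[int], float, float]:
    """Map floats onto 256 evenly spaced levels between the tensor's min and max.

    Assumption: one (scale, offset) pair per tensor, derived from its range.
    """
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 255.0 if hi > lo else 1.0   # step between adjacent levels
    # Round to the nearest level and clamp to the 8-bit code range 0..255.
    codes = [min(255, max(0, round((v - lo) / scale))) for v in values]
    return codes, scale, lo


def dequantize_uint8(codes: List[int], scale: float, lo: float) -> List[float]:
    """Reconstruct approximate floats from 8-bit codes."""
    return [lo + c * scale for c in codes]
```

Every reconstructed value lies within half a quantization step (`scale / 2`) of the original. Storing weights as 8-bit codes takes a quarter of the bytes of 32-bit floats, which matters when the whole model must fit in roughly 128 kB of DSP memory.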
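The two reported metrics use different denominators. FAR is normalized by hours of keyword-free audio, while FRR is a fraction of true keyword utterances. The helper names below are hypothetical. The function `max_false_accepts` applies plain arithmetic to the reported operating point. On 924 hours, a FAR of 0.02 FA/hr permits at most 18 false accepts. An FRR below 3.5 % on 65,581 utterances permits at most 2,295 misses. These are bounds implied by the numbers, not counts reported in the paper.

```python
import math


def false_accepts_per_hour(false_accepts: int, negative_hours: float) -> float:
    """FAR expressed as false accepts per hour of keyword-free audio."""
    if negative_hours <= 0:
        raise ValueError("negative_hours must be positive")
    return false_accepts / negative_hours


def false_reject_rate(false_rejects: int, keyword_utterances: int) -> float:
    """FRR as the fraction of true keyword utterances that were missed."""
    if keyword_utterances <= 0:
        raise ValueError("keyword_utterances must be positive")
    return false_rejects / keyword_utterances


def max_false_accepts(target_fa_per_hour: float, negative_hours: float) -> int:
    """Largest false-accept count that still meets a FA/hr target."""
    # Small epsilon guards against floating-point error when the product is an integer.
    return math.floor(target_fa_per_hour * negative_hours + 1e-9)
```

For instance, `max_false_accepts(0.02, 924.0)` gives 18. A 19th false accept would push the rate just above 0.02 FA/hr.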
Overall, the work shows that combining a power‑efficient DSP front‑end, aggressive quantization, and speaker verification in a cascade framework enables continuous, on‑device keyword spotting that respects mobile power constraints without sacrificing performance.
