Video Inpainting of Complex Scenes

We propose an automatic video inpainting algorithm which relies on the optimisation of a global, patch-based functional. Our algorithm is able to deal with a variety of challenging situations which naturally arise in video inpainting, such as the cor…

Authors: Alasdair Newson, Andres Almansa (LTCI), Matthieu Fradet

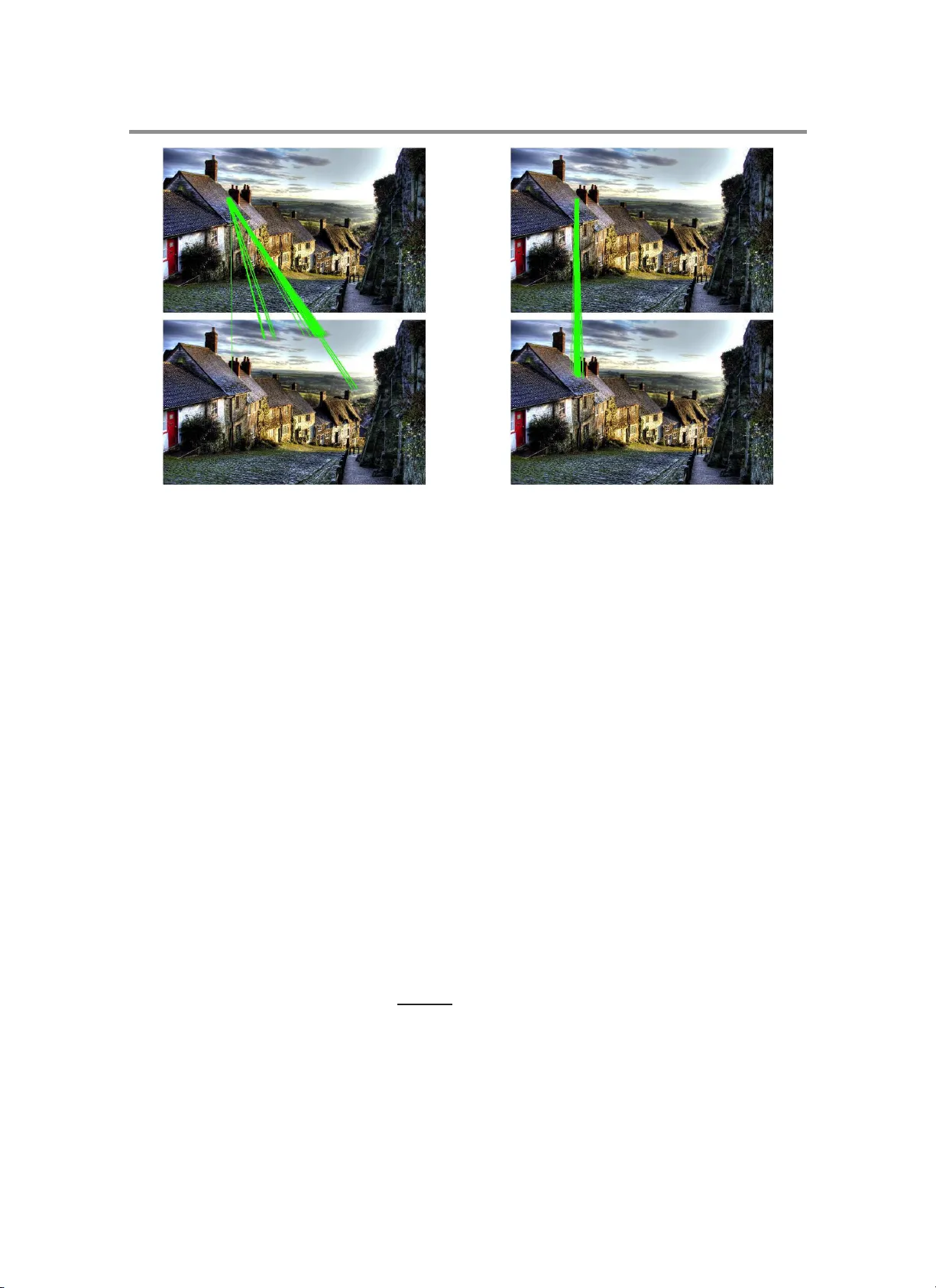

Alasdair Newson†‡, Andrés Almansa‡, Matthieu Fradet†, Yann Gousseau‡, and Patrick Pérez†

Abstract. We propose an automatic video inpainting algorithm which relies on the optimisation of a global, patch-based functional. Our algorithm is able to deal with a variety of challenging situations which naturally arise in video inpainting, such as the correct reconstruction of dynamic textures, multiple moving objects and moving background. Furthermore, we achieve this in an order of magnitude less execution time with respect to the state-of-the-art. We are also able to achieve good quality results on high definition videos. Finally, we provide specific algorithmic details to make implementation of our algorithm as easy as possible. The resulting algorithm requires no segmentation or manual input other than the definition of the inpainting mask, and can deal with a wider variety of situations than is handled by previous work.

Key words. Video inpainting, patch-based inpainting, video textures, moving background.

AMS subject classifications. 68U10, 65K10, 65C20

1. Introduction. Advanced image and video editing techniques are increasingly common in the image processing and computer vision world, and are also starting to be used in media entertainment. One common and difficult task closely linked to the world of video editing is image and video "inpainting". Generally speaking, this is the task of replacing the content of an image or video with some other content which is visually pleasing. This subject has been extensively studied in the case of images, to such an extent that commercial image inpainting products destined for the general public are available, such as Photoshop's "Content Aware fill" [1].
However, while some impressive results have been obtained in the case of videos, the subject has been studied far less extensively than image inpainting. This relative lack of research can largely be attributed to high time complexity due to the added temporal dimension. Indeed, it has only very recently become possible to produce good quality inpainting results on high definition videos, and this only in a semi-automatic manner. Nevertheless, high-quality video inpainting has many important and useful applications such as film restoration, professional post-production in cinema and video editing for personal use. For this reason, we believe that an automatic, generic video inpainting algorithm would be extremely useful for both academic and professional communities.

† Technicolor, 35570 Cesson-Sévigné, France. ‡ Télécom ParisTech, CNRS LTCI, 75013 Paris, France.

1.1. Prior work. The generic goal of replacing areas of arbitrary shapes and sizes in images by some other content was first presented by Masnou and Morel in [30]. This method used level-lines to disocclude the region to inpaint. The term "inpainting" was first introduced by Bertalmio et al. in [7]. Subsequently, a vast amount of research was done in the area of image inpainting [6], and to a lesser extent in video inpainting.

Generally speaking, video inpainting algorithms belong to either the "object-based" or "patch-based" category. Object-based algorithms usually segment the video into moving foreground objects and background that is either still or displays simple motion. These segmented image sequences are then inpainted using separate algorithms.
The background is often inpainted using image inpainting methods such as [13], whereas moving objects are often copied into the occlusion as smoothly as possible. Unfortunately, such methods include restrictive hypotheses on the moving objects' motion, such as strict periodicity. Some object-based methods include [12, 24, 28].

Patch-based methods are based on the intuitive idea of copying and pasting small video "patches" (rectangular cuboids of video information) into the occluded area. These patches are very useful as they provide a practical way of encoding local texture, structure and motion (in the video case).

Patches were first introduced for texture synthesis in images [18], and subsequently used with great success in image inpainting [8, 13, 17]. These methods copy and paste patches into the occlusion in a greedy fashion, which means that no global coherence of the solution can be guaranteed. This general approach was extended by Patwardhan et al. to the spatio-temporal case in [34]. In [35], this approach was further improved so that moving cameras could be dealt with. This is reflected by the fact that a good segmentation of the scene into moving foreground objects and background is needed to produce good quality results. This lack of global coherence can be a drawback, especially for the correct inpainting of moving objects.

Another method leading to a different family of algorithms was presented by Demanet et al. in [15]. The key insight here is that inpainting can be viewed as a labelling problem: each occluded pixel can be associated with an unoccluded pixel, and the final labels of the pixels result from a discrete optimisation process.
This idea was subsequently followed by a series of image inpainting methods [22, 25, 29, 36] which use optimisation techniques, such as graph cuts algorithms [9], to minimise a global patch-based functional. The vector field representing the correspondences between occluded and unoccluded pixels is referred to as the shift map by Pritch et al. [36], a term which we shall use in the current work. This idea was extended to the video case by Granados et al. in [20]. They propose a semi-automatic algorithm which optimises the spatio-temporal shift map. This algorithm presents impressive results on higher resolution images than were previously found in the literature (up to 1120 × 754 pixels). However, in order to reduce the large search space and high time complexity of the optimisation method, manual tracking of moving occluded objects is required. To the best of our knowledge, the inpainting results of Granados et al. are the most advanced to date, and we shall therefore compare our algorithm with these results.

We also note the work of Herling and Broll [23] whose goal is "diminished reality", which considers the inpainting task coupled with a tracking problem. This is the only approach of which we are aware which inpaints videos in a real-time manner. However, the method relies on restrictive hypotheses on the nature of the scene to inpaint and can therefore only deal with tasks such as removing a static object from a rigid platform.

Another family of patch-based video inpainting methods was introduced in the seminal work of Wexler et al. [38]. This paper proposes an iterative method that may be seen as a heuristic to solve a global optimisation problem. This work is widely cited and well-known in the video inpainting domain, mainly because it ensures global coherency in an automatic manner.
This method is in fact closely linked to methods such as non-local denoising [10]. This link was also noted in the work of Arias et al. [3], which introduced a general non-local patch-based variational framework for inpainting in the image case. In fact, the algorithm of Wexler et al. may be seen as a special case of this framework. We shall refer to this general approach as the non-local patch-based approach. Darabi et al. have presented another variation on the work of Wexler et al. for image inpainting purposes in [14]. In the video inpainting case, the high dimensionality of the problem makes such approaches extremely slow, in particular due to the nearest neighbour search, requiring up to several days for a few seconds of VGA video. This problem was considered in our previous work [31], which represented a first step towards achieving high quality video inpainting results even on high resolution videos.

Given the flexibility and potential of the non-local patch-based approach, we use it here to form the core of the proposed method. In order to obtain an algorithm which can deal with a wide variety of complex situations, we consider and propose solutions to some of the most important questions which arise in video inpainting.

1.2. Outline and contributions of the paper. The ultimate goal of our work is to produce an automatic and generic video inpainting algorithm which can deal with complex and varied situations. In Section 2, we present the variational framework and the notations which we shall use in this paper. The proposed algorithm and contributions are presented in Section 3.
These contributions can be summarised with the following points:
• we greatly accelerate the nearest neighbour search, using an extension of the PatchMatch algorithm [5] to the spatio-temporal case (Section 3.1);
• we introduce texture features to the patch distance in order to correctly inpaint video textures (Section 3.3);
• we deal with the problem of moving background (Section 3.4) in videos, using a robust affine estimation of the dominant motion in the video;
• we describe our initialisation scheme (Section 3.5), which is often left unspecified in previous work;
• we give precise details concerning the implementation of the multi-resolution scheme (Section 3.6).

As shown in Section 4, the proposed algorithm produces high quality results in an automatic manner, in a wide range of complex video inpainting situations: moving cameras, multiple moving objects, changing background and dynamic video textures. No pre-segmentation of the video is required. One of the most significant advantages of the proposed method is that it deals with all these situations in a single framework, rather than having to resort to separate algorithms for each case, as in [20] and [19] for instance. In particular, the problem of reconstructing dynamic video textures has not been previously addressed in other inpainting algorithms. This is a worthwhile advantage since the problem of synthesising video textures, which is usually done with dedicated algorithms, can be achieved in this single, coherent framework. Finally, our algorithm does not need any manual input other than the inpainting mask, and does not rely on foreground/background segmentation, which is the case of many other approaches [12, 20, 24, 28, 35].
We provide an implementation of our algorithm, which is available at the following address: http://www.telecom-paristech.fr/~gousseau/video_inpainting.

2. Variational framework and notation. As we have stated in the introduction, our video inpainting algorithm takes a non-local patch-based approach. At the heart of such algorithms lies a global patch-based functional which is to be optimised. This optimisation is carried out using an iterative algorithm inspired by the work of Wexler et al. [39]. The central machinery of the algorithm is based on the alternation of two core steps: a search for the nearest neighbours of patches which contain occluded pixels, and a reconstruction step based on the aggregation of the information provided by the nearest neighbours.

Figure 1. Illustration of the notation used for the proposed video inpainting algorithm.

This iterative algorithm is embedded in a multi-resolution pyramid scheme, similarly to [17, 39]. The multi-resolution scheme is vital for the correct reconstruction of structures and moving objects in large occlusions. The precise details concerning the multi-resolution scheme can be found in Section 3.6. A summary of the different steps in our algorithm can be seen in Figure 2, and the whole algorithm may be seen in Alg. 4.

Notation. Before describing our algorithm, let us first of all set down some notation. A diagram which summarises this notation can be seen in Figure 1. Let $u : \Omega \to \mathbb{R}^3$ represent the colour video content, defined over a spatio-temporal volume $\Omega$. In order to simplify the notation, $u$ will correspond both to the information being reconstructed inside the occlusion, and the unoccluded information which will be used for inpainting.
We denote a spatio-temporal position in the video as $p = (x, y, t) \in \Omega$ and by $u(p) \in \mathbb{R}^3$ the vector containing the colour values of the video at this position.

Let $\mathcal{H}$ be the spatio-temporal occlusion (the "hole" to inpaint) and $\mathcal{D}$ the data set (the unoccluded area). Note that $\mathcal{H}$ and $\mathcal{D}$ correspond to spatio-temporal positions rather than actual video content and they form a partition of $\Omega$, that is $\Omega = \mathcal{H} \cup \mathcal{D}$ and $\mathcal{H} \cap \mathcal{D} = \emptyset$.

Let $\mathcal{N}_p$ be a spatio-temporal neighbourhood of $p$. This neighbourhood is defined as a rectangular cuboid centred on $p$. The video patch centred at $p$ is defined as the vector $W^u_p = (u(q_1) \cdots u(q_N))$ of size $3 \times N$, where the $N$ pixels in $\mathcal{N}_p$, $q_1 \cdots q_N$, are ordered in a predefined way.

Let us note $\tilde{\mathcal{D}} = \{p \in \mathcal{D} : \mathcal{N}_p \subset \mathcal{D}\}$ the set of unoccluded pixels whose neighbourhood is also unoccluded (video patch $W^u_p$ is only composed of known colour values). We shall only use patches stemming from $\tilde{\mathcal{D}}$ to inpaint the occlusion. Also, let $\tilde{\mathcal{H}} = \cup_{p \in \mathcal{H}} \mathcal{N}_p$ represent a dilated version of $\mathcal{H}$.

Given a distance $d(\cdot, \cdot)$ between video patches, a key tool for patch-based inpainting is to define a correspondence map that associates to each pixel $p \in \Omega$ (notably those in occlusion) another position $q \in \tilde{\mathcal{D}}$, such that patches $W^u_p$ and $W^u_q$ are as similar as possible. This can be formalized using the so-called shift map $\phi : \Omega \to \mathbb{R}^3$ that captures the shift between a position and its correspondent, that is $q = p + \phi(p)$ is the "correspondent" of $p$. This map must verify that $p + \phi(p) \in \tilde{\mathcal{D}}$, $\forall p$ (see Figure 1 for an illustration).

Minimizing a non-local patch-based functional. The cost function which we use, following the work of Wexler et al. [39], has both $u$ and $\phi$ as arguments:

$$E(u, \phi) = \sum_{p \in \mathcal{H}} d^2(W^u_p, W^u_{p+\phi(p)}), \tag{2.1}$$

with

$$d^2(W^u_p, W^u_{p+\phi(p)}) = \frac{1}{N} \sum_{q \in \mathcal{N}_p} \| u(q) - u(q + \phi(p)) \|_2^2. \tag{2.2}$$

In all that follows, in order to avoid cumbersome notation we shall drop the $u$ from $W^u_p$ and simply denote patches as $W_p$.

We show in Appendix A that this functional is in fact a special case of the formulation of Arias et al. [3]. As mentioned at the beginning of this Section, this functional is optimised using the following two steps:

Matching. Given current video $u$, find in $\tilde{\mathcal{D}}$ the nearest neighbour (NN) of each patch $W_p$ that has pixels in inpainting domain $\mathcal{H}$, that is, the map $\phi(p)$, $\forall p \in \Omega \setminus \tilde{\mathcal{D}}$.

Reconstruction. Given shift map $\phi$, attribute a new value $u(p)$ to each pixel $p \in \mathcal{H}$.

These steps are iterated so as to converge to a satisfactory solution. The process may be seen as an alternated minimisation of cost (2.1) over the shift map $\phi$ and the video content $u$. As in many image processing and computer vision problems, this approach is implemented in a multi-resolution framework in order to improve results and avoid local minima.

3. Proposed algorithm. Now that we have given a general outline of our algorithm, we proceed to address some of the key challenges in video inpainting. The first of these concerns the search for the nearest neighbours of patches centred on pixels which need to be inpainted.

3.1. Approximate Nearest Neighbour (ANN) search. When considering the high complexity of the NN search step, it quickly becomes apparent that searching for exact nearest neighbours would take far too long. Therefore, an approximate nearest neighbour (ANN) search is carried out. Wexler et al. proposed the k-d tree based approach of Arya and Mount [4] for this step, but this approach remains quite slow.
For example, one ANN search step takes about an hour for a video containing 120 × 340 × 100 pixels, with about 422,000 missing pixels, which represents a relatively small occlusion (the equivalent of a 65 × 65 pixel box in each frame). We shall address this problem here, in particular by using an extension of the PatchMatch algorithm [5] to the spatio-temporal case. We note that the PatchMatch algorithm has also been used in conjunction with a 2D version of Wexler's algorithm for image inpainting, in the Content-Aware Fill tool of Photoshop [1], and by Darabi et al. [14].

Barnes et al.'s PatchMatch is a conceptually simple algorithm based on the hypothesis that, in the case of image patches, the shift map defined by the spatial offsets between ANNs is piece-wise constant. This is essentially because the image elements which the ANNs connect are often on rigid objects of a certain size. In essence, the algorithm looks randomly for ANNs and tries to "spread" those which are good. We extend this principle to the spatio-temporal setting.

Figure 2. Diagram of the proposed video inpainting algorithm.

Our spatio-temporal extension of the PatchMatch algorithm consists of three steps: (i) initialisation, (ii) propagation and (iii) random search.

Let us recall that $\tilde{\mathcal{H}}$ is a dilated version of $\mathcal{H}$. Initialisation consists of randomly associating an ANN to each patch $W_p$, $p \in \tilde{\mathcal{H}}$, which gives an initial ANN shift map, $\phi$. In fact, apart from the first iteration, we already have a good initialisation: the shift map $\phi$ from the previous iteration. Therefore, except during the initialisation step (see Section 3.5), we use this previous shift map in our algorithm instead of initialising randomly.
The propagation step encourages shifts in $\phi$ which lead to good ANNs to be spread throughout $\phi$. In this step, all positions in the video volume are scanned lexicographically. For a given patch $W_p$ at location $p = (x, y, t)$, the algorithm considers the following three candidates: $W_{p+\phi(x-1,y,t)}$, $W_{p+\phi(x,y-1,t)}$ and $W_{p+\phi(x,y,t-1)}$. If one of these three patches has a smaller patch distance with respect to $W_p$ than $W_{p+\phi(p)}$, then $\phi(p)$ is replaced with the new, better shift. The scanning order is reversed for the next iteration of the propagation, and the algorithm tests $W_{p+\phi(x+1,y,t)}$, $W_{p+\phi(x,y+1,t)}$ and $W_{p+\phi(x,y,t+1)}$. In the two different scanning orderings, the important point is obviously to use the patches which have already been processed in the current propagation step.

The third step, the random search, consists in looking randomly for better ANNs of each $W_p$ in an increasingly small area around $p + \phi(p)$, starting with a maximum search distance. At iteration $k$, the random candidates are centred at the following positions:

$$q = p + \phi(p) + \lfloor r_{\max}\, \rho^k\, \delta_k \rfloor, \tag{3.1}$$

where $r_{\max}$ is the maximum search radius around $p + \phi(p)$, $\delta_k$ is a 3-dimensional vector drawn from the uniform distribution over the cube $[-1, 1] \times [-1, 1] \times [-1, 1]$ and $\rho \in (0, 1)$ is the reduction factor of the search window size. In the original PatchMatch, $\rho$ is set to 0.5. This random search avoids the algorithm getting stuck in local minima. The maximum search parameter $r_{\max}$ is set to the maximum dimension of the video, at the current resolution level.

The propagation and random search steps are iterated several times to converge to a good solution. In our work, we set this number of iterations to 10. For further details concerning the PatchMatch algorithm in the 2D case, see [5].
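The candidate generation used by the propagation and random search steps can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation: the function names, the `forward` flag and the `(X, Y, T, 3)` array layout of the shift map are hypothetical choices made for this example.

```python
import numpy as np

def random_search_candidate(p, shift, r_max, rho, k, rng):
    """Candidate centre q = p + phi(p) + floor(r_max * rho**k * delta_k), as in Eq. (3.1)."""
    delta = rng.uniform(-1.0, 1.0, size=3)            # delta_k drawn in [-1, 1]^3
    offset = np.floor(r_max * rho**k * delta).astype(int)
    return np.asarray(p) + np.asarray(shift) + offset

def propagation_candidates(p, phi, forward=True):
    """Shifts borrowed from the three neighbours of p already visited in this scan.

    phi is assumed to be stored as an integer array of shape (X, Y, T, 3).
    A forward (lexicographic) scan looks at p - e_axis, a reversed scan at p + e_axis.
    Neighbours that fall outside the volume are skipped.
    """
    step = -1 if forward else 1
    candidates = []
    for axis in range(3):
        r = list(p)
        r[axis] += step
        if 0 <= r[axis] < phi.shape[axis]:
            candidates.append(phi[tuple(r)].copy())
    return candidates
```

In a full ANN search, each candidate would then be compared with the current shift using the patch distance, and kept only if it is better and its target lies in $\tilde{\mathcal{D}}$.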
Our spatio-temporal extension is summarized in Algorithm 1.

We note here that other ANN search methods for image patches exist which outperform PatchMatch [21, 26, 33]. However, in practice, PatchMatch appeared to be a good option because of its conceptual simplicity and nonetheless very good performance. Furthermore, to take the example of the "TreeCANN" method of Olonetsky and Avidan [33], the reported reduction in execution time is largely based on a very good ANN shift map initialisation followed by a small number of propagation steps. In our case, we already have a good initialisation (from the previous iteration), which makes the usefulness of such approaches questionable. However, further acceleration is certainly something which could be developed in the future.

Algorithm 1: ANN search with 3D PatchMatch

    Data: Current inpainting configuration u, φ, H̃
    Result: ANN shift map φ
    for k = 1 to 10 do
        if k is even then                      /* Propagation on even iteration */
            for p = p_1 to p_|H̃| (pixels in H̃ lexicographically ordered) do
                a = p − (1, 0, 0), b = p − (0, 1, 0), c = p − (0, 0, 1);
                q = arg min_{r ∈ {p, a, b, c}} d(W_p, W_{p+φ(r)});
                if p + φ(q) ∈ D̃ then φ(p) ← φ(q)
            end
        else                                   /* Propagation on odd iteration */
            for p = p_|H̃| to p_1 do
                a = p + (1, 0, 0), b = p + (0, 1, 0), c = p + (0, 0, 1);
                q = arg min_{r ∈ {p, a, b, c}} d(W_p, W_{p+φ(r)});
                if p + φ(q) ∈ D̃ then φ(p) ← φ(q)
            end
        end
        for p = p_1 to p_|H̃| do               /* Random search */
            q = p + φ(p) + ⌊r_max ρ^k RandUniform([−1, 1]^3)⌋;
            if d(W_p, W_q) < d(W_p, W_{p+φ(p)}) and q ∈ D̃ then φ(p) ← q − p
        end
    end

3.2. Video reconstruction. Concerning the reconstruction step, we use a weighted mean based approach, inspired by the work of Wexler et al., in which each pixel is reconstructed in the following manner:

$$u(p) = \frac{\sum_{q \in \mathcal{N}_p} s^p_q\, u(p + \phi(q))}{\sum_{q \in \mathcal{N}_p} s^p_q}, \quad \forall p \in \mathcal{H}, \tag{3.2}$$

with

$$s^p_q = \exp\left( - \frac{d^2(W_q, W_{q+\phi(q)})}{2 \sigma_p^2} \right). \tag{3.3}$$

Wexler et al. proposed the use of an additional weighting term to give more weight to the information near the occlusion border. We dispense with this term, since in our scheme it is somewhat replaced by our method of initialising the solution which will be detailed in Section 3.5. Parameter $\sigma_p$ is defined as the 75th percentile of all distances $\{ d(W_q, W_{q+\phi(q)}),\ q \in \mathcal{N}_p \}$ as in [39].

Observe that in order to minimise (2.1), the natural approach would be to do the reconstruction with the non-weighted scheme ($s^p_q = 1$ in Equation 3.2) that stems from $\partial E / \partial u(p) = 0$. However, the weighted scheme above tends to accelerate the convergence of the algorithm, meaning that we produce good results faster.

Figure 3. Comparison of different final reconstruction methods. Panels: original frame ("Crossing ladies" [39]), weighted average, mean shift, best patch. We observe that the proposed reconstruction using only the best patch at the end of the algorithm produces similar results to the use of the mean shift algorithm, avoiding blur induced by weighted patch averaging, while being less computationally expensive. Please note that the blurring effect is best viewed in the pdf version of the paper.

An important observation is that, in the case of regions with high frequency details, the use of this mean reconstruction (weighted or unweighted) often leads to blurry results, even if the correct patches have been identified. This phenomenon was also noted in [39]. Although we shall propose in Section 3.3 a method to correctly identify textured patches in the matching steps, this does not deal with the reconstruction of the video.
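The weighted mean of Equations (3.2) and (3.3) can be sketched for a single pixel as follows. This is a minimal NumPy illustration, not the authors' code: the function name, the argument layout and the handling of the degenerate case $\sigma_p = 0$ are assumptions made for this example.

```python
import numpy as np

def reconstruct_pixel(colours, distances, weighted=True):
    """Value u(p) computed from the patches overlapping p (Eqs. 3.2-3.3).

    colours   : (M, 3) array, row i holds u(p + phi(q_i)) for q_i in N_p
    distances : (M,) array, entry i holds d(W_{q_i}, W_{q_i + phi(q_i)})
    """
    colours = np.asarray(colours, dtype=float)
    distances = np.asarray(distances, dtype=float)
    if not weighted:
        return colours.mean(axis=0)                   # s = 1 for every q
    sigma = np.percentile(distances, 75)              # sigma_p: 75th percentile of the distances
    if sigma == 0.0:
        # Assumption of this sketch: in the degenerate case, keep only exact matches.
        weights = (distances == 0.0).astype(float)
    else:
        weights = np.exp(-distances**2 / (2.0 * sigma**2))
    return weights @ colours / weights.sum()
```

With two contributing patches of very different quality, the weighted result is pulled towards the colour proposed by the better match, whereas the unweighted mean treats both equally.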
We thus need to address this problem, at least in the final stage of the approach: throughout the algorithm, we use the weighted mean given in Equation 3.2 and, at the end of the algorithm, when the solution has converged at the finest pyramid level, we simply inpaint the occlusion using the best patch among the contributing ones. This corresponds to setting $\sigma_p$ to 0 in (3.2-3.3) or, seen in another light, may be viewed as a very crude annealing procedure. Final reconstruction at position $p \in \mathcal{H}$ reads:

$$u^{(\mathrm{final})}(p) = u(p + \phi^{(\mathrm{final})}(q^*)), \quad \text{with} \quad q^* = \arg\min_{q \in \mathcal{N}_p} d\left(W^{(\mathrm{final}-1)}_q, W_{q + \phi^{(\mathrm{final})}(q)}\right). \tag{3.4}$$

Another solution to this problem based on the mean shift algorithm was proposed by Wexler et al. in [39], but such an approach increases the complexity and execution time of the algorithm. Figure 3 shows that very similar results to those in [39] may be obtained with our much simpler approach.

3.3. Video texture pyramid. In order for any patch-based inpainting algorithm to work, it is necessary that the patch distance identify "correct" patches. This is not the case in several situations. Firstly, as noticed by Liu and Caselles in [29], the use of multi-resolution pyramids can make patch comparisons ambiguous, especially in the case of textures, in images and videos. Secondly, it turns out that the commonly used $\ell^2$ patch distance is ill-adapted to comparing textured patches. Thirdly, PatchMatch itself can contribute to the identification of incorrect patches. These reasons are explored and explained more extensively in Appendix B.

Figure 4. Illustration of the necessity of texture features for inpainting. Panels: approximate nearest neighbours without texture features; approximate nearest neighbours with texture features. Without the texture features, the correct texture may not be found.
A visual illustration of the problem may be seen in Figure 4. We note here that similar ambiguities were also identified by Bugeau et al. in [11], but their interpretation of the phenomenon was somewhat different.

We propose a solution to this problem in the form of a multi-resolution pyramid which reflects the textural nature of the video. We shall refer to this as the texture feature pyramid. The information in this texture feature pyramid is added to the patch distance in order to identify the correct patches. In order to identify textures, we shall consider a simple, gradient-based texture attribute. Following Liu and Caselles [29], we consider the absolute value of the image derivatives, averaged over a certain spatial neighbourhood $\nu$. Obviously, many other attributes could be considered; however, they seemed too involved for our purposes. More formally, we introduce the two-dimensional texture feature $T = (T_x, T_y)$, computed at each pixel $p \in \Omega$:

$$T(p) = \frac{1}{\mathrm{card}(\nu)} \sum_{q \in \nu} \left( |I_x(q)|, |I_y(q)| \right), \tag{3.5}$$

where $I_x(q)$ (resp. $I_y(q)$) is the derivative of the image intensity (grey-level) in the $x$ (resp. $y$) direction at the pixel $q$. The squared patch distance is now defined as

$$d^2(W_p, W_q) = \frac{1}{N} \sum_{r \in \mathcal{N}_p} \left( \| u(r) - u(r - p + q) \|_2^2 + \lambda \| T(r) - T(r - p + q) \|_2^2 \right), \tag{3.6}$$

where $\lambda$ is a weighting scalar.

The feature pyramid is then set up by subsampling the texture features of the full-resolution input video $u$. We note here that each level is obtained by subsampling the information contained at the finest pyramid resolution, and not by calculating $T^\ell$ based on the subsampled video at the level $\ell$:

$$\forall (x, y, t) \in \Omega^\ell, \quad T^\ell(x, y, t) = T(2^\ell x, 2^\ell y, t), \quad \ell = 1 \cdots L. \tag{3.7}$$

This is an important point, since the required textural information does not exist at coarser levels.
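Equations (3.5) to (3.7) can be sketched as follows. This is a minimal NumPy illustration under stated assumptions rather than the authors' implementation: the derivative scheme (`np.gradient`), the square edge-padded averaging window standing in for $\nu$, the `grey[x, y, t]` array layout and all function names are hypothetical choices made for this example.

```python
import numpy as np

def box_mean(a, size, axis):
    """Mean over a sliding window of `size` samples along `axis` (edge-padded)."""
    pad = [(0, 0)] * a.ndim
    pad[axis] = (size // 2, size - 1 - size // 2)
    c = np.cumsum(np.pad(a, pad, mode="edge"), axis=axis)
    c = np.insert(c, 0, 0.0, axis=axis)               # prepend a zero slice
    n = a.shape[axis]
    hi = np.take(c, np.arange(size, size + n), axis=axis)
    lo = np.take(c, np.arange(0, n), axis=axis)
    return (hi - lo) / size

def texture_features(grey, size):
    """T = (T_x, T_y) of Eq. (3.5) for a grey-level video indexed as grey[x, y, t]."""
    feats = []
    for axis in (0, 1):                               # x derivative, then y derivative
        g = np.abs(np.gradient(grey.astype(float), axis=axis))
        g = box_mean(box_mean(g, size, 0), size, 1)   # spatial average over the window
        feats.append(g)
    return np.stack(feats, axis=-1)                   # shape (X, Y, T, 2)

def texture_level(T, level):
    """Eq. (3.7): subsample the full-resolution features in x and y only."""
    s = 2 ** level
    return T[::s, ::s]

def patch_distance_sq(Wu_p, Wu_q, WT_p, WT_q, lam):
    """Eq. (3.6) for patches given as (N, 3) colour and (N, 2) feature arrays."""
    colour = np.sum((Wu_p - Wu_q) ** 2, axis=-1)
    texture = np.sum((WT_p - WT_q) ** 2, axis=-1)
    return float(np.mean(colour + lam * texture))
```

Note that `texture_level` always reads from the full-resolution array `T`, which mirrors the point made above: the features are computed once at the finest level and only subsampled afterwards.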
Features are not filtered before subsampling, since they have already been averaged over the neighbourhood $\nu$. In the experiments done in this paper, this neighbourhood is set, by default, to the area to which a coarsest-level pixel corresponds, which is a square of size $2^{L-1}$, as is done in [29]. However, in a more general setting, the size of this area should be independent of the number of levels, so care should be taken in the case where few pyramid levels are used.

A notable difference with respect to the work of Liu and Caselles [29] is the fact that we use the texture features at all pyramid levels. Liu and Caselles do not do this, since they perform graph cut based optimisation at the coarsest level, and at the finer levels only consider small relative shifts with respect to the coarse solution.

A final choice which must be made when using the texture features is how they are themselves reconstructed. In shift map based algorithms, this is not a problem, since by definition an occluded pixel takes on the characteristics of its correspondent in $\mathcal{D}$ (colour, texture features or anything else). In our case, we inpaint the texture features using the same reconstruction scheme as is used for colour information (see Eq. 3.2):

$$T(p) = \frac{\sum_{q \in \mathcal{N}_p} s^p_q\, T(p + \phi(q))}{\sum_{q \in \mathcal{N}_p} s^p_q}, \quad \forall p \in \mathcal{H}. \tag{3.8}$$

Conceptually, the use of these features is quite simple, and easily fits into our inpainting framework. To summarise, these features may be seen as simple texture descriptors which help the algorithm avoid making mistakes when choosing the area to use for inpainting.

The methodology which we have proposed for dealing with dynamic video textures is important for the following reasons.
Firstly, to the best of our knowledge, this is the first inpainting approach which proposes a global optimisation and which can deal correctly with textures in images and videos, without restricting the search space (contrary to [13, 14, 20, 29, 36]). Secondly, while the problem of recreating video textures is a research subject in its own right and algorithms have been developed for their synthesis [16, 27, 37], ours is the first algorithm to achieve this within an inpainting framework. Finally, we note that algorithms such as that of Granados et al. [19], which are specifically dedicated to background reconstruction, cannot deal with background video textures (such as waves), since they suppose that the background is rigid. This hypothesis is clearly not true for video textures. An example of the impact of the texture features in video inpainting may be seen in Figure 7.

Figure 5. A comparison of inpainting results with and without affine realignment. Panels: original frame ("Jumping man" [19]); inpainting without realignment; inpainting with realignment. Notice the incoherent reconstruction on the steps, due to random camera motion which makes spatio-temporal patches difficult to compare. These random motions are corrected with affine motion estimation and inpainting is performed in the realigned domain.

3.4. Inpainting with mobile background. We now turn to another common case of video inpainting, that of mobile backgrounds. This is the case, for example, when hand-held cameras are used to capture the input video.

There are several possible solutions to this problem. Patwardhan et al. [35] segment the video into moving foreground and background (which may also display motion) using motion estimation with block matching.
Once the moving foreground is inpainted, the background is realigned with respect to a motion estimated with block matching, and the background is filled by copying and pasting background pixels. In this case, the background should be perfectly realigned. Granados et al. [19] propose a homography-based algorithm for this task. They estimate a set of homographies between each frame and choose which homography should be used for each occluded pixel belonging to the background. Both of these algorithms require that the background and foreground be segmented, which we wish to avoid here. Furthermore, they have the quite strict limitation that pixels are simply copied from their realigned positions, meaning that the realignment must be extremely accurate. Here, we propose a solution which allows us to use our patch-based variational framework for both tasks (foreground and background inpainting) simultaneously, without any segmentation of the video into foreground and background.

The fundamental hypothesis behind patch-based methods in images or videos is that content is redundant and repetitive. This is easy to see in images, and may appear to be the case in videos. However, the temporal dimension is added in video patches, meaning that a sequence of image patches should be repeated throughout the video. This is not the case when a video displays random motion (as with a mobile camera): even if the required content appears at some point in the sequence, there is no guarantee that the required spatio-temporal patches will repeat themselves with the same motion. Empirically, we have observed that this is a significant problem even in the case of motions with small amplitude.

To counter this problem, we estimate a dominant, affine motion between each pair of successive frames, and use this to realign each frame with respect to one reference frame. In our work, we chose the reference frame to be the middle frame of the sequence (this should be adapted for larger sequences). We use the work of Odobez and Bouthemy [32] to realign the frames. The colour values of the pixels in the realigned frames are obtained using linear interpolation. The occlusion $\mathcal{H}$ is obviously also realigned. Once the frames are realigned with the reference frame (Alg. 2), we inpaint the video as usual. Finally, when inpainting is finished, we perform the inverse affine transformation on the images and paste the solution into the original occluded area. Figure 5 compares the results with and without this pre-processing step, on a prototypical example. Without it, it is not possible to find coherent patches which respect the border conditions.

Algorithm 2: Pre-processing step for realigning the input video.
    Data: Input video u
    Result: Aligned video and affine warps
    N_f = number of frames in video;
    N_m = ⌊N_f / 2⌋;
    for n = 1 to N_f − 1 do
        θ_{n,n+1} ← EstimateAffineMotion(u_n, u_{n+1});
    end
    for n = 1 to N_f do
        if n < N_m then
            θ_{n,N_m} = θ_{N_m−1,N_m} ∘ ··· ∘ θ_{n,n+1}
        if n > N_m then
            θ_{n,N_m} = θ^{−1}_{N_m,N_m+1} ∘ ··· ∘ θ^{−1}_{n−1,n}
        u_n ← AffineWarp(u_n, θ_{n,N_m});
    end

3.5. Initialisation of the solution. The iterative procedure at the heart of our algorithm relies on an initial inpainting solution. The initialisation step is very often left unspecified in work on video inpainting.
As we shall see in this Section, it plays a vital role, and we therefore explain our chosen initialisation method in detail. We inpaint at the coarsest level using an "onion peel" approach, that is to say we inpaint one layer of the occlusion at a time, each layer being one pixel thick. More formally, let $\mathcal{H}' \subset \mathcal{H}$ be the current occlusion, and $\partial\mathcal{H}' \subset \mathcal{H}'$ the current layer to inpaint. We define the unoccluded neighbourhood $\mathcal{N}'_p$ of a pixel $p$, with respect to the current occlusion $\mathcal{H}'$, as:

$$\mathcal{N}'_p = \{ q \in \mathcal{N}_p, \; q \notin \mathcal{H}' \}. \tag{3.9}$$

Figure 6. Impact of initialisation schemes on inpainting results. Top row: (a) occluded input image; (b) random initialisation; (c) smooth zero-Laplacian interpolation; (d) onion peel initialisation. Bottom row: (a) original image; (b) inpainting after random initialisation; (c) inpainting after zero Laplacian; (d) inpainting after onion peel initialisation. Note that the only scheme which successfully reconstructs the cardboard tube is the "onion peel" approach where first filling at the coarsest resolution is conducted in a greedy fashion.

Some choices are needed to implement this initialisation method. First of all, we only compare the unoccluded pixels during a patch comparison. The distance between two patches $W_p$ and $W_{p+\phi(p)}$ is therefore redefined as:

$$d^2(W_p, W_{p+\phi(p)}) = \frac{1}{|\mathcal{N}'_p|} \sum_{q \in \mathcal{N}'_p} \left( \| u(q) - u(q + \phi(p)) \|_2^2 + \lambda \| T(q) - T(q + \phi(p)) \|_2^2 \right). \tag{3.10}$$

We also need to choose which neighbouring patches to use for reconstruction. Some will be quite unreliable, as only a small part of the patches are compared. In our implementation, we only use the ANNs of patches whose centres are located outside the current occlusion layer.
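The layer-by-layer traversal described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it works on a 2D boolean grid with a 3 × 3 structuring element (the paper uses a 3 × 3 × 3 element on video), the function names `erode`, `onion_peel_layers` and `unoccluded_neighbourhood` are hypothetical, and treating positions outside the grid as occluded is an assumption made here so that the image border does not start a layer.

```python
def erode(mask):
    """Binary erosion of a 2D boolean grid by a 3x3 structuring element."""
    rows, cols = len(mask), len(mask[0])

    def occluded(i, j):
        # Assumption of this sketch: positions outside the grid count as occluded.
        if 0 <= i < rows and 0 <= j < cols:
            return mask[i][j]
        return True

    return [[all(occluded(i + di, j + dj)
                 for di in (-1, 0, 1) for dj in (-1, 0, 1))
             for j in range(cols)]
            for i in range(rows)]


def onion_peel_layers(mask):
    """Return the successive one-pixel-thick layers (current occlusion minus its erosion)."""
    current = [row[:] for row in mask]
    layers = []
    while any(any(row) for row in current):
        eroded = erode(current)
        layer = [(i, j)
                 for i, row in enumerate(current)
                 for j, inside in enumerate(row)
                 if inside and not eroded[i][j]]
        if not layer:
            # Happens only if the occlusion covers the whole grid.
            raise ValueError("no unoccluded pixel to start from")
        layers.append(layer)
        current = eroded
    return layers


def unoccluded_neighbourhood(p, mask, half=1):
    """Positions q of the patch neighbourhood of p that lie outside the current occlusion (Eq. 3.9)."""
    i, j = p
    rows, cols = len(mask), len(mask[0])
    return [(i + di, j + dj)
            for di in range(-half, half + 1)
            for dj in range(-half, half + 1)
            if 0 <= i + di < rows and 0 <= j + dj < cols
            and not mask[i + di][j + dj]]


# A 7x7 grid with a 3x3 occlusion in the middle.
mask = [[2 <= i <= 4 and 2 <= j <= 4 for j in range(7)] for i in range(7)]
layers = onion_peel_layers(mask)
```

On this toy mask the first layer is the ring of border pixels of the occlusion and the second layer is its centre; each layer would be filled before the next one is extracted, so that pixels filled earlier can serve as context for later layers.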
Formally, we reconstruct the pixels in the current layer by using the following formula, modified from Equation 3.2:

$$u(p) = \frac{\sum_{q \in \mathcal{N}'_p} s_q^p \, u(p + \phi(q))}{\sum_{q \in \mathcal{N}'_p} s_q^p}. \tag{3.11}$$

The same reconstruction is applied to the texture features. A pseudo-code for the initialisation procedure may be seen in Algorithm 3.

Algorithm 3: Inpainting initialisation
    Data: Coarse level inputs u^L, T^L, φ^L, H^L
    Result: Coarse initial filling u^L, T^L and map φ^L
    B ← 3 × 3 × 3 structuring element;
    H′ ← H^L;
    while H′ ≠ ∅ do
        ∂H′ ← H′ \ Erosion(H′, B);
        φ^L ← ANNsearch(u^L, T^L, φ^L, ∂H′);    // Alg. 1, with partial distance (3.10)
        u^L ← Reconstruction(u^L, φ^L, ∂H′);    // Eq. 3.11
        T^L ← Reconstruction(T^L, φ^L, ∂H′);    // Eq. 3.11
        H′ ← Erosion(H′, B);
    end

Figure 6 shows some evidence to support a careful initialisation scheme. Three different initialisations have been tested: random initialisation, zero-Laplacian interpolation and onion peel. Random initialisation is achieved by initialising the occlusion with pixels chosen randomly from the image. Zero-Laplacian (harmonic) interpolation is the solution of the Laplace equation $\Delta u = 0$ with Dirichlet boundary conditions stemming from $\mathcal{D}$. It may be seen (Figure 6) that the first two initialisations are unable to join the two parts of the cardboard tube together, and that the subsequent iterations do not improve the situation. In contrast, the proposed initialisation produces a satisfactory result.

In order to make our method as easy as possible to reimplement, we now present some further algorithmic details, which are in fact very important for achieving good results.

3.6. Implementation of the multi-resolution scheme. Our first remarks concern the implementation of the multi-resolution pyramids. Wexler et al. and Granados et al.
both note that temporal subsampling can be detrimental to inpainting results. This is due to the difficulty of representing motion at coarser levels. For this reason we do not subsample in the temporal direction, as in [20]. The only case where we need to temporally subsample is when the objects spend a long time behind the occlusion (this was only done in the "Jumping girl" sequence). This is quite a hard problem to solve since it becomes increasingly difficult to decide what motion an occluded object should have when the occlusion time grows longer, unless there is strictly periodic motion. We leave this as an open question, which could be investigated in further work.

A crucial choice when using multi-resolution schemes is the number of pyramid levels to use. Most other methods leave this parameter unspecified, or fix the size of the image/video at the coarsest scale, and determine the resulting number of levels [20, 29, 36]. In fact, when one considers the problem in more detail, it becomes apparent that the number of levels should be set so that the occlusion size is not too large in comparison to the patch size. This intuition is supported by experiments in very simple image inpainting situations, which showed that the occlusion size should be somewhat less than twice the patch size. In our experiments, we follow this general rule of thumb.

Figure 7. Usefulness of the proposed texture features. Panels: original frame ("Waves"); inpainting without features; inpainting with features. Without the features, the algorithm fails to recreate correctly the waves, which is a typical example of complex video texture.

Another question which is of interest is how to pass from one pyramid level to another. Wexler et al.
presented quite an intricate scheme in [39] to do this, whereas Granados et al. propose a simple upsampling of the shift map. This is conceptually simpler than the approach of Wexler et al. and, after experimentation, we chose this option as well. Therefore, the shift map $\phi$ is upsampled using nearest neighbour interpolation, and both the higher resolution video and the higher resolution texture features are reconstructed using Equation 3.2. One final note on this point is that we use the upsampled version of $\phi$ as an initialisation for the PatchMatch algorithm at each level apart from the coarsest (this differs from our previous work in [31]).

3.7. Various implementation details. We also require a threshold which will stop the iterations of the ANN search and reconstruction steps. In our work, we use the average colour difference in each channel per pixel between iterations as a stopping criterion. If this falls below a certain threshold, we stop the iteration at the current level. We set this threshold to 0.1. In order to avoid iterating for too long, we also impose a maximum number of 20 iterations at any given pyramid level. The patch size parameters were set to 5 × 5 × 5 in all of our experiments. We set the texture feature parameter $\lambda$ to 50. Concerning the spatio-temporal PatchMatch, we use ten iterations of propagation/random search during the ANN search algorithm and set the window size reduction factor $\beta$ to 0.5 (as in the paper of Barnes et al. [5]). The complete algorithm is summarised in Alg. 4.

4. Experimental results. The goal of our work is to achieve high quality inpainting results in varied, complex video inpainting situations, with reduced execution time. Therefore, we shall evaluate our results in terms of visual quality and execution time. We compare our work to that of Wexler et al.
[39] and to the most recent video inpainting method of Granados et al. [20]. All of the videos in this paper (and more) can be viewed and downloaded along with occlusion masks at http://www.telecom-paristech.fr/~gousseau/video_inpainting. An implementation of our method is also available at this address.

Figure 8. A comparison of our inpainting result with that of the background inpainting algorithm of Granados et al. [19]. Panels: original frame ("Girl" [19]); result from [19]; our result. In such cases with moving background, we are able to achieve high quality results (as do Granados et al.), but we do this in one, unified algorithm. This illustrates the capacity of our algorithm to perform well in a wide range of inpainting situations.

Figure 9. Comparison with Wexler et al. Columns: "Beach Umbrella", "Crossing Ladies", "Jumping girl". Rows: original frames; inpainting result from [39]; our inpainting result. We achieve results of similar visual quality compared to those in [39], with a reduction of the ANN search time by a factor of up to 50 times.

4.1. Visual evaluations. First of all, we have tested our algorithm on the videos proposed by Wexler et al. [39] and Granados et al. [20]. The visual results of our algorithm may be seen in Figures 9 and 10. We note that the inpainting results of the previous authors on these examples are visually almost perfect, so very little qualitative improvement can be made. It may be seen that our results are of similarly high quality to those of the previous algorithms. In particular we are able to deal with situations where several moving objects must be correctly recreated, without requiring manual segmentation as in [20]. We also achieve these results in at least an order of magnitude less time than the previous algorithms.
We note that it is not feasible to apply the method of Wexler et al. to the examples of [20], whose resolution is too large (up to 1120 × 754 × 200 pixels).

Figure 10. Comparison with Granados et al. Columns: original frame ("Duo"); original frame ("Museum"). Rows: inpainting result from [20]; our inpainting result. We achieve similar results to those of [20] in an order of magnitude less time, without user intervention. The occlusion masks are highlighted in green.

Algorithm 4: Proposed video inpainting algorithm.
    Data: Input video u over Ω, occlusion H, resolution number L
    Result: Inpainted video
    (u, θ) ← AlignVideo(u);                                  // Alg. 2
    {u^ℓ}, ℓ = 1..L ← ImagePyramid(u);
    {T^ℓ}, ℓ = 1..L ← TextureFeaturePyramid(u);              // Eqs. 3.5-3.7
    {H^ℓ}, ℓ = 1..L ← OcclusionPyramid(H);
    φ^L ← Random;
    (u^L, T^L, φ^L) ← Initialisation(u^L, T^L, φ^L, H^L);    // Alg. 3
    for ℓ = L to 1 do
        k = 0, e = 1;
        while e > 0.1 and k < 20 do
            v = u^ℓ;
            φ^ℓ ← ANNsearch(u^ℓ, T^ℓ, φ^ℓ, H^ℓ);             // Alg. 1
            u^ℓ ← Reconstruction(u^ℓ, φ^ℓ, H^ℓ);             // Eqs. 3.2-3.3
            T^ℓ ← Reconstruction(T^ℓ, φ^ℓ, H^ℓ);
            e = (1 / (3 |H^ℓ|)) ‖u^ℓ_{H^ℓ} − v_{H^ℓ}‖_2;
            k ← k + 1;
        end
        if ℓ = 1 then
            u ← FinalReconstruction(u^1, φ^1, H);            // Eq. 3.4
        else
            φ^{ℓ−1} ← UpSample(φ^ℓ, 2);                      // Sec. 3.6
            u^{ℓ−1} ← Reconstruction(u^{ℓ−1}, φ^{ℓ−1}, H^{ℓ−1});
            T^{ℓ−1} ← Reconstruction(T^{ℓ−1}, φ^{ℓ−1}, H^{ℓ−1});
        end
    end
    u ← UnwarpVideo(u, θ)

Next, we provide experimental evidence to show the ability of our algorithm to deal with various situations which appear frequently in real videos, some of which are not dealt with by previous methods. Figure 7 shows an example of the utility of using texture features in the inpainting process: without them, the inpainting result is quite clearly unsatisfactory. We have not directly compared these results with previous work.
However, it is quite clear that the method of [20] cannot deal with such situations. This method supposes that the background is static, and in the case of dynamic textures, it is not possible to restrict the search space as proposed in the same method for moving objects. Furthermore, the background inpainting algorithm of Granados et al. [19] supposes that moving background undergoes a homographic transformation, which is clearly not the case for video textures. By relying on a plain colour distance between patches, the algorithm of Wexler et al. is likely to produce results similar to the one which may be seen in Figure 7 (middle image). Finally, to take another algorithm of the literature, the method of Patwardhan et al. [35] would encounter the same problems as that of [19], since they copy-and-paste pixels directly after compensating for a locally estimated motion. More examples of videos containing dynamic textures can be seen at http://www.telecom-paristech.fr/~gousseau/video_inpainting.

Our algorithm's capacity to deal with moving background is illustrated by Figure 8. We do this in the same unified framework used for all other examples in this paper, whereas a specific algorithm is needed by Granados et al. [19] to achieve this. Thus, we see that the same core algorithm (iterative ANN search and reconstruction) can be used in order to deal with a series of inpainting tasks and situations. Furthermore, we note that no foreground/background segmentation was needed for our algorithm to produce satisfactory results. Finally, we note that such situations are not managed using the algorithm of [39]. Again, examples containing moving backgrounds can be viewed at the referenced website.
The generic nature of the proposed approach represents a significant advantage over previous methods, and allows us to deal with many different situations without having to resort to manual intervention or the creation of specific algorithms.

4.2. Execution times. One of the goals of our work was to accelerate the video inpainting task, since this was previously the greatest barrier to development in this area. Therefore, we compare our execution times to those of [39] and [20] in Table 1.

ANN execution times for all occluded pixels at full resolution:

| Algorithm | Beach Umbrella (264 × 68 × 98) | Crossing Ladies (170 × 80 × 87) | Jumping Girl (300 × 100 × 239) | Duo (960 × 704 × 154) | Museum (1120 × 754 × 200) |
|---|---|---|---|---|---|
| Wexler (kd-trees) | 985 s | 942 s | 7877 s | - | - |
| Ours (3D PatchMatch) | 50 s | 28 s | 155 s | 29 min | 44 min |

Total execution time:

| Algorithm | Beach Umbrella | Crossing Ladies | Jumping Girl | Duo | Museum |
|---|---|---|---|---|---|
| Granados | 11 hours | - | - | - | 90.0 hours |
| Ours | 24 min | 15 min | 40 min | 5.35 hours | 6.2 hours |
| Ours w/o texture | 14 min | 12 min | 35 min | 4.07 hours | 4.0 hours |

Table 1. Partial and total execution times on different examples. The partial inpainting times represent the time taken for the ANN search for all occluded patches at the full resolution. Note that for the "Museum" example, Granados's algorithm is parallelised over the different occluded objects and the background, whereas ours is not.

Comparisons with Wexler's algorithm should be obtained carefully, since several crucial parameters are not specified. In particular, the ANN search scheme used by Wexler et al. requires a parameter, $\varepsilon$, which determines the accuracy of the ANNs. More formally, if $W_p$ is a source patch, $W_q$ is the exact NN of $W_p$ and $W_r$ is an ANN of $W_p$, then the work of [4] guarantees that $d(W_p, W_r) \leq (1 + \varepsilon)\, d(W_p, W_q)$. This parameter has a large influence on the computational times.
For our comparisons, we set this parameter to 10, which produced ANNs with a similar average error per patch component as our spatio-temporal PatchMatch. Another parameter which is left unspecified by Wexler et al. is the number of iterations of ANN search/reconstruction steps per pyramid level. This has a very large influence on the total execution time. Therefore, instead of comparing total execution times we simply compare the ANN search times, as this step represents the majority of the computational load. We obtain a speedup of 20-50 times over the method of [4]. We also include our total execution times, to give a general idea of the time taken with respect to the video size. These results show a speedup of around an order of magnitude with respect to the semi-automatic methods of Granados et al. [20]. In Table 1, we have also added our execution times without the use of texture features to illustrate the additional computational load which this adds.

These computation times show that our algorithm is clearly faster than the approaches of [39] and [20]. This advantage is significant because not only is the algorithm more practical to use, but it is also much easier to experiment and therefore make progress in the domain of video inpainting.

5. Further work. Several points could be improved upon in the present paper. Firstly, the case where a moving object is occluded for long periods remains very difficult and is not dealt with in a unified manner here. The one solution to this problem (temporal subsampling) does not perform well when complex motion is present. Therefore, other solutions could be interesting to explore. Secondly, we have observed that using a multi-resolution texture feature pyramid produces very interesting results.
Therefore, we could perhaps enrich the patch space with other features, such as spatio-temporal gradients. Finally, it is acknowledged that videos of high resolutions still take quite a long time to process (up to several hours). Further acceleration could be achieved by dimensionality-reducing transformations of the patch space.

6. Conclusion. In this paper, we have proposed a non-local patch-based approach to video inpainting which produces good quality results in a wide range of situations, and on high definition videos, in a completely automatic manner. Our extension of the PatchMatch ANN search scheme to the spatio-temporal case reduces the time complexity of the algorithm, so that high definition videos can be processed. We have also introduced a texture feature pyramid which ensures that dynamic video textures are correctly inpainted. The case of mobile cameras and moving background is dealt with by using a global, affine estimation of the dominant motion in each frame.

The resulting algorithm performs well in a variety of situations, and does not require any manual input or segmentation. In particular, the specific problem of inpainting textures in videos has been addressed, leading to much more realistic results than other inpainting algorithms. Video inpainting has not yet been extensively used, in large part due to prohibitive execution times and/or necessary manual input. We have directly addressed this problem in the present work. We hope that this algorithm will make video inpainting more accessible to a wider community, and help it to become a more common tool in various other domains, such as video post-production, restoration and personal video enhancement.

7. Acknowledgments.
The authors would like to express their thanks to Miguel Granados for his kind help, for making his video content publicly available and for answering several questions concerning his work. The authors also thank Pablo Arias, Vicent Caselles and Gabriele Facciolo for numerous insightful discussions and for their help in understanding their inpainting method.

Appendix A. On the link between the non-local patch-based and shift map-based formulations.

In the inpainting literature, two of the main approaches which optimise a global objective functional include non-local patch-based methods [3, 39], which are closely linked to Non-Local Means denoising [10], and shift map-based formulations such as [20, 29, 36]. We will show here that the two formulations are closely linked, and in particular that the shift map formulation is a specific case of the very general formulation of Arias et al. [3, 2].

As far as possible we keep the notation introduced previously. In particular $p$ is a position in $\mathcal{H}$, $q$ is a position in $\mathcal{N}_p$ and $r$ is a position in $\tilde{\mathcal{D}}$. In its most general version, the variational formulation of Arias et al. is not based on a one-to-one shift map, but rather on a positive weight function $w : \Omega \times \Omega \to \mathbb{R}^+$ that is constrained by $\sum_r w(p, r) = 1$ to be a probability distribution for each point $p$. Inpainting is cast as the minimisation of the following energy functional (that we rewrite here in its discretised version):

$$E_{\text{arias}}(u, w) = \sum_{p \in \mathcal{H}} \left[ \sum_{r \in \tilde{\mathcal{D}}} w(p, r)\, d^2(W_p, W_r) + \gamma \sum_{r \in \tilde{\mathcal{D}}} w(p, r) \log w(p, r) \right], \tag{A.1}$$

with $\gamma$ a positive parameter. The first term is a "soft assignment" version of (2.1), while the second term is a regulariser that favours large entropy weight maps. Arias et al.
propose the following patch distance:

$$d^2(W_p, W_r) = \sum_{q \in \mathcal{N}_p} g_a(p - q)\, \varphi[u(q) - u(r + (p - q))], \tag{A.2}$$

where $g_a$ is the centred Gaussian with standard deviation $a$ (also called the intra-patch weight function), and $\varphi$ is a squared norm. This is a very general and flexible formulation. Arias et al. optimise this function using an alternate minimisation over $u$ and $w$, and derive solutions for various patch distances. Let us choose the $\ell^2$ distance for $d(W_p, W_r)$, as is the case in many inpainting formulations (and in particular the one which we use in our work). In this case, the minimisation scheme leads to the following expressions:

$$w(p, r) = \frac{1}{Z_p} \exp\left( -\frac{d^2(W_p, W_r)}{\gamma} \right), \tag{A.3}$$

$$u(p) = \sum_{q \in \mathcal{N}_p} g_a(p - q) \sum_{r \in \tilde{\mathcal{D}}} w(q, r)\, u(r + (p - q)). \tag{A.4}$$

The parameter $\gamma$ controls the selectivity of the weighting function $w$. Let us consider the case where each weight function $w(p, \cdot)$ is equal to a single Dirac centred at a single match $p + \phi(p)$. If, in addition, we consider that the intra-patch weighting is uniform, in other words $a = \infty$, the cost function $E_{\text{arias}}$ reduces to:

$$E_{\text{arias}}(u, \phi) = \sum_{p \in \mathcal{H}} \sum_{q \in \mathcal{N}_p} \| u(q) - u(q + \phi(p)) \|_2^2, \tag{A.5}$$

which is the formulation of Wexler et al. [39]. Rewriting (A.4) in the particular case just described yields the optimal inpainted image

$$u(p) = \frac{1}{|\mathcal{N}_p|} \sum_{q \in \mathcal{N}_p} u(p + \phi(q)), \quad \forall p \in \mathcal{H}, \tag{A.6}$$

as the (aggregated) average of the examples indicated by the NNs of the patches which contain $p$. Suppose that each pixel is reconstructed using the NN of the patch centred on it:

$$u(p) = u(p + \phi(p)), \quad \forall p \in \mathcal{H}, \tag{A.7}$$

as is the case in the shift map-based formulations. Then the functional becomes (see footnote 1):

$$E_{\text{arias}}(u, \phi) = \sum_{p \in \mathcal{H}} \sum_{q \in \mathcal{N}_p} \| u(q + \phi(q)) - u(q + \phi(p)) \|_2^2, \tag{A.8}$$

which effectively depends only upon $\phi$.
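To make the role of $\gamma$ in Equation A.3 concrete, the following sketch evaluates the weights for one pixel at two values of $\gamma$. It is only an illustration under stated assumptions: the function name `soft_weights` and the three squared distances are hypothetical, and subtracting the smallest distance before exponentiating is a numerical convenience added here that does not change the normalised weights.

```python
import math


def soft_weights(sq_distances, gamma):
    """Weights w(p, r) of Eq. A.3 for a single pixel p: exp(-d^2 / gamma) / Z_p."""
    if gamma <= 0:
        raise ValueError("gamma must be strictly positive")
    d_min = min(sq_distances)
    # Shifting by d_min cancels in the normalisation and avoids a zero sum Z_p.
    unnormalised = [math.exp(-(d - d_min) / gamma) for d in sq_distances]
    z_p = sum(unnormalised)
    return [w / z_p for w in unnormalised]


# Hypothetical squared patch distances from one patch to three candidates.
distances = [4.0, 1.0, 9.0]

nearly_uniform = soft_weights(distances, gamma=100.0)  # large gamma: high entropy
nearly_dirac = soft_weights(distances, gamma=0.01)     # small gamma: mass on the best match
```

With a large $\gamma$ the three weights are close to 1/3, whereas with a small $\gamma$ almost all of the mass sits on the candidate with the smallest distance, which is the single-Dirac situation assumed in the reduction to Equation A.5.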
If we look at the shift map formulation proposed by Pritch et al., we find the following cost function over the shift map only:

$$E_{\text{pritch}}(\phi) = \sum_{p \in \mathcal{H}} \sum_{q \in \mathcal{N}_p} \left( \| u(q) - u(q + \phi(p)) \|_2^2 + \| \nabla u(q) - \nabla u(q + \phi(p)) \|_2^2 \right). \tag{A.9}$$

Let us consider the first part, concerning the image colour values. Since we have $u(p) = u(p + \phi(p))$, we obtain again:

$$E_{\text{pritch}}(\phi) = \sum_{p \in \mathcal{H}} \sum_{q \in \mathcal{N}_p} \| u(q + \phi(q)) - u(q + \phi(p)) \|_2^2. \tag{A.10}$$

Thus, the shift map cost function may be seen as a special case of the non-local patch-based formulation of Arias et al. under the following conditions:
• $d^2(W_p, W_r) = \sum_{q \in \mathcal{N}_p} \| u(q) - u(r + (p - q)) \|_2^2$;
• $\gamma = 0$;
• the intra-patch weighting function $g_a$ is uniform;
• $u(p) = u(p + \phi(p))$, that is, $u(p)$ is reconstructed using its correspondent according to a single shift map.

Arias et al. [2] have shown the existence of optimal correspondence maps for a relaxed version of Wexler's energy (Equation A.1), and also that the minima of Equation A.1 converge to the minima of Equation A.5 as $\gamma \to 0$. Such results also highlight the link between different inpainting energies. However, one should keep in mind that the two formulations which we have considered (that of Arias et al. and that of Pritch et al.) are certainly not equivalent, for reasons such as the difference in optimisation methodology and the presence of a gradient term in the formulation of Pritch et al. Furthermore, the choice of the reconstruction $u(p) = u(p + \phi(p))$ was not considered by Arias et al., meaning that results may differ.

Appendix B. Comparing textured patches. In this Appendix, we look in further detail at the reasons why textures may pose a problem when comparing patches for the purposes of inpainting.
Liu and Caselles noted in [29] that the subsampling necessary for the use of multi-resolution pyramids inevitably entails a loss of detail, leading to difficulties in correctly identifying textures. In fact, we found that this difficulty may occur at all the pyramid levels in images and videos. Roughly speaking, we observed that textured patches are quite likely to be matched with smooth ones. The following simple computations quantify this phenomenon.

Footnote 1. We note that using the reconstruction of Equation A.7 poses problems on the occlusion border, but we ignore this here for the sake of simplicity and clarity.

| Patch size | 3 × 3 | 5 × 5 | 3 × 3 × 3 | 7 × 7 | 9 × 9 | 11 × 11 | 5 × 5 × 5 |
|---|---|---|---|---|---|---|---|
| Probability | $8 \times 10^{-2}$ | $10^{-2}$ | $6 \times 10^{-3}$ | $4.1 \times 10^{-4}$ | $5.5 \times 10^{-6}$ | $3 \times 10^{-7}$ | $2 \times 10^{-7}$ |

Table 2. Probability of producing a random 2D or 3D patch that is closer to a random reference patch than a constant patch with the same mean value. Values are obtained through numerical simulations averaged over ten runs for each experiment. Components of random patches are i.i.d. according to the centred normal law with a grey level variance of 25.

B.1. Comparing patches with the classical $\ell^2$ distance. The first reason concerns the patch distance. Let us consider a white noise patch, $W$, which is a vector of i.i.d. random variables $W_1 \cdots W_N$, where $N$ is the number of components in the patch (number of pixels for grey-level patches), and the distribution of all $W_i$'s is $f_W$. Let $\mu$ and $\sigma^2$ be, respectively, the average and variance of $f_W$. Let us consider another random patch $V$ following the same distribution, and the constant patch $Z$, composed of $Z_i = \mu$, $i = 1 \cdots N$. In this simple situation, we see that $E[\| W - V \|_2^2] = 2\, E[\| W - Z \|_2^2]$.
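The factor of two can be checked with a short computation, sketched here using only the assumptions already stated (the components of $W$ and $V$ are i.i.d. with mean $\mu$ and variance $\sigma^2$, and $W$ and $V$ are independent of each other). Each difference $W_i - V_i$ has zero mean, so its second moment is its variance:

$$
\begin{aligned}
E\left[\| W - V \|_2^2\right] &= \sum_{i=1}^{N} E\left[(W_i - V_i)^2\right] = \sum_{i=1}^{N} \left( \operatorname{Var}(W_i) + \operatorname{Var}(V_i) \right) = 2 N \sigma^2, \\
E\left[\| W - Z \|_2^2\right] &= \sum_{i=1}^{N} E\left[(W_i - \mu)^2\right] = \sum_{i=1}^{N} \operatorname{Var}(W_i) = N \sigma^2.
\end{aligned}
$$

Dividing the first line by the second gives the ratio of 2, independently of the patch size $N$ and of the particular distribution $f_W$, provided its variance is finite.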
Therefore, on average, the sum-of-squared-differences (SSD) between two patches of the same distribution is twice as great as the SSD between a randomly distributed patch and a constant patch of value $\mu$.

The previous remark is only valid on average between three patches $W$, $V$ and $Z$. In reality, we have many random patches $V$ to choose from, and it is sufficient that one of these be better than $Z$ for the least patch distance to identify a "textured" patch. Therefore, a more interesting question is the following: given a white noise patch $W$, what is the probability that the patch $V$ will be better than the constant patch $Z$? This is slightly more involved, and we shall limit ourselves to the case where $W$ and $V$ consist of i.i.d. pixels with a normal distribution. The SSD patch distance between $W$ and $V$ follows a chi-square distribution $\chi^2(0, 2\sigma^2)$, and that between $W$ and $Z$ follows $\chi^2(0, \sigma^2)$. With this, we may numerically compute the probability of a random patch being better than a constant one. Since the chi-squared law is tabulated, it is much the same thing to use numerical simulations. In Table 2, we show the corresponding numerical values for both 2D (image) and 3D (video) patches. It may be seen that for a patch of size 9 × 9, there is very little chance of finding a better patch than the constant patch. In the video case, we see that in the case of 5 × 5 × 5 patches, there is a $2 \times 10^{-7}$ probability of creating a better patch randomly. This corresponds to needing an area of 170 × 170 × 170 pixels in a video in order to produce on average one better random patch. While this is possible, especially in higher-definition videos, it remains unlikely for many situations.

The question naturally arises of why the problem of comparing textures has not been more discussed in the patch-based inpainting literature.
Indeed, to the best of our knowledge, only Bugeau et al. [11] and Liu and Caselles [29] have clearly identified this problem in the case of image inpainting. This is due to the fact that most other inpainting algorithms restrict the ANN search space to a local neighbourhood around the occlusion. Unfortunately, this restriction principle does not hold in video inpainting since the information can be found anywhere in the video volume, in particular when a complex movement must be reconstructed.

Figure 11. A toy example of the utility of the texture features. With them, we are able to distinguish between white noise (right) and the constant area (left), and thus recreate the noise. Panels: inpainting without texture features; inpainting without texture features, with occlusion border outlined; inpainting with texture features; inpainting with texture features, with occlusion border outlined.

B.2. ANN search with PatchMatch. We have indicated that the $\ell^2$ grey-level/colour patch distance is problematic for inpainting in the presence of textures. Additionally, this problem is exacerbated by the use of PatchMatch. Indeed, the values of $\phi$ which lead to textures are not piecewise constant, and are therefore not propagated through $\phi$ during the PatchMatch algorithm. On the other hand, smooth patches represent on average a good compromise as ANNs, and the shifts which lead to them are piecewise constant and therefore well propagated throughout $\phi$. Another problem which may lead to smooth patches being used is the weighted average reconstruction scheme. This can lead to blurry results, which in turn means that smooth patches are identified. One solution to these problems is the use of our texture feature pyramid (§3.3).
This pyramid is inpainted simultaneously with the colour video pyramid, and thus helps to guide the algorithm in the choice of which patches to use for inpainting. Figure 11 shows an interesting situation: we wish to inpaint a region which contains white noise. This toy example serves as an illustration of the appeal of our texture features. Indeed, it is quite clear that without them, there is no chance of inpainting the occlusion in a manner which would seem "natural" to a human observer, whereas with them it is possible, in effect, to "inpaint noise".

REFERENCES

[1] http://www.photoshopessentials.com/photo-editing/content-aware-fill-cs5/.
[2] Pablo Arias, Vicent Caselles, and Gabriele Facciolo, Analysis of a variational framework for exemplar-based inpainting, Multiscale Modeling & Simulation, 10 (2012), pp. 473–514.
[3] Pablo Arias, Gabriele Facciolo, Vicent Caselles, and Guillermo Sapiro, A variational framework for exemplar-based image inpainting, Int. J. Comput. Vision, 93 (2011), pp. 319–347.
[4] Sunil Arya and David Mount, Approximate nearest neighbor queries in fixed dimensions, in ACM-SIAM Symp. Discrete Algorithms, 1993.
[5] Connelly Barnes, Eli Shechtman, Adam Finkelstein, and Dan Goldman, PatchMatch: a randomized correspondence algorithm for structural image editing, ACM Trans. on Graphics (Proc. SIGGRAPH), 28 (2009).
[6] Marcelo Bertalmio, Vicent Caselles, Simon Masnou, and Guillermo Sapiro, Encyclopedia of Computer Vision, Springer, 2011, ch. Inpainting.
[7] Marcelo Bertalmio, Guillermo Sapiro, Vicent Caselles, and Coloma Ballester, Image inpainting, in ACM SIGGRAPH, 2000.
[8] Raphaël Bornard, Emmanuelle Lecan, Louis Laborelli, and Jean Chenot, Missing data correction in still images and image sequences, in ACM Multimedia, 2002.
[9] Yuri Boykov, Olga Veksler, and Ramin Zabih, Fast approximate energy minimization via graph cuts, IEEE Trans. Pattern Anal. Machine Intell., 23 (2001), pp. 1222–1239.
[10] Antoni Buades, Bartomeu Coll, and Jean-Michel Morel, A non-local algorithm for image denoising, in Int. Conf. Computer Vision and Pattern Recognition (CVPR), 2005.
[11] Aurélie Bugeau, Marcelo Bertalmio, Vicent Caselles, and Guillermo Sapiro, A comprehensive framework for image inpainting, Image Processing, IEEE Transactions on, 19 (2010), pp. 2634–2645.
[12] Sen-Ching Cheung, Jian Zhao, and M. Vijay Venkatesh, Efficient object-based video inpainting, in Int. Conf. Image Processing (ICIP), 2006.
[13] Antonio Criminisi, Patrick Pérez, and Kentaro Toyama, Object removal by exemplar-based inpainting, in Int. Conf. Computer Vision and Pattern Recognition (CVPR), 2003.
[14] Soheil Darabi, Eli Shechtman, Connelly Barnes, Dan Goldman, and Pradeep Sen, Image melding: combining inconsistent images using patch-based synthesis, ACM Trans. Graph., 31 (2012).
[15] Laurent Demanet, Bing Song, and Tony Chan, Image inpainting by correspondence maps: a deterministic approach, tech. report, UCLA CAM, 2003.
[16] Gianfranco Doretto, Alessandro Chiuso, Ying Nian Wu, and Stefano Soatto, Dynamic textures, Int. J. Comput. Vision (IJCV), 51 (2003), pp. 91–109.
[17] Iddo Drori, Daniel C. Or, and Hezy Yeshurun, Fragment-based image completion, ACM Trans. Graph., 22 (2003), pp. 303–312.
[18] Alexei Efros and Thomas Leung, Texture synthesis by non-parametric sampling, in Int. Conf. Computer Vision (ICCV), 1999.
[19] Miguel Granados, KwangIn Kim, James Tompkin, Jan Kautz, and Christian Theobalt, Background inpainting for videos with dynamic objects and a free-moving camera, in Europ. Conf. Computer Vision (ECCV), 2012.
[20] M. Granados, J. Tompkin, K. Kim, O. Grau, J. Kautz, and C. Theobalt, How not to be seen: Object removal from videos of crowded scenes, Comp. Graph. Forum (EUROGRAPHICS), (2012).
[21] Kaiming He and Jian Sun, Computing nearest-neighbor fields via propagation-assisted kd-trees, in Int. Conf. Computer Vision and Pattern Recognition (CVPR), 2012.
[22] Kaiming He and Jian Sun, Statistics of patch offsets for image completion, in Europ. Conf. Computer Vision (ECCV), 2012.
[23] Jan Herling and Wolfgang Broll, PixMix: A real-time approach to high-quality diminished reality, in Int. Symp. Mixed and Augmented Reality (ISMAR), 2012.
[24] Jiaya Jia, Yu-Wing Tai, Tai-Pang Wu, and Chi-Keung Tang, Video repairing under variable illumination using cyclic motions, IEEE Trans. Pattern Anal. and Machine Intell., 28 (2006), pp. 832–839.
[25] Nikos Komodakis and George Tziritas, Image completion using efficient belief propagation via priority scheduling and dynamic pruning, IEEE Trans. Image Processing, 16 (2007), pp. 2649–2661.
[26] Simon Korman and Shai Avidan, Coherency sensitive hashing, in Int. Conf. Computer Vision (ICCV), 2011.
[27] Vivek Kwatra, Arno Schödl, Irfan Essa, Greg Turk, and Aaron Bobick, Graphcut textures: image and video synthesis using graph cuts, ACM Trans. Graph., 22 (2003), pp. 277–286.
[28] Chih-Hung Ling, Chia-Wen Lin, Chih-Wen Su, Yong-Sheng Chen, and Hong-Yuan M. Liao, Virtual contour guided video object inpainting using posture mapping and retrieval, IEEE Trans. Multimedia, 13 (2011), pp. 292–302.
[29] Yunqiang Liu and Vicent Caselles, Exemplar-based image inpainting using multiscale graph cuts, IEEE Trans. Image Proc., 22 (2013), pp. 1699–1711.
[30] Simon Masnou and Jean-Michel Morel, Level lines based disocclusion, in Int. Conf. Image Processing (ICIP), 1998.
[31] Alasdair Newson, Andrés Almansa, Matthieu Fradet, Yann Gousseau, and Patrick Pérez, Towards fast, generic video inpainting, in Eur. Conf. Visual Media Production (CVMP), 2013.
[32] Jean-Marc Odobez and Patrick Bouthemy, Robust multiresolution estimation of parametric motion models, J. Vis. Comm. and Image Representation, 6 (1995), pp. 348–365.
[33] Igor Olonetsky and Shai Avidan, TreeCANN - k-d tree coherence approximate nearest neighbor algorithm, in Eur. Conf. Computer Vision (ECCV), 2012.
[34] Kedar Patwardhan, Guillermo Sapiro, and Marcelo Bertalmio, Video inpainting of occluding and occluded objects, in Int. Conf. Image Processing (ICIP), 2005.
[35] Kedar Patwardhan, Guillermo Sapiro, and Marcelo Bertalmio, Video inpainting under constrained camera motion, IEEE Trans. Image Processing, 16 (2007), pp. 545–553.
[36] Yael Pritch, Eitam Kav-Venaki, and Shmuel Peleg, Shift-map image editing, in Int. Conf. Computer Vision (ICCV), 2009.
[37] Arno Schödl, Richard Szeliski, David H. Salesin, and Irfan Essa, Video textures, in ACM SIGGRAPH, 2000.
[38] Yonatan Wexler, Eli Shechtman, and Michal Irani, Space-time video completion, in Int. Conf. Computer Vision and Pattern Recognition (CVPR), 2004.
[39] Yonatan Wexler, Eli Shechtman, and Michal Irani, Space-time completion of video, IEEE Trans. Pattern Anal. Machine Intell., 29 (2007), pp. 463–476.
