Enhancing Robustness of Machine Learning Systems via Data Transformations

We propose the use of data transformations as a defense against evasion attacks on ML classifiers. We present and investigate strategies for incorporating a variety of data transformations including dimensionality reduction via Principal Component Analysis and data 'anti-whitening' to enhance the resilience of machine learning, targeting both the classification and the training phase. We empirically evaluate and demonstrate the feasibility of linear transformations of data as a defense mechanism against evasion attacks using multiple real-world datasets. Our key findings are that the defense is (i) effective against the best known evasion attacks from the literature, resulting in a two-fold increase in the resources required by a white-box adversary with knowledge of the defense for a successful attack, (ii) applicable across a range of ML classifiers, including Support Vector Machines and Deep Neural Networks, and (iii) generalizable to multiple application domains, including image classification and human activity classification.

💡 Research Summary

This paper presents a novel defense mechanism against evasion attacks in machine learning (ML) systems by strategically applying linear data transformations. Evasion attacks, where adversaries craft subtle perturbations to test inputs to cause misclassification, pose a significant threat to deployed ML models. The authors argue that existing defenses are often limited to specific classifier types or attack methods.

The core proposal integrates a linear transformation step into both the training and inference pipelines. The defense operates in three steps. First, a transformation matrix (B) is selected based on properties of the training data, with Principal Component Analysis (PCA) for dimensionality reduction as a primary example. Second, a new classifier is trained on the transformed data (BX) rather than on the original data (X). Third, the deployed classifier applies the same transformation B to every input before making a prediction.
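The three steps can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the helper names (`fit_pca_projection`, `apply_transform`, `demo`), the mean-centering before projection, the synthetic two-class data, and the nearest-centroid stand-in classifier are all hypothetical choices made for the example.

```python
import numpy as np


def fit_pca_projection(X, k):
    """Return (mean, B): B is a k x d matrix whose rows are the top-k principal directions."""
    mean = X.mean(axis=0)
    # SVD of the centered data; rows of Vt are principal directions, sorted by variance.
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:k]


def apply_transform(X, mean, B):
    """Project the rows of X: each sample x becomes B (x - mean)."""
    return (X - mean) @ B.T


class NearestCentroid:
    """Stand-in classifier (an assumption); an SVM or DNN could take its place."""

    def fit(self, Z, y):
        self.classes_ = np.unique(y)
        self.centroids_ = np.stack([Z[y == c].mean(axis=0) for c in self.classes_])
        return self

    def predict(self, Z):
        # Squared distance from every sample to every class centroid.
        d2 = ((Z[:, None, :] - self.centroids_[None, :, :]) ** 2).sum(axis=2)
        return self.classes_[d2.argmin(axis=1)]


def demo(seed=0, n=400, d=20, k=3):
    """Train on projected synthetic data and return (test accuracy, B)."""
    rng = np.random.default_rng(seed)
    y = rng.integers(0, 2, size=n)
    X = rng.normal(size=(n, d))
    X[:, 0] += 6.0 * y  # the two classes differ along the first coordinate only
    X_train, y_train, X_test, y_test = X[:300], y[:300], X[300:], y[300:]

    # Step 1: choose B from the training data.
    mean, B = fit_pca_projection(X_train, k)
    # Step 2: train the classifier on the transformed data.
    clf = NearestCentroid().fit(apply_transform(X_train, mean, B), y_train)
    # Step 3: apply the same B to every input at inference time.
    y_pred = clf.predict(apply_transform(X_test, mean, B))
    return float((y_pred == y_test).mean()), B
```

Calling `demo()` returns the held-out accuracy together with the projection matrix. The number of retained components `k` is the knob that would trade accuracy against robustness in a setup like this one.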

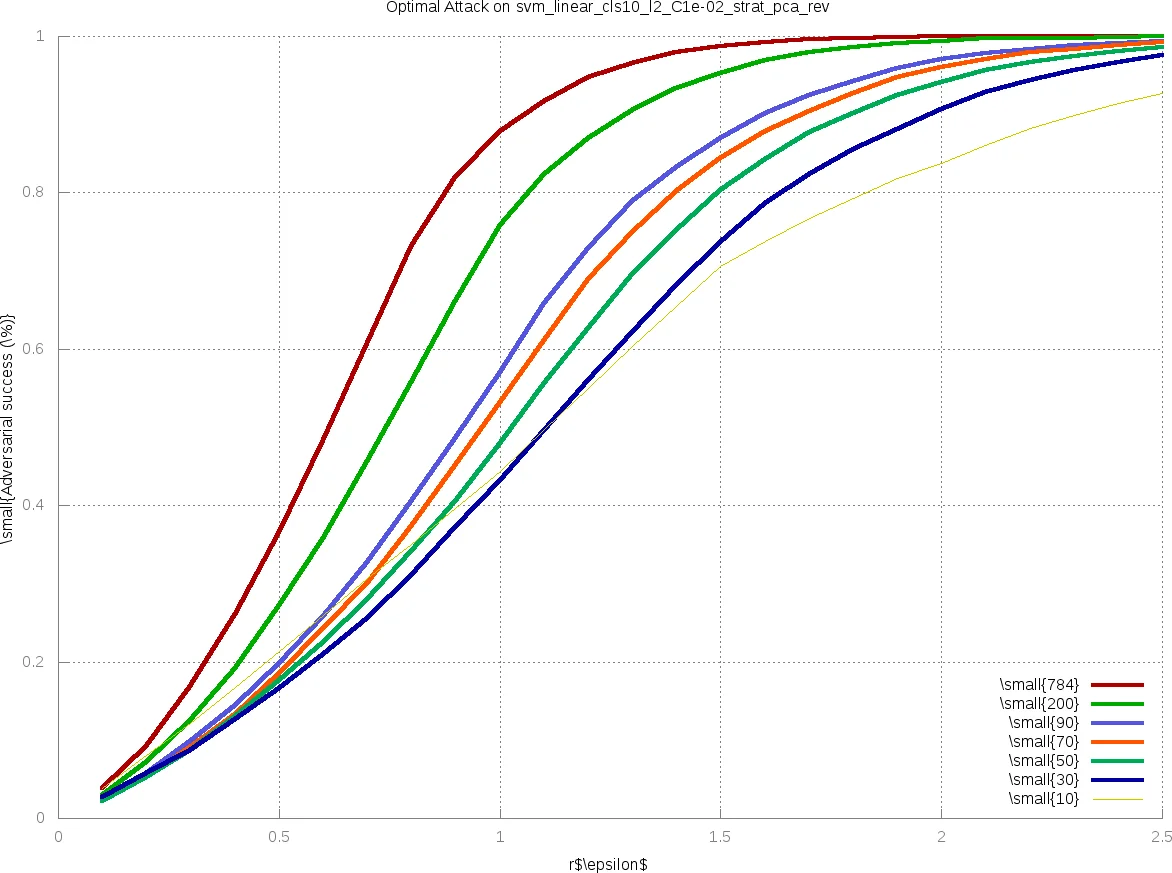

The authors conduct an empirical evaluation to validate the defense's effectiveness and generality. Experiments are performed on two real-world datasets: the MNIST image dataset and the UCI Human Activity Recognition (HAR) dataset. The defense is tested against multiple state-of-the-art evasion attacks, including an optimal attack on Linear Support Vector Machines (SVMs), the Fast Gradient Sign Method (FGSM) and a customized Fast Gradient (FG) attack for Deep Neural Networks (DNNs), and the optimization-based Carlini & Wagner (C&W) attack. Critically, the evaluation considers adversaries with varying levels of knowledge: a white-box adversary with full knowledge of the defense, a classifier mismatch setting where the adversary knows only the original undefended model, and an architecture mismatch setting simulating a more practical black-box scenario.
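To make the white-box setting concrete for the linear case, the sketch below folds the transformation into a linear classifier and then attacks the combined model. It illustrates the standard closed-form minimal L2 perturbation for a linear decision boundary and a single FGSM step. It is not the paper's attack code, and the function names, the mean-centering, and the `overshoot` parameter are assumptions made for the example.

```python
import numpy as np


def effective_linear_model(w, b, B, mean):
    """Fold B into the classifier: f(x) = w . B(x - mean) + b = w_eff . x + b_eff."""
    w_eff = B.T @ w
    b_eff = b - w @ (B @ mean)
    return w_eff, b_eff


def min_l2_evasion(x, w_eff, b_eff, overshoot=1e-3):
    """Smallest L2 perturbation that moves x just across the hyperplane w_eff . x + b_eff = 0.

    Assumes w_eff is not the zero vector.
    """
    score = w_eff @ x + b_eff
    # Orthogonal projection of x onto the decision boundary.
    delta = -(score / (w_eff @ w_eff)) * w_eff
    # A small overshoot pushes the point past the boundary instead of onto it.
    return x + (1.0 + overshoot) * delta


def fgsm_linear(x, y, w_eff, eps):
    """One FGSM step for a label y in {-1, +1}.

    For hinge or logistic loss on a linear model, the loss gradient
    with respect to x points along -y * w_eff.
    """
    return x + eps * np.sign(-y * w_eff)
```

Because the adversary here differentiates through B, perturbation components orthogonal to the rows of B do not change the score. The minimal perturbation therefore has norm |f(x)| / ||Bᵀw||, which is the quantity a defender would want the transformation to increase.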

Key findings indicate that the proposed defense increases robustness across all settings. Even against a white-box adversary, the defense raises the cost of attack substantially. Results show up to a 5x increase in the perturbation magnitude (e.g., measured in L2 norm) required for a successful attack and, at fixed perturbation budgets, a reduction in adversarial success rates by factors ranging from 2x to 50x. This security improvement comes at a modest cost, typically a 0.5-2% drop in classification accuracy on benign samples. Furthermore, the defense proves to be general: it is effective when applied to different classifier families (SVMs and DNNs) and across disparate application domains (image classification and time-series activity classification).

The paper concludes that while the data transformation defense does not completely eliminate the threat of evasion attacks, it provides a classifier-agnostic and dataset-agnostic tool that measurably increases the adversary's effort. Its tunable nature allows system designers to navigate the utility-security trade-off. The authors suggest that their method can be combined with other techniques, such as adversarial training, to build more robust ML systems for deployment in adversarial environments.
