The Partially Observable Hidden Markov Model and its Application to Keystroke Dynamics

The partially observable hidden Markov model is an extension of the hidden Markov model in which the hidden state is conditioned on an independent Markov chain. This structure is motivated by the presence of discrete metadata, such as an event type, …
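The structure described above can be illustrated with a minimal generative sketch. It is not the authors' implementation: the function name `sample_pohmm`, the parameter tables, the uniform initial distributions, and the Gaussian emission are all assumptions made for illustration, since the abstract only specifies that the hidden state is conditioned on an independent, observed Markov chain (here, the event type).

```python
import random

# Hypothetical parameters: 2 event types (e.g. two key categories), 2 hidden states.
# EVENT_TRANS[e][e2]: probability of event type e2 following e (the independent chain).
EVENT_TRANS = [[0.8, 0.2],
               [0.6, 0.4]]
STICKY = [[0.9, 0.1], [0.1, 0.9]]
LOOSE = [[0.5, 0.5], [0.5, 0.5]]
# STATE_TRANS[e][e2][z][z2]: hidden-state transition, conditioned on the event pair.
STATE_TRANS = [[STICKY, STICKY],
               [LOOSE, LOOSE]]
# EMIT_MEAN[e][z]: mean of an assumed Gaussian emission (e.g. a time interval).
EMIT_MEAN = [[0.1, 0.3],
             [0.2, 0.6]]


def sample_pohmm(n_steps, event_trans, state_trans, emit_mean, sigma=0.05, seed=0):
    """Sample (events, states, observations) from the sketched model."""
    rng = random.Random(seed)
    n_events = len(event_trans)
    n_states = len(emit_mean[0])
    # Assumed uniform initial distributions over event types and hidden states.
    e = rng.randrange(n_events)
    z = rng.randrange(n_states)
    events, states, obs = [], [], []
    for t in range(n_steps):
        if t > 0:
            # The event chain advances on its own; it never looks at the hidden state.
            e_next = rng.choices(range(n_events), weights=event_trans[e])[0]
            # The hidden-state transition depends on the previous and next event types.
            z = rng.choices(range(n_states), weights=state_trans[e][e_next][z])[0]
            e = e_next
        events.append(e)
        states.append(z)
        # The emission depends on both the hidden state and the observed event type.
        obs.append(rng.gauss(emit_mean[e][z], sigma))
    return events, states, obs


events, states, obs = sample_pohmm(10, EVENT_TRANS, STATE_TRANS, EMIT_MEAN, seed=1)
```

In this sketch the event sequence is fully observed while `states` stays hidden. Fixing every event to a single type reduces the model to an ordinary hidden Markov model.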

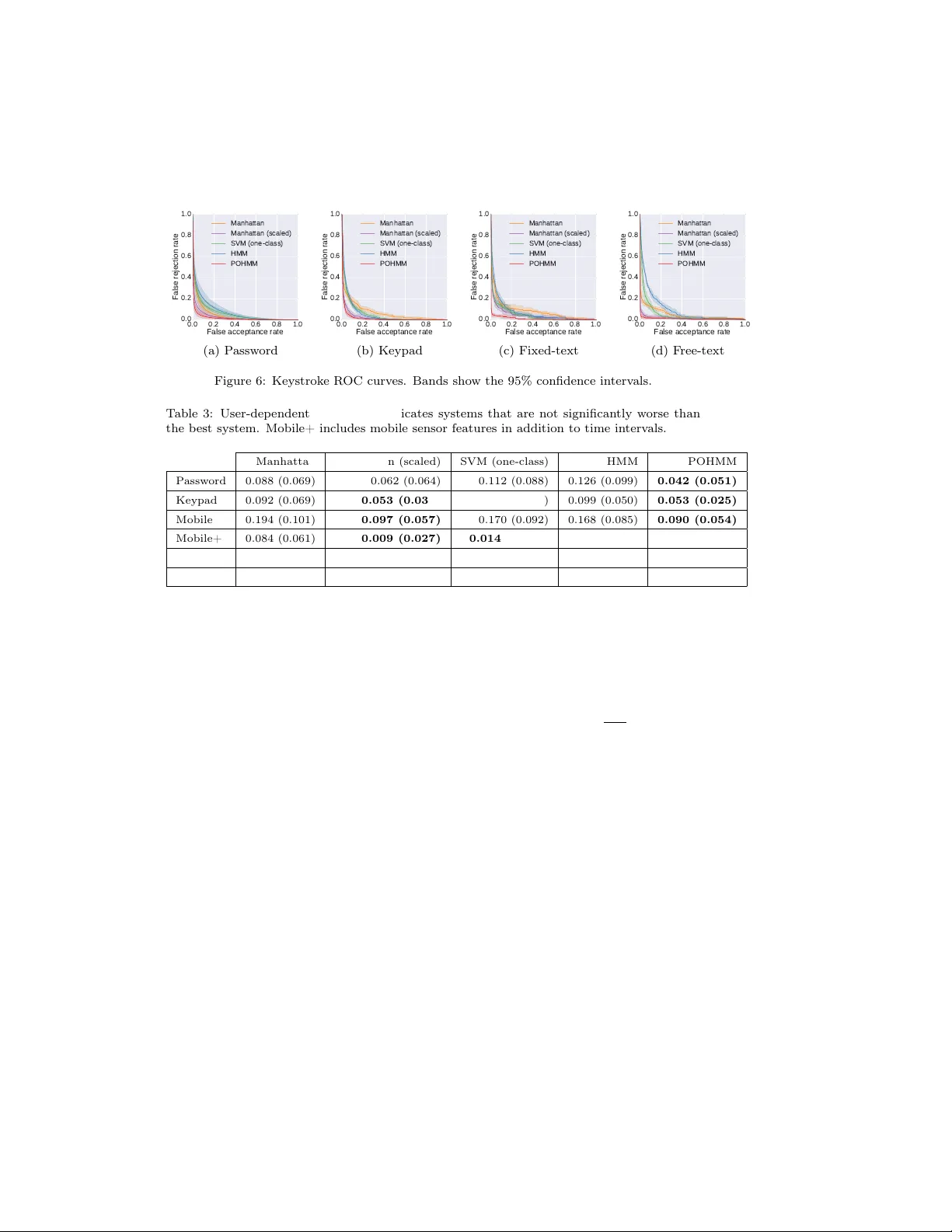

Authors: John V. Monaco, Charles C. Tappert