Neuromorphic Silicon Photonic Networks

Photonic systems for high-performance information processing have attracted renewed interest. Neuromorphic silicon photonics has the potential to integrate processing functions that vastly exceed the capabilities of electronics. We report first observations of a recurrent silicon photonic neural network, in which connections are configured by microring weight banks. A mathematical isomorphism between the silicon photonic circuit and a continuous neural network model is demonstrated through dynamical bifurcation analysis. Exploiting this isomorphism, a simulated 24-node silicon photonic neural network is programmed using a “neural compiler” to solve a differential system emulation task. A 294-fold acceleration against a conventional benchmark is predicted. We also propose and derive power consumption analysis for modulator-class neurons that, as opposed to laser-class neurons, are compatible with silicon photonic platforms. At increased scale, neuromorphic silicon photonics could access new regimes of ultrafast information processing for radio, control, and scientific computing.

💡 Research Summary

The paper presents a pioneering demonstration of a neuromorphic silicon photonic processor built around the broadcast‑and‑weight architecture. Each neuron emits a wavelength‑division‑multiplexed (WDM) optical carrier that is broadcast across the chip. Reconfigurable microring resonator (MRR) weight banks impose continuous‑valued weights on the incoming WDM signals, after which balanced photodetectors convert the weighted optical power into electrical currents. These currents drive Mach‑Zehnder modulators (MZMs) that both provide the nonlinear activation function (a sinusoidal electro‑optic transfer) and regenerate the optical carriers, thus closing the recurrent loop.
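
The signal path described above (weight bank, balanced detection, modulator) can be sketched numerically. This is a minimal illustration, not the authors' device model: the function names, the normalized units, the quadrature bias point, and the `responsivity` and `gain` parameters are all assumptions made for the example.

```python
import math


def mzm_transmission(drive_voltage, v_pi=1.0, bias_phase=math.pi / 2):
    """Normalized MZM intensity transmission in [0, 1].

    Assumes an ideal modulator biased at quadrature (bias_phase = pi/2),
    so zero drive gives 50% transmission. v_pi is a hypothetical
    normalized half-wave voltage.
    """
    return 0.5 * (1.0 - math.cos(math.pi * drive_voltage / v_pi + bias_phase))


def broadcast_and_weight_step(channel_powers, weight_matrix,
                              responsivity=1.0, gain=1.0):
    """One pass through the weight banks, detectors, and modulators.

    channel_powers: optical power on each WDM channel (one per neuron).
    weight_matrix:  one row per receiving neuron; entries in [-1, 1],
                    standing in for the MRR weight-bank settings.
    Returns the normalized optical output of each neuron's modulator.
    """
    outputs = []
    for weight_bank in weight_matrix:
        # Balanced photodetection gives a signed weighted sum of channel powers.
        photocurrent = responsivity * sum(
            w * p for w, p in zip(weight_bank, channel_powers)
        )
        # The photocurrent (scaled by an assumed electrical gain) drives the MZM.
        outputs.append(mzm_transmission(gain * photocurrent))
    return outputs
```

In a recurrent configuration the returned outputs would be fed back as the next `channel_powers`, with the low-pass electronic response setting the time constant of the loop.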

To show that the physical system is mathematically isomorphic to a continuous‑time recurrent neural network (CTRNN), the authors experimentally observe two canonical bifurcations. In a single‑node configuration, varying the self‑feedback weight (w_{11}) produces a cusp bifurcation: the measured state surface exhibits the characteristic pitchfork, bistable, and cusp curves predicted by the CTRNN model equations (Supplementary Eq. S1.1‑S1.4). In a two‑node network with asymmetric off‑diagonal weights and equal diagonal weights, a Hopf bifurcation is induced by sweeping the feedback weight (W_F). The onset of sustained oscillations, the parabolic amplitude‑versus‑weight relationship, and the linear increase of oscillation frequency near the critical point all match the analytical CTRNN predictions (Eq. S1.8, S1.10). The agreement confirms that the photonic circuit faithfully reproduces the dynamical behavior of the abstract neural model, provided that low‑pass electronic filtering suppresses unwanted delay‑induced dynamics. Minor discrepancies in the transition region are attributed to thermal cross‑talk between adjacent MRRs, which slightly perturbs the effective weights.
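
The single-node result can be illustrated with a generic CTRNN neuron, ds/dt = -s + tanh(w·s + b). This sketch is an assumption-laden stand-in: it uses a tanh activation rather than the sinusoidal modulator transfer, and it does not reproduce the paper's Supplementary Eq. S1.1‑S1.4. It only shows how the number of equilibria changes as the self-feedback weight `self_weight` and a hypothetical bias `bias` are varied.

```python
import math


def count_fixed_points(self_weight, bias, s_max=1.5, n_grid=20000):
    """Count equilibria of ds/dt = -s + tanh(self_weight * s + bias).

    Roots of f(s) = -s + tanh(self_weight * s + bias) are located by
    counting sign changes of f on a uniform grid over [-s_max, s_max].
    Since |tanh| <= 1, every equilibrium lies inside [-1, 1].
    """
    def f(s):
        return -s + math.tanh(self_weight * s + bias)

    count = 0
    previous = f(-s_max)
    for i in range(1, n_grid):
        s = -s_max + 2.0 * s_max * i / (n_grid - 1)
        current = f(s)
        if current == 0.0:
            continue  # skip exact zeros; the next nonzero sample decides
        if previous * current < 0.0:
            count += 1
        previous = current
    return count


# Weak self-feedback: a single equilibrium.
print(count_fixed_points(0.5, 0.0))
# Strong self-feedback, no bias: three equilibria (two stable, one unstable).
print(count_fixed_points(2.0, 0.0))
# Strong self-feedback, large bias: back to a single equilibrium.
print(count_fixed_points(2.0, 1.0))
```

Sweeping both parameters and marking where the count switches between one and three traces out the wedge-shaped bistable region whose tip is the cusp point.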

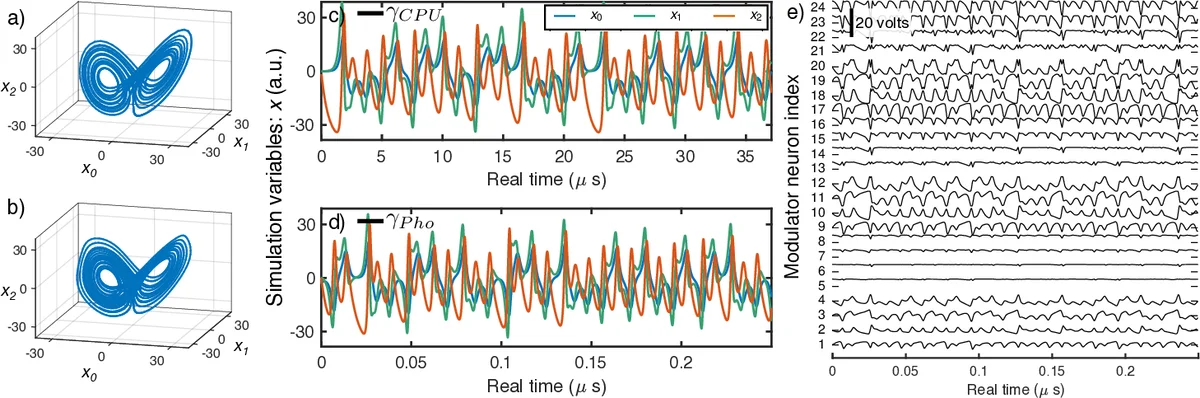

Leveraging this established isomorphism, the authors simulate a 24‑node photonic CTRNN programmed via the Neural Engineering Framework (NEF), a “neural compiler” that maps high‑level algorithms onto neural dynamics. The target task is emulating the Lorenz system, a set of three coupled ordinary differential equations with chaotic solutions. The photonic simulation reproduces the attractor’s phase‑space trajectories and time series with high fidelity when compared to a conventional CPU implementation. By introducing a time‑scaling factor (\gamma) that relates physical nanosecond‑scale dynamics to the virtual simulation time, the authors estimate a computational speed‑up of roughly 294× over the CPU baseline. This acceleration stems from the intrinsic picosecond‑scale propagation of light and the massive parallelism afforded by wavelength multiplexing.
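
For orientation, the kind of conventional reference solution that such an emulation is compared against can be sketched as a direct numerical integration of the Lorenz equations. This is not the authors' benchmark code and not an NEF implementation: the classic parameter values (σ = 10, ρ = 28, β = 8/3), the fixed-step fourth-order Runge-Kutta integrator, the step size, and the initial condition are all assumptions chosen for illustration.

```python
def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz equations (classic parameters assumed)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(rhs, state, dt):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = rhs(state)
    k2 = rhs(shifted(state, k1, 0.5 * dt))
    k3 = rhs(shifted(state, k2, 0.5 * dt))
    k4 = rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def integrate(rhs, state0, dt, n_steps):
    """Return the list of states visited, including the initial state."""
    trajectory = [tuple(state0)]
    for _ in range(n_steps):
        trajectory.append(rk4_step(rhs, trajectory[-1], dt))
    return trajectory


# 50 time units of virtual simulation time from an arbitrary starting point.
trajectory = integrate(lorenz_rhs, (1.0, 1.0, 1.0), dt=0.01, n_steps=5000)
```

Plotting the x and z components of `trajectory` against each other gives the familiar two-lobed attractor; a neural emulation is judged by how closely its decoded state variables follow the same geometry.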

A critical contribution of the work is the power‑consumption analysis for modulator‑class neurons, which are compatible with standard silicon photonic foundries. Unlike laser‑based neurons that require continuous optical gain, the MZM‑based neurons consume only the electrical drive power (tens of milliwatts per node) and the modest static bias of the photodetectors. The authors derive scaling laws showing that total power grows linearly with node count, suggesting that large‑scale photonic neural networks could remain within practical thermal budgets.

In summary, the paper demonstrates that a silicon photonic chip can implement a fully reconfigurable, recurrent neural network whose dynamics are mathematically isomorphic to those of a CTRNN. Experimental verification of cusp and Hopf bifurcations validates the physical‑to‑algorithmic mapping. Simulations of a 24‑node network emulating a chaotic ODE system predict a 294‑fold speed advantage over a conventional CPU benchmark, while the proposed modulator‑based neuron architecture promises low power consumption and CMOS‑compatible fabrication. The authors argue that, at scale, neuromorphic silicon photonics could open new regimes for ultrafast information processing in radio‑frequency front‑ends, real‑time control, and scientific computing, positioning photonic neural hardware as a viable complement, or even an alternative, to traditional electronic processors.
