Should You Derive, Or Let the Data Drive? An Optimization Framework for Hybrid First-Principles Data-Driven Modeling

Mathematical models are used extensively for diverse tasks including analysis, optimization, and decision making. Frequently, those models are principled but imperfect representations of reality. This is due either to an incomplete physical description of the underlying phenomenon (simplified governing equations, defective boundary conditions, etc.) or to numerical approximations (discretization, linearization, round-off error, etc.). Model misspecification can lead to erroneous model predictions and, in turn, to suboptimal decisions for the intended end-goal task. To mitigate this effect, one can amend the available model using limited data produced by experiments or higher-fidelity models. A large body of research has focused on estimating explicit model parameters. This work takes a different perspective and targets the construction of a correction model operator with implicit attributes. We investigate the case where the end goal is inversion and illustrate how appropriate choices of properties imposed upon the correction and the corrected operator lead to improved end-goal insights.

💡 Research Summary

The paper addresses a fundamental challenge in scientific and engineering modeling: first‑principles (physics‑based) models are often imperfect because of incomplete governing equations, simplified boundary conditions, or numerical approximations. Such misspecification leads to biased predictions and sub‑optimal decisions when the model is used for analysis, optimization, or inversion. The authors propose a systematic framework that augments a misspecified model M with a data‑driven correction operator C, forming a corrected model M + C that better serves a prescribed end‑goal (e.g., solving an inverse problem).

The framework is built around three mutually reinforcing criteria:

- Fidelity – a quantitative measure of how closely the corrected model reproduces data generated by the fully‑specified (ground‑truth) model T. The authors denote this by a functional F(M, C, D, Q) that compares model outputs (through an observation operator P) with a training set of controls Q and observations D. The overall objective includes a noise model G, typically the squared Frobenius norm of the residual.

-

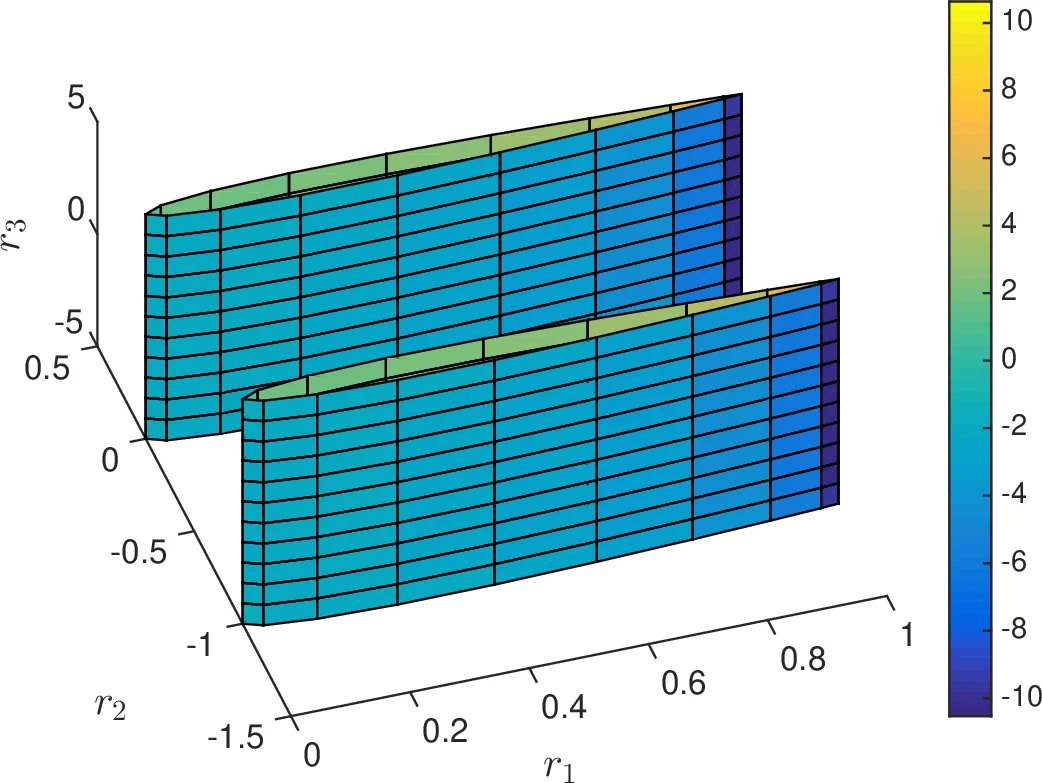

Virtue – a property that the corrected model should possess for the specific task. In the illustrative linear case the virtue is a low condition number κ(M + C), which ensures numerical stability and fast convergence of iterative solvers when the corrected model is inverted.

- Structure – a regularization that keeps the correction “small” and prevents over‑fitting. The authors enforce low rank (or, in practice, a nuclear‑norm bound) on C, reflecting the intuition that only a limited number of physical mechanisms are missing from M.

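Mathematically, the problem is posed as an optimization over the correction C. Based on the three criteria above, the linear case can be written schematically as

\[
\min_{C} \; F(M, C, D, Q) \quad \text{subject to} \quad \kappa(M + C) \le \kappa_{\max}, \qquad \|C\|_{*} \le \tau
\]

where \(\|C\|_{*}\) is the nuclear norm of C, and \(\kappa_{\max}\) and \(\tau\) are placeholder symbols for the bounds on the condition number and on the size of the correction; the exact notation used by the authors may differ.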
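To make the three criteria concrete, the following is a minimal NumPy sketch that evaluates them for a candidate correction in a toy linear setting. Everything in it is illustrative rather than taken from the paper: the function name `criteria`, the toy matrices, and in particular the residual form `P (M + C) Q - D`, which assumes the corrected operator is applied directly to the controls. The authors' fidelity functional F may be defined differently.

```python
import numpy as np

def criteria(M, C, P, Q, D):
    """Evaluate (fidelity, virtue, structure) for a candidate correction C.

    Assumed forms, for illustration only:
      fidelity  = ||P (M + C) Q - D||_F^2   (squared Frobenius residual)
      virtue    = cond(M + C)               (2-norm condition number)
      structure = ||C||_*                   (nuclear norm)
    """
    A = M + C                                   # corrected operator
    residual = P @ A @ Q - D
    fidelity = np.linalg.norm(residual, "fro") ** 2
    virtue = np.linalg.cond(A)
    structure = np.linalg.norm(C, "nuc")
    return fidelity, virtue, structure

# Toy problem: the "true" operator T differs from M by a rank-one term.
rng = np.random.default_rng(0)
n, k = 6, 20
M = np.eye(n)                                   # misspecified model
u = rng.standard_normal((n, 1))
v = rng.standard_normal((n, 1))
C_true = 0.5 * (u @ v.T)                        # hypothetical missing physics
T = M + C_true
P = np.eye(n)                                   # observe every component
Q = rng.standard_normal((n, k))                 # training controls
D = P @ T @ Q                                   # noise-free observations

f0, kappa0, s0 = criteria(M, np.zeros((n, n)), P, Q, D)   # no correction
f1, kappa1, s1 = criteria(M, C_true, P, Q, D)             # exact correction

print(f"no correction:    fidelity={f0:.3e}  cond={kappa0:.3f}  nuclear norm={s0:.3f}")
print(f"exact correction: fidelity={f1:.3e}  cond={kappa1:.3f}  nuclear norm={s1:.3f}")
```

In this toy case the exact rank-one correction drives the residual to zero, but only by spending nuclear norm and by moving the condition number away from that of the identity. That is the kind of trade-off among fidelity, virtue, and structure that the optimization is meant to balance.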
