Private Learning on Networks: Part II

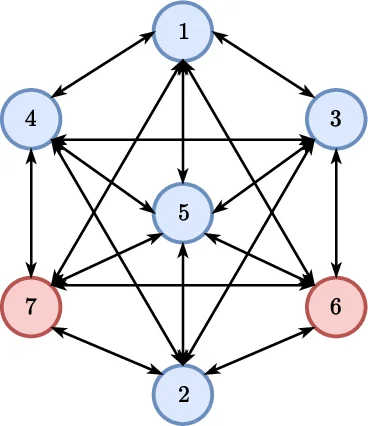

This paper considers a distributed multi-agent optimization problem, with the global objective consisting of the sum of local objective functions of the agents. The agents solve the optimization problem using local computation and communication between adjacent agents in the network. We present two randomized iterative algorithms for distributed optimization. To improve privacy, our algorithms add “structured” randomization to the information exchanged between the agents. We prove deterministic correctness (in every execution) of the proposed algorithms despite the information being perturbed by noise with non-zero mean. We prove that a special case of a proposed algorithm (called function sharing) preserves privacy of individual polynomial objective functions under a suitable connectivity condition on the network topology.

💡 Research Summary

This paper tackles the problem of distributed optimization in a multi‑agent network where each agent i possesses a local objective function f_i(x) and the global goal is to minimize the sum f(x)=∑_{i=1}^n f_i(x) over a convex compact feasible set X. Classical distributed gradient descent (DGD) achieves consensus on an optimal point by repeatedly exchanging raw state estimates among neighbors, but the unprotected exchange leaks information about each agent’s private data (e.g., local loss functions derived from proprietary datasets). To address this privacy vulnerability, the authors introduce two “structured randomization” schemes that deliberately corrupt the exchanged states with carefully designed random noise while still guaranteeing deterministic convergence to a true optimum.

The first scheme, Randomized State Sharing – Network Balanced (RSS‑NB), modifies DGD by adding a perturbation d_j^k to each agent’s current state x_j^k before transmission. The perturbation is computed as d_j^k = Σ_{i∈N_j} (s_{i,j}^k – s_{j,i}^k), where s_{i,j}^k are random vectors exchanged between neighboring agents. By construction Σ_{j=1}^n d_j^k = 0 for every iteration, ensuring that the network‑wide average perturbation is zero. Each agent then sends the same perturbed estimate w_j^k = x_j^k + α_k d_j^k to all its neighbors, receives perturbed estimates from them, performs a consensus step using a doubly‑stochastic matrix B^k, and finally executes a projected gradient descent update. The magnitude of each perturbation is bounded by a design parameter Δ (‖d_j^k‖ ≤ Δ), which directly controls the privacy‑accuracy trade‑off: larger Δ yields stronger obfuscation but slower convergence.
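The zero-sum property of the RSS‑NB perturbations can be checked with a minimal sketch. It assumes an undirected graph (symmetric neighbor lists) and uniformly distributed random vectors; the ring topology, the `scale` parameter, the placeholder states and the function name are illustrative assumptions, not details taken from the paper.

```python
import random

def rss_nb_perturbations(neighbors, dim, scale, rng):
    """Return d[j] = sum_{i in N_j} (s[i,j] - s[j,i]) for every agent j."""
    # s[(i, j)] is the random vector that agent i sends to its neighbor j.
    s = {(i, j): [rng.uniform(-scale, scale) for _ in range(dim)]
         for i in neighbors for j in neighbors[i]}
    # Each agent adds what it received and subtracts what it sent.
    return {j: [sum(s[(i, j)][c] - s[(j, i)][c] for i in neighbors[j])
                for c in range(dim)]
            for j in neighbors}

# Hypothetical 4-agent undirected ring.
neighbors = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
rng = random.Random(0)
d = rss_nb_perturbations(neighbors, dim=2, scale=0.1, rng=rng)

# Perturbed estimates w_j = x_j + alpha_k * d_j (states x_j are placeholders).
x = {j: [float(j), -float(j)] for j in neighbors}
alpha_k = 0.5
w = {j: [x[j][c] + alpha_k * d[j][c] for c in range(2)] for j in neighbors}

# Network-wide sum of perturbations, zero up to floating-point round-off.
total = [sum(d[j][c] for j in neighbors) for c in range(2)]
print(total)
```

Every vector s_{i,j}^k enters d_j^k with a plus sign and d_i^k with a minus sign, so the terms cancel pairwise. The sum of the transmitted estimates w_j^k therefore equals the sum of the true states x_j^k.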

The second scheme, Randomized State Sharing – Locally Balanced (RSS‑LB), relaxes the requirement that an agent send the same perturbed value to all neighbors. Instead, each agent independently draws a distinct noise vector d_{j,i}^k for each neighbor i, subject to the local balance condition Σ_{i∈N_j} B_{i,j}^k d_{j,i}^k = 0 and d_{j,j}^k = 0. The perturbed message w_{j,i}^k = x_j^k + α_k d_{j,i}^k is sent only to neighbor i. This design eliminates the need for coordinated generation of the s‑vectors, allowing each node to generate its own noise locally while still guaranteeing that the weighted sum of all injected noises cancels out globally. The same consensus‑gradient steps follow as in RSS‑NB.
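One simple way to satisfy the local balance condition is to draw raw noise for each neighbor and subtract its weighted mean. The sketch below illustrates this construction for a single agent j. It is an assumption made for illustration, not necessarily how the authors generate the noise, and the weights and function name are hypothetical.

```python
import random

def locally_balanced_noise(weights, dim, scale, rng):
    """weights maps neighbor i -> B_{i,j} > 0 for one agent j (self excluded).

    Returns noise[i] = d_{j,i} with sum_i B_{i,j} * d_{j,i} = 0.
    """
    raw = {i: [rng.uniform(-scale, scale) for _ in range(dim)] for i in weights}
    wsum = sum(weights.values())
    # Weighted mean of the raw draws, using the consensus weights.
    mean = [sum(weights[i] * raw[i][c] for i in weights) / wsum
            for c in range(dim)]
    # Centering removes the weighted mean, which enforces the balance condition.
    return {i: [raw[i][c] - mean[c] for c in range(dim)] for i in weights}

rng = random.Random(1)
weights_j = {1: 0.25, 2: 0.25, 3: 0.2}   # hypothetical B_{i,j} values
noise = locally_balanced_noise(weights_j, dim=2, scale=0.1, rng=rng)

# Weighted sum of the noise sent by agent j, zero up to round-off.
balance = [sum(weights_j[i] * noise[i][c] for i in weights_j) for c in range(2)]
print(balance)
```

With this construction an agent that has only one neighbor necessarily sends zero noise, so obfuscation requires at least two neighbors per agent.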

Both algorithms retain the classic step‑size schedule (α_k non‑increasing, Σ α_k = ∞, Σ α_k^2 < ∞) and are proved to converge deterministically to a point x* ∈ X* that minimizes the global objective, despite the presence of non‑zero‑mean, bounded perturbations. The convergence proof hinges on showing that the perturbed updates remain a non‑expansive mapping and that the cumulative effect of the bounded noise vanishes under the diminishing step‑size regime. The authors also derive explicit bounds linking Δ, the Lipschitz constant of the gradients, and the spectral properties of B^k to the convergence rate.
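As a worked example of the step‑size conditions, the harmonic schedule α_k = 1/k is a standard choice that satisfies all three. It is shown here only for illustration; the summary does not state which schedule the authors use.

```latex
\alpha_k = \frac{1}{k}, \qquad
\alpha_{k+1} \le \alpha_k, \qquad
\sum_{k=1}^{\infty} \frac{1}{k} = \infty, \qquad
\sum_{k=1}^{\infty} \frac{1}{k^2} = \frac{\pi^2}{6} < \infty .
```

Since the injected perturbation is scaled by α_k and bounded by Δ, its magnitude in iteration k is at most α_k Δ, which tends to zero as k grows.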

A special case of RSS‑NB, called Function Sharing (FS), is examined for polynomial local objectives. In FS the perturbation is made state‑dependent, effectively “sharing” a noisy version of each agent’s objective function with its neighbors. Under the additional graph connectivity condition that the communication network be (n‑1)‑connected, the authors prove that no adversarial agent can recover the exact coefficients of another agent’s polynomial loss, establishing a strong information‑theoretic privacy guarantee.
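The idea of masking polynomial objectives can be sketched on coefficient vectors. The sketch assumes that FS uses the same received-minus-sent pattern as RSS‑NB, applied to random polynomials instead of random state vectors; this reading, the graph, and all names below are assumptions rather than details confirmed by the summary.

```python
import random

def mask_polynomials(coeffs, neighbors, scale, rng):
    """coeffs[j] holds the coefficients of agent j's polynomial objective.

    Returns masked coefficients: own + received masks - sent masks.
    """
    n_coef = len(next(iter(coeffs.values())))
    # r[(i, j)] is the random polynomial (as coefficients) sent from i to j.
    r = {(i, j): [rng.uniform(-scale, scale) for _ in range(n_coef)]
         for i in neighbors for j in neighbors[i]}
    return {j: [coeffs[j][c]
                + sum(r[(i, j)][c] - r[(j, i)][c] for i in neighbors[j])
                for c in range(n_coef)]
            for j in neighbors}

# Hypothetical fully connected 3-agent network with quadratic objectives
# c0 + c1*x + c2*x^2.
neighbors = {0: [1, 2], 1: [0, 2], 2: [0, 1]}
coeffs = {0: [1.0, -2.0, 1.0], 1: [4.0, 4.0, 1.0], 2: [0.0, 0.0, 2.0]}
masked = mask_polynomials(coeffs, neighbors, scale=5.0, rng=random.Random(3))

sum_orig = [sum(coeffs[j][c] for j in coeffs) for c in range(3)]
sum_masked = [sum(masked[j][c] for j in masked) for c in range(3)]
print(sum_orig, sum_masked)
```

The masks cancel in the sum, so the global objective is unchanged while each individual masked polynomial differs from the original. An individual masked polynomial need not be convex even when the original is.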

Empirical validation is performed on two standard machine‑learning benchmarks. First, a three‑layer deep neural network is trained on the MNIST digit‑recognition dataset; second, a logistic regression model is trained on the Reuters news‑article classification dataset. The experiments compare vanilla DGD, RSS‑NB, RSS‑LB, and the FS variant across several Δ values. When Δ is modest (e.g., 0.05–0.1), the test accuracies of the randomized algorithms are virtually indistinguishable from DGD (≈98% for MNIST, ≈92% for Reuters). As Δ increases, accuracy degrades by only a few percentage points while the variance of the transmitted messages grows substantially, which is consistent with the intended obfuscation of the exchanged states. The FS algorithm achieves comparable accuracy while keeping the underlying polynomial loss functions hidden.

In summary, the paper contributes a novel privacy‑preserving framework for distributed optimization that leverages structured random perturbations, balanced either across the network or locally at each agent. It provides rigorous deterministic convergence guarantees despite non‑zero‑mean noise, offers an explicit privacy‑accuracy trade‑off via the Δ parameter, and establishes privacy for polynomial objectives under a suitable connectivity condition on the network topology. The work opens avenues for extending structured randomization to asynchronous settings, time‑varying topologies, and non‑polynomial loss functions, thereby broadening the applicability of privacy‑aware distributed learning in real‑world networked systems.
