A Comprehensive Survey on Fog Computing: State-of-the-art and Research Challenges

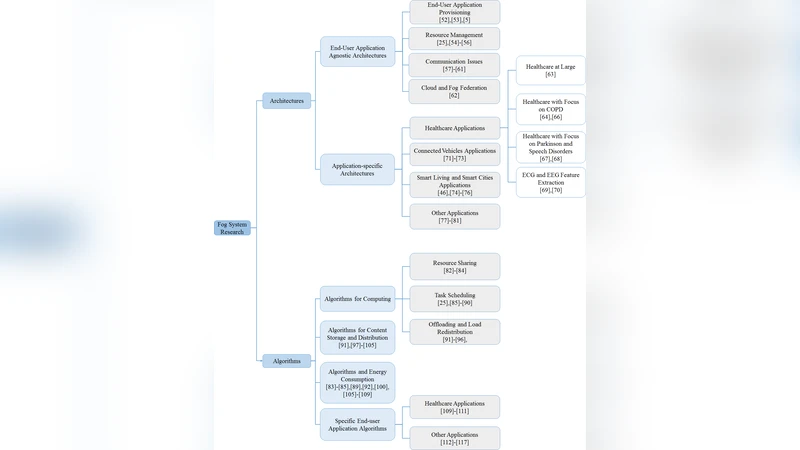

Cloud computing, with its three key facets (i.e., IaaS, PaaS, and SaaS) and its inherent advantages (e.g., elasticity and scalability), still faces several challenges. The distance between the cloud and the end devices can be a problem for latency-sensitive applications such as disaster management and content delivery. Service Level Agreements (SLAs) may also require processing at locations where the cloud provider has no data centers. Fog computing is a novel paradigm that addresses these issues. It enables provisioning resources and services outside the cloud, at the edge of the network, closer to end devices or, where applicable, at locations stipulated by SLAs. Fog computing is not a substitute for cloud computing but a powerful complement. It enables processing at the edge while still offering the possibility to interact with the cloud. This article presents a comprehensive survey on fog computing. It critically reviews the state of the art in the light of a concise set of evaluation criteria. We cover both the architectures and the algorithms that make up fog systems. Challenges and research directions are also introduced. In addition, the lessons learned are reviewed and the prospects are discussed in terms of the key role fog is likely to play in emerging technologies such as the Tactile Internet.

💡 Research Summary


The surveyed paper provides a thorough and systematic overview of fog computing, positioning it as a complementary paradigm to cloud computing that addresses latency, locality, and Service Level Agreement (SLA) constraints inherent in many emerging applications. The authors begin by highlighting the limitations of traditional cloud infrastructures—particularly the physical distance between data centers and end‑devices, which hampers latency‑sensitive services such as disaster response, real‑time content delivery, and the nascent Tactile Internet. Fog computing is defined as a distributed layer of compute, storage, and networking resources situated at the edge of the network, between the cloud and the end‑devices, and is described as an “extension of the cloud” rather than a replacement.

The paper classifies fog architectures into four hierarchical layers: (1) Device layer (sensors, actuators, smartphones), (2) Edge layer (routers, gateways, small switches), (3) Fog layer (micro‑data‑centers, mini‑servers, edge clusters), and (4) Cloud layer (large‑scale data centers). Within this taxonomy, the authors distinguish between single‑layer, multi‑layer, and hybrid deployments, analyzing trade‑offs in scalability, management complexity, and fault tolerance. A concise set of five evaluation criteria is introduced to assess existing fog proposals: (i) architectural layering, (ii) resource management efficiency, (iii) security and privacy mechanisms, (iv) standardization and interoperability, and (v) experimental validation/benchmarking. Each surveyed work is scored against these criteria, revealing that most research concentrates on resource management and architectural design, while standardization and comprehensive security remain under‑explored.
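To make the layered view concrete, here is a minimal Python sketch of how a request might be steered to the closest layer that can serve it. It is an illustration only, not something taken from the surveyed paper: the `Tier` class, the `pick_tier` function, and every latency and capacity figure are hypothetical, and the device layer is left out because it is where the request originates.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tier:
    name: str
    round_trip_ms: float  # assumed typical round trip from the end device
    free_cpu: float       # assumed spare capacity, arbitrary units


# Ordered from closest to the end device to farthest.
# All numbers are invented for illustration.
TIERS = [
    Tier("edge", round_trip_ms=2.0, free_cpu=1.0),
    Tier("fog", round_trip_ms=10.0, free_cpu=8.0),
    Tier("cloud", round_trip_ms=80.0, free_cpu=1000.0),
]


def pick_tier(
    latency_budget_ms: float,
    cpu_needed: float,
    tiers: Sequence[Tier] = TIERS,
) -> Optional[Tier]:
    """Return the closest tier that meets both the latency budget and the
    CPU demand, or None if no tier qualifies."""
    for tier in tiers:
        if tier.round_trip_ms <= latency_budget_ms and tier.free_cpu >= cpu_needed:
            return tier
    return None
```

With these made-up figures, a task needing 4 CPU units within 20 ms lands on the fog layer, while the same task with a 5 ms budget cannot be placed at all, which hints at the capacity versus proximity trade-off between layers.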

Resource‑management techniques receive extensive treatment. Scheduling algorithms range from heuristic latency‑aware dispatchers to reinforcement‑learning based dynamic schedulers that adapt to fluctuating workloads and mobility patterns. Service placement and migration strategies address the need for seamless hand‑off as users move across fog nodes, often employing cost‑performance models that balance latency reduction against migration overhead. Data management approaches include edge caching, prefetching, and the use of distributed file systems (e.g., IPFS, EdgeFS) to minimize round‑trip times. Security solutions discussed comprise lightweight encryption, blockchain‑based trust anchors, and multi‑factor authentication tailored for resource‑constrained edge devices. Energy‑efficiency research focuses on dynamic voltage/frequency scaling, power‑profile aware task allocation, and the integration of renewable energy sources into fog nodes.
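The trade-off between latency reduction and migration overhead can be sketched as a simple break-even test. The model below is an assumption made for illustration, not the cost-performance model of any surveyed work: it supposes that the per-request latency on each node and the number of requests still expected in the session are known, and the function names are hypothetical.

```python
def migration_gain_ms(
    current_latency_ms: float,
    candidate_latency_ms: float,
    expected_requests: int,
    migration_overhead_ms: float,
) -> float:
    """Net latency saved (in ms) over the rest of the session if the service
    moves to the candidate node. A positive value means migration pays off."""
    saved_per_request = current_latency_ms - candidate_latency_ms
    return saved_per_request * expected_requests - migration_overhead_ms


def should_migrate(
    current_latency_ms: float,
    candidate_latency_ms: float,
    expected_requests: int,
    migration_overhead_ms: float,
) -> bool:
    """Migrate only when the accumulated saving exceeds the one-off overhead."""
    gain = migration_gain_ms(
        current_latency_ms,
        candidate_latency_ms,
        expected_requests,
        migration_overhead_ms,
    )
    return gain > 0
```

For example, saving 30 ms per request over 100 expected requests outweighs a 2000 ms migration overhead, whereas over only 50 requests it does not. Real schemes would also have to estimate these inputs under user mobility, which this sketch takes as given.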

The authors identify several critical challenges that must be resolved before fog computing can achieve widespread adoption. First, the lack of a unified standard—despite initiatives such as ETSI MEC, OpenFog, and IEEE drafts—impedes interoperability across vendors and domains. Second, automated management of large, heterogeneous fog fleets remains immature; orchestration frameworks need to incorporate AI‑driven provisioning, health monitoring, and over‑the‑air updates. Third, security threats are amplified at the edge due to physical exposure and limited computational capacity, demanding novel lightweight yet robust protection schemes. Fourth, guaranteeing Quality of Service (QoS) for diverse applications (e.g., ultra‑low latency for tactile feedback versus high‑throughput for video analytics) requires multi‑objective optimization and SLA‑driven resource contracts. Finally, environmental sustainability is a concern; fog nodes must be designed for low power consumption and, where possible, powered by renewable sources.
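One common way to approach the QoS challenge is to treat SLA bounds as hard constraints and rank the remaining candidates with a weighted sum. The sketch below assumes that formulation; it is not a method described in the surveyed paper. The `Node` type, the `best_node` function, the default weight, and the requirement that latency and throughput values are strictly positive are all assumptions.

```python
from typing import NamedTuple, Optional, Sequence


class Node(NamedTuple):
    name: str
    latency_ms: float       # assumed > 0
    throughput_mbps: float  # assumed > 0


def best_node(
    nodes: Sequence[Node],
    max_latency_ms: float,
    min_throughput_mbps: float,
    latency_weight: float = 0.5,
) -> Optional[Node]:
    """Filter nodes by the SLA bounds, then pick the one with the highest
    weighted score. Returns None when no node satisfies the SLA."""
    feasible = [
        n for n in nodes
        if n.latency_ms <= max_latency_ms
        and n.throughput_mbps >= min_throughput_mbps
    ]
    if not feasible:
        return None

    worst_latency = max(n.latency_ms for n in feasible)
    best_throughput = max(n.throughput_mbps for n in feasible)

    def score(n: Node) -> float:
        # Both terms lie in [0, 1]; lower latency and higher throughput
        # each raise the score.
        latency_term = 1.0 - n.latency_ms / worst_latency
        throughput_term = n.throughput_mbps / best_throughput
        return latency_weight * latency_term + (1.0 - latency_weight) * throughput_term

    return max(feasible, key=score)
```

A tactile-style request with a tight latency bound and a video-analytics request with a high throughput floor can then share one selection routine and differ only in their bounds and in `latency_weight`.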

Future research directions are outlined accordingly: (1) development of a comprehensive, cross‑industry fog standard that harmonizes APIs, data models, and security primitives; (2) integration of machine‑learning driven orchestration for dynamic scaling, fault prediction, and self‑healing; (3) design of cryptographic primitives and trust frameworks optimized for edge constraints; (4) formulation of multi‑QoS scheduling and SLA‑aware placement algorithms that can be verified analytically; and (5) exploration of green fog architectures, including energy‑harvesting hardware and workload‑aware power management.

In the concluding section, the paper emphasizes fog computing’s pivotal role in enabling next‑generation technologies such as the Tactile Internet, autonomous vehicles, smart cities, and Industry 4.0. By coupling fog with emerging 5G/6G networks—particularly through network slicing and edge‑native services—operators can deliver customized, low‑latency experiences that meet strict SLA requirements. The authors assert that while fog is not a substitute for cloud, its strategic placement and complementary capabilities make it an essential building block for future distributed systems. Continued collaboration between academia, industry, and standards bodies is deemed essential to transform fog from a research concept into a robust, production‑grade infrastructure.
